Choosing the wrong data integration strategy costs more than most teams realize. Batch pipelines introduce latency that stalls real-time decisions. Overly complex streaming architectures add maintenance overhead that slows small teams. Point-to-point connections become unmanageable as system counts grow.

The right strategy depends on what you are trying to achieve: historical reporting, real-time operational sync, cloud migration, AI readiness, or all of the above. Most organizations end up using a combination.

This guide covers the core data integration strategies, how each one works, when to use it, and what trade-offs to expect. It also covers how to match your strategy to your latency needs, data volume, infrastructure maturity, and business goals.

What Is Data Integration?

Data integration is the process of combining data from multiple sources into a unified, consistent view that teams can use for analytics, AI, operations, and reporting. For a complete breakdown of what data integration is, how it works, and which tools support it, see our full guide to data integration.

Why Data Integration Strategy Matters

Data integration can reduce manual work, improve data quality, support real-time insights, and give teams a more complete view for decision-making. But those benefits only happen when the strategy matches the use case.

A batch-first strategy may work for historical reporting, but it can limit operational workflows that need fresher data. A streaming-first strategy may support real-time use cases, but it can add unnecessary cost and complexity if the business only needs daily reports.

For a deeper breakdown of the business value, see our guide to the benefits of data integration. Here, we’ll focus on choosing the right strategy.

Data Integration Strategies: How to Choose the Right Approach

Most data teams do not choose a single integration strategy and stick with it. They end up with a mix, one approach for historical loads, another for operational sync, another for moving data back into business tools. The goal is not to pick the "best" strategy in the abstract but to match the right approach to the specific problem you are solving.

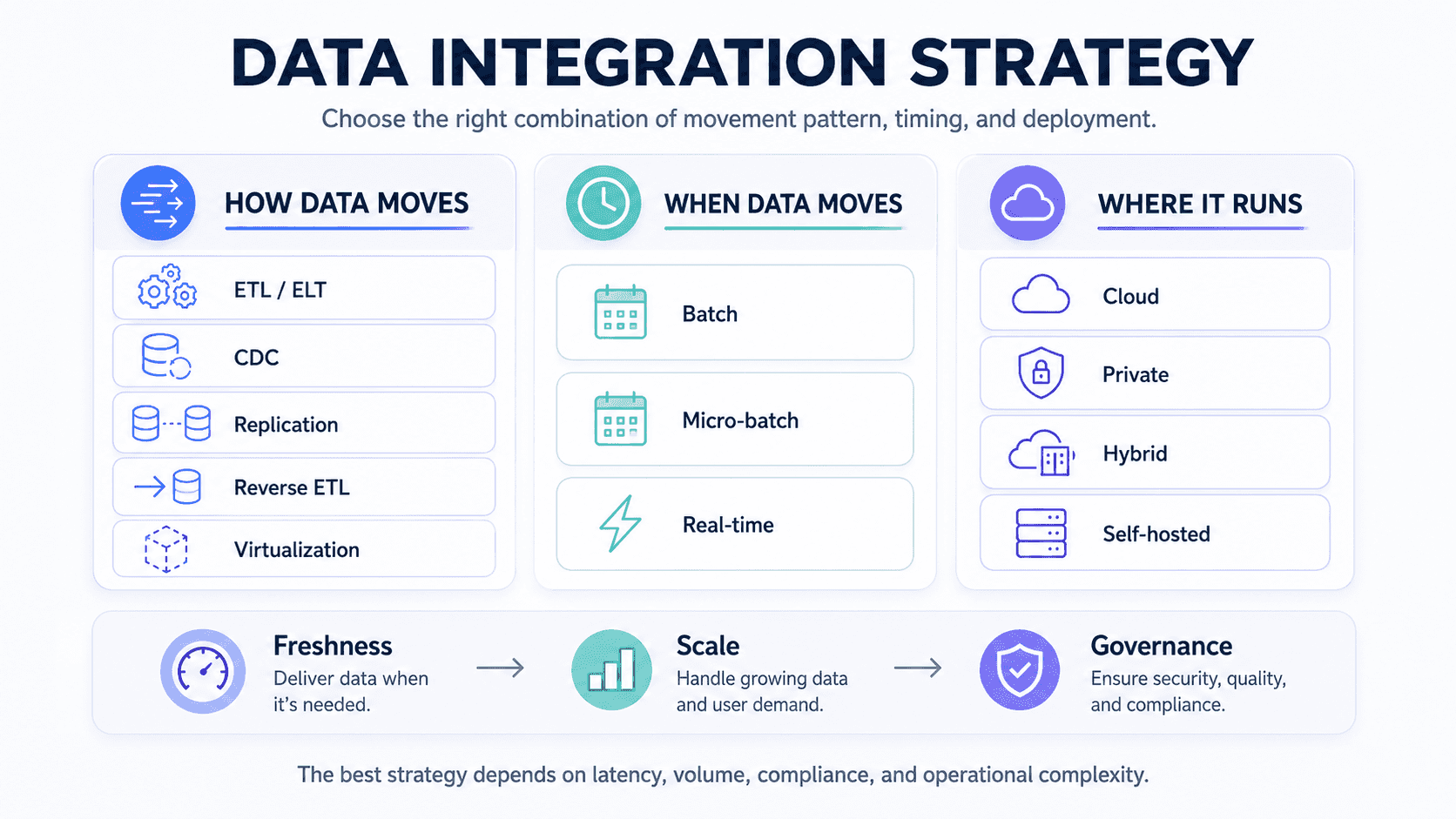

The strategies below are grouped into three categories to make comparison easier: methods that define how data moves and transforms, patterns that define when data moves, and deployment models that define where integration runs.

How Data Moves: Integration Methods

These are the core technical approaches. Each one handles data differently and fits different use cases.

ETL (Extract, Transform, Load)

ETL has been around for decades and it still works well for the right problems. You extract data from source systems, transform it in an intermediate environment to clean and standardize it, and then load it into a destination like a data warehouse.

The key advantage is control. Transformations happen before data lands anywhere, which means you can enforce quality rules, resolve schema conflicts, and validate data before it enters your analytical environment. This is why regulated industries like healthcare and finance still rely on ETL heavily: they need to prove what happened to data before it was stored, not after.

The limitation is that ETL is typically batch-based and resource-intensive. Complex transformation logic can slow pipelines down and make them brittle when source schemas change. If you are running ETL jobs that consistently miss their windows or require constant maintenance, that is a signal the approach may not scale to your current data volumes.

Best for: Historical data loads, regulatory environments where pre-load validation is required, structured data from well-defined sources. See also: what is an ETL pipeline.

ELT (Extract, Load, Transform)

ELT flips the sequence. Raw data goes into the destination first, usually a cloud data warehouse like Snowflake, BigQuery, or Redshift, and transformations happen inside the warehouse using its own compute power.

This approach became practical when cloud warehouses got cheap and scalable enough to make in-destination transformation economical. The main benefit is ingestion speed: since you are not waiting for transformations to finish before loading, data gets into your analytical environment faster. Tools like dbt sit on top of ELT workflows and bring version control, testing, and governance to the transformation layer.

The real risk with ELT is cost sprawl. If transformation queries run frequently on large datasets without good governance, warehouse compute costs climb quickly. Teams that run ad hoc transformations without clear ownership also end up with inconsistent metric definitions across dashboards.

Best for: Cloud-native stacks, large volumes of structured data, teams already using Snowflake or BigQuery with dbt. See also: ETL vs ELT.

Real-Time Streaming and CDC

For database sources, Change Data Capture is the most efficient way to move data in real time. Rather than re-running full queries or comparing snapshots, CDC reads directly from the database transaction log and captures only the changes: inserts, updates, and deletes, as they happen.

There are three types of CDC. Log-based CDC reads from the transaction log directly and has the lowest overhead on the source system. It is the method Estuary uses for PostgreSQL, MySQL, SQL Server, MongoDB, Oracle, and others. Trigger-based CDC fires on write events from within the application, which works when direct log access is unavailable. Query-based CDC polls tables on a schedule using timestamps or row versions. It is simpler to set up but adds query load to the source and can miss deletes if rows are physically removed.

For most production use cases, log-based CDC is the right choice. It handles high-volume environments without degrading source system performance, and it captures every change including hard deletes, which query-based approaches often miss.

Real-time streaming more broadly covers event-driven pipelines that use platforms like Apache Kafka or Apache Flink. These are powerful but come with operational complexity: you need to handle event ordering, exactly-once delivery, schema changes, and failure recovery. Managed platforms reduce that burden significantly.

Best for: Operational sync where data freshness matters, fraud detection, real-time dashboards, AI workflows that need current data, database migrations with zero downtime. See also: the complete introduction to CDC.

Data Replication

Replication copies data from one system to another without applying transformations. The destination ends up as a mirror of the source, kept in sync either continuously or on a schedule.

This is useful for disaster recovery, creating read replicas to offload query load from production databases, and syncing databases to analytical systems. CDC-based replication is the most efficient form because it only transmits changes rather than re-copying full datasets. See also: CDC replication.

Best for: Backup and disaster recovery, creating analytical replicas of production databases, cross-region synchronization.

Data Virtualization

Data virtualization creates a query layer on top of distributed data sources without physically moving the data. Users run queries against a virtual layer that retrieves results in real time from the underlying systems.

The appeal is that there is no data movement, no pipeline to maintain, and no storage duplication. For organizations where data residency requirements or storage costs make centralization impractical, virtualization offers a way to get a unified view without a physical integration project.

The practical limitation is query performance. When a virtualized query spans multiple large systems, latency compounds. It works well for lightweight analytics and reporting but breaks down for heavy transformations or aggregations over large datasets. Tools like Denodo and Dremio are the main options here.

Best for: Lightweight analytics across distributed sources, regulated environments where data cannot leave its origin system, quick access to data without a full integration build.

Reverse ETL

Reverse ETL sends data from a centralized warehouse back into operational tools like CRMs, marketing platforms, customer support systems, and product analytics tools. It closes the loop between your analytical environment and the systems your frontline teams actually use.

The typical use case is enriching a CRM with warehouse-calculated scores. A sales team does not want to query Snowflake to find out which accounts are most likely to convert. They want that score to appear automatically in Salesforce. Reverse ETL handles that automatically.

The trade-off is sync complexity. Destination systems have rate limits, strict field requirements, and identity mapping rules. Getting this wrong can overwrite records, create duplicates, or trigger unintended automations. Tools like Hightouch and Census specialize in this. Estuary also supports writing data back to operational systems as a materialization destination.

Best for: Enriching CRM records with warehouse data, activating marketing segments from analytics, syncing scored data to customer-facing tools. See also: do you actually need a reverse ETL platform.

Manual Data Integration

Manual integration means writing custom scripts or code to extract, transform, and load data between specific systems. It is the most flexible approach because you control everything, but also the most expensive to maintain.

It makes sense in a narrow set of situations: one-time data migrations, highly custom transformations that no off-the-shelf tool supports, or early-stage proofs of concept before you know what your production requirements will be. For anything that runs repeatedly, the maintenance cost of custom code usually outweighs the flexibility benefit within a few months.

Best for: One-time migrations, highly specific transformations not supported by available tools, early-stage pipeline experiments.

When Data Moves: Processing Patterns

These patterns define the timing and frequency of data movement. They apply on top of the methods above.

Batch Processing

Batch processing accumulates data over a period of time and processes it all at once, usually on a schedule. A nightly ETL job that loads the previous day's transactions into a warehouse is a classic example.

Batch is still the right choice when your use case genuinely does not need fresh data. Weekly executive reports, monthly financial reconciliation, and annual compliance snapshots are all fine on batch schedules. The risk is assuming batch is fine when it is not. A team making pricing decisions based on yesterday's sales data or a fraud team reviewing transactions hours after they clear is paying a latency cost they may not have explicitly chosen.

Modern data stacks are moving away from pure batch as a default. Real-time and micro-batch approaches have become accessible enough that batch is increasingly a deliberate choice for specific workloads rather than the starting point for everything.

Real-Time and Event-Driven Processing

Real-time processing handles data continuously as events occur. Rather than waiting for a batch window, changes are captured and delivered to targets within seconds or milliseconds.

The business case is straightforward for time-sensitive workflows: a fraud detection model that runs on yesterday's transactions is not actually doing fraud detection. A personalization engine that refreshes once a day is not personalizing. These use cases require real-time data to function correctly.

The trade-off is infrastructure complexity. Real-time pipelines run continuously, which means they need to handle retries, failures, out-of-order events, and schema changes gracefully. This is manageable with the right platform, but it requires more thoughtful architecture than a batch job.

Micro-Batch Processing

Micro-batch is a middle ground. Data is processed in small, frequent batches, every few seconds or minutes rather than hours, giving near-real-time freshness without the full complexity of event-driven streaming. Spark Streaming uses this model.

For many teams, micro-batch is the practical answer when "real-time" is the goal but true sub-second latency is not actually required. If a business intelligence dashboard refreshing every two minutes is sufficient, micro-batch is simpler to operate than a full streaming infrastructure.

Where Integration Runs: Deployment Models

These decisions affect security, cost, compliance, and operational control. They do not change how data moves, but they determine where the integration infrastructure lives.

Cloud-Based Integration

Cloud integration runs on managed infrastructure provided by a vendor. You do not provision servers, manage network configurations, or handle hardware failures. The vendor handles that. You pay for usage, typically by data volume, connector count, or compute time.

The benefits are speed to deploy and low operational overhead. Most modern integration platforms are cloud-native by default. The trade-off is that your data passes through a third party's infrastructure, which matters for organizations with strict data residency requirements or regulated data.

On-Premise Integration

On-premise integration runs on infrastructure your organization owns and controls. Data never leaves your network, which is the primary reason organizations choose it: security, compliance, and data sovereignty.

The cost is higher upfront and ongoing. Your team owns patching, scaling, hardware failures, and capacity planning. For most organizations, on-premise integration makes sense only when regulatory requirements mandate it or when the sensitivity of the data makes third-party infrastructure genuinely unacceptable.

Private and Hybrid Deployment

Many organizations land between the two extremes. A private deployment runs integration software in your own cloud environment, your AWS VPC or Google Cloud project, managed by the vendor but isolated from their shared infrastructure. This is sometimes called BYOC (Bring Your Own Cloud).

Hybrid architectures combine on-premise sources with cloud destinations, or mix cloud-native tools with on-premise data stores. Most large organizations operate in hybrid mode by default because they have legacy systems that cannot move to the cloud and modern SaaS tools that exist only in the cloud.

Estuary supports cloud, private BYOC, and self-hosted deployment. See deployment options for details.

Choosing the Right Combination

Most production data stacks use several of these strategies simultaneously. A practical starting point:

| If your main need is | Strategy to start with |

|---|---|

| Historical reporting and analytics | ETL or ELT into a cloud warehouse |

| Real-time database sync to a warehouse | Log-based CDC |

| Connecting SaaS apps and automating workflows | API-based integration or iPaaS |

| Querying distributed data without moving it | Data virtualization |

| Sending warehouse insights back to business tools | Reverse ETL |

| Backup and disaster recovery | Data replication |

| High-volume event processing | Streaming platform (Kafka, Flink) |

The right answer for your organization depends on three things: how fresh your data needs to be, how complex your transformations are, and how much infrastructure your team can realistically operate. Start with those constraints and the right approach usually becomes clear.

For a deeper look at the challenges that come up during implementation regardless of strategy, see our guide to data integration challenges.

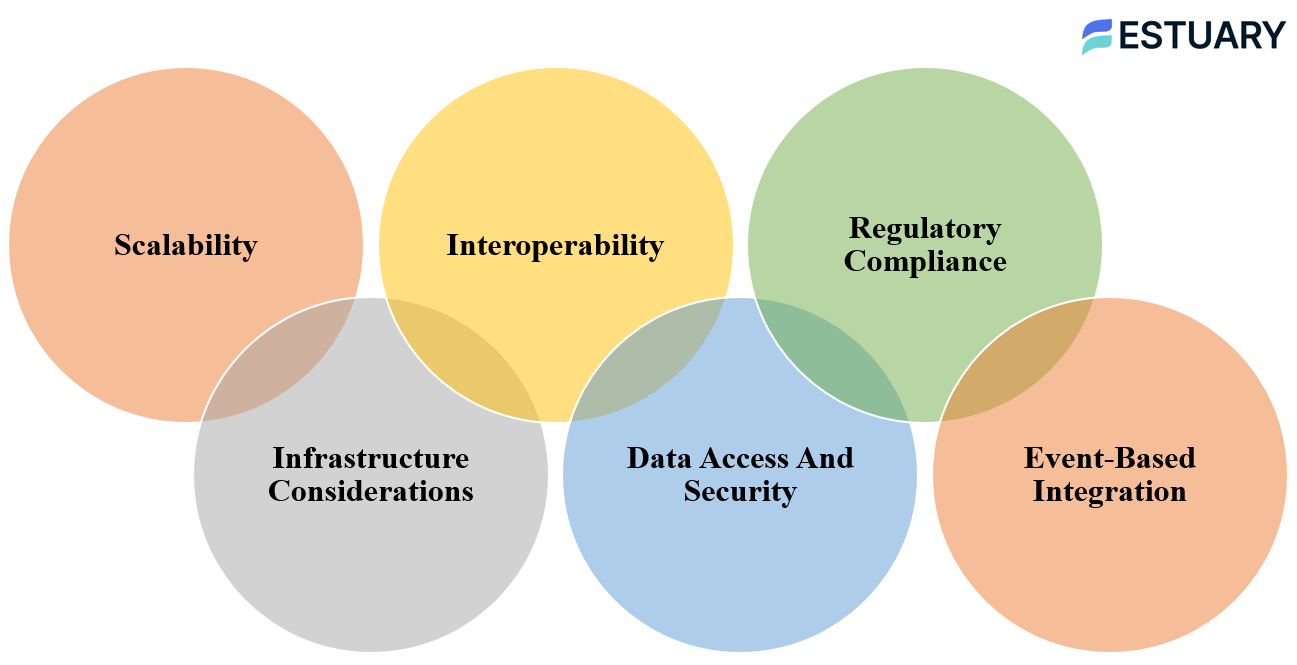

What a Modern Data Integration Strategy Needs

A modern data integration strategy has to do more than move data from one place to another. It needs to support growing data volumes, different deployment models, secure access, compliance requirements, and time-sensitive workflows.

| Requirement | Why it matters |

|---|---|

| Scalability | Pipelines should handle growing data volume without constant redesign |

| Deployment flexibility | Cloud, private, hybrid, and self-hosted needs vary by organization |

| Interoperability | Tools must connect to existing databases, SaaS apps, warehouses, and APIs |

| Security and access control | Data must be protected in transit and at rest |

| Compliance | Pipelines need to support governance, retention, and audit needs |

| Event-based integration | Time-sensitive workflows need updates as changes happen |

Scalability

As data volume grows, your integration strategy should scale without constant redesign. Look for pipelines that support parallel processing, automated resource allocation, backfills, retries, and monitoring so growth does not create fragile workflows.

Deployment Flexibility

Your strategy should support the deployment model your organization needs: cloud, private cloud, hybrid, or self-hosted. This matters for security, data residency, compliance, and how much infrastructure your team wants to manage.

Interoperability

Integration tools should connect cleanly with your existing systems, including databases, SaaS applications, APIs, warehouses, lakes, and event streams. The more heterogeneous your stack is, the more important connector coverage and schema handling become.

Security and Access Control

Data integration pipelines move sensitive data between systems, so security must be built in from the start. Use encrypted connections, role-based access, secret management, and clear permissions for who can create, modify, or access pipelines.

Compliance and Governance

Your strategy should support regulatory and governance requirements such as auditability, retention rules, data masking, and access controls. This is especially important for teams working with customer data, financial records, healthcare data, or regulated workloads.

Event-Based Integration

Some workflows need data to move when business events happen, not hours later. Event-based integration and CDC help teams support real-time dashboards, alerts, operational sync, fraud detection, AI workflows, and customer-facing applications.

How Estuary Fits Into a Data Integration Strategy

A strong data integration strategy usually needs more than one movement pattern. Some data can move in scheduled batches, while other workflows need CDC, streaming, or near real-time sync. Estuary is built for teams that need this flexibility without running separate infrastructure for each pattern.

Estuary supports right-time data movement: historical backfills for existing data, continuous CDC for operational databases, batch captures for lower-urgency sources, and many-to-many routing when the same source data needs to power multiple destinations.

Where Estuary fits best:

- CDC-first strategies: Capture inserts, updates, and deletes from databases like PostgreSQL, MySQL, SQL Server, MongoDB, and Oracle, then keep destinations continuously updated.

- Batch plus real-time strategies: Backfill historical data first, then keep new changes syncing through the same pipeline.

- Warehouse and lakehouse strategies: Materialize data into destinations like Snowflake, BigQuery, Redshift, Databricks, and Apache Iceberg.

- Operational sync strategies: Route data to downstream systems, applications, and event streams when teams need fresher operational data.

- Hybrid and private deployment strategies: Use Estuary Cloud, BYOC, private deployment, or self-hosted options depending on security, compliance, and infrastructure requirements.

- Many-to-many strategies: Capture data once and reuse it across multiple destinations, instead of rebuilding separate point-to-point pipelines.

Estuary is strongest when the goal is fresh, reliable data movement with less operational overhead. It helps teams avoid managing Kafka, Debezium, custom scripts, and one-off connector infrastructure for common CDC and integration workloads.

It is not a replacement for every layer of the data stack. Teams still use warehouses for storage and analytics, dbt or warehouse-native tools for complex modeling, BI tools for reporting, and catalogs or MDM platforms for governance. Estuary fits into the strategy as the data movement layer that keeps those systems supplied with current, trusted data.

Conclusion

Choosing the right data integration strategy comes down to three questions: how fresh does your data need to be, how complex are your transformations, and how much infrastructure are you willing to manage?

Batch ETL is still valid for scheduled, high-volume jobs where latency is acceptable. Real-time CDC is the right choice when freshness directly affects operations, decisions, or customer experiences. ELT works well in cloud-native stacks with strong governance. Virtualization fits when moving data is impractical. Most organizations end up combining several of these approaches across different pipelines.

Estuary supports all of these approaches in a single managed platform. Start building for free or talk to the team about your use case.

Related Reads

About the author

Jeffrey is a data engineering professional with over 15 years of experience, helping early-stage data companies scale by combining technical expertise with growth-focused strategies. His writing shares practical insights on data systems and efficient scaling.