Enterprise data integration is the process of connecting data across departments, business units, applications, databases, cloud platforms, and partner systems so large organizations can work from a consistent, governed view of their data.

It matters because enterprise data rarely lives in one place. Sales, finance, operations, product, HR, and customer teams often use different systems with different schemas, access rules, and reporting logic. Without integration, enterprises struggle with fragmented reporting, slow decisions, duplicate work, compliance risk, and AI initiatives built on incomplete data.

This guide explains what enterprise data integration is, how it differs from general data integration, the main challenges enterprises face, and the best practices for building scalable, governed, and AI-ready integration architecture.

Quick answer: Enterprise data integration connects data across departments, applications, databases, cloud platforms, legacy systems, and partner environments into one governed view. It helps large organizations improve analytics, operations, compliance, and AI readiness while reducing silos, duplicated work, and inconsistent reporting.

What Is Enterprise Data Integration?

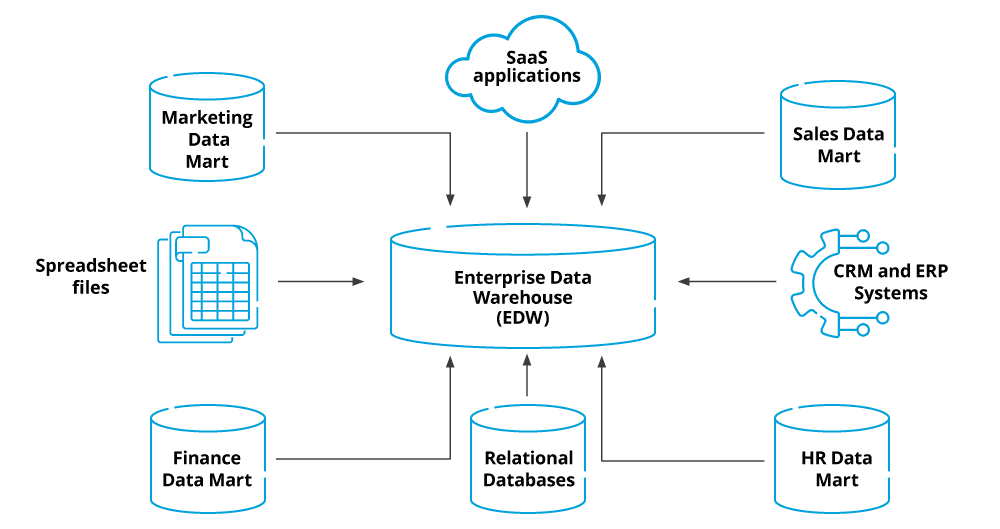

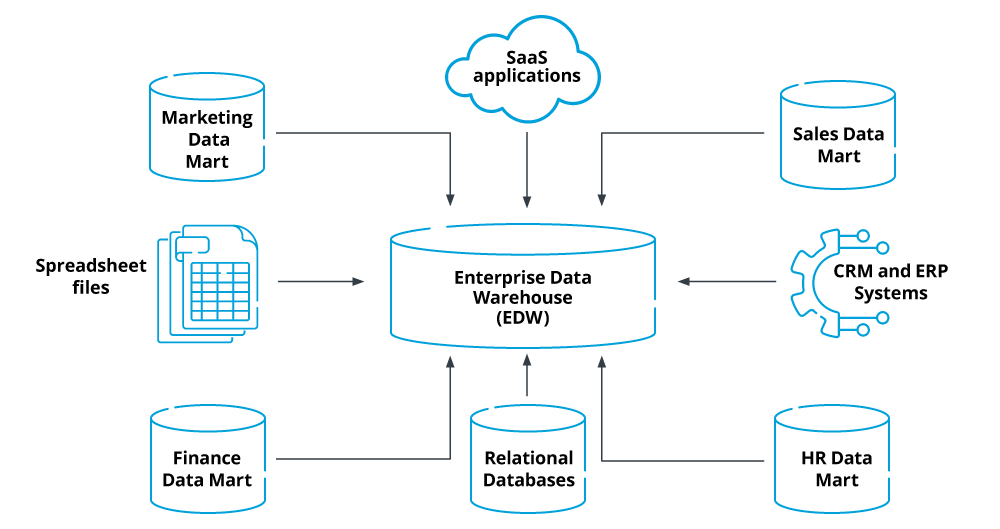

Enterprise data integration is the practice of connecting data across business units, applications, databases, warehouses, cloud platforms, legacy systems, and external partners into a consistent, governed view.

It builds on the broader discipline of data integration, but at enterprise scale. That means the work is not just about moving data between two systems. It also involves ownership, governance, metadata, security, compliance, lineage, architecture standards, and deployment models.

Common enterprise scenarios include mergers and acquisitions, multi-cloud analytics, customer 360 initiatives, ERP modernization, AI readiness, regulatory reporting, and operational data sharing across departments.

Why Enterprise Data Fragmentation Is Hard to Fix

Enterprise data fragmentation happens when departments, business units, and partner systems operate with separate applications, schemas, access rules, and reporting logic. At small scale, teams can sometimes work around this with exports or one-off pipelines. At enterprise scale, those workarounds create slow reporting, inconsistent metrics, governance gaps, and high maintenance overhead.

The challenge is not only technical. Enterprises also need to align data ownership, security policies, compliance requirements, and business definitions across many teams. That is why enterprise data integration requires a strategy for governance, architecture, and long-term maintainability, not just a tool for moving data.

Why Enterprise Data Integration Matters

Enterprise data integration matters because large organizations need trusted data across many teams, systems, and environments. Without it, business units operate from different versions of the truth, reporting slows down, compliance becomes harder, and AI or analytics projects rely on incomplete data.

| Enterprise need | How integration helps |
|---|---|
| Cross-department visibility | Connects data from sales, finance, operations, product, HR, and support into a shared view |
| Faster executive decisions | Reduces manual reporting and gives leaders access to current, trusted data |
| Governance and compliance | Centralizes controls for access, lineage, retention, masking, and auditability |
| Data quality and consistency | Standardizes definitions, formats, and source-of-truth rules across systems |
| AI and analytics readiness | Supplies dashboards, models, and AI workflows with complete and governed data |
| Mergers and acquisitions | Helps harmonize systems, schemas, and reporting across combined organizations |
| Lower operational overhead | Reduces point-to-point pipelines, manual exports, and duplicated integration work |

For a deeper breakdown of business value, see our guide to the benefits of data integration.

Common Enterprise Data Integration Challenges

Enterprise data integration is harder than smaller data integration projects because the number of teams, systems, policies, and data owners is much larger. Most enterprise challenges come from scale, governance, legacy systems, security requirements, and organizational complexity.

| Challenge | Why it matters |
|---|---|
| Legacy and cloud systems | Enterprises often need to connect mainframes, databases, SaaS apps, warehouses, and cloud platforms |
| Departmental silos | Business units may define metrics, ownership, and access rules differently |
| Governance complexity | Access, lineage, retention, masking, and compliance rules must work across many teams |
| Data quality and schema drift | Source systems change often, and inconsistent data can break downstream reporting |
| Real-time requirements | Operational analytics, AI, fraud detection, and customer workflows often need fresher data |
| Scale and performance | Enterprise pipelines need to handle high volume, backfills, retries, recovery, and monitoring |
| Tool sprawl | Too many point-to-point integrations increase cost, risk, and maintenance overhead |

For a deeper breakdown of these issues, see our guide to data integration challenges.

How Estuary Fits Enterprise Data Integration

Enterprise data integration often breaks down when every team builds its own pipeline. One department uses batch jobs, another runs custom scripts, another manages Kafka, and another depends on point-to-point SaaS syncs. Over time, this creates duplicated work, inconsistent data freshness, weak ownership, and high maintenance overhead.

Estuary helps enterprises standardize the data movement layer without forcing every workload into the same pattern. Teams can use batch for lower-urgency reporting, CDC for operational databases, and many-to-many routing when the same source data needs to serve multiple destinations.

This is especially useful for enterprise environments where data must move across warehouses, lakes, applications, event streams, and AI systems while still respecting security, compliance, and infrastructure requirements.

Where Estuary fits best:

- Reducing pipeline sprawl: Replace fragile point-to-point jobs and custom scripts with reusable real-time and batch pipelines.

- Supporting enterprise deployment needs: Use Estuary Cloud, BYOC, private deployment, or self-hosted options depending on security, compliance, and data residency requirements.

- Handling large backfills and continuous sync: Load historical data first, then keep operational databases continuously updated through CDC.

- Routing data across many destinations: Capture once and deliver to Snowflake, BigQuery, Redshift, Databricks, Apache Iceberg, Kafka, and operational systems.

- Managing schema change: Detect and handle source schema changes so downstream analytics, AI workflows, and operational systems are less likely to break silently.

- Reducing infrastructure overhead: Avoid managing separate Kafka, Debezium, connector, retry, and recovery infrastructure for common integration workloads.
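To make the "capture once, deliver to many destinations" idea concrete, here is a minimal, generic Python sketch. It is not Estuary's API or configuration format. The `fan_out` function, the event shape, and the destination names are all hypothetical, chosen only to show the routing pattern.

```python
from typing import Callable, Dict, Iterable

# Hypothetical change event: a plain dict such as
# {"table": "orders", "op": "insert", "row": {...}}.
ChangeEvent = Dict[str, object]


def fan_out(
    events: Iterable[ChangeEvent],
    destinations: Dict[str, Callable[[ChangeEvent], None]],
) -> Dict[str, int]:
    """Read each captured event once and hand it to every destination writer.

    Returns how many events each destination received.
    """
    delivered = {name: 0 for name in destinations}
    for event in events:
        for name, write in destinations.items():
            write(event)
            delivered[name] += 1
    return delivered


# Illustrative usage: two in-memory lists stand in for a warehouse and a stream.
warehouse_rows = []
stream_messages = []
captured = [
    {"table": "orders", "op": "insert", "row": {"id": 1}},
    {"table": "orders", "op": "update", "row": {"id": 1}},
]
counts = fan_out(
    captured,
    {"warehouse": warehouse_rows.append, "stream": stream_messages.append},
)
```

The point of the pattern is that the source is read a single time, so adding a destination means registering one more writer and not building another extraction job against the source system.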

Customer proof: Xometry reduced data integration costs by 60% using Estuary’s private deployment while improving freshness for analytics, sales, marketing, operations, accounting, and data science. Hayden AI completed a 5TB backfill, reduced replication lag from 24 hours to about 1 hour, and cut monthly replication costs by 60%. Envoy uses Estuary with sources including Salesforce, Marketo, Chargebee, webhooks, and PostgreSQL to unify real-time and batch integration across the business.

For enterprise teams, the value is not just moving data faster. It is reducing pipeline sprawl, improving freshness, and giving multiple teams a more reliable way to use the same operational data across analytics, AI, and business systems.

Enterprise Data Integration Best Practices

Start with business-critical data domains

Identify the domains that matter most to the organization, such as customers, accounts, orders, products, transactions, employees, suppliers, or inventory. For each domain, define the source systems, owners, access rules, quality expectations, and downstream consumers before building pipelines.
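One lightweight way to enforce this is to record each domain as a structured entry and block pipeline work until the entry is complete. The sketch below is illustrative only: `DataDomain`, its field names, and the example values are assumptions, not part of any specific catalog product.

```python
from dataclasses import dataclass, field
from typing import List, Optional


@dataclass
class DataDomain:
    """Hypothetical catalog entry for one business-critical data domain."""

    name: str
    owner: str = ""
    source_systems: List[str] = field(default_factory=list)
    consumers: List[str] = field(default_factory=list)
    access_rule: str = ""
    # Quality expectation, expressed here as a freshness target in minutes.
    max_staleness_minutes: Optional[int] = None

    def missing_fields(self) -> List[str]:
        """Return the attributes that still need to be defined."""
        missing = []
        if not self.owner:
            missing.append("owner")
        if not self.source_systems:
            missing.append("source_systems")
        if not self.consumers:
            missing.append("consumers")
        if not self.access_rule:
            missing.append("access_rule")
        if self.max_staleness_minutes is None:
            missing.append("max_staleness_minutes")
        return missing


def ready_for_pipelines(domain: DataDomain) -> bool:
    """A domain is ready only when every required attribute is filled in."""
    return not domain.missing_fields()


# Illustrative usage with made-up values.
customers = DataDomain(
    name="customers",
    owner="crm-team",
    source_systems=["salesforce", "postgres"],
    consumers=["finance-dashboard"],
    access_rule="pii-restricted",
    max_staleness_minutes=60,
)
orders = DataDomain(name="orders", source_systems=["erp"])
```

Here `customers` passes the check, while `orders` reports the gaps that should be resolved before anyone builds a pipeline for it.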

Standardize definitions and ownership

Enterprise integration fails when teams use different definitions for the same metric or entity. Create shared definitions, assign data owners, document source-of-truth rules, and make ownership visible across business and technical teams.

Choose the right integration pattern for each workload

Not every enterprise workflow needs the same approach. Use batch or ELT for lower-urgency reporting, CDC for operational database changes, streaming for event-driven workflows, and reverse ETL when warehouse data needs to be activated in business systems. For a deeper framework, see our guide to data integration strategy.
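The mapping above can be written down as a simple decision rule. This is a rough sketch, not a definitive framework: the `choose_pattern` function, the source categories, and the one-hour threshold are assumptions picked for illustration, and real decisions usually weigh cost and team capacity as well.

```python
# Assumed cutoff: workloads that tolerate an hour or more of delay are
# treated as "lower-urgency". Adjust to your own latency requirements.
BATCH_THRESHOLD_SECONDS = 3600


def choose_pattern(
    source_kind: str,
    max_latency_seconds: float,
    activates_business_system: bool = False,
) -> str:
    """Suggest an integration pattern for one workload.

    source_kind is a hypothetical label such as "operational_db",
    "event_stream", "warehouse", or "saas_api".
    """
    if source_kind == "warehouse" and activates_business_system:
        return "reverse_etl"
    if source_kind == "event_stream":
        return "streaming"
    if source_kind == "operational_db" and max_latency_seconds < BATCH_THRESHOLD_SECONDS:
        return "cdc"
    return "batch_elt"


# Illustrative usage.
suggestions = {
    "orders database, 1 minute": choose_pattern("operational_db", 60),
    "billing API, daily": choose_pattern("saas_api", 86_400),
    "clickstream topic": choose_pattern("event_stream", 5),
    "warehouse to CRM": choose_pattern("warehouse", 3600, activates_business_system=True),
}
```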

Design for governance and security from the start

Plan access controls, encryption, masking, auditability, lineage, and retention policies before data moves across systems, teams, or regions. This is especially important for enterprises handling customer data, financial data, healthcare data, or regulated workloads.
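As one small example of applying a control before data moves, the sketch below masks sensitive fields with a keyed hash so that downstream systems can still join on the value without seeing it. The `mask_record` function, the field names, and the key are hypothetical. A real deployment would also need key management and a policy for which fields count as sensitive.

```python
import hashlib
import hmac
from typing import Dict, Iterable


def mask_record(
    record: Dict[str, object],
    sensitive_fields: Iterable[str],
    secret_key: bytes,
) -> Dict[str, object]:
    """Return a copy of the record with sensitive fields replaced by
    HMAC-SHA256 digests. The input record is not modified."""
    masked = dict(record)
    for field_name in sensitive_fields:
        value = masked.get(field_name)
        if value is not None:
            masked[field_name] = hmac.new(
                secret_key, str(value).encode("utf-8"), hashlib.sha256
            ).hexdigest()
    return masked


# Illustrative usage with a made-up record and a placeholder key.
customer = {"id": 42, "email": "ana@example.com", "plan": "enterprise"}
safe_customer = mask_record(customer, ["email"], secret_key=b"replace-with-a-managed-secret")
```

Because the hash is keyed and deterministic, the same email always maps to the same token under the same key, which keeps joins and deduplication working in the destination.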

Build for schema change and scale

Enterprise systems evolve constantly. New fields are added, APIs change, teams rename objects, and data volume grows. Use schema-aware pipelines, monitoring, retries, backfills, and recovery so integrations can keep working as sources and requirements change.
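A basic form of schema awareness is comparing the schema a pipeline expects with the one the source currently reports. The following sketch assumes schemas are available as simple field-to-type mappings. The function names and the rule that removed or retyped fields are "breaking" are illustrative assumptions, not a standard.

```python
from typing import Dict, List


def diff_schema(expected: Dict[str, str], observed: Dict[str, str]) -> Dict[str, List[str]]:
    """Compare two {field_name: type_name} mappings and report drift."""
    expected_fields = set(expected)
    observed_fields = set(observed)
    return {
        "added": sorted(observed_fields - expected_fields),
        "removed": sorted(expected_fields - observed_fields),
        "retyped": sorted(
            name
            for name in expected_fields & observed_fields
            if expected[name] != observed[name]
        ),
    }


def is_breaking(diff: Dict[str, List[str]]) -> bool:
    """Treat removed or retyped fields as breaking; new fields as additive."""
    return bool(diff["removed"] or diff["retyped"])


# Illustrative usage: a source team renamed one column and added another.
expected = {"id": "int", "email": "string", "created": "timestamp"}
observed = {"id": "int", "email_address": "string", "created": "timestamp", "region": "string"}
drift = diff_schema(expected, observed)
```

Running a check like this on every sync, and alerting when `is_breaking` is true, turns a silent downstream failure into a visible event that an owner can act on.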

Avoid point-to-point pipeline sprawl

Point-to-point connections may work early, but they become hard to govern and maintain at enterprise scale. Prefer reusable patterns, shared platforms, and many-to-many routing where possible so teams do not rebuild the same integrations repeatedly.
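A quick back-of-the-envelope calculation shows why sprawl grows so fast. The sketch assumes the worst case, where every source must reach every destination. The function names and the example counts are illustrative.

```python
def point_to_point_pipelines(sources: int, destinations: int) -> int:
    """Worst case: one dedicated pipeline per source-destination pair."""
    return sources * destinations


def shared_platform_connections(sources: int, destinations: int) -> int:
    """With a shared routing layer: one connection per source plus one
    per destination."""
    return sources + destinations


# Illustrative numbers: 10 source systems feeding 5 destinations.
direct = point_to_point_pipelines(10, 5)       # 50 pipelines to maintain
shared = shared_platform_connections(10, 5)    # 15 connections to maintain
```

Adding an eleventh source costs five new pipelines in the point-to-point model but only one new connection in the shared model, and the gap widens with every system added.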

Measure pipeline health and business impact

Track data freshness, latency, sync failures, quality issues, costs, and downstream usage. Tie integration outcomes to business goals like faster reporting, AI readiness, lower integration cost, improved customer experience, or better operational visibility.
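Freshness is usually the easiest of these metrics to start with. The sketch below assumes you can obtain a last-successful-sync timestamp per pipeline. The pipeline names, the 24-hour target, and the function names are hypothetical.

```python
from datetime import datetime, timedelta, timezone
from typing import Dict, List


def freshness_lag(last_synced_at: datetime, now: datetime) -> timedelta:
    """How long ago the pipeline last delivered data successfully."""
    return now - last_synced_at


def stale_pipelines(
    last_synced: Dict[str, datetime],
    target: timedelta,
    now: datetime,
) -> List[str]:
    """Return the names of pipelines whose lag exceeds the freshness target."""
    return sorted(
        name
        for name, synced_at in last_synced.items()
        if freshness_lag(synced_at, now) > target
    )


# Illustrative usage with fixed timestamps.
now = datetime(2024, 1, 1, 12, 0, tzinfo=timezone.utc)
last_synced = {
    "orders_cdc": now - timedelta(minutes=5),
    "finance_batch": now - timedelta(hours=30),
}
late = stale_pipelines(last_synced, target=timedelta(hours=24), now=now)
```

In practice each pipeline would carry its own target, tied to the business outcome it supports, so that a five-minute lag on fraud data and a five-minute lag on a monthly report are not treated the same way.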

The Takeaway

Enterprise data integration is not just about centralizing data. It is about giving large organizations a governed, scalable, and reliable way to use data across departments, systems, cloud environments, and business workflows.

The right approach depends on the enterprise context. A company modernizing legacy systems may need CDC and cloud migration patterns. A company preparing for AI may need cleaner, fresher, better-governed data. A company dealing with M&A may need to harmonize systems, schemas, and reporting across business units.

Estuary helps enterprise teams reduce pipeline sprawl and keep data moving across real-time and batch workflows, with support for CDC, historical backfills, schema-aware pipelines, and many-to-many routing.

Start building with Estuary for free or talk to our team about your use case.

Frequently Asked Questions

What is enterprise data integration?

Enterprise data integration is the process of connecting data across departments, applications, databases, cloud platforms, legacy systems, and partner environments into a consistent, governed view. It helps large organizations use trusted data for analytics, operations, compliance, and AI.

How is enterprise data integration different from regular data integration?

Data integration can refer to any project that combines data from multiple systems. Enterprise data integration applies that discipline across a larger organization, with more emphasis on scale, governance, security, compliance, ownership, metadata, and long-term architecture.

Why is enterprise data integration important for AI?

AI systems need complete, current, and governed data. If enterprise data is fragmented across departments, SaaS tools, legacy systems, and cloud platforms, AI outputs can become incomplete, stale, or unreliable.

What are the biggest enterprise data integration challenges?

Common challenges include legacy systems, cloud and hybrid environments, departmental silos, poor data quality, schema drift, governance complexity, real-time requirements, tool sprawl, and security or compliance constraints.

What is the best enterprise data integration strategy?

The best strategy depends on business goals, latency needs, compliance requirements, and team capacity. Most enterprises combine batch, ELT, CDC, and streaming, and pair them with a governed deployment model, rather than relying on one approach.

What should enterprises look for in a data integration platform?

Enterprises should look for reliable connectors, CDC support, batch and backfill support, schema-change handling, monitoring, recovery, security controls, flexible deployment options, and the ability to route data to multiple destinations without creating point-to-point pipeline sprawl.

About the author

Jeffrey is a data engineering professional with over 15 years of experience, helping early-stage data companies scale by combining technical expertise with growth-focused strategies. His writing shares practical insights on data systems and efficient scaling.