Schema drift in Snowflake occurs when source schemas change without downstream updates, resulting in pipeline failures and rising costs. This article explains how to handle Snowflake schema evolution reliably.

The word “drift” suggests some kind of gentle, gradual process. And when you think about it, schema drift is exactly that. Just like snowflakes that accumulate, small schema changes compound over time, until one day, a machine learning job fails or a successful pipeline produces wrong metrics. The main causes of this are column-level changes (such as additions, deletions, or modifications) or changes to a column’s data type.

Enterprises use Snowflake to produce analytics, AI, or real-time applications. Regardless of the end product, schema drift is still one of the most significant hidden risks in data pipelines. As organizations produce more data, which is inevitable as they grow, they also start adding more sources, streaming larger volumes, and sending more real-time records downstream. As a result, the cost of schema evolution increases over time.

In this post, we're going to discuss why schema drift is a persistent issue in Snowflake pipelines, why we can't resolve it with traditional approaches, and how Estuary treats it as a first-class concern. We'll also mention how Estuary resolves this issue in both real-time and batch pipelines, and how it contributes to teams so that they can trust their data.

Why Schema Drift Is One of the Most Significant Problems in Snowflake Pipelines

Let’s define what schema drift is first. Basically, it refers to any unplanned or incremental change to the base structure of the data as it moves through the pipeline.

In practice, this can take many forms. Column additions or deletions are common, and especially troublesome if the communication between teams is not well-established. The same applies to data type changes (e.g., INT -> VARCHAR, or VARCHAR -> VARIANT).

The usage of semi-structured data is widespread in Snowflake, as well, and we can also observe nested object evolution in those data types (VARIANT). Finally, source teams may rename columns or change their nullability rules, which introduces issues downstream.

Below is an example Snowflake table before schema drift occurs:

sqlCREATE TABLE marketing_events (

event_id INTEGER,

user_id INTEGER,

campaign_id VARCHAR(50),

event_type VARCHAR(10),

utm_source VARCHAR(4)

event_ts TIMESTAMP

);After a while, stakeholders will request deeper analysis to gain better insights into the data. That’s why the team may decide to introduce more columns:

channelcolumn to analyze various marketing channels (VARCHAR(20));costcolumn so that they can calculate the cost of each channel, source, and similar (FLOAT);metadatacolumn that keeps location information per each row (VARIANT).

The source team may decide to change the data type of the user_id column to VARCHAR to reflect how they produce events.

As a consequence, the payload that the team receives will look like this:

json{

"event_id": "12345",

"user_id": "u-456",

"campaign_id": "c-789",

"event_type": "click",

"event_ts": "2026-01-10T12:01:45Z",

"channel": "paid_search",

"cost": 1.27,

"metadata": {

"keyword": "data pipelines",

"region": "EMEA"

}

}When pipelines are built with traditional methods that don't account for schema changes, they assume a fixed schema. This assumption can quickly cascade into failures. A change in the data type of a single column can cause the pipeline to fail and stop data from flowing downstream. This will create delays in the dashboards.

And even if the pipeline doesn’t fail, it will ignore the new columns, leading to silent information loss in the dashboards. Over time, downstream components become fragile. Eventually, they break after the inaccurate data arrives into the pipeline.

When these pipelines process millions or even billions of records, all these scenarios end up being extremely expensive. Incorrect dashboards result in a loss of trust, wrong metrics lead to wrong business decisions, and on-call escalations become more frequent. The cost extends beyond engineering time; it also represents a great opportunity cost.

As you can see, schema drift is both a technical problem and a business risk.

Why Traditional Snowflake Schema Evolution Approaches Break at Scale

In the traditional approach, Snowflake pipelines are often built with components that are glued together. These generally include CDC tools, record queues, custom transformation jobs, and manually managed loaders.

Let’s say our pipeline consists of Debezium + Kafka + custom Snowflake ingestion script.

With this setup, the entire pipeline becomes fragile in the face of schema changes.

- A new column is introduced to the source database table. → An engineer needs to update the schema registry compatibility rules accordingly.

Debeziumproduces a new schema version. → Again, another engineer needs to adapt components to match the new version.- The new field is also introduced to the Kafka topics, which now include wrong records.

- Snowflake ingestion jobs still expect the old schema. → Engineers need to modify the ingestion job, and because incorrect data has already been ingested, they may also need to backfill data and rerun downstream transformation jobs.

Since each step is manual and requires coordination, teams often resort to workarounds:

- They ignore the newly added unknown fields, or

- They cast some columns to error-prone types such as

VARCHARorVARIANT.

While these approaches reduce failures in the short term, they increase the long-term complexity. Eventually, each of these workarounds will have to be replaced with more robust solutions.

As you can see, not only does manual schema management in Snowflake create maintenance overhead, but it also degrades the reliability.

So, these approaches aren't really viable in real-world systems.

Main Principles for Reliable Snowflake Pipelines with Estuary

Estuary handles all schema evolution with a unified streaming approach, which is how it prevents issues from being pushed to downstream components. In addition, schema evolution isn’t treated as an exception. Changes are actually expected, and Estuary designs its pipelines accordingly.

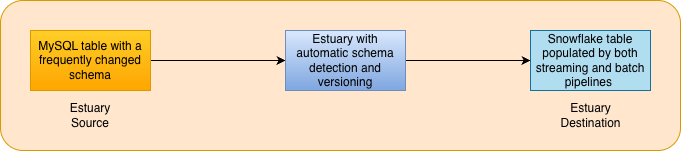

As a data movement layer for enterprises, Estuary unifies real-time and batch data and handles schema evolution automatically.

Below, I'll elaborate on some of its core principles.

Schema Evolution as a First-Class Concern

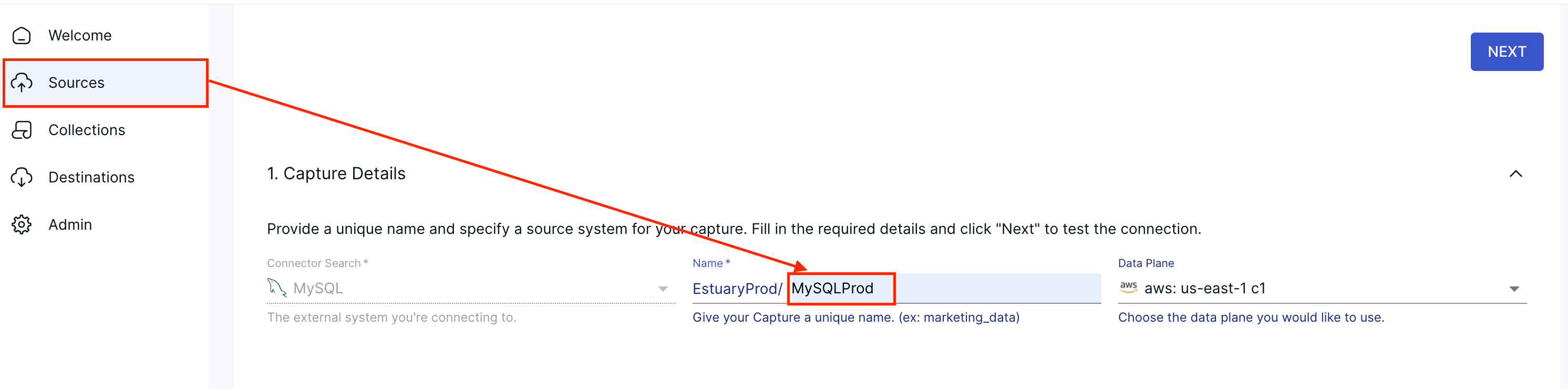

Let’s say we want to ingest data from a MySQL server into Snowflake. We can start a new pipeline in Estuary by setting up a MySQL source:

Once we enter credentials for the database, Estuary runs AutoDiscover to detect source tables and schemas. This process automatically populates the Target Collections section with available tables or other resources from the source system.

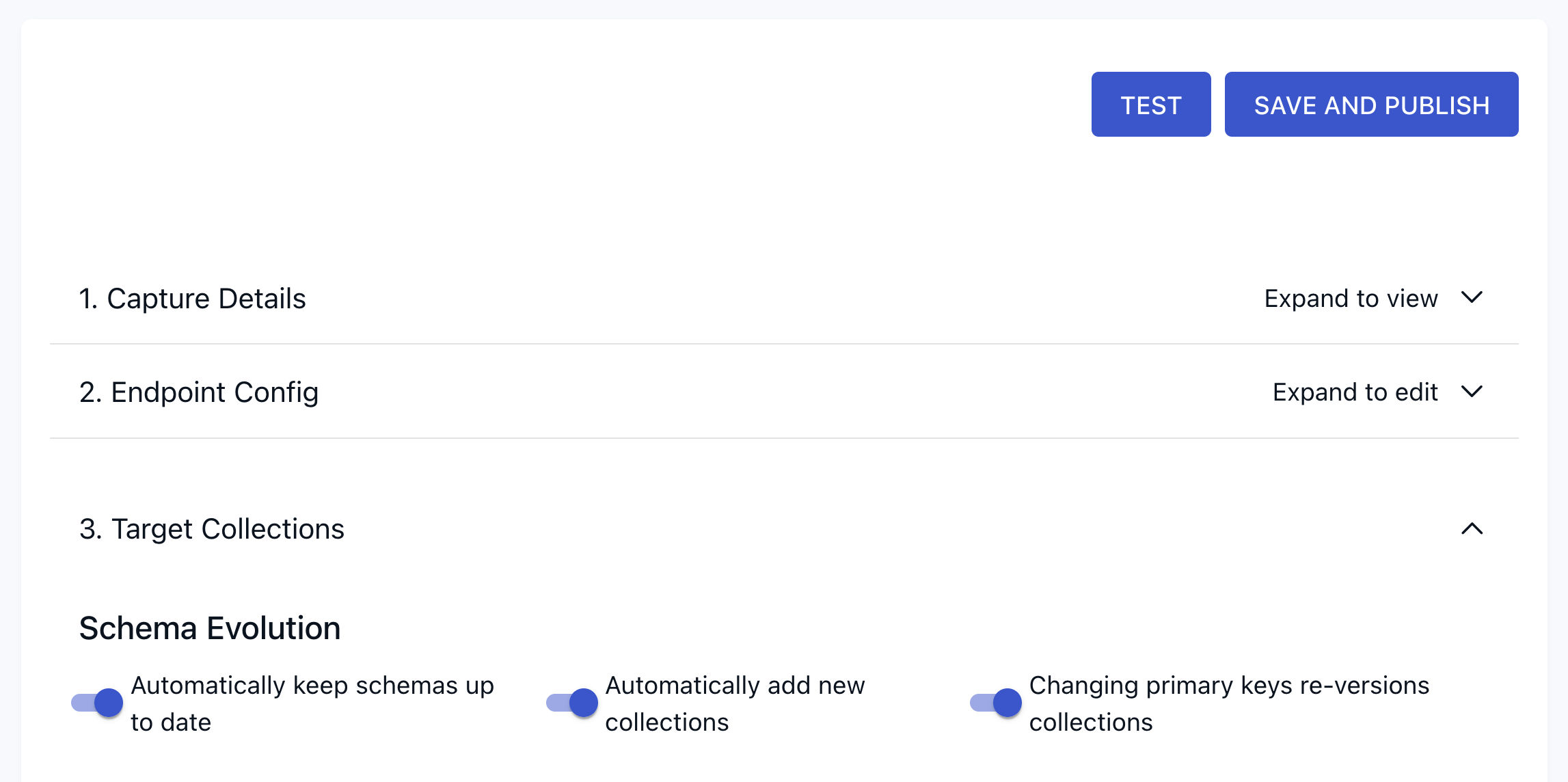

We can also continue to use AutoDiscover while the pipeline is running, tracking schema versions and their evolution over time. This is configured via a set of Schema Evolution settings, which allow Estuary to automatically keep schemas up to date, add new collections when new resources are discovered, and re-version collections when primary keys change.

These options allow Estuary to work with schema evolution dynamically based on the pipeline’s needs.

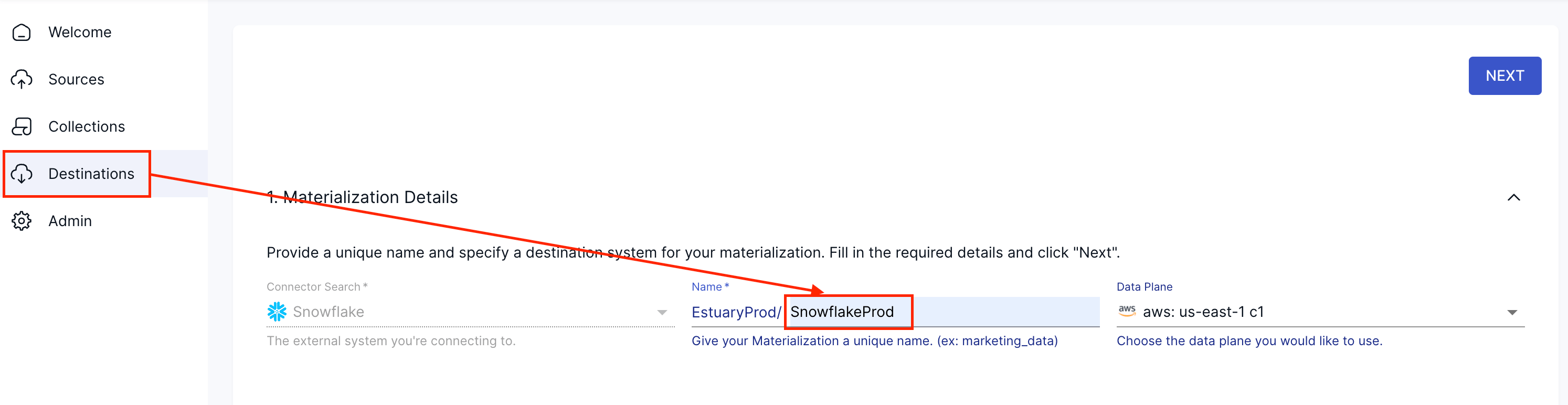

We can also set up our Destination as Snowflake and create the materialization accordingly.

Finally, we can link our source data to our materialization, completing the pipeline.

Throughout the process, we don’t need to define any data types or manually map source data types downstream. If we look behind the scenes, Estuary uses JSON as an intermediary representation, as it can translate between heterogeneous systems without schema rigidity enforcement.

Unified Real-Time and Batch Handling

Schema changes don't depend on how data arrives, whether it’s via streaming CDC or scheduled batch jobs. Estuary’s approach is also not tied to this. It ensures consistency across ingestion modes, which is a significant part of the unified approach it implements.

Automatic Detection and Versioning

With its AutoDiscover capability, Estuary can detect source schema changes automatically, version them, and send them downstream in a controlled manner. The most important point is that it does all of this without breaking any component of the pipeline:

How Does Estuary Handle Schema Drift for Snowflake?

Snowflake is a powerful analytical engine, but it’s not responsible for the data that arrives into it. Estuary is in charge of a pipeline that's capable of handling schema drift, and it provides schema-aware materialization directly into Snowflake.

Built-In Support for CDC and Event Streams

Estuary doesn’t require a custom schema migration logic. It can automatically ingest CDC streams and event data, and when you define a source in the Estuary UI, new columns are automatically added to the destination Snowflake table.

Reliable Backfills and Replays

Estuary natively supports replays and backfills. This is really important since schema changes might require historical data to be reprocessed in case of an issue. It ensures that all fields are populated consistently, without restructuring the entire pipeline.

Schema-Aware Materialization

Following the traditional approach, teams might dump everything into VARIANT, and push complexity to downstream components. Estuary, however, materializes schema-aware tables in Snowflake, which reduces query complexity, compute costs, and downstream failures.

Significant Outcomes: Stability, Cost Savings, and Faster Time to Value

Treating schema drift as a solved problem delivers measurable business outcomes:

Fewer Incidents and On-Call Escalations

If common schema evolution scenarios are automatically handled, the number of pipeline failures is bound to diminish. And even when errors do occur, the recovery will be much faster, saving teams plenty of their precious time. Built-in retries make pipelines more durable by catching and correcting failures instead of taking whole pipelines down.

Pipeline Cost Savings

Let’s consider an engineering team that manages 20 streaming pipelines simultaneously. If each pipeline requires 2 engineering hours per change and encounters only a single change per pipeline per month, that totals 480 engineering hours per year. At $50/hour, this alone amounts to $24,000 in annual savings.

This calculation only takes engineering time into consideration. It doesn’t account for Snowflake warehousing costs (because of backfills and replays), consequences due to wrong business decision, wasted leadership time, or any other hidden costs.

But Estuary also reduces Snowflake compute costs by avoiding backfills or inefficient VARIANT-heavy queries.

In the end, the total yearly savings are likely to be much higher than the number we’ve mentioned. Plus, these changes will also free up your engineers’ time and enable them to focus on new features and improvements.

| Manual approach | With Estuary | |

|---|---|---|

| Engineering time | 480 hours/yr (2 hours/change x 20 pipes x 12 months) | Near zero (automated discovery/updates) |

| Direct labor cost | $24k per year (at $50/hour) | $0 (it’s a feature) |

| Snowflake compute | High, due to frequent backfills and heavy VARIANT queries | Low, thanks to schema-aware materialization |

| Hidden costs | Meetings, on-call assistance, stress | Minimal, as your pipelines are reliable |

| Business risk | High: broken dashboards, wrong decisions, trust issues | Low: stable data flow and clear lineage |

| Opportunity costs | Engineers stuck on maintenance | Capacity for new projects |

Faster Introduction of New Sources

As we all know, AI and LLM applications are built on top of rapidly evolving data. Estuary allows teams to add new sources and fields easily, eliminating weeks of manual pipeline work. This approach improves reliability in the face of schema changes while also providing more room for experimentation.

Improved Compliance and Lineage

When it comes to compliance audits, clear data lineage and schema versioning are critical. Teams can maintain an explicit history of schema changes when new fields are introduced or when changes to the table are made.

Schema Drift as a Solved Problem, Not a Recurring Issue

Enterprises don’t need more custom scripts or methods; they need a dedicated data movement layer with a unified data approach.

Traditional architectures built from different components place extra workload on every team and every consumer. On the other hand, a unified platform handles such complexity with a centralized approach. It enforces consistency across each component and scales as the company grows.

Estuary builds architectures that are highly resilient to schema drifts. It treats real-time and batch ingestion pipelines with a unified approach, making our combined pipelines more resilient with a centralized solution. Additionally, schema discovery and versioning are automated, and our pipelines are stronger against schema evolution as a result. It also creates schema-aware Snowflake materialization and provides flexible deployment models, including BYOC and hybrid options.

The result? Fewer failures along your pipeline and an increased trust in your new architecture, your pipelines, and your business decisions.

Conclusion

If schema drift is one of the significant challenges that your engineering team is facing, it’s time to rethink your data architecture.

Estuary can become your enterprise data movement layer with its unified data approach. It simplifies pipelines, reduces maintenance overhead, and lowers costs significantly, allowing engineers to focus more on improvements.

With Estuary, schema evolution becomes a solved problem rather than a repetitive incident.

FAQs

How do functional workarounds create long-term technical debt?

Can Estuary handle schema drift for real-time streaming data as effectively as batch data?

How does Estuary ensure data lineage and compliance when schemas are constantly evolving?

About the author

Senior Data Engineer with over four years of experience delivering end-to-end analytical solutions across industries including e-commerce, marketplaces, SaaS, proptech, enterprise platforms, and supply chain operations. Strong expertise in AI, machine learning, cloud engineering, and modern data and DataOps architectures, with a focus on scalable analytics, real-time data processing, and production-grade AI systems.