Key Takeaways

- Amazon DynamoDB is built for high-throughput operational workloads, while Amazon Redshift is optimized for analytics, making data movement between the two essential for reporting and BI use cases.

- There are three primary ways to move data from DynamoDB to Redshift, each designed for different latency, scalability, and operational requirements:

(1) Estuary for real-time, managed CDC pipelines

(2) AWS Glue for scheduled, batch-based ETL

(3) DynamoDB Streams with AWS Lambda for custom near-real-time pipelines

- Estuary is the simplest and most scalable approach for continuous synchronization, using DynamoDB Streams to capture changes and automatically materialize them into Redshift with minimal operational overhead.

- AWS Glue is best for batch or transformation-heavy workloads, but it introduces latency and requires managing crawlers, ETL jobs, and scheduling.

- DynamoDB Streams + Lambda enables near real-time updates, but requires custom code, schema mapping, and ongoing maintenance.

Introduction

Today, organizations collect large volumes of semi-structured data in systems like Amazon DynamoDB. While DynamoDB is optimized for high-throughput operational workloads, it is not designed for complex analytics or large-scale reporting.

Moving data from DynamoDB to Amazon Redshift allows teams to analyze operational data using SQL, run complex queries at scale, and generate analytics-ready datasets for business intelligence and decision-making.

In this guide, we’ll explore three practical methods to move DynamoDB to Redshift: using a real-time CDC platform like Estuary, running batch ETL jobs with AWS Glue, and leveraging DynamoDB Streams with AWS Lambda.

Before diving into the migration methods, let’s briefly review DynamoDB and Redshift and how their capabilities complement each other.

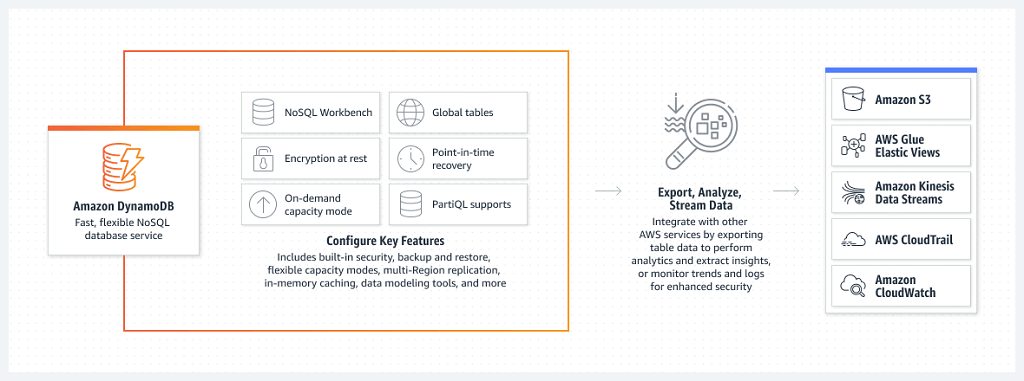

Amazon DynamoDB Overview

Amazon DynamoDB is a fully managed, serverless NoSQL database from AWS. It removes the need to provision, patch, or manage servers, so you can focus on your core business operations.

DynamoDB stores both structured and semi-structured data and scales with your workload. Apart from the primary key, items in the same table do not have to share the same attributes. Your data model can therefore evolve without the schema migrations a traditional relational database requires.

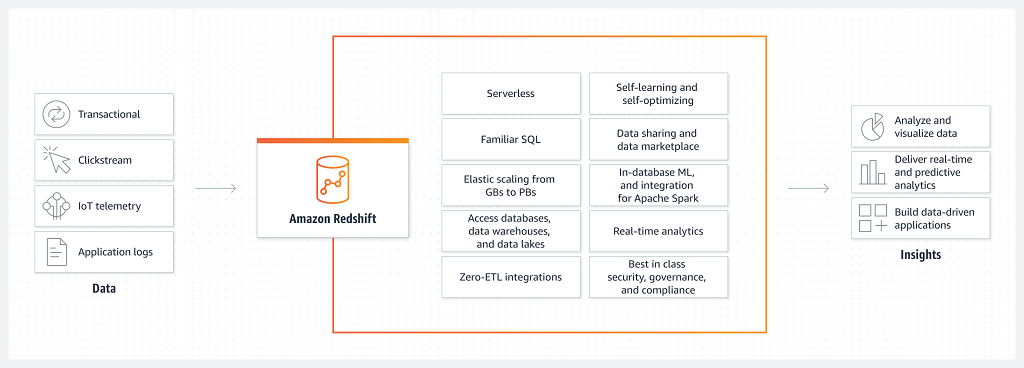

Redshift Overview

As a data warehouse, Amazon Redshift is a robust cloud-based data analytics and management platform. Designed to handle massive datasets, Redshift offers a high-performance, fully managed solution for storing, querying, and analyzing structured and semi-structured data. Its columnar storage architecture and parallel processing capabilities enable lightning-fast query performance, making it well-suited for complex analytic workloads.

In addition, Redshift’s scalability lets you expand storage and compute resources as data volume grows, so performance keeps pace with your workload. These features help you derive meaningful insights and make data-driven decisions.

3 Methods to Move Data from DynamoDB to Redshift

There are several approaches to moving DynamoDB to Redshift, depending on your specific use case. Below are three of the most effective methods:

Method #1: Use No-Code Tools Like Estuary for DynamoDB to Redshift

Method #2: Transfer Data from DynamoDB to Redshift Using AWS Glue (Batch ETL)

Method #3: Migrate Data from DynamoDB to Redshift Using DynamoDB Streams

Method #1: Move DynamoDB to Redshift Using Estuary (Recommended)

Estuary is a no-code–first data integration and Change Data Capture (CDC) platform built for reliable, real-time and batch data movement. It allows teams to move data from Amazon DynamoDB to Amazon Redshift without building or maintaining custom pipelines, Lambda functions, or brittle scripts.

Using DynamoDB Streams, Estuary continuously captures inserts, updates, and deletes from DynamoDB tables and materializes them into Redshift tables through an S3-backed staging process. This ensures your analytics data stays up to date while minimizing operational overhead.

Why Use Estuary for DynamoDB to Redshift:

- Unified Data Movement: Capture, transform, and load DynamoDB data into Redshift using a single managed pipeline instead of stitching together multiple AWS services.

- Seamless Integration: Move data from DynamoDB to Redshift without writing custom code, managing AWS Data Pipeline jobs, or maintaining Lambda-based stream processors.

- Real-Time CDC with DynamoDB Streams: Continuously capture item-level changes from DynamoDB Streams and materialize them into Redshift with low latency, keeping downstream analytics fresh.

- Schema Handling for NoSQL Data: Estuary automatically maps DynamoDB’s semi-structured data into Redshift-compatible tables, reducing manual schema management.

Before you begin, log in to your Estuary account or sign up for free.

Prerequisites:

- DynamoDB tables with DynamoDB Streams enabled (select New and old images for the stream view type).

- AWS credentials for Estuary: either Access Key ID + Secret Access Key, or an IAM Role ARN (if using a role, ensure Max Session Duration = 12 hours).

- An IAM principal with DynamoDB permissions (for example: ListTables, DescribeTable, DescribeStream, GetRecords, GetShardIterator, Scan/Query as needed).

- An S3 bucket for temporary staging files (best performance if it’s in the same AWS region as your Redshift cluster).

- A Redshift user with permission to create tables in the target schema (Estuary automatically creates and manages destination tables based on source schemas).
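You can also script the DynamoDB side of these prerequisites. The Python sketch below does two things:

- It builds an IAM policy that grants the read permissions listed above on specific tables and their streams.
- It builds the UpdateTable arguments that enable a stream with the New and old images view type (NEW_AND_OLD_IMAGES).

The region, account ID, and table names are placeholders. Confirm the exact permission set against Estuary’s DynamoDB connector documentation.

```python
import json

# Placeholder values: replace with your own region, account, and tables.
REGION = "us-east-1"
ACCOUNT_ID = "123456789012"
TABLES = ["orders", "customers"]


def capture_policy(region: str, account_id: str, tables: list[str]) -> dict:
    """IAM policy allowing reads of the given tables and their DynamoDB Streams."""
    table_arns = [f"arn:aws:dynamodb:{region}:{account_id}:table/{t}" for t in tables]
    stream_arns = [f"{arn}/stream/*" for arn in table_arns]
    return {
        "Version": "2012-10-17",
        "Statement": [
            # ListTables does not support resource-level restrictions.
            {"Effect": "Allow", "Action": ["dynamodb:ListTables"], "Resource": "*"},
            {
                "Effect": "Allow",
                "Action": ["dynamodb:DescribeTable", "dynamodb:Scan", "dynamodb:Query"],
                "Resource": table_arns,
            },
            {
                "Effect": "Allow",
                "Action": [
                    "dynamodb:DescribeStream",
                    "dynamodb:GetRecords",
                    "dynamodb:GetShardIterator",
                ],
                "Resource": stream_arns,
            },
        ],
    }


def enable_stream_params(table: str) -> dict:
    """Keyword arguments for DynamoDB UpdateTable that turn on a NEW_AND_OLD_IMAGES stream."""
    return {
        "TableName": table,
        "StreamSpecification": {"StreamEnabled": True, "StreamViewType": "NEW_AND_OLD_IMAGES"},
    }


if __name__ == "__main__":
    print(json.dumps(capture_policy(REGION, ACCOUNT_ID, TABLES), indent=2))
    # With boto3 and AWS credentials available, you could then run, per table:
    #   boto3.client("dynamodb").update_table(**enable_stream_params("orders"))
```

UpdateTable may reject the request if the table already has a stream enabled. Check the StreamSpecification returned by DescribeTable before calling it.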

Learn more about these connectors: DynamoDB, Amazon Redshift

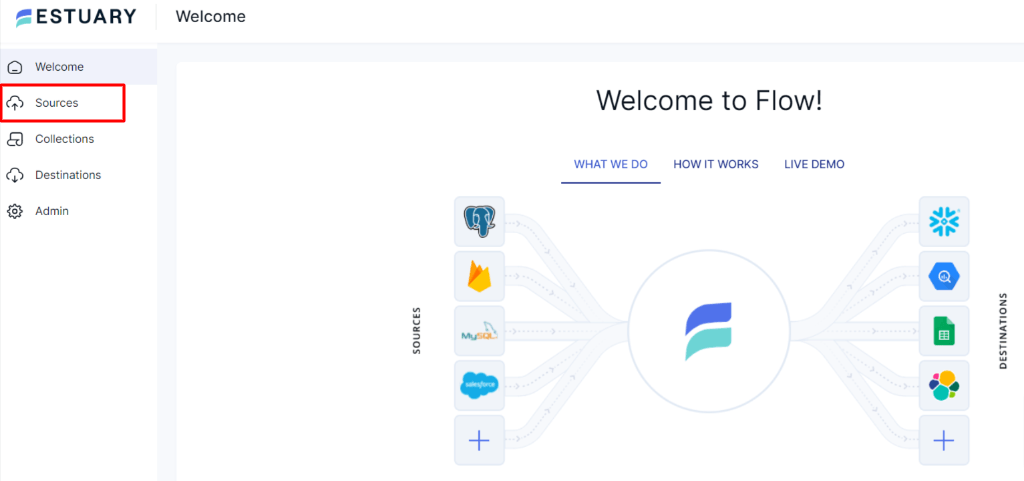

Step 1: Connect and Configure DynamoDB as Source

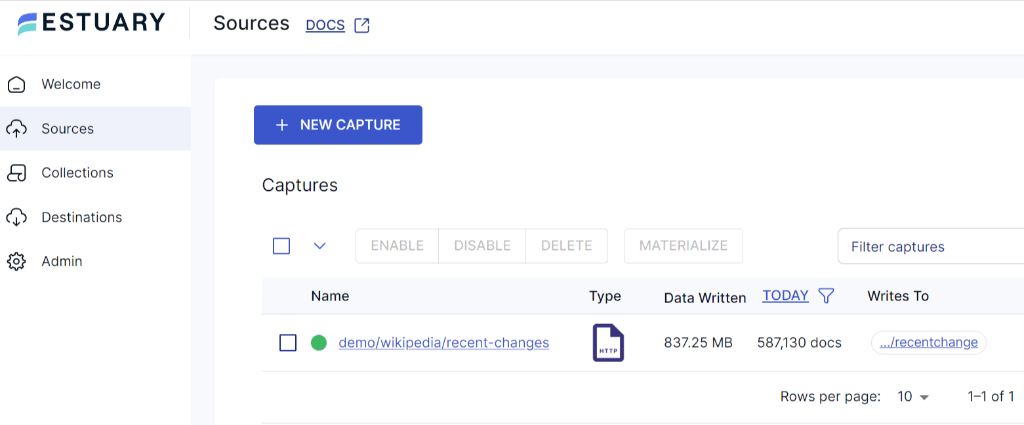

- After logging in, click Sources in the left-side navigation of the Estuary dashboard.

- On the Sources page, click on the + New Capture button.

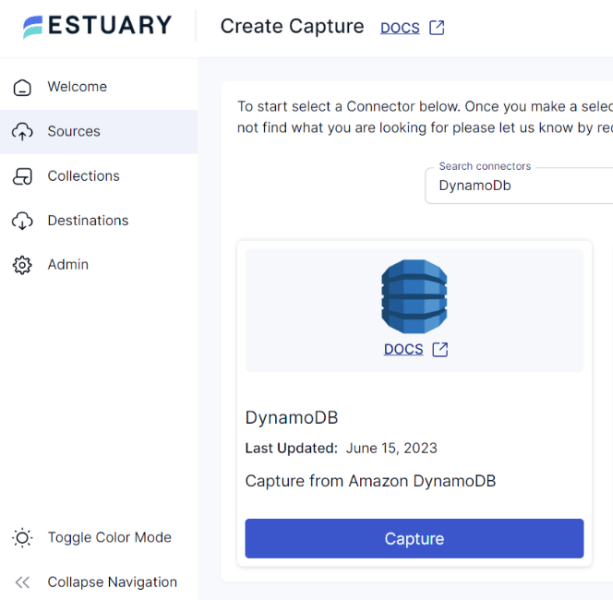

- To set DynamoDB as your data pipeline source, search for the DynamoDB connector in the Search Connectors Box on the Create Capture page. Then click on the Capture button in the same tile.

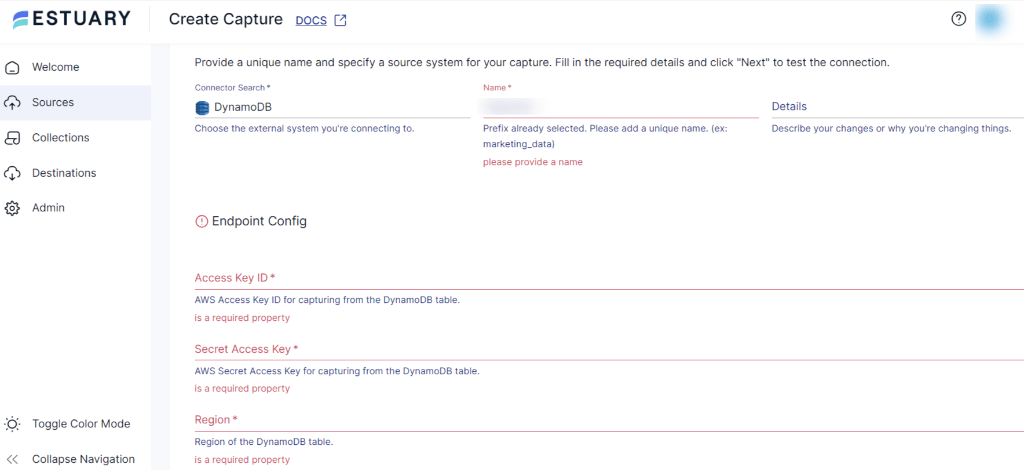

- Provide a unique name for your connector and specify the source system for your capture. Fill in the mandatory Endpoint Config details, such as Access Key ID, Secret Access Key, and Region.

- Click on the Next button to test the connection, followed by Save and Publish.

Estuary will automatically backfill existing table data and then transition to continuously capturing changes from DynamoDB Streams.

Step 2: Connect and Configure Redshift as Destination

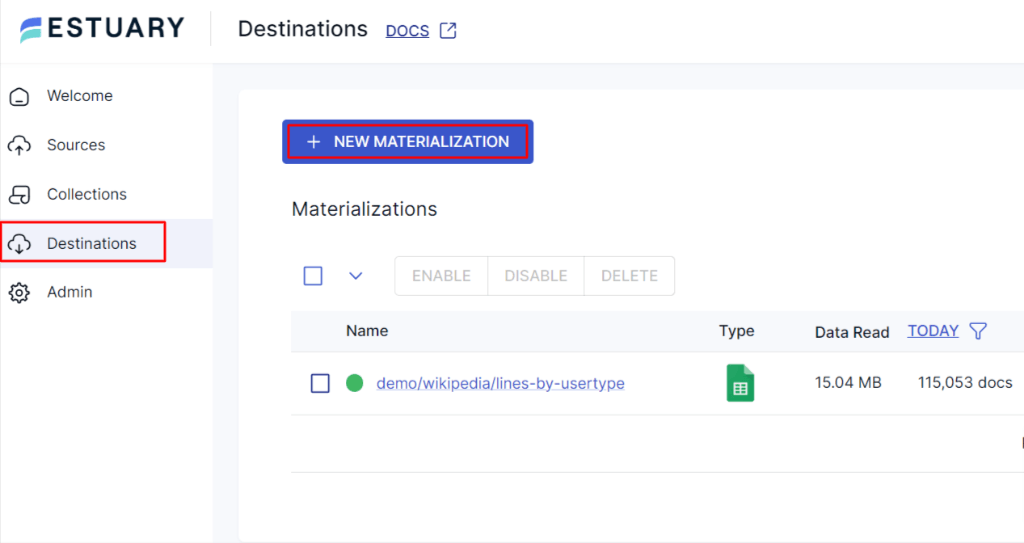

- To configure Amazon Redshift as your pipeline destination, navigate back to the Estuary Dashboard and click on Destinations.

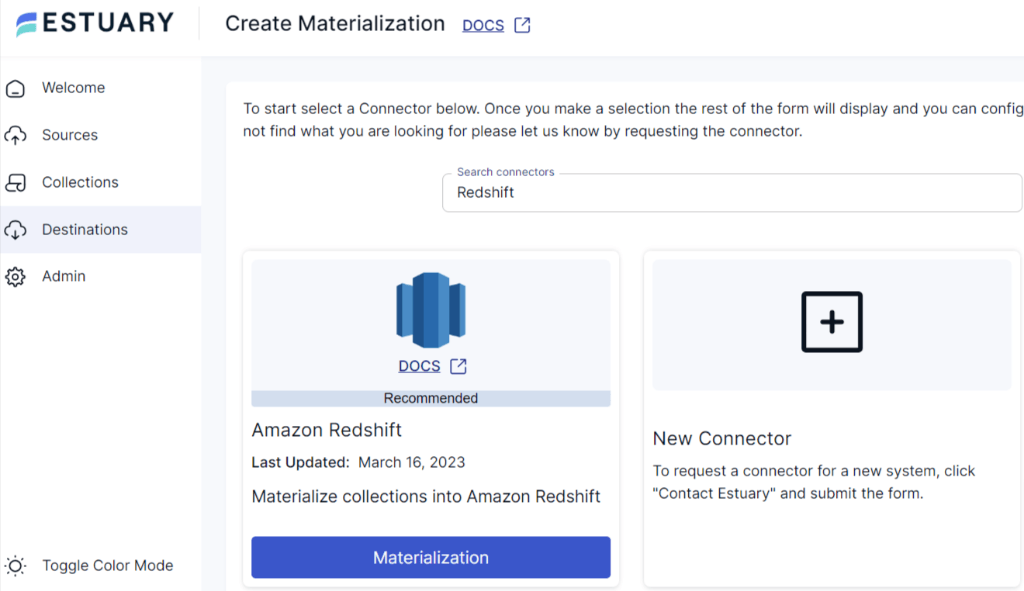

- On the Destinations page, click on the + New Materialization button.

- Search for the Amazon Redshift connector in the Search Connectors Box on the Create Materialization page. Then click on the Materialization button in the same tile.

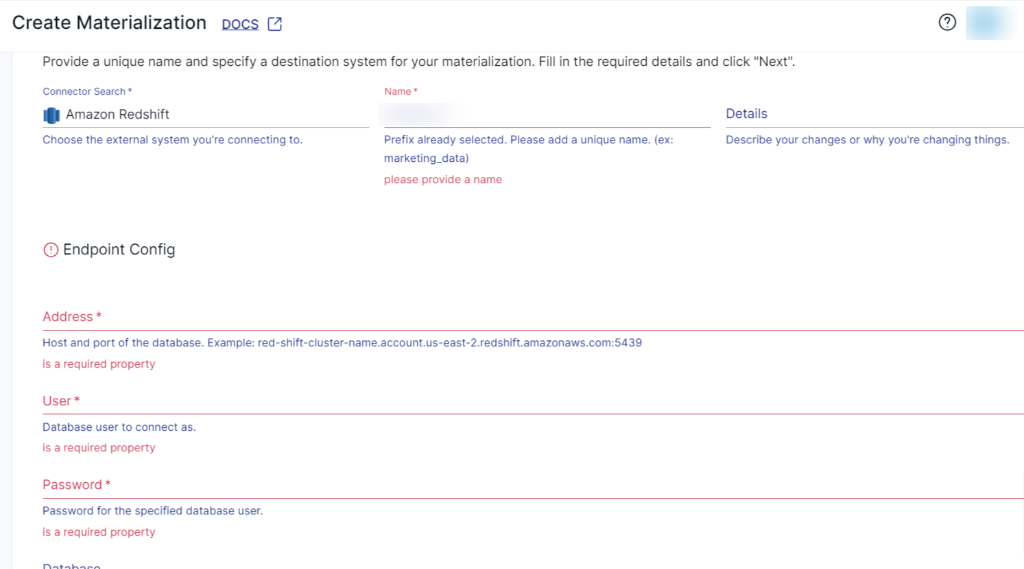

- On the Amazon Redshift Create Materialization page, provide a unique name for your connector and specify the destination system for your materialization. Fill in the mandatory Endpoint Config details, such as Address, Username, Password of the database, S3 Staging Bucket, Access Key ID, Secret Access Key, Region, and Bucket Path.

- Flow collections are selected automatically, but you can use the Source Collections section to choose exactly which collections to materialize into your Redshift database.

- Once you’ve filled in all the fields, click Next, then Save and Publish. Estuary will now replicate your data from DynamoDB to Redshift in real time.

Tip: For best performance, keep one Redshift materialization per schema and add multiple collections as bindings instead of creating multiple materializations.

Optional: If your workload is a good fit, you can enable delta updates per table binding to change how updates are applied in Redshift.
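The difference between the two update modes can be pictured with a simplified model. This is a conceptual Python illustration, not Estuary’s implementation:

- Standard updates behave like an upsert on the collection key, so the Redshift table holds the latest version of each item.
- Delta updates write each incoming change as a new row without reading what is already in the table.

The real connector may combine several changes to the same key before writing. The function and field names below are hypothetical.

```python
from typing import Any

Change = tuple[str, dict[str, Any]]  # (item key, new document)


def apply_standard(table: dict[str, dict], changes: list[Change]) -> dict[str, dict]:
    """Standard updates (simplified): one row per key, overwritten by the latest document."""
    for key, doc in changes:
        table[key] = doc
    return table


def apply_delta(rows: list[Change], changes: list[Change]) -> list[Change]:
    """Delta updates (simplified): every change is appended; existing rows are never read."""
    rows.extend(changes)
    return rows


changes = [
    ("order#1", {"status": "placed"}),
    ("order#1", {"status": "shipped"}),
    ("order#2", {"status": "placed"}),
]
print(apply_standard({}, changes))  # one row per order, holding its latest status
print(len(apply_delta([], changes)))  # one row per change event
```

In this model, delta updates avoid reading existing rows, which tends to suit append-only or event-style data. If you need one current row per item, you would deduplicate at query time.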

When to Choose This Method

This approach is ideal if you need:

- Near real-time analytics on DynamoDB data

- A managed alternative to AWS Data Pipeline or custom Lambda code

- Continuous synchronization instead of one-time exports

- A scalable solution that grows with your data volume

For a comprehensive guide to setting up a complete flow, see Estuary’s documentation.

Method #2: Transfer Data from DynamoDB to Redshift Using AWS Glue (Batch ETL)

AWS Glue is a fully managed ETL service that can be used to move data from Amazon DynamoDB to Amazon Redshift using scheduled batch jobs. In this approach, DynamoDB data is first made available in Amazon S3, transformed using AWS Glue, and then loaded into Redshift using the COPY command.

This method is best suited for periodic migrations or transformation-heavy workflows, rather than real-time analytics.

When to Use AWS Glue

AWS Glue is a good option if you:

- Need batch or scheduled data loads

- Want to apply custom transformations before loading data into Redshift

- Prefer an AWS-native solution

- Do not require continuous, low-latency updates

Prerequisites

Before getting started, ensure you have:

- One or more DynamoDB tables

- Point-in-time recovery (PITR) enabled on each table, which DynamoDB’s export to S3 feature requires, or another export process in place

- An Amazon S3 bucket for staging data

- An Amazon Redshift cluster

- An IAM role with access to DynamoDB, S3, Glue, and Redshift

Step 1: Export DynamoDB Data to Amazon S3

AWS Glue does not stream changes from DynamoDB. In this approach, a snapshot of each table is first exported to Amazon S3, and Glue works from that copy.

You can export DynamoDB data to S3 using:

- DynamoDB’s on-demand export to S3, or

- A scheduled batch export process

DynamoDB’s native export writes files in DynamoDB JSON or Amazon Ion format. Glue can read these files and write the transformed output as Parquet for the Redshift load.
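As a sketch of the on-demand export path, the Python below uses the AWS SDK for Python (boto3) in two steps:

1. Enable point-in-time recovery, which DynamoDB requires before it can export a table.
2. Start a full export in DynamoDB JSON format.

The table name, ARN, bucket, and prefix are placeholders. boto3 is imported inside the function so the request builders can be inspected without it installed.

```python
def pitr_params(table_name: str) -> dict:
    """Arguments for UpdateContinuousBackups: exports require point-in-time recovery."""
    return {
        "TableName": table_name,
        "PointInTimeRecoverySpecification": {"PointInTimeRecoveryEnabled": True},
    }


def export_params(table_arn: str, bucket: str, prefix: str) -> dict:
    """Arguments for ExportTableToPointInTime (a full export of the current table state)."""
    return {
        "TableArn": table_arn,
        "S3Bucket": bucket,
        "S3Prefix": prefix,
        "ExportFormat": "DYNAMODB_JSON",
    }


def start_export(table_name: str, table_arn: str, bucket: str, prefix: str) -> str:
    """Enable PITR, start the export, and return its ARN for status polling."""
    import boto3  # deferred so the builders above work without boto3 installed

    ddb = boto3.client("dynamodb")
    ddb.update_continuous_backups(**pitr_params(table_name))
    resp = ddb.export_table_to_point_in_time(**export_params(table_arn, bucket, prefix))
    return resp["ExportDescription"]["ExportArn"]


# Example (placeholders; requires boto3 and AWS credentials):
# arn = start_export("orders",
#                    "arn:aws:dynamodb:us-east-1:123456789012:table/orders",
#                    "your-bucket", "exports/orders/")
```

Exports run asynchronously, so you can poll describe_export(ExportArn=arn) until its ExportStatus is COMPLETED. DynamoDB writes the data files as gzipped DynamoDB JSON under an AWSDynamoDB/ folder beneath the prefix, and exporting does not consume the table’s read capacity.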

Step 2: Catalog the Data Using AWS Glue Crawler

- Open the AWS Glue console

- Create a Crawler

- Point the crawler to the S3 location containing the exported DynamoDB data

- Run the crawler to populate the Glue Data Catalog

This allows Glue to infer schemas and prepare the data for transformation.
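The crawler setup can also be scripted. This Python sketch builds the arguments for the Glue CreateCrawler API and then starts the crawler with boto3. The crawler name, IAM role, catalog database, and S3 path are hypothetical placeholders.

```python
def crawler_params(name: str, role: str, database: str, s3_path: str) -> dict:
    """Arguments for glue.create_crawler pointing at the exported DynamoDB data."""
    return {
        "Name": name,
        "Role": role,  # IAM role name or ARN the crawler assumes
        "DatabaseName": database,  # Glue Data Catalog database that receives the tables
        "Targets": {"S3Targets": [{"Path": s3_path}]},
    }


def create_and_start_crawler(params: dict) -> None:
    """Create the crawler, then start its first run."""
    import boto3  # deferred so crawler_params can be used without boto3 installed

    glue = boto3.client("glue")
    glue.create_crawler(**params)
    glue.start_crawler(Name=params["Name"])


params = crawler_params(
    "ddb-orders-export-crawler",
    "arn:aws:iam::123456789012:role/glue-crawler-role",
    "ddb_exports",
    "s3://your-bucket/exports/orders/",
)
# create_and_start_crawler(params)  # requires boto3 and AWS credentials
```

DynamoDB JSON wraps each attribute in a type descriptor (for example {"N": "42"}). You can therefore expect the inferred schema to nest values under those descriptors, which is one reason the ETL job in Step 3 flattens and normalizes the data.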

Step 3: Create and Run an AWS Glue ETL Job

- Create a new Glue job (Visual ETL, Notebook, or Script mode)

- Select the DynamoDB export from the Glue Data Catalog as the source

- Apply transformations as needed:

  - Flatten nested attributes

  - Normalize data types

  - Align fields with the Redshift table schema

- Write the transformed data back to Amazon S3

Glue jobs can be run on demand or scheduled.
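To make the flatten and normalize steps concrete, here is a plain-Python sketch of the logic such a job applies to one line of a DynamoDB JSON export. It is not Glue code; inside a Glue job you would express the same idea with Spark or Glue transforms (Glue’s Relationalize transform, for example, flattens nested data). Two choices here are assumptions for illustration:

- Nested fields become parent_child column names.
- Lists are kept as JSON text.

Binary types are omitted.

```python
import json
from decimal import Decimal
from typing import Any


def from_attr(attr: dict) -> Any:
    """Convert one DynamoDB-typed value, such as {"N": "42"}, to a plain Python value."""
    (tag, raw), = attr.items()  # each attribute value has exactly one type descriptor
    if tag in ("S", "BOOL"):
        return raw
    if tag == "N":
        return Decimal(raw)  # Decimal avoids float rounding of DynamoDB numbers
    if tag == "NULL":
        return None
    if tag == "M":
        return {k: from_attr(v) for k, v in raw.items()}
    if tag == "L":
        return [from_attr(v) for v in raw]
    if tag == "SS":
        return list(raw)
    if tag == "NS":
        return [Decimal(n) for n in raw]
    raise ValueError(f"type {tag!r} is not handled in this sketch")


def flatten(obj: dict, prefix: str = "") -> dict:
    """Flatten nested maps into parent_child column names; lists become JSON text."""
    row = {}
    for key, value in obj.items():
        column = f"{prefix}{key}"
        if isinstance(value, dict):
            row.update(flatten(value, column + "_"))
        elif isinstance(value, list):
            row[column] = json.dumps(value, default=str)
        else:
            row[column] = value
    return row


def transform_line(line: str) -> dict:
    """Turn one export line ({"Item": {...}}) into a flat, Redshift-friendly row."""
    item = json.loads(line)["Item"]
    return flatten({name: from_attr(attr) for name, attr in item.items()})


sample = ('{"Item": {"pk": {"S": "user#1"}, "age": {"N": "42"}, '
          '"address": {"M": {"city": {"S": "Oslo"}}}, "tags": {"SS": ["a", "b"]}}}')
print(transform_line(sample))
```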

Step 4: Load Data into Amazon Redshift

Once transformed data is available in S3, load it into Redshift using the COPY command:

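```sql
-- Load the Parquet files written by the Glue job into an existing table.
-- For Parquet, COPY maps file columns to table columns by position,
-- so the column count and order must match target_table.
COPY target_table
FROM 's3://your-bucket/path/'
IAM_ROLE 'arn:aws:iam::account-id:role/redshift-role'
FORMAT AS PARQUET;
```

This efficiently loads large datasets into Redshift for analytics.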

Limitations of the AWS Glue Approach

- Batch-only: No real-time or near-real-time updates

- Higher setup complexity: Requires managing crawlers, jobs, and IAM roles

- Operational overhead: Monitoring and cost management are necessary

- Latency: Data freshness depends on job schedules

For continuous synchronization or low-latency analytics, a CDC-based platform like Estuary is a better fit.

Method #3: Migrate Data from DynamoDB to Redshift Using DynamoDB Streams

DynamoDB Streams is a DynamoDB feature that captures item-level changes to a table in near real time. It maintains a time-ordered sequence of item-level modifications, retained for 24 hours, so you can track item creations, updates, and deletions as they occur.

Migrating from Amazon DynamoDB to Amazon Redshift with DynamoDB Streams involves the following steps:

- Enable DynamoDB Streams for the source table in the DynamoDB console dashboard to capture changes in real-time.

- Create an AWS Lambda function and add the table’s stream as its trigger (an event source mapping), so that Lambda invokes the function with batches of stream records. AWS Lambda runs code in response to events from AWS services or custom-defined events.

- In the Lambda function, process the stream records and transform the data into a Redshift-suitable format. This may include formatting, data enrichment, and other necessary changes.

- Set up an Amazon S3 bucket to temporarily store the transformed data from the Lambda function.

- Create your Amazon Redshift cluster if you don’t have one. Design the table schema to align with the transformed data from DynamoDB.

- From the Lambda function, run the COPY command to load the transformed data from the S3 bucket into the Redshift table.
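The following Python Lambda handler is a minimal sketch of these steps, assuming a stream view type that includes new and old images. It:

- converts stream records to newline-delimited JSON,
- stages one file per batch in S3, and
- issues COPY through the Amazon Redshift Data API.

The environment variable names, the staging_changes table, and the op column are hypothetical. Numbers are converted to int or float for brevity, which can lose precision.

```python
import json
import os
import uuid

try:
    import boto3  # bundled with the AWS Lambda Python runtime
except ImportError:  # allows the transform logic to be tested locally without boto3
    boto3 = None


def plain(attr: dict):
    """Minimal DynamoDB-JSON-to-Python conversion for S, N, BOOL, NULL, M and L values."""
    (tag, raw), = attr.items()
    if tag == "N":
        return float(raw) if any(c in raw for c in ".eE") else int(raw)
    if tag == "M":
        return {k: plain(v) for k, v in raw.items()}
    if tag == "L":
        return [plain(v) for v in raw]
    if tag == "NULL":
        return None
    return raw  # S and BOOL values are already plain


def to_rows(records: list) -> list:
    """One row per stream record, tagged with the operation (INSERT, MODIFY or REMOVE)."""
    rows = []
    for rec in records:
        change = rec["dynamodb"]
        # REMOVE events carry no NewImage, so fall back to the old image or the keys.
        image = change.get("NewImage") or change.get("OldImage") or change["Keys"]
        row = {name: plain(value) for name, value in image.items()}
        row["op"] = rec["eventName"]
        rows.append(row)
    return rows


def handler(event, context):
    rows = to_rows(event.get("Records", []))
    if not rows:
        return {"staged": 0}

    bucket = os.environ["STAGING_BUCKET"]
    key = f"dynamodb-changes/{uuid.uuid4()}.json"
    body = "\n".join(json.dumps(row) for row in rows)
    boto3.client("s3").put_object(Bucket=bucket, Key=key, Body=body.encode("utf-8"))

    # Load the staged file; column names in staging_changes must match the JSON keys.
    copy_sql = (
        f"COPY staging_changes FROM 's3://{bucket}/{key}' "
        f"IAM_ROLE '{os.environ['REDSHIFT_COPY_ROLE_ARN']}' FORMAT AS JSON 'auto';"
    )
    boto3.client("redshift-data").execute_statement(
        ClusterIdentifier=os.environ["REDSHIFT_CLUSTER_ID"],
        Database=os.environ["REDSHIFT_DATABASE"],
        DbUser=os.environ["REDSHIFT_DB_USER"],
        Sql=copy_sql,
    )
    return {"staged": len(rows)}
```

COPY only appends rows, so applying MODIFY and REMOVE events to a final table still needs a follow-up step. One option is a MERGE, or a DELETE followed by an INSERT, from staging_changes keyed on the primary key. Running COPY once per Lambda batch also produces many small loads; buffering records before loading is usually more efficient.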

By leveraging DynamoDB Streams, you can create an efficient and near real-time migration process from DynamoDB to Redshift.

Limitations of Using DynamoDB Streams

While using DynamoDB Streams to migrate data from DynamoDB to Redshift offers real-time integration capabilities, there are certain limitations to consider:

- Lambda Function Development: Configuring a Lambda function to process DynamoDB Streams and transform data requires coding skills, error handling, and an understanding of event-driven architecture. Developing and maintaining these functions can be challenging.

- Data Complexity: Mapping DynamoDB’s NoSQL data to Redshift’s relational tables can be complex. Nested maps and lists have no direct column equivalent, and the same attribute can hold different types in different items. Some values therefore need additional processing and lack a straightforward one-to-one mapping.
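One way to see why the mapping is rarely one-to-one is to infer column types from sample items. The Python sketch below maps DynamoDB type descriptors to Redshift types. It falls back to Redshift’s SUPER type for nested values and for attributes whose type varies between items. The mapping choices are assumptions you would adjust; for example, DOUBLE PRECISION is used for numbers here, but DECIMAL with an explicit precision is safer when exact values matter.

```python
# Illustrative mapping from DynamoDB type descriptors to Redshift column types.
# Binary types (B, BS) are left out of this sketch.
DDB_TO_REDSHIFT = {
    "S": "VARCHAR(65535)",
    "N": "DOUBLE PRECISION",
    "BOOL": "BOOLEAN",
    "M": "SUPER",
    "L": "SUPER",
    "SS": "SUPER",
    "NS": "SUPER",
}


def infer_columns(items: list[dict]) -> dict[str, str]:
    """Pick a Redshift type per attribute from DynamoDB-JSON sample items."""
    seen: dict[str, set[str]] = {}
    for item in items:
        for name, attr in item.items():
            (tag, _), = attr.items()
            if tag != "NULL":  # NULL says nothing about the attribute's real type
                seen.setdefault(name, set()).add(tag)
    columns = {}
    for name, tags in seen.items():
        if len(tags) == 1:
            columns[name] = DDB_TO_REDSHIFT[next(iter(tags))]
        else:
            columns[name] = "SUPER"  # mixed types across items, e.g. N in one, S in another
    return columns  # attributes seen only as NULL get no column in this sketch


def create_table_sql(table: str, columns: dict[str, str]) -> str:
    """Render a CREATE TABLE statement for the inferred columns."""
    cols = ",\n  ".join(f'"{name}" {typ}' for name, typ in sorted(columns.items()))
    return f"CREATE TABLE IF NOT EXISTS {table} (\n  {cols}\n);"


samples = [
    {"pk": {"S": "user#1"}, "age": {"N": "42"}, "prefs": {"M": {"theme": {"S": "dark"}}}},
    {"pk": {"S": "user#2"}, "age": {"S": "unknown"}, "nickname": {"NULL": True}},
]
print(create_table_sql("users", infer_columns(samples)))
```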

Conclusion

Moving data from Amazon DynamoDB to Amazon Redshift makes it possible to analyze high-volume operational data using SQL and run analytics at scale. While DynamoDB is built for fast, transactional workloads, Redshift is optimized for reporting, dashboards, and complex queries.

In this guide, we covered three approaches: batch ETL with AWS Glue, near real-time pipelines using DynamoDB Streams, and a managed CDC-based approach with Estuary. Each method has trade-offs in terms of latency, complexity, and maintenance.

For teams that need continuous synchronization with minimal operational effort, Estuary offers the simplest path. By handling change capture, schema mapping, staging, and loading automatically, Estuary keeps Redshift in sync with DynamoDB without custom code or fragile infrastructure.

Choose the approach that best matches your data freshness and operational needs—and if real-time analytics is the goal, a managed CDC solution like Estuary is often the fastest path forward.

Effortlessly migrate Amazon DynamoDB to Redshift with Estuary—get started with a free pipeline now!


FAQs

Can DynamoDB data be queried directly in Redshift?

Does DynamoDB support real-time replication to Redshift?

Can DynamoDB Streams be used to load data into Redshift?

Is AWS Glue suitable for DynamoDB to Redshift migration?

About the author

Jeffrey is a data engineering professional with over 15 years of experience, helping early-stage data companies scale by combining technical expertise with growth-focused strategies. His writing shares practical insights on data systems and efficient scaling.