Amazon DynamoDB is known for its scalability and flexibility in handling massive datasets, while Snowflake provides a robust analytical environment designed for fast, reliable insights. By integrating these platforms, organizations can continuously stream data from operational systems into Snowflake, enabling real-time analytics and better decision-making.

Whether you're setting up a one-time data transfer or building a real-time integration pipeline, this guide walks you through three effective approaches to move or sync DynamoDB data into Snowflake:

- Manually exporting and loading data

- Using DynamoDB Streams for event-driven replication

- Leveraging SaaS tools for low-latency, real-time data integration

What Is DynamoDB? An Overview

Amazon DynamoDB is a NoSQL database designed to seamlessly store and retrieve data. With its schemaless design, DynamoDB accommodates structured and semi-structured data while ensuring low-latency access and high availability. This enables you to efficiently manage diverse data types.

To capture and stream data in real time, DynamoDB offers Streams that provide a time-ordered sequence of changes to data items. This feature allows you to track updates, trigger downstream actions, and integrate DynamoDB with analytical systems like Snowflake. By using Streams, you can implement event-driven architectures and enable real-time data pipelines.

What Is Snowflake? An Overview

Snowflake is a powerful cloud-based data warehouse that allows you to seamlessly store, access, and retrieve data. You can use ANSI SQL and advanced SQL with Snowflake to efficiently handle a wide range of structured and semi-structured data types. This helps you conduct in-depth analysis, achieve deeper insights, and make data-driven decisions.

Data loading in Snowflake is built around internal and external stages. Internal stages hold files uploaded from local systems, while external stages reference files in cloud storage (like S3) so they can be loaded directly into a Snowflake table. These stages streamline data integration, making it easier to load and continuously sync data into Snowflake for analysis.

3 Methods to Move Data from DynamoDB to Snowflake

- Method #1: Manually Migrate Data from DynamoDB to Snowflake

- Method #2: Move Data from DynamoDB to Snowflake Using DynamoDB Streams

- Method #3: Load Data from DynamoDB to Snowflake Using SaaS Tools

Method #1: Manually Move Data from DynamoDB to Snowflake (Batch Integration)

Manually moving data from DynamoDB to Snowflake involves a series of steps and is best suited for initial setup or infrequent batch updates. This approach can be useful for one-time data transfers or as a foundation before implementing a more automated integration. Here are the prerequisites:

- An active AWS account with access to DynamoDB tables.

- An active Snowflake account with the target database.

Step 1: Extract Data from DynamoDB

To extract data from DynamoDB, you can use the AWS Management Console or AWS CLI.

Using the console:

- Log in to your AWS Management Console.

- Navigate to the DynamoDB service and select the table you want to export. Then choose the Operation Builder tab.

- In Operation Builder, create a scan or query operation based on your requirements. Add filters, conditions, and attributes that you need while exporting data.

- In the Result tab, select Export to CSV.

- Specify the filename and location to store the CSV file and click Save.

- The data, exported from the DynamoDB table in the CSV file format, will now be saved in your local system.

Using the CLI:

- Install and configure AWS CLI with your AWS credentials.

- Use the aws dynamodb scan command to retrieve data from the DynamoDB table. The AWS CLI --output flag accepts json, yaml, text, or table, but not csv, so export the data as JSON:

    aws dynamodb scan --table-name Employee_data --output json > output.json

In this command, Employee_data is the DynamoDB table name and output.json is the output file, which will be saved on your local system.

- Convert the JSON output to CSV, then clean and enrich the data as required.
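When the scan is exported as JSON, each attribute arrives wrapped in a DynamoDB type tag (for example {"S": "Ann"} or {"N": "42"}), so it needs flattening before it can be loaded as CSV. The following is a minimal Python sketch of that conversion, not a definitive implementation. The function names plain_value and scan_to_csv are hypothetical, and the sketch assumes the whole scan result fits in memory.

```python
import csv
import io
import json


def plain_value(attr):
    """Convert one DynamoDB-typed attribute, e.g. {"S": "Ann"}, to a flat value."""
    type_tag, raw = next(iter(attr.items()))
    if type_tag in ("S", "N", "B", "BOOL"):
        # Numbers stay as the original digit strings so no precision is lost.
        return raw
    if type_tag == "NULL":
        return None
    # Sets, lists and maps have no flat CSV form, so store them as JSON text.
    return json.dumps(raw, sort_keys=True)


def scan_to_csv(scan_output):
    """Render the "Items" of a scan response as CSV text with a header row."""
    items = [
        {name: plain_value(attr) for name, attr in item.items()}
        for item in scan_output.get("Items", [])
    ]
    # Items are schemaless, so the header is the union of all attribute names.
    columns = sorted({name for item in items for name in item})
    buffer = io.StringIO()
    writer = csv.DictWriter(buffer, fieldnames=columns, lineterminator="\n")
    writer.writeheader()
    writer.writerows(items)  # attributes missing from an item become empty cells
    return buffer.getvalue()
```

To use it, load the exported file with json.load, pass the result to scan_to_csv, and write the returned text to output.csv. Because this sketch writes a header row, the Snowflake file format used later would need SKIP_HEADER = 1 so the header is not loaded as data.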

Step 2: Store Data in an Amazon S3 Bucket

Next, you’ll need to move the CSV files to the Amazon S3 bucket. There are two ways to do this:

Using the console:

- Choose an existing Amazon S3 bucket or create one in your AWS account. Need help? Refer to this documentation.

- After selecting or creating an S3 bucket, create a new folder in the bucket.

- Navigate to that specific folder and click the Upload button.

- In the Upload dialog, choose Add Files and select the CSV files from your local machine.

- Configure other settings as needed, such as metadata and permissions.

- Click the Start Upload button to upload the CSV file to your S3 bucket, where it can be accessed later by Snowflake during the loading process.

Using the CLI:

- You can use the aws s3 cp command in the AWS CLI to copy the CSV file to your S3 bucket. Here’s an example of the command:

    aws s3 cp "local_file_path/output.csv" s3://bucket_name/folder_name/

Replace local_file_path/output.csv with the local path of your CSV file and bucket_name/folder_name with the name and path of your S3 bucket. The trailing slash makes the CLI treat folder_name as a folder prefix rather than as the name of the uploaded object.

Step 3: Copy Data to Snowflake

With your DynamoDB data now stored in S3 as CSV files, you can proceed to load it into Snowflake for analysis. This step completes the batch-style integration between the two platforms.

- To upload CSV files into the Snowflake table, log into your Snowflake account.

- In Snowflake, an external stage refers to the location where the data files are stored or staged, such as the S3 bucket. You’ll need to create an external stage that points to your S3 bucket. To create one, navigate to the Worksheet tab, where you can execute SQL queries and commands.

    CREATE OR REPLACE STAGE <stage_name>
      URL = 's3://<bucket_name>/<path>'
      CREDENTIALS = ( AWS_KEY_ID = '<aws_key_id>' AWS_SECRET_KEY = '<aws_secret_key>' )
      FILE_FORMAT = ( TYPE = CSV );

Replace stage_name with the name you want to give to your external stage and make sure the URL points to the location where data files are stored in the S3 bucket. Specify the AWS credentials and set the file format type to CSV. Snowflake treats a stage as external whenever it has a URL, so no separate EXTERNAL keyword or storage provider clause is needed.

- Use the existing target Snowflake table or create one using the SQL statements.

- With the stage and target table in place, you can now use the COPY INTO command to load data from the S3 stage into your Snowflake table. Here’s an example:

    COPY INTO target_table
      FROM @stage_name/folder_name
      FILE_FORMAT = ( TYPE = CSV );

Replace target_table with the name of your Snowflake target table, @stage_name with the name of your Snowflake external stage pointing to the S3 bucket, and folder_name with the specific folder path within your S3 bucket where the CSV files are located.

- After executing the above command, the CSV file data from the S3 bucket will be loaded into the Snowflake target table.

- For more automation, you can use Snowpipe, Snowflake’s continuous data ingestion service, to monitor your S3 bucket and load new files into Snowflake as they arrive. While this adds some level of automation, it's still fundamentally a batch-based ingestion mechanism and does not provide real-time data streaming or change data capture.

    CREATE OR REPLACE PIPE snowpipe_name
      AUTO_INGEST = TRUE
      AS
      COPY INTO target_table
      FROM @stage_name/folder
      FILE_FORMAT = ( TYPE = CSV );

Replace snowpipe_name with the preferred name, target_table with the Snowflake destination table, and @stage_name/folder with the path of your data in the external stage. Note that AUTO_INGEST = TRUE only takes effect once the S3 bucket is configured to send event notifications to the pipe's SQS queue.
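For the step above that asks for a target table, one option is to derive the table definition from the CSV header. The following Python sketch illustrates the idea under some assumptions: the CSV has a header row, every column is loaded as VARCHAR (to be cast later), and column names are unique after cleanup. The function name create_table_sql is hypothetical.

```python
import csv
import io
import re


def create_table_sql(table_name, csv_text):
    """Build a CREATE TABLE statement whose columns mirror the CSV header."""
    header = next(csv.reader(io.StringIO(csv_text)))
    columns = []
    for name in header:
        # Keep only letters, digits and underscores so no quoting is needed.
        safe = re.sub(r"[^A-Za-z0-9_]", "_", name.strip()).upper()
        if not safe or safe[0].isdigit():
            # Unquoted identifiers cannot be empty or start with a digit.
            safe = "COL_" + safe
        columns.append(f"    {safe} VARCHAR")
    return (
        f"CREATE TABLE IF NOT EXISTS {table_name} (\n"
        + ",\n".join(columns)
        + "\n);"
    )
```

The returned statement can be pasted into a Snowflake worksheet before running COPY INTO. Loading everything as VARCHAR is a deliberately conservative choice, since DynamoDB items are schemaless and a column may mix types.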

That’s it! You’ve successfully completed the DynamoDB to Snowflake ETL process manually. Congratulations.

While this manual process works for initial data transfers or periodic syncing, it involves numerous steps, introduces latency, and requires technical expertise with AWS and Snowflake. These requirements can slow down the migration and make it difficult to keep data in Snowflake up to date, especially if you need real-time integration or continuous synchronization.

Method #2: Stream DynamoDB Data into Snowflake Using DynamoDB Streams

Want to reduce manual steps and begin streaming data from DynamoDB to Snowflake in near real time? DynamoDB Streams is a native AWS feature that captures changes in your database and enables event-driven integrations with other systems, including Snowflake.

To do that, you must enable a stream on the table you want to replicate and write a Lambda function that processes its change records. Here’s how to do it:

- Enable DynamoDB Stream: In the AWS Management Console, select the DynamoDB table you want to integrate with Snowflake. Under the Overview tab, enable Stream to capture real-time data changes. These changes will serve as the source for your data pipeline.

- Create an external stage: Create an external stage in Snowflake that points to your S3 bucket. This stage will be used to receive the streamed data from DynamoDB and load it into Snowflake tables.

- Configure the Lambda function: Create and configure the Lambda function using the AWS Lambda service and set the DynamoDB stream as its trigger. In the function, write the code that reads the change records from the stream and exports them to your S3 bucket. This can also include converting the data into CSV or JSON file format, depending on your Snowflake stage setup.

- Load Data into Snowflake: Use the COPY INTO command (or Snowpipe) to ingest the streamed records stored in S3 into your Snowflake tables. While this achieves near-real-time ingestion, it still requires monitoring and maintenance of custom scripts and cloud infrastructure.
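The Lambda step above can be sketched in Python as follows. This is a minimal illustration under stated assumptions, not a production implementation: the TARGET_BUCKET environment variable, the dynamodb-stream/ key prefix, and the function names are hypothetical, and the change records are written as newline-delimited JSON with attributes left in DynamoDB's typed format. Error handling and retries are omitted.

```python
import json
import os
from datetime import datetime, timezone


def records_to_json_lines(event):
    """Turn a DynamoDB Streams batch into newline-delimited JSON text."""
    lines = []
    for record in event.get("Records", []):
        change = record["dynamodb"]
        lines.append(json.dumps({
            "event_name": record["eventName"],  # INSERT, MODIFY or REMOVE
            "sequence_number": change.get("SequenceNumber"),
            "keys": change["Keys"],
            # NewImage is absent for REMOVE events and for KEYS_ONLY streams.
            "new_image": change.get("NewImage"),
        }, sort_keys=True))
    return "\n".join(lines) + "\n" if lines else ""


def handler(event, context):
    """Lambda entry point: write each stream batch to S3 as one object."""
    import boto3  # provided by the AWS Lambda Python runtime

    body = records_to_json_lines(event)
    if not body:
        return {"written": 0}
    bucket = os.environ["TARGET_BUCKET"]  # hypothetical configuration value
    stamp = datetime.now(timezone.utc).strftime("%Y/%m/%d/%H%M%S%f")
    key = f"dynamodb-stream/{stamp}-{context.aws_request_id}.json"
    boto3.client("s3").put_object(
        Bucket=bucket, Key=key, Body=body.encode("utf-8")
    )
    return {"written": body.count("\n")}
```

Writing one object per batch keeps the function stateless. On the Snowflake side, files in this shape would need a stage or COPY INTO statement with a JSON file format rather than CSV, and the event_name field lets downstream SQL tell inserts, updates, and deletes apart.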

While this method enables near real-time integration between DynamoDB and Snowflake, it comes with trade-offs: you must write and maintain custom code, handle error recovery, and manage multiple cloud services. For teams without dedicated engineering resources, this complexity can become a bottleneck to achieving truly reliable data streaming.

Method #3: Integrate DynamoDB with Snowflake Using SaaS Tools (Real-Time Streaming)

Looking for another option? You’re in luck.

SaaS platforms provide an intuitive, low-code interface for setting up real-time integrations between operational systems like DynamoDB and analytics platforms like Snowflake. These tools eliminate the need for complex scripts or infrastructure, making it easy to stream data continuously and reliably.

Estuary is a powerful SaaS platform that simplifies the DynamoDB to Snowflake integration process through real-time, change data capture (CDC) pipelines. With just a few clicks, you can continuously stream updates from your DynamoDB tables directly into Snowflake, ensuring your analytics are always based on the latest operational data.

With its change data capture (CDC) capabilities, Estuary ensures low-latency synchronization between DynamoDB and Snowflake. Any inserts, updates, or deletes in DynamoDB are automatically streamed to Snowflake, enabling you to maintain a reliable single source of truth for analytics and AI applications.

Here's a step-by-step guide to setting up a DynamoDB to Snowflake data pipeline using Estuary. But before proceeding, let’s understand the prerequisites:

- One or more DynamoDB tables with DynamoDB streams enabled.

- A Snowflake account that includes a target database, a user with appropriate permissions, a virtual warehouse, and a predefined schema.

- Snowflake account's host URL.

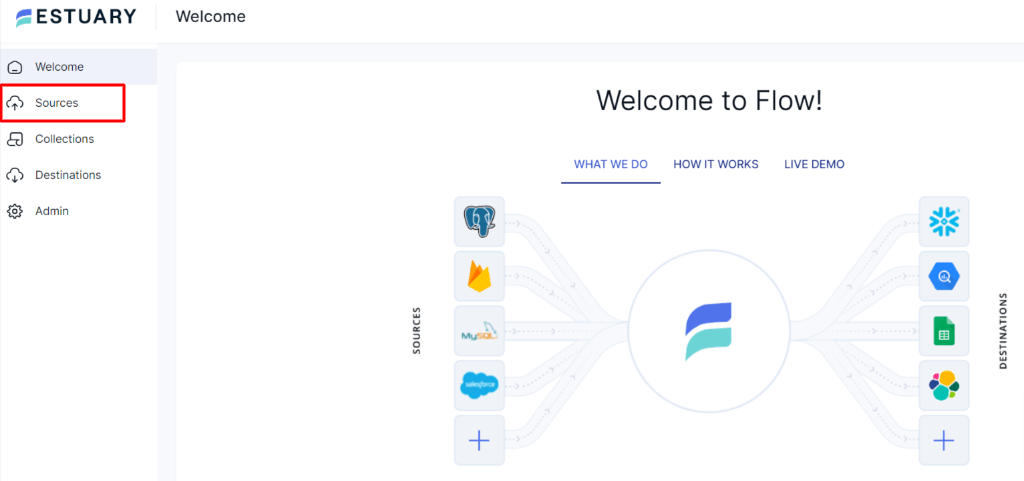

Step 1: Sign Up or Log In

- To create a DynamoDB to Snowflake data pipeline, log in to your Estuary account or create one for free.

Step 2: Connect and Configure DynamoDB as Source

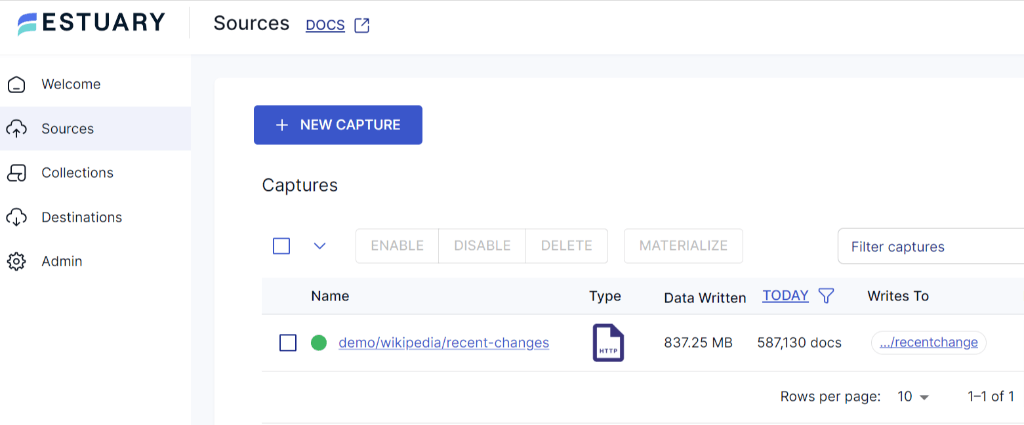

- After logging in, you’ll land on Estuary’s dashboard. To connect DynamoDB as the source of your data pipeline, locate and select Sources on the left-hand side of the dashboard.

- Click the + New Capture button on the Sources page.

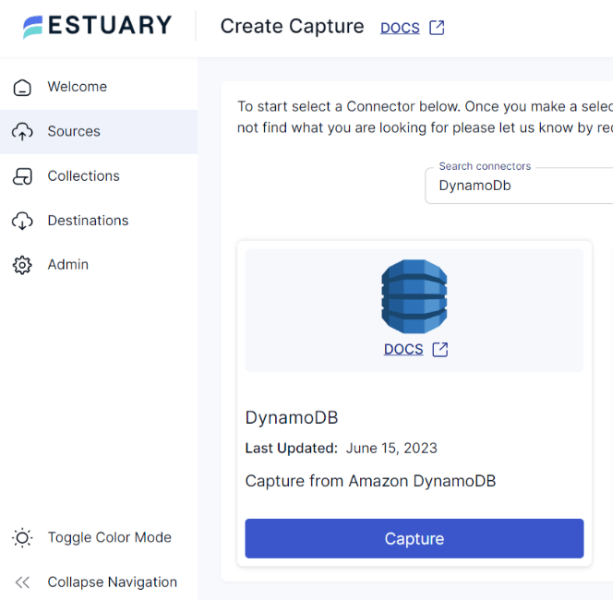

- You’ll be directed to the Create Capture page. Search for the DynamoDB connector in the Search Connector Box.

- Click the Capture button.

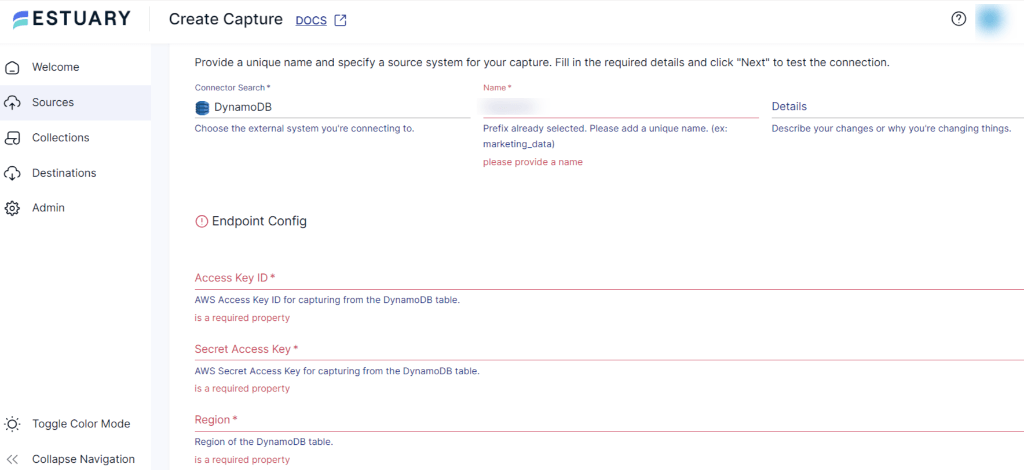

- Now, the DynamoDB Create Capture page will appear. Provide a unique name for your connector and mention the source system for your capture. Fill in the Endpoint Details, such as Access Key ID, Secret Access Key, and Region.

- Once you fill in all the details, click Next and test your connection. Then click Save and Publish.

Step 3: Connect and Configure Snowflake as Destination

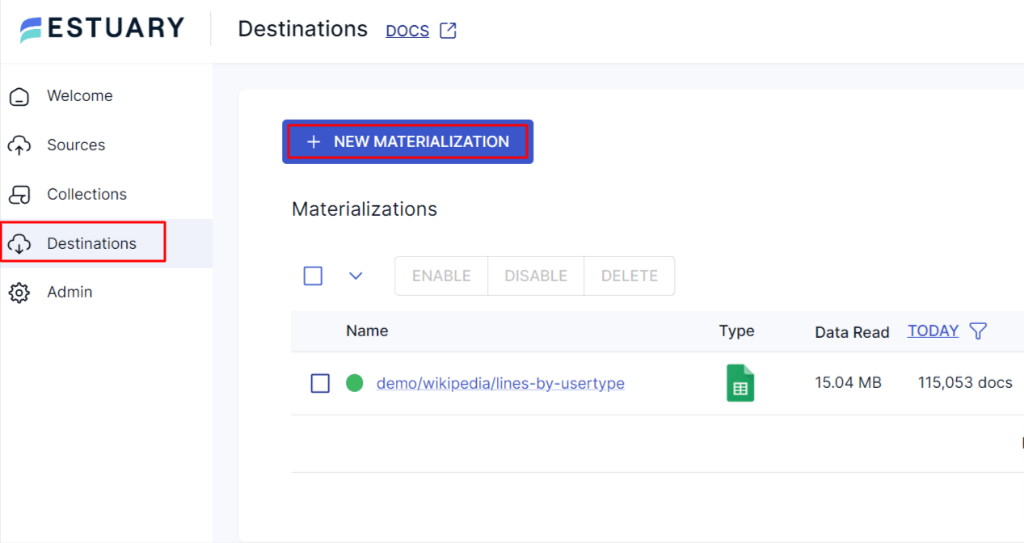

- Navigate back to the Estuary dashboard to connect Snowflake as the destination of your data pipeline. Locate and select Destinations on the left-hand side of the dashboard.

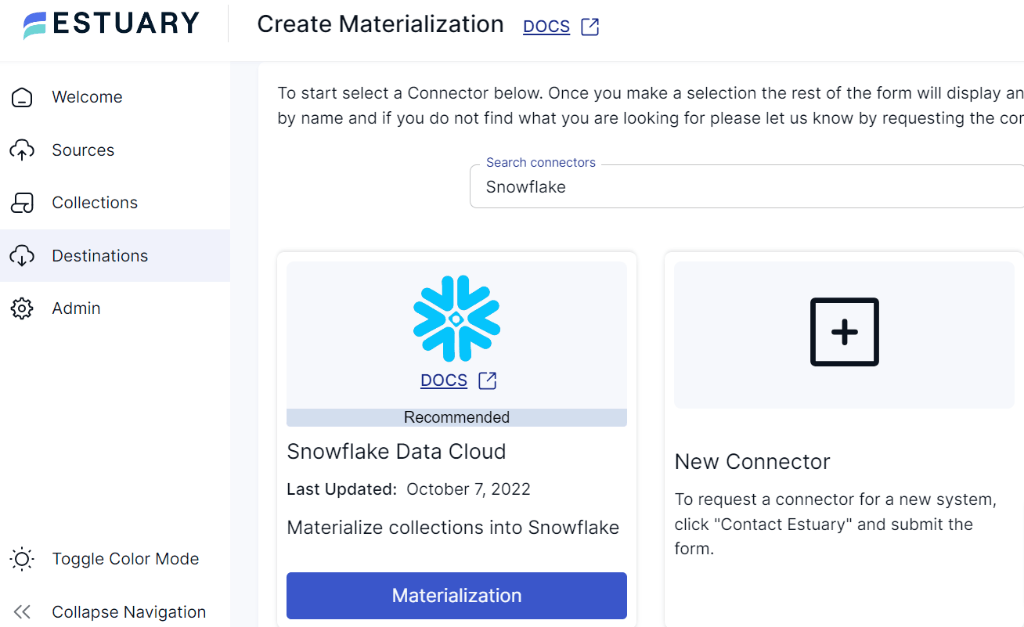

- Click the + New Materialization button on the Destinations page.

- You’ll be directed to the Create Materialization page. Search for the Snowflake connector in the Search Connector Box.

- Click the Materialization button.

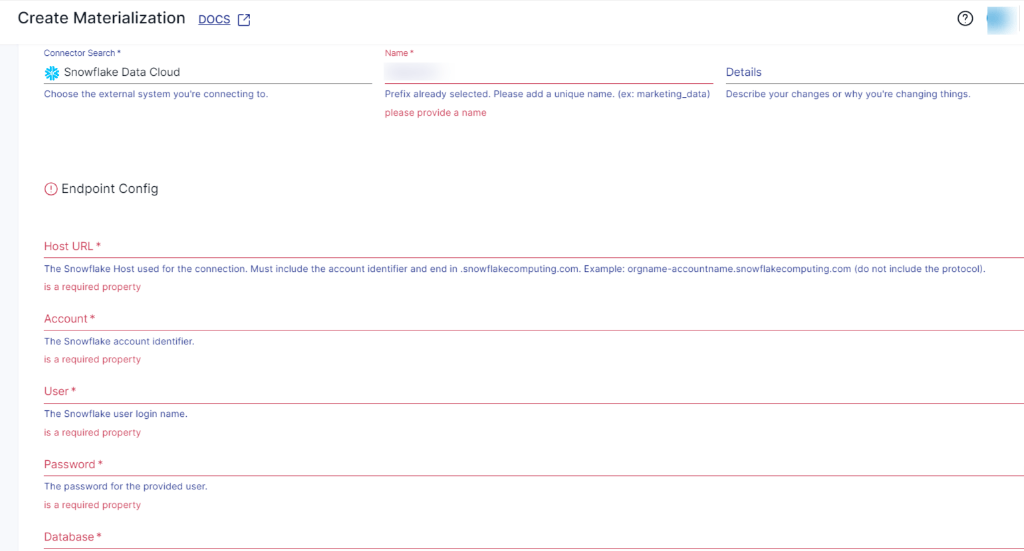

- On the Snowflake Create Materialization page, provide a unique name for your connector and mention the destination system for your materialization. Fill in the Endpoint Details, such as Snowflake Account identifier, User login name, Password, Host URL, SQL Database, and Schema information.

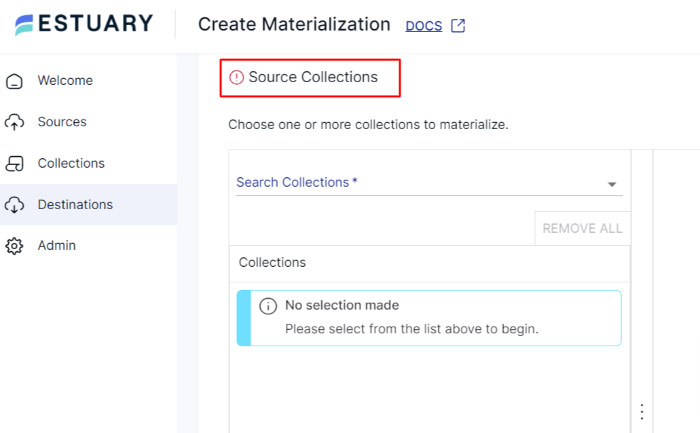

- You can also choose the collections you want to materialize into your Snowflake database from the Source Collection section.

- After filling in all the details, click Next and proceed by clicking Save and Publish. Estuary now continuously streams data between DynamoDB and Snowflake with just two setup steps — no infrastructure, no code, and no delays.

For a comprehensive guide on how to create a complete flow, refer to Estuary’s documentation.

Benefits of Using Estuary

- Pre-built Connectors: Estuary offers 200+ fully managed connectors that support real-time, scalable data integration across a wide range of sources and destinations. These connectors include popular databases, warehouses, APIs, and more.

- Data Synchronization: Estuary efficiently synchronizes data across multiple destinations, ensuring both data accuracy and the availability of real-time information across platforms.

- No-code Solution: With its user-friendly interface and pre-built connectors, you can design, configure, and automate data integration workflows without extensive coding expertise.

Conclusion

By now, you’ve explored three different approaches to sync data from DynamoDB to Snowflake — from manual batch exports to fully automated real-time streaming.

While the first two methods may work for initial setup or specific use cases, they come with trade-offs like latency, coding overhead, and operational complexity.

In contrast, Estuary makes real-time integration effortless. Its CDC-powered pipelines stream every change from DynamoDB into Snowflake as it happens, giving your teams the freshest data possible for analytics, reporting, or AI workloads.

Looking to integrate DynamoDB and Snowflake in minutes, not months? Try Estuary today — no code required.

Are you interested in moving your DynamoDB data to other destinations? Check out the related comprehensive guides.

About the author

Jeffrey is a data engineering professional with over 15 years of experience, helping early-stage data companies scale by combining technical expertise with growth-focused strategies. His writing shares practical insights on data systems and efficient scaling.