Data is like gold: valuable in its own right and essential for smooth operations. That is why data replication strategies go beyond mere duplication. They form the foundation of a reliable data management system built on availability, scalability, and data integrity.

Still not convinced? Here’s something to consider: 82% of companies go through at least one unplanned outage every single year. This shows how important it is to have solid data replication strategies in place.

We thought so too, and that’s why we have put together a power-packed guide in which we’ll explore 7 proven data replication strategies. We’ll also look at reliable tools to use and two real-world use cases to show you how these strategies work in action.

What Is Data Replication?

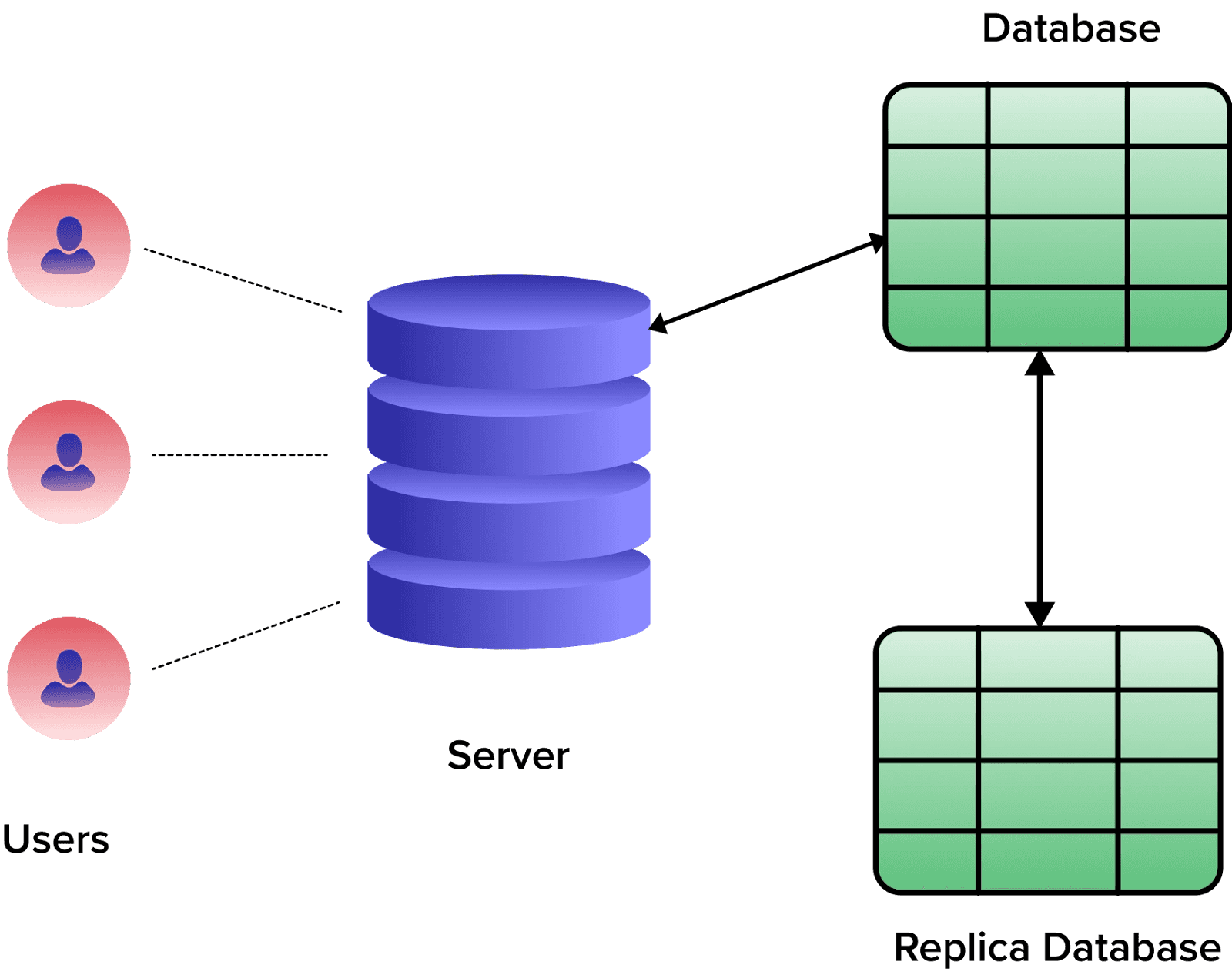

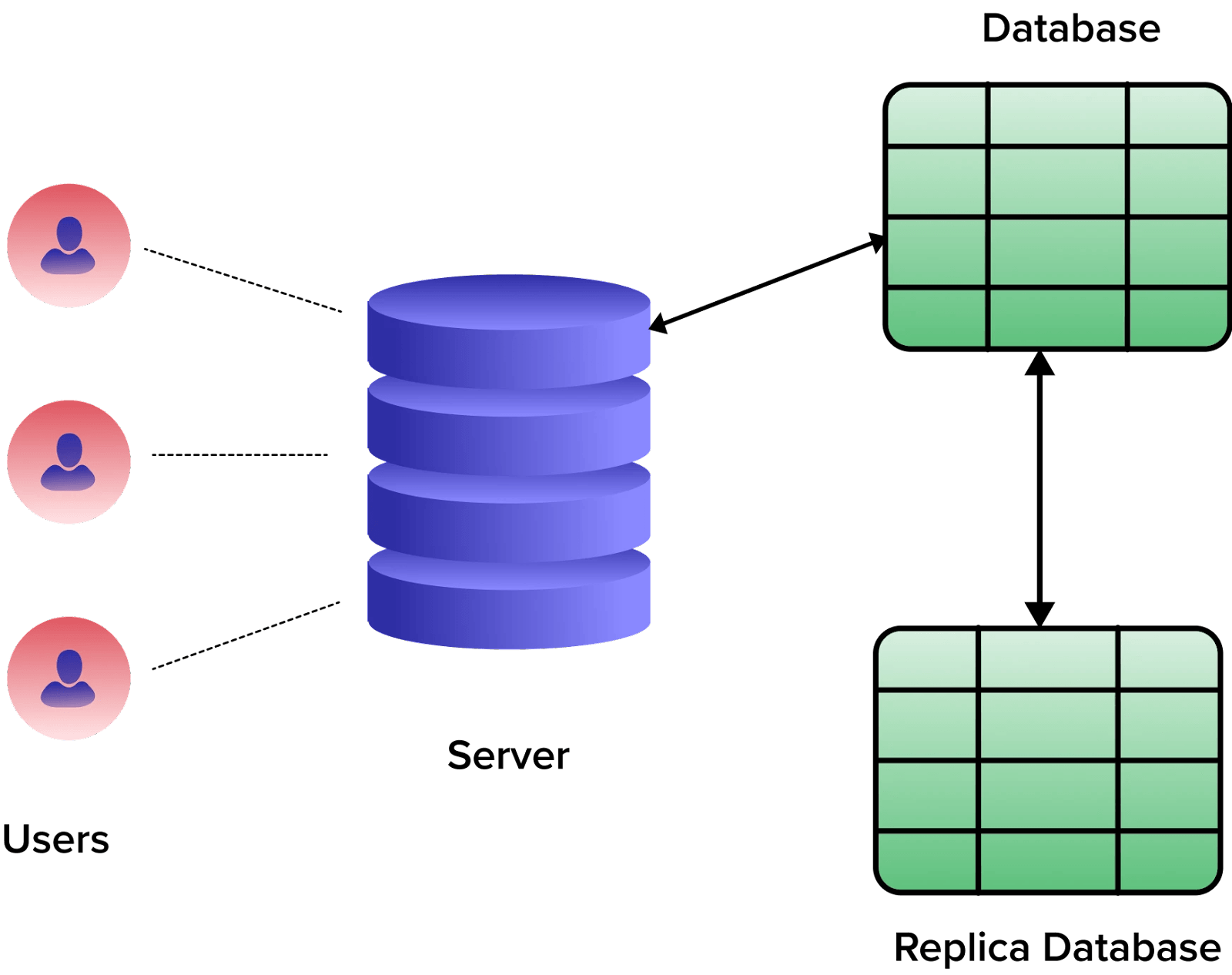

Data replication is the practice of creating and maintaining copies of data in multiple locations or storage systems to ensure availability, reliability, and fault tolerance. It synchronizes data between the original source (master) and its replicas (copies) in real time or at scheduled intervals.

When data is added to, updated in, or deleted from the master, these changes are propagated to the replica systems. The replication process typically involves 2 main steps:

- Data Capture: Changes made to the master data are recorded and captured. This is done through various methods, like log-based replication, trigger-based replication, or snapshot-based replication.

- Data Distribution: The captured changes are then transmitted and applied to the replica systems. These replicas can be located in different data centers, geographical regions, or on various servers within the same infrastructure.
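The two steps above can be sketched with Python's built-in sqlite3 module. This is a minimal illustration of trigger-based capture, not how any particular replication tool works: the `customers` and `change_log` tables, the trigger names, and the `distribute` function are all hypothetical, and the sketch assumes primary keys are never updated.

```python
import sqlite3

# Hypothetical schema: triggers record every change to `customers` in `change_log`.
# The `op` column holds 'I' (insert), 'U' (update), or 'D' (delete).
SOURCE_SCHEMA = """
CREATE TABLE customers (id INTEGER PRIMARY KEY, name TEXT);
CREATE TABLE change_log (
    seq  INTEGER PRIMARY KEY AUTOINCREMENT,
    op   TEXT,
    id   INTEGER,
    name TEXT
);
CREATE TRIGGER capture_insert AFTER INSERT ON customers BEGIN
    INSERT INTO change_log (op, id, name) VALUES ('I', NEW.id, NEW.name);
END;
CREATE TRIGGER capture_update AFTER UPDATE ON customers BEGIN
    INSERT INTO change_log (op, id, name) VALUES ('U', NEW.id, NEW.name);
END;
CREATE TRIGGER capture_delete AFTER DELETE ON customers BEGIN
    INSERT INTO change_log (op, id, name) VALUES ('D', OLD.id, NULL);
END;
"""


def distribute(src, replica, last_seq):
    """Data distribution: apply captured changes newer than last_seq to the replica."""
    rows = src.execute(
        "SELECT seq, op, id, name FROM change_log WHERE seq > ? ORDER BY seq",
        (last_seq,),
    ).fetchall()
    for seq, op, row_id, name in rows:
        if op == "D":
            replica.execute("DELETE FROM customers WHERE id = ?", (row_id,))
        else:  # inserts and updates are both applied as an upsert
            replica.execute(
                "INSERT OR REPLACE INTO customers (id, name) VALUES (?, ?)",
                (row_id, name),
            )
        last_seq = seq
    replica.commit()
    return last_seq  # checkpoint to resume from on the next run


# Data capture happens inside the source database as the triggers fire.
src = sqlite3.connect(":memory:")
src.executescript(SOURCE_SCHEMA)
replica = sqlite3.connect(":memory:")
replica.execute("CREATE TABLE customers (id INTEGER PRIMARY KEY, name TEXT)")

src.execute("INSERT INTO customers VALUES (1, 'Ada')")
src.execute("INSERT INTO customers VALUES (2, 'Bob')")
src.execute("UPDATE customers SET name = 'Ada L.' WHERE id = 1")
src.execute("DELETE FROM customers WHERE id = 2")

checkpoint = distribute(src, replica, 0)
```

Keeping the checkpoint outside the function means a later run only transmits changes recorded since the previous one.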

Data replication maintains consistent and up-to-date copies of data across multiple locations for high availability, disaster recovery, and load balancing in distributed systems. This redundancy enhances data access speeds, improves fault tolerance, and lets you access data from the nearest available replica.

7 Proven Data Replication Strategies To Ensure Data Integrity & High Availability

Let’s take a closer look at the seven data replication strategies that will streamline your data workflows and optimize data accessibility throughout your organization.

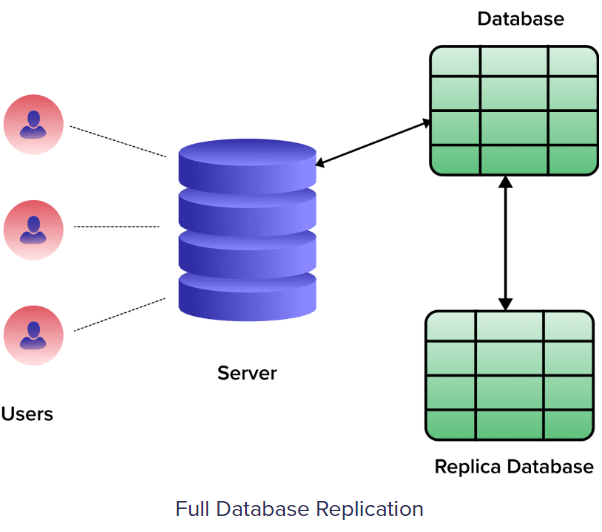

1. Full Table Replication

Full table replication copies an entire table, including all new, existing, and updated rows, from the source to the destination on every run. This method doesn't distinguish changed rows from unchanged ones: it replicates everything regardless of what was modified. So there's no need to track changes, since the destination is always rebuilt as an exact mirror of the source.
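As a rough sketch of the idea, the snippet below rebuilds a destination table from scratch on each run using Python's sqlite3 module. The `orders` table and the `full_table_replicate` helper are made up for illustration, and the table name is assumed to come from trusted code because it is interpolated into the SQL.

```python
import sqlite3


def full_table_replicate(src, dst, table):
    """Replace the destination table's contents with a complete copy of the source."""
    rows = src.execute(f"SELECT * FROM {table}").fetchall()
    dst.execute(f"DELETE FROM {table}")  # discard the previous copy entirely
    if rows:
        placeholders = ", ".join("?" * len(rows[0]))
        dst.executemany(f"INSERT INTO {table} VALUES ({placeholders})", rows)
    dst.commit()
    return len(rows)


src = sqlite3.connect(":memory:")
dst = sqlite3.connect(":memory:")
for db in (src, dst):
    db.execute("CREATE TABLE orders (id INTEGER PRIMARY KEY, total REAL)")
src.executemany("INSERT INTO orders VALUES (?, ?)", [(1, 9.5), (2, 20.0), (3, 7.25)])

first_copied = full_table_replicate(src, dst, "orders")

# A row removed from the source vanishes from the destination on the next run,
# with no change tracking involved.
src.execute("DELETE FROM orders WHERE id = 2")
second_copied = full_table_replicate(src, dst, "orders")
```

Every run transfers every row, which is why the cost grows with table size rather than with the number of changes.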

Benefits Of Full Table Replication

- Complete data accuracy: The replicated data mirrors the original with no missing pieces.

- Hard delete detection: Because the destination is rebuilt from the source each time, rows that were hard-deleted in the source also disappear from the replica.

- Optimized data availability: For global applications, exact replicas in multiple locations let users everywhere read content from a nearby copy.

Limitations Of Full Table Replication

- Potential errors: Heavy processing combined with high network latency raises the risk of replication errors.

- Time consumption: Because every row is transferred on each run, replication time grows with table size and depends on network bandwidth.

- High resource demand: Each replication cycle needs intensive processing power, which adds latency while the cycle runs.

When To Use This Data Replication Strategy

Full table replication is best:

- For recovery of deleted data when other restoration methods aren't viable.

- For creating a backup or archive of the entire database at a specific point in time.

- When initializing a new replication system where a complete copy of the source is required.

- When there’s an absence of logs or suitable replication keys that would facilitate other forms of replication.

2. Log-Based Incremental Replication

Log-based incremental replication is a method where a replication tool reads the database's transaction log. It examines the log to identify specific changes, such as INSERT, UPDATE, or DELETE operations, in the source database. Instead of copying the entire dataset, it replicates only those changes so the replica stays up to date.
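Here is a simplified sketch of that process. Real transaction logs are binary and database-specific, so the record layout below (`lsn`, `op`, `key`, `row`) and the `apply_log` function are assumptions made purely for illustration.

```python
from typing import Any

# Hypothetical, simplified log record: a position (lsn), an operation, a key, and a row.
LogRecord = dict[str, Any]


def apply_log(replica: dict, log: list[LogRecord], from_lsn: int) -> int:
    """Apply records with lsn > from_lsn to the replica and return the new position."""
    position = from_lsn
    for record in log:
        if record["lsn"] <= from_lsn:
            continue  # already replicated in an earlier run
        if record["op"] in ("INSERT", "UPDATE"):
            replica[record["key"]] = record["row"]
        elif record["op"] == "DELETE":
            replica.pop(record["key"], None)
        position = record["lsn"]
    return position


log = [
    {"lsn": 1, "op": "INSERT", "key": 1, "row": {"name": "Ada"}},
    {"lsn": 2, "op": "INSERT", "key": 2, "row": {"name": "Bob"}},
    {"lsn": 3, "op": "UPDATE", "key": 1, "row": {"name": "Ada L."}},
    {"lsn": 4, "op": "DELETE", "key": 2, "row": None},
]

replica = {}
position = apply_log(replica, log[:2], 0)    # first run sees two records
position = apply_log(replica, log, position)  # second run applies only lsn 3 and 4
```

Because the log records deletes explicitly, the replica can remove rows that no longer exist in the source.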

Benefits Of Log-Based Incremental Replication

Log-based incremental replication has many pros, including:

- Reduced load: Since only changes to tables are streamed, the strain on the source database is minimal.

- Low latency: Changes are captured and updated frequently which minimizes delays in data synchronization.

- Consistency: The source database continually logs changes so there's minimal risk of missing important business transactions.

- Scalability: As it deals mainly with incremental changes, this strategy can scale up without additional costs that are often associated with bulky data queries.

Limitations Of Log-Based Incremental Replication

This replication strategy also faces several hurdles. Some important ones are:

- Database-specific approach: Only works with databases that expose a transaction log for replication, such as MySQL (binary log), PostgreSQL (write-ahead log), and MongoDB (oplog).

- Log format variance: As each database has distinct log formats, creating a universal solution for all databases is challenging.

- Data loss risk: If the destination server goes offline, the source must retain its logs until the destination catches up. Otherwise, changes made in the meantime can be lost.

When to Use This Data Replication Strategy

Log-based incremental replication is apt in these scenarios:

- When aiming for a balance between continuous data updates and conserving system resources.

- In businesses where immediate data availability is crucial.

- For real-time or near-real-time data synchronization, especially when you want to reduce strain on the source system.

- For systems that already maintain transaction logs. This gives you both the benefits of disaster recovery and efficient replication.

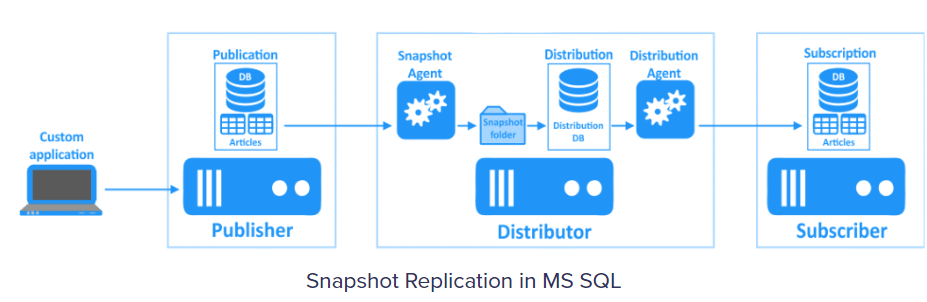

3. Snapshot Replication

Snapshot replication is a straightforward approach that suits you if you want to keep your data warehouse updated without requiring real-time updates. It captures a "snapshot" of the source data at a specific moment and then replicates this data to the replicas.

As a result, the replicas mirror the data exactly as it was during the snapshot, not considering any changes or deletions that occur in the source afterward. To see how snapshot replication works, consider the two primary agents at play (the terms come from SQL Server replication):

- Snapshot agent: This agent gathers and stores files that contain the database schema and objects. Once collected, these files are synchronized with the distribution database.

- Distribution agent: It safely delivers these files to the destination or replicated databases.
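The behavior described above can be mimicked in a few lines of Python. This is only a conceptual sketch using in-memory dictionaries: `take_snapshot` and `deliver` are hypothetical stand-ins for the two agents, not real APIs.

```python
import copy
from datetime import datetime, timezone


def take_snapshot(source: dict) -> dict:
    """Snapshot step: freeze a deep copy of the source at this moment."""
    return {"taken_at": datetime.now(timezone.utc), "data": copy.deepcopy(source)}


def deliver(snapshot: dict, replicas: list) -> None:
    """Distribution step: overwrite each replica with the snapshot's contents."""
    for replica in replicas:
        replica.clear()
        replica.update(copy.deepcopy(snapshot["data"]))


source = {"sku-1": {"stock": 5}}
replica = {}

snapshot = take_snapshot(source)
source["sku-1"]["stock"] = 4          # change made after the snapshot was taken
deliver(snapshot, [replica])
stale_after_first = replica != source  # the replica still reflects the old state

deliver(take_snapshot(source), [replica])  # the next snapshot brings it up to date
```

Between snapshots the replica simply lags the source, which is acceptable only when temporary discrepancies are tolerable.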

Benefits Of Snapshot Replication

Snapshot replication, with its uncomplicated nature, boasts several advantages:

- Minimal update suitability: It’s best suited for databases that witness minimal updates.

- Perfect initial sync: It’s perfect for establishing an initial synchronization between the original data source and its replicas.

- Rapid change efficiency: It is especially helpful in scenarios where numerous rapid changes render other replication strategies inefficient.

Limitations Of Snapshot Replication

Snapshot replication isn’t without its limitations. Here are some:

- High resource demand: Replicating large datasets in full takes substantial processing power on every run.

- Lack of real-time updates: Once a snapshot is taken, it doesn’t track later data changes or erasures, leaving replicas potentially out of sync with real-time modifications.

When to Use This Data Replication Strategy

Snapshot replication is effective in these scenarios:

- If you’re dealing with a relatively small dataset.

- When the source database rarely undergoes updates.

- When you can afford temporary discrepancies between the source and its replicas.

- In cases of rapid and massive data changes where alternative replication methods fall short.

4. Key-Based Incremental Replication

Key-based incremental replication updates only the changes that occur in the source since the last replication. To determine these changes, it uses a replication key which is a specific column in your database table. This replication key can be an ID, timestamp, float, or integer.

During each replication, the tool identifies and stores the maximum value of the replication key column. In subsequent replications, it compares this stored value with the key values in the source and copies only the rows whose key is greater than or equal to it.
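A minimal sketch of this bookmark logic, using Python's sqlite3 module, might look like the following. The `products` table, its `updated_at` replication key, and the `key_based_sync` function are assumptions for illustration. The query uses `>=` so rows sharing the bookmark value are not missed, and `INSERT OR REPLACE` keeps re-copied rows from becoming duplicates.

```python
import sqlite3


def key_based_sync(src, dst, bookmark):
    """Copy rows whose replication key is >= bookmark and return the new bookmark."""
    rows = src.execute(
        "SELECT id, name, updated_at FROM products "
        "WHERE updated_at >= ? ORDER BY updated_at",
        (bookmark,),
    ).fetchall()
    dst.executemany(
        "INSERT OR REPLACE INTO products (id, name, updated_at) VALUES (?, ?, ?)",
        rows,
    )
    dst.commit()
    # The highest key seen becomes the starting point for the next run.
    return rows[-1][2] if rows else bookmark


src = sqlite3.connect(":memory:")
dst = sqlite3.connect(":memory:")
for db in (src, dst):
    db.execute(
        "CREATE TABLE products (id INTEGER PRIMARY KEY, name TEXT, updated_at INTEGER)"
    )
src.executemany(
    "INSERT INTO products VALUES (?, ?, ?)", [(1, "pen", 100), (2, "ink", 105)]
)

bookmark = key_based_sync(src, dst, 0)

src.execute("UPDATE products SET name = 'gel pen', updated_at = 110 WHERE id = 1")
src.execute("DELETE FROM products WHERE id = 2")  # leaves no key value behind
bookmark = key_based_sync(src, dst, bookmark)
```

After the second run the destination has the updated row 1 but still contains row 2: a deleted row has no replication key left to signal the change.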

Benefits Of Key-Based Incremental Replication

Using key-based incremental replication has many advantages for data management.

- Efficiency: This method is often faster than full table replication since it only updates recently altered data.

- Resource-saving: Given that only new or updated data is replicated, it uses fewer resources than copying an entire table.

Challenges Of Key-Based Incremental Replication

While promising, this replication strategy presents specific hurdles.

- Duplication issues: There’s a possibility of duplicate rows if records have identical replication key values. The method can repeatedly copy these records until a higher replication key is encountered.

- Delete operations: Key-based incremental replication doesn’t recognize delete operations in the source. When a data entry is removed, the replication key also vanishes which makes it impossible for the tool to detect this change.

When to Use This Data Replication Strategy

Key-based incremental replication can be used:

- When capturing only the recent changes in your data is important.

- In scenarios where log-based replication isn’t supported or practical.

- For businesses that prioritize efficient and resource-friendly data updates.

- When looking to maintain updated replicas without overburdening systems.

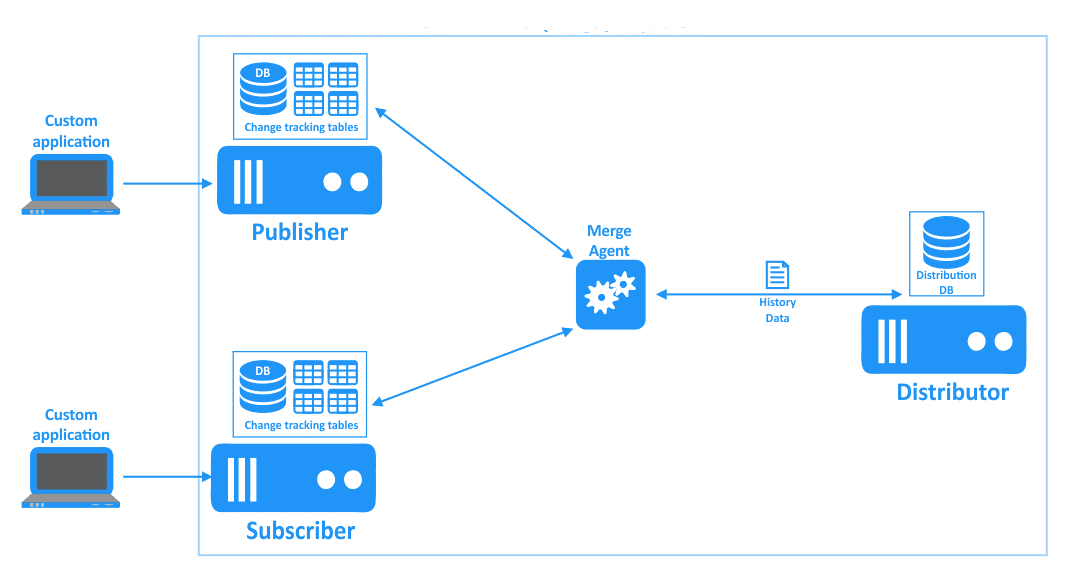

5. Merge Replication

Merge replication keeps 2 or more databases in sync as a single logical dataset, so that any update made to a primary database is mirrored in the secondary ones and vice versa. Both primary and secondary databases can modify data. Changes can be made while a database is offline; once it reconnects, they are synchronized and merged into the primary and the other secondary databases.

This replication mechanism employs 2 key agents:

- The snapshot agent initiates the process and creates a snapshot of the primary database.

- The merge agent applies the snapshot files to the secondary database, replicates incremental changes, and resolves data conflicts that arise during the replication.
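To make the merge step concrete, here is a small sketch with one possible conflict rule: the most recently modified row wins, and the primary wins ties. The rule, the `(value, modified_at)` row layout, and the `merge` function are assumptions chosen for illustration, and deletes are left out because they would need extra bookkeeping.

```python
def merge(primary: dict, secondary: dict) -> list:
    """Bring both databases to the same state; return the keys that conflicted."""
    conflicts = []
    for key in sorted(set(primary) | set(secondary)):
        a, b = primary.get(key), secondary.get(key)
        if a is None or b is None:
            winner = a if a is not None else b  # row exists on one side only
        elif a == b:
            winner = a
        else:
            conflicts.append(key)
            # Rows are (value, modified_at); max() returns the primary's row on a tie.
            winner = max(a, b, key=lambda row: row[1])
        primary[key] = winner
        secondary[key] = winner
    return conflicts


primary = {"a": ("red", 10), "b": ("blue", 12)}
secondary = {"a": ("crimson", 15), "c": ("green", 11)}

conflicts = merge(primary, secondary)
```

Here both sides changed row "a", so it is reported as a conflict and the later edit is kept, while rows "b" and "c" are simply copied to the side that lacked them.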

Benefits Of Merge Replication

This unique replication strategy has the following benefits:

- Conflict resolution: Given the multiple points of data change, merge replication provides a mechanism to set rules and efficiently resolve data inconsistencies.

- Operational continuity: In instances where a database goes offline, the other can be used to continue operations. Later, data can be synced to keep all databases up-to-date.

Challenges Of Merge Replication

Merge replication presents some challenges. 2 important ones are:

- Potential for data conflicts: Every database in the setup, primary or secondary, can change data which can result in conflicts.

- Complexity: Setting up merge replication can be difficult, especially when accommodating various client-server environments like mobile applications.

When to Use This Data Replication Strategy

Merge replication is ideal:

- When each replica needs a distinct segment of data.

- In environments where data conflicts can occur and you need to detect and resolve them.

- When the focus is primarily on the latest value of a data object rather than the number of times it changes.

- When you require a configuration where replicas not only update but also mirror those changes in the source and other replicas.

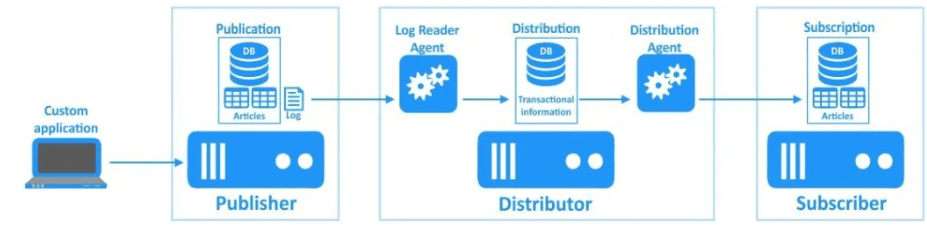

6. Transactional Replication

In transactional replication, all the existing data is first replicated from a source (known as the publisher) to a destination (known as the subscriber). Then the changes made to the publisher are applied to the subscriber in the order they occurred. This mechanism starts from a snapshot of the publisher’s data, which gives the subscriber an accurate and coherent baseline of the original dataset.

The agents that play a vital role in transactional replication are:

- Snapshot agent: Gathers all files and data from the source database.

- Log reader agent: Examines the publisher’s transaction logs and duplicates these transactions in an intermediary space called the distribution database.

- Distribution agent: Transfers the gathered snapshot files and transaction logs to the subscribers.
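The interplay of the three agents can be sketched as follows. This is a toy model with in-memory structures, not SQL Server's implementation: the `Publisher` class, the `read_log` and `distribute` functions, and the deque standing in for the distribution database are all hypothetical.

```python
import copy
from collections import deque


class Publisher:
    """Source database that records each committed transaction in order."""

    def __init__(self):
        self.data = {}
        self.log = []

    def commit(self, ops):
        """Apply a list of (op, key, value) tuples as one transaction."""
        for op, key, value in ops:
            if op == "set":
                self.data[key] = value
            elif op == "delete":
                self.data.pop(key, None)
        self.log.append(list(ops))


def read_log(publisher, distribution, already_read):
    """Log reader step: copy unread transactions into the distribution queue."""
    new_transactions = publisher.log[already_read:]
    distribution.extend(new_transactions)
    return already_read + len(new_transactions)


def distribute(distribution, subscriber):
    """Distribution step: apply queued transactions to the subscriber in commit order."""
    while distribution:
        staged = dict(subscriber)  # work on a copy so each transaction lands whole
        for op, key, value in distribution.popleft():
            if op == "set":
                staged[key] = value
            elif op == "delete":
                staged.pop(key, None)
        subscriber.clear()
        subscriber.update(staged)


publisher = Publisher()
publisher.commit([("set", "acct:1", 100), ("set", "acct:2", 50)])

# Snapshot step: the subscriber starts from a full copy of the publisher.
subscriber = copy.deepcopy(publisher.data)
already_read = len(publisher.log)

publisher.commit([("set", "acct:1", 70), ("set", "acct:2", 80)])
publisher.commit([("delete", "acct:2", None)])

distribution = deque()
already_read = read_log(publisher, distribution, already_read)
distribute(distribution, subscriber)
```

Applying transactions strictly in commit order is what lets the subscriber end up in the same state as the publisher.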

Benefits Of Transactional Replication

The major advantages of transactional replication are:

- Real-time updates: Changes to the publisher are reflected in the subscriber in near real-time.

- Read-heavy workloads: Subscribers in transactional replication are typically used for reading data which makes it ideal for high-volume data reads.

- Transactional consistency: Since changes replicate in the same order as they occur in the publisher, this method offers strong transactional consistency.

Challenges Of Transactional Replication

While effective, there are some challenges that you should be aware of before implementing this data replication method:

- Complex setup: Having multiple agents and an intermediary distribution database can make the setup and maintenance complex.

- Potential latency: Although replication is near real-time, a surge in data changes can cause slight delays before they’re mirrored in the subscriber.

When To Use This Data Replication Strategy

Transactional replication is best where:

- The database undergoes regular and frequent updates.

- Subscribers should get incremental changes as they happen.

- Business operations hinge on the availability of the most recent data for accurate analytics.

- Business continuity is important and any downtime beyond a few minutes could be detrimental.

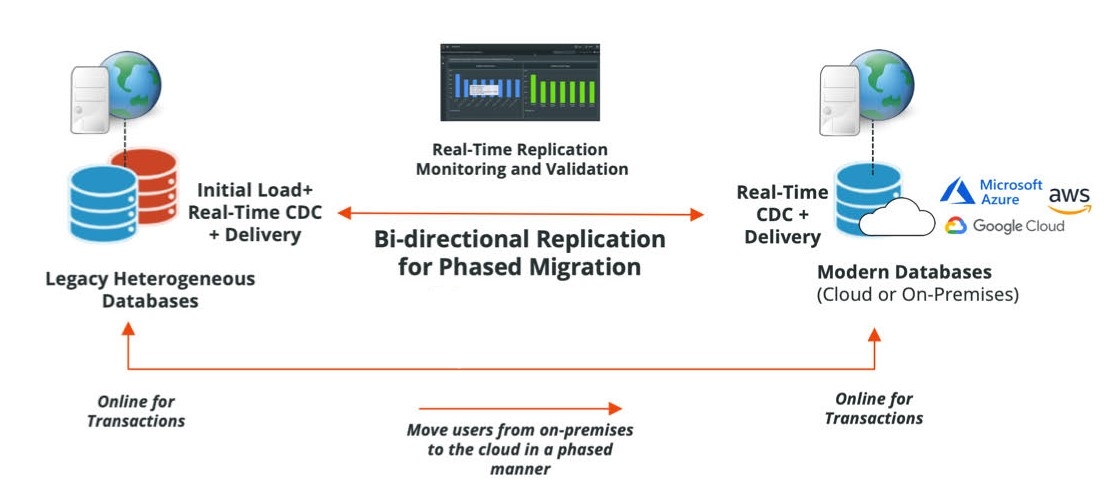

7. Bidirectional Replication

Bidirectional replication involves 2 databases or servers actively exchanging data and updates. Unlike other data replication strategies, no definitive source database exists here. Any changes in one table are mirrored in its counterpart and vice versa. This interaction needs both databases to remain active throughout the replication process.
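A toy sketch of this two-way exchange is shown below. The `Node` class and `exchange` function are invented for illustration. Changes received from the peer are applied without being queued again, which keeps them from bouncing back and forth, and the sketch deliberately has no conflict rule.

```python
class Node:
    """One of two peers; each accepts local writes and applies the peer's changes."""

    def __init__(self, name):
        self.name = name
        self.data = {}
        self.outbox = []  # local changes not yet sent to the peer

    def write(self, key, value):
        self.data[key] = value
        self.outbox.append((key, value))

    def receive(self, changes):
        for key, value in changes:
            self.data[key] = value  # applied directly, never re-queued


def exchange(a, b):
    """Swap pending changes between the two nodes."""
    to_b, to_a = a.outbox, b.outbox
    a.outbox, b.outbox = [], []
    b.receive(to_b)
    a.receive(to_a)


left, right = Node("left"), Node("right")

left.write("x", 1)
right.write("y", 2)
exchange(left, right)
in_sync = left.data == right.data  # writes to different keys converge

# Both nodes write the same key before exchanging: each ends up with the other's value.
left.write("z", "L")
right.write("z", "R")
exchange(left, right)
```

The second exchange leaves the two nodes disagreeing about "z", which is why a real bidirectional setup needs a rule for resolving simultaneous updates.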

Benefits of Bidirectional Replication

Using bidirectional replication has many advantages:

- Full utilization: Bidirectional replication optimizes the use of both databases for maximum efficiency.

- Disaster recovery: It doubles as a disaster recovery solution since both databases constantly mirror each other’s updates.

Challenges of Bidirectional Replication

The complexities associated with its implementation include:

- Conflict risks: Since both databases can be updated simultaneously, conflicts may arise.

- Active requirement: Transactions succeed only while both databases are active, which is challenging in some scenarios.

When To Use This Data Replication Strategy

Bidirectional replication shines in specific scenarios like:

- If the objective is to use both databases to their peak performance.

- When an immediate backup solution or disaster recovery is important.

Estuary: The New-Age Data Replication Solution

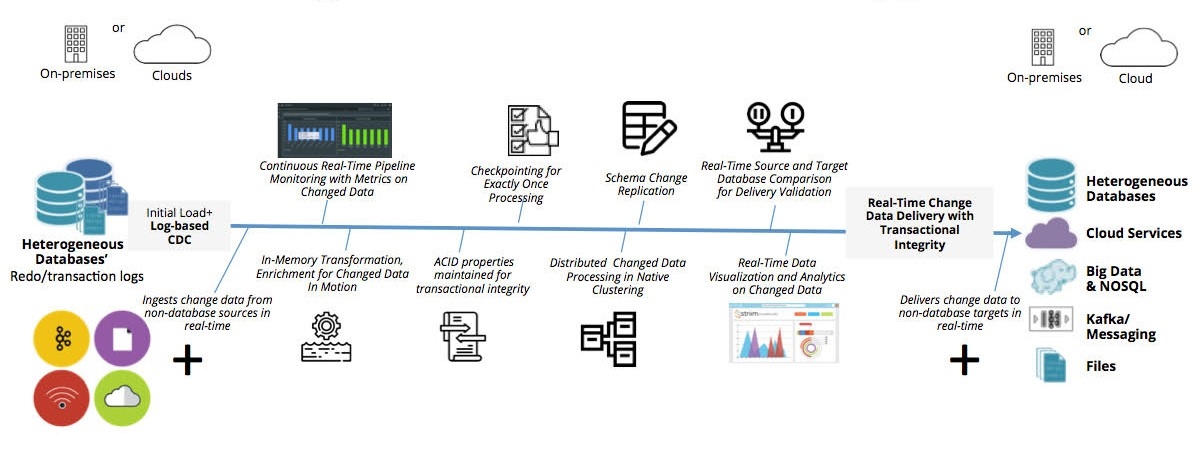

Estuary Flow is our fully-managed data integration platform and CDC connector solution. It stands out for its ecosystem of open-source connectors, several of which are developed and maintained internally.

Flow doesn’t just apply the log-based CDC (change data capture) method; it refines it. At its core, Flow is a user-friendly UI-based product with its runtime rooted in Gazette, a distributed pub-sub streaming framework.

Key Features Of Estuary

- Handling large data: Its connectors smoothly handle millions of rows, avoiding common DML issues.

- Data transformation: You can easily set up data transformations in Flow using either SQL or TypeScript.

- Adaptable schemas: It checks data against a JSON schema that you can change without stopping the system.

- Event-centric operation: Unlike other platforms, Flow focuses on events which makes real-time CDC more effective.

- Exactly-once processing: Courtesy of Gazette, Flow has a unique capability to de-duplicate real-time data for greater accuracy.

- Cloud-based backfill: It can store temporary data in your cloud storage. This means you can backfill without going back to the database.

- Detailed data capture: It uses advanced techniques to track small data changes at the source. This reduces delays and keeps your data pure.

Data Replication Case Studies For Better Insights

Let’s discuss 2 case studies to understand how different organizations have used and benefited from innovative data replication strategies.

Case Study 1: Bolt's Seamless Transition To Real-Time Analytics

Bolt, often seen as the European competitor to Uber, started in the ride-hailing space and expanded into micro-mobility and food delivery. As it grew, it faced challenges with its data analytics platform.

The existing process, known as query-based CDC, was unreliable: data was often missing or duplicated, so the numbers could not be trusted. The system was also not standardized, with some services working over APIs and others calling databases directly. There was a clear need for a more reliable, real-time data replication solution.

Solution

The data architect department at Bolt proposed a shift to log-based data replication. Instead of polling the database, this method reads the internal binary logs of databases, converts them to messages, and sends them to Apache Kafka for real-time data processing.

With the adoption of Kafka, Bolt also integrated ksqlDB for stream processing. This combination helped Bolt replicate data from OLTP databases to a centralized location for analytics. ksqlDB's declarative nature made it easy to deploy and modify, making it a preferred choice over Kafka Streams for Bolt’s use case.

Benefits

- The new system instantly gained trust as the numbers were precise and without delays.

- ksqlDB's headless mode made it easy to deploy and manage queries, particularly in batches.

- ksqlDB provided a scalable solution, especially when dealing with schema changes in databases.

- The adoption of Kafka and ksqlDB brought a level of standardization to Bolt’s data processes.

- With ksqlDB, Bolt could perform real-time analysis which enhanced their analytics capabilities.

Case Study 2: Multi-Region Data Replication At Societe Generale

Societe Generale is a multinational investment bank and financial services company with over €24B in annual revenue. They had a diverse ecosystem of 200 applications, including those for pre-trade, risk management, and accounting services.

But this diversity brought some major issues. With each system of record having its own API, the bank struggled to replicate data across these systems. This made it difficult to consolidate its vast data and get a comprehensive view of it.

The fragmentation of data led to the absence of a consistent source of truth. Their existing infrastructure was falling short, achieving only 99% availability – insufficient for the bank's stringent SLAs.

Solution

In a strategic move, Societe Generale deployed a data management platform that was designed to act both as a data store and a processing layer. This platform:

- Offered a single API interface for 7 distinct systems of record.

- Integrated a smart caching module which significantly sped up data access.

- Enabled asynchronous, real-time data replication across key financial hubs: Paris, New York, London, and Hong Kong.

Benefits

With this data management platform as its primary solution, Societe Generale achieved:

- 99.999% availability rate.

- 700K read operations daily.

- Seamless handling of hundreds of concurrent internal users.

- An impressive expansion in their application count, skyrocketing from 200 to over 400 in 3 years.

- Massive data traffic management with 20 million daily real-time event triggers directed to various applications.
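To put the availability figures in this case study in perspective, the short calculation below converts an availability percentage into expected downtime per year. It assumes a 365-day year and is plain arithmetic, not a figure reported by the bank; `downtime_minutes_per_year` is a helper written for this example.

```python
MINUTES_PER_YEAR = 365 * 24 * 60  # assumes a 365-day year


def downtime_minutes_per_year(availability_percent: float) -> float:
    """Return the yearly downtime implied by an availability percentage."""
    return (1 - availability_percent / 100) * MINUTES_PER_YEAR


before = downtime_minutes_per_year(99.0)     # roughly 5,256 minutes, about 3.65 days
after = downtime_minutes_per_year(99.999)    # a little over 5 minutes

print(f"99%     -> {before / 60:.1f} hours of downtime per year")
print(f"99.999% -> {after:.2f} minutes of downtime per year")
```

Moving from 99% to 99.999% shrinks the yearly downtime budget by a factor of about 1,000.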

Conclusion

The message is clear: data replication is not just an insurance policy; it is the foundation of modern data management. What matters now is putting data replication strategies to work to strengthen your defenses and keep operations running smoothly. It's all about continuity and resilience!

Estuary is an ideal choice here. Its intuitive platform automates real-time replication across systems - no coding needed. Legacy tools are complex and brittle. Flow streamlines replication with easy workflows and transforms siloed data into an integrated asset.

Want to get in on the action? Sign up today for Flow – it's totally free. If you need more info or have any questions, don't hesitate to contact us.

Curious to explore more on data replication strategies? Dive deeper into the topic with these insightful articles:

- Types of Data Replication: Uncover the various approaches to replicating data and their implications on your systems' performance and reliability.

- Real-Time Data Replication: Discover the significance and challenges of real-time data replication in modern data architectures, and how it can enhance your data management processes.

- Database Replication: Delve into the intricacies of database replication, its benefits, and the different techniques employed to ensure data consistency and availability across distributed environments.

About the author

Jeffrey is a data engineering professional with over 15 years of experience, helping early-stage data companies scale by combining technical expertise with growth-focused strategies. His writing shares practical insights on data systems and efficient scaling.