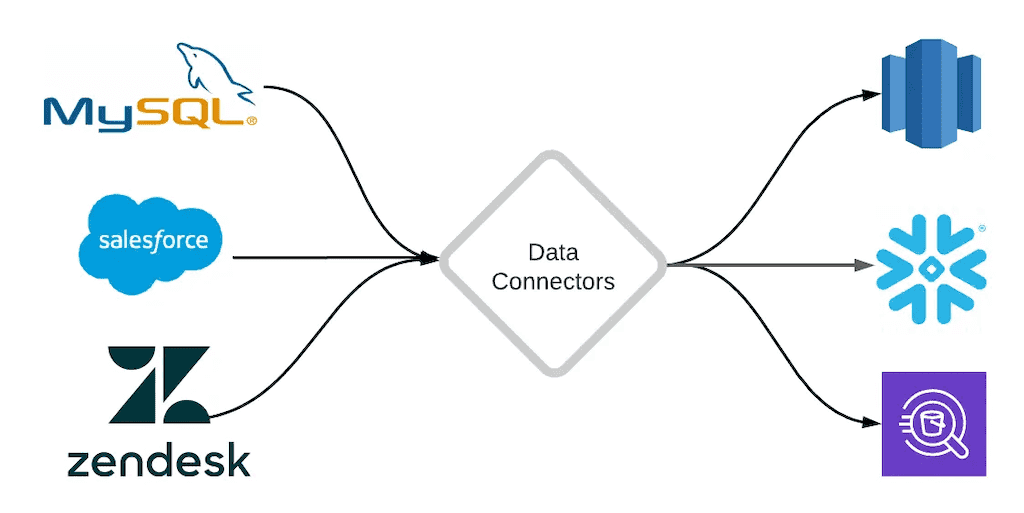

The data technology landscape is a fractured, often-confusing place. Businesses rely on a variety of platforms to collect, store, analyze, and leverage data, but getting all these systems to talk to each other is a challenge. Data connectors are critical for bridging the gap between systems and allowing data to travel seamlessly.

Data connectors are also a key feature of modern data integration platforms — and the reason you can use such platforms to quickly build a wide variety of data pipelines.

In this article, we’ll take an in-depth look at data connectors. By the end, you’ll understand what they are, why they matter, the types you’re likely to encounter, and some key examples that help you visualize how they operate.

But before we get to the heart of the subject, it is important to know what data connectors are and how they function in today’s data integration platforms and data pipelines in general.

Let’s get started.

What Are Data Connectors?

Data connectors are software components that read data from a source system and write it to a destination system, reconciling differences and incompatibilities along the way. In the context of a data pipeline, connectors are often used to get data in and out of the central pipeline technology.

Connectors spare you from building connections or entering data by hand. Without them, you would have to work directly with multiple data systems, transports, stores, and services, all of which data connectors handle for you.

Each data connector is designed with a specific protocol or API that handles the details of data source and destination access so that engineers and analysts can focus on data manipulations and relationships, and any additional custom processing such as data filtering and consistency control.
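
To make the read, reconcile, and write steps concrete, here is a minimal sketch in Python. It is an illustration under stated assumptions, not how any particular platform implements connectors: the names `read_source`, `reconcile`, and `run_connector` are hypothetical, the source is an in-memory list of tuples, and the only incompatibility reconciled is an integer ID that the destination expects as a string.

```python
from typing import Callable, Iterable, Iterator

Record = dict  # hypothetical record type: one row or document as a dict


def read_source(rows: Iterable[tuple]) -> Iterator[Record]:
    """Read (id, name) tuples from a stand-in source system."""
    for row_id, name in rows:
        yield {"id": row_id, "name": name}


def reconcile(record: Record) -> Record:
    """Smooth over one incompatibility: the destination expects string IDs."""
    return {**record, "id": str(record["id"])}


def run_connector(rows: Iterable[tuple], write: Callable[[Record], None]) -> int:
    """Read each record, reconcile it, write it; return how many were written."""
    count = 0
    for record in read_source(rows):
        write(reconcile(record))
        count += 1
    return count


# Usage: the "destination" here is just a list standing in for a real system.
destination: list = []
written = run_connector([(1, "Ada"), (2, "Grace")], destination.append)
print(written, destination)
```

A real connector would replace the in-memory source and destination with network calls or database drivers, but the overall shape stays the same.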

The Inner Workings Of Data Connectors

On a more technical level, data connectors help businesses to integrate a central data system or pipeline directly with other data systems they use.

- If a system is a SaaS application, its connector integrates with its API.

- If a system is a non-SaaS data storage or processing system (like a database, pub-sub, or cloud storage), its connector integrates directly with that system via its unique protocols and data models.
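
The two cases above can be sketched as two classes behind one shared interface. This is a simplified illustration: `SourceConnector`, `ApiConnector`, and `FileConnector` are hypothetical names, the API response is a canned JSON string rather than a real HTTP call, and a CSV string stands in for an object in cloud storage.

```python
import csv
import io
import json
from typing import Iterator, Protocol


class SourceConnector(Protocol):
    """The one interface the central pipeline needs to know about."""

    def read(self) -> Iterator[dict]: ...


class ApiConnector:
    """Stands in for a SaaS connector: parses a JSON API response body."""

    def __init__(self, response_body: str) -> None:
        # Assumption: a real connector would fetch this over HTTP.
        self.response_body = response_body

    def read(self) -> Iterator[dict]:
        yield from json.loads(self.response_body)["results"]


class FileConnector:
    """Stands in for a storage connector: parses a CSV object."""

    def __init__(self, csv_text: str) -> None:
        self.csv_text = csv_text

    def read(self) -> Iterator[dict]:
        yield from csv.DictReader(io.StringIO(self.csv_text))


def capture(connector: SourceConnector) -> list:
    """The pipeline treats every connector the same way."""
    return list(connector.read())


api_rows = capture(ApiConnector('{"results": [{"id": "1", "email": "a@example.com"}]}'))
file_rows = capture(FileConnector("id,email\n2,b@example.com\n"))
print(api_rows, file_rows)
```

Because `capture` depends only on the shared `read` method, adding a new system means writing one new class rather than changing the pipeline.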

When a business uses a SaaS platform, be it an ERP, a CRM, or a CMS, it usually gets a list of third-party applications that can integrate with the software. For instance, a business that uses HubSpot will see integrations for Shopify and Snipcart available directly within the tool.

However, SaaS platforms don’t always offer all the integrations you need. And in the case of non-SaaS data systems, it’s even less likely.

In cases where these aren’t available, connectors come in handy – as in the case of integrating WordPress into the Snowflake data warehouse, or Google Sheets into PostgreSQL. Estuary is one such example of a platform that helps integrate these systems.

Estuary captures data from and materializes it into many different data systems. Each one requires a specific interface to be able to connect. However, once you account for all the different interfaces at play, the system can quickly become complex.

To get around this issue, Estuary uses connectors that bridge the gap between the main Flow system and the huge variety of external data systems on the market today. You can then easily swap connectors out and build new interfaces for new systems.

To help, Estuary uses an open-source connector model, where some of the pre-built data connectors in use were made by their development teams, and others from third parties.

The connectors allow you to build pipelines between two APIs or protocols, allowing data from one application or solution to be received and processed so that it may be understood and accessed by the other. They function this way regardless of whether any direct form of integration was available in the two solutions.

Now that we know the functionality of data connectors, let’s take a look at their importance.

The Importance Of Data Connectors

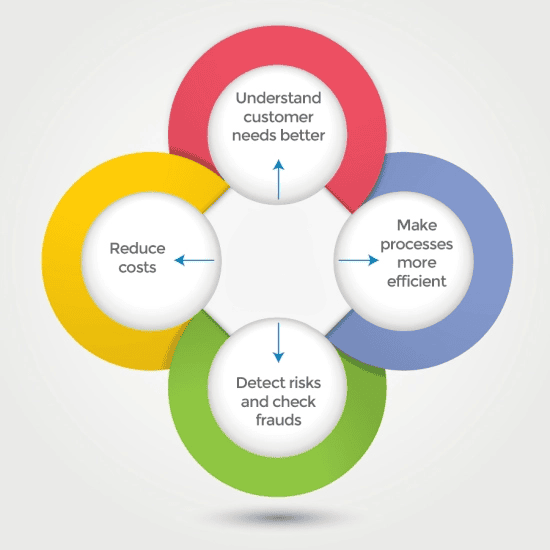

Data connectors play an essential role in both the functional and strategic areas of a business. As discussed earlier, companies need them to connect their applications and systems and to complete important data-driven processes such as order-to-cash. This process, in particular, is often automated and spread across several different systems.

Besides order-to-cash, businesses may also need to connect with customers to deliver products and services. These digital interactions require customization, targeting, and a better understanding of customer behavior – all of which are largely data-driven. Data connectors can help simplify these processes.

Businesses today also face an urgent need to remain agile. This agility means going to market faster and adapting to competitors and changing requirements.

Data connectors can help pool the relevant data for these efforts, so businesses can adjust how they interact with customers and how they produce goods and services to stay relevant in their markets.

There are other benefits of data connectors. Let’s discuss them in detail below.

1. Improves Business Decision-Making

Data originates and is stored in different systems across departments. By connecting these systems, data connectors give you access to all business data in real time, which helps you make better business decisions. Authorized members of the company may also have pressing business questions that can be answered with the access data connectors provide.

2. Streamlines Operations

Different departments in any business can use data connectors to consolidate data from different sources in a single location. The finance department, for example, can fetch the cash flow data it needs to make financial reporting more efficient.

Other business operations such as human resources, logistics, sales, IT, and marketing can also benefit from insights gained from analyzing and accessing data in real time.

3. Increases Productivity

Collecting different data sets from multiple departments manually is a challenging task even for a seasoned professional, especially one who also needs to stay productive in their actual work. Data connectors can work within a full stack of BI tools or reporting applications, and bring different kinds of data from different systems to central platforms, like data warehouses.

4. Helps Predict The Future

Data analysts can use connectors to gather historical data through seamless data integration and use it to help predict customer and user behaviors. This data can then be used to train machine learning and artificial intelligence models that generate forecasts.

5. Improves Security

Connectors make it possible to build a centralized system from which business analysts can request the data they need directly. Analysts then no longer have to query the operational source systems themselves, which improves overall security because they don’t need admin-level access to each of those systems.

6. Protocol Mediation And Data Discovery

Connectors can translate the native communication of a target app into a standard communication protocol, like REST, or in the case of a data pipeline, the pipeline’s protocol. They can also inspect systems and provide a list of data objects and operations available for use in integration.
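
The discovery half of this can be sketched with Python’s built-in `sqlite3` module. The example below is only an illustration: the `discover` function and the two sample tables are hypothetical, and SQLite stands in for whatever system a real connector would inspect.

```python
import sqlite3


def discover(conn: sqlite3.Connection) -> dict:
    """Return {table_name: [column names]} for every table in the database."""
    tables = conn.execute(
        "SELECT name FROM sqlite_master WHERE type = 'table' ORDER BY name"
    ).fetchall()
    catalog = {}
    for (table,) in tables:
        # PRAGMA table_info returns one row per column; index 1 is the column name.
        columns = conn.execute(f'PRAGMA table_info("{table}")').fetchall()
        catalog[table] = [column[1] for column in columns]
    return catalog


# Usage: build a small in-memory database, then ask what it contains.
conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE customers (id INTEGER PRIMARY KEY, email TEXT)")
conn.execute(
    "CREATE TABLE orders (id INTEGER PRIMARY KEY, customer_id INTEGER, total REAL)"
)
catalog = discover(conn)
print(catalog)
```

The resulting catalog is the kind of list a connector could present so that users can pick which objects to include in an integration.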

7. Data Format Mediation and Validation

Connectors can translate the native data format of the target application into a more functional, standardized data format, such as JSON. They can then apply a schema for integration purposes, thereby making the data more organized and available for analytics.
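
As a rough sketch of both steps, the Python below converts CSV rows (where every value is a string) into typed, JSON-ready documents and then checks them against a schema. The `SCHEMA` mapping and the `to_documents` and `validate` helpers are hypothetical, and the hand-rolled type check is a deliberately simplified stand-in for a full schema language.

```python
import csv
import io
import json

# Hypothetical schema: field name -> required Python type.
SCHEMA = {"id": int, "email": str, "total": float}


def to_documents(csv_text: str) -> list:
    """Convert CSV rows into typed documents that serialize cleanly to JSON."""
    docs = []
    for row in csv.DictReader(io.StringIO(csv_text)):
        docs.append(
            {"id": int(row["id"]), "email": row["email"], "total": float(row["total"])}
        )
    return docs


def validate(doc: dict) -> list:
    """Return a list of problems; an empty list means the document conforms."""
    problems = [f"missing field: {name}" for name in SCHEMA if name not in doc]
    problems += [
        f"wrong type for {name}"
        for name, expected in SCHEMA.items()
        if name in doc and not isinstance(doc[name], expected)
    ]
    return problems


docs = to_documents("id,email,total\n7,a@example.com,19.50\n")
print(json.dumps(docs[0]))
print(validate(docs[0]))
print(validate({"id": "7", "email": "x"}))
```

Returning a list of problems instead of raising on the first one makes it easier to report everything that is wrong with a document in a single pass.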

How Do Data Connectors Help With Integration?

Data integration is the process of connecting apps and software so data from one system can be accessed by another. Connectors are an important technological component that makes data integration possible.

Often, integration involves the use of third-party (or middleware) platforms that help translate data to make it understandable by the recipient system. The path that the middleware platform creates between two systems is called a data pipeline.

Today’s data integration platforms can do a lot more than data mapping and can even add complex logic and orchestration to control how specific software and apps interact. But all data integration efforts rely heavily on the ability to connect apps and data sources.

You can hand-code a data integration, but the process can be complex, time-consuming to maintain, and tedious to repeat. This is why most data integration platforms offer connectors: they bring the entire business ecosystem together in an easy and repeatable way.

As part of data integration, connectors can then help solve difficult workflows and automate important processes.

I. Coded vs Managed Connectors

Coded connectors are hand-coded by an in-house team of software or data engineers. They are purpose-built and used for complex integration scenarios where you need to customize the data flow or add additional logic. However, they are a pricey option: the high salaries of data engineers can increase the cost of building one significantly.

On the other hand, managed connectors offer a ready-made solution that simply needs to be configured to connect with your particular systems, eliminating the most laborious aspect of writing the code. They provide more possibilities and flexibility and are a great option since they’re easier to use and tend to be cheaper than coded ones. Managed connectors are a key offering of many data integration or data pipeline vendors, including Estuary and many others.

II. API vs Database Connectors

APIs are developer tools designed to provide access to SaaS apps. API connectors are pre-built integrations that make data integration possible with any system that has an API. They allow you to send information from one application to another and receive data from outside into your application.

Database connectors are used to connect applications to databases. They translate methods and instructions between the application layer and the database layer, so you can extract data from a source and send it to a destination. Without connectors, most applications would not be able to communicate with databases. Custom database connectors are complex to create, so pre-made connectors are often a viable alternative.
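
Here is a minimal sketch of the extract-then-send pattern, using two in-memory SQLite databases from Python’s standard library. The `users` table and the `copy_users` function are hypothetical; a production connector would also deal with batching, schema differences, and failures.

```python
import sqlite3

# Stand-in source database with a little sample data.
source_db = sqlite3.connect(":memory:")
source_db.execute("CREATE TABLE users (id INTEGER PRIMARY KEY, name TEXT)")
source_db.executemany("INSERT INTO users VALUES (?, ?)", [(1, "Ada"), (2, "Grace")])

# Stand-in destination database with a matching, empty table.
dest_db = sqlite3.connect(":memory:")
dest_db.execute("CREATE TABLE users (id INTEGER PRIMARY KEY, name TEXT)")


def copy_users(src: sqlite3.Connection, dst: sqlite3.Connection) -> int:
    """Extract every row from the source and load it into the destination."""
    rows = src.execute("SELECT id, name FROM users ORDER BY id").fetchall()
    dst.executemany("INSERT INTO users VALUES (?, ?)", rows)
    dst.commit()
    return len(rows)


copied = copy_users(source_db, dest_db)
print(copied, dest_db.execute("SELECT id, name FROM users ORDER BY id").fetchall())
```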

III. Batch vs Real-Time Database Connectors

Batch connectors update data in large amounts at once and refresh at a set interval, while real-time connectors process data change events the instant they occur.

In a world that increasingly demands real-time insights, this distinction is key. Many connectors available on the market today process data in batches, which can bottleneck processes that are otherwise real-time. This is of particular concern for connectors that capture data from databases. CDC (change data capture) connectors are a solution.
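
The difference can be sketched in a few lines of Python. This is a simplified model, not a real CDC implementation: the event format (`op`, `key`, `row`) is an assumption made for illustration, and the events come from a hard-coded list rather than from a database.

```python
# Hypothetical change events, in the order the source database committed them.
events = [
    {"op": "insert", "key": 1, "row": {"name": "Ada"}},
    {"op": "insert", "key": 2, "row": {"name": "Grace"}},
    {"op": "update", "key": 1, "row": {"name": "Ada L."}},
    {"op": "delete", "key": 2, "row": None},
]


def apply_event(replica: dict, event: dict) -> None:
    """Real-time style: apply one change to the destination as it arrives."""
    if event["op"] == "delete":
        replica.pop(event["key"], None)
    else:
        # Inserts and updates both upsert the latest version of the row.
        replica[event["key"]] = event["row"]


def batch_sync(source_table: dict) -> dict:
    """Batch style: copy the whole table at each scheduled run."""
    return dict(source_table)


replica: dict = {}
for event in events:
    apply_event(replica, event)
print(replica)
```

With the event-driven approach, the destination reflects each change shortly after it happens. With `batch_sync`, the destination is only as fresh as the last scheduled run.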

IV. Snapshot vs Incremental API Connectors

API connectors can handle data in different ways. Snapshot API connectors fetch complete data for an object at every run, completely overwriting all data that was previously captured for that object. This type of connector is acceptable when only a small quantity of data needs to be retrieved.

Incremental API connectors retrieve only part of the data for an object at every run. Typically, incremental connectors fetch only the data that has been created or changed since the previous fetch.

Data connectors can extract data more quickly and put less strain on the data source when operating in incremental mode because there is less data that needs to be retrieved from the source.
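
One common way to implement incremental mode is to remember a cursor, such as the latest modification timestamp seen so far. The sketch below assumes every record carries an `updated_at` field in a consistent ISO 8601 format (so that string comparison matches time order); `RECORDS`, `fetch_snapshot`, and `fetch_incremental` are hypothetical names, and an in-memory list stands in for the API.

```python
from typing import Optional

# Hypothetical API object: each record carries an `updated_at` timestamp.
RECORDS = [
    {"id": 1, "updated_at": "2024-01-01T00:00:00Z"},
    {"id": 2, "updated_at": "2024-01-05T00:00:00Z"},
    {"id": 3, "updated_at": "2024-01-09T00:00:00Z"},
]


def fetch_snapshot() -> list:
    """Snapshot mode: return everything, every run."""
    return list(RECORDS)


def fetch_incremental(cursor: Optional[str]) -> tuple:
    """Incremental mode: return records newer than the cursor, plus a new cursor."""
    changed = [r for r in RECORDS if cursor is None or r["updated_at"] > cursor]
    new_cursor = max((r["updated_at"] for r in changed), default=cursor)
    return changed, new_cursor


first, cursor = fetch_incremental(None)    # first run fetches all three records
RECORDS.append({"id": 4, "updated_at": "2024-01-12T00:00:00Z"})
second, cursor = fetch_incremental(cursor)  # second run fetches only the new one
print(len(first), [r["id"] for r in second], cursor)
```

Note that a timestamp cursor like this one does not notice deleted records, which is one reason some connectors still fall back to snapshots.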

Data Connector Use Case: Data Pipeline Platform

As we’ve touched on already, data connectors are a key component of managed data integration or data pipeline platforms like Estuary.

In this context, the data pipeline platform acts as middleware. Say you need to move data from System A to System B. You’d use one connector to capture data from System A into the data pipeline platform, and another to push data from the pipeline platform into System B. But why move the data through the central pipeline platform to begin with? Why not just use one connector to get from System A to System B?

There are several reasons why complete, well-architected data pipelines have become the standards for businesses of all sizes. Here are just a few:

- You can perform better data validation. As data moves through the pipeline, the platform can check whether it has the shape and types that the destination system will accept. This is critical for preventing errors and failures. For example, Estuary uses JSON Schema to validate data.

- You can transform data. Data transformation can be a simple re-shaping, or a more complex join or aggregation.

- Data pipeline platforms offer monitoring and backups. Despite our best efforts, failures can still happen. A good data pipeline platform can make sure that in the event of a failure, you’ll be able to pick up right where you left off without data loss.

- You can efficiently mix and match connectors. The data pipeline platform acts as the central hub for all your integrations. Say you captured data from System A and want to push it to both System B and System C. A platform like Estuary allows you to capture the data just once and push it to both destinations.
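
Several of these points can be sketched together in Python: data is captured once, validated, transformed, and then pushed to two destinations. This is a toy model rather than a description of how Estuary works internally. All of the function names are hypothetical, the validation is a simple type check, and two lists stand in for System B and System C.

```python
from typing import Callable


def capture() -> list:
    """Read from System A exactly once (a hypothetical in-memory source)."""
    return [{"id": 1, "total": 20.0}, {"id": 2, "total": "n/a"}]


def is_valid(doc: dict) -> bool:
    """Check the shape and types the destinations are assumed to require."""
    return isinstance(doc.get("id"), int) and isinstance(doc.get("total"), float)


def transform(doc: dict) -> dict:
    """A simple re-shaping: add the total in cents."""
    return {**doc, "total_cents": round(doc["total"] * 100)}


def run_pipeline(sinks: list) -> list:
    """Validate and transform each document once, then fan it out to every sink."""
    rejected = []
    for doc in capture():
        if not is_valid(doc):
            rejected.append(doc)  # set aside instead of failing the whole run
            continue
        shaped = transform(doc)
        for sink in sinks:
            sink(shaped)
    return rejected


system_b: list = []
system_c: list = []
rejected = run_pipeline([system_b.append, system_c.append])
print(system_b, system_c, rejected)
```

Because validation and transformation happen in the hub, adding a third destination only means passing one more sink to `run_pipeline`.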

Conclusion

Almost every business today analyzes data from dispersed sources to make intelligent decisions, and relies on data-driven operational systems to run processes day in and day out. Data connectors are essential for pretty much any ongoing process that involves multiple data systems.

These interchangeable components vastly streamline the data integration process — especially in the context of data pipelines. The benefits of data connectors extend across the entire company, allowing better business intelligence decisions, improved security, and a robust and seamless flow of information.

All of these benefits ultimately improve a company’s overall data management and help it achieve its business goals. Knowing which types of connectors are available and how they work helps you use them properly and extend the capabilities of your data-capturing process.

We hope our guide on data connectors provides you with all the resources you need to better utilize them for your data replication or migration endeavors. If you’re interested in the product or want to learn more about Estuary, start your free trial or contact our team.

About the author

Jeffrey is a data engineering professional with over 15 years of experience, helping early-stage data companies scale by combining technical expertise with growth-focused strategies. His writing shares practical insights on data systems and efficient scaling.