MongoDB change data capture (CDC) is the process of detecting inserts, updates, deletes, and other changes in MongoDB and streaming those changes to another system in near real time. Teams use MongoDB CDC to keep data warehouses, operational databases, analytics platforms, search indexes, and applications synchronized without repeatedly exporting full collections.

For production workloads, MongoDB CDC is usually handled through MongoDB change streams or a managed CDC connector. These approaches continuously listen for changes in MongoDB and apply them to destinations such as PostgreSQL, Snowflake, BigQuery, Databricks, or Kafka-compatible consumers.

In this guide, you’ll learn how MongoDB CDC works, why it matters, which methods and tools are available, and how to build a real-time MongoDB to PostgreSQL CDC pipeline with Estuary.

Key Takeaways

- MongoDB CDC captures inserts, updates, deletes, and other database changes so downstream systems stay current.

- MongoDB change streams are the primary native mechanism for listening to changes in MongoDB.

- CDC is useful for real-time analytics, operational replication, search indexing, cache updates, AI pipelines, and event-driven applications.

- Estuary can capture MongoDB changes and stream them to destinations such as PostgreSQL, Snowflake, BigQuery, Databricks, and Kafka-compatible consumers.

- Production MongoDB CDC pipelines should account for initial backfills, resume tokens, deletes, schema changes, connector throughput, and destination consistency.

- Estuary’s MongoDB connector has been optimized for high-volume CDC workloads, including faster BSON decoding and improved batch prefetching.

Why MongoDB Change Data Capture Matters

Real-Time Analytics and BI

Change data capture (CDC) speeds up reporting and business intelligence by delivering source changes to analytical systems as they happen, so teams can track how the business is performing in real time.

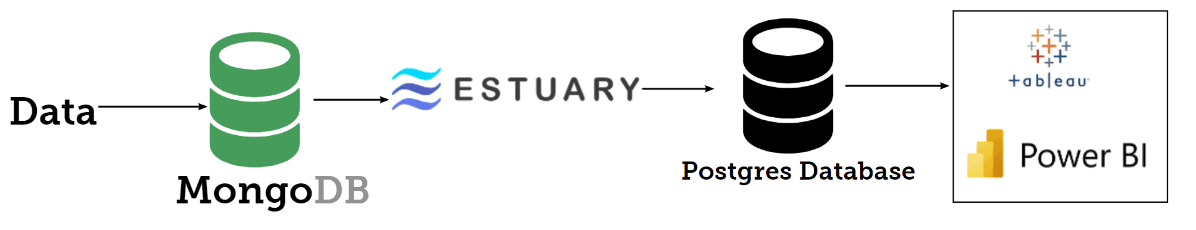

In our example, MongoDB is not the best fit for analytical workloads. BI tools such as Tableau or Power BI send complex SQL queries, which MongoDB is not designed to serve. We still need to analyze the data produced by our microservices and track KPIs that show how the company is growing.

A practical approach is to use CDC to replicate the data into a PostgreSQL database that supports these queries. This lets you use Postgres support for a wide range of SQL features, including window functions, and run analytical queries over large datasets without adding load to the operational database.

Because CDC captures every modification to the data, downstream systems can surface trends, patterns, and anomalies as they emerge. CDC is particularly useful when traditional batch processing is not feasible or not fast enough. It keeps downstream data current, so analysts and data scientists can base decisions on the latest information.

Synchronizing On-Premises MongoDB Data to the Cloud

CDC is important for synchronizing on-premises data to the cloud because it gives cloud applications access to data held in on-premises data stores. It also keeps the cloud copy up to date and consistent with the on-premises source.

Building Audit Logs from MongoDB Changes

CDC is helpful for building an audit log because it captures every modification made to a data store in real time. The resulting audit trail gives you a detailed and accurate record of what was modified and when. It can also show who made a change, provided the application records that information in the documents it writes.

These logs serve several purposes. You can use them to understand user behavior, identify errors, and monitor performance issues such as slow-running queries.

For security, an audit trail supports compliance with regulatory requirements and helps organizations identify and address security breaches and other unauthorized changes to the data. It also enables more efficient and accurate data recovery after system failures or data loss. The change records can be sent to systems like Elasticsearch for further processing.

Reducing Pressure on Operational MongoDB Workloads

CDC supports the offloading of certain data-intensive tasks to secondary systems, which can help to reduce the burden on the primary operational database.

Data volumes in most businesses keep growing. More data can improve decision-making across the business and give insight into customers' behavior, preferences, and needs.

At the same time, a growing database can slow down transaction processing. One strategy for managing this is to use change data capture to stream data into a downstream data store, so that heavy analytical queries no longer compete with transactions on the primary database.

This can lead to:

- Improved scalability

- Increased throughput

- Faster response times

This helps you handle large volumes of data, optimize resource use and reduce costs.

Application Integration and Event-Driven Workflows

Change Data Capture (CDC) is important in application integration because it enables real-time tracking and integration of changes to data across multiple applications.

One use case for CDC is data replication. You might want to make your data available in other regions to solve latency and performance issues, or make sure the data in your data warehouse is up to date. With CDC, you can capture changes in the source data stores and stream them to those targets.

Another use case is making data available to your customers, vendors, or partners. In some cases, you don't want to give them direct access to your database. Instead, you can capture changes from your own database and deliver them to the partner's database.

By capturing and propagating data changes in real time, CDC supports seamless data integration and improves data accuracy and consistency across multiple systems. CDC also supports integration architectures such as event-driven applications and microservices.

With the help of these architectures, you can:

- Create more flexible and scalable systems

- Reduce development and maintenance costs

- Improve time to market

MongoDB CDC Methods Compared

MongoDB Change Streams

MongoDB change streams are the primary native way to listen for changes in MongoDB. They allow applications and CDC tools to subscribe to inserts, updates, deletes, and other changes as they happen.

Change streams are useful for real-time data pipelines because they let downstream systems receive MongoDB changes without repeatedly scanning full collections. A CDC tool can use change streams to capture changes from MongoDB and apply them to destinations such as PostgreSQL, Snowflake, BigQuery, Databricks, or Kafka-compatible consumers.

Advantages: Change streams are native to MongoDB, support real-time change tracking, and are well suited for production CDC pipelines.

Limitations: They require the right MongoDB deployment configuration and a reliable pipeline that can handle resume behavior, backfills, deletes, schema changes, and destination writes.
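As a rough illustration of what a consumer does with change stream output, here is a minimal Python sketch that applies change events to a keyed target. The event dictionaries follow the general shape of MongoDB change events (`operationType`, `documentKey`, `fullDocument`, `updateDescription`). The `apply_change` function, the in-memory `target` dictionary, and the resume token values are hypothetical stand-ins for illustration. In a live pipeline the events would come from a driver call such as PyMongo's `collection.watch()` instead of a hard-coded list.

```python
from typing import Any, Dict, List, Optional

Document = Dict[str, Any]


def apply_change(target: Dict[Any, Document], event: Document) -> Optional[Document]:
    """Apply one change event to an in-memory target keyed by `_id`.

    Returns the event's `_id`, which MongoDB uses as the resume token,
    so the caller can checkpoint it after a successful write.
    """
    op = event["operationType"]
    if op not in ("insert", "replace", "update", "delete"):
        # Other event types (drop, rename, invalidate, ...) are skipped here.
        return event.get("_id")

    key = event["documentKey"]["_id"]
    if op in ("insert", "replace"):
        target[key] = dict(event["fullDocument"])
    elif op == "update":
        # Update events carry a delta, not the whole document.
        # Assumption: only top-level fields; dotted paths are not expanded.
        desc = event["updateDescription"]
        doc = target.setdefault(key, {"_id": key})
        doc.update(desc.get("updatedFields", {}))
        for field in desc.get("removedFields", []):
            doc.pop(field, None)
    else:  # delete
        target.pop(key, None)
    return event.get("_id")


# Hand-written sample events; the resume token values are made up.
events: List[Document] = [
    {"_id": {"_data": "token-1"}, "operationType": "insert",
     "documentKey": {"_id": 1},
     "fullDocument": {"_id": 1, "item": "pen", "qty": 2, "note": "gift"}},
    {"_id": {"_data": "token-2"}, "operationType": "insert",
     "documentKey": {"_id": 2},
     "fullDocument": {"_id": 2, "item": "ink", "qty": 1}},
    {"_id": {"_data": "token-3"}, "operationType": "update",
     "documentKey": {"_id": 1},
     "updateDescription": {"updatedFields": {"qty": 5}, "removedFields": ["note"]}},
    {"_id": {"_data": "token-4"}, "operationType": "delete",
     "documentKey": {"_id": 2}},
]

target: Dict[Any, Document] = {}
last_token: Optional[Document] = None
for event in events:
    last_token = apply_change(target, event)

print(target)
print(last_token)
```

Returning the token after each write is one way to handle resume behavior: if the consumer restarts, the last stored token can be passed back to the driver (for example through the `resume_after` option of PyMongo's `watch()`) so the stream continues from that point.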

Oplog-Based CDC

Oplog-based CDC captures changes by reading MongoDB’s operation log, or oplog. The oplog records database operations such as inserts, updates, and deletes, making it possible for CDC tools to identify what changed and replicate those changes to another system.

This method can be efficient because it avoids repeatedly querying full collections. Instead, the CDC process reads database changes from MongoDB’s change history and streams them to the target system.

Advantages: Oplog-based CDC can support high-volume, low-latency replication and is less intrusive than full-table or full-collection polling.

Limitations: It requires access to MongoDB’s oplog and careful handling of resume behavior, oplog retention, errors, and recovery after interruptions.
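The following Python sketch shows, under simplifying assumptions, how a reader might filter oplog-style entries for one collection and detect when its checkpoint has fallen behind oplog retention. The entries mimic the commonly seen oplog fields (`ts`, `op`, `ns`, `o`, `o2`), but timestamps are plain tuples in place of BSON timestamps, and the function names `changes_since` and `needs_backfill` are hypothetical. Real oplog handling has more cases (multi-document transactions, for instance, are recorded differently) that this sketch ignores.

```python
from typing import Any, Dict, Iterator, List, Tuple

Timestamp = Tuple[int, int]  # (seconds, increment); stand-in for a BSON Timestamp
OplogEntry = Dict[str, Any]

# Single-letter operation codes used in oplog entries.
OP_NAMES = {"i": "insert", "u": "update", "d": "delete"}


def needs_backfill(oplog: List[OplogEntry], checkpoint: Timestamp) -> bool:
    """True if the oldest retained entry is newer than the checkpoint,
    meaning some changes may already have rolled off the oplog."""
    return bool(oplog) and oplog[0]["ts"] > checkpoint


def changes_since(
    oplog: List[OplogEntry], namespace: str, checkpoint: Timestamp
) -> Iterator[Tuple[str, Any, Timestamp]]:
    """Yield (operation, document id, timestamp) for one namespace."""
    for entry in oplog:
        if entry["ts"] <= checkpoint or entry["ns"] != namespace:
            continue
        kind = OP_NAMES.get(entry["op"])
        if kind is None:
            continue  # no-ops ("n") and commands ("c") are skipped
        # Updates identify the document in `o2`; inserts and deletes in `o`.
        doc_id = entry["o2"]["_id"] if kind == "update" else entry["o"]["_id"]
        yield kind, doc_id, entry["ts"]


# Hand-written sample entries, oldest first.
oplog: List[OplogEntry] = [
    {"ts": (100, 1), "op": "i", "ns": "shop.sales", "o": {"_id": 1, "qty": 2}},
    {"ts": (100, 2), "op": "n", "ns": "", "o": {"msg": "periodic noop"}},
    {"ts": (101, 1), "op": "u", "ns": "shop.sales",
     "o": {"$set": {"qty": 3}}, "o2": {"_id": 1}},
    {"ts": (102, 1), "op": "i", "ns": "shop.users", "o": {"_id": 9}},
    {"ts": (103, 1), "op": "d", "ns": "shop.sales", "o": {"_id": 1}},
]

changes = list(changes_since(oplog, "shop.sales", (100, 1)))
print(changes)
print(needs_backfill(oplog, (50, 0)))
```

The `needs_backfill` check reflects the retention concern above: when the stored checkpoint is older than anything the oplog still holds, the safe recovery path is a fresh backfill, not a resume.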

Timestamp-Based Polling

Timestamp-based polling captures changes by repeatedly querying MongoDB for records that were created or modified after the last recorded timestamp. This approach usually depends on fields such as `created_at`, `updated_at`, or another reliable modification timestamp.

This method is relatively simple to understand and can work for basic incremental sync workflows. However, it is not a true real-time CDC method and can miss important changes if timestamps are missing, inconsistent, delayed, or overwritten.

Advantages: Timestamp-based polling is easier to set up than lower-level CDC methods and can be useful for simple incremental sync use cases.

Limitations: It depends on reliable timestamp fields, can add query load to MongoDB, may miss deletes, and is usually not ideal for production real-time CDC pipelines.
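A small Python sketch makes the delete problem concrete. It polls an in-memory list that stands in for a collection, using an `updated_at` high-water mark. The `poll_changes` helper and the sample documents are hypothetical. Against a real collection, the equivalent filter would be a query such as `{"updated_at": {"$gt": last_seen}}`.

```python
from datetime import datetime, timezone
from typing import Any, Dict, List, Tuple

Document = Dict[str, Any]


def poll_changes(
    docs: List[Document], last_seen: datetime
) -> Tuple[List[Document], datetime]:
    """Return documents modified after `last_seen` and the new high-water mark."""
    changed = sorted(
        (d for d in docs if d["updated_at"] > last_seen),
        key=lambda d: d["updated_at"],
    )
    new_mark = changed[-1]["updated_at"] if changed else last_seen
    return changed, new_mark


def ts(minute: int) -> datetime:
    """Helper for readable sample timestamps."""
    return datetime(2024, 1, 1, 12, minute, tzinfo=timezone.utc)


collection: List[Document] = [
    {"_id": 1, "qty": 2, "updated_at": ts(0)},
    {"_id": 2, "qty": 1, "updated_at": ts(1)},
]

# First poll: everything is newer than the starting mark.
start = datetime.min.replace(tzinfo=timezone.utc)
first, mark = poll_changes(collection, start)
first_ids = [d["_id"] for d in first]

# Between polls: document 1 is updated and document 2 is deleted.
collection[0].update(qty=7, updated_at=ts(5))
collection = [d for d in collection if d["_id"] != 2]

# Second poll: the update is seen, the delete leaves no trace.
second, mark = poll_changes(collection, mark)
second_ids = [d["_id"] for d in second]

print(first_ids, second_ids, mark)
```

The second poll returns only document 1. Nothing tells the consumer that document 2 is gone, which is why polling pipelines often need soft-delete flags or periodic full comparisons.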

Snapshot-Based CDC

Snapshot-based CDC captures changes by periodically taking a snapshot of MongoDB data and comparing it with a previous snapshot. Any differences between the two snapshots are treated as changes and then moved to the target system.

This method can work for smaller datasets or low-frequency synchronization, but it becomes expensive as data volume grows. It can also be slower than change streams or oplog-based CDC because it requires repeated scans or comparisons.

Advantages: Snapshot-based CDC can be easier to reason about and may work when real-time change tracking is not required.

Limitations: It can be resource-intensive, slow for large collections, and less suitable for low-latency CDC pipelines.
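The comparison step can be sketched in a few lines of Python. This assumes each snapshot fits in memory as a dictionary keyed by `_id`, which is exactly the assumption that stops holding as collections grow. The `diff_snapshots` name and the sample data are hypothetical.

```python
from typing import Any, Dict, List

Snapshot = Dict[Any, Dict[str, Any]]  # documents keyed by _id


def diff_snapshots(old: Snapshot, new: Snapshot) -> Dict[str, List[Any]]:
    """Classify ids as inserted, updated, or deleted between two snapshots."""
    return {
        "inserted": sorted(new.keys() - old.keys()),
        "deleted": sorted(old.keys() - new.keys()),
        "updated": sorted(
            k for k in old.keys() & new.keys() if old[k] != new[k]
        ),
    }


previous: Snapshot = {1: {"qty": 2}, 2: {"qty": 1}, 3: {"qty": 4}}
current: Snapshot = {1: {"qty": 5}, 3: {"qty": 4}, 4: {"qty": 9}}

changes = diff_snapshots(previous, current)
print(changes)
```

Unlike timestamp polling, the diff does detect deletes, but every run touches every document in both snapshots, and any intermediate states between two snapshots are lost.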

Application-Level Change Capture

Application-level change capture tracks MongoDB changes from inside the application rather than directly from the database log or change stream. For example, an application might publish an event whenever it writes, updates, or deletes a MongoDB document.

This approach can be useful when teams want full control over the event structure or need to capture business-level events instead of raw database changes. However, it also adds responsibility to the application layer.

Advantages: Application-level capture can produce clean business events and gives developers control over what gets published downstream.

Limitations: It can miss changes made outside the application, requires custom development, and can become difficult to maintain across multiple services or write paths.
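As a sketch of this pattern, the Python class below wraps writes to a store and publishes a business-level event for each one. Everything here is hypothetical: the `SalesRepository` class, the event names, and the in-memory dictionary that stands in for a MongoDB collection. The `publish` callable could be a message-queue producer in a real service.

```python
from typing import Any, Callable, Dict, List

Event = Dict[str, Any]


class SalesRepository:
    """Writes sales documents and publishes an event for each change."""

    def __init__(self, publish: Callable[[Event], None]) -> None:
        self._docs: Dict[Any, Dict[str, Any]] = {}  # stand-in for a collection
        self._publish = publish

    def upsert(self, doc_id: Any, fields: Dict[str, Any]) -> None:
        is_new = doc_id not in self._docs
        self._docs[doc_id] = {"_id": doc_id, **fields}
        # The event is emitted only because this code path remembers to do so.
        self._publish({
            "type": "sale_created" if is_new else "sale_updated",
            "id": doc_id,
            "fields": dict(fields),
        })

    def delete(self, doc_id: Any) -> None:
        if self._docs.pop(doc_id, None) is not None:
            self._publish({"type": "sale_deleted", "id": doc_id})


published: List[Event] = []
repo = SalesRepository(publish=published.append)
repo.upsert(1, {"item": "pen", "qty": 2})
repo.upsert(1, {"item": "pen", "qty": 3})
repo.delete(1)
repo.delete(1)  # already gone, so no event is published

event_types = [e["type"] for e in published]
print(event_types)
```

The sketch also shows the limitation: a write that bypasses `SalesRepository`, such as a manual fix in a database shell, produces no event. If the write succeeds but `publish` fails, the two systems drift apart unless the application adds its own retry or outbox logic.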

MongoDB Change Data Capture Tools

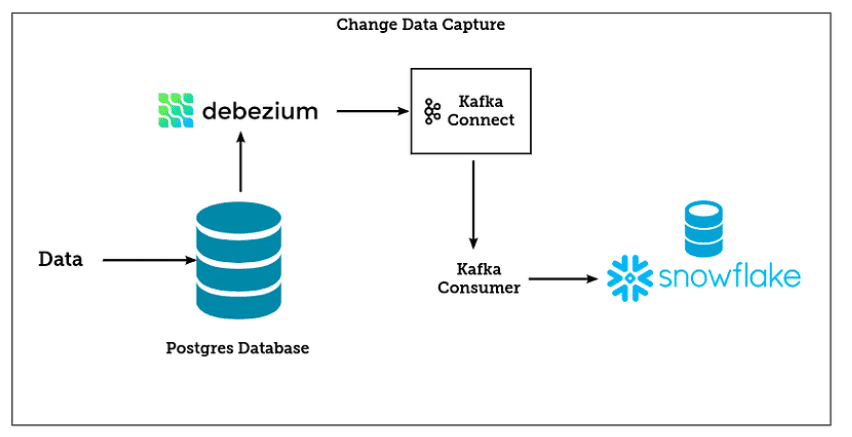

Debezium for MongoDB CDC

Debezium is an open-source distributed platform for change data capture. It monitors databases so that applications can react to changes immediately. Its architecture revolves around connectors, which capture insert, update, and delete events as streams from the source system and sync them into the target system. For some databases, Debezium can also detect schema changes.

Oracle GoldenGate for CDC

Oracle GoldenGate is licensed software from Oracle for real-time change data capture and replication in enterprise database environments. It creates trail files that contain the most recently changed data from the source database and then pushes these files to the destination database. You can use Oracle GoldenGate for production data migrations with minimal downtime and for near-continuous data replication.

IBM InfoSphere CDC

InfoSphere CDC is a replication solution that captures database changes as they happen and delivers them to message queues, target databases, or an extract, transform, load (ETL) solution such as InfoSphere DataStage. This process is based on table mappings that are configured in the management console's graphical user interface.

The replication solution can also work as an orchestration tool, where you define an InfoSphere CDC subscription to run on a schedule. It can be used for dynamic data warehousing, application integration or migration, service-oriented architecture projects, and operational business intelligence.

Fivetran for MongoDB CDC

Fivetran is a modern data integration solution. The platform provides more than 200 pre-built connectors. Fivetran’s connectors are also optimized with CDC technology, ensuring efficient, high-volume data movement for various deployment options.

Fivetran is fault tolerant to service interruptions and incidents. If the data capture process is interrupted, Fivetran automatically resumes syncing where it left off once the issue is resolved, even days or weeks later, as long as the logs are still present.

For database connectors, Fivetran primarily uses log-based replication, along with a replication strategy that takes complete snapshots of databases at a speed close to that of log-based systems. After the first sync of your historical data, Fivetran moves to incremental updates of new or modified data from your source database. Each database uses a different data capture mechanism in Fivetran.

However, Fivetran uses a batch-based mechanism to move data to destination systems. This adds latency to your data pipeline, even if you ingested the data with CDC.

StreamSets for CDC Pipelines

StreamSets is an enterprise data integration platform with multiple CDC connectors to databases such as Microsoft SQL Server, Oracle, MySQL, and PostgreSQL. StreamSets Data Collector offers origins that are CDC-enabled right out of the box.

StreamSets supports different source-destination configurations: CDC-enabled origins with CRUD-enabled destinations, CDC-enabled origins with non-CRUD destinations, and non-CDC origins with CRUD destinations.

With some simple configuration, you can set up a CDC operation within minutes and start streaming data into your database. With StreamSets' data pipelines, you can build highly customizable, fully functioning CDC pipelines through a drag-and-drop interface.

Estuary for MongoDB CDC

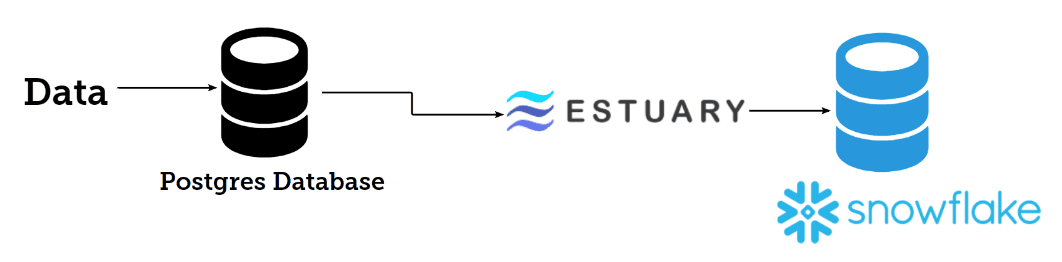

Estuary helps teams build real-time MongoDB CDC pipelines using a managed MongoDB capture connector and destination materializations. It supports real-time streaming as well as batch data movement when continuous sync is not required.

How to Build a MongoDB to PostgreSQL CDC Pipeline with Estuary

With Estuary, you can connect to your databases and stream data into your target system in real time without setting up infrastructure on-premises or in the cloud. You and your data team can focus on developing the business application.

You don't have to reinvent the wheel when a tool like Estuary can do the same job with a few clicks.

Estuary supports many database systems. You can use CDC on databases like MongoDB, PostgreSQL, MySQL, and Microsoft SQL Server.

Right now, Estuary supports more than 50 data sources, and more are being added. One advantage of Estuary over other CDC tools is ease of use. You provide the name of your database, the endpoint, the port, and the name of the table or collection to capture, and Estuary handles the rest. Also, in my experience, capturing data from the source system to the target system with Estuary is fast.

Choosing the right MongoDB CDC tool is not only about connector availability. For production workloads, performance, resume behavior, backfill speed, and destination consistency also matter.

MongoDB CDC Performance Considerations

MongoDB CDC performance depends on the size and frequency of changed documents, connector throughput, destination write capacity, network latency, and how efficiently BSON documents are decoded and processed.

For high-volume pipelines, watch for:

- Capture lag between MongoDB and the destination.

- Small-document workloads that create high per-document overhead.

- Large initial backfills that need higher throughput.

- Network wait time between cursor batches.

- Destination write bottlenecks.

- Schema changes that affect downstream tables or collections.

Estuary’s MongoDB capture connector was optimized to reduce overhead in high-volume workloads. In an engineering benchmark, Estuary increased throughput for standard-sized MongoDB documents from 34 MB/s to 57 MB/s, which translated to roughly 200 GB of ingestion per hour. Read the full MongoDB capture optimization engineering deep dive for the methodology and implementation details.
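The hourly figure follows directly from the per-second rates. The short Python check below reproduces the arithmetic. The function name is hypothetical, and the unit convention is an assumption: the benchmark text does not say whether a gigabyte means 1000 MB or 1024 MB, so both are shown.

```python
BEFORE_MB_PER_S = 34  # throughput before the optimization
AFTER_MB_PER_S = 57   # throughput after the optimization
SECONDS_PER_HOUR = 3600


def gb_per_hour(mb_per_second: float, mb_per_gb: int = 1000) -> float:
    """Convert a sustained MB/s rate into GB per hour."""
    return mb_per_second * SECONDS_PER_HOUR / mb_per_gb


decimal_gb = gb_per_hour(AFTER_MB_PER_S)       # 1 GB = 1000 MB
binary_gb = gb_per_hour(AFTER_MB_PER_S, 1024)  # 1 GB = 1024 MB
speedup = AFTER_MB_PER_S / BEFORE_MB_PER_S

print(f"{decimal_gb:.1f} GB/hour (decimal), {binary_gb:.1f} GB/hour (binary)")
print(f"{speedup:.2f}x throughput")
```

At 57 MB/s the result is about 205 GB per hour in decimal units or about 200 GB per hour in binary units, which matches the "roughly 200 GB" figure either way. The change from 34 MB/s to 57 MB/s is a speedup of about 1.68x.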

Implementing MongoDB Change Data Capture

Let's look at how to capture data changes from a MongoDB database into a Postgres database running on AWS. There are different reasons to capture data changes to a new database. It might be data replication for reliability and high availability, or it might be keeping a data warehouse in sync so that other systems and applications can get updated data from it.

Note that this method can be used to send your data to a variety of destinations: for example, moving data from MongoDB to Snowflake.

For example, we might want to analyze the data produced by our microservice application so that we can make informed decisions about sales in real time.

We can go a step further and connect the Postgres database to a Tableau or Power BI dashboard to see how sales are performing. To get data into the Postgres database, we are going to perform the following steps:

- Create an account on Estuary

Head over to the Estuary web app and create an account, and make sure you activate it. After this, you are all set to start performing change data capture on your database.

- Create an account on MongoDB Atlas

If you don't have a MongoDB cluster to test with, you can go to the MongoDB Atlas page and create one for free. Make sure to grant the permissions needed to connect to your database from the internet. Our MongoDB to BigQuery post shows how to create a MongoDB cluster on Atlas.

- Use MongoDB Visual Studio Code Extension to access MongoDB

With the MongoDB cluster up and running, head over to Visual Studio Code (VSC) and install the MongoDB extension, which makes it easier to develop against MongoDB. Then give the extension the connection string for the MongoDB cluster you created in the previous step.

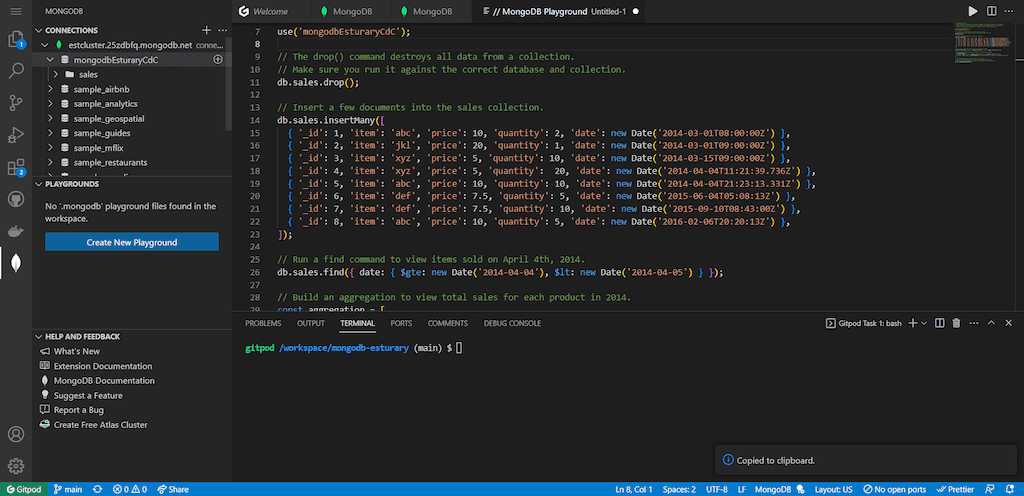

- Insert data into your MongoDB cluster using the VSC extension

Use the VSC extension to insert data into your MongoDB database. In my case, I have created a database called mongodbEsturaryCdC and a collection called sales.

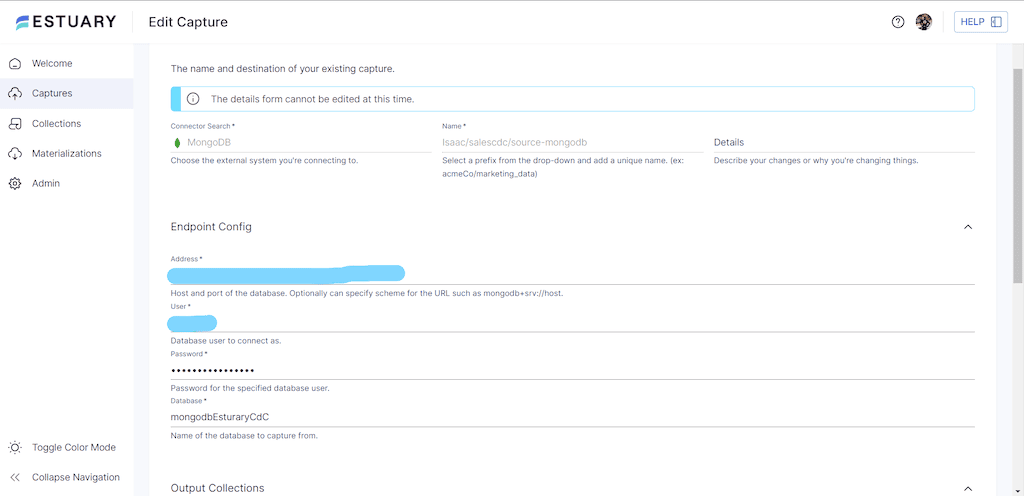

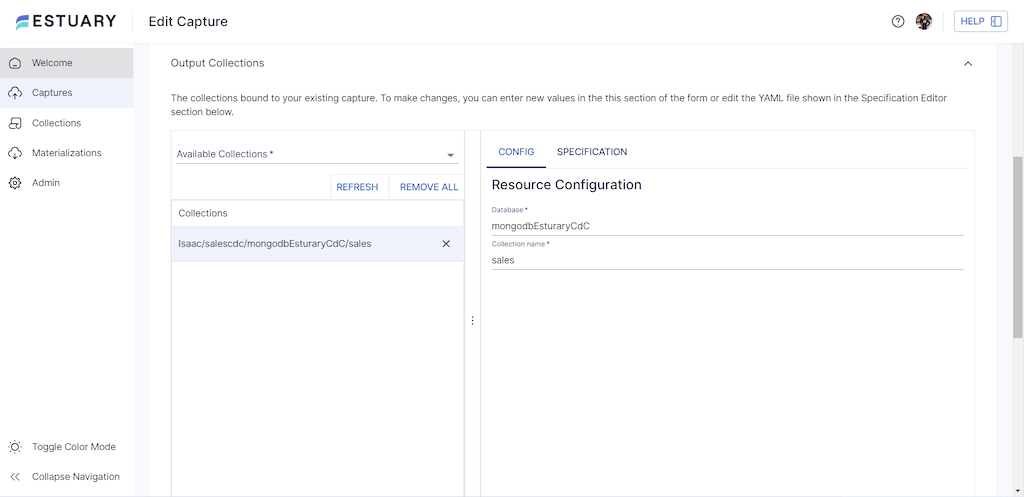

- Use Estuary to capture data in your MongoDB cluster

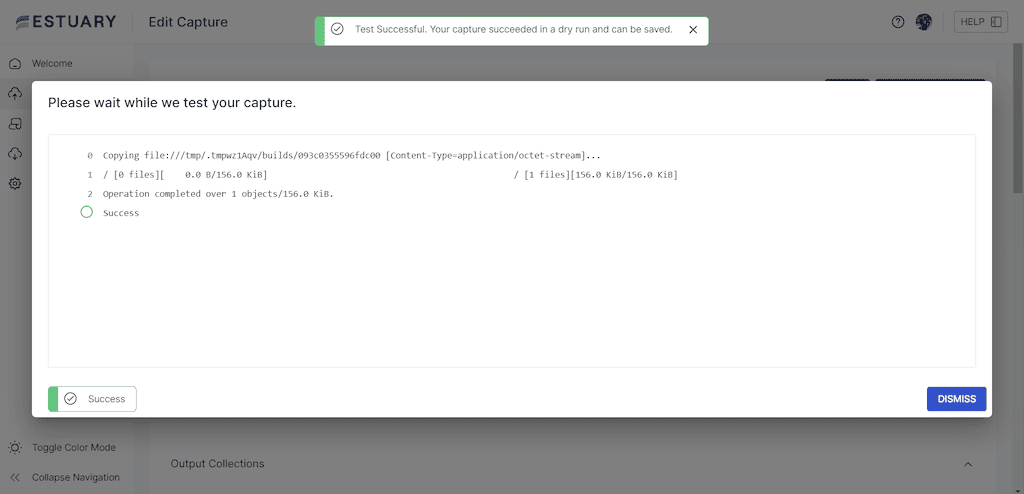

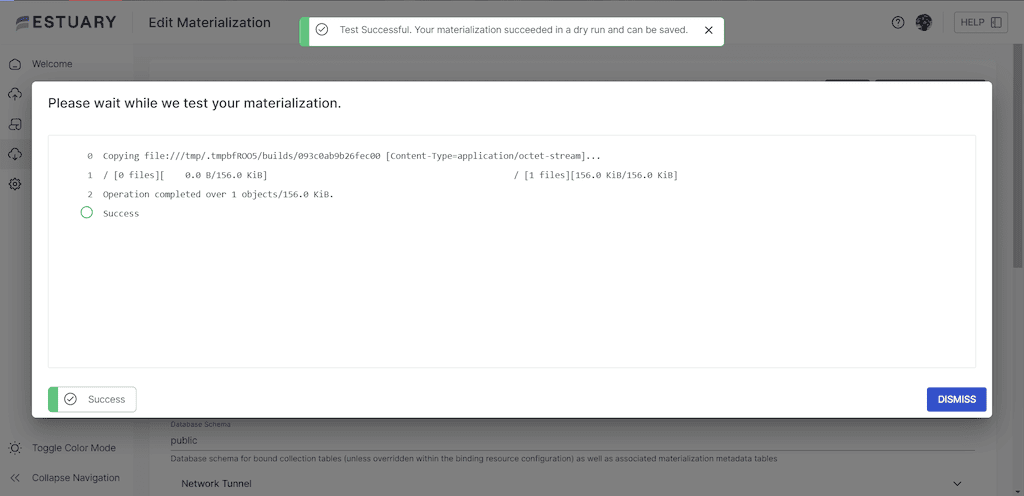

Now go to Estuary, connect to the MongoDB cluster, and specify the database and collection for Estuary to capture. In my case, I have specified mongodbEsturaryCdC and sales as my database and collection. Click Next and test the connection. Make sure you see a success message before you Save and Publish the capture.

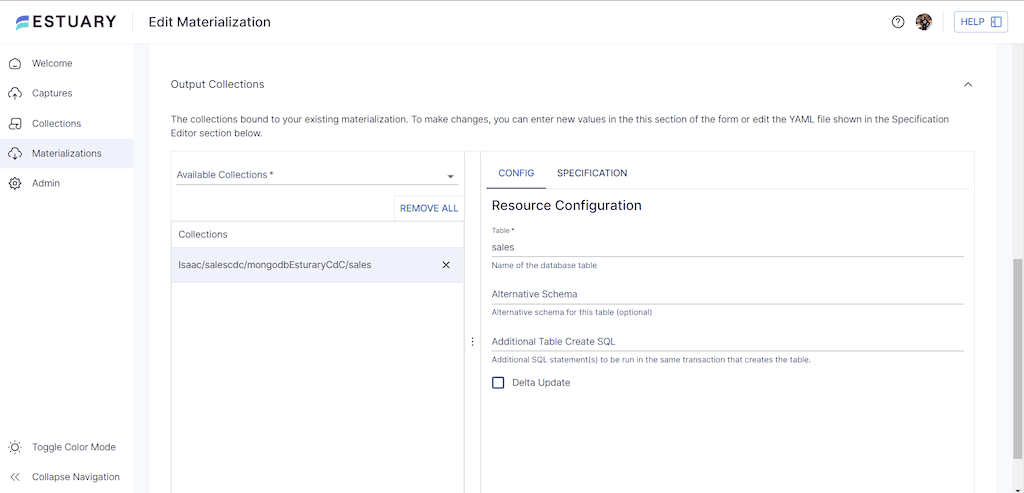

- Create a Materialization connection for the Postgres database to capture the Sales data in MongoDB

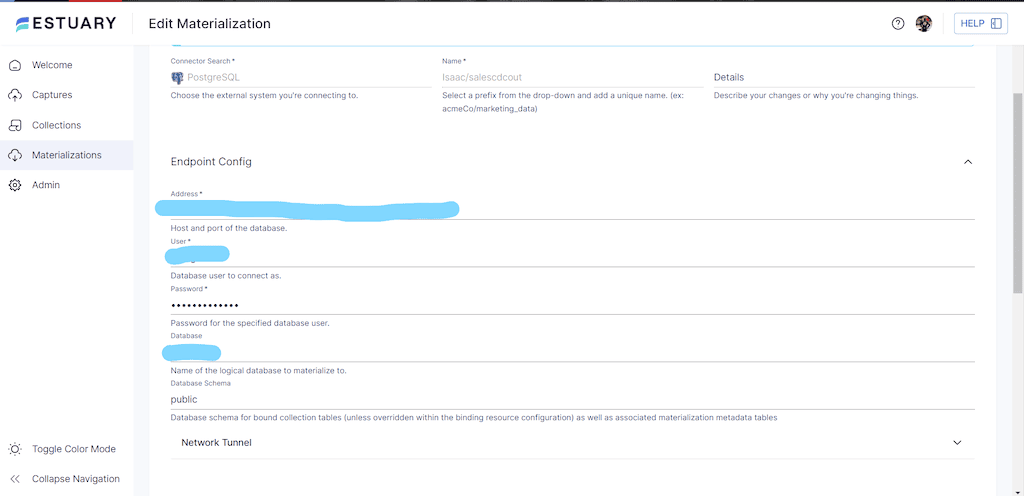

Now we are ready to materialize data from Estuary into our Postgres database. First, configure the connection settings for the Postgres database in Estuary, and make sure Estuary can reach the database over the network. In my case, the Postgres database is running on Amazon Web Services with public accessibility set to true. My connection settings look like the ones below. Again, make sure you test the connection before you move forward.

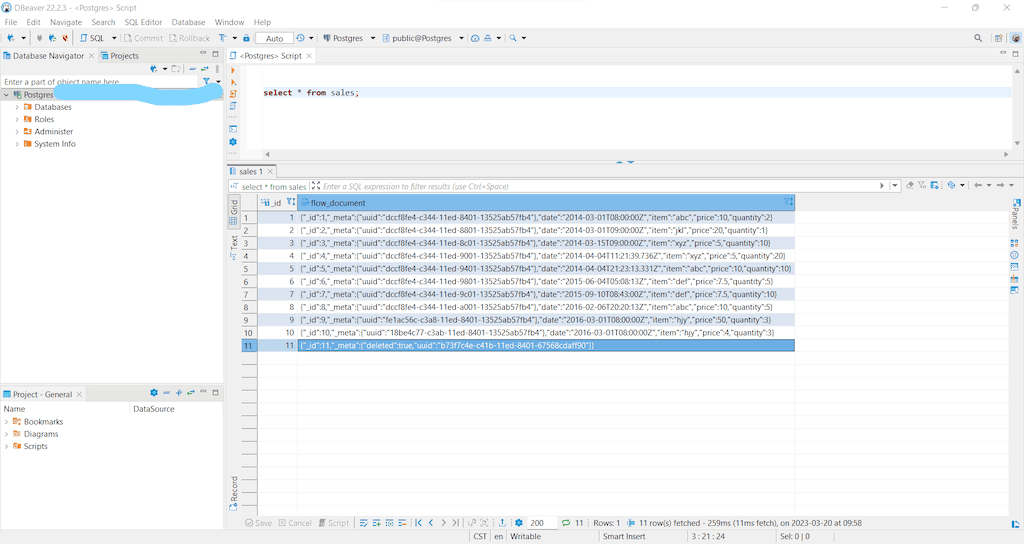

- Go into your Postgres Database to confirm that your data is present

At this point, our data has reached the Postgres database, with Estuary as the CDC tool. In my test, data from MongoDB took less than a second to arrive in Postgres. We can then use dbt (data build tool) to transform the data for the analytical dashboards.

Conclusion

Change data capture has seen a lot of development recently, and many modern data tools can now handle CDC. A simple managed tool like Estuary can make the development process faster and smoother. You can concentrate on delivering value and insight to the business instead of managing data infrastructure.

Are you an engineer looking for more info on how Estuary approached our CDC solutions? Check out the code on GitHub or talk directly to our engineering team!

FAQs

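Can MongoDB CDC capture deletes?

Yes, depending on the method. Change streams and oplog-based CDC record delete operations, so a pipeline built on them can propagate deletes to the destination. Timestamp-based polling may miss deletes because a removed document no longer appears in query results.

What affects MongoDB CDC performance?

The main factors are the size and frequency of changed documents, connector throughput, destination write capacity, network latency, and how efficiently BSON documents are decoded and processed.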

About the author

Jeffrey is a data engineering professional with over 15 years of experience, helping early-stage data companies scale by combining technical expertise with growth-focused strategies. His writing shares practical insights on data systems and efficient scaling.