The impact of real-time data replication goes beyond corporate boardrooms and touches our daily lives, from eCommerce transactions completed in the blink of an eye to healthcare information that must be available the moment it is needed. Data replication acts as a safety net: it promotes high data availability and keeps operations running even if the primary system experiences an outage.

Unplanned downtime incidents can strike at any time, hitting businesses where it hurts the most.

Industry surveys consistently show that a large majority of companies experience at least one unplanned outage within a three-year period, leading to lost revenue, operational disruption, and reduced customer trust. This makes real-time data replication all the more important for maintaining business continuity and system resilience.

Even more important is knowing the right strategies and tools for implementing real-time data replication. In this article, we walk through five practical strategies and introduce some of the most widely used real-time data replication tools.

What Is Real-Time Data Replication?

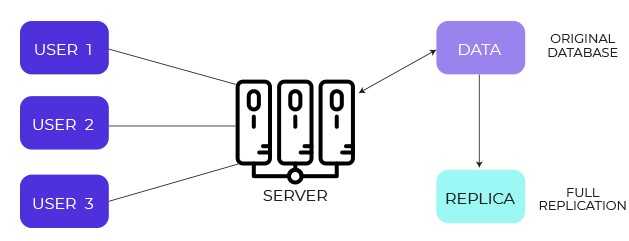

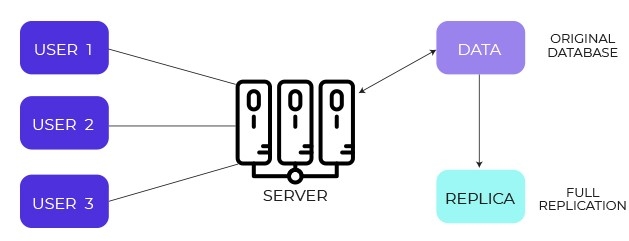

Real-time data replication is the continuous transfer of data from a source system to a target storage system. This target could be a data warehouse, data lake, or another database. The goal is to ensure that changes made in the source system are propagated to the destination with minimal delay, so data remains consistently synchronized across environments.

In practice, “real-time” does not always mean truly instantaneous. Depending on the database, network conditions, and replication method used, replication latency can range from sub-second to a few seconds or, in some cases, minutes. What matters most is that changes are captured and delivered continuously rather than in large, delayed batches.
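To make "latency" concrete, here is a minimal sketch of how replication lag could be measured. It assumes you can record, for each change, when it was committed at the source and when it was applied at the target. The function name and the sample timestamps are made up for illustration.

```python
from datetime import datetime, timedelta
from statistics import median

def replication_lags(events):
    """Return per-event lag in seconds: apply time minus commit time."""
    return [(applied - committed).total_seconds() for committed, applied in events]

# Hypothetical sample: (commit time at source, apply time at target).
base = datetime(2024, 1, 1, 12, 0, 0)
sample = [
    (base, base + timedelta(milliseconds=200)),
    (base + timedelta(seconds=1), base + timedelta(seconds=1, milliseconds=450)),
    (base + timedelta(seconds=2), base + timedelta(seconds=5)),
]

lags = replication_lags(sample)
print(f"median lag: {median(lags):.2f}s, worst lag: {max(lags):.2f}s")
```

Tracking both a typical value (median) and the worst case is useful because a pipeline that is sub-second most of the time can still fall seconds behind under load.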

Real-time data replication is commonly used to support analytics, disaster recovery, high availability, and operational use cases where access to fresh data is critical. By keeping systems aligned as changes occur, organizations can reduce data lag, improve reliability, and make decisions based on up-to-date information.

3 Components Of Real-Time Data Replication

The 3 major components of real-time data replication are:

1. Data Ingestion

The initial stage focuses on collecting and moving data from the source system to the replication pipeline. This step ensures that data changes are reliably captured as they occur and prepared for delivery to downstream systems.

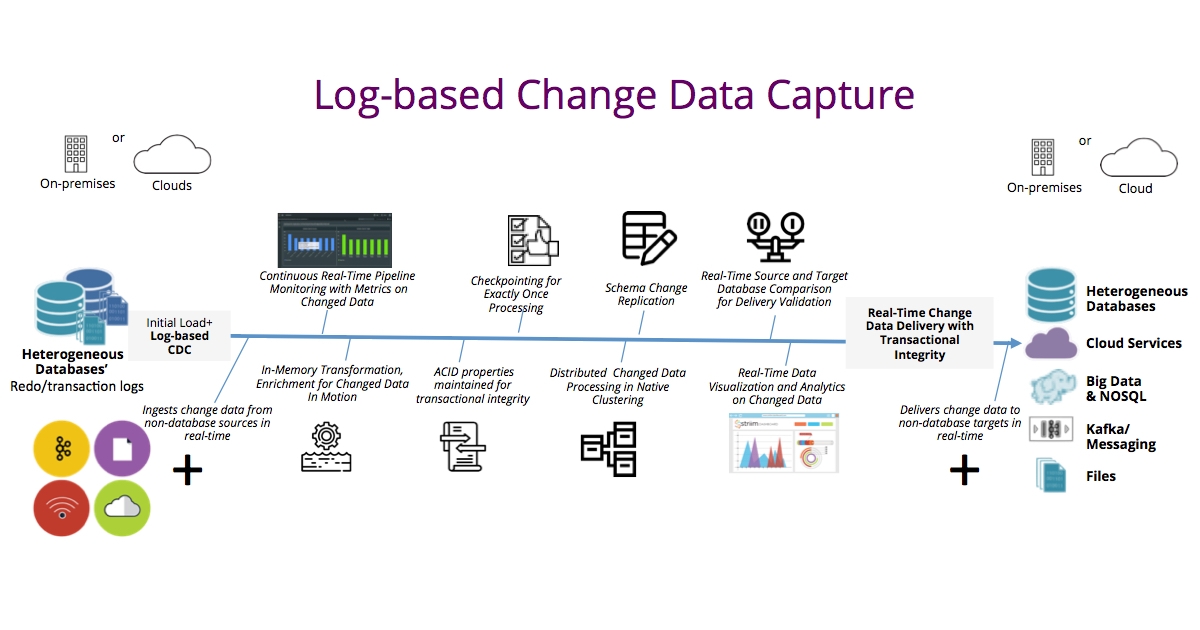

In modern replication setups, data ingestion often relies on change data capture (CDC) techniques that read database logs or streams rather than repeatedly querying the source database. This helps minimize load on production systems.

2. Data Integration

This phase involves consolidating data from one or more sources into a unified and usable format. It helps eliminate inconsistencies and ensures that data arriving at the destination adheres to expected schemas and structures.

Data integration becomes especially important when replicating data across heterogeneous systems, such as moving data from operational databases into analytical warehouses or data lakes.
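As a rough illustration of the integration step, the sketch below maps records from two differently shaped sources onto one expected schema and rejects anything that does not conform. The source names, field names, and schema are all hypothetical.

```python
# Hypothetical unified schema: field name -> expected Python type.
EXPECTED_SCHEMA = {"customer_id": int, "email": str}

def normalize_crm(record):
    """Map a (hypothetical) CRM record onto the unified schema."""
    return {
        "customer_id": int(record["CustomerID"]),
        "email": record["Email"].strip().lower(),
    }

def normalize_billing(record):
    """Map a (hypothetical) billing record onto the unified schema."""
    return {
        "customer_id": int(record["cust_id"]),
        "email": record["contact_email"].strip().lower(),
    }

def validate(record):
    """Raise ValueError if the record does not match EXPECTED_SCHEMA."""
    if set(record) != set(EXPECTED_SCHEMA):
        raise ValueError(f"unexpected fields: {sorted(record)}")
    for field, expected_type in EXPECTED_SCHEMA.items():
        if not isinstance(record[field], expected_type):
            raise ValueError(f"{field} should be {expected_type.__name__}")
    return record

unified = [
    validate(normalize_crm({"CustomerID": "42", "Email": " Ada@Example.com "})),
    validate(normalize_billing({"cust_id": 43, "contact_email": "bob@example.com"})),
]
print(unified)
```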

3. Data Synchronization

This is the core of real-time data replication. During synchronization, changes captured from the source are continuously applied to the target system so both environments remain aligned.

Effective data synchronization accounts for inserts, updates, deletes, and schema changes, while maintaining ordering and consistency. This ongoing process ensures the destination reflects the most current state of the source with minimal lag.
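The idea can be sketched in a few lines. This example assumes each change event carries a sequence number (`seq`), an operation type, and a primary key. The event format is invented for illustration and the target is a plain in-memory dictionary rather than a real database.

```python
def apply_change(target, event):
    """Apply one change event to an in-memory target keyed by primary key."""
    op, key = event["op"], event["key"]
    if op in ("insert", "update"):
        # Upsert: merge the new column values into the existing row, if any.
        target[key] = {**target.get(key, {}), **event["row"]}
    elif op == "delete":
        target.pop(key, None)
    else:
        raise ValueError(f"unknown operation: {op}")

def synchronize(target, events):
    """Apply events in source order, even if they arrived out of order."""
    for event in sorted(events, key=lambda e: e["seq"]):
        apply_change(target, event)
    return target

# Hypothetical events, deliberately delivered out of order.
events = [
    {"seq": 2, "op": "update", "key": 1, "row": {"status": "shipped"}},
    {"seq": 1, "op": "insert", "key": 1, "row": {"id": 1, "status": "new"}},
    {"seq": 3, "op": "insert", "key": 2, "row": {"id": 2, "status": "new"}},
    {"seq": 4, "op": "delete", "key": 2},
]
replica = synchronize({}, events)
print(replica)
```

Sorting by sequence number is what preserves ordering here. If the update were applied before the insert, the target would end up with the stale `"new"` status.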

Mastering Real-Time Data Replication: 5 Simple Strategies You Need To Know

Real-time data replication is an integral part of modern data management. It keeps businesses running, feeds data analysis, and acts as a safety net for disaster recovery. Putting it into practice can be a challenge, though, because there are both technological and logistical hurdles to overcome.

To simplify this process, we’ve put together 5 straightforward strategies that effectively address this challenge. No matter what type of database you're dealing with or how big your data is, these methods give you all the right tools to make real-time data replication smooth.

Strategy 1: Using Transaction Logs

Many databases maintain transaction logs that record ongoing operations. These logs contain detailed information about actions such as data definition commands, updates, inserts, and deletes, along with the position in the log at which each operation occurred.

To implement real-time data replication, you scan the transaction log and identify the changes. A queuing mechanism then collects these changes and applies them to the destination database in order. A custom script or dedicated code may be needed to read the transaction log data and make the required changes in the target database.
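The scan, queue, and apply flow might look roughly like this. It is a simplified sketch: real transaction logs are binary and database-specific, so the log here is modeled as a list of dictionaries with a log sequence number (`lsn`). The table, field, and function names are assumptions made for the example.

```python
from collections import deque

# Hypothetical, simplified transaction log; real logs are database-specific.
transaction_log = [
    {"lsn": 101, "op": "insert", "table": "users", "key": 1, "row": {"name": "Ada"}},
    {"lsn": 102, "op": "update", "table": "users", "key": 1, "row": {"name": "Ada L."}},
    {"lsn": 103, "op": "insert", "table": "users", "key": 2, "row": {"name": "Bob"}},
    {"lsn": 104, "op": "delete", "table": "users", "key": 2, "row": None},
]

def scan_log(log, after_lsn):
    """Return log entries newer than the last position already processed."""
    return [entry for entry in log if entry["lsn"] > after_lsn]

def drain(queue, target):
    """Apply queued changes to the target in order; return the last LSN applied."""
    last_lsn = None
    while queue:
        entry = queue.popleft()
        table = target.setdefault(entry["table"], {})
        if entry["op"] == "delete":
            table.pop(entry["key"], None)
        else:
            table[entry["key"]] = entry["row"]
        last_lsn = entry["lsn"]
    return last_lsn

# LSN 101 was replicated in an earlier run, so scanning resumes after it.
queue = deque(scan_log(transaction_log, after_lsn=101))
target = {"users": {1: {"name": "Ada"}}}
checkpoint = drain(queue, target)
print(checkpoint, target)
```

Storing the returned checkpoint lets the next scan resume where this one stopped instead of re-reading the whole log.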

Considerations When Using Transaction Logs

- Connector requirements and limitations: Accessing transaction logs typically requires special connectors for real-time data replication. These connectors, whether open-source or licensed, might not function as needed or can be costly.

- Labor and time-intensive: If an appropriate connector is not available, you’ll need to write a parser to extract changes from the logs and replicate them to the destination database in real time. This strategy can be effective, but it can take considerable work to get up and running.

Strategy 2: Using Built-In Replication Mechanisms

Built-in replication mechanisms rely on native change tracking capabilities provided by the database itself, often implemented through log reading as part of change data capture functionality. This approach replicates data to a compatible replica database and is commonly used for backups, read replicas, and high availability setups.

Many transactional databases, such as Oracle, MySQL, and PostgreSQL, include built-in replication features. These mechanisms are generally reliable, well-documented, and familiar to database administrators, which makes them relatively straightforward to configure and operate.

Considerations When Using Built-In Mechanisms To Replicate Data

- Cost implications: When you use built-in mechanisms, keep the costs in mind. Some vendors, like Oracle, will ask you to purchase software licenses for using their built-in data replication mechanisms.

- Compatibility issues: How well built-in replication works depends largely on the versions of the source and destination databases. Databases running different software versions can run into difficulties during replication.

- Cross-platform replication limitations: Built-in mechanisms work best when both the source and destination databases are from the same vendor and share a similar technology stack. Replicating data across different platforms can be technically challenging.

Strategy 3: Using Trigger-Based Custom Solutions

Many relational databases, such as Oracle and MariaDB, support triggers that can be defined on tables or columns. These triggers execute automatically when specific database events occur, such as inserts, updates, or deletes.

In trigger-based replication setups, these events are captured and written to a separate change table, often functioning as an audit or staging log. This change table records the operations that need to be applied to the destination database. A downstream process or tool is then responsible for reading these changes and applying them in the correct order.

This approach does not rely on timestamp columns but instead uses database-level logic to detect changes as they occur. However, it still requires an external mechanism to move and apply the captured data to the target system.
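Here is a small, runnable sketch of the pattern using SQLite through Python's built-in `sqlite3` module. SQLite stands in for the source database only because it needs no setup. Trigger syntax differs between databases, and the table and trigger names are hypothetical.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.executescript("""
CREATE TABLE customers (id INTEGER PRIMARY KEY, email TEXT);

-- Change table that the triggers write to (names are hypothetical).
CREATE TABLE customers_changes (
    change_id INTEGER PRIMARY KEY AUTOINCREMENT,
    op TEXT NOT NULL,
    id INTEGER NOT NULL,
    email TEXT
);

CREATE TRIGGER customers_ai AFTER INSERT ON customers BEGIN
    INSERT INTO customers_changes (op, id, email)
    VALUES ('insert', NEW.id, NEW.email);
END;

CREATE TRIGGER customers_au AFTER UPDATE ON customers BEGIN
    INSERT INTO customers_changes (op, id, email)
    VALUES ('update', NEW.id, NEW.email);
END;

CREATE TRIGGER customers_ad AFTER DELETE ON customers BEGIN
    INSERT INTO customers_changes (op, id, email)
    VALUES ('delete', OLD.id, NULL);
END;
""")

# Ordinary writes against the source table fire the triggers automatically.
conn.execute("INSERT INTO customers VALUES (1, 'a@example.com')")
conn.execute("UPDATE customers SET email = 'b@example.com' WHERE id = 1")
conn.execute("DELETE FROM customers WHERE id = 1")

# A downstream process would read the change table in change_id order.
changes = conn.execute(
    "SELECT op, id, email FROM customers_changes ORDER BY change_id"
).fetchall()
print(changes)
```

The auto-incrementing `change_id` gives the downstream process a stable order in which to apply the captured operations.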

Considerations When Using a Trigger-Based Custom Solution

- Limited operational scope: Triggers can’t be used for all database operations. They are typically tied to a limited set of events, such as inserts, updates, or calls to a stored procedure. As a result, for comprehensive change data capture (CDC) coverage, you have to pair trigger-based CDC with another method.

- Potential for additional load: Triggers place an extra burden on the source database, which can affect overall system performance. A trigger runs as part of the transaction that fires it, so that transaction cannot complete until the trigger has finished executing. This can hold locks for longer and delay subsequent changes.

Strategy 4: Using Continuous Polling Methods

Continuous polling relies on custom code that copies data from the source database to the destination database and then repeatedly checks the source for changes.

This code detects changes, formats them, and updates the destination database. Polling setups often use queues to decouple the two sides when the destination database cannot reach the source database directly or requires isolation.

Considerations When Using Continuous Polling Mechanisms

- Monitoring fields required: To use continuous polling methods, you need a specific field in the source database. Your custom code uses this field to monitor and capture changes. Typically, this is a timestamp column that is updated whenever a row is modified.

- Increased database load: Implementing a polling script causes a higher load on the source database. This additional strain affects the database’s performance and response speed, particularly on a larger scale. Therefore, continuous polling methods should be carefully planned and executed while considering their potential impact on database performance.
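A timestamp-based poller can be sketched as follows, again using SQLite as a stand-in source. The `orders` table, its `updated_at` column (an integer here for simplicity), and the function names are assumptions made for this example. A real poller would run `replicate_once` in a loop with a sleep interval.

```python
import sqlite3

source = sqlite3.connect(":memory:")
source.execute(
    "CREATE TABLE orders (id INTEGER PRIMARY KEY, status TEXT, updated_at INTEGER)"
)
source.executemany(
    "INSERT INTO orders VALUES (?, ?, ?)",
    [(1, "new", 100), (2, "new", 105)],
)

def poll_changes(conn, last_seen):
    """Fetch rows modified after the high-water mark, oldest first."""
    rows = conn.execute(
        "SELECT id, status, updated_at FROM orders "
        "WHERE updated_at > ? ORDER BY updated_at",
        (last_seen,),
    ).fetchall()
    new_mark = rows[-1][2] if rows else last_seen
    return rows, new_mark

def replicate_once(conn, target, last_seen):
    """Copy changed rows into the target; return (rows copied, new mark)."""
    rows, new_mark = poll_changes(conn, last_seen)
    for row_id, status, _updated_at in rows:
        target[row_id] = status
    return len(rows), new_mark

replica = {}
copied, mark = replicate_once(source, replica, 0)      # initial copy
source.execute("UPDATE orders SET status = 'paid', updated_at = 110 WHERE id = 1")
changed, mark = replicate_once(source, replica, mark)  # only the changed row
print(replica, mark)
```

Two limitations are worth keeping in mind. A query like this never sees rows that were physically deleted, and the strict `>` comparison can miss a row written later with the same timestamp as the current mark.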

Strategy 5: Using Cloud-Based Mechanisms

Many cloud-based databases that manage and store business data already come with robust replication mechanisms that replicate your company's data in real time. The advantage of these mechanisms is that they require minimal or no coding. They achieve this by integrating database event streams with other streaming services.

Considerations When Using Cloud-Based Replication Mechanisms

- Compatibility issues with different databases: If the data source or destination belongs to a different cloud service provider or a third-party database service, the built-in replication method becomes difficult to use.

- Custom code for transformation-based functions: If you want to use transformation-based functions in your replication process, you’ll need custom coding. This additional step is necessary to manage the transformations effectively.

How to Choose the Right Replication Strategy

Choosing the right real-time data replication strategy depends less on the technology itself and more on how your systems operate and what your business requires. There is no single best approach for every use case, but the following criteria can help guide the decision.

1. Define Your Latency and Recovery Requirements

Start by clarifying how fresh the data needs to be and how much data loss is acceptable.

- Latency expectations: Some use cases require sub-second updates, while others can tolerate seconds or minutes of delay.

- Recovery objectives: Consider how quickly systems must recover after an outage and whether any data loss is acceptable during that window.

These requirements will immediately rule out certain approaches, such as batch-based or low-frequency polling methods.

2. Understand Your Source System Constraints

Different replication strategies place different levels of load on source systems.

- Log-based CDC generally has the lowest impact on production databases.

- Triggers and polling can introduce additional overhead, especially at higher write volumes.

- Built-in replication is often optimized but may be limited to same-vendor databases.

Knowing how much additional load your source systems can tolerate is critical for long-term stability.

3. Consider Heterogeneity and Future Growth

Replication becomes more complex when data must move across different technologies.

- Same-database replication is often easiest using native tools.

- Cross-platform replication typically requires CDC-based or managed platforms.

- If future migrations, new destinations, or analytics use cases are likely, flexibility becomes more important than simplicity.

Planning only for today’s architecture can lead to rework later.

4. Evaluate Operational Complexity

Some strategies require significant ongoing effort to maintain.

- Custom solutions (triggers, polling, log parsers) demand ongoing development and monitoring.

- Managed platforms reduce operational burden but introduce vendor dependency.

- Enterprise tools may offer strong guarantees but require dedicated expertise and licensing management.

Operational simplicity often matters as much as technical capability.

5. Account for Schema Changes and Data Correctness

Real-world data evolves continuously.

- Ensure the replication approach handles schema changes gracefully.

- Confirm support for deletes, updates, and ordering guarantees.

- Understand how failures are handled and whether replay or recovery is possible without data loss.
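One common way to make replay safe is to apply changes idempotently and track a checkpoint. The sketch below assumes every event carries a monotonically increasing sequence number. The class and field names are hypothetical, and a real system would persist the checkpoint together with the data.

```python
class IdempotentApplier:
    """Applies each change event at most once, even if it is delivered repeatedly."""

    def __init__(self):
        self.rows = {}
        self.last_applied_seq = 0  # checkpoint; persisted alongside data in practice

    def apply(self, event):
        """Apply the event and return True, or return False if already applied."""
        if event["seq"] <= self.last_applied_seq:
            return False  # duplicate from a replay; safe to skip
        if event["op"] == "delete":
            self.rows.pop(event["key"], None)
        else:
            self.rows[event["key"]] = event["value"]
        self.last_applied_seq = event["seq"]
        return True

events = [
    {"seq": 1, "op": "upsert", "key": "a", "value": 10},
    {"seq": 2, "op": "upsert", "key": "b", "value": 20},
    {"seq": 3, "op": "delete", "key": "a"},
]

applier = IdempotentApplier()
for event in events[:2]:
    applier.apply(event)

# Simulate a failure followed by a full replay from the beginning.
results = [applier.apply(event) for event in events]
print(results, applier.rows)
```

Because the first two events are recognized as already applied, replaying the whole stream after a failure neither duplicates work nor loses the final delete.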

Replication strategies that work in demos often fail under real schema evolution and failure scenarios.

6. Match the Strategy to the Use Case

As a general guideline:

- Disaster recovery and high availability: Built-in replication or log-based CDC

- Analytics and reporting: Log-based CDC or managed replication platforms

- Operational data sharing: CDC with streaming or real-time materialization

- File and dataset distribution: File-level replication tools

Choosing a strategy aligned with the actual use case helps avoid unnecessary complexity.

By evaluating latency requirements, source system constraints, operational overhead, and future flexibility, teams can select a replication strategy that remains reliable as systems scale and evolve. Real-time data replication works best when it is chosen deliberately, not retrofitted under pressure.

Real-Time Data Replication Made Easy: 5 Best Tools You Shouldn't Miss

Real-time data replication tools help maintain consistent data flow and synchronization across systems and platforms. These tools are commonly used to preserve data integrity, support analytics, and reduce the operational impact of failures by keeping systems aligned as changes occur.

Below are 5 widely used data replication tools in 2026, each designed to support different replication needs and deployment models.

Estuary

Estuary is a right-time data platform designed to support continuous data movement across operational systems, analytical platforms, and cloud storage. It enables teams to replicate, transform, and deliver data with low latency while maintaining consistency and reliability.

Estuary Features

- Change Data Capture (CDC): Captures inserts, updates, and deletes from databases using log-based CDC, minimizing load on source systems.

- Real-time materializations: Maintains continuously updated datasets across warehouses, databases, and streaming systems.

- Schema management: Automatically infers and evolves schemas while enforcing data contracts to maintain consistency downstream.

- Built-in reliability: Designed for fault tolerance with exactly-once processing semantics for supported destinations.

- Integrated transformations: Supports real-time SQL and JavaScript transformations as part of the replication pipeline.

- Scalable architecture: Handles high-throughput replication and large backfills without impacting source system performance.

- Broad connector ecosystem: Replicates data from databases and SaaS sources to warehouses, lakes, and operational targets.

Qlik Replicate

Qlik Replicate is an enterprise data replication tool that uses log-based change data capture to move data from operational systems into analytics platforms, data lakes, and cloud environments.

Qlik Replicate Features

- Log-based CDC: Reads database transaction logs to capture changes without querying source tables.

- Low source impact: Designed to minimize performance overhead on production databases.

- Broad source and target support: Works across on-premise, cloud, and hybrid environments.

- Centralized management: Provides monitoring and control through a single management interface.

- Enterprise-focused deployments: Commonly used for large-scale, multi-database replication projects.

IBM Data Replication (InfoSphere / IIDR family)

IBM's data replication solution provides real-time synchronization between heterogeneous data stores. This modern data management platform is offered both as on-premise software and as a cloud-based solution. IBM Data Replication synchronizes multiple data stores in near-real time and tracks only the changes to data to keep the impact minimal.

IBM Data Replication Software Features

- Near real-time CDC: Streams incremental changes from source databases to multiple targets.

- Heterogeneous replication: Supports replication across different database technologies.

- High availability support: Designed to reduce downtime during outages and migrations.

- Enterprise governance: Integrates with broader IBM data management and governance tooling.

Note: Capabilities and licensing vary by IBM edition and deployment model.

Hevo Data

Hevo Data is a no-code data pipeline that provides a fully automated solution for real-time data replication. This tool integrates with more than 100 data sources, including different data warehouses. Once set up, it requires zero maintenance, which makes it an ideal choice for businesses looking to enhance their real-time data replication efforts.

Hevo Data Replication Features

- Fault-tolerance: Its architecture detects data anomalies to protect your workflow from potential disruptions.

- Bulk data integration: Hevo integrates bulk data from diverse sources like databases, cloud applications, and files to provide up-to-date data.

- Data capture: This feature captures database modifications from various databases, making it easy to deliver these changes to other databases or platforms.

- Automated data mapping: Intelligent mapping algorithms are employed for automatic data mapping from various sources to enhance the efficiency of the integration process.

- Uninterrupted operations: Hevo creates standby databases for automatic switch-over. This minimizes the impact of unplanned disruptions and maintains high data availability.

- Scalability: Designed to handle millions of records per minute with low latency, it is built to deal with large data volumes and perform data replication without compromising system performance.

Resilio Connect

Resilio Connect is a reliable file sync and share platform that elevates real-time data replication and employs innovative and scalable data pipelines. It uses an advanced P2P sync architecture and proprietary WAN acceleration technology to secure low-latency transport of massive data volumes without impacting the operational network.

Resilio Connect Features

- No single point of failure: Its unique P2P architecture removes the single point of failure commonly found in other data replication solutions.

- Robust scalability: Its peer-to-peer architecture supports rapid data replication which is easily scalable to accommodate growing data demands.

- Transactional integrity: Resilio Connect accurately replicates every file change across all devices to ensure end-to-end data consistency at the transaction level.

- Automatic file block distribution: Resilio Connect effectively auto-distributes file blocks. It detects and aligns file changes across all devices to simplify the replication process.

- Guaranteed data consistency: With its optimized checksum calculations and real-time notification system, it maintains data consistency across all devices and eliminates discrepancies or inaccuracies.

- Accelerated replication without custom coding: Resilio Connect lets users replicate data easily without writing any custom code. You can configure it using a user-friendly interface. Comprehensive support and documentation further ease the deployment experience.

Important distinction: Resilio Connect is best suited for file and dataset replication, not database-level CDC.

Conclusion

The importance of real-time data replication goes far beyond basic redundancy. It helps preserve data integrity, improves system availability, and ensures that decisions are made using the most current information available. Whether supporting mission-critical applications, analytics, or disaster recovery, real-time replication enables organizations to respond faster to operational and customer needs.

While real-time data replication can be complex, choosing the right strategy and tools makes a significant difference. Each approach comes with trade-offs related to latency, system impact, and operational overhead, which is why aligning replication methods with business requirements is essential.

Estuary is designed to support reliable, right-time data replication across systems. By continuously capturing and delivering data changes, it helps teams keep data synchronized across applications and environments without relying on brittle batch jobs or manual recovery processes.

With the right replication strategy in place, organizations can reduce downtime risk, improve data consistency, and build systems that remain dependable as data volumes and complexity grow.

About the author

Jeffrey is a data engineering professional with over 15 years of experience, helping early-stage data companies scale by combining technical expertise with growth-focused strategies. His writing shares practical insights on data systems and efficient scaling.