You’re planning to use Snowflake for all your data analytics, and change data capture (CDC) from your production Postgres instance is an absolute must. You may also need SaaS ingestion or streaming ingestion from Kafka. The question is how to get all this data where it needs to be. You’ve narrowed it down to two options: Snowflake Openflow or Estuary. Both tools move and process data, but they do it in fundamentally different ways.

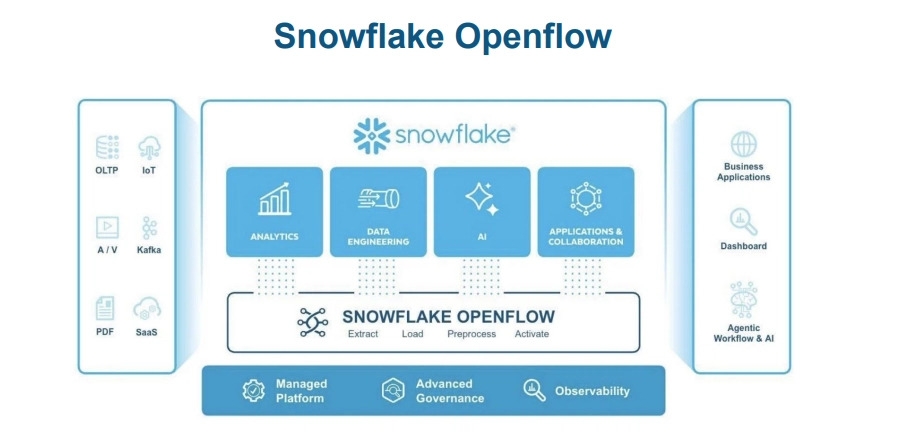

Snowflake Openflow is a native integration service built directly within Snowflake. It’s designed to bring data into Snowflake and keep everything centralized.

Estuary is a right-time data platform that can handle both batch and real-time data across a number of systems, not just Snowflake.

In this guide, we’ll cover how each of these tools works, where they perform best, and how you can decide between them based on your own use case.

What Snowflake Openflow Gives You (and Where It Might Fall Short)

Snowflake Openflow is a service for building managed pipelines. You access it from Snowsight, and it runs on Apache NiFi. It is fully integrated with Snowflake’s authentication, permissions, and security, so you can use Snowflake roles, External Access Integrations, and common mechanisms like TLS and Private Link without adding any other technology.

Openflow is best suited to teams whose primary system is Snowflake, where data comes into, is processed within, and goes out from Snowflake. It supports change data capture from databases like PostgreSQL, MySQL, Oracle, and SQL Server, and it can also handle streaming data from technologies like Kafka and Kinesis. It’s particularly useful if your team prefers UI-based workflows over a code-heavy approach.

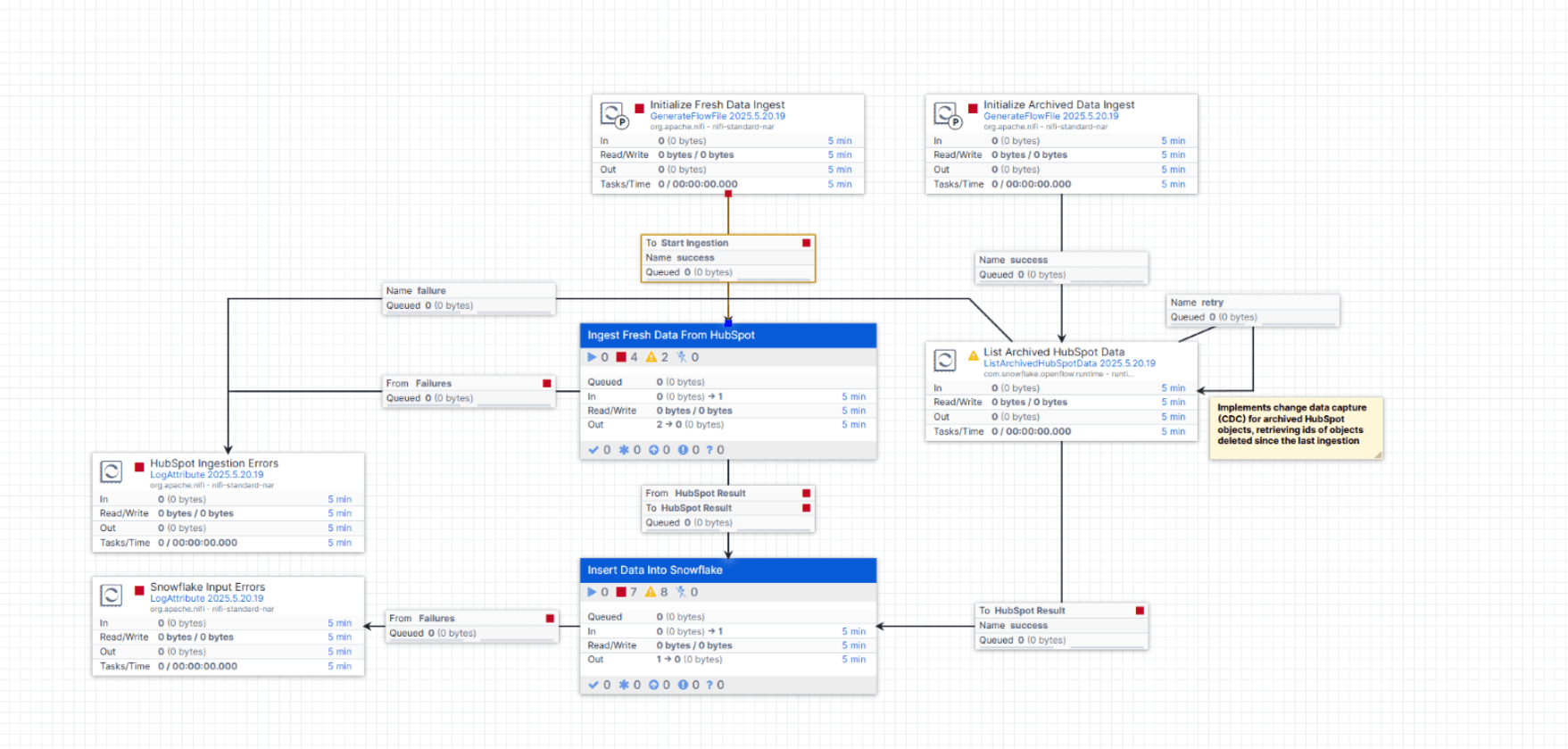

What the Openflow Interface Visually Represents

Unlike tools configured through files or SQL scripts, Openflow provides a visual, drag-and-drop interface: you build a pipeline by placing components on a canvas and connecting them. For example, you might drag a PostgreSQL CDC component onto the canvas, link it to a transformation component, and connect that to a component that loads data into Snowflake.

The advantage is obvious: no configuration files and no code. You set options like database credentials and table names with a few clicks, and the pipeline is ready. This makes Openflow appealing to SQL-savvy users, since it requires far less code than alternatives such as Airflow’s Python scripting.

However, this approach has limits. It handles simple pipelines such as CDC or SaaS connectors well, but sooner or later you’ll need to configure data processors whose options aren’t obvious from the UI. That means reading documentation and learning NiFi’s concepts, some of which are not intuitive. The more complex the pipeline, the less the visual interface helps.

The drag-and-drop interface is fine for initial setup, but managing complex pipelines through it in production is cumbersome and hard to justify if other tools are an option. Version control is limited: you either work exclusively in the UI or export configurations to JSON and manage them manually, which defeats the purpose of a visual interface.
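If you do export flows to JSON for version control, reviewing a change comes down to diffing two exports. The sketch below assumes an export shaped like a NiFi versioned flow: a top-level `flowContents` process group holding a `processors` list (entries with `identifier`, `name`, and `properties`) and nested `processGroups`. Check that layout against your own Openflow export before relying on it; the helper names are hypothetical.

```python
import json
from typing import Any, Iterator


def iter_processors(group: dict[str, Any]) -> Iterator[dict[str, Any]]:
    """Yield processors in a process group and, recursively, in its child groups."""
    # Assumed NiFi-style layout: optional "processors" and "processGroups" lists.
    yield from group.get("processors", [])
    for child in group.get("processGroups", []):
        yield from iter_processors(child)


def diff_flow_properties(old: dict[str, Any], new: dict[str, Any]) -> list[str]:
    """Describe processors added/removed and properties changed between two exports."""
    old_procs = {p["identifier"]: p for p in iter_processors(old["flowContents"])}
    new_procs = {p["identifier"]: p for p in iter_processors(new["flowContents"])}
    changes: list[str] = []
    for pid in sorted(old_procs.keys() - new_procs.keys()):
        changes.append(f"removed processor {old_procs[pid]['name']}")
    for pid in sorted(new_procs.keys() - old_procs.keys()):
        changes.append(f"added processor {new_procs[pid]['name']}")
    for pid in sorted(old_procs.keys() & new_procs.keys()):
        name = new_procs[pid]["name"]
        before = old_procs[pid].get("properties", {})
        after = new_procs[pid].get("properties", {})
        # Compare every property key that exists in either version.
        for key in sorted(before.keys() | after.keys()):
            if before.get(key) != after.get(key):
                changes.append(f"{name}: {key}: {before.get(key)!r} -> {after.get(key)!r}")
    return changes


def diff_flow_files(old_path: str, new_path: str) -> list[str]:
    """Load two exported JSON flow files and diff them."""
    with open(old_path) as f_old, open(new_path) as f_new:
        return diff_flow_properties(json.load(f_old), json.load(f_new))
```

Calling `diff_flow_files("flow_v1.json", "flow_v2.json")` in a CI check, for example, gives reviewers a readable summary instead of a raw JSON diff. It works, but it is exactly the kind of manual tooling a visual interface was supposed to make unnecessary.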

So while the interface is more approachable than code-centric alternatives like Airflow, it isn’t a complete solution: users still have to learn a new set of concepts, particularly around NiFi data processors.

Reality Check: What Works, What Doesn’t, and What’s Still Evolving

Openflow is still in its early days. It has only just reached general availability, so most potential users haven’t had time to test it. Large enterprises may wait to see whether it sticks around, and what its biggest issues turn out to be, before building their architecture around it.

Early adopters have had success, but mostly with relatively simple use cases. CDC from a single Postgres or MySQL database into Snowflake works. Pulling data from Salesforce, Google Ads, or Slack works, too. In these cases, Openflow does the job you would otherwise hand to Fivetran or another connector tool.

However, there are some limitations. If a source system doesn’t already have an Openflow connector, you’ll need to build a custom one, which takes significant effort. If your use case needs heavy transformation, the kind often handled by dbt, Openflow isn’t designed for that. Likewise, if you already run Fivetran or Airbyte with dbt, switching to Openflow offers no real benefit.

There’s also the deployment issue. BYOC (bring your own cloud) deployment is only supported on AWS. If your infrastructure runs on GCP or Azure and you don’t want to pay Snowflake to host the infrastructure, Openflow isn’t an option.

Finally, the pricing is complex. On top of Openflow’s own fees (which aren’t insignificant), you pay for the underlying Snowflake resources: management compute pools, any Snowpipe-based streaming, warehouse compute, and telemetry. There is no simple per-record or per-gigabyte fee, so you’ll likely need a trial to see what it will really cost.

What Estuary Provides (and How It Differs)

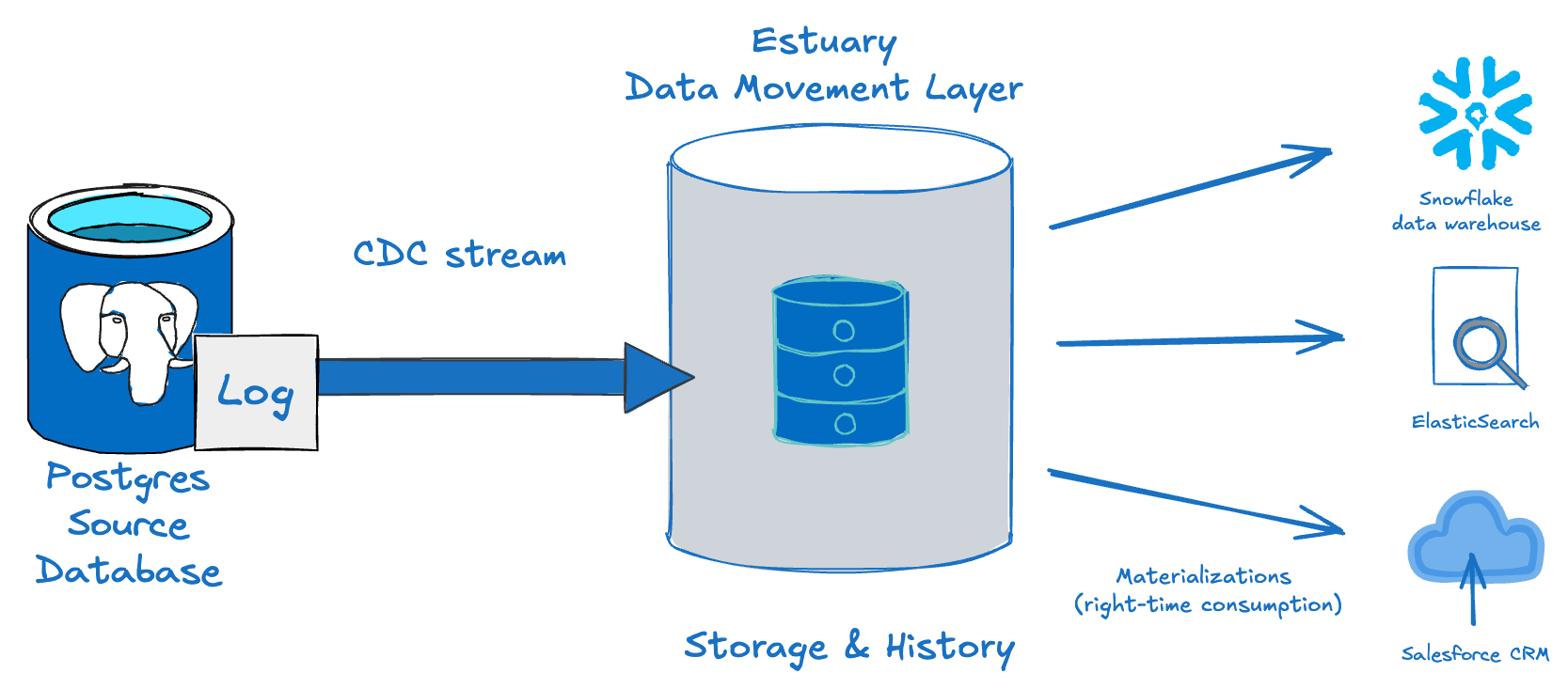

Estuary takes a different approach. Rather than focusing on a single data warehouse, it views data as a real-time stream, which can be transferred from one place to another. It works with both batch and streaming data, but the underlying architecture is based on a distributed log and a set of persistent data collections.

The fundamental idea is that Estuary decouples capturing data from delivering it. For example, data captured from a Postgres database becomes a reusable stream that can be sent to multiple systems without being re-extracted.

This approach has a number of advantages. You get low-latency CDC, the ability to replay the data, and the option to transform it before it reaches the final destination. Rather than creating a pipeline to each destination, you define it once and use it multiple times.
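The capture-once, deliver-many idea can be modeled in a few lines. This is a conceptual sketch in plain Python, not Estuary’s implementation: a `Collection` stands in for a durable collection, each hypothetical `Materialization` tracks its own read offset, and a destination added later catches up from the stored history instead of re-reading the source.

```python
from dataclasses import dataclass, field
from typing import Any


@dataclass
class Collection:
    """Append-only log standing in for a durable collection (conceptual model)."""
    docs: list[dict[str, Any]] = field(default_factory=list)

    def append(self, doc: dict[str, Any]) -> None:
        self.docs.append(doc)


@dataclass
class Materialization:
    """A destination that reads the collection at its own pace via an offset."""
    name: str
    collection: Collection
    offset: int = 0
    received: list[dict[str, Any]] = field(default_factory=list)

    def poll(self) -> int:
        """Deliver every document appended since the last poll; return the count."""
        new_docs = self.collection.docs[self.offset:]
        self.received.extend(new_docs)
        self.offset += len(new_docs)
        return len(new_docs)


def capture(source_rows: list[dict[str, Any]], collection: Collection) -> int:
    """Read each source change once and append it to the collection."""
    for row in source_rows:
        collection.append(row)
    return len(source_rows)


# One capture reads the source once...
orders = Collection()
source_reads = capture([{"id": 1, "total": 40}, {"id": 2, "total": 15}], orders)

# ...and two destinations consume it independently.
snowflake = Materialization("snowflake", orders)
clickhouse = Materialization("clickhouse", orders)
snowflake.poll()
clickhouse.poll()

# A destination added later backfills from stored history, not from Postgres.
late = Materialization("s3", orders)
late.poll()
```

Because each destination owns its offset, replay is just resetting that offset to an earlier position; the source database is never queried again.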

This is particularly useful as your system grows. If Snowflake is just one of several destinations, alongside Kafka, Databricks, or object storage, Estuary allows you to scale without rebuilding your pipelines.

Main Differences Between Openflow and Estuary

At a high level, the difference lies in the architecture.

Openflow revolves around Snowflake: data flows in, gets transformed, and flows out. Estuary, in contrast, treats data as constantly moving between systems, with no single end point.

This influences pipeline creation, data reuse, and architecture evolution.

| Category | Snowflake Openflow | Estuary |
|---|---|---|
| Architecture | Snowflake-centric | Stream-centric: Data is captured into durable collections and distributed from there |
| Data flow | Into Snowflake | Across systems: One capture feeds multiple destinations without re-extracting |
| Transformations | After ingestion | In-flight during streaming: SQL, Python, and TypeScript derivations are applied as data flows, before it reaches any destination |
| Destinations | Primarily Snowflake | Multiple systems: Snowflake, BigQuery, ClickHouse, Kafka, S3, and more |
| Replay/backfill | Limited | Built-in: Collections store history, enabling backfills to new destinations without re-querying the source |
| Delivery model | Less explicit | Exactly-once across streaming, batch, and mixed pipelines |
| Maturity | Newer, pilot-stage for many teams | Established for multi-destination architectures |
| UI/Workflow | Visual drag-and-drop (no-code) | No-code connector setup for straightforward pipelines, with code options for complex transformations and configuration |

Costs are harder to compare because Openflow draws on several Snowflake components, so they can’t be estimated at a glance.

For example, Openflow uses Snowpark Container Services compute pools for both the management layer and the runtime. The management layer runs continuously once it’s set up, regardless of how many pipelines are currently active.

Depending on your pipelines, there may also be ingestion costs from Snowpipe or Snowpipe Streaming, as well as warehouse and telemetry costs.

Since these expenses involve multiple services provided by Snowflake, numerous usage metrics need to be analyzed in order to understand them fully.

In contrast, Estuary’s cost model is a flat per-connector fee with a per-GB usage rate.
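To see why the two cost structures behave differently, it helps to write them down as formulas. The sketch below uses placeholder rates, not published prices from Snowflake or Estuary, and the hypothetical `OpenflowUsage` fields simply mirror the cost components listed above. The difference it captures is that Openflow’s management pool accrues cost every hour of the month, even with no active pipelines, while the Estuary model scales only with connector count and data volume.

```python
from dataclasses import dataclass

HOURS_PER_MONTH = 730  # average hours in a month (8,760 / 12)


@dataclass
class OpenflowUsage:
    """Hypothetical inputs; every rate here is a placeholder, not a real price."""
    mgmt_pool_credits_per_hour: float  # management pool: on whenever Openflow is set up
    runtime_credits_per_hour: float    # runtime compute while pipelines run
    runtime_hours: float
    ingest_credits: float              # e.g. Snowpipe / Snowpipe Streaming
    warehouse_credits: float
    telemetry_credits: float
    price_per_credit: float


def openflow_monthly_cost(u: OpenflowUsage) -> float:
    """Sum the Snowflake-side components, all billed in credits."""
    # Management compute accrues for every hour of the month, active pipelines or not.
    mgmt = u.mgmt_pool_credits_per_hour * HOURS_PER_MONTH
    runtime = u.runtime_credits_per_hour * u.runtime_hours
    credits = mgmt + runtime + u.ingest_credits + u.warehouse_credits + u.telemetry_credits
    return credits * u.price_per_credit


def estuary_monthly_cost(connectors: int, fee_per_connector: float,
                         gb_moved: float, price_per_gb: float) -> float:
    """Flat per-connector fee plus a per-GB usage rate."""
    return connectors * fee_per_connector + gb_moved * price_per_gb
```

Plug in the rates from your own Snowflake contract and Estuary plan; the structure, not the numbers, is the point. Note how many separate Openflow inputs you have to measure before you can estimate anything.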

Architecture Example: Single Destination vs. Reusable Stream

Here’s a simple scenario for you: get the data from Postgres and send it to Snowflake.

Normally, with Openflow, you would set up a CDC connector, load the data into Snowflake tables, and transform it in Snowflake. Later on, if you want the same data in another location, you would create another pipeline or use a reverse ETL approach.

With Estuary, it’s a bit different. You capture the data once into a stream (a collection). Later on, you can send the same data to multiple destinations without touching the source database again.

```yaml
# Capture: read public.orders once via CDC into the your-org/orders collection.
captures:
  your-org/postgres-capture:
    endpoint:
      connector:
        image: ghcr.io/estuary/source-postgres:v3
        config:
          address: your-db-host:5432
          user: your-db-user
          database: your-db-name
          credentials:
            auth_type: UserPassword
            password: your-db-password
    bindings:
      - resource:
          table: public.orders
        target: your-org/orders

# Materializations: both read from the same your-org/orders collection.
materializations:
  your-org/snowflake-mat:
    endpoint:
      connector:
        image: ghcr.io/estuary/materialize-snowflake:dev
        config: path/to/snowflake-config.yaml
    bindings:
      - source: your-org/orders
        resource:
          table: orders

  your-org/clickhouse-mat:
    endpoint:
      dekaf:
        config:
          token: your-auth-token
          strict_topic_names: false
          deletions: kafka
        variant: clickhouse
    bindings:
      - source: your-org/orders
        resource:
          topic_name: orders
```

The key idea here is to:

- Fetch the data once from the PostgreSQL database using CDC.
- Load the data into a collection (`your-org/orders`).
- Use the same collection to materialize the data into multiple destinations.

You’re not querying Postgres again, nor are you creating new pipelines. You are reusing the same data stream.

```plaintext
PostgreSQL
    │
    ▼
[Estuary Capture (CDC)]
    │
    ▼
Collection (orders)
    │
    ├──► Snowflake
    ├──► ClickHouse
    └──► Kafka / others
```

*Data is captured once from PostgreSQL and stored as a collection. Multiple destinations consume from the same stream without adding load to the source system.*

Instead of creating a pipeline for each destination, you’re creating a single definition of the data and directing it where it needs to go.

Transformations: Routing vs. Streaming Logic

Another difference lies in the way in which transformations are handled.

With Openflow, transformations typically happen in one of two places: within the NiFi pipeline or after the data lands in Snowflake. In practice, data is usually ingested first and then transformed as part of the warehouse process.

With Estuary, however, transformations can happen earlier in the pipeline. You can use SQL (SQLite), TypeScript, or Python (currently limited to private BYOC data planes) to transform data in flight, between capture and materialization. That way, clean data lands in Snowflake, and you don’t pay warehouse costs for data you never intended to use there.

For example:

```yaml
# This `derive` block sits under a derived collection's definition,
# which also declares that collection's schema and key.
derive:
  using:
    sqlite: {}
  transforms:
    - name: fromOrders
      source: your-org/orders
      shuffle: any
      # Document fields are referenced as $-prefixed parameters.
      lambda: |
        SELECT $user_id AS user_id,
               LOWER($email) AS normalized_email,
               $created_at AS created_at;
```

This SQLite transformation reads from the orders collection and produces a new derived collection with the transformed data. That collection can be used like any other, which means you can materialize it into multiple destinations without reapplying the transformation.
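To see what the lambda computes for a single document, you can run the same `SELECT` locally with Python’s built-in `sqlite3` module. This only approximates how Estuary binds parameters: the sketch assumes each top-level document field is bound to the matching `$name` parameter, uses a version of the lambda with every field referenced that way, and the sample order document is made up.

```python
import sqlite3
from typing import Any

# The lambda, with every document field referenced as a $-parameter.
LAMBDA = """
SELECT $user_id AS user_id,
       LOWER($email) AS normalized_email,
       $created_at AS created_at;
"""


def apply_lambda(doc: dict[str, Any]) -> list[dict[str, Any]]:
    """Run the lambda against one document and return the output rows as dicts."""
    conn = sqlite3.connect(":memory:")
    try:
        # With a dict, sqlite3 binds $user_id to doc["user_id"], and so on.
        cursor = conn.execute(LAMBDA, doc)
        columns = [col[0] for col in cursor.description]
        return [dict(zip(columns, row)) for row in cursor.fetchall()]
    finally:
        conn.close()


# Hypothetical sample document from the orders collection.
sample = {"user_id": 7, "email": "Ada@Example.COM", "created_at": "2024-05-01T12:00:00Z"}
print(apply_lambda(sample))
# → [{'user_id': 7, 'normalized_email': 'ada@example.com', 'created_at': '2024-05-01T12:00:00Z'}]
```

A quick local check like this is a cheap way to catch mistakes such as referencing `email` instead of `$email`, which SQLite would reject as an unknown column.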

You can find more details on working with derivations in Estuary’s documentation.

When to Use Snowflake Openflow vs. Estuary

If you’re already using a setup centered around Snowflake, Openflow can be a great option. It integrates well when most of your data is stored in Snowflake and when your rules and permissions rely on Snowflake roles. It’s particularly good if you want a managed experience, minimal infrastructure overhead, and a visual tool to build your data pipelines. With Openflow, you get all of that within a single environment for ingestion, transformation, and serving.

On the other hand, if your setup is more complex, Estuary may be the better choice. It offers predictable pricing, delivers data to many platforms, and gives you the flexibility to adapt as your environment evolves.

Data is stored once, and it’s durable. You can add new destinations at any point without having to re-ingest it from the source: new systems simply read from the existing data stream. Combined with exactly-once delivery, this makes both streaming and batch pipelines more reliable and predictable. Estuary is also a great option if you need to use features like replay and backfill.
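One common way to get exactly-once results at a destination is to commit the data and a checkpoint (the last applied log offset) in the same transaction, so a redelivered batch is recognized and skipped. The sketch below illustrates that general technique with SQLite; it is not a description of Estuary’s internals, and the table and function names are hypothetical.

```python
import sqlite3


def open_destination() -> sqlite3.Connection:
    """Create an in-memory destination with a data table and a one-row checkpoint."""
    conn = sqlite3.connect(":memory:")
    conn.execute("CREATE TABLE orders (id INTEGER PRIMARY KEY, total INTEGER)")
    conn.execute("CREATE TABLE checkpoint (id INTEGER PRIMARY KEY CHECK (id = 1), last_offset INTEGER)")
    conn.execute("INSERT INTO checkpoint VALUES (1, -1)")  # -1: nothing applied yet
    conn.commit()
    return conn


def apply_batch(conn: sqlite3.Connection, batch_offset: int, rows: list[tuple[int, int]]) -> bool:
    """Apply a batch at most once; return False if it was already committed."""
    with conn:  # the row writes and the checkpoint update commit (or roll back) together
        (last,) = conn.execute("SELECT last_offset FROM checkpoint").fetchone()
        if batch_offset <= last:
            return False  # redelivered batch: already applied, skip it
        conn.executemany("INSERT OR REPLACE INTO orders (id, total) VALUES (?, ?)", rows)
        conn.execute("UPDATE checkpoint SET last_offset = ?", (batch_offset,))
    return True


dest = open_destination()
apply_batch(dest, 0, [(1, 40), (2, 15)])  # applied
apply_batch(dest, 0, [(1, 40), (2, 15)])  # a retry of batch 0 is skipped
apply_batch(dest, 1, [(2, 20)])           # later change to order 2 is applied
```

Because the checkpoint only advances when the data commits, a crash between the two can never leave the destination with data applied but the offset unrecorded, or the reverse.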

In these cases, having a separate integration layer away from your data warehouses will provide you with greater flexibility in the future, particularly as your data stack grows or changes.

Can They Be Used Together?

These tools are not mutually exclusive.

You can use Openflow to integrate with Snowflake, while having Estuary as a real-time backbone across different systems. In that setup, Snowflake will remain a key destination but will no longer be the only one.

Most teams will tend to favor one approach or the other, depending on how their architecture is built.

Conclusion

Openflow matches Snowflake's approach to security, setup, and overall ecosystem. As such, it’s great for those teams that want everything to live within Snowflake.

Estuary, on the other hand, is designed for systems where data is flowing constantly across multiple platforms. Reuse, real-time processing, and flexibility are the main focus here.

Ultimately, it's a simple choice: do you want your integration layer to live within a data warehouse, or to operate independently, as a streaming backbone?

In short, if you are fully Snowflake-based and use common, straightforward source systems, Openflow is a good choice. But, if your system is expanding beyond Snowflake, Estuary gives you the room to grow without re-creating your integration pipelines.

FAQs

Does Snowflake Openflow support change data capture (CDC) from PostgreSQL?

How does Estuary handle sending data to multiple destinations from a single source?

How does Snowflake Openflow pricing work, and how does it compare to Estuary?

When should you choose Estuary over Snowflake Openflow?

About the author

I am a dynamic and results-driven data engineer with a strong background in aerospace and data science. Experienced in delivering scalable, data-driven solutions and in managing complex projects from start to finish. I am currently designing and deploying scalable batch and streaming pipelines at Banco Santander. I also create technical content on LinkedIn and Medium, where I share daily insights on data engineering.