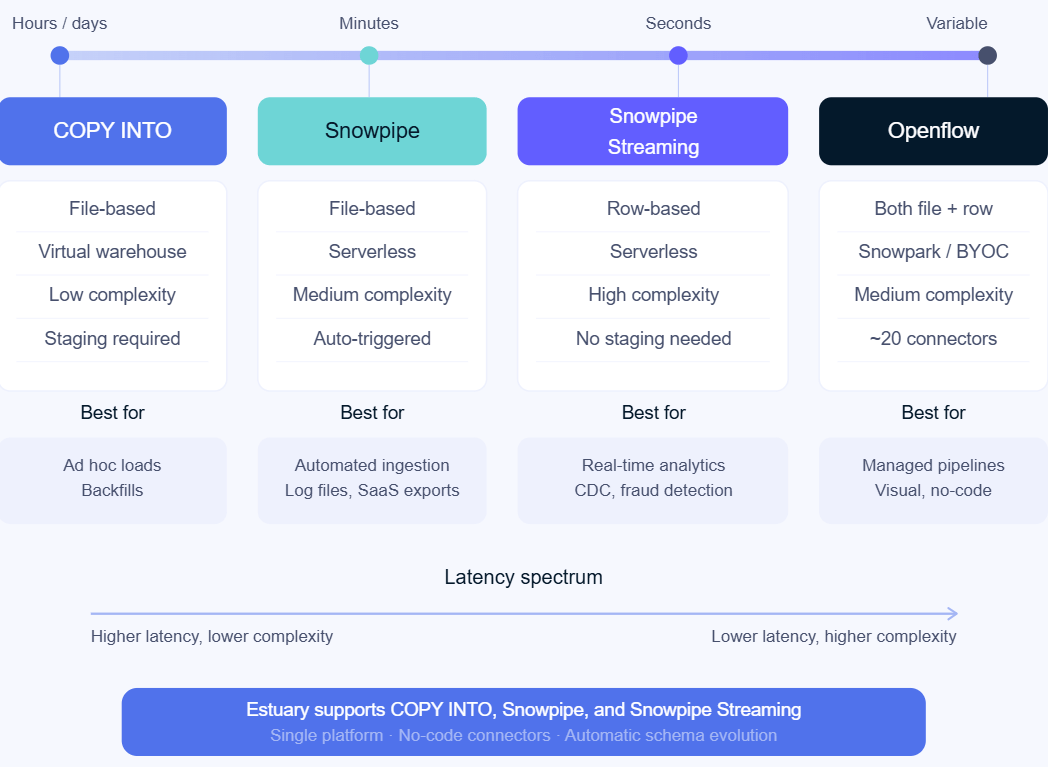

Snowflake supports several native ingestion methods: COPY INTO for bulk file loading, Snowpipe for automated micro-batch ingestion, Snowpipe Streaming for real-time row-level delivery, and a managed service, Openflow, that can utilize these building blocks. Each is designed for a different latency tier.

That range is genuinely useful: it means you can match pipeline design to what each workflow actually needs rather than engineering everything to the same spec. But having options means making choices, and the wrong choice has real cost implications that compound quietly over time.

We enable data pipelines for Snowflake at Estuary, which means we've seen these ingestion tradeoffs play out across hundreds of customer environments. Some teams jump into DIY Snowpipe Streaming integrations for real-time data without accounting for the associated engineering lift, while other teams quietly burn warehouse credits on batch workflows that aren't optimized. This guide reflects what we've learned from that experience.

This post breaks down each method: how it works, what it costs, and when it's the right fit. If you're building a new pipeline or auditing an existing one, this is the framework we use to make that call. For a deeper technical walkthrough of each method's implementation details, see our Snowflake data ingestion guide.

Key Takeaways

Use COPY INTO for bulk loads, backfills, and workflows where daily or scheduled ingestion is sufficient.

Use Snowpipe when you need automated ingestion from files with minute-level latency and minimal infrastructure management.

Use Snowpipe Streaming for real-time event data, CDC pipelines, and use cases that require second-level latency.

Use Openflow when you want a managed, visual pipeline builder within Snowflake and your sources fit its connector ecosystem.

Production architectures can combine multiple methods to match different latency and workload requirements.

The main tradeoffs across all methods are between latency, cost efficiency, and engineering complexity.

Method 1: Bulk Loading with COPY INTO

COPY INTO is the most fundamental way to load data into Snowflake. It is a SQL command that moves data from a staging location (typically a cloud storage bucket like S3, GCS, or Azure Blob) into a Snowflake table.

How it works

Data must already be in a staging location before you run the command. You stage the files, run the COPY INTO query, and Snowflake loads the data. A simple example loading from S3 might look like:

sqlCOPY INTO snowflake_table

FROM 's3://your-bucket/path'

STORAGE_INTEGRATION = your_integration_name

FILE_FORMAT = (TYPE = 'CSV');You'll need a virtual warehouse running to execute the load, and you're responsible for sizing it appropriately and suspending it when it's not in use. In practice, forgetting to configure auto-suspend is one of the most common sources of runaway Snowflake costs we see, especially for teams new to the platform.

Cost model:

COPY INTO uses Snowflake virtual warehouse resources, so you'll need to provision a correctly-sized warehouse. Assuming you auto-suspend the warehouse as necessary, total credit consumption for COPY INTO workloads is then based on:

- The size of your warehouse

- How long the query runs

- How often you run your batches

When to use COPY INTO

- Ad hoc or infrequent data loads

- Historical backfills

- Workflows where daily or weekly data refresh is genuinely sufficient

- Teams getting started with Snowflake who want a simple foundation

Where COPY INTO falls short

COPY INTO is a building block, not a complete pipeline. Turning it into a robust, automated batch workflow requires additional engineering: scheduling the job, managing new file detection, handling failures and retries, and monitoring for gaps. What starts as a simple SQL query can become a meaningful maintenance burden at scale.

It also has no built-in change data capture (CDC) awareness. If your source data changes incrementally, you are responsible for tracking which files are new, deduplicating records, and managing merge logic yourself.

Method 2: Continuous Micro-Batches with Snowpipe

Snowpipe automates the COPY INTO pattern. Rather than running the command manually or on a fixed schedule, Snowpipe listens for new files arriving in your staging location and ingests them automatically, typically within a few minutes of arrival.

How Snowpipe works

You create a pipe object with an associated COPY statement that defines where data should go, then configure event notifications from your cloud storage to trigger ingestion when new files land. Snowpipe uses serverless compute rather than a user-managed warehouse, so you don’t need to worry about a warehouse sitting idle between loads.

A simple pipe creation statement looks like:

sqlCREATE PIPE pipe_name AUTO_INGEST = TRUE AS

COPY INTO snowflake_table

FROM @stage

FILE_FORMAT = (TYPE = 'CSV');

Cost Model:

Snowpipe is billed on a per-GB basis. Text files are charged based on their uncompressed size, while binary files are charged based on their observed size.

When to use Snowpipe

- Workflows that need fresher data than a daily batch but don't require true real-time

- Teams who want automated ingestion without managing a streaming SDK

- Use cases where data arrives in files (from SaaS exports, application logs, etc.)

- Latency in the minutes range is acceptable

Where Snowpipe falls short

Snowpipe latency is typically in the several-minute range, which works for many use cases but rules it out for anything requiring near-instant freshness. It's also file-based, so it doesn't naturally fit row-level or event-driven data streams. And while the serverless model simplifies compute management, it comes with its own cost structure (per-file or per-GB depending on your Snowflake edition) that can add up with high-frequency small files.

Method 3: Real-Time Streaming with Snowpipe Streaming

Snowpipe Streaming is Snowflake's lowest-latency native ingestion option. Unlike COPY INTO and Snowpipe, which are file-based, Snowpipe Streaming ingests data at the row level, no intermediate staging location required.

How Snowpipe Streaming works

You integrate using one of Snowflake's SDKs (Java or Python). Open a channel and stream rows directly into a Snowflake table. Latency is in the seconds range, making it suitable for genuinely real-time use cases.

There are two versions to be aware of:

- Classic Snowpipe Streaming is more established, supports earlier Java versions, does not require a pipe object. However, has a limited lifespan: Snowflake has announced they will deprecate Classic in favor of High Performance in a future release.

- High Performance Snowpipe Streaming is Snowflake's recommended option for new implementations; supports higher throughput (up to 10 GB/s per Snowflake's documentation), requires a pipe object with a COPY statement, and offers a simplified pricing structure.

Cost Model:

Snowpipe Streaming costs are dependent on version. Classic charges for serverless compute and the number of client instances, while High Performance uses a newer per-GB flat fee model.

When to use Snowpipe Streaming

- Use cases that genuinely require sub-minute data freshness

- Event-driven pipelines where data arrives as a continuous stream rather than files

- Real-time analytics, fraud detection, live operational dashboards

- Applications that need to act on data as soon as it's generated

Where Snowpipe Streaming Falls Short

Snowpipe Streaming requires implementing and maintaining an SDK integration and that complexity is real. You'll need to handle authentication, channel management, schema validation, error recovery, and monitoring.

We've seen teams underestimate this investment significantly as it is common for the initial SDK integration to go smoothly. Once the integration strays from the happy path, however, incorrect error handling, inconsistent schema validation, and schema evolution can create outages that take days to recover from. This is manageable for a team with streaming experience, but it's a meaningful investment. For a deeper look at how Snowpipe Streaming works under the hood, including Classic vs High Performance architecture, see our Snowpipe Streaming guide.

Snowpipe Streaming is also strictly a real-time tool. If you later need to ingest data as a scheduled batch, whether for cost or clean merging behavior, you're managing a second integration alongside it.

Method 4: Managed Ingestion with Openflow

Openflow is Snowflake's newest native offering, a managed, visual pipeline builder built on Apache NiFi and accessible directly through Snowsight. It provides a more user-friendly way to configure and run data ingestion pipelines without writing low-level code.

How Openflow works

You create an Openflow project in Snowsight, configure source connections (database, SaaS, or streaming), and build a data flow on a visual canvas by connecting source and target processors. Under the hood, Openflow uses Snowflake's existing ingestion methods (bulk COPY, Snowpipe, or Snowpipe Streaming) depending on how you configure the target processor.

Cost Model:

Openflow’s pricing can be complex, making it difficult to estimate final costs. This is because Snowflake’s managed pipeline service touches on so many different Snowflake components. BYOC and Snowflake deployments also have their own cost models, so it’s best to check Snowflake’s docs for details.

To provide an example, a Snowflake deployment for Openflow can rack up costs for:

- Compute based on instance size

- Snowpark infrastructure

- Data ingestion and telemetry data ingestion

- Separate connector charges (such as for an Oracle license)

When to use Openflow

- Teams who want a managed pipeline experience without leaving the Snowflake ecosystem

- Use cases where Openflow's available connectors cover your sources

- Organizations that prefer a visual interface for pipeline configuration

Where Openflow falls short

Openflow is promising but still has growing pains. Because it's so new, we'd recommend piloting it in a non-critical pipeline before committing to it for production workloads.

The current Snowflake-managed connector library covers around 20 sources, which is a small fraction of what more established third-party platforms offer. It's currently limited to AWS and Azure deployments (BYOC is AWS only). And transformation capabilities in the visual canvas are limited compared to code-based approaches.

If your sources are covered and your deployment requirements fit within AWS or Azure, Openflow is worth evaluating. For a full breakdown of Openflow's architecture, deployment models (SPCS vs BYOC), and how it compares to third-party alternatives, see our Snowflake Openflow deep dive. For organizations with broad connector needs or multi-cloud requirements, it's likely a partial solution at best today.

Comparing Snowflake’s Ingestion Methods

| COPY INTO | Snowpipe | Snowpipe Streaming | Openflow | |

|---|---|---|---|---|

| Latency | Hours to days | Minutes | Seconds | Varies by method |

| Data granularity | File-based | File-based | Row-based | Both |

| Staging required | Yes | Yes | No | Optional |

| Compute model | Virtual warehouse | Serverless | Serverless | Snowpark/BYOC |

| Setup complexity | Low | Medium | High | Medium |

| Supported sources | Staging files | Staging files | SDK-dependent | ~20 native connectors |

| Best for | Ad hoc, backfills | Automated micro-batch | Real-time use cases | Managed pipelines |

In short: COPY INTO is best for ad hoc and backfill loads where latency does not matter. Snowpipe fits automated file-based micro-batch workflows with minute-level freshness. Snowpipe Streaming serves sub-second real-time ingestion for CDC and event streams. Openflow works for teams who want a managed visual pipeline within Snowflake's ecosystem but can accept its current connector and deployment limitations.

The Answer Is Often More Than One Method

One important thing this comparison reveals: the right ingestion method for your organization is probably not a single choice. A well-designed Snowflake data stack typically uses different methods for different data streams, matching the latency tier to what each workflow actually needs rather than defaulting to one approach across the board.

You might use Snowpipe Streaming for your product events and user activity data, scheduled COPY INTO for financial exports that only need daily refresh, and Snowpipe for audit logs that benefit from micro-batch freshness. Each method matches what the workflow actually needs.

The challenge is that managing multiple ingestion methods yourself, which can include separate implementations, separate monitoring, and separate failure handling, multiplies your operational surface area.

This is where third-party integration platforms provide the most value: a single tool can deliver data to Snowflake using the appropriate ingestion method for each source, with unified configuration, monitoring, and schema management across all pipelines. Rather than building and maintaining three different integrations, you configure one pipeline per source and let the platform handle the delivery mechanism.

How Hidden Costs Add Up

The next post in this series breaks down the total cost of a Snowflake integration, whether native or third-party, how hidden costs can add up, and why choosing the wrong method (or the wrong tool) will quietly cost you far more than the platform price tag suggests.

Estuary supports all of Snowflake's basic underlying ingestion methods — bulk COPY INTO, Snowpipe, and Snowpipe Streaming — from a single platform with no-code connectors and automatic schema evolution. Try it free or download the complete Snowflake integration guide for detailed setup walkthroughs.

FAQs

What is the difference between Snowpipe and Snowpipe Streaming?

When should I use COPY INTO instead of Snowpipe?

Can I use multiple Snowflake ingestion methods together?

About the author

Emily is an engineer and technical content creator with an interest in developer education. At Estuary, she works with data pipelines for both streaming and batch data and finds satisfaction in transforming a mess of information into usable data. Previous roles familiarized her with FinTech data and working closely with REST APIs.