During ingestion, Snowflake data quality often breaks down due to late-arriving records, duplicates, and unexpected schema changes. When these issues go unmanaged, they quietly inflate metrics, delay dashboards, and break downstream models.

Let’s illustrate this with an example. Meet Sarah. She's a hard-working, passionate young data engineer who landed her first tech job in an e-commerce company called OptimaCart a couple of months ago. She’s been working on a data ingestion pipeline to move data from the company’s PostgreSQL database into a downstream Snowflake data warehouse.

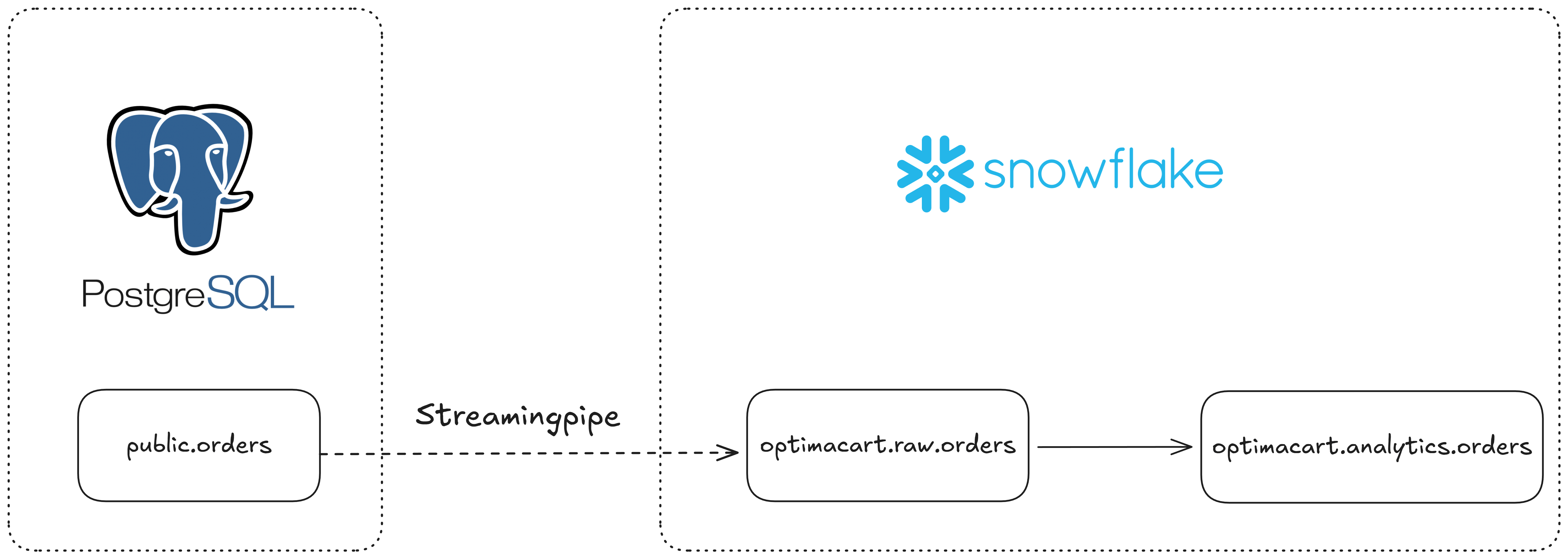

Sarah started out by loading the change data capture (CDC) from the PostgreSQL tables into Snowflake via Snowpipe Streaming. Her pipelines were running smoothly. The populated tables in Snowflake were used by various teams downstream. Data analysts built dashboards, data scientists built machine learning models; everyone was happy.

Until the moment when everything went wrong.

As an on-call member, Sarah got paged when her pipelines broke at 2 a.m. on a Saturday morning. She jumped directly into the investigation.

- The reporting dashboards hadn’t been refreshed for two days. Data came really late into the system.

- The cost alert had been fired a couple of times. Storage cost had almost doubled since last week.

- There was also a ticket from the data scientists asking why their model trainings were failing.

At that moment, Sarah realized that she hadn’t given much thought to data quality. And bad data was causing her and her company a lot of trouble.

Data quality is “the silent killer” of data systems.

According to a 2025 report from IBM, 43% of chief operations officers claimed that data quality is their most significant data priority. And another study by Forbes found that over a quarter of organizations estimated a $5 million annual loss due to poor data quality.

Garbage in, garbage out. No matter how reliable and robust your pipelines are, a single malformed record can break things out.

In data engineering, data quality refers to the accuracy, completeness, consistency, timeliness, and relevance of data. It is the degree to which it meets the requirements of its intended use.

With Snowflake, data quality can be monitored, detected, and mitigated directly in the ingestion phase. There are several methods that can help you bring outside data into Snowflake: COPY INTO, Snowpipe, and Snowpipe Streaming.

In this article, we’ll explore how to handle data quality in Snowflake data ingestion together with Sarah. We will look into Snowflake's capabilities to deal with late data, duplicate data, and schema drift.

In addition, using Estuary, the unified, right-time data movement platform, we'll see how easy it is to handle late data, duplicates, and schema change when ingesting data into Snowflake.

Sarah will apply these techniques to her existing and future pipelines to produce high-quality, trustworthy datasets. She'll rely on schema evolution, data metric functions (DMFs), dynamic tables, and so on. These will keep downstream teams happy, regardless of what happens in the data source.

Key Takeaways

Snowflake ingestion data quality issues usually involve late-arriving data, duplicates, and schema drift. If unmanaged, these can break dashboards and downstream models.

Dynamic tables with TARGET_LAG help manage late data, keeping analytics tables consistent even when events arrive out of order.

QUALIFY with ROW_NUMBER() enables clean deduplication, ensuring only one record per logical business key is retained.

Schema evolution prevents pipeline failures when new columns are introduced in the source system.

Data Metric Functions (DMFs) support proactive monitoring, helping detect freshness, volume, and null-rate issues early.

Upstream validation can further reduce cleanup work, preventing bad or duplicate records from landing in Snowflake in the first place.

The Flash Sale: A Data Engineer’s Nightmare

Like any other e-commerce company, OptimaCart wanted to boost its sales with a great conversion. It launched a flash sale campaign during which the website traffic skyrocketed.

And that’s when Sarah’s nightmare began.

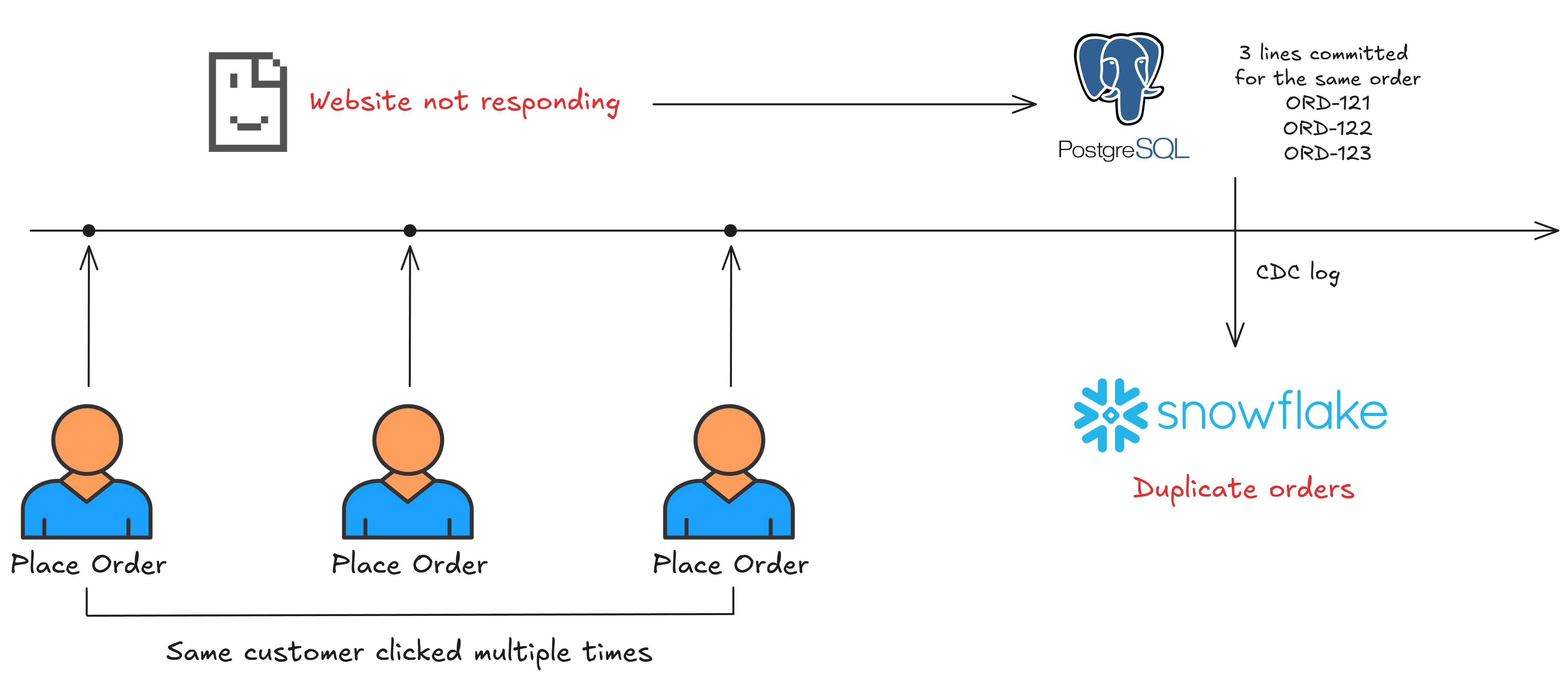

The fragile data ingestion pipelines from PostgreSQL to Snowflake experienced some issues at the beginning of the promotion period. Because of the high-concurrency web traffic, the simultaneous reads and writes brought duplicates into the Snowflake data warehouse.

In addition, shoppers made purchases on their mobile devices with unstable connections, which caused data to arrive in the system really late. Finally, in the middle of the campaign, the marketing team decided that they needed a discount_code column to better track the conversion.

The backend team pushed this change without informing the data engineer first. As a result, the schema change broke many downstream pipelines.

Sarah got constantly paged because of all of these data quality problems. Instead of working toward a more reliable solution, she found herself in a constant firefighting mode. OptimaCart’s executive team wasn’t happy about this, and they ultimately tasked her with addressing data quality using the native capabilities of Snowflake.

Late-Arriving Data in Snowflake and the Ghost Inventory Problem

Late-arriving data is unavoidable in distributed systems. In an event-driven architecture, data engineers sometimes have to settle for the tradeoff between eventual consistency and strong consistency.

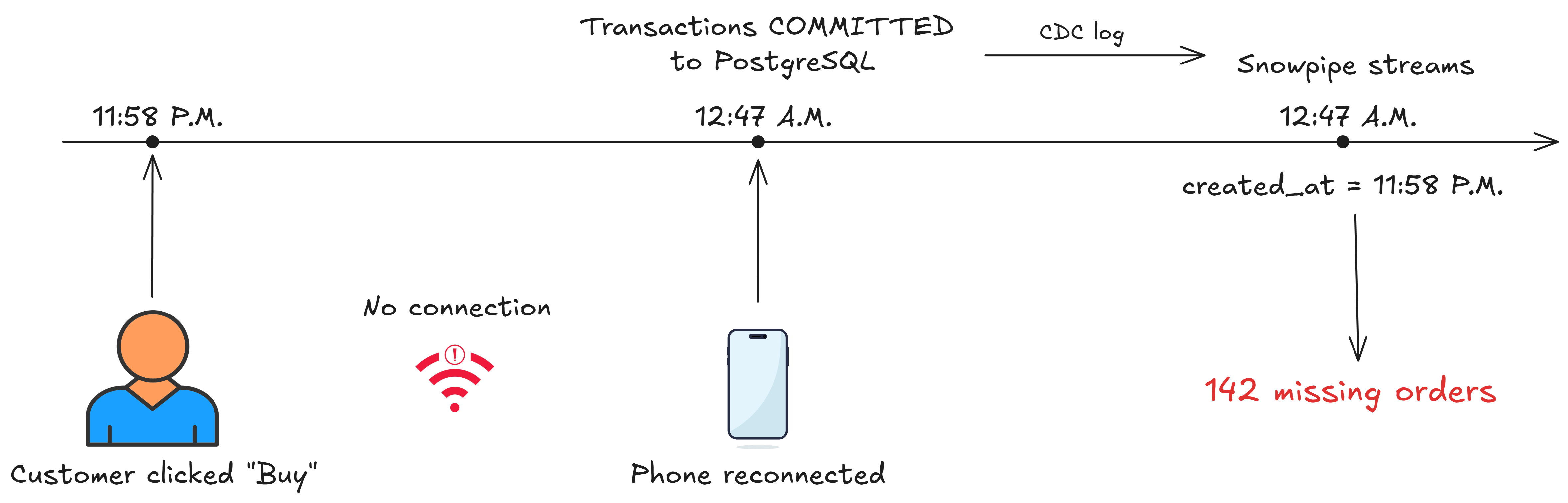

In Sarah’s case, the data sent by shoppers with a poor internet connection arrived out of chronological order. This led to discrepancies between different systems.

The financial dashboard was showing a total number of 1,389 orders, while the analytics dashboard only showed 1,247. These missing numbers were being questioned by the analytics team, and Sarah had to understand where the other 142 orders went.

She pulled out her laptop and started investigating.

sql-- PostgreSQL source count

SELECT COUNT(*), MAX(created_at)

FROM public.orders

WHERE created_at >= '2025-01-25 18:00:00';

-- Result: 1,389 orders | Latest: 2025-01-26 02:47:33

-- Snowflake analytics table count

SELECT COUNT(*), MAX(created_at)

FROM optimacart.analytics.orders

WHERE created_at >= '2025-01-25 18:00:00';

-- Result: 1,247 orders | Latest: 2025-01-26 01:22:18The truth is that some of the latest orders from PostgreSQL didn’t appear in the Snowflake data warehouse. There was an hour difference between the timestamps. Sarah found out that the analytics dashboard was using the created_at column rather than the actual time when the data was received. During peak traffic, some orders took longer to arrive in Snowflake even though they were created in PostgreSQL.

Luckily, Snowflake has a solution for late data, and by using a dynamic table with TARGET_LAG, Sarah could anticipate the orders that might come with some delay. But first, she had to understand what dynamic tables were and how target lag could help her.

According to Snowflake’s documentation, dynamic tables are tables that automatically refresh based on a defined query and target freshness, which is set by the target lag configuration. With target lag, Sarah could control how up-to-date she wanted the data to be. If there was a delay between the source and the target table, she could set a target lag to account for the discrepancy.

Based on the diagram above, here is Sarah’s current approach to creating the orders view for the analytics:

sqlCREATE OR REPLACE VIEW optimacart.analytics.orders AS

SELECT

order_id,

user_id,

product_id,

amount,

created_at,

status

FROM raw.orders

WHERE status = 'completed';Instead of keeping the same view, which would not work in case of late data, Sarah could take advantage of Snowflake dynamic tables:

sqlCREATE OR REPLACE DYNAMIC TABLE optimacart.analytics.orders

TARGET_LAG = '1 hour'

WAREHOUSE = optimacart_wh

AS

SELECT

order_id,

user_id,

product_id,

amount,

created_at,

status,

-- Metadata for observability

_metadata$row_created_at AS ingested_at,

DATEDIFF('second', created_at, _metadata$row_created_at) AS lag_seconds,

_cdc_operation,

-- Add a processing timestamp

CURRENT_TIMESTAMP() AS processed_at

FROM raw.orders

WHERE

_cdc_operation IN ('INSERT', 'UPDATE')

AND status = 'completed'With TARGET_LAG, she could tell Snowflake to keep the analytics.orders table fresh within 1 hour of the source data changing. This can be done because Snowflake automatically:

- Monitors the source table

raw.ordersfor changes; - Performs incremental refreshes, updating the target table only when there are new or changed rows since the last refresh;

- Optimizes scheduling: if no changes are detected, it will skip the refresh, thereby keeping the operational cost low.

Now, once the new dynamic table was refreshed, Sarah wanted to check whether she would obtain the same number of orders.

sql-- Check the dynamic table

SELECT COUNT(*)

FROM optimacart.analytics.orders

WHERE created_at >= '2025-01-25 18:00:00';

-- Result: 1,389 orders ✅We have seen how a simple dynamic table with a target lag configuration could help Sarah fight against late-arriving data. Now, there’s another enemy for her to conquer: data duplication.

Eliminating Duplicates in Snowflake Using QUALIFY and ROW_NUMBER

In a high-traffic scenario on its e-commerce website, OptimaCart’s backend server suffered API retries. An angry shopper who was constantly clicking on the “Place Order” button when it was unresponsive actually sent multiple records to the public.orders PostgreSQL table. Those were the same order, but with different IDs, which caused Sarah a headache.

She started another investigation.

sqlSELECT

order_id,

COUNT(*) as duplicate_count,

SUM(total_amount) as inflated_revenue

FROM optimacart.analytics.orders

WHERE order_date >= '2025-01-27'

GROUP BY order_id

HAVING COUNT(*) > 1

ORDER BY duplicate_count DESC

LIMIT 10;

plaintextORDER_ID | DUPLICATE_COUNT | INFLATED_REVENUE

------------|-----------------|------------------

ORD-8472 | 3 | $267.00

ORD-8513 | 2 | $290.00

ORD-8519 | 4 | $516.00

ORD-8527 | 2 | $178.00

ORD-8534 | 3 | $357.00

...To deduplicate the orders, Sarah had to use another event-based table, where each of the customer actions had been captured from the e-commerce website. A single customer made multiple orders due to retries, which was a typical application-level duplicate. Sarah would have to use a composite key to create a unique combination and then ROW_NUMBER() to rank the records.

Snowflake has a built-in capability that could help Sarah do that directly in her dynamic table.

In their documentation, Snowflake claims that QUALIFY does with window functions what HAVING does with aggregate functions and GROUP BY clauses.

Sarah discovered that Snowflake’s QUALIFY clause is like a supercharged WHERE clause that works with window functions. This should help Sarah simplify her query logic and make the code much more comprehensible.

Looking back at her data models, Sarah decided to use a combination of three columns to deduplicate the events: event_id, session_id, and event_timestamp. This approach guarantees that she selected the same event from the same customer for the same order.

sqlCREATE OR REPLACE DYNAMIC TABLE optimacart.events.cart_events

... # capture late data, similar to orders

-- Deduplication strategy: composite key (multiple columns)

QUALIFY ROW_NUMBER() OVER (

PARTITION BY

event_id, -- Client UUID (can duplicate due to retries)

session_id, -- User session

event_timestamp -- Exact millisecond

ORDER BY

_metadata$row_created_at DESC -- Latest ingestion wins

) = 1;Here, QUALIFY just filters directly on the window function result. No subquery, no materialized row_version column. With Snowflake, deduplication becomes refreshingly simple.

Sarah also added the ORDER BY to have a tie breaker and keep only the latest ingested row for each event. Now, she needed to match the event with the orders and create a orders_deduplicated table where every order is unique.

At this point, everything was working, from late data to duplication. However, in the middle of the flash sale campaign, the marketing team decided to change the data schema. And just like that, she was faced with yet another data quality challenge.

Taming Schema Drift in Snowflake Ingestion

To better track customer engagement during the campaign, the marketing team needed yet another business indicator. They wished to have a discount code information in the orders so that they could distinguish between the customers who had seen the advertisement and those who had not.

The backend team was onboard with this. They pushed for a change on the PostgreSQL table to add discount_code to the orders table. This was done without any communication to the data engineering team. It was, of course, an ordeal for Sarah.

She arrived at work only to discover her ingestion pipelines had failed with:

jsonError: Column 'discount_code' not found in target table optimacart.raw.ordersSarah knew that she could add the column manually to the raw table and then propagate the change to downstream tables.

sqlALTER TABLE optimacart.raw.orders

ADD COLUMN discount_code VARCHAR(50);But she needed a better way: a long-term solution to handle an unexpected schema drift. The good news is, Snowflake has a solution for this.

Snowflake tables can have their schema evolve automatically. According to its website, the platform supports two types of schema evolution:

- Automatically adding new columns

- Automatically dropping the NOT NULL constraint from columns that are missing in new data files

Sarah could apply this built-in capability from Snowflake to her raw table:

sqlCREATE OR REPLACE TABLE optimacart.raw.orders (

order_id VARCHAR,

customer_id VARCHAR,

order_date TIMESTAMP_NTZ,

total_amount DECIMAL(10,2),

status VARCHAR,

payment_method VARCHAR,

-- Metadata columns

_metadata$row_id VARCHAR,

_metadata$row_created_at TIMESTAMP_LTZ,

_cdc_operation VARCHAR,

_ingested_at TIMESTAMP_LTZ DEFAULT CURRENT_TIMESTAMP()

)

-- Schema evolution

ENABLE_SCHEMA_EVOLUTION = TRUE

CHANGE_TRACKING = TRUE;In addition, when working with semi-structured data like JSON or CSV, Snowflake allows column matching by using the MATCH_BY_COLUMN_NAME configuration.

Proactive Monitoring with Data Metric Functions (DMFs)

So far, Sarah had successfully addressed late data, duplicates, and schema drift. But those were all reactive measures. She needed proactive monitoring to prevent data quality issues in the long term and make her pipelines more reliable and robust.

In Snowflake, Data Metric Functions (DMFs) are a native way to define, measure, and monitor data quality at the table level. DMFs are basically SQL functions that you attach to tables. Snowflake then runs these functions on a pre-defined schedule and tracks the results over time. You can actively monitor them or set a threshold for certain metrics, and when a metric crosses a threshold, you get an alert.

Sarah needed more insights on her tables to prevent some of the recurring data quality issues. She studied DMFs intensively and decided to implement a full list of data quality metrics in her orders table. The DMFs would reflect the following aspects of data quality monitoring:

- Freshness - On-time arrival of data

sql-- ===========================================

-- DMF: Data Freshness

-- Measures the lag between order creation and ingestion

-- ===========================================

CREATE OR REPLACE DATA METRIC FUNCTION

optimacart.analytics.dmf_orders_freshness()

RETURNS NUMBER

AS

$$

-- Return average ingestion lag in minutes for last hour

SELECT

AVG(ingestion_lag_seconds) / 60.0

FROM optimacart.analytics.orders_gold

WHERE processed_at >= DATEADD('hour', -1, CURRENT_TIMESTAMP())

$$;

ALTER TABLE optimacart.analytics.orders_gold

ADD DATA METRIC FUNCTION optimacart.analytics.dmf_orders_freshness();- Completeness - Absolute row count

sql-- ===========================================

-- DMF: Row Count per Hour

-- Track volume trends

-- ===========================================

CREATE OR REPLACE DATA METRIC FUNCTION

optimacart.analytics.dmf_orders_hourly_volume()

RETURNS NUMBER

AS

$$

SELECT COUNT(*)

FROM optimacart.analytics.orders_gold

WHERE processed_at >= DATEADD('hour', -1, CURRENT_TIMESTAMP())

$$;

ALTER TABLE optimacart.analytics.orders_gold

ADD DATA METRIC FUNCTION optimacart.analytics.dmf_orders_hourly_volume();- Accuracy - NULL rate in critical columns

sql-- ===========================================

-- DMF: NULL Rate in Critical Fields

-- ===========================================

CREATE OR REPLACE DATA METRIC FUNCTION

optimacart.analytics.dmf_orders_null_rate()

RETURNS NUMBER

AS

$$

SELECT

(COUNT(CASE

WHEN customer_id IS NULL

OR total_amount IS NULL

OR order_date IS NULL

THEN 1

END) * 100.0 / NULLIF(COUNT(*), 0))

FROM optimacart.analytics.orders_gold

WHERE processed_at >= DATEADD('hour', -1, CURRENT_TIMESTAMP())

$$;

ALTER TABLE analytics.ecommerce.orders_fully_deduplicated

ADD DATA METRIC FUNCTION optimacart.analytics.dmf_orders_null_rate();Some other aspects include validity, consistency, uniqueness, and timeliness.

Handling Snowflake Ingestion Data Quality with Estuary

After a week of wrestling with Snowflake ingestion pipelines, the flash sale was finally over. Sarah learned many lessons about maintaining data quality, but after spending so much time working on this solution, she started looking for a simpler approach. That’s when she discovered Estuary, the right-time data movement platform.

With Estuary, when you’re moving data from sources like PostgreSQL to Snowflake, data quality is not something you deal with afterward. It’s built into the core architecture.

Here are Estuary’s key features that help ensure a high quality of data for Sarah’s ingestion pipelines:

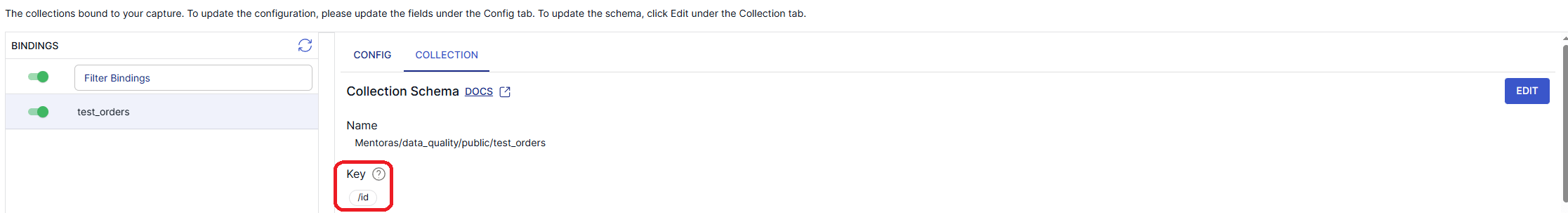

Exactly-once delivery: Estuary guarantees no duplicates. With exactly-once delivery, there’s no need for QUALIFY anymore in Snowflake’s target table. This Estuary feature is based on deterministic event processing with state management. For example, if Sarah sets the key for Estuary’s collections to order_id, Estuary will track the last seen event timestamp, last seen CDC log position, and the checksum of document content for each order. The duplicates will be automatically filtered before they land.

Collections with JSON schemas: The pre-defined JSON schemas provide a safety measure and act as a schema-as-a-contract model. This will allow Sarah to enforce the schema at capture time, and not when malformed data is already at the target table.

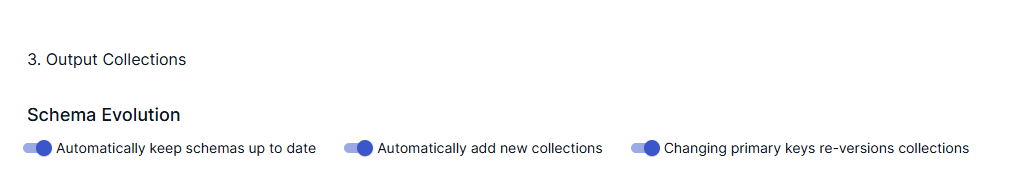

AutoDiscovery & schema evolution:When creating Estuary captures from PostgreSQL to Snowflake, Sarah can now choose to add an autoDiscover property to the capture. This feature will automatically and periodically check for any changes in the source. She can also enable evolveIncompatibleCollections, which applies any detected changes in the source table directly to the capture.

Event-time watermarking: With Snowflake’s TARGET_LAG, Sarah had to guess how late data might arrive based on ingestion time. Estuary, on the other hand, maintains watermarks per each time partition. In fact, it keeps the partition open for late-arriving data. By relying on event time as the source of truth, Sarah can be confident that events are always put in the right time bucket automatically. No more guessing with TARGET_LAG.

In-flight typescript transformation: Estuary offers the same philosophy to Data Metric Functions (DMFs) and beyond, which allows Sarah to proactively detect and mitigate data quality issues before data lands in the target table. For example, when moving from PostgreSQL public.orders to Snowflake, every single order can be validated before it enters the data warehouse. Sarah can create TypeScript transformations to check required fields, validate data ranges, verify business rules, and enforce referential integrity.

Here’s an example of a validation by an in-flight TypeScript transformation:

plaintext language-tsxexport class Derivation implements IDerivation {

// ─────────────────────────────────────────────────────────

// Transform: validateFromRaw

// Validates orders from the raw collection

// ─────────────────────────────────────────────────────────

validateFromRaw(source: SourceValidateFromRaw): Document[] {

const errors: string[] = [];

const warnings: string[] = [];

// ══════════════════════════════════════════════════════════

// Data Validation: Required Fields

// ══════════════════════════════════════════════════════════

if (!source.order_id || source.order_id.trim() === '') {

errors.push('Missing required field: order_id');

}

if (!source.customer_id || source.customer_id.trim() === '') {

errors.push('Missing required field: customer_id');

}

if (source.total_amount === undefined || source.total_amount === null) {

errors.push('Missing required field: total_amount');

}

if (!source.order_date) {

errors.push('Missing required field: order_date');

}

}

if (errors.length > 0) {

return [{

...source,

_validation_status: 'FAILED',

_validation_errors: errors,

_validated_at: new Date().toISOString(),

_source_document: source, // Keep full source for debugging

}];

} else {

// Valid documents are NOT published to error collection

return [];

}

}

}With Estuary, you can handle data quality issues closer to the source. If you apply the native capabilities mentioned above, the bad data will never reach the target table. Compared to Snowflake, Estuary provides a more flexible approach with less operational overhead approach, which makes it easier to maintain clean and reliable data.

Snowflake vs. Estuary for Data Quality During Ingestion

So far we have seen how Snowflake and Estuary offer some methodologies to combat data quality problems. In reality, it depends on your use case, the nature of your data, and your technical requirements to know which ones to apply.

Let’s compare them side by side to help you make an informed choice:

| Data Quality Issue | Snowflake | Estuary |

| Duplicates | • Manual deduplication required • A combination of QUALIFY and ROW_NUMBER()• Duplicates land first, then clean | • Exactly-once delivery guaranteed • Duplicates never reach destination • Only need to set collection key in YAML or via the UI |

| Late-arriving data | • Use of windows event-time processing • Dynamic tables with TARGET_LAG• Guessing game with a different lag per table | • Native, built-in • Automatic watermarking • Dynamically adapts to lateness |

| Schema drift | • Pipelines that break by default • New column rejected by Snowflake • Use of ENABLE_SCHEMA_EVOLUTION = TRUE | • Schema change handled automatically through AutoDiscovery • Periodic check for any modification in the data source |

| Data validation | • Build Data Metric Functions (DMFs) • Run checks on fixed schedule • Bad data potentially in data warehouse | • Bad data nowhere near data warehouse • In-flight transformation (with TypeScript or other languages) built to validate records |

Conclusion

Data quality monitoring is not a one-off issue. It’s an ongoing effort to keep your data output trustworthy. The closer we can monitor data quality to the source, the better.

Snowflake offers some native capabilities to detect and mitigate data quality issues in the ingestion phase. These include dynamic tables and TARGET_LAG for handling late-arriving data, deduplication with QUALIFY, anticipation of schema drift with schema evolution, and finally, Data Metric Functions (DMFs) for proactively monitoring your data pipelines.

With Estuary, tackling data quality issues becomes child’s play. Its exactly-once semantics handles duplicates, AutoDiscovery adapts to the schema change, and event-time marking prevents out-of-order data. Unlike with Snowflake, where you need to dig deep into documentation, Estuary offers you these capabilities out-of-the-box and ready to use.

Start building with Estuary today. Book a demo to explore how right-time data movement can support your growth.

FAQs

What are the most common data quality issues during Snowflake ingestion?

How does Estuary prevent duplicate data from reaching Snowflake?

About the author

Results-driven data professional with 7+ years of experience designing robust data platforms and AI solutions. Proven track record in building scalable pipelines, mentoring teams, and translating complex data into actionable insights. Fluent in Python, Spark, and other cloud technologies.