You can migrate MongoDB to MySQL in two main ways: use a CDC-powered pipeline for continuous sync, or export MongoDB collections to CSV and import them into MySQL for a one-time migration. For production workloads, Estuary is usually the better option because it can capture MongoDB changes continuously and materialize them into MySQL without repeated manual exports.

CSV export/import works for small, static datasets, but it becomes risky when MongoDB continues receiving inserts, updates, or deletes during migration. In this guide, we’ll compare both methods, explain where each one fits, and show how to move MongoDB data into MySQL with less manual effort.

Key Takeaways

You can migrate MongoDB to MySQL using an automated CDC pipeline or a manual CSV export/import process.

CSV export/import is suitable for small, one-time migrations where the data does not change during the move.

Estuary is a stronger fit for production use cases that need MongoDB and MySQL to stay continuously synchronized.

The hardest part of MongoDB to MySQL migration is usually schema mapping because MongoDB stores flexible BSON documents while MySQL requires relational tables.

Before migrating, teams should plan how to handle nested objects, arrays, optional fields, primary keys, deletes, and data validation.

Estuary helps teams build dependable MongoDB to MySQL pipelines without maintaining custom scripts, cron jobs, or complex streaming infrastructure.

MongoDB Overview

MongoDB is a modern, non-relational document database. It uses a document-oriented approach, which allows storing and retrieving data in documents instead of tables. Each document in MongoDB is an independent unit, representing hierarchical relationships within a single document.

MongoDB stores data in a JSON-like format called Binary Javascript Object Notation (BSON), making it easy for developers to work with data. This database is a go-to choice for organizations because of its high availability, high performance, and automatic scaling.

MongoDB offers many features to meet the demands of modern applications, including:

- Schema Design Flexibility: MongoDB’s schema-less approach, utilizing BSON, accommodates dynamic data structures, making it invaluable for applications with evolving requirements.

- Query Language: MongoDB offers a rich query language for manipulating and retrieving data. You can run searches to sort results, filter data, and even perform geospatial queries to locate data based on coordinates. MongoDB is also known for its ability to perform ad-hoc queries without any predefined schema or structure.

- Load Balancing: Horizontal scaling capabilities, like replication and sharding, allow MongoDB to perform extensive load balancing. The database can handle several concurrent read and write requests for the same data, ensuring data consistency. This ensures that every user has a consistent view and data accessibility.

MySQL Overview

MySQL is a widely used Relational Database Management System (RDBMS). The syntax and features of this database are similar to PostgreSQL and Oracle. Just like other relational databases, MySQL stores data in tables using rows and columns.

The structured nature of this database is suited for situations where data consistency, integrity, and reliability are crucial. It ranks among the most popular databases due to its robust security measures, transaction support, and impressive scalability.

Key features of MySQL include:

- ACID Compliance: MySQL follows the principles of Atomicity, Consistency, Isolation, and Durability (ACID), ensuring the dependability of database transactions even in the face of system failures. This reliability of MySQL makes it a good choice for applications like financial systems and e-commerce platforms that require data integrity.

- Support for Structured Query Language (SQL): SQL is a standardized language for maintaining and querying relational databases, and MySQL is known for its powerful implementation. It enables database users to perform various operations, such as data updates, retrieval, and other complex queries.

- Replication: MySQL offers database replication, allowing you to make several copies of your database. This feature is beneficial for various purposes, such as load balancing, fault tolerance, high availability, and scalability in distributed environments.

Before You Migrate: Plan Your MongoDB to MySQL Schema Mapping

Before moving data from MongoDB to MySQL, you need to decide how your MongoDB documents will map to relational MySQL tables. This is often the most important part of the migration because MongoDB and MySQL use very different data models.

MongoDB collections can contain nested documents, arrays, optional fields, and mixed data types. MySQL, on the other hand, requires a more structured schema with defined tables, columns, keys, and data types.

Use the table below to plan your migration before you start moving data.

| MongoDB pattern | MySQL mapping option | What to watch |

|---|---|---|

| Flat document fields | Store fields as columns in a single MySQL table | Make sure field types are consistent across documents |

| Nested objects | Flatten into columns or move to a child table | Avoid creating too many sparse columns |

| Arrays of values | Store as JSON, flatten into columns, or move to a child table | Choose based on how the data will be queried |

| Arrays of objects | Move to a separate child table with a foreign key | Preserve relationships to the parent record |

| Optional fields | Use nullable columns or default values | Prevent failed inserts when fields are missing |

| Mixed data types | Normalize values before loading into MySQL | Avoid type conversion errors |

MongoDB _id field | Use as a primary key or unique identifier | Preserve identity for updates and deduplication |

| Deletes | Configure delete handling in your sync method | Required if MySQL needs to reflect MongoDB deletes |

For simple one-time migrations, you may be able to flatten MongoDB documents manually before importing them into MySQL. For production pipelines, a CDC-based approach is usually better because it can keep MySQL updated as MongoDB changes.

How to Migrate from MongoDB to MySQL: 2 Methods

You can move data from MongoDB to MySQL using one of the following two methods:

- Method 1: Migrate from MongoDB to MySQL Using Estuary (ETL Tool)

- Method 2: Convert MongoDB to MySQL Using CSV Export and Import

Method 1: Using Estuary to Migrate MongoDB to MySQL

Estuary helps teams build real-time MongoDB to MySQL data pipelines using CDC, while also supporting batch data movement when real-time sync is not required.

For MongoDB to MySQL migration, Estuary can capture data through its MongoDB connector and materialize it into MySQL through its MySQL materialization connector. This is especially useful when you need more than a one-time export, such as keeping MySQL updated as MongoDB continues receiving new records, updates, or deletes.

Use Estuary when you want to:

- Move MongoDB data into MySQL without writing custom migration scripts.

- Keep MySQL synchronized with MongoDB in real time.

- Reduce downtime during migration.

- Avoid repeatedly exporting and importing CSV files.

- Build a dependable data pipeline with built-in connectors.

- Support both initial backfills and ongoing change capture.

- Handle real-time and batch data movement in one platform.

Prerequisites

Ensure you complete the prerequisites before starting the migration process. Refer to Estuary’s documentation for more information:

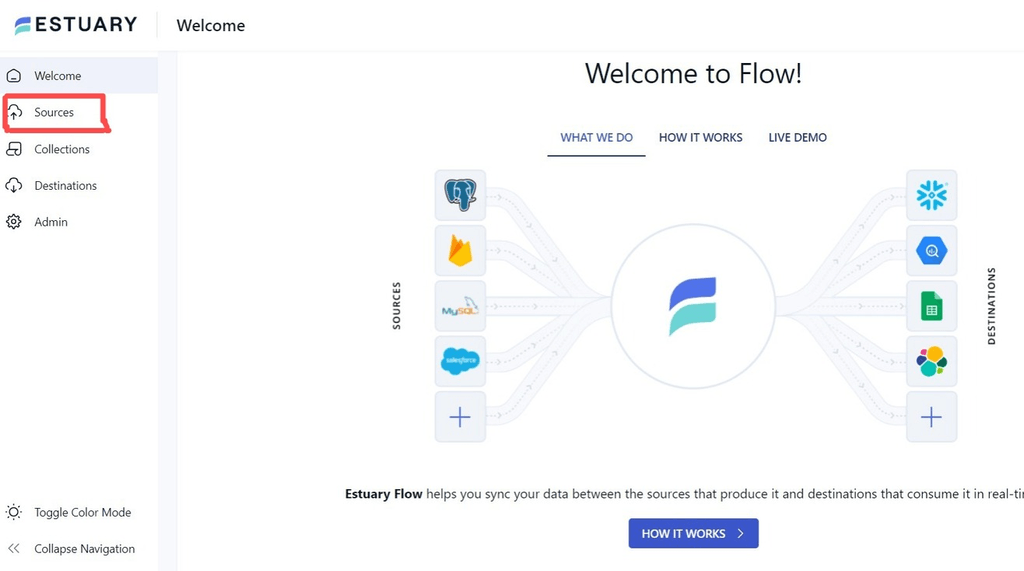

Step 1: Sign in to your Estuary account. You can register for free if you don't already have an account.

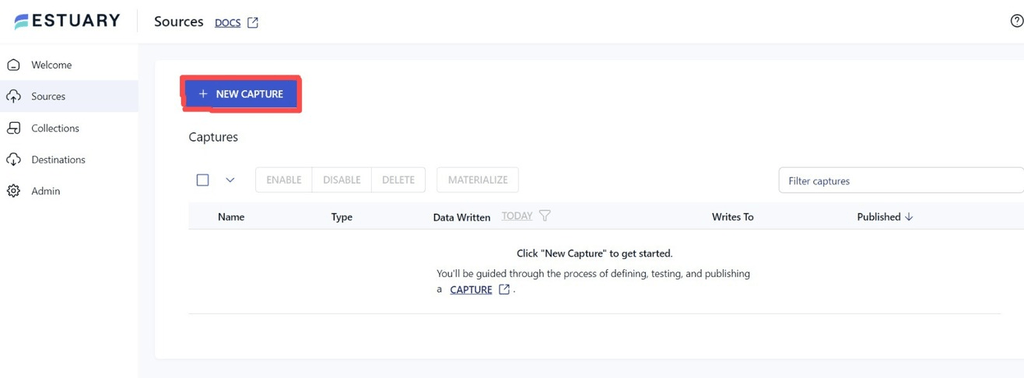

Step 2: Set Up MongoDB as a Source

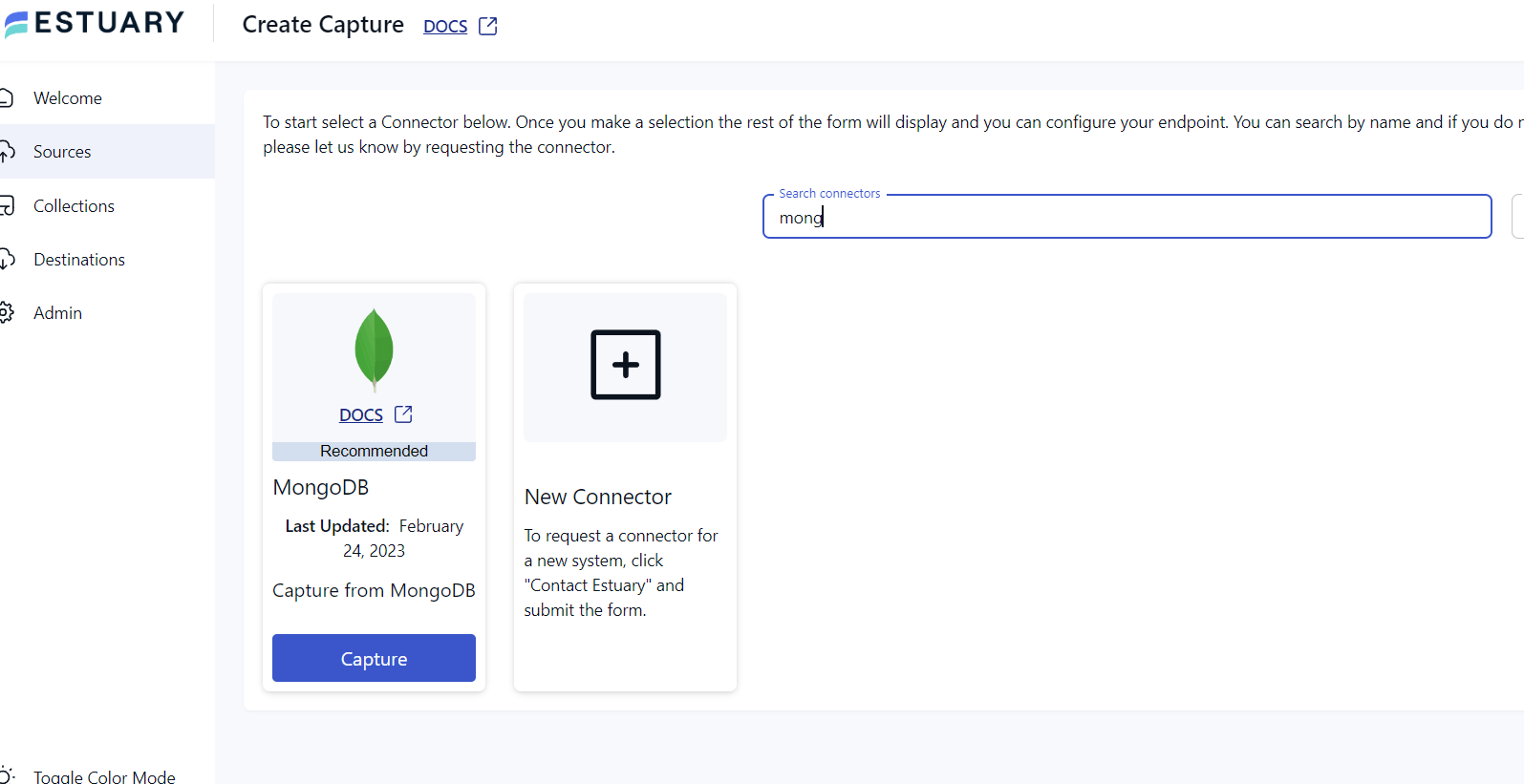

- On the left-hand side of the Estuary dashboard, select Sources.

- Click the + NEW CAPTURE button.

- Use the Search connectors box to find the MongoDB connector and select the connector’s Capture option to configure the source end of the data pipeline.

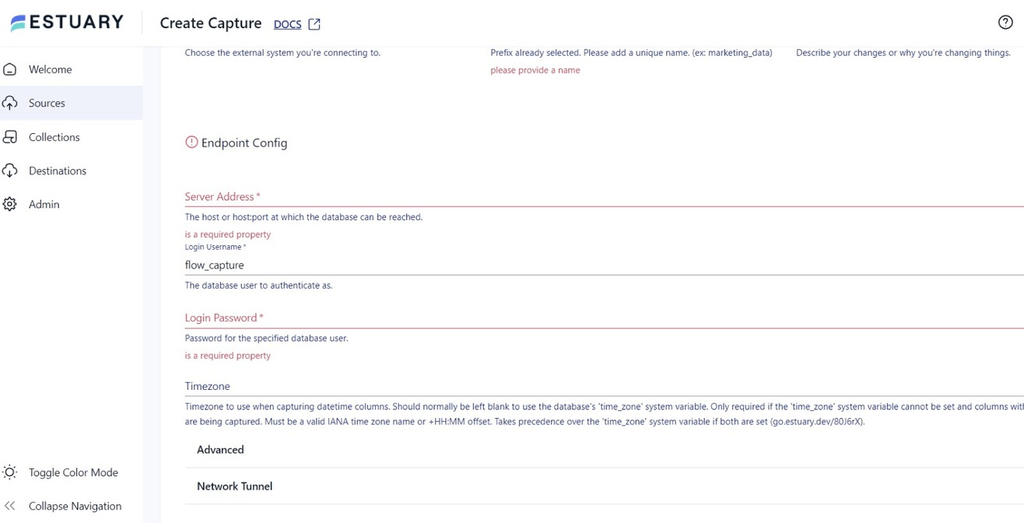

- Fill in the Capture details and give it a unique Name. Provide the required information in the Endpoint Config, such as Username, Password, Server Address, and Timezone. Then click NEXT.

- Click the SAVE AND PUBLISH button to save the source configuration.

Step 3: Set Up MySQL as a Destination

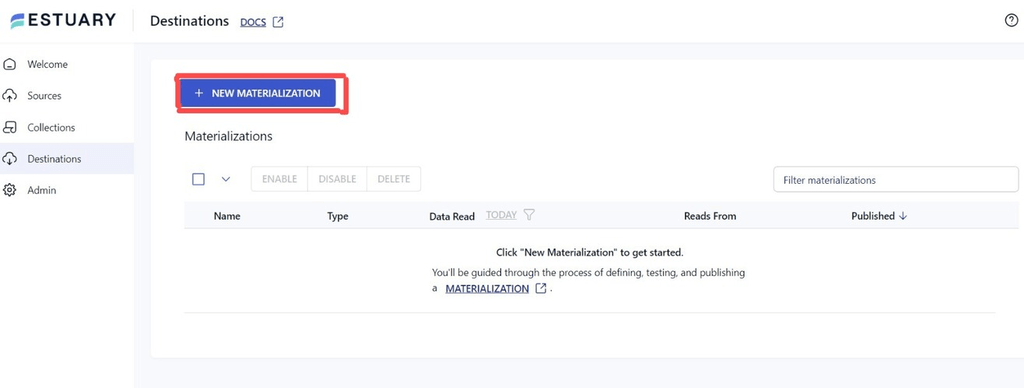

- On the left-hand side of the Estuary dashboard, select Destinations.

- Click the + NEW MATERIALIZATION button.

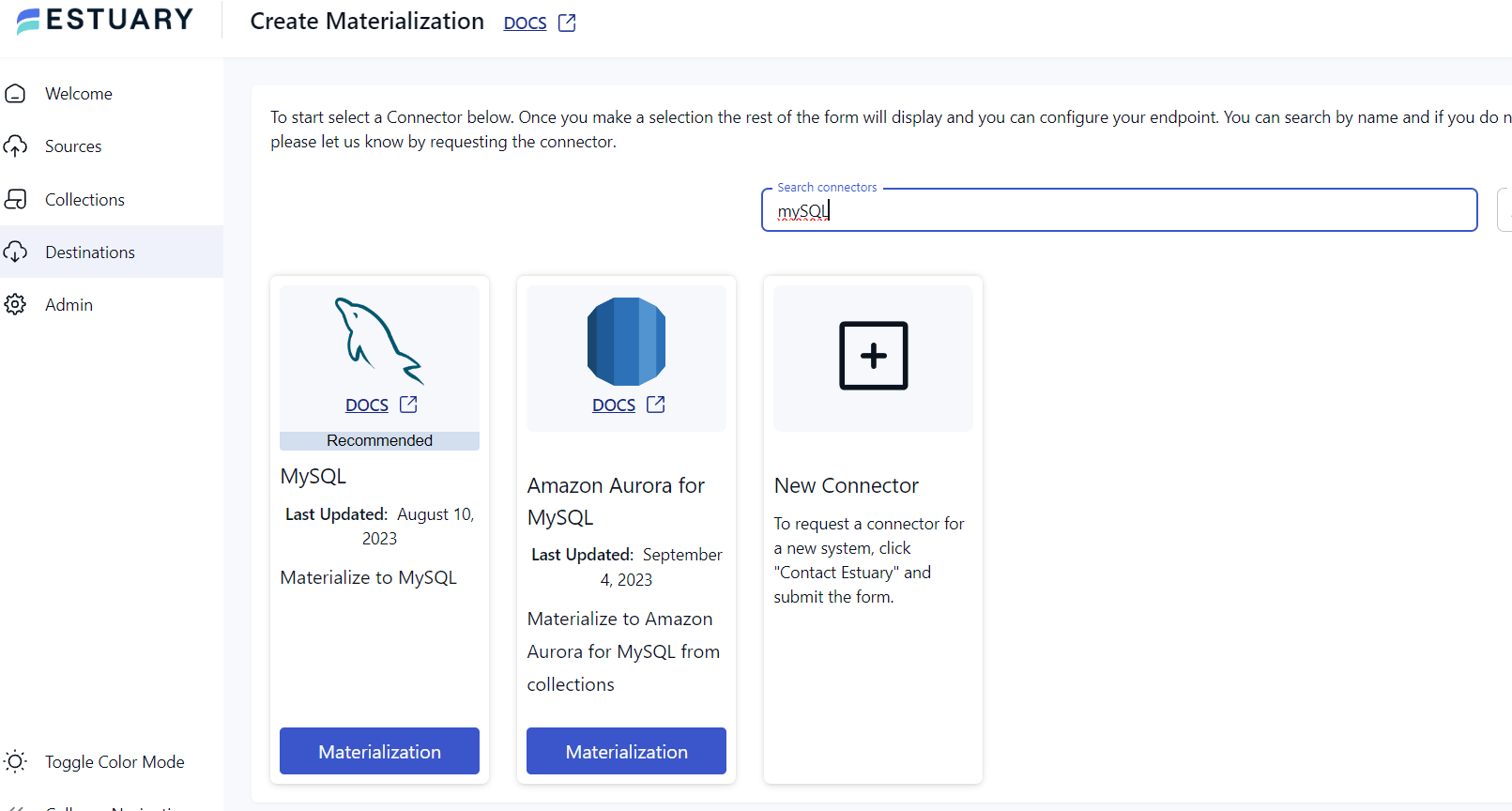

- Use the Search connectors box to find MySQL, then select the MySQL connector’s Materialization option.

- Fill in the materialization details and give it a unique name. In the endpoint configuration, provide the required MySQL connection details, such as:

- Host

- Port

- Database name

- Username

- Password

- SSL or network settings, if required

- If the data extracted from MongoDB wasn't filled in automatically, add the data from the Source Collections section.

- Review the generated table mappings and confirm that MongoDB fields are mapped correctly to MySQL columns. Pay special attention to primary keys, nested fields, arrays, and optional fields.

- To finish the configuration process, click NEXT, then SAVE AND PUBLISH.

After publishing, Estuary will begin moving data from MongoDB into MySQL based on your configuration.

Benefits of Using Estuary for MongoDB to MySQL Migration

Estuary offers several advantages for MongoDB to MySQL migration:

- Real-Time Data Sync: Estuary can capture changes from MongoDB and materialize them into MySQL, helping keep the destination database current after the initial migration. This is useful for production systems where MongoDB continues to receive new inserts, updates, and deletes.

- Lower Manual Effort: With Estuary, you do not need to manually export CSV files, create repeated import jobs, or maintain custom scripts. Once the pipeline is configured, data movement can continue automatically.

- Support for Initial Loads and Ongoing Changes: A MongoDB to MySQL migration often requires both a historical backfill and ongoing sync. Estuary can help support both patterns in one pipeline.

- Better Fit for Production Workloads: Manual migration methods can work for small datasets, but they become harder to manage as data volume, update frequency, and schema complexity increase. Estuary is better suited for production workloads where reliability, repeatability, and low-latency movement matter.

- Scalable: Estuary’s Change Data Capture (CDC) feature supports active workloads from databases of any size, providing an impressive throughput of 7 GB/s. This capability allows Estuary to manage massive data volumes efficiently.

- Pre-built Connectors: With an extensive library of pre-built connectors for popular data sources and destinations, Estuary simplifies data migration tasks. These pre-built connectors speed up integration and guarantee a hassle-free database migration.

When Should You Use Estuary for MongoDB to MySQL?

Use Estuary when you need MongoDB and MySQL to stay synchronized after the initial migration. Estuary captures changes from MongoDB and materializes them into MySQL, so inserts, updates, and deletes can continue flowing without repeated CSV exports or custom scripts.

Estuary is a strong fit when:

- You need real-time MongoDB to MySQL replication.

- Your MongoDB collections change frequently.

- You want to reduce migration downtime.

- You need a no-code or low-code setup.

- You want to support both real-time and batch data movement.

- You want predictable data movement without maintaining Kafka, scripts, cron jobs, or custom ETL jobs.

- You need a dependable data pipeline for analytics, reporting, or operational use cases.

CSV export/import can move MongoDB data into MySQL once, but it does not keep MySQL updated as MongoDB changes. Estuary is better suited for ongoing synchronization.

Method 2: Using CSV File Export/Import to Convert MongoDB to MySQL

The CSV export/import method involves extracting data from MongoDB into a CSV file and loading that file into a MySQL table. This approach can work for small, one-time migrations where the data is mostly flat and does not change during the migration window.

However, CSV export/import is not ideal for production systems that need ongoing synchronization. It does not automatically capture new inserts, updates, or deletes after the export is complete. It can also require extra work when MongoDB documents contain nested objects, arrays, optional fields, or inconsistent data types.

Use this method when:

- You only need a one-time migration.

- Your MongoDB collection is small or medium-sized.

- Your data is relatively flat.

- You can tolerate manual schema mapping.

- The source data will not change significantly during the export/import process.

Warning: CSV export/import is best for one-time migrations of small, mostly flat collections. It is not ideal for production systems where MongoDB continues receiving writes during migration. If your application needs MySQL to stay current after the initial load, use a CDC-based method instead. Otherwise, your MySQL database may become stale as soon as new records, updates, or deletes occur in MongoDB.

Step 1: Extract Data from MongoDB to a CSV File

To extract the data, use the mongoexport command on an existing MongoDB collection. For example:

plaintextmongoexport --host localhost --db librarydb --collection books --type=csv --out books.csv --fields book_name,genre,author_name, page_count- librarydb is the database name

- books is the collection name

- books.csv is the target CSV file that contains the data stored in the MongoDB database

- fields must have the list of fields you want to export from your database collection

Step 2: Create a Table in MySQL to Store the MongoDB Data

Use the CREATE TABLE command to create a table in MySQL and structure it accordingly. For example:

plaintextCREATE TABLE books

(id INT(6) UNSIGNED AUTO_INCREMENT PRIMARY KEY,

bookname VARCHAR(30) NOT NULL,

genre VARCHAR(30) NOT NULL,

authorname VARCHAR(30) NOT NULL,

pagecount VARCHAR(30) NOT NULL);This command creates a table named books with the fields (column name) bookname, genre, authorname, and pagecount.

Step 3: Load the CSV File to MySQL

To complete the data migration, load the extracted MongoDB data into the newly created MySQL table. For example:

plaintextLOAD DATA LOCAL INFILE 'books.csv'

INTO TABLE books

FIELDS TERMINATED BY ','

ENCLOSED BY '"'

LINES TERMINATED BY '\n'

(book_name,genre,author_name, page_count)This command loads the data in a CSV file called books.csv and formats it for readability.

These steps will successfully complete the process of moving data from MongoDB to MySQL using CSV files.

Limitations of Using the CSV Export/Import Method

While the CSV export/import method for migrating data from MongoDB to MySQL is a viable option, it comes with limitations, including:

- Time-consuming: Manually extracting data from MongoDB and creating a MySQL schema can be time-consuming, especially for large datasets that require modification to fit the new structure. This becomes even more challenging because applications need to remain operational without significant downtime during the transfer process.

- Error-Prone: Manual data migration can introduce errors in data transformation, extraction, and loading processes. To avoid any errors, data must be carefully mapped from MongoDB's flexible schema-less structure to MySQL's structured schema format.

- Effort-Intensive: The manual approach for migrating databases is repetitive and effort-intensive. You have to keep updating the CSV file, performing the same steps repeatedly as the data gets updated.

MongoDB to MySQL Migration Methods Compared

| Method | Best for | Handles ongoing changes? | Downtime risk | Technical effort | Recommended for production? |

|---|---|---|---|---|---|

| Estuary CDC pipeline | Continuous sync, analytics, operational reporting, and production migration | Yes | Low | Low to medium | Yes |

| CSV export/import | Small one-time migrations or test datasets | No | Medium to high | Medium | Usually no |

| Custom scripts | Highly customized migration logic | Only if built manually | Medium | High | Sometimes |

| Debezium and Kafka pipeline | Engineering-heavy CDC architecture | Yes | Low | High | Yes, if your team can operate Kafka |

Common MongoDB to MySQL Migration Mistakes

Avoid these common mistakes when moving data from MongoDB to MySQL.

Treating MongoDB and MySQL Schemas as Equivalent

MongoDB documents do not always map cleanly to MySQL tables. Plan your target schema before migrating.

Ignoring Nested Objects and Arrays

Nested documents and arrays often require special handling. You may need to flatten fields, create child tables, or store some structures as JSON depending on your query requirements.

Not Preserving Primary Keys

If you do not preserve MongoDB identifiers correctly, it becomes harder to update records, deduplicate data, or maintain relationships in MySQL.

Assuming CSV Handles Ongoing Changes

CSV export/import is a point-in-time method. It does not automatically capture changes after the export is complete.

Skipping Data Validation

Always compare record counts, sample records, data types, null values, and update behavior before using the migrated data in production.

Forgetting About Deletes

If records are deleted in MongoDB, decide whether those deletes should also be reflected in MySQL. This is especially important for continuous sync pipelines.

The Takeaway

Migrating from MongoDB to MySQL can help teams move document-based data into a relational database for structured reporting, analytics, application workflows, or compliance use cases. The best migration method depends on whether you need a one-time transfer or an ongoing sync.

CSV export/import can work for small, static datasets, but it requires manual schema mapping and does not keep MySQL updated after the export. This makes it less suitable for production systems where MongoDB continues to change.

Estuary is a better fit when you need a dependable MongoDB to MySQL pipeline that supports both initial loading and ongoing synchronization. It helps teams move data in real time when low latency matters, while also supporting batch movement for workloads that do not require continuous sync.

FAQs

Is MongoDB to MySQL migration possible without coding?

Does Estuary support continuous sync between MongoDB and MySQL?

Is CSV export enough for MongoDB to MySQL migration?

About the author

Jeffrey is a data engineering professional with over 15 years of experience, helping early-stage data companies scale by combining technical expertise with growth-focused strategies. His writing shares practical insights on data systems and efficient scaling.