LOVESPACE, a UK collection and storage company, ran their operations and analytics on the same SQL Server database. Every time someone in the business pulled a report, queries piled up on the operational system, and warehouse teams had to ask analysts to stop running queries so the system could keep up. Their data was current, but only because operations were paying for it.

This is the bind most data teams know well. Every downstream system your business relies on, whether it's a dashboard, a forecasting model, or a data warehouse, is only as useful as the data being fed to it. And that data is usually a little bit wrong, because the source has already recorded what changed but the downstream copy hasn't caught up yet. A customer moved, an order shipped, a payment failed. The standard fix is to schedule a batch job that re-copies tables wholesale every few hours and hope nothing important happens in between.

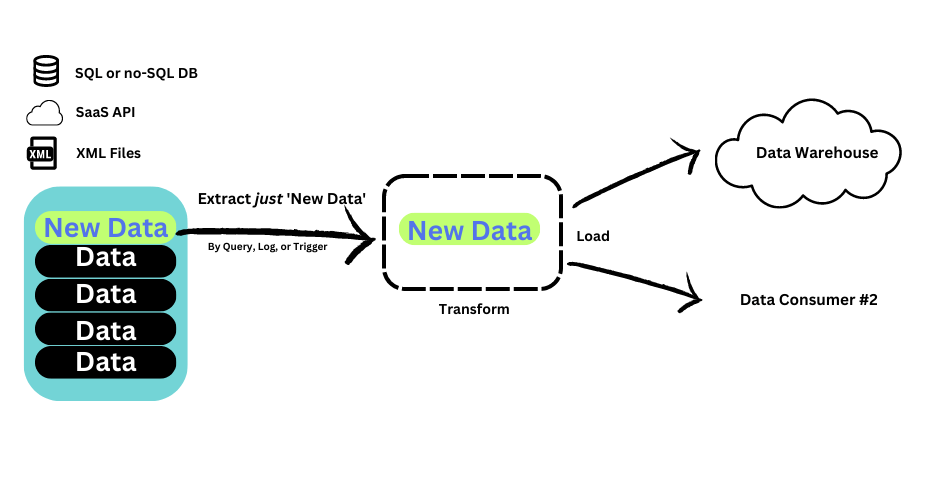

Change data capture (CDC) is the alternative. Instead of re-copying your entire database, CDC captures only what actually changed at the source and moves those changes to where they need to go in real time.

This guide explains what CDC is, how it works, why it matters, and common uses for real-time data movement.

Key Takeaways

CDC moves committed change events (inserts, updates, deletes), not full tables.

Reliable CDC requires ordering guarantees, retry handling, and explicit delete semantics to avoid silent drift at the destination.

Log-based CDC is usually best for low-latency, high-change systems; polling and triggers have clear tradeoffs.

Most “CDC is slow” issues come from destination apply and backpressure, not capture.

Production CDC requires snapshots, backfills, recovery, and schema-change policies to avoid rebuilds and silent drift.

What is change data capture (CDC)?

To start, let’s look at what change data capture actually is and how the different implementations compare, because the CDC method you choose directly affects both the effort required and the benefits you get.

At its core, change data capture continuously captures committed inserts, updates, and deletes in a source system, whether that's a database, a data warehouse, or a SaaS platform, and delivers them downstream as an ordered stream of change events. Instead of re-copying entire tables on a schedule, CDC only ships what changed, which keeps analytics platforms, replicas, and event-driven applications in sync with minimal staleness.

CDC has always mattered where stale data has a direct cost, like fraud detection, inventory sync, and financial reconciliation. What's changed is how many systems now fall into that category. Operational databases feed ML feature stores, and data that once lived only in a warehouse now drives real-time decisions. When downstream systems passively consume reports, batch lag is a nuisance. When they act on data as it arrives, it's a correctness problem.

What are the common change data capture methods?

There are three common ways to implement CDC, and they’re not interchangeable. While each method answers the same question ("what changed?"), they differ dramatically in latency, source impact, correctness (especially deletes), and operational complexity.

Log-based change data capture (CDC)

Log-based CDC reads directly from the database's transaction log, such as the PostgreSQL WAL or the MySQL binlog. The log is already being written for replication and recovery, so capturing from it adds almost no load to the source. Log-based CDC delivers the lowest latency, captures every committed change including deletes, and preserves the exact order of transactions. This is the approach used in most production systems where freshness and source performance both matter.

What log-based CDC is best at:

- Near real-time replication into warehouses, lakes, and operational targets

- High-change workloads where polling would create unacceptable source load

- Systems where correctness depends on ordering and reliable recovery

Where log-based CDC breaks down:

- Permissions and access: Reading logs often requires elevated privileges and careful security review

- Operational requirements: If consumers fall behind and the logs they still need roll over, you may need to re-snapshot or re-bootstrap

- Schema changes: DDL and type changes can break consumers if not handled intentionally

- Replication slot misconfiguration: Postgres replication slots need careful configuration and monitoring; a slot whose consumer stops advancing can force the server to retain WAL, and the resulting WAL bloat can exhaust disk space and disrupt your pipelines
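To make the log-retention failure mode above concrete, here is a minimal Python sketch that models a transaction log as a bounded, append-only list of positioned entries. `TransactionLog`, `LogEntry`, and the integer `lsn` positions are hypothetical stand-ins for a real WAL or binlog, not any database's or connector's API. The point it illustrates is narrow: a consumer can only resume from a saved position while that position is still retained.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass(frozen=True)
class LogEntry:
    lsn: int            # hypothetical monotonically increasing log position
    op: str             # "insert", "update", or "delete"
    key: int
    row: dict | None    # None for deletes


class TransactionLog:
    """Toy append-only log that only retains the most recent `retention` entries."""

    def __init__(self, retention: int):
        self.retention = retention
        self.entries: list[LogEntry] = []
        self.next_lsn = 1

    def append(self, op: str, key: int, row: dict | None = None) -> None:
        self.entries.append(LogEntry(self.next_lsn, op, key, row))
        self.next_lsn += 1
        # Once retention is exceeded, the oldest entry rolls off and is gone for good.
        if len(self.entries) > self.retention:
            self.entries.pop(0)

    def read_from(self, lsn: int) -> list[LogEntry]:
        """Return entries at or after `lsn`, or fail if that position has rolled off."""
        oldest = self.entries[0].lsn if self.entries else self.next_lsn
        if lsn < oldest:
            raise LookupError(
                f"position {lsn} no longer retained (oldest is {oldest}); re-snapshot required"
            )
        return [e for e in self.entries if e.lsn >= lsn]


log = TransactionLog(retention=3)
for i in range(1, 6):
    log.append("insert", key=i, row={"id": i})

# A consumer that keeps up resumes cleanly from its saved position...
print([e.lsn for e in log.read_from(3)])  # [3, 4, 5]

# ...but one that checkpointed at position 2 and then fell behind cannot.
try:
    log.read_from(2)
except LookupError as err:
    print(err)
```

In a real deployment, the equivalent of the `LookupError` here is the moment a pipeline has to fall back to a fresh snapshot, which is why log retention and consumer lag need monitoring.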

Trigger-based change data capture (CDC)

Trigger-based CDC captures changes at write time by reacting to change events as they occur. SaaS systems may send webhooks, or you can set up database triggers that fire on insert, update, and delete operations and write change records to a shadow table. It works on systems where log access is restricted, but every write to the source now incurs the cost of an additional write, which can degrade performance on busy tables.

What trigger-based CDC is best at:

- Capturing all operations including deletes without requiring log access

- Attaching extra metadata at write time (who/what changed it, application context)

- Smaller systems where write overhead is acceptable and tightly controlled

Where trigger-based CDC breaks down:

- Write amplification: Triggers add extra writes on every change, which can materially affect high-throughput OLTP systems

- Operational complexity: Managing triggers across many tables and schema changes becomes brittle over time

- Failure modes: If the trigger/audit mechanism fails or backlogs, it can create cascading write issues
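As an illustration of the shadow-table pattern, the sketch below uses SQLite through Python's built-in `sqlite3` module only because it runs anywhere; the `customers` and `customers_changes` tables and the trigger names are made up for the example, and Postgres, MySQL, or SQL Server each use their own trigger syntax. Each application write also produces a second write into the change table, which is the write amplification described above.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.executescript(
    """
    CREATE TABLE customers (id INTEGER PRIMARY KEY, email TEXT NOT NULL);

    -- Shadow table that receives one row per change, in write order.
    CREATE TABLE customers_changes (
        change_id INTEGER PRIMARY KEY AUTOINCREMENT,
        op TEXT NOT NULL,
        id INTEGER NOT NULL,
        email TEXT
    );

    CREATE TRIGGER customers_ins AFTER INSERT ON customers BEGIN
        INSERT INTO customers_changes (op, id, email) VALUES ('insert', NEW.id, NEW.email);
    END;
    CREATE TRIGGER customers_upd AFTER UPDATE ON customers BEGIN
        INSERT INTO customers_changes (op, id, email) VALUES ('update', NEW.id, NEW.email);
    END;
    -- Deletes are captured too, using the OLD row image.
    CREATE TRIGGER customers_del AFTER DELETE ON customers BEGIN
        INSERT INTO customers_changes (op, id, email) VALUES ('delete', OLD.id, NULL);
    END;
    """
)

# Each application write below now costs a second write into customers_changes.
with conn:
    conn.execute("INSERT INTO customers (id, email) VALUES (1, 'a@example.com')")
    conn.execute("UPDATE customers SET email = 'b@example.com' WHERE id = 1")
    conn.execute("DELETE FROM customers WHERE id = 1")

changes = conn.execute(
    "SELECT op, id, email FROM customers_changes ORDER BY change_id"
).fetchall()
print(changes)
# [('insert', 1, 'a@example.com'), ('update', 1, 'b@example.com'), ('delete', 1, None)]
```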

Query-based change data capture (CDC)

Query-based (or polling) CDC runs scheduled queries against the source, typically using an updated_at column or a high-water-mark cursor, and pulls anything new since the last poll. It is the simplest approach to set up, but it adds query load to the source, misses hard deletes unless you build extra logic around soft-delete columns, and its latency is bounded by the polling interval.

What query-based CDC is best at:

- Simple incremental loads where “near real-time” is not required

- Low-change tables where polling won’t compete with production traffic

- Situations where you only have read access and can’t access logs

Where query-based CDC breaks down:

- Deletes: Hard deletes are invisible unless you implement soft deletes or maintain a separate delete log

- Latency: Bounded by your polling interval (5 minutes means up to 5 minutes of staleness)

- Correctness under retries: “last_run_time” style logic can miss updates when clocks are skewed or timestamps aren’t reliably updated

- Source impact: Polling large tables/indexes frequently can create contention, especially as data grows
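The sketch below shows high-water-mark polling against an in-memory SQLite table (via Python's `sqlite3`), under the simplifying assumption that `updated_at` is an integer tick rather than a wall-clock timestamp. The `orders` table and the `poll` helper are hypothetical. It demonstrates the delete blind spot: the hard delete of order 2 leaves no row for the poller to find.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.execute(
    "CREATE TABLE orders (id INTEGER PRIMARY KEY, status TEXT, updated_at INTEGER NOT NULL)"
)


def poll(conn, high_water_mark):
    """Fetch rows changed after the high-water mark and return them with the new mark."""
    rows = conn.execute(
        "SELECT id, status, updated_at FROM orders WHERE updated_at > ? ORDER BY updated_at",
        (high_water_mark,),
    ).fetchall()
    new_mark = rows[-1][2] if rows else high_water_mark
    return rows, new_mark


with conn:
    conn.execute("INSERT INTO orders VALUES (1, 'placed', 1)")
    conn.execute("INSERT INTO orders VALUES (2, 'placed', 2)")

first, mark = poll(conn, high_water_mark=0)

with conn:
    conn.execute("UPDATE orders SET status = 'shipped', updated_at = 3 WHERE id = 1")
    conn.execute("DELETE FROM orders WHERE id = 2")  # leaves nothing for the poller to see

second, mark = poll(conn, mark)
print(first)   # [(1, 'placed', 1), (2, 'placed', 2)]
print(second)  # [(1, 'shipped', 3)]  (the delete of order 2 is never observed)
```

Comparing with `>` on a timestamp also skips any row that commits late with an `updated_at` at or below the saved mark, which is how clock skew and long-running transactions turn into silently missed updates.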

Many data teams hesitate to adopt CDC because they assume any continuous capture process will hammer the source database, which is exactly the problem LOVESPACE was trying to escape. That concern depends entirely on the type of CDC. Query-based CDC does add load, since it polls tables on an interval. Log-based CDC works differently. It reads from the database's transaction log, the same log the database writes to anyway for replication and recovery, so the source system barely notices it's there.

That makes log-based CDC one of the most efficient ways to move data in real time: it only moves what changes, it does so without competing with the application for resources, and it provides a complete change record.

Important nuance: Log-based CDC usually has minimal additional load compared to polling CDC, but it’s not “zero.” It still requires correct configuration, monitoring, and enough log retention to avoid falling behind.

How does change data capture work?

Change data capture (CDC) works by turning database (or application) changes into an ordered stream of change events, then reliably applying those events to one or more downstream systems so they stay synchronized with the source. CDC is powerful because it ships committed changes in order and applies them safely.

That's what enables low-latency sync without constantly re-reading the source system, and it's why strong CDC implementations invest heavily in ordering, checkpointing, recovery, and delete handling.

At a systems level, CDC has three stages: detect → stream → apply.

Step 1: Detect changes in the source

CDC starts by identifying what changed in your source system. The detection mechanism depends on the CDC method and the technology you're capturing from:

- Log-based CDC (most common at scale): The CDC connector reads the database's transaction log (for example: PostgreSQL WAL, MySQL binlog, SQL Server log). It observes changes at commit-time, which is why it can preserve order and reliably capture inserts, updates, and deletes.

- Query-based CDC: A scheduled query repeatedly checks for rows that changed since the last run (usually requiring an updated_at timestamp or version column).

- Trigger-based CDC: Triggers write changes into an audit table whenever inserts/updates/deletes occur.

For most production pipelines where correctness and low latency matter, log-based CDC is preferred because it avoids repeated table scans and captures the same sequence of changes the database uses for recovery.

Step 2: Convert changes into an ordered change stream

Once changes are detected, CDC converts them into events and publishes them as a stream your pipeline can process and transport. Each event includes record identity, operation type, and ordering metadata. That ordering metadata is what makes CDC operationally safe: it allows consumers to checkpoint progress ("processed up to X") and resume correctly after restarts without guessing.
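A minimal sketch of the event shape and the checkpoint-and-resume loop described above follows. `ChangeEvent`, `FileCheckpoint`, and `process` are hypothetical names, and writing the checkpoint to a local JSON file is a simplification; production systems usually store positions durably and, where possible, in the same transaction as the applied changes.

```python
from __future__ import annotations

import json
import tempfile
from dataclasses import dataclass
from pathlib import Path


@dataclass(frozen=True)
class ChangeEvent:
    key: dict             # record identity, e.g. {"id": 1}
    op: str               # operation type: "insert", "update", or "delete"
    position: int         # ordering metadata, e.g. a log sequence number
    after: dict | None    # new row image; None for deletes


class FileCheckpoint:
    """Persists the last fully processed position so a restart resumes from there."""

    def __init__(self, path: Path):
        self.path = path

    def load(self) -> int:
        if not self.path.exists():
            return 0
        return json.loads(self.path.read_text())["position"]

    def save(self, position: int) -> None:
        self.path.write_text(json.dumps({"position": position}))


def process(events, checkpoint: FileCheckpoint, handle) -> None:
    """Handle events in stream order, skipping anything at or before the checkpoint."""
    done = checkpoint.load()
    for event in events:
        if event.position <= done:
            continue  # already handled before a restart
        handle(event)
        # Saving after handling means a crash in between re-delivers this event,
        # so `handle` should be idempotent (at-least-once processing).
        checkpoint.save(event.position)


stream = [
    ChangeEvent({"id": 1}, "insert", 101, {"id": 1, "email": "a@example.com"}),
    ChangeEvent({"id": 1}, "update", 102, {"id": 1, "email": "b@example.com"}),
    ChangeEvent({"id": 1}, "delete", 103, None),
]

seen = []
with tempfile.TemporaryDirectory() as tmp:
    ckpt = FileCheckpoint(Path(tmp) / "checkpoint.json")
    process(stream[:2], ckpt, seen.append)  # first run stops after position 102
    process(stream, ckpt, seen.append)      # restart re-reads the stream from the start
print([e.position for e in seen])  # [101, 102, 103]
```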

If your pipeline also does transformations (filtering fields, standardizing types, masking PII, computing derived fields), this is typically done on the stream before delivery, not by re-querying the source.
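For example, filtering fields and masking PII can be a pure function applied to each event in flight. This is a hypothetical sketch: the field names, the `MASKING_KEY` placeholder, and the choice of an HMAC-SHA256 token (stable, so masked values still join, but not readable without the key) are assumptions, not a recommendation for any specific compliance regime.

```python
import hashlib
import hmac

# Hypothetical placeholder; in practice load this from a secret store, never hard-code it.
MASKING_KEY = b"replace-with-a-real-secret"


def mask(value: str) -> str:
    """Replace a PII value with a keyed hash: stable for joins, not readable downstream."""
    digest = hmac.new(MASKING_KEY, value.encode("utf-8"), hashlib.sha256).hexdigest()
    return "hmac:" + digest[:16]


def transform(event: dict, keep_fields=("id", "email", "status")) -> dict:
    """Filter and mask fields on the event in flight; the source is never re-queried."""
    after = event.get("after")
    if after is None:  # deletes carry no row image, so pass them through unchanged
        return event
    row = {k: v for k, v in after.items() if k in keep_fields}
    if row.get("email") is not None:
        row["email"] = mask(row["email"])
    return {**event, "after": row}  # return a new event rather than mutating the input


event = {
    "op": "update",
    "position": 102,
    "after": {"id": 1, "email": "a@example.com", "status": "active", "ssn": "000-00-0000"},
}
out = transform(event)
print(sorted(out["after"]))  # ['email', 'id', 'status']
```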

Step 3: Apply changes to targets (warehouse, lake, search, services)

Finally, the pipeline applies those CDC events to destinations so they reflect the latest source state. The apply strategy depends on the target and intended use:

- Warehouses / OLAP (Snowflake, BigQuery, Redshift, Databricks): Events are often applied using merge/upsert logic keyed on the primary key. Deletes must be mapped explicitly (hard delete or soft delete).

- Operational databases / indexes (Postgres, Elasticsearch, Redis, Firestore): Events usually become upserts and deletes applied directly to the target system.

- Event streaming platforms (Kafka, Kinesis): CDC events are published as topics/streams for multiple consumers.
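The merge/upsert strategy with explicit delete mapping can be sketched with a Python dict standing in for a destination table keyed on the primary key. This is an illustrative model, not a warehouse `MERGE` implementation: the `_position` and `_deleted` bookkeeping columns and `apply_events` are hypothetical. Tracking the last applied position per row lets the apply step ignore a redelivered duplicate instead of overwriting newer state.

```python
def apply_events(table, events, soft_delete=False):
    """Merge CDC events into `table`, a dict keyed by primary key.

    Each stored row remembers the position of the last event applied to it, so a
    duplicate or out-of-date event for that key is skipped rather than applied.
    """
    for ev in events:
        key, op, pos = ev["key"], ev["op"], ev["position"]
        current = table.get(key)
        if current is not None and current["_position"] >= pos:
            continue  # already reflected in the target
        if op == "delete":
            if soft_delete:
                # Keep the row, flagged as deleted, so its position is preserved.
                table[key] = {**(current or {}), "_deleted": True, "_position": pos}
            else:
                table.pop(key, None)
        elif op in ("insert", "update"):
            table[key] = {**ev["after"], "_deleted": False, "_position": pos}
        else:
            raise ValueError(f"unknown op: {op!r}")
    return table


events = [
    {"key": 1, "op": "insert", "position": 1, "after": {"id": 1, "qty": 5}},
    {"key": 1, "op": "update", "position": 2, "after": {"id": 1, "qty": 3}},
    {"key": 2, "op": "insert", "position": 3, "after": {"id": 2, "qty": 9}},
    {"key": 2, "op": "delete", "position": 4, "after": None},
    {"key": 1, "op": "update", "position": 2, "after": {"id": 1, "qty": 3}},  # redelivered
]

hard = apply_events({}, events)
soft = apply_events({}, events, soft_delete=True)
print(hard)  # {1: {'id': 1, 'qty': 3, '_deleted': False, '_position': 2}}
```

One design consequence: a hard delete removes the row and its `_position`, so an older event replayed afterward could re-create it. Soft deletes (or retained tombstones) keep that ordering information, at the cost of filtering `_deleted` rows at query time.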

Benefits of change data capture

CDC is valuable because it changes the unit of data movement from "tables on a schedule" to "committed changes in order." That shift improves freshness, correctness, and operational stability in ways batch pipelines struggle to match as systems scale.

Here are a few concrete benefits that CDC can have on your data integration workflows:

- Lower freshness lag without batch spikes: Batch pipelines create unavoidable staleness windows and load spikes (every run pulls a lot of data at once). CDC distributes work continuously, so destinations stay closer to current state and the source avoids periodic extraction surges.

- More correct replication of updates and deletes: Incremental batch approaches often miss deletes or approximate them with workarounds. CDC carries operation intent (insert, update, delete), which makes it easier to keep warehouses, search indexes, caches, and downstream services consistent.

- Better recovery and rebuild options: CDC pipelines that track explicit positions can resume after failures without guessing. That reduces the frequency of "full reload" incidents and makes destination rebuilds and onboarding new consumers more controlled.

- One change stream can feed multiple consumers: Once changes are captured as events, the same stream can power analytics, operational systems, and event-driven workflows without each team implementing its own extraction logic.

- Scales better as data grows and changes accelerate: As tables grow, polling and batch windows tend to get longer and more fragile. CDC focuses on the delta, which is usually a better match for high-change systems where "only what changed" is the sensible unit of work.

- Fewer brittle incremental loads: CDC reduces the need for "last updated timestamp" logic, incremental merge jobs, and full refresh fallbacks that commonly break as schemas and workloads evolve.
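The "one change stream, multiple consumers" benefit mostly comes down to each consumer tracking its own position in a shared, append-only stream. The sketch below is a hypothetical in-memory model (`SharedChangeLog`, `poll`, and `commit` are invented names), not the API of Kafka or any other platform. Offsets are committed only after a consumer finishes a batch, so a slow or restarting consumer never affects the others.

```python
class SharedChangeLog:
    """Append-only change stream read independently by several consumers."""

    def __init__(self):
        self.events = []
        self.offsets = {}  # consumer name -> count of events it has committed

    def publish(self, event):
        self.events.append(event)

    def poll(self, consumer, max_events=100):
        """Return the next batch for `consumer` without advancing its offset."""
        start = self.offsets.get(consumer, 0)
        return self.events[start:start + max_events]

    def commit(self, consumer, count):
        """Advance `consumer` past `count` events once it has processed them."""
        self.offsets[consumer] = self.offsets.get(consumer, 0) + count


log = SharedChangeLog()
for pos in range(1, 6):
    log.publish({"position": pos, "op": "update", "key": pos})

# The warehouse loader keeps up and processes everything...
warehouse_batch = log.poll("warehouse")
log.commit("warehouse", len(warehouse_batch))

# ...while a slower cache updater works through two events at a time.
cache_batch = log.poll("cache", max_events=2)
log.commit("cache", len(cache_batch))

print(log.offsets)  # {'warehouse': 5, 'cache': 2}
```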

Examples of change data capture use cases

Use change data capture when the business outcome depends on freshness, correct replication of updates and deletes, or continuous downstream processing, and when batch jobs become fragile as volume grows. CDC is often used for workflows involving fraud detection, Internet of Things (IoT) device data management, supply chain and inventory management, and regulatory compliance (GDPR, HIPAA, SOX, etc.).

Common business use cases for change data capture include:

CDC for real-time or near real-time analytics

If dashboards, anomaly detection, or operational reporting require current data, CDC is the most direct path to keep analytics systems synchronized without repeatedly re-reading large tables. For an end-to-end blueprint of how CDC fits into a modern analytics stack, check out our New Reference Architecture for CDC framework.

CDC for artificial intelligence and machine learning (AI/ML)

With the rise of agentic AI, data teams need a strong CDC system in place. Agents depend on fresh, real-time data and comprehensive change history to operate effectively. While you could have agents query your source databases directly, log-based CDC is an alternative that reduces the burden on your production system. CDC also provides a continuous stream of data, as opposed to periodic batch updates, which allows agents to always act on current data, even as schemas evolve.

Think of CDC as a prerequisite foundation for your team's AI initiatives. Without solid change data capture in place, your AI workflows risk operating on stale, untrustworthy data.

CDC for replication and system synchronization

CDC is a strong fit when you need to keep a secondary database, cache, search index, or service in sync with a source of truth, especially when updates and deletes must propagate correctly. This is the core replication use case.

CDC for streaming ETL and event-driven applications

If downstream systems need to react to changes (fraud signals, inventory updates, workflow triggers), CDC provides a reliable change stream you can transform and route in motion. Check out our streaming ETL with CDC guide to learn more.

CDC when batch ETL becomes operationally expensive

If you're constantly tuning schedules, fighting long batch windows, or rebuilding broken incremental loads, CDC can reduce operational churn, especially when implemented as log-based CDC rather than polling. For a clear comparison of CDC vs traditional extraction patterns, see our Log-based CDC vs Traditional ETL guide.

How do you choose the appropriate change data capture tool?

Choosing a CDC tool requires asking more than just "does it support Postgres?" Most tools do. The real differentiators are delivery semantics, backfill and recovery behavior, schema-change handling, and how much infrastructure you're willing to operate.

If you’re feeling overwhelmed with how to evaluate different change data capture solutions, here are some fast decision rules to get started:

- If you need sub-minute freshness and reliable propagation of updates and deletes, prioritize tools that use log-based CDC with clear retry and dedupe semantics. You should also check the tool’s latency on downstream connections.

- If you expect to add destinations over time, prioritize systems that support repeatable backfills and bounded replay instead of frequent full resyncs.

- If your destination is a warehouse, validate the apply strategy (merge/upsert behavior, delete handling, batching) because destination apply is often the real bottleneck.

- If your schemas evolve frequently, choose a tool with explicit behavior for breaking changes (pause + alert or quarantine), not silent drops.
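To make "explicit behavior for breaking changes" concrete, here is a hypothetical schema check that a pipeline could run before applying a row. The policy it encodes (new columns are additive and allowed; removed columns and changed types raise an error so the pipeline pauses and alerts) is one illustrative choice, not any particular tool's behavior.

```python
class SchemaChangeError(Exception):
    """Raised so the pipeline pauses and alerts instead of silently dropping data."""


def check_schema(expected: dict, row: dict) -> set:
    """Validate a row against an expected {column: type} mapping.

    Returns the set of new (additive) columns, which are treated as safe.
    Raises SchemaChangeError for removed columns or changed types.
    """
    removed = set(expected) - set(row)
    if removed:
        raise SchemaChangeError(f"columns removed at source: {sorted(removed)}")
    for column, expected_type in expected.items():
        value = row[column]
        if value is not None and not isinstance(value, expected_type):
            raise SchemaChangeError(
                f"column {column!r} changed type to {type(value).__name__}"
            )
    return set(row) - set(expected)


expected = {"id": int, "qty": int}
print(check_schema(expected, {"id": 1, "qty": 2, "note": "gift"}))  # {'note'}

try:
    check_schema(expected, {"id": 1, "qty": "2"})
except SchemaChangeError as err:
    print("pausing pipeline:", err)
```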

If freshness, deletes, and source performance all matter, log-based CDC is almost always the right choice. If your workload is small and freshness requirements are loose, query-based CDC can be good enough with far less setup. Trigger-based CDC is a middle path that's worth considering only when log access isn't available.

How does Estuary handle change data capture?

In Estuary, change data capture is a continuous pipeline, not a one-off connector job. Captures ingest changes into collections (a durable streaming layer), and materializations apply those changes to destinations with backpressure-aware batching.

Most CDC tools write changes row by row, meaning destinations can briefly see states that never existed at the source, such as partial transactions or mid-commit snapshots. Estuary reads transactions as committed units, so downstream systems see data the way the source committed it.
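To illustrate what reading transactions as committed units means, the sketch below buffers changes per transaction ID and releases them only when that transaction's commit marker arrives, then applies each unit as a single swap. This is a hypothetical model of the idea, not Estuary's implementation; the `txid`/`commit` event format is an assumption. The two balance updates in transaction 7 land together, and transaction 8, cut off before its commit, is never applied.

```python
from collections import defaultdict


def committed_transactions(events):
    """Yield (txid, changes) for each transaction once its commit marker arrives.

    Changes from a transaction whose commit has not been seen are held back,
    so a stream that ends mid-transaction emits nothing for it.
    """
    pending = defaultdict(list)
    for ev in events:
        if ev["op"] == "commit":
            yield ev["txid"], pending.pop(ev["txid"], [])
        else:
            pending[ev["txid"]].append(ev)


stream = [
    {"txid": 7, "op": "update", "key": "acct-A", "balance": 50},
    {"txid": 7, "op": "update", "key": "acct-B", "balance": 150},
    {"txid": 7, "op": "commit"},
    {"txid": 8, "op": "update", "key": "acct-A", "balance": 0},  # no commit seen yet
]

balances = {"acct-A": 100, "acct-B": 100}
for txid, changes in committed_transactions(stream):
    # Stage the whole transaction, then swap it in, so readers never see half of it.
    staged = dict(balances)
    for change in changes:
        staged[change["key"]] = change["balance"]
    balances = staged

print(balances)  # {'acct-A': 50, 'acct-B': 150}
```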

CDC tools typically produce append-only event streams, creating write amplification and small-file problems in columnar warehouses. Estuary's standard behavior groups and merges events before writing, so warehouses receive correctly upserted rows. For use cases that require append-only streams, such as audit logs, Estuary's History Mode and Delta Updates keep the full unmerged change data history.
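The grouping-and-merging step can be sketched as a reduction over a batch: keep only the final state per key, and keep deletes as explicit tombstones. This is a hypothetical illustration, not Estuary's code, and it assumes each event carries a full row image; sources that emit partial updates would need column-level merging instead of replacement.

```python
def reduce_batch(events):
    """Collapse ordered change events into one final state per key.

    Later positions win. A delete becomes a None tombstone so the writer
    issues an explicit delete instead of silently dropping the key.
    """
    final = {}
    for ev in sorted(events, key=lambda e: e["position"]):
        final[ev["key"]] = None if ev["op"] == "delete" else ev["after"]
    return final


batch = [
    {"key": 1, "op": "insert", "position": 1, "after": {"id": 1, "qty": 5}},
    {"key": 1, "op": "update", "position": 2, "after": {"id": 1, "qty": 4}},
    {"key": 1, "op": "update", "position": 3, "after": {"id": 1, "qty": 2}},
    {"key": 2, "op": "insert", "position": 4, "after": {"id": 2, "qty": 7}},
    {"key": 2, "op": "delete", "position": 5, "after": None},
]

merged = reduce_batch(batch)
upserts = {k: row for k, row in merged.items() if row is not None}
deletes = [k for k, row in merged.items() if row is None]
print(upserts, deletes)  # {1: {'id': 1, 'qty': 2}} [2]
```

Here five change events collapse into one upsert and one delete, which is the difference between writing many small appends and writing a single merged batch to a columnar warehouse.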

Estuary collections support replay and backfill without re-extracting from the source, and schema evolutions update affected bindings and trigger backfills where needed.

Real-time CDC with Estuary doesn't require Kafka or other streaming infrastructure: capture, transformation, and delivery run as a fully managed pipeline with sub-second latency. If your requirements include multiple destinations, repeatable backfills, and clear semantics under retries, platform-level behaviors matter more than connector checklists.

If you're ready to build dependable pipelines that power your real-time data needs, reach out to us and schedule a demo today.

FAQs

Is CDC the same as replication?

What is the best CDC method?

Do I need Kafka for CDC?

What is the difference between CDC and ETL?

About the author

Jeffrey is a data engineering professional with over 15 years of experience, helping early-stage data companies scale by combining technical expertise with growth-focused strategies. His writing shares practical insights on data systems and efficient scaling.