Editorial Disclosure

This article is written and maintained by the team at Estuary, a real-time data integration platform. Estuary appears in this list and has been evaluated under the same criteria as every other tool.

For tools outside our day-to-day experience, all summaries draw from G2 user reviews, Gartner Peer Insights, official vendor documentation, and r/dataengineering. Every pricing figure was verified from vendor websites in March 2026.

Introduction

Pick the wrong data integration tool, and you spend the next 18 months migrating away from it. Pick the right one and your data team stops babysitting pipelines and starts doing actual analytical work.

The category is genuinely crowded. Batch ETL tools from the early 2000s sit alongside streaming platforms launched in 2020, open-source projects backed by venture capital, and enterprise suites that cost more than most engineering salaries. They do not all solve the same problem, and a flat comparison list without context does nobody any favours.

This guide groups 11 tools by their natural category, explains what each one is genuinely good at, names the real limitations that vendor marketing leaves out, and gives you verified pricing so you can have an honest budget conversation. A quick-pick summary is at the top for when you already know your use case. Detailed entries follow for when you need to make a proper case to your team.

One transparency note: Estuary is in this list, and we are the publisher. We have applied the same evaluation structure and the same honest-limitations section to ourselves as to every other tool. For independent perspectives, Gartner Peer Insights and G2's ETL tools category are good starting points.

Quick-Pick Summary by Use Case

If you already know your primary requirement, this table points you to the right tool without reading every entry.

| Your Situation | Best Fit | Why |
|---|---|---|
| You need data in under one minute from a live database | Estuary | Right-Time platform with log-based CDC and sub-100ms latency |
| You want fully managed ELT pipelines with zero maintenance | Fivetran | 700+ connectors, automatic schema repair, no engineering overhead |
| You want open-source flexibility and control | Airbyte | 400+ connectors, MIT-licensed, self-host or cloud |
| Your infrastructure is on Azure | Azure Data Factory | Serverless, native Azure integration, 90+ built-in connectors |
| Your infrastructure is on AWS | AWS Glue | Native serverless Spark ETL, no cluster to manage |
| You are a Microsoft on-prem SQL Server shop | SSIS | Included with SQL Server, no extra licensing |
| You need cloud ELT with strong transformation support | Matillion | Purpose-built ELT for Snowflake, BigQuery, and Redshift |
| You need to connect SaaS apps and automate workflows | Dell Boomi | 1,000+ connectors for app-to-app integration |
| You have complex enterprise ETL and governance needs | Informatica PowerCenter | Deepest legacy system coverage, data quality, and lineage |
| You run Oracle environments | Oracle Data Integrator | Native Oracle pushdown performance |
| You need AI-assisted enterprise iPaaS | SnapLogic | 500+ Snaps, AI-assisted pipeline building |

What Are Data Integration Tools?

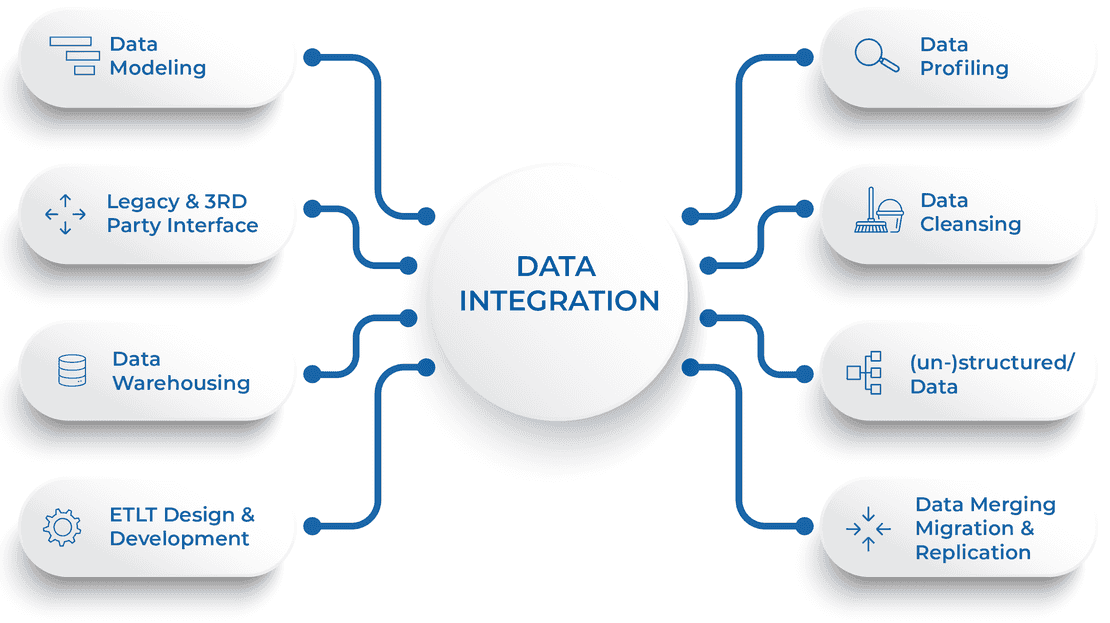

A data integration tool is software that moves data from one or more source systems into a destination, applying transformation logic along the way. The source might be a production database, a SaaS application, an event stream, or a file. The destination is typically a data warehouse, a data lake, or another operational system.

That simple definition covers a wide range of architectures. The right tool depends almost entirely on which architecture your use case requires.

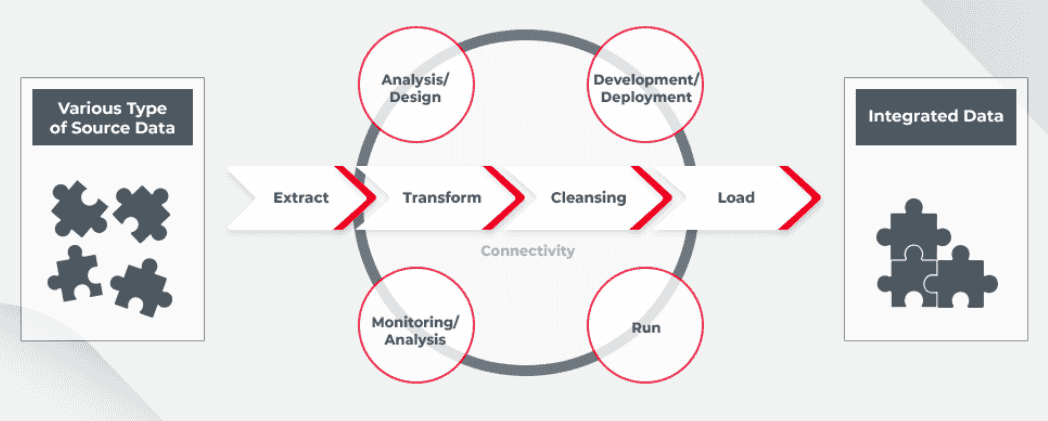

The Four Core Integration Patterns

Understanding these four patterns matters more than any feature comparison, because each one maps directly to a different category of tool.

| Pattern | How It Works | Typical Latency | Best For |
|---|---|---|---|
| Batch ETL | Extract on a schedule, transform, then load | Hours to daily | Reporting, nightly warehouse refreshes |
| ELT | Load raw data into the warehouse first, transform inside using SQL or dbt | Hourly to near-real-time | Modern data stack with Snowflake, BigQuery, or Redshift |
| CDC | Read the database transaction log and stream every insert, update, and delete as it happens | Sub-second to seconds | Real-time dashboards, fraud detection, live inventory sync |
| iPaaS | Connect SaaS applications via APIs using pre-built workflow connectors | Event-triggered | CRM-to-warehouse sync, business workflow automation |

Most teams start with batch ELT because it is simpler and cheaper. They graduate to CDC when business requirements demand fresher data. This distinction matters because tools designed for one pattern are usually a poor fit for another.
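To make the ELT row of the table concrete, here is a minimal sketch of the load-then-transform order. It uses Python's built-in `sqlite3` as a stand-in for a cloud warehouse; the table names, the `run_elt` function, and the sample rows are all hypothetical and do not come from any tool in this guide.

```python
import sqlite3

def run_elt(raw_orders):
    """Load raw rows first, then transform with SQL inside the 'warehouse'."""
    wh = sqlite3.connect(":memory:")  # stand-in for Snowflake, BigQuery, or Redshift
    wh.execute(
        "CREATE TABLE raw_orders (order_id INTEGER, customer TEXT, amount_cents INTEGER)"
    )
    # Load step: rows land untransformed.
    wh.executemany("INSERT INTO raw_orders VALUES (?, ?, ?)", raw_orders)
    # Transform step: expressed as SQL and executed by the warehouse engine itself.
    wh.execute(
        """
        CREATE TABLE customer_revenue AS
        SELECT customer, SUM(amount_cents) / 100.0 AS revenue
        FROM raw_orders
        GROUP BY customer
        """
    )
    rows = wh.execute(
        "SELECT customer, revenue FROM customer_revenue ORDER BY customer"
    ).fetchall()
    wh.close()
    return rows

# Hypothetical sample data: (order_id, customer, amount_cents)
RAW = [(1, "acme", 1250), (2, "acme", 750), (3, "globex", 4000)]
print(run_elt(RAW))
```

In batch ETL the aggregation would run in an external engine before the load; in ELT the raw table is kept and the transformation can be rerun or changed later without re-extracting from the source.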

How We Evaluated These Tools

We started with a longlist of 40 tools drawn from G2 category leaders, Gartner Magic Quadrant rankings, and recommendations in the r/dataengineering community. We narrowed to 11 based on active development, meaningful user bases, and distinct positioning. For each tool we assessed:

- Connector breadth: verified count from official documentation, not marketing claims

- Real-time and CDC capability: whether the tool uses log-based CDC, query-based polling, or neither

- Transformation support: in-flight, in-warehouse, or none

- Deployment model: cloud SaaS, self-hosted, on-premises, or hybrid

- Pricing transparency: whether pricing is publicly available and predictable at scale

- User sentiment: synthesised from verified reviews on G2 and Gartner Peer Insights

Tools the Estuary team uses directly are noted as such. For all others, summaries are based on official documentation plus user feedback from G2 and Gartner Peer Insights.

All 11 Tools at a Glance

Alphabetical order. All data verified March 2026.

| Tool | Pattern | CDC | Connectors | Deployment | Entry Price |
|---|---|---|---|---|---|
| Airbyte | ELT | Query-based (partial) | 400+ OSS / 600+ Cloud | Cloud, self-hosted | Free (open source) |
| AWS Glue | ETL / ELT | No | AWS-native + JDBC | AWS cloud only | $0.44/DPU-hour |
| Azure Data Factory | ETL / ELT | Limited | 90+ built-in | Azure cloud, hybrid | Pay-per-activity-run |
| Dell Boomi | iPaaS | Yes (via connectors) | 1,000+ | Cloud, hybrid | Custom pricing |
| Estuary | CDC / Streaming / Batch | Yes (log-based, sub-100ms) | 200+ native / 500+ OSS | Cloud, Private, BYOC | Free (10 GB/mo) |
| Fivetran | ELT | Batch CDC | 700+ | Cloud, Hybrid (Enterprise+) | Free (500K MAR/mo) |
| Informatica PowerCenter | Enterprise ETL | Limited | 300+ | On-prem, Cloud, Hybrid | ~$2,000/mo+ |
| Matillion | ELT | No | 100+ native | Cloud (AWS, Azure, GCP) | Custom (vCore-hour) |
| Oracle Data Integrator | Enterprise ETL | Yes (Oracle ecosystem) | Oracle-native, broad | On-prem, Cloud | Custom |
| SnapLogic | iPaaS / ELT | Yes (via Snaps) | 500+ Snaps | Cloud, hybrid | Custom |
| SSIS | Batch ETL | No | SQL Server, Excel, Oracle, DB2+ | On-prem, Azure via ADF | Included with SQL Server |

Pricing verified March 2026. BYOC = Bring Your Own Cloud. DPU = Data Processing Unit (AWS Glue). MAR = Monthly Active Rows. Sources: Fivetran, Estuary, AWS Glue, Azure Data Factory.

Cloud-Native ELT Platforms

These tools are built for the modern data stack. Their job is to get your data into Snowflake, BigQuery, or Redshift reliably, at the right frequency, without requiring you to maintain custom pipeline code. This is the most popular category for analytics-focused data teams today.

1. Fivetran

Managed ELT with zero-maintenance connectors

| Best for | Analytics teams that want the fastest time-to-pipeline and can justify paying for zero-maintenance reliability |
|---|---|
| Connectors | 700+ pre-built |
| G2 | Read Fivetran reviews on G2 (797 verified reviews) |
| Gartner | Read Fivetran on Gartner Peer Insights |

What it does

Fivetran automates the extraction and loading of data from source systems into cloud data warehouses. Configure a connector, point it at a destination, and it handles schema mapping, schema drift when the source changes, incremental syncing, and failure recovery. The engineering overhead after initial setup is close to zero.

Where it genuinely stands out

- Connector reliability: Fivetran-maintained connectors adapt automatically to source API changes. Verified Gartner reviewers consistently describe it as 'low maintenance, set it and forget it' across multi-year deployments.

- Automated schema management: When a source system adds a column or changes a data type, Fivetran propagates that change to the destination automatically. For teams with many connectors this alone saves significant engineering hours each month.

- dbt Core integration: Fivetran supports dbt Core natively for in-warehouse transformations, fitting cleanly into the standard modern data stack alongside Snowflake or BigQuery.
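The automated schema management described above can be illustrated with a small sketch. This is not Fivetran's implementation; it is a generic illustration of propagating a newly added source column to a destination, using `sqlite3` for both sides. The helper names `column_types` and `propagate_new_columns` and the `users` table are hypothetical.

```python
import sqlite3

def column_types(conn, table):
    # PRAGMA table_info yields one row per column: index 1 is the name, index 2 the declared type.
    return {row[1]: row[2] for row in conn.execute(f"PRAGMA table_info({table})")}

def propagate_new_columns(source, dest, table):
    """Add to the destination any column that exists in the source but not yet in the destination."""
    src_cols = column_types(source, table)
    dst_cols = column_types(dest, table)
    added = [name for name in src_cols if name not in dst_cols]
    for name in added:
        dest.execute(f"ALTER TABLE {table} ADD COLUMN {name} {src_cols[name]}")
    return added

source = sqlite3.connect(":memory:")
dest = sqlite3.connect(":memory:")
source.execute("CREATE TABLE users (id INTEGER, email TEXT)")
dest.execute("CREATE TABLE users (id INTEGER, email TEXT)")

# The source team ships a schema change...
source.execute("ALTER TABLE users ADD COLUMN plan TEXT")

# ...and the next sync detects and applies it downstream.
added = propagate_new_columns(source, dest, "users")
print(added, list(column_types(dest, "users")))
```

A managed service also has to handle type changes, renames, and dropped columns, which this sketch deliberately leaves out.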

Real limitations

- MAR pricing can surprise you at scale: Billing is based on Monthly Active Rows per connector since March 2025. Multiple verified G2 reviewers flagged significant cost increases, particularly for teams with many smaller connectors. One reviewer wrote: 'The pricing model, especially the new MARs per connector model, makes it impractical for event sources.' Full discussion at Fivetran G2 reviews.

- Enterprise database connectors are plan-locked: Oracle, SQL Server CDC, and high-volume agent connectors require the Enterprise or Business Critical plan. The Standard plan does not include them.

- No in-flight transformation: Fivetran loads raw data. Filtering, masking, or enriching data before it lands requires a separate tool.

- Limited customisation: The fully managed nature restricts deeper customisation, and getting enhancements added to existing connectors is difficult according to multiple Gartner reviewers.

Pricing

Free plan: up to 500,000 MAR per month. Standard: usage-based with $5 minimum per active connection. Enterprise: adds database connectors, faster sync intervals, advanced governance. Business Critical: adds private networking and compliance features. Annual contracts from $12,000/year.

Who should use Fivetran

- Teams that want the fastest time-to-pipeline and prioritise reliability over cost predictability at high volume.

- Organisations on Snowflake, BigQuery, or Redshift who use dbt and need data refreshed hourly or more frequently.

Who should not use Fivetran

- Teams with many high-volume connectors where per-connector MAR pricing becomes unpredictable. Use the cost estimator on Fivetran's pricing page before committing.

- Any use case requiring sub-minute latency or true log-based CDC. Fivetran is a batch ELT tool at its core.

2. Airbyte

Open-source ELT with the broadest connector ecosystem

| Best for | Teams that need open-source flexibility, self-hosted control, or connectors for niche or custom data sources |
|---|---|
| Connectors | 400+ managed (self-managed); 600+ (Cloud); 10,000+ community. |
| G2 | Read Airbyte reviews on G2 (G2 Leader badge, top 3% of all software on G2) |
| Gartner | Read Airbyte on Gartner Peer Insights |

What it does

Airbyte is an open-source data movement platform built for ELT pipelines. Founded in 2020, it has built one of the largest connector ecosystems in the category. Its MIT licence means teams can self-host for free, modify connectors, and build custom ones without licensing fees.

Where it genuinely stands out

- Largest open-source connector library: 400+ managed connectors plus 10,000+ community connectors give coverage of niche sources no other platform supports. The AI-powered Connector Builder generates new connectors from any REST API specification.

- Deploy anywhere: Self-host on Kubernetes for full infrastructure control, or use Airbyte Cloud for a managed experience. Pipelines built on self-managed migrate to cloud without rebuilding.

Real limitations

- Connector quality is uneven: Community-maintained connectors vary in reliability. Multiple G2 reviewers reported connectors breaking silently after API updates with no error message. See Airbyte G2 reviews for the full picture.

- No native in-flight transformation: Airbyte loads raw data. Transformation happens downstream, typically with dbt. Filtering or masking before data lands requires additional tooling.

- Self-hosted requires real infrastructure: Production-scale self-managed Airbyte runs on Kubernetes or Docker and requires dedicated operational effort. Not suitable for teams without DevOps capacity.

Pricing

Open-source self-managed version is free. Airbyte Cloud offers Standard (volume-based), Airbyte Plus (fixed annual, for SMBs), and Airbyte Pro (capacity-based with governance, for enterprise).

Who should use Airbyte

- Teams that need a connector for a source nobody else supports, or that want to build proprietary connectors.

- Organisations with DevOps capacity preferring open-source infrastructure over vendor lock-in.

Who should not use Airbyte

- Small teams without infrastructure resources. The self-managed version carries real operational overhead.

- Teams that need guaranteed SLA on connector uptime. Community-maintained connectors do not carry that guarantee.

3. Matillion

Cloud-native ELT purpose-built for Snowflake, BigQuery, and Redshift

| Best for | Data teams that need powerful visual ELT with transformation built directly into the cloud warehouse |
|---|---|
| Connectors | 100+ native connectors |
| G2 | Read Matillion reviews on G2 |
| Gartner | Read Matillion on Gartner Peer Insights |

What it does

Matillion is a cloud-native ELT platform designed specifically for Snowflake, Amazon Redshift, Google BigQuery, and Databricks. It provides a visual drag-and-drop interface for building pipelines and runs all transformation logic inside the cloud data warehouse using pushdown SQL, which means transformation cost is borne by the warehouse compute rather than an external engine.

Where it genuinely stands out

- Warehouse-native transformation: Matillion pushes transformation logic directly into Snowflake, BigQuery, or Redshift. This is architecturally clean and performant for teams already paying for warehouse compute. Verified G2 reviewers praise the natural Snowflake integration in particular.

- Visual pipeline builder: The drag-and-drop interface lets analysts build complex transformation jobs without writing raw SQL for every step. G2 reviewers consistently highlight ease of use and fast onboarding.

- Git and CI/CD integration: Matillion supports version control through Git, which is important for teams that treat data pipelines as software and need proper change management.

Real limitations

- Connector library is smaller than competitors: 100+ native connectors versus 700+ for Fivetran. Teams needing broad SaaS source coverage may find gaps.

- Pricing is not publicly available: Matillion uses custom vCore-hour pricing. Based on publicly reported customer figures, annual costs typically range from $20,000 for small teams to $100,000 or more for larger deployments. Warehouse compute costs are additional.

- Warehouse-only deployment: Matillion targets cloud data warehouses exclusively. It does not support on-premises destinations and is not suitable for operational data movement outside the warehouse.

- Git integration is clunky for larger teams: Multiple verified G2 reviewers specifically called out the Git workflow as awkward when multiple team members are working on overlapping projects.

Pricing

Custom pricing based on vCore-hours consumed, sold as an annual subscription. Contact Matillion directly for a quote. Note that warehouse compute costs (Snowflake, BigQuery, Redshift, Databricks) are separate and additional.

Who should use Matillion

- Data teams already invested in Snowflake, BigQuery, or Redshift who want visual ELT with warehouse-native transformation.

- Teams with SQL-proficient analysts who will use the visual interface to build and modify transformation pipelines without heavy engineering involvement.

Who should not use Matillion

- Teams without a cloud data warehouse destination. Matillion does not support on-premises or operational integration patterns.

- Organisations sensitive to unpredictable compute costs. Warehouse-native transformations add warehouse compute charges on top of the Matillion license.

Right-Time Data Platform: CDC and Streaming

Change Data Capture tools read the transaction log of a source database and stream every insert, update, and delete as it happens. Log-based CDC is the right architecture when data older than one minute has a real business cost: fraud detection, live inventory tracking, real-time operational dashboards, and synchronising a data warehouse with a production database that changes thousands of times per minute.

The phrase 'right-time' matters here. Not every pipeline needs sub-second delivery. Some pipelines need real-time; others work best on a scheduled batch. The ideal platform is one where you can set the latency per pipeline rather than running two separate systems to cover both patterns.

4. Estuary

The Right-Time Data Platform: CDC, Streaming, and Batch in One System

Publisher Note: Estuary is the company behind this article. We applied the same evaluation format and the same honest-limitations section to ourselves as to every other tool. For independent perspectives, see Estuary reviews on G2 - https://www.g2.com/products/estuary-flow/reviews

| Best for | Teams that need sub-second CDC, real-time streaming, and batch pipelines in one platform without running separate tools |
|---|---|
| Connectors | 200+ native; 500+ via open-source integrations |
| Certifications | SOC 2 Type II (zero exceptions), HIPAA-compliant. |
| Pricing page | estuary.dev/pricing |

What it does

Estuary is a Right-Time Data Platform. The core idea behind right-time is straightforward: different pipelines need data at different speeds. A fraud detection system needs rows in under a second. A weekly finance report needs a clean nightly batch load. Running two separate systems to cover both patterns adds cost, operational complexity, and pipeline fragmentation. Estuary unifies real-time CDC, streaming, and batch into a single platform where you set the delivery latency per pipeline.

The platform is built on log-based CDC, which reads directly from the database transaction log rather than polling. This captures every row-level change at sub-100ms latency, does not add query load to the source database, and captures deletes correctly, which polling-based tools typically miss.
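The point about deletes is easy to see in a toy model. The sketch below is not Estuary's code or API; it is a simplified, in-memory illustration in which `poll_sync` mimics query-based polling on an `updated_at` column and `apply_log` mimics replaying a transaction log. All names and sample data are hypothetical.

```python
from dataclasses import dataclass
from typing import Optional

@dataclass(frozen=True)
class Change:
    op: str                      # "insert", "update", or "delete"
    key: int
    value: Optional[str] = None  # None for deletes

def poll_sync(replica, source_rows, updated_at, since):
    """Query-based polling: copy rows whose updated_at is newer than `since`."""
    for key, value in source_rows.items():
        if updated_at[key] > since:
            replica[key] = value
    return replica  # a deleted row never shows up in the query, so it is never removed

def apply_log(replica, log):
    """Log-based CDC: replay every change in order, including deletes."""
    for change in log:
        if change.op == "delete":
            replica.pop(change.key, None)
        else:
            replica[change.key] = change.value
    return replica

# Both replicas start in sync with the source: {1: "a", 2: "b"}.
# Then the source updates row 1 (at t=5) and deletes row 2 (at t=6).
source_now = {1: "a2"}
updated_at = {1: 5}
log = [Change("update", 1, "a2"), Change("delete", 2)]

polled = poll_sync({1: "a", 2: "b"}, source_now, updated_at, since=0)
replayed = apply_log({1: "a", 2: "b"}, log)
print(polled)    # row 2 is still present in the polled replica
print(replayed)  # row 2 is gone, matching the source
```

Polling-based tools can work around this with soft-delete flags or periodic full refreshes, but both add load or complexity on the source side.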

The right-time difference in practice

A Fivetran or Airbyte pipeline syncing hourly means your data can be up to an hour stale. On a right-time platform, the same source data flows to a real-time dashboard with sub-second latency and to a cost-optimised daily batch load in the warehouse, both from a single pipeline configuration. No duplicate pipelines, no managing two vendors, no reconciling which system has the freshest state.

Where it genuinely stands out

- Log-based CDC at sub-100ms latency: Estuary reads the database transaction log directly, the same technique databases use internally. More reliable than query-based polling, zero impact on source database performance, and correct capture of deletes and updates.

- Streaming and batch from one pipeline: Set delivery latency per destination. The same source data can power a real-time operational system and a scheduled warehouse load without maintaining two separate pipeline systems or two vendor contracts.

- Private cloud and BYOC deployment: The data plane runs inside your own cloud account. Your data never leaves your network. The control plane stays in Estuary's managed environment. Supports AWS PrivateLink, VPC peering, and SSH tunnels.

- Exactly-once delivery: Estuary supports exactly-once transactional delivery, so you do not need to build deduplication logic at the destination.

- Predictable per-GB pricing: Unlike MAR-based pricing that spikes with sync frequency, Estuary bills per GB of data moved. For CDC workloads moving only changed rows, this is typically significantly cheaper than row-based models. Estuary claims 40 to 60% savings versus MAR-based models.

Real limitations

- Smaller native connector library than Fivetran: 200+ native connectors versus 700+ for Fivetran. Estuary supplements with 500+ open-source connectors, but open-source quality varies.

- Newer company: Estuary is newer than Informatica, SAP, or Oracle. Some enterprise procurement processes apply extra scrutiny to vendors under five years old. The underlying open-source Gazette framework has approximately ten years of development, but the commercial product is newer.

Pricing

Free plan: 10 GB/month, 2 connector instances, indefinite use, no credit card required. Cloud plan: $0.50 per GB of data moved, plus $100/month per connector instance (first 6), then $50/month for additional instances. Pay-as-you-go or annual commitment options available. Enterprise: custom pricing with SSO, private deployment, compliance reporting, and custom SLAs.
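As a worked example of the Cloud plan arithmetic, the following sketch applies the rates quoted above ($0.50 per GB, $100/month for each of the first 6 connector instances, $50/month for each additional one). The function name and the sample workload are hypothetical, and the estimate ignores the free plan allowance, annual-commitment discounts, and Enterprise terms.

```python
def estuary_cloud_monthly_cost(gb_moved, connector_instances):
    """Rough monthly estimate from the Cloud plan rates quoted in this article."""
    data_cost = 0.50 * gb_moved
    first_tier = min(connector_instances, 6)      # billed at $100 each
    additional = max(connector_instances - 6, 0)  # billed at $50 each
    return data_cost + 100 * first_tier + 50 * additional

# Hypothetical workload: 200 GB moved per month across 8 connector instances.
# 200 * 0.50 = 100, 6 * 100 = 600, 2 * 50 = 100
print(estuary_cloud_monthly_cost(200, 8))
```

For small data volumes the connector-instance fee dominates, so the number of sources and destinations matters more to the bill than the gigabytes moved.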

Who should use Estuary

- Teams that need sub-minute or sub-second data freshness from relational databases and want a single system for streaming and batch rather than two separate vendor contracts.

- Regulated industries such as finance and healthcare requiring private cloud deployment where data never leaves their own infrastructure.

Who should not use Estuary

- Teams primarily needing broad SaaS connector coverage with enterprise-grade SLAs on each connector. Fivetran's depth is greater in that scenario.

- Teams with purely batch requirements and no real-time use case. The setup is disproportionate to simple scheduled ELT needs.

If real-time CDC or right-time data movement fits your use case, Estuary's free plan is a practical starting point. You get 10 GB per month and 2 connector instances with no credit card required. Most pipelines are running within minutes. Start free at dashboard.estuary.dev/register

Cloud Provider Native ETL Tools

If your data infrastructure already lives inside a single cloud provider, their native ETL service is usually the most cost-effective and easiest-to-integrate option. The trade-off is lock-in. Migrating providers means rebuilding pipelines.

5. Azure Data Factory

Serverless ETL and ELT for Azure-native data pipelines

| Best for | Teams whose data infrastructure is primarily on Azure who need managed, serverless ETL and ELT without managing compute |
|---|---|
| Connectors | 90+ built-in maintenance-free connectors at no added cost. |
| G2 | Read Azure Data Factory reviews on G2 |
| Pricing | azure.microsoft.com/pricing/data-factory |

What it does

Azure Data Factory is Microsoft's fully managed, serverless data integration service. It lets teams build ETL and ELT pipelines using a visual drag-and-drop interface or code, connecting on-premises and cloud data sources to Azure Synapse Analytics, Azure Data Lake, or other destinations. You pay per activity run and per integration runtime hour, with no idle cost when pipelines are not running.

Where it genuinely stands out

- Serverless and pay-per-use: Pipeline orchestration is charged at $0.005 per activity run. There is no infrastructure to provision or maintain. Costs only accumulate when pipelines execute.

- Native Azure ecosystem integration: Connecting ADF to Azure Synapse, Azure SQL Database, Azure Blob Storage, or Azure Data Lake requires minimal configuration. Everything communicates within the Azure network without egress charges.

- SSIS migration path: ADF includes Azure-SSIS Integration Runtime, which lets teams lift and shift existing SSIS packages to Azure without rewriting them. This is the natural migration route for organisations moving SQL Server workloads to the cloud.

- Visual Mapping Data Flows: No-code data transformation at scale using Spark under the hood. Non-engineers can build transformation logic visually without writing PySpark or SQL.

Real limitations

- Azure-centric: Integration with non-Azure services is more limited. Multiple G2 reviewers noted friction when connecting to platforms outside the Azure ecosystem.

- Debugging is difficult: Multiple verified G2 reviewers flagged the debugging experience as slow and limited, particularly for complex data flows where step-by-step troubleshooting is not well supported.

- Cost management requires attention: The usage-based model means costs can accumulate quickly if pipelines are not monitored. A pipeline with 5 activities running hourly generates 3,600 billable activity runs per month. Azure Cost Management monitoring is strongly recommended from day one.

Pricing

$0.005 per activity run for pipeline orchestration. Data movement billed per DIU-hour. Data Flow transformations billed per vCore-hour (minimum 8 vCores). No base fee.
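To put the orchestration charge in perspective, here is a small sketch that reproduces the "5 activities running hourly" example from the limitations above, using the per-run figure quoted in this article. The function name and the 30-day month are assumptions, and the estimate covers orchestration only: DIU-hours and Data Flow vCore-hours are billed on top.

```python
def adf_orchestration_monthly(activities_per_run, runs_per_day, days=30,
                              price_per_activity_run=0.005):
    """Return (billable activity runs, orchestration cost in USD) for one pipeline."""
    activity_runs = activities_per_run * runs_per_day * days
    cost = round(activity_runs * price_per_activity_run, 2)
    return activity_runs, cost

# 5 activities, triggered hourly (24 times a day), over a 30-day month.
runs, cost = adf_orchestration_monthly(5, 24)
print(runs, cost)
```

The orchestration line item is usually small on its own; the costs that tend to surprise teams come from data movement and Data Flow compute, which scale with volume and runtime rather than run count.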

Who should use Azure Data Factory

- Organisations with infrastructure primarily on Azure who need serverless ETL without managing a compute cluster.

- Teams migrating existing SSIS packages to the cloud. ADF is the official and most direct migration path.

Who should not use Azure Data Factory

- Multi-cloud or hybrid teams who need to integrate significant workloads from AWS, GCP, or non-Azure on-premises systems as their primary use case.

- Teams that need sub-minute data latency. ADF is a batch and near-real-time tool, not a streaming CDC platform.

6. AWS Glue

Serverless Spark ETL for AWS-native pipelines

| Best for | Teams whose data infrastructure lives primarily on AWS who want serverless, pay-per-use Spark ETL without managing a cluster |
|---|---|
| Pricing | $0.44/DPU-hour (serverless). Source: aws.amazon.com/glue/pricing |

What it does

AWS Glue is a fully managed, serverless ETL service built on Apache Spark. You write PySpark or Scala scripts, or use the visual interface for common patterns, and AWS handles cluster provisioning, scaling, and shutdown. You are billed per Data Processing Unit hour consumed with no idle cost between runs.

Where it genuinely stands out

- Serverless with no idle cost: At $0.44 per DPU-hour, Glue is cost-effective for infrequent workloads. There is no charge when jobs are not running.

- AWS Glue Data Catalog: The built-in data catalog automatically discovers and indexes schemas from S3, RDS, Redshift, and other AWS services. It serves as a centralised metadata store across your AWS data estate without additional tooling.

- Native AWS connectivity: Connecting to S3, Redshift, RDS, DynamoDB, or Kinesis is near-zero configuration. Everything communicates within the AWS network.

Real limitations

- AWS-only: Not designed for multi-cloud or hybrid scenarios. Adding non-AWS sources or destinations adds meaningful friction.

- Spark expertise required for complex jobs: The visual interface covers basic ETL. Anything more complex needs PySpark or Scala. Not suitable for non-technical users.

- Cold start latency: Serverless Glue jobs have a startup delay. For sub-minute data latency requirements this is disqualifying.

Pricing

$0.44 per DPU-hour (serverless). Additional charges for the Glue Data Catalog based on objects stored and API requests.
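A quick way to budget a Glue job is to multiply DPUs by runtime by the hourly rate. The sketch below does exactly that with the $0.44 figure quoted above; the function name and the sample job size are hypothetical, and the estimate deliberately ignores billing minimums, Data Catalog charges, and any regional price differences.

```python
def glue_job_cost(dpus, runtime_minutes, price_per_dpu_hour=0.44):
    """Approximate cost in USD of a single Glue job run."""
    return round(dpus * (runtime_minutes / 60) * price_per_dpu_hour, 2)

# Hypothetical nightly job: 10 DPUs for 15 minutes.
per_run = glue_job_cost(10, 15)
per_month = round(per_run * 30, 2)  # one run per day over a 30-day month
print(per_run, per_month)
```

The same job triggered every five minutes instead of nightly would cost 288 times as much per day, which is why Glue suits infrequent batch work better than near-continuous loads.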

Who should use AWS Glue

- AWS-first organisations that need flexible Spark ETL without cluster management.

- Teams with infrequent batch jobs where pay-per-run serverless pricing is more economical than a standing managed service.

Who should not use AWS Glue

- Multi-cloud teams or those with primary data sources outside AWS.

- Non-technical teams without Spark experience for complex transformation requirements.

Enterprise ETL Platforms

Enterprise ETL tools were built for on-premises databases, complex transformation logic, and strict IT governance. They are expensive, require trained specialists, and carry deep connector support for legacy systems that cloud-native tools do not cover. If you run Oracle or mainframe environments with serious governance requirements, these platforms are often the only realistic option.

7. Informatica PowerCenter

Enterprise ETL for complex, governance-heavy environments

| Best for | Large enterprises with legacy system connectivity, data governance requirements, and non-negotiable reliability |
|---|---|
| Connectors | 300+ |
| Gartner | Read Informatica on Gartner Peer Insights |

What it does

Informatica PowerCenter is one of the oldest and most feature-complete enterprise ETL platforms available. It handles extraction from mainframes, Oracle databases, SAP systems, and cloud applications, applies complex transformation logic, and loads to data warehouses or data lakes. It is built for environments where data governance, lineage tracking, and audit compliance are requirements, not preferences.

Where it genuinely stands out

- Deepest feature set: Informatica's IDMC (Intelligent Data Management Cloud) combines ETL, data quality management, master data management, and data cataloging. For organisations needing all of these from one vendor, the procurement simplification is significant.

- 300+ connectors including legacy: Covers mainframe, IBM Db2, AS/400, and enterprise systems that cloud-native ELT tools do not support.

- Data lineage and metadata management: For regulated industries needing to trace the origin of every data field for compliance, Informatica's lineage capabilities are among the strongest available.

Real limitations

- High cost: Starting at approximately $2,000/month, scaling significantly with deployment size. Not the right tool for most small and mid-size organisations.

- Steep learning curve: Verified Gartner reviewers note Informatica requires dedicated training. Ramp-up time is significantly longer than cloud-native tools.

- Not built for modern DevOps workflows: Informatica's roots are on-premises ETL. It does not fit naturally into CI/CD-based data pipelines.

Pricing

Enterprise licensing starts at approximately $2,000/month. Contact Informatica for current pricing. Final cost depends on deployment model, user count, and feature tier.

Who should use Informatica PowerCenter

- Large enterprises with mainframe or legacy system connectivity requirements and serious data governance needs.

- Regulated industries where data lineage, audit trails, and master data management are compliance requirements.

Who should not use Informatica PowerCenter

- Small and mid-size teams. The cost and complexity are disproportionate to most use cases outside large enterprises.

- Teams building cloud-native analytics pipelines with modern tooling. Fivetran, Airbyte, or Matillion are better fits.

8. Oracle Data Integrator (ODI)

High-performance ETL for Oracle-centric environments

| Best for | Enterprises running Oracle databases, Oracle ERP, or Oracle Cloud who want native integration tooling with pushdown performance |
|---|---|
| Pricing | Custom Oracle licensing. Contact oracle.com |

What it does

Oracle Data Integrator is Oracle's primary data integration platform. It uses an E-LT approach that pushes transformation processing into the target database rather than an intermediate engine. For Oracle-to-Oracle scenarios, transformations run at native database speed with no external engine overhead.

Where it genuinely stands out

- Pushdown optimisation: ODI generates native SQL and pushes transformation logic to the target database. For Oracle Exadata or Oracle ADW, transformations run at full native database speed.

- Fault-tolerant architecture: ODI automatically identifies and routes faulty records during transformation, so a bad row does not fail an entire pipeline run.

- Near-real-time trickle feed: Supports incremental data movement for keeping operational Oracle systems in sync without full batch jobs.
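The fault-tolerance idea in the list above, routing bad records aside instead of failing the whole run, is a general pattern that can be sketched in a few lines. This is not ODI code and does not use any Oracle API; `load_with_error_routing`, `to_cents`, and the sample rows are hypothetical.

```python
def load_with_error_routing(rows, transform):
    """Transform each row; collect failures separately instead of aborting the run."""
    loaded, rejected = [], []
    for row in rows:
        try:
            loaded.append(transform(row))
        except (ValueError, KeyError) as exc:
            # Keep the original row and the reason so it can be reviewed and replayed later.
            rejected.append({"row": row, "error": str(exc)})
    return loaded, rejected

def to_cents(row):
    # Raises ValueError when the amount is not numeric, KeyError when a field is missing.
    return {"id": row["id"], "amount_cents": round(float(row["amount"]) * 100)}

rows = [
    {"id": 1, "amount": "12.50"},
    {"id": 2, "amount": "n/a"},   # faulty record
    {"id": 3, "amount": "3.00"},
]
loaded, rejected = load_with_error_routing(rows, to_cents)
print(loaded)
print(rejected)
```

In a real pipeline the rejected list would typically be written to an error table, so that the good rows commit on schedule and the bad ones can be fixed and reprocessed.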

Real limitations

- Best value inside Oracle: Outside Oracle environments, the advantages are much less compelling. Third-party connectivity exists but is not its primary strength.

- Complex, costly licensing: Oracle licensing is expensive and negotiated case by case.

- Smaller talent pool: Finding engineers experienced with ODI is harder than finding engineers who know Fivetran or Airbyte, which affects hiring and onboarding.

Pricing

Custom Oracle licensing. Contact the Oracle team directly.

iPaaS and Application Integration Platforms

iPaaS tools connect applications to each other, not just data to a warehouse. They handle API-based connectivity, workflow automation, and business process integration. If your primary need is syncing Salesforce to HubSpot, routing orders to an ERP, or connecting hundreds of business applications, this is the right category.

9. Boomi (formerly Dell Boomi)

Enterprise iPaaS for connecting applications and automating workflows

| Best for | Enterprises connecting SaaS applications, automating business workflows, and managing API integrations at scale |

|---|---|

| Connectors | 1,000+ |

| Pricing | Custom. Contact boomi.com |

What it does

Boomi (formerly Dell Boomi) is an integration platform that connects cloud and on-premises applications through a low-code, drag-and-drop interface. It supports over 1,000 connectors and covers use cases from simple application sync to complex multi-step workflow orchestration. In December 2024, Boomi acquired Rivery, adding ELT-style data pipeline capabilities.

Where it genuinely stands out

- 1,000+ pre-built connectors: The broadest connector library in this list. Covers ERP systems, marketing tools, HR platforms, and custom REST APIs.

- Low-code for non-engineers: Business analysts can create and modify integrations without writing code, reducing dependency on engineering resources for routine integration work.

- Hybrid deployment via Atom runtime: Connects cloud applications to on-premises systems through a runtime that deploys inside your network. Essential for enterprises that cannot move all systems to the cloud.

Real limitations

- Not built for high-volume data movement: Boomi is designed for application integration workflows, not bulk data pipeline work. For moving hundreds of gigabytes daily into a warehouse, dedicated ELT tools are better suited.

- No public pricing: All deployments require a sales conversation.

Pricing

Custom pricing. Contact the Boomi team directly.

10. SnapLogic

AI-assisted iPaaS for enterprise data and application integration

| Best for | Enterprise teams looking for a low-code integration platform with AI assistance for building and managing pipelines |

|---|---|

| Connectors | 500+ Snaps |

| Pricing | Custom. Contact snaplogic.com |

What it does

SnapLogic is a cloud-based enterprise integration platform with over 500 pre-built connectors called Snaps. Its AI assistant Iris helps users build pipelines by suggesting relevant Snaps, mapping data fields, and troubleshooting errors. It covers both application integration and data integration within one platform.

Where it genuinely stands out

- AI-assisted pipeline building: Iris reduces integration build time by surfacing connectors and suggesting field mappings. Meaningful productivity advantage for non-technical users managing complex integrations.

- Unified app and data integration: Handles both SaaS workflow automation and data movement in one platform, reducing the need for separate iPaaS and ETL tools.

Real limitations

- No public pricing: Requires a sales conversation to receive pricing information.

- Enterprise focus: Built for large enterprise deployments. Small or mid-market teams may find the setup overhead disproportionate.

Pricing

Custom subscription pricing. Contact the SnapLogic team.

Microsoft Ecosystem: On-Premises ETL

For organisations running SQL Server on-premises, Microsoft's native ETL tooling is often the most practical and cost-effective starting point. SSIS is included with SQL Server licensing and has been in production environments for over 20 years. Teams moving workloads to Azure have Azure Data Factory as the direct cloud successor.

11. SQL Server Integration Services (SSIS)

Microsoft's battle-tested on-premises ETL engine

| Best for | Organisations running SQL Server on-premises who need ETL without additional licensing costs |

|---|---|

| Pricing | Included with SQL Server 2019 Standard and Enterprise. |

What it does

SSIS is a component of Microsoft SQL Server that has been in production use since 2005. It provides a graphical drag-and-drop interface for building ETL workflows that extract data from SQL Server, Excel, Oracle, IBM Db2, flat files, and other sources, apply transformations, and load to a destination. It is included with SQL Server licensing at no additional cost.

Where it genuinely stands out

- No additional cost with SQL Server: Included with SQL Server 2019 Standard and Enterprise. No separate charge for the ETL engine.

- Mature and well-documented: Over 20 years in production environments, with extensive training resources, third-party documentation, and a large community of experienced engineers.

- Complex on-premises transformation support: Broad transformation component library covering data manipulation operations that would require custom code in simpler tools.

Real limitations

- No real-time or streaming capability: SSIS is a batch ETL tool. It does not support event-driven pipelines or sub-minute data delivery.

- Windows-only development environment: SSIS packages are developed in Visual Studio on Windows. Linux and macOS development workflows face friction.

- Not cloud-native: Running SSIS in Azure requires Azure-SSIS Integration Runtime inside Azure Data Factory, which is a different product with separate pricing.

Pricing

Included with SQL Server 2019 Standard or Enterprise. No separate charge for SSIS. For SQL Server licensing details, see Microsoft's SQL Server licensing documentation.

How to Choose the Right Data Integration Tool

Four questions will narrow the field faster than any feature comparison chart.

1. What latency does your use case actually require?

- Under one minute: You need a CDC platform. Estuary fits this category.

- Under one hour: Managed ELT with frequent sync works. Fivetran and Airbyte both support 15-minute or hourly intervals.

- Daily or less frequent: Batch ETL is sufficient. SSIS, AWS Glue, Azure Data Factory, Matillion, Informatica, and most others serve you well here.
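The latency tiers above can be written down as a small decision helper, which is handy when triaging many pipelines at once. This is a sketch only: the function name `integration_category` and the category labels are hypothetical, and because the tiers above leave a gap between one hour and daily, treating anything of an hour or more as batch is an assumption of this sketch.

```python
def integration_category(max_latency_seconds: float) -> str:
    """Map a required end-to-end latency to a tool category."""
    if max_latency_seconds <= 0:
        raise ValueError("latency requirement must be positive")
    if max_latency_seconds < 60:
        return "CDC platform"
    if max_latency_seconds < 3600:
        return "managed ELT with frequent sync"
    # Assumption: an hour or more is treated as a batch workload.
    return "batch ETL"


# Hypothetical pipelines and their latency requirements in seconds.
requirements = {
    "fraud_alerts": 5,
    "sales_dashboard": 15 * 60,
    "finance_reporting": 24 * 3600,
}
for pipeline, seconds in requirements.items():
    print(f"{pipeline}: {integration_category(seconds)}")
```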

2. What is your team's honest technical capacity?

- No dedicated data engineers: Fivetran for data pipelines. Boomi or SnapLogic for application integration.

- Small data engineering team: Airbyte Cloud or Estuary Cloud. Managed experience with more control than Fivetran.

- Strong DevOps capacity: Airbyte self-hosted, AWS Glue, or Azure Data Factory for maximum control.

- Enterprise IT governance requirements: Informatica or Oracle ODI depending on your existing vendor relationships.

3. What cloud does your infrastructure live in?

- Azure: Azure Data Factory is the natural, cost-efficient first choice.

- AWS: AWS Glue for Spark ETL. Fivetran or Airbyte for managed ELT.

- Snowflake, BigQuery, or Redshift warehouse-centric: Matillion, Fivetran, or Airbyte depending on transformation depth needed.

- On-premises SQL Server: SSIS is already included in your license.

- No single cloud or multi-cloud setup: Estuary, Fivetran, and Airbyte are all cloud-agnostic and deploy across AWS, Azure, and GCP without locking you into one provider's ecosystem.

4. Have you modelled pricing at your real data volumes?

Usage-based pricing is unpredictable if you do not model it before signing. Fivetran's per-connector MAR model can double in cost as connector count grows. Matillion adds warehouse compute costs on top of licensing. AWS Glue only costs money when jobs run. Estuary's per-GB model is typically more predictable for CDC workloads. Most vendors offer pricing calculators or will provide a cost estimate based on your actual volumes. Use them before the commercial conversation.
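One way to model pricing before a sales call is to project your own volume growth through each vendor's pricing shape. The sketch below is illustrative only: the function names, rates, tier boundaries, and growth figure are placeholders, not any vendor's actual pricing. Substitute the numbers from each vendor's published price list or quote.

```python
from typing import Callable


def linear_cost(units: float, rate: float, base_fee: float = 0.0) -> float:
    """Flat per-unit pricing, for example per GB moved."""
    return base_fee + units * rate


def tiered_cost(units: float, tiers: list[tuple[float, float]]) -> float:
    """Graduated pricing. tiers is a list of (upper_bound, rate), ascending."""
    cost, lower = 0.0, 0.0
    for upper, rate in tiers:
        if units <= lower:
            break
        # Charge only the slice of usage that falls inside this tier.
        cost += (min(units, upper) - lower) * rate
        lower = upper
    return cost


def project_costs(start_units: float, monthly_growth: float, months: int,
                  cost_fn: Callable[[float], float]) -> list[float]:
    """Monthly cost over a period, with volume compounding each month."""
    costs, units = [], start_units
    for _ in range(months):
        costs.append(round(cost_fn(units), 2))
        units *= 1 + monthly_growth
    return costs


# Placeholder numbers: 100 units/month today, growing 10% per month.
flat = project_costs(100, 0.10, 12, lambda u: linear_cost(u, rate=1.0))
tiered = project_costs(
    100, 0.10, 12,
    lambda u: tiered_cost(u, [(100, 2.0), (float("inf"), 0.5)]),
)
print("flat:   month 1 =", flat[0], " month 12 =", flat[-1])
print("tiered: month 1 =", tiered[0], " month 12 =", tiered[-1])
```

Comparing the first and last month of each projection shows how quickly the pricing shapes diverge as volume grows, which is the number to bring to the budget conversation.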

Final Thoughts

There is no universally best data integration tool. Fivetran is the right choice for zero-maintenance managed ELT. Airbyte is the right choice when open-source flexibility and connector breadth matter more than managed reliability. Estuary is the right choice when you need real-time CDC and want a right-time platform that handles streaming and batch from one system. Matillion is built for teams that live inside Snowflake, BigQuery, or Redshift. Azure Data Factory and AWS Glue are most efficient when your infrastructure is already committed to one cloud. Informatica and Oracle ODI handle the enterprise and legacy scenarios that cloud-native tools cannot.

The most important recommendation we can give: validate against your actual requirements before signing a contract. Confirm your specific connectors are actively maintained, model the pricing at your real data volumes, and run a proof of concept on your own data before the commercial conversation happens. Most tools here offer a free tier or a free trial. Use it.

This article is updated quarterly. If you notice anything out of date, email sourabh@estuary.dev.

About Estuary: Estuary is the Right-Time Data Platform built for teams that need CDC, streaming, and batch pipelines in one system. The free plan includes 10 GB per month with 2 connector instances and requires no credit card. If real-time CDC or unified right-time data movement is relevant to your use case, it is worth evaluating alongside whichever other tool you are considering. Start for free at https://dashboard.estuary.dev/register

FAQs

What is Change Data Capture and when do I need it?

What does Right-Time Data Platform mean?

How much do data integration tools typically cost?

What is the best data integration tool for Snowflake?

About the author

Specialising in B2B SaaS growth, Sourabh works closely with the data engineering team at Estuary to produce accurate, technically grounded content on data integration, CDC, and real-time data platforms.