Importing CSV data into PostgreSQL is one of the most common data engineering tasks, and also one of the most error-prone. Missing headers, encoding mismatches, date format inconsistencies, and NULL handling quirks cause more failed imports than any configuration mistake.

This guide covers three methods: Estuary for automated continuous ingestion, the native COPY command for fast one-time loads, and pgAdmin for GUI-based imports. Each section includes the exact commands, parameters, and error fixes you need to complete the import without data loss.

⚡ Quick Summary: CSV to PostgreSQL Ingestion

The Schema Hurdle: Unlike SQL-to-SQL migration, CSVs carry no metadata. You must manually define the PostgreSQL target table schema or use a tool with schema inference before any data can load.

Manual Path: Use the COPY command for high-speed server-side imports. Use \copy (lowercase) in psql if the CSV is on your client machine, not the server.

The Encoding Trap: Mixed UTF-8 and ISO-8859-1 encodings are the leading cause of failed CSV imports. Always validate file encoding before running COPY.

Automated Path: For recurring or continuously updated CSVs, Estuary builds a real-time pipeline that handles schema inference, encoding, and ongoing sync without repeated manual imports.

COPY vs. INSERT vs. \copy: Which to Use

Before choosing a method, understand the three core import mechanisms in PostgreSQL and when each applies.

| Method | Best For | Speed | Requires Server Access |

|---|---|---|---|

COPY (server-side) | Large files on the database server | Very fast (bulk load) | Yes, superuser |

\\copy (client-side) | Files on your local machine | Fast | No |

INSERT statements | Small datasets, scripted row-by-row loads | Slow | No |

| pgAdmin Import | GUI-based one-off imports | Moderate | No |

| Estuary (HTTP/Sheets) | Recurring or continuous CSV sync | Real-time | No |

Key distinction between COPY and \\copy:

COPY is a PostgreSQL server command. The file path you provide must be accessible from the database server's filesystem, not your local machine. If you run COPY with a local file path, it will fail with ERROR: could not open file.

\\copy is a psql meta-command that reads from your client machine and streams data to the server. Use \\copy whenever the CSV is on your laptop or workstation.

Prerequisites: Setting Up the Target PostgreSQL Table

PostgreSQL cannot infer schema from a CSV file on its own. The target table must exist before you run COPY. This is the step most guides skip, and it is where most imports fail.

Step 1: Check your CSV structure

Before writing any DDL, inspect the file:

bash# Check encoding

file -i your_file.csv

# Preview first 5 rows

head -5 your_file.csv

# Count columns in header row

head -1 your_file.csv | tr ',' '\\n' | wc -l

Step 2: Map CSV columns to PostgreSQL types

Use this reference for common CSV field patterns:

| CSV Field Pattern | Recommended PostgreSQL Type | Notes |

|---|---|---|

| Integer numbers only | INTEGER or BIGINT | Use BIGINT for IDs over 2.1 billion |

| Decimal numbers | NUMERIC(p,s) | Avoid FLOAT for financial data |

| True/False, 1/0, Y/N | BOOLEAN | COPY accepts t/f and true/false natively |

| ISO dates (2024-01-15) | DATE | Direct import works |

| Timestamps (2024-01-15 10:30:00) | TIMESTAMP | Specify format if non-standard |

| Short text fields | VARCHAR(n) | Set n to realistic max length |

| Long or variable text | TEXT | No length limit |

| JSON strings | JSONB | Cast during or after import |

| Empty or nullable fields | Any type with NULL AS '' parameter | See COPY parameters below |

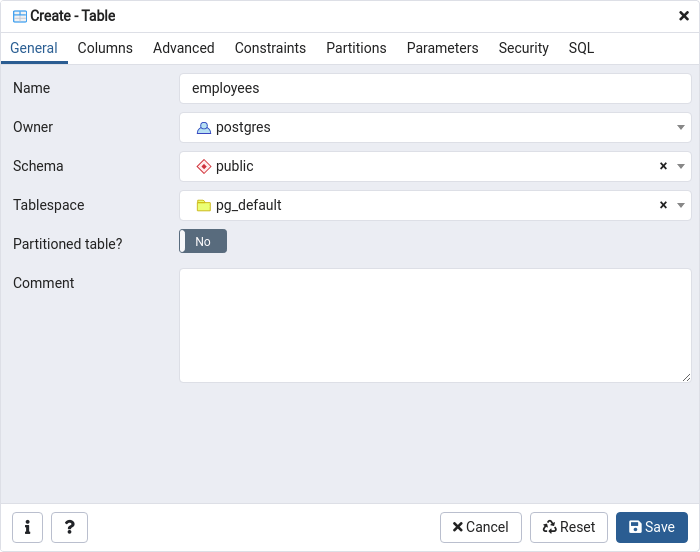

Step 3: Create the target table

sql-- Example: student records CSV

CREATE TABLE student_info (

student_id SERIAL PRIMARY KEY,

first_name VARCHAR(50) NOT NULL,

last_name VARCHAR(50) NOT NULL,

dob DATE,

city VARCHAR(50),

created_at TIMESTAMP DEFAULT NOW()

);

If your CSV has an ID column you want to import directly (not auto-generate), use INTEGER instead of SERIAL and omit the DEFAULT.

Method 1: Automated CSV to PostgreSQL with Estuary

Best for: CSVs hosted at a URL, Google Sheets exports, or any CSV that updates regularly and needs continuous sync rather than a one-time import.

Estuary connects to a CSV source via HTTP endpoint or Google Sheets, infers the schema automatically, and materializes the data into PostgreSQL continuously. When the source CSV updates, PostgreSQL reflects the change without any manual re-import.

When to use Estuary over COPY

- The CSV is hosted online (S3, Google Sheets, HTTP endpoint) rather than on disk

- The file is updated regularly and PostgreSQL needs to stay in sync

- You want schema inference instead of writing

CREATE TABLEmanually - You need to avoid writing and maintaining import scripts

Step 1: Sign in to Estuary

Register or log in at dashboard.estuary.dev.

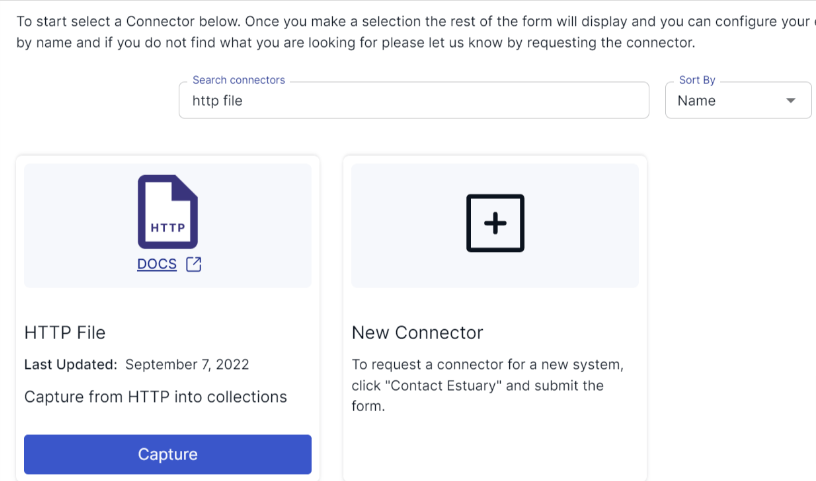

Step 2: Create a New Capture

Navigate to Sources and click + New Capture. Select:

- HTTP File connector if your CSV is hosted at a URL (including S3 pre-signed URLs)

- Google Sheets connector if the source is a Google Sheet

Note on Google Sheets sync: The Google Sheets connector polls on a configurable interval and performs a full sheet refresh on each poll rather than incremental row-level sync. For large sheets that update frequently, factor in the additional read volume this creates.

Provide the CSV URL or Sheets link. Estuary fetches a sample of the file and infers column names and types automatically. Review the inferred schema before proceeding — you can override any type in the collection spec.

Note for HTTP File: Before connecting, verify the URL supports HEAD requests and returns aLast-Modifiedheader. Test with: curl -I https://your-csv-url.com/file.csv. IfLast-Modifiedis absent from the response, the connector will not be able to detect file updates.

Step 3: Save and Publish the Capture

Click Next, review the collection spec, then click Save and Publish. Estuary begins reading the CSV immediately.

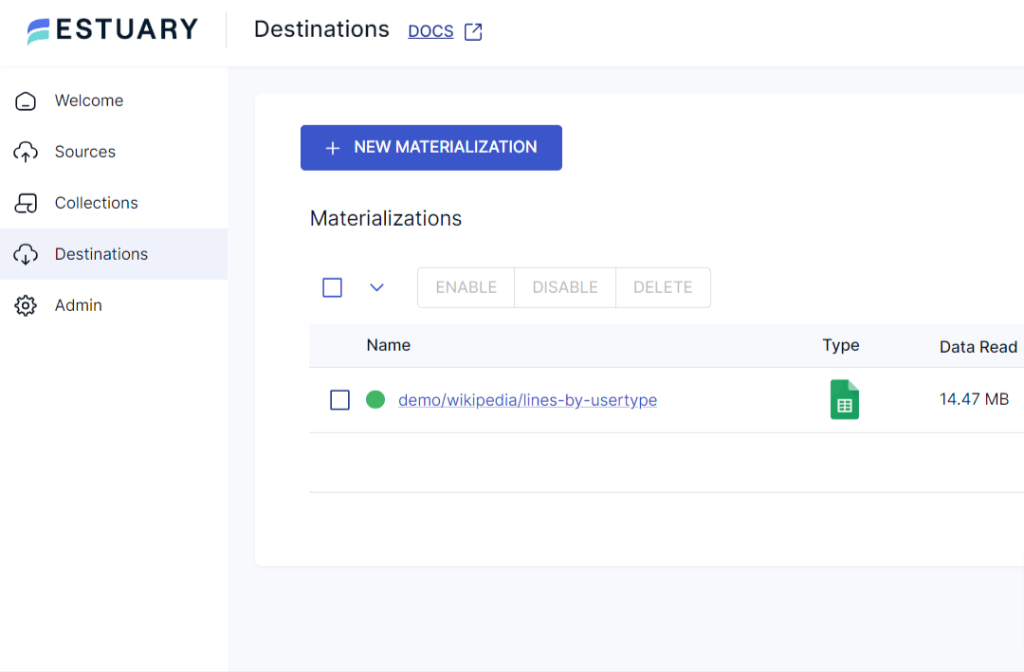

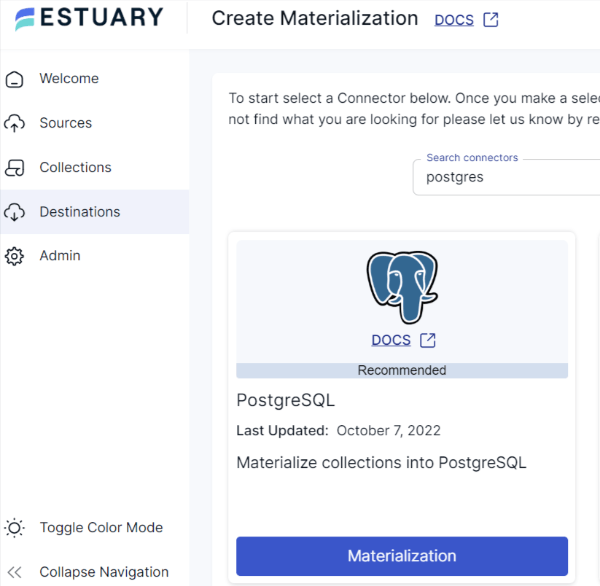

Step 4: Create a PostgreSQL Materialization

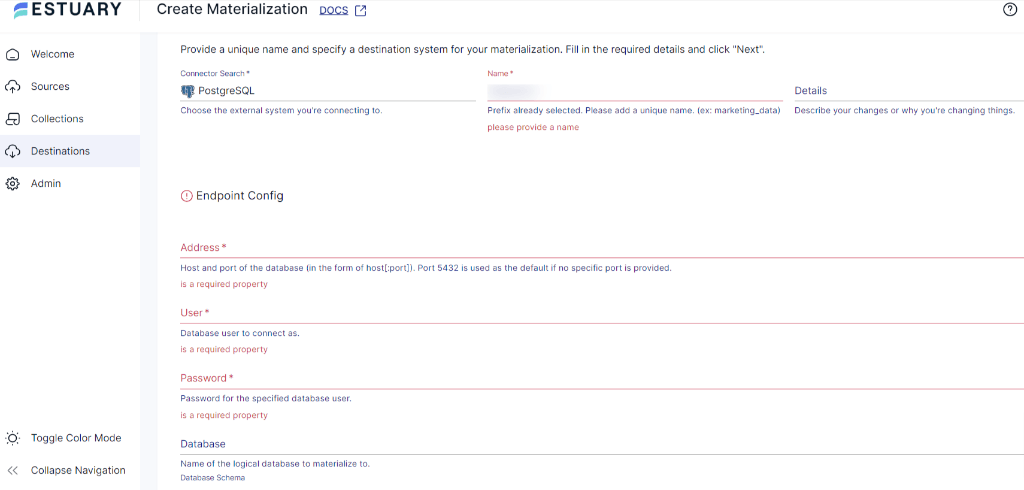

Navigate to Destinations and click + New Materialization. Select the PostgreSQL connector and fill in the endpoint config:

- Address: your PostgreSQL host and port (e.g.,

localhost:5432) - User: a PostgreSQL user with

CREATE TABLEandINSERTprivileges - Password

- Database: target database name

- Database Schema: target schema (default:

public)

Click Next. Estuary maps the captured collection to a PostgreSQL table, creating it automatically if it does not exist.

Step 5: Save and Publish

Click Save and Publish. Estuary performs the initial load, then monitors the CSV source for changes and syncs updates continuously.

What Estuary handles that manual COPY does not:

- Schema inference from CSV headers

- Automatic table creation in PostgreSQL

- Encoding normalization

- Ongoing sync when the source file changes

- Deduplication on re-imports

Method 2: Import CSV Using the PostgreSQL COPY Command

Best for: Large CSV files on the database server, one-time bulk loads, maximum import speed.

The COPY command is PostgreSQL's native bulk loader. It bypasses row-by-row INSERT overhead and loads directly into the table's heap files, making it significantly faster for large files.

Full COPY syntax reference

sqlCOPY table_name (column1, column2, column3)

FROM '/absolute/path/to/file.csv'

WITH (

FORMAT CSV,

HEADER TRUE,

DELIMITER ',',

ENCODING 'UTF8',

NULL '',

QUOTE '"',

ESCAPE '"'

);

Parameter reference:

| Parameter | Purpose | Default |

|---|---|---|

FORMAT CSV | Tells PostgreSQL this is a CSV, not binary or text | Required for CSV |

HEADER TRUE | Skips the first row (column names) | FALSE |

DELIMITER ',' | Field separator character | Comma |

ENCODING 'UTF8' | File encoding | Database default |

NULL '' | String in CSV that should be treated as NULL | Empty string |

QUOTE '"' | Character used to quote fields | Double quote |

ESCAPE '"' | Character used to escape the quote character | Same as QUOTE |

Step-by-step: importing student_info CSV

Step 1: Prepare the CSV file. Confirm encoding with file -i students.csv. If it returns iso-8859-1, either convert it first with iconv or specify ENCODING 'LATIN1' in the COPY command.

bash# Convert encoding if needed

iconv -f ISO-8859-1 -t UTF-8 students_latin.csv > students_utf8.csv

Step 2: Connect to PostgreSQL:

bashpsql -U postgres -d your_database

Step 3: Confirm the target table exists (see Prerequisites above). Then run the import:

sqlCOPY student_info (first_name, last_name, dob, city)

FROM '/var/data/students.csv'

WITH (FORMAT CSV, HEADER TRUE, ENCODING 'UTF8', NULL '');

Step 4: Verify row count:

sqlSELECT COUNT(*) FROM student_info;

Using \copy for client-side files

If the CSV is on your local machine (not the server), use \\copy in psql:

sql\\copy student_info (first_name, last_name, dob, city)

FROM '/Users/yourname/Downloads/students.csv'

WITH (FORMAT CSV, HEADER TRUE, ENCODING 'UTF8', NULL '');

\\copy uses identical syntax to COPY but reads from the client filesystem. No superuser privilege required.

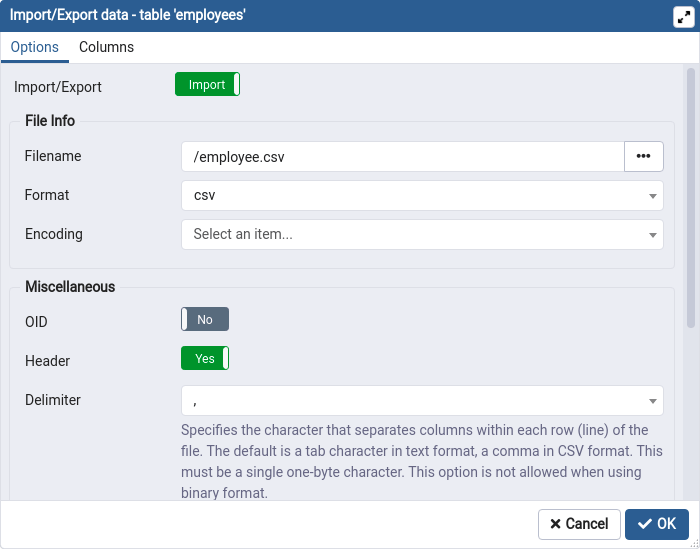

Method 3: Import CSV Using pgAdmin

Best for: One-off imports where you prefer a GUI over command line, or when you do not have direct psql access.

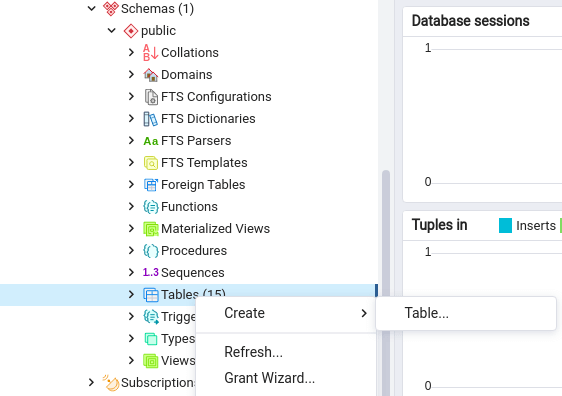

Step 1: Connect to your database in pgAdmin

Open pgAdmin, expand the server tree, and navigate to your target database and schema.

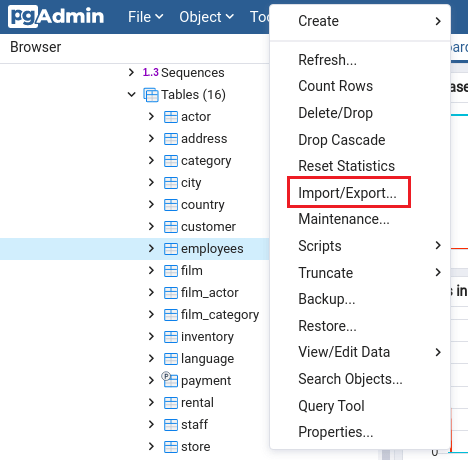

Step 2: Open the Import dialog

Right-click the target table in the Object Explorer. Select Import/Export Data. In the dialog that opens, set the toggle to Import.

Step 3: Configure the import settings

- Filename: browse to your local CSV file

- Format: CSV

- Encoding: match the file encoding (check with

file -iif unsure) - Under Options: toggle Header on if your CSV has a header row

- Set Delimiter to

,(or whatever separator your file uses) - Set NULL Strings to match how nulls appear in your file (commonly empty string or

NULL)

Step 4: Map columns (Columns tab)

Switch to the Columns tab. Verify the column mapping between CSV and table. If your CSV has columns in a different order than the table, reorder them here.

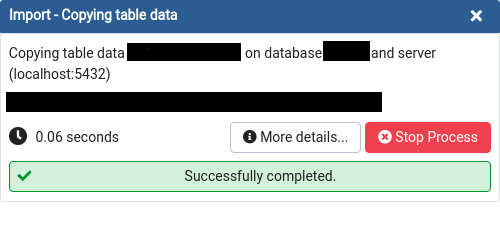

Step 5: Run the import

Click OK. pgAdmin runs \\copy behind the scenes and reports rows imported or any errors encountered.

CSV Import Validation Checklist

Run these checks in PostgreSQL immediately after any import method.

sql-- 1. Row count matches source

SELECT COUNT(*) FROM student_info;

-- 2. Check for unexpected NULLs in required columns

SELECT COUNT(*) FROM student_info WHERE first_name IS NULL OR last_name IS NULL;

-- 3. Check date range sanity

SELECT MIN(dob), MAX(dob) FROM student_info;

-- 4. Check for duplicate primary keys (if importing IDs)

SELECT student_id, COUNT(*) FROM student_info

GROUP BY student_id HAVING COUNT(*) > 1;

-- 5. Spot-check 5 random rows

SELECT * FROM student_info ORDER BY RANDOM() LIMIT 5;

Troubleshooting Common CSV Import Errors

These are the actual errors you will encounter and their exact fixes.

| Error | Root Cause | Fix |

|---|---|---|

ERROR: extra data after last expected column | CSV has more columns than the table, or wrong delimiter | Check delimiter; specify column list in COPY; verify header row count matches table |

ERROR: invalid input syntax for type date | Date format in CSV does not match PostgreSQL's expected format | Set datestyle before import: SET datestyle = 'ISO, MDY'; or use TO_DATE() in a staging table |

ERROR: invalid byte sequence for encoding "UTF8" | File contains non-UTF8 characters (often ISO-8859-1) | Run iconv -f ISO-8859-1 -t UTF-8 input.csv > output.csv then retry |

ERROR: could not open file: No such file or directory | Using server-side COPY with a local file path | Switch to \\copy (client-side) or move the file to the server |

ERROR: permission denied | Current user is not a superuser (required for server-side COPY) | Use \\copy instead, which does not require superuser |

| Empty strings imported as empty strings, not NULL | Default COPY behavior: empty fields become empty strings | Add NULL '' parameter to COPY command |

ERROR: value too long for type character varying(n) | A CSV field exceeds the column's VARCHAR length limit | Increase column size: ALTER TABLE t ALTER COLUMN c TYPE VARCHAR(200); |

ERROR: duplicate key value violates unique constraint | CSV contains duplicate values for a PRIMARY KEY or UNIQUE column | De-duplicate source: sort -u file.csv > dedup.csv or load into staging table first |

| Numbers importing as scientific notation | FLOAT type rounding on very large or very small numbers | Use NUMERIC instead of FLOAT for the column type |

| Header row imported as data | HEADER parameter not set | Add HEADER TRUE to COPY parameters |

Handling mixed encodings in a single file

This is the most common real-world scenario: a CSV exported from Excel on Windows that contains accented characters, em dashes, or currency symbols mixed with standard ASCII.

bash# Detect encoding

file -i problematic.csv

# or

chardet problematic.csv # requires Python chardet library

# Convert to UTF-8

iconv -f WINDOWS-1252 -t UTF-8 problematic.csv > clean.csv

# Verify conversion succeeded (should return no errors)

iconv -f UTF-8 -t UTF-8 clean.csv > /dev/null

Handling non-standard date formats

If your CSV contains dates in MM/DD/YYYY format rather than ISO YYYY-MM-DD:

sql-- Option 1: Set datestyle for the session before running COPY

SET datestyle = 'ISO, MDY';

COPY student_info FROM '/path/students.csv' WITH (FORMAT CSV, HEADER TRUE);

-- Option 2: Import as TEXT into a staging table, then cast

CREATE TEMP TABLE staging_import (

first_name TEXT, last_name TEXT, dob TEXT, city TEXT

);

COPY staging_import FROM '/path/students.csv' WITH (FORMAT CSV, HEADER TRUE);

INSERT INTO student_info (first_name, last_name, dob, city)

SELECT first_name, last_name, TO_DATE(dob, 'MM/DD/YYYY'), city

FROM staging_import;

Comparison: All Three Methods

| Capability | Estuary | COPY Command | pgAdmin |

|---|---|---|---|

| Schema inference | Yes (automatic) | No (manual DDL required) | No (manual DDL required) |

| Continuous sync | Yes | No (one-time) | No (one-time) |

| File location | URL or Google Sheets | Server filesystem | Local machine |

| Encoding handling | Automatic | Manual (specify in params) | Manual (specify in dialog) |

| Superuser required | No | Yes (for server-side COPY) | No |

| Speed for large files | High (streaming) | Very high (bulk load) | Moderate |

| Error visibility | Dashboard logs | psql terminal output | pgAdmin output panel |

| Best for | Recurring, hosted CSVs | Large one-time bulk loads | GUI-based one-off imports |

Conclusion

For a one-time import of a well-formed CSV, the COPY command is the fastest and most reliable path. For files on your local machine, \\copy removes the superuser requirement with no other tradeoffs. For CSVs that update regularly or are hosted at a URL, Estuary eliminates the manual re-import cycle entirely.

The troubleshooting table above covers 95% of real-world CSV import failures. Encoding issues and date format mismatches are responsible for the majority of errors — validate both before running any import.

FAQs

How do I import a CSV with a semicolon delimiter instead of a comma?

Can PostgreSQL import a CSV without a header row?

How do I handle a CSV where some rows have more columns than others?

What is the fastest way to import a very large CSV (10GB+) into PostgreSQL?

About the author

Jeffrey is a data engineering professional with over 15 years of experience, helping early-stage data companies scale by combining technical expertise with growth-focused strategies. His writing shares practical insights on data systems and efficient scaling.