PostgreSQL to Apache Iceberg integration lets teams move operational database changes into open lakehouse tables for analytics, AI, and long-term historical analysis. The main decision is whether you need a one-time batch export or a continuously updated Iceberg table that reflects PostgreSQL inserts, updates, and deletes.

Batch workflows using COPY, CSV files, and Spark can work for one-time loads, proofs of concept, or low-frequency exports. pg_dump can be useful for backups or SQL-format exports, but COPY or psql \copy is clearer for CSV-based loading into Iceberg. These batch workflows require export jobs, file handling, Spark configuration, catalog setup, and repeated validation, and they do not automatically capture ongoing PostgreSQL changes.

For production lakehouse workloads, a CDC-based pipeline is usually the stronger option. Estuary can capture PostgreSQL changes using logical replication, backfill historical rows, and materialize the resulting collections into Apache Iceberg tables through an Iceberg REST catalog. This guide compares both approaches and explains the architecture, setup requirements, and production considerations for moving PostgreSQL data into Apache Iceberg.

Key Takeaways

Moving PostgreSQL data to Apache Iceberg helps teams scale analytics and lakehouse workloads without putting extra query load on the transactional database.

Estuary is a strong fit for continuous PostgreSQL to Iceberg pipelines because it can capture PostgreSQL changes with CDC and materialize them into Iceberg tables.

Manual COPY/CSV plus Spark workflows can work for one-time or periodic batch transfers, but they do not automatically capture ongoing PostgreSQL inserts, updates, and deletes.

For production use, plan logical replication, WAL retention, Iceberg catalog permissions, EMR Serverless compute, S3 staging, table keys, partitioning, and validation.

PostgreSQL to Iceberg Architecture: What Actually Has to Work?

Moving PostgreSQL data into Apache Iceberg requires more than exporting rows. A production pipeline has to coordinate the source database, change capture, lakehouse table format, catalog, compute engine, and storage layer.

| Layer | What matters |
|---|---|
| PostgreSQL source | Logical replication, WAL retention, publication, replication slot, table keys |
| Capture layer | Initial backfill, inserts, updates, deletes, schema changes, replay behavior |
| Estuary collections | Durable captured data that can be materialized into Iceberg |
| Iceberg catalog | AWS Glue REST, Amazon S3 Tables REST, Snowflake Open Catalog, or another REST catalog |
| Compute | EMR Serverless Spark jobs that merge changes into Iceberg tables |
| Storage | S3 staging bucket and final Iceberg table storage |
| Table design | Keys, partitioning, delete behavior, compaction, snapshot cleanup |
| Consumers | Spark, Trino, Flink, Athena, Snowflake, or other Iceberg-compatible engines |

Why Batch Exports Are Not Enough for Fresh Iceberg Tables

Batch exports can work when you only need a point-in-time snapshot, but they are a poor fit when Iceberg needs to reflect PostgreSQL changes continuously.

Common problems include:

- Missed intermediate updates: If a row changes multiple times between batch runs, only the latest exported state may appear in Iceberg.

- Delete handling: Hard deletes are difficult to capture with timestamp-based exports or basic CSV dumps.

- Clock and timestamp issues: Incremental exports based on `updated_at` can miss or duplicate records when timestamps are delayed, overwritten, or inconsistent.

- Schema drift: Added, renamed, or removed columns can break export jobs or Spark loading logic.

- Operational overhead: You must schedule exports, move files, run Spark jobs, validate loads, retry failures, and clean up old files.

- Lakehouse maintenance: Repeated batch loads can create small files and require compaction and snapshot cleanup.
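
The first two problems in this list can be made concrete with a small Python sketch. It is a toy in-memory model, not PostgreSQL or Iceberg code: the `changes` list and the helper names `apply_changes` and `snapshot_diff` are assumptions made up for illustration.

```python
# Toy model: compare what a change log records with what two batch
# snapshots can reveal. All names here are illustrative assumptions.

def apply_changes(table, changes):
    """Apply (op, key, value) events to a copy of a dict-based table."""
    result = dict(table)
    for op, key, value in changes:
        if op == "delete":
            result.pop(key, None)
        else:  # "insert" or "update"
            result[key] = value
    return result


def snapshot_diff(before, after):
    """Return what a batch job can infer from two full snapshots alone."""
    upserts = {k: v for k, v in after.items() if before.get(k) != v}
    missing = sorted(k for k in before if k not in after)
    return upserts, missing


before = {1: "pending", 2: "active"}
changes = [
    ("update", 1, "paid"),
    ("update", 1, "refunded"),  # the intermediate "paid" state is overwritten
    ("delete", 2, None),        # hard delete
    ("insert", 3, "pending"),
]
after = apply_changes(before, changes)
upserts, missing = snapshot_diff(before, after)

print(len(changes), "events in the change log")
print("rows a snapshot comparison would upsert:", upserts)
print("keys missing from the new snapshot:", missing)
```

The snapshot comparison never sees the `paid` state, and it only notices the deleted key because both snapshots are complete. A timestamp-filtered export would not contain the deleted row at all, so the delete would go unnoticed.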

PostgreSQL to Apache Iceberg Methods Compared

| Method | Best for | Handles ongoing changes? | Latency | Setup effort | Operational burden |
|---|---|---|---|---|---|
| Estuary CDC pipeline | Production lakehouse ingestion, fresh analytics, AI workflows | Yes | Real-time or low-latency | Low to medium | Lower |
| COPY/CSV + Spark | One-time exports or periodic batch loads | No, unless scripted | Batch | Medium | Medium to high |
| Debezium + Kafka + Flink | Custom CDC-to-Iceberg architectures | Yes | Real-time or low-latency | High | High |
| Custom scripts | Highly customized export and merge workflows | Only if built manually | Depends on implementation | High | High |

If Iceberg tables need to stay current as PostgreSQL changes, use a CDC-based pipeline. If you only need a one-time export, COPY/CSV and Spark may be enough.

PostgreSQL to Apache Iceberg: 2 Methods Compared

- Method 1: Using Estuary to Load Data from Postgres to Iceberg

- Method 2: Batch Export from PostgreSQL to Iceberg with COPY, CSV, and Spark


Method 1: Using Estuary to Load Data from Postgres to Iceberg

Estuary is a managed data pipeline platform that can capture PostgreSQL changes using CDC, backfill historical rows, and materialize the resulting collections into Apache Iceberg tables through an Iceberg REST catalog.

This method is a strong fit when Iceberg tables need to stay current as PostgreSQL changes. Compared with batch exports, a CDC pipeline reduces the need for repeated CSV dumps, Spark batch jobs, manual merge logic, and reconciliation.

PostgreSQL CDC Requirements for Estuary

Before creating the PostgreSQL capture, confirm:

- PostgreSQL is version 10.0 or later.

- Logical replication is enabled with `wal_level=logical`.

- The capture user has the `REPLICATION` attribute.

- A publication exists for the tables you want to capture.

- A replication slot exists or can be created by the connector.

- Each independent capture has its own replication slot.

- Estuary can reach the database over the network, optionally through SSH tunneling.

- WAL retention is monitored so replication slots do not cause unbounded WAL growth.
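
As a rough sketch of the database-side setup behind this checklist, the Python helper below assembles the SQL a DBA might run on a self-managed PostgreSQL instance. The function name `cdc_setup_statements` and the role and publication names are placeholders chosen for illustration, not names required by Estuary. Managed services often expose these settings through provider-specific parameters instead, so treat the statements as a starting point.

```python
def cdc_setup_statements(user, publication, tables):
    """Return SQL statements for a basic logical replication setup.

    Identifiers are interpolated as-is, so pass only trusted values.
    """
    if not tables:
        raise ValueError("list at least one table to publish")
    table_list = ", ".join(tables)
    return [
        # Changing wal_level requires a PostgreSQL restart to take effect.
        "ALTER SYSTEM SET wal_level = logical;",
        # Give the capture role the REPLICATION attribute.
        f"ALTER ROLE {user} WITH REPLICATION;",
        # Publish only the tables that should be captured.
        f"CREATE PUBLICATION {publication} FOR TABLE {table_list};",
    ]


statements = cdc_setup_statements(
    "cdc_user",                              # placeholder role name
    "cdc_publication",                       # placeholder publication name
    ["public.customers", "public.orders"],   # placeholder tables
)
for statement in statements:
    print(statement)
```

Listing tables explicitly, rather than publishing every table, keeps the publication aligned with what the capture actually needs.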

Estuary also offers a PostgreSQL Batch connector for managed PostgreSQL instances that do not support logical replication. That point matters because not every Postgres environment allows CDC.

PostgreSQL WAL Retention and Replication Slot Risks

PostgreSQL CDC depends on logical replication slots. A replication slot tells PostgreSQL which WAL changes still need to be retained for the capture process. If the slot does not advance, WAL files can accumulate and create storage pressure.

Before production use:

- Monitor replication slot lag and WAL retention.

- Avoid capturing unused or idle tables without a heartbeat strategy.

- Drop replication slots when captures are disabled or deleted.

- Set appropriate WAL retention safeguards for your PostgreSQL environment.

- Use read-only capture carefully if the captured publication may remain idle.
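
One way to monitor slot lag is to compare each slot's `restart_lsn` with the server's current WAL position. The sketch below shows the arithmetic in Python. The query reads the standard `pg_replication_slots` view; the helper names and the 1 GiB warning threshold are assumptions for illustration, and the right threshold depends on your disk headroom.

```python
# Query to run on the primary; both columns come back as text LSNs.
SLOT_QUERY = """
SELECT slot_name, active, restart_lsn, pg_current_wal_lsn() AS current_lsn
FROM pg_replication_slots;
"""


def parse_lsn(lsn):
    """Convert a PostgreSQL LSN such as '0/16B3748' to a byte position."""
    high, low = lsn.split("/")
    return (int(high, 16) << 32) | int(low, 16)


def retained_wal_bytes(current_lsn, restart_lsn):
    """Bytes of WAL the server must keep for a slot."""
    return parse_lsn(current_lsn) - parse_lsn(restart_lsn)


def slot_status(current_lsn, restart_lsn, warn_bytes=1024 ** 3):
    """Return 'warn' when retained WAL reaches the threshold, else 'ok'."""
    retained = retained_wal_bytes(current_lsn, restart_lsn)
    return "warn" if retained >= warn_bytes else "ok"


# Example values as they might appear in the query result (made up).
print(retained_wal_bytes("0/2000000", "0/1000000"))
print(slot_status("0/2000000", "0/1000000"))
print(slot_status("2/0", "0/1000000"))
```

A slot whose retained bytes keep growing while `active` is false usually points to a disabled or deleted capture whose slot was never dropped.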

Steps

Complete the prerequisites below, then follow the steps to move data from Postgres to Iceberg.

Prerequisites

Before starting, make sure you have an Estuary account, PostgreSQL 10 or later with logical replication enabled, network access from Estuary to PostgreSQL, an Iceberg REST catalog, AWS EMR Serverless compute, an S3 staging bucket, and the required IAM roles for catalog and compute access.

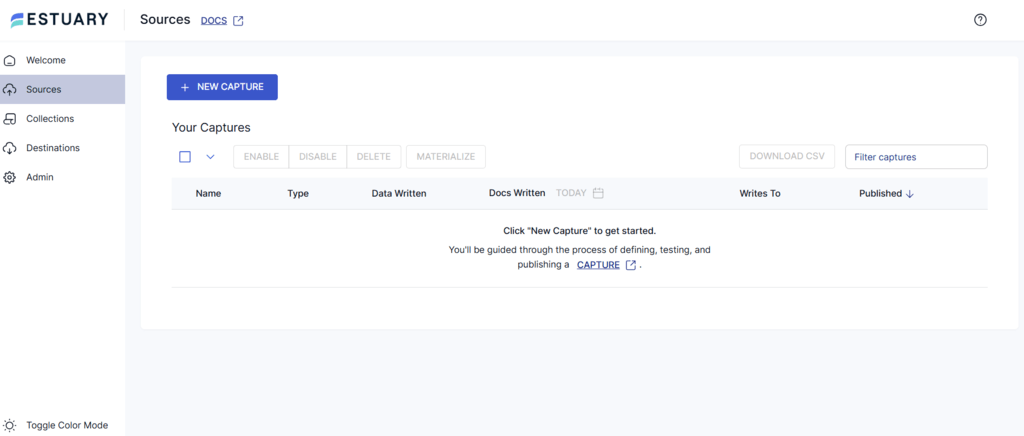

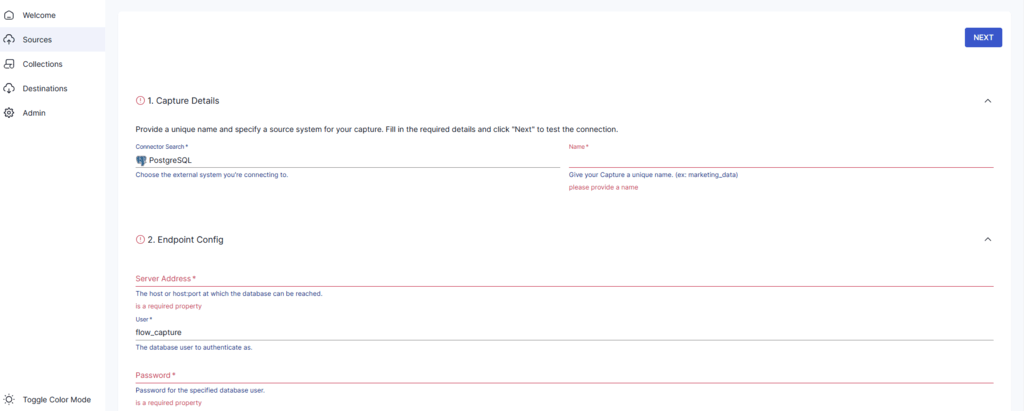

Step 1: Configure Postgres as Source

- Sign in to your Estuary account.

- From the left-side menu of the dashboard, click the Sources tab. You will be redirected to the Sources page.

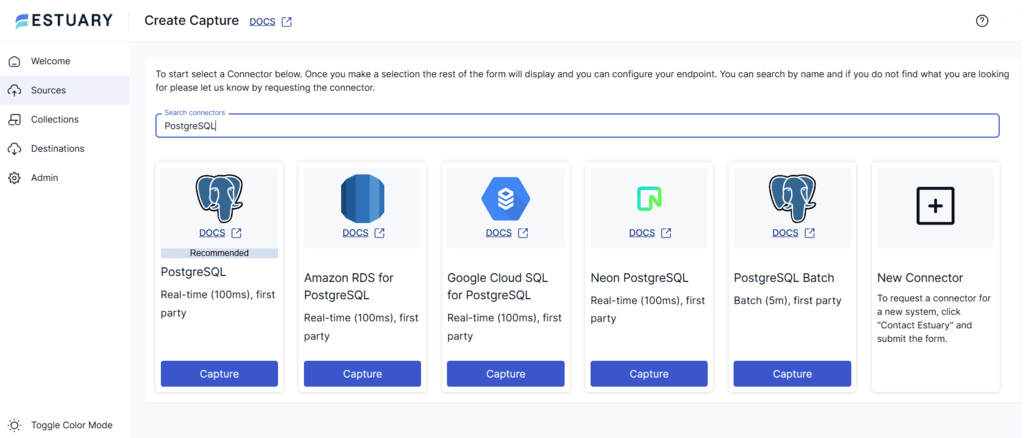

- Click the + NEW CAPTURE button and type PostgreSQL in the Search connectors field.

- You will see several options for PostgreSQL, including real-time and batch. Select the one that fits your requirements and click on the Capture button of the connector.

For this tutorial, let’s select the PostgreSQL real-time connector.

- On the connector’s configuration page, enter the essential fields, including:

  - Address host or host:port

  - Database name

  - User and password

  - SSL mode if your provider requires it

- After entering these details, click on NEXT > SAVE AND PUBLISH.

The PostgreSQL real-time connector captures ongoing inserts, updates, and deletes into Estuary collections. If logical replication is not available, Estuary’s PostgreSQL Batch connector can be used for periodic capture instead.

Recommended setup details

- Use read-only capture only when the connector cannot create a watermarks table.

- Use a heartbeat table if captured tables may remain idle.

- Set `max_slot_wal_keep_size` to reduce the risk of unbounded WAL growth.

- Include only the tables you intend to capture in the publication.

- Consider `REPLICA IDENTITY FULL` only when needed, because it can increase database overhead.

Apache Iceberg Materialization Requirements

Before creating the Iceberg materialization, make sure you have:

- An Iceberg catalog that implements the Apache Iceberg REST Catalog API.

- A supported REST catalog, such as AWS Glue Iceberg REST, Amazon S3 Tables REST, Snowflake Open Catalog, or another REST-compatible catalog.

- AWS EMR Serverless with Spark runtime.

- An S3 bucket for staging data files before they are merged into Iceberg tables.

- A dedicated IAM execution role for EMR Serverless jobs.

- An AWS IAM user or role that can submit jobs to EMR Serverless.

- The right catalog authentication method, such as AWS SigV4, AWS IAM, or OAuth 2.0 Client Credentials, depending on the catalog.

Estuary submits jobs to EMR Serverless to merge staged data into Iceberg tables. These jobs read staged files from S3 and connect to the Iceberg catalog using the credentials configured for the materialization. For OAuth-based catalogs, credentials can be stored in AWS Systems Manager Parameter Store for use by EMR jobs.

Which Iceberg Catalog Should You Use?

| Catalog option | Best for | Authentication notes |
|---|---|---|
| AWS Glue Iceberg REST | AWS-native lakehouse teams already using Glue and S3 | AWS IAM or SigV4-style authentication |
| Amazon S3 Tables REST | Teams standardizing on Amazon S3 Tables | AWS IAM/SigV4-style authentication |
| Snowflake Open Catalog | Teams using Snowflake’s Open Catalog/Polaris ecosystem | OAuth 2.0 Client Credentials |
| Other REST catalog | Teams using a vendor-managed or custom Iceberg catalog | Depends on provider |

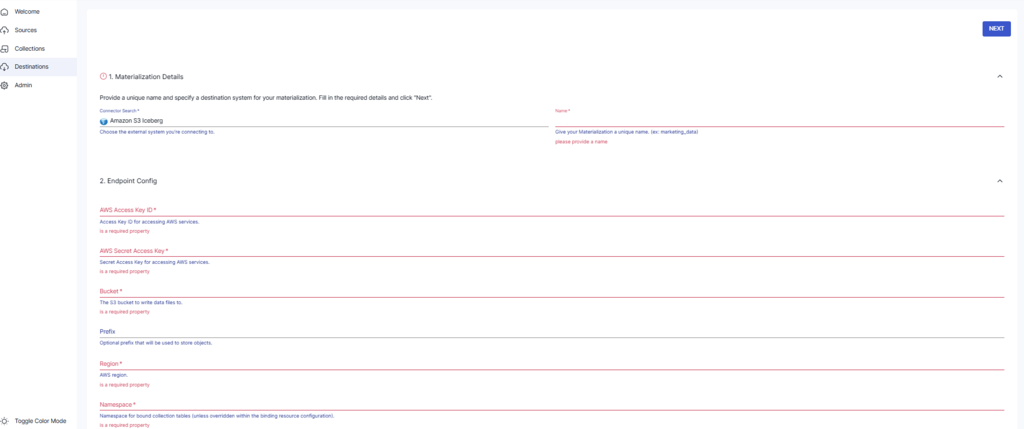

Step 2: Configure Iceberg as Destination

- Choose your catalog type and gather values

  - AWS Glue Iceberg REST

    - URL: `https://glue.<region>.amazonaws.com/iceberg`

    - Warehouse is your AWS Account ID

    - Base Location is required and must be an S3 path

    - Auth options include AWS SigV4 or AWS IAM

  - Amazon S3 Tables REST

    - URL: `https://s3tables.<region>.amazonaws.com/iceberg`

    - Warehouse is the S3 Tables bucket ARN

    - Auth options include AWS SigV4 or AWS IAM

  - Other REST catalogs, for example Snowflake Open Catalog

    - Use OAuth 2.0 Client Credentials with a scope such as `PRINCIPAL_ROLE:<role>`

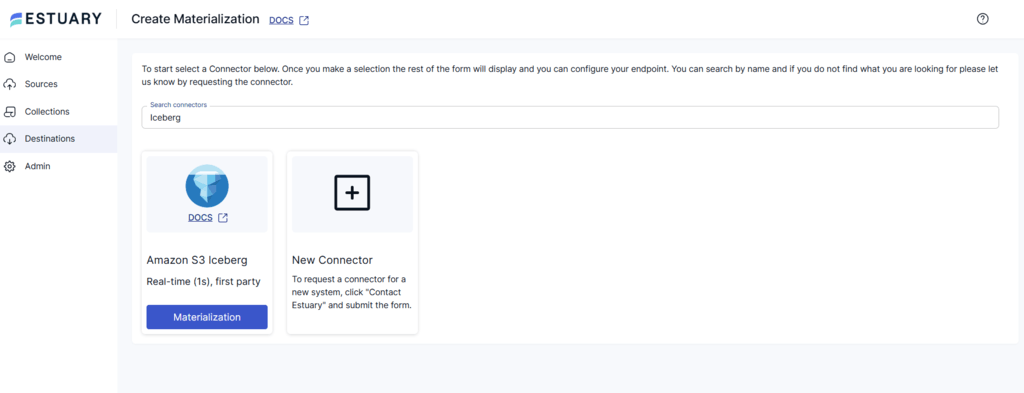

- In Estuary, go to Destinations and create a new materialization using the Apache Iceberg connector

- Set URL, Warehouse, Namespace, and Base Location if your catalog requires it

- Choose Catalog Authentication

  - OAuth 2.0 Client Credentials

  - AWS SigV4

  - AWS IAM

- Configure Compute

  - EMR region

  - EMR Serverless application ID

  - EMR execution role ARN

  - S3 staging bucket and optional bucket path

  - Optional Systems Manager Prefix for securely storing OAuth credentials used by EMR jobs

- The collections from your Postgres capture are linked to the materialization automatically. If they have not been linked, you can select a capture manually.

To do this, click the SOURCE FROM CAPTURE button in the Source Collections section. Then, select your Postgres data collection.

- Finally, click NEXT and SAVE AND PUBLISH to complete the configuration process.

In practice, Estuary stages changes and uses EMR Serverless jobs to merge them into Iceberg tables through the configured catalog.

Advanced Iceberg Materialization Options

- Hard Delete applies source deletes as physical deletes in the Iceberg table. It is off by default, so deletes are handled as soft deletes unless you enable it.

- Lowercase Column Names makes all columns lowercase to improve compatibility with engines such as Athena.

- Sync Schedule lets you control how often materialization jobs run based on your freshness and cost requirements.

Iceberg Table Design for PostgreSQL CDC

When PostgreSQL changes are materialized into Iceberg, table design affects both correctness and query performance.

Plan these before production:

- Primary keys: Use stable keys from PostgreSQL so updates and deletes can be applied consistently.

- Partitioning: Partition Iceberg tables based on query filters, not simply PostgreSQL primary keys.

- Deletes: Decide whether downstream users need physical deletes, soft-delete metadata, or an audit trail.

- Compaction: CDC workloads can create many small files and delete files; plan compaction.

- Snapshot retention: Iceberg snapshots support time travel, but old snapshots should be expired according to retention needs.

- Schema evolution: Make sure downstream engines and jobs can handle added or changed columns.

- Query engines: Test the Iceberg tables with the engines that will actually read them: Spark, Trino, Athena, Snowflake, or others.


Permissions checklist

Glue catalog

- Catalog user or role needs Glue permissions to create and modify databases and tables

- Access to the table bucket that stores Iceberg data and metadata

- If Lake Formation is enabled, grant Data Location, Create Database, and table level permissions to both the catalog user and the EMR execution role

S3 Tables catalog

- s3tables permissions for the target bucket for both the catalog user or role and the EMR execution role

EMR Serverless

- EMR execution role policy for reading credentials from Parameter Store when OAuth is used

- Read and write access to the S3 staging bucket

- Application start and job run permissions for the IAM principal configured in Estuary

What to Monitor in a PostgreSQL to Iceberg Pipeline

Track:

- PostgreSQL replication slot lag.

- WAL growth and disk usage.

- Capture errors and restart behavior.

- Backfill progress.

- Iceberg materialization job status.

- EMR Serverless job duration and failures.

- S3 staging bucket usage.

- Iceberg small-file growth.

- Snapshot count and metadata growth.

- Query performance in downstream engines.

Estuary vs Debezium/Kafka/Flink for PostgreSQL to Iceberg

| Approach | Strengths | Tradeoffs |
|---|---|---|
| Estuary | Managed PostgreSQL CDC, backfills, collections, Iceberg materialization, fewer components to operate | Less customizable than building every layer yourself |
| Debezium + Kafka + Flink | Highly flexible CDC architecture with strong streaming ecosystem | Requires operating Kafka, connectors, Flink jobs, schema management, and Iceberg sink behavior |
| Spark batch MERGE | Good for scheduled materialization and transformations | Higher latency and more orchestration work |
| pg_dump / COPY + Spark | Simple for one-time loads | Not continuous; manual validation and scheduling required |

Method 2: Batch Export from PostgreSQL to Iceberg with COPY, CSV, and Spark

The manual batch method uses PostgreSQL COPY or psql \copy to export table data into CSV files, then uses Apache Spark with the Iceberg runtime to write those files into Apache Iceberg tables.

This method is useful when you need a one-time backfill, a small proof of concept, or a periodic batch export from PostgreSQL into an Iceberg lakehouse. However, it is not a continuous replication method. It does not automatically capture PostgreSQL inserts, updates, and deletes after the export is complete.

Use this method when:

- You only need a point-in-time snapshot of PostgreSQL data.

- The PostgreSQL tables can be exported safely within your maintenance window or resource limits.

- You can tolerate batch freshness instead of real-time updates.

- You already use Spark for lakehouse processing.

- You are comfortable managing Iceberg catalog setup, Spark jobs, file paths, retries, and validation manually.

For production pipelines where Iceberg tables need to stay current as PostgreSQL changes, use a CDC-based method instead.

Step 1: Choose the PostgreSQL Tables to Export

Before exporting data, identify which PostgreSQL tables should be moved into Iceberg.

For each table, confirm:

- The table has a stable primary key or unique identifier.

- The table size is safe to export without affecting production workload.

- The table schema maps cleanly into Spark and Iceberg data types.

- Timestamp, numeric, JSON, array, and nullable fields are handled correctly.

- You know whether the Iceberg table should be partitioned by date, tenant, region, event type, or another query filter.

Avoid exporting every PostgreSQL table by default. Start with the tables that support a clear analytics, AI, or lakehouse use case.

Step 2: Export PostgreSQL Data to CSV

For CSV-based loading into Spark and Iceberg, use PostgreSQL COPY or psql \copy.

Use server-side COPY when the PostgreSQL server can write to the target file path:

```sql
-- Server-side export: the path is on the PostgreSQL server's filesystem.
COPY your_table
TO '/path/to/your_table.csv'
WITH CSV HEADER;
```

Use client-side \copy when you want to export the file from your local machine or another client environment:

```bash
psql -d your_database -c "\copy your_table TO 'your_table.csv' WITH CSV HEADER"
```

For a filtered export, use a query:

```bash
psql -d your_database -c "\copy (SELECT * FROM your_table WHERE updated_at >= '2026-01-01') TO 'your_table.csv' WITH CSV HEADER"
```

This is useful when you want to export a specific date range or a smaller subset of a large table.

Do not use pg_dump --column-inserts if your goal is to create CSV files for Spark. That option makes pg_dump emit SQL INSERT statements, not CSV files. pg_dump is useful for backups and SQL-format exports, but COPY or \copy is clearer for CSV-based PostgreSQL to Iceberg workflows.

Step 3: Upload the CSV File to Object Storage

Spark usually reads source files from a distributed storage location such as Amazon S3, Azure Data Lake Storage, Google Cloud Storage, or HDFS.

For example, if you are using Amazon S3, upload the exported CSV file:

```bash
aws s3 cp your_table.csv s3://your-bucket/postgres_exports/your_table.csv
```

Use a predictable path structure so batch jobs are easier to manage:

```plaintext
s3://your-bucket/postgres_exports/table_name/export_date=2026-04-29/your_table.csv
```

This helps with troubleshooting, reprocessing, and separating exports by table or date.
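
A small helper can keep that layout consistent across jobs. This is a minimal sketch: the function name `export_path` and the default `postgres_exports` prefix are assumptions that mirror the example path above.

```python
from datetime import date


def export_path(bucket, table, export_date, prefix="postgres_exports"):
    """Build the object path for one table export on one date."""
    partition = f"export_date={export_date.isoformat()}"
    return f"s3://{bucket}/{prefix}/{table}/{partition}/{table}.csv"


path = export_path("your-bucket", "customers", date(2026, 4, 29))
print(path)
```

Deriving the path from the table name and export date in one place means the export job, the upload step, and the Spark reader all agree on where a given day's file lives.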

Step 4: Start Spark with the Iceberg Runtime

To write data into Apache Iceberg, start Spark with the Iceberg Spark runtime package.

Example for Spark 3.5 with Scala 2.12:

```bash
# Start spark-sql with the Iceberg runtime and a catalog named "lakehouse".
spark-sql \
  --packages org.apache.iceberg:iceberg-spark-runtime-3.5_2.12:1.7.0 \
  --conf spark.sql.extensions=org.apache.iceberg.spark.extensions.IcebergSparkSessionExtensions \
  --conf spark.sql.catalog.lakehouse=org.apache.iceberg.spark.SparkCatalog \
  --conf spark.sql.catalog.lakehouse.type=hadoop \
  --conf spark.sql.catalog.lakehouse.warehouse=s3://your-bucket/warehouse
```

This example uses a Hadoop-style catalog for simplicity. In production, many teams use a REST catalog, AWS Glue, Amazon S3 Tables, Snowflake Open Catalog, or another catalog that matches their lakehouse architecture.

If your production Iceberg setup uses a REST catalog, configure Spark with the catalog URI and authentication settings required by your environment.
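
As a hedged sketch of what that looks like, the helper below builds the Spark settings for a REST catalog using Iceberg's `type`, `uri`, and `warehouse` catalog properties. The function names and the example URI are placeholders, and authentication settings are deliberately left out because they differ by catalog provider.

```python
def rest_catalog_conf(catalog, uri, warehouse):
    """Return Spark settings for an Iceberg REST catalog (no auth settings)."""
    prefix = f"spark.sql.catalog.{catalog}"
    return {
        "spark.sql.extensions":
            "org.apache.iceberg.spark.extensions.IcebergSparkSessionExtensions",
        prefix: "org.apache.iceberg.spark.SparkCatalog",
        f"{prefix}.type": "rest",
        f"{prefix}.uri": uri,
        f"{prefix}.warehouse": warehouse,
    }


def to_cli_args(conf):
    """Flatten the settings into --conf arguments for spark-sql or spark-submit."""
    args = []
    for key, value in conf.items():
        args += ["--conf", f"{key}={value}"]
    return args


conf = rest_catalog_conf(
    "lakehouse",
    "https://catalog.example.com/iceberg",  # placeholder URI
    "your_warehouse",                       # placeholder warehouse identifier
)
print(" ".join(to_cli_args(conf)))
```

The same dictionary can be passed to `SparkSession.builder.config(key, value)` in a PySpark job, which keeps interactive and scheduled runs on identical catalog settings.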

Step 5: Create an Iceberg Namespace and Table

Create a namespace for the PostgreSQL data:

```sql
CREATE NAMESPACE IF NOT EXISTS lakehouse.postgres;
```

Then create an Iceberg table.

Example:

```sql
-- Columns mirror the PostgreSQL customers table.
CREATE TABLE IF NOT EXISTS lakehouse.postgres.customers (
  customer_id BIGINT,
  email STRING,
  first_name STRING,
  last_name STRING,
  status STRING,
  created_at TIMESTAMP,
  updated_at TIMESTAMP
)
USING iceberg
PARTITIONED BY (days(created_at));
```

The exact table creation syntax can vary by Spark and Iceberg catalog configuration. Use the syntax required by your catalog and Spark runtime.

Choose partitions based on how the data will be queried. Do not automatically partition by PostgreSQL primary key. For analytics tables, date-based partitions are often more useful than high-cardinality identifiers.

Good partition candidates include:

- `created_at`

- `updated_at`

- event date

- region

- tenant ID, only if query patterns justify it

- business date, such as order date or invoice date

Poor partition candidates usually include:

- email address

- UUID

- auto-incrementing ID

- high-cardinality user ID, unless carefully justified
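
A quick way to compare candidates is to count how many distinct partition values each one would produce on a sample of rows. The sketch below uses made-up sample rows and a hypothetical helper, `partition_counts`; on real data you would run the equivalent distinct counts in SQL or Spark.

```python
from datetime import datetime


def partition_counts(rows, candidates):
    """Count distinct partition values per candidate.

    rows: list of dicts; candidates: {label: function(row) -> partition value}.
    """
    return {
        label: len({extract(row) for row in rows})
        for label, extract in candidates.items()
    }


# Made-up sample rows for illustration.
rows = [
    {"customer_id": 1, "email": "a@example.com", "created_at": datetime(2026, 4, 28, 9, 0)},
    {"customer_id": 2, "email": "b@example.com", "created_at": datetime(2026, 4, 28, 17, 30)},
    {"customer_id": 3, "email": "c@example.com", "created_at": datetime(2026, 4, 29, 8, 15)},
]

counts = partition_counts(rows, {
    "days(created_at)": lambda row: row["created_at"].date(),
    "email": lambda row: row["email"],
})
print(counts)
```

A candidate whose count grows with the number of rows, as `email` does here, produces roughly one partition per row, which is the pattern to avoid.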

Step 6: Read the PostgreSQL CSV Export with Spark

Use Spark to read the CSV file from object storage.

Example in PySpark:

```python
# Assumes `spark` is an existing SparkSession.
csv_df = (
    spark.read
    .option("header", "true")
    .option("inferSchema", "true")
    .csv("s3://your-bucket/postgres_exports/customers/export_date=2026-04-29/customers.csv")
)
```

For production jobs, avoid relying only on inferSchema. Define the schema explicitly so column types do not change unexpectedly between exports.

Example:

```python
from pyspark.sql.types import StructType, StructField, LongType, StringType, TimestampType

# Explicit schema so column types stay stable across exports.
customers_schema = StructType([
    StructField("customer_id", LongType(), False),
    StructField("email", StringType(), True),
    StructField("first_name", StringType(), True),
    StructField("last_name", StringType(), True),
    StructField("status", StringType(), True),
    StructField("created_at", TimestampType(), True),
    StructField("updated_at", TimestampType(), True),
])

# Assumes `spark` is an existing SparkSession.
csv_df = (
    spark.read
    .option("header", "true")
    .schema(customers_schema)
    .csv("s3://your-bucket/postgres_exports/customers/export_date=2026-04-29/customers.csv")
)
```

Explicit schemas are safer for PostgreSQL to Iceberg workflows because PostgreSQL numeric, timestamp, boolean, JSON, and nullable fields can otherwise be inferred incorrectly.

Step 7: Write the DataFrame into an Iceberg Table

For a first-time table load, write the DataFrame into the Iceberg table:

```python
csv_df.writeTo("lakehouse.postgres.customers").append()
```

If you want to create or replace the table from the DataFrame during a proof of concept, you can use:

```python
csv_df.writeTo("lakehouse.postgres.customers").createOrReplace()
```

Use createOrReplace() carefully. It can be useful for testing, but production workflows usually need controlled append, merge, or overwrite behavior.

Step 8: Handle Repeated Batch Loads Carefully

If you run this export more than once, do not blindly append the same data again. That can create duplicates in Iceberg.

For repeated batch loads, use a staging table and then merge into the target Iceberg table.

Example:

```python
csv_df.writeTo("lakehouse.postgres.customers_staging").createOrReplace()
```

Then run an Iceberg merge:

```sql
-- Upsert staged rows into the target table by primary key.
MERGE INTO lakehouse.postgres.customers AS target
USING lakehouse.postgres.customers_staging AS source
ON target.customer_id = source.customer_id
WHEN MATCHED THEN UPDATE SET *
WHEN NOT MATCHED THEN INSERT *;
```

This helps prevent duplicate records when the same PostgreSQL rows are exported multiple times.

However, this still does not fully solve delete handling. If a row is deleted in PostgreSQL, a basic CSV export will not automatically tell Iceberg that the row should be deleted unless you build additional logic to detect missing records or export tombstone records.
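
One simple form of that additional logic is to compare primary keys: any key present in the Iceberg table but absent from a complete export was deleted in PostgreSQL. The sketch below shows the idea in plain Python. The function name `keys_to_delete` is hypothetical, and the comparison is only valid when the export contains every row, so the helper refuses to run on filtered exports.

```python
def keys_to_delete(target_keys, exported_keys, is_full_export):
    """Return keys that exist in the target but not in a full export."""
    if not is_full_export:
        # A filtered export omits rows that still exist in PostgreSQL,
        # so missing keys would be misread as deletes.
        raise ValueError("delete detection requires a full export")
    return sorted(set(target_keys) - set(exported_keys))


target_keys = [101, 102, 103, 104]   # keys currently in the Iceberg table
exported_keys = [101, 103, 104]      # keys in the latest full export
print(keys_to_delete(target_keys, exported_keys, is_full_export=True))
```

In Spark, the same comparison is typically written as an anti-join between the target and staging tables, followed by a delete or a soft-delete update on the resulting keys.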

Step 9: Validate the Iceberg Table

After loading data into Iceberg, validate the result before using it for analytics or AI workflows.

Run checks such as:

```sql
SELECT COUNT(*) FROM lakehouse.postgres.customers;
```

Compare that count with PostgreSQL:

```sql
SELECT COUNT(*) FROM customers;
```

Check sample records:

```sql
SELECT *
FROM lakehouse.postgres.customers
WHERE customer_id = 12345;
```

Also validate:

- Primary keys are preserved.

- Timestamp values are correct.

- Null values are handled as expected.

- Numeric precision is not lost.

- JSON fields are represented correctly.

- Partitioning matches query patterns.

- Downstream engines can query the table.

- Re-running the batch job does not create duplicates.

- Updates and deletes are handled according to your business rules.
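
Two of these checks, matching row counts and duplicate keys, are easy to automate once the counts and key list have been fetched. The sketch below is a minimal illustration with a hypothetical helper, `validate_load`; it assumes you have already run the count queries above and collected the target table's primary keys.

```python
from collections import Counter


def validate_load(source_count, target_count, target_keys):
    """Return a list of problems found; an empty list means both checks passed."""
    problems = []
    if source_count != target_count:
        problems.append(
            f"row count mismatch: source={source_count}, target={target_count}"
        )
    duplicates = sorted(key for key, n in Counter(target_keys).items() if n > 1)
    if duplicates:
        problems.append(f"duplicate keys: {duplicates}")
    return problems


print(validate_load(3, 3, [1, 2, 3]))
print(validate_load(3, 4, [1, 2, 2, 3]))
```

Running the check after every load, and failing the job when the list is not empty, catches accidental double appends before downstream users see them.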

Step 10: Plan Iceberg Maintenance

Repeated CSV and Spark loads can create operational work in Iceberg.

Plan for:

- Compaction to reduce small files.

- Snapshot expiration to control metadata growth.

- Partition review as query patterns change.

- Cleanup of old export files.

- Monitoring failed Spark jobs.

- Validating row counts after each load.

- Tracking schema changes in PostgreSQL before each export.

This maintenance is especially important if the batch export runs daily, hourly, or across many PostgreSQL tables.
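
Compaction and snapshot expiration can be run through Iceberg's Spark procedures `rewrite_data_files` and `expire_snapshots`. The sketch below builds the two `CALL` statements for a scheduled job. The helper name `maintenance_statements` and the seven-day retention default are assumptions; pick a retention window that matches your time-travel and audit needs.

```python
from datetime import datetime, timedelta


def maintenance_statements(catalog, table, now, keep_days=7):
    """Build Spark SQL CALL statements for compaction and snapshot expiry."""
    cutoff = (now - timedelta(days=keep_days)).strftime("%Y-%m-%d %H:%M:%S")
    return [
        # Rewrite small data files into larger ones.
        f"CALL {catalog}.system.rewrite_data_files(table => '{table}')",
        # Expire snapshots older than the retention cutoff.
        f"CALL {catalog}.system.expire_snapshots("
        f"table => '{table}', older_than => TIMESTAMP '{cutoff}')",
    ]


statements = maintenance_statements(
    "lakehouse", "postgres.customers", now=datetime(2026, 4, 29)
)
for statement in statements:
    print(statement)
```

Each statement can be passed to `spark.sql(...)` in a session configured with the Iceberg SQL extensions, as in Step 4.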

Limitations of COPY/CSV and Spark for PostgreSQL to Iceberg

The manual CSV and Spark method is useful for simple batch movement, but it has important limitations.

- It only captures a point-in-time snapshot unless you build additional scheduling and incremental logic.

- It does not automatically capture PostgreSQL inserts, updates, and deletes.

- It requires manual file exports, Spark configuration, catalog setup, and table validation.

- It can be error-prone when schemas change frequently.

- Large exports can put load on PostgreSQL and require careful scheduling.

- Spark jobs need monitoring, retries, and tuning for large datasets.

- Repeated batch loads can create small files and require Iceberg compaction and snapshot cleanup.

Use Cases for PostgreSQL to Apache Iceberg Integration

Iceberg tables suit several workloads that are hard to serve from PostgreSQL directly:

- Data Lake Architectures: A data lake stores structured and unstructured data in one central system. Iceberg adds table semantics such as schema evolution and snapshots on top of that storage, which makes the data easier to manage and query. Teams in finance, healthcare, banking, and e-commerce can then work from the same tables.

- High-Scale Data Analytics: Once data is in Iceberg tables, large enterprises, financial institutions, and government agencies can analyze petabyte-scale datasets with distributed engines instead of the transactional database.

- Fresh Analytics and AI Workflows: When PostgreSQL changes are continuously materialized into Iceberg, analytics and AI workflows can use fresher operational data without querying the transactional database directly. This is useful for customer intelligence, risk analysis, product analytics, and machine learning feature generation.

Conclusion

PostgreSQL to Apache Iceberg integration can be handled with a managed CDC pipeline or a manual batch workflow using CSV exports and Spark. The right method depends on your freshness requirements, PostgreSQL configuration, Iceberg catalog, compute environment, and how much operational work your team wants to manage.

Batch exports can work for one-time or periodic loads, but they require file handling, Spark jobs, catalog setup, and validation. They are not the same as continuously capturing PostgreSQL inserts, updates, and deletes.

Estuary is a strong fit when Iceberg tables need to stay current as PostgreSQL changes. It can capture historical rows and ongoing changes from PostgreSQL, then materialize them into Apache Iceberg tables through a REST catalog using configured compute such as EMR Serverless. Before production use, validate replication slots, WAL retention, catalog permissions, table keys, partitioning, update/delete behavior, compaction strategy, and downstream query performance.

FAQs

What is the best way to move PostgreSQL data to Apache Iceberg?

Do I need Kafka to sync PostgreSQL with Apache Iceberg?

How are PostgreSQL updates and deletes handled in Apache Iceberg?

About the author

Jeffrey is a data engineering professional with over 15 years of experience, helping early-stage data companies scale by combining technical expertise with growth-focused strategies. His writing shares practical insights on data systems and efficient scaling.