Moving from DynamoDB to Postgres can substantially improve how you manage and analyze data. Because both databases can handle structured and semi-structured data, this migration is a common path for many businesses. Whether your goal is autonomy or disaster recovery, migrating from DynamoDB to Postgres lets you run complex queries, advanced analytics, ACID transactions, and more.

This post explores two methods to connect DynamoDB and PostgreSQL, but before that, let's take a moment to get a better understanding of each of these platforms.

DynamoDB Overview

DynamoDB is a NoSQL database that provides a dynamic and scalable solution for managing massive amounts of data with low-latency performance.

As a NoSQL database, it eliminates the need for complex schema management and accommodates flexible data structures. Its key-value and document data model lets you store and retrieve items without a predefined structure beyond the primary key. DynamoDB's serverless architecture adds to this flexibility: capacity scales with growing workloads without manual intervention, making it a versatile choice for applications ranging from mobile apps to gaming and IoT platforms.

PostgreSQL Overview

PostgreSQL, often known as Postgres, has a rich history dating back to 1986, when it originated as the POSTGRES project at the University of California, Berkeley. Over the years, it has become one of the most advanced open-source Object-Relational Database Management Systems (ORDBMS). Today, businesses and developers around the world use Postgres to efficiently manage both structured and semi-structured data.

With features like support for advanced data types, powerful indexing capabilities, and a large set of extensions, Postgres is a reliable choice for handling a wide range of data needs. Its versatility extends to various use cases, including web applications, analytics, and geospatial applications, making it a suitable database solution in today’s data-driven world.

How to Integrate DynamoDB with PostgreSQL

There are two main approaches to connect DynamoDB and Postgres:

- Use SaaS tools like Estuary – Ideal for real-time, no-code CDC replication with sub-100ms latency.

- Manual export and import – Export DynamoDB data to S3 (using AWS Glue) and load it into PostgreSQL with DMS or custom scripts.

Let’s explore each method step by step.

Method 1: Create a DynamoDB to Postgres Data Pipeline Using SaaS Tools

While the manual approach (Method 2, described later in this post) provides customization and control at various levels, its batch nature can hinder real-time insights. To address this, a data integration tool like Estuary streamlines the data transfer process. Its automated, no-code approach reduces the time and resources needed to set up and run replication.

Estuary’s DynamoDB connector provides change data capture (CDC) capabilities, which lets you track changes in your database and capture them into Flow collections that can be migrated to Postgres (and other supported destinations!).

👉 With Estuary, changes captured from DynamoDB Streams can be replicated into PostgreSQL with sub-100ms latency, ensuring both systems remain in near-perfect sync for real-time analytics and operations.
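To make the CDC idea concrete, here is a minimal sketch of how a single DynamoDB Streams record could be turned into a Postgres statement. It is not Estuary's implementation. It assumes a hypothetical target table `items` with a text primary key `id` and a `jsonb` column `doc`, and it only handles the most common DynamoDB attribute types.

```python
import json
from decimal import Decimal
from typing import Any


def from_attr(value: dict) -> Any:
    """Convert one DynamoDB-typed attribute ({"S": "x"}, {"N": "1"}, ...) to plain Python."""
    (tag, inner), = value.items()
    if tag == "S":
        return inner
    if tag == "N":
        # DynamoDB sends numbers as strings; keep integers exact.
        num = Decimal(inner)
        return int(num) if num == num.to_integral_value() else float(num)
    if tag == "BOOL":
        return inner
    if tag == "NULL":
        return None
    if tag == "M":
        return {k: from_attr(v) for k, v in inner.items()}
    if tag == "L":
        return [from_attr(v) for v in inner]
    raise ValueError(f"unhandled DynamoDB type tag: {tag}")


def to_statement(record: dict, table: str = "items", key: str = "id") -> tuple[str, tuple]:
    """Map a stream record to (sql, params).

    `table` and `key` are hypothetical names and are assumed to be trusted
    constants, since they are interpolated into the SQL text.
    """
    key_value = from_attr(record["dynamodb"]["Keys"][key])
    if record["eventName"] == "REMOVE":
        return f"DELETE FROM {table} WHERE {key} = %s", (key_value,)
    # INSERT and MODIFY both carry the full new item in NewImage
    # (requires a stream view type that includes new images).
    doc = {k: from_attr(v) for k, v in record["dynamodb"]["NewImage"].items()}
    sql = (
        f"INSERT INTO {table} ({key}, doc) VALUES (%s, %s::jsonb) "
        f"ON CONFLICT ({key}) DO UPDATE SET doc = EXCLUDED.doc"
    )
    return sql, (key_value, json.dumps(doc, sort_keys=True))
```

Treating inserts and updates as one upsert keeps the apply step idempotent, so replaying the same record leaves the table in the same state.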

Let’s look at the detailed steps and prerequisites to connect DynamoDB to PostgreSQL using Estuary.

Prerequisites:

- DynamoDB table with Streams enabled.

- An IAM user with the necessary table permissions.

- The AWS access key and secret access key.

- A Postgres database with necessary database credentials.
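If Streams is not yet enabled on your table, it can be switched on from the console or programmatically. The sketch below uses Boto3's `update_table` call. The `NEW_AND_OLD_IMAGES` view type is an assumption, so check the connector documentation for the view type it expects. The table name and region are whatever you pass in.

```python
from typing import Optional


def stream_spec(view_type: str = "NEW_AND_OLD_IMAGES") -> dict:
    """Build the StreamSpecification argument for DynamoDB's UpdateTable."""
    allowed = {"KEYS_ONLY", "NEW_IMAGE", "OLD_IMAGE", "NEW_AND_OLD_IMAGES"}
    if view_type not in allowed:
        raise ValueError(f"unsupported stream view type: {view_type}")
    return {"StreamEnabled": True, "StreamViewType": view_type}


def enable_streams(table_name: str, region: str) -> Optional[str]:
    """Enable Streams on an existing table; return the stream ARN if reported."""
    import boto3  # imported lazily so stream_spec() works without boto3 installed

    client = boto3.client("dynamodb", region_name=region)
    response = client.update_table(
        TableName=table_name,
        StreamSpecification=stream_spec(),
    )
    return response["TableDescription"].get("LatestStreamArn")
```

Note that DynamoDB rejects this call if the table already has a stream enabled, so it is meant as a one-time setup step.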

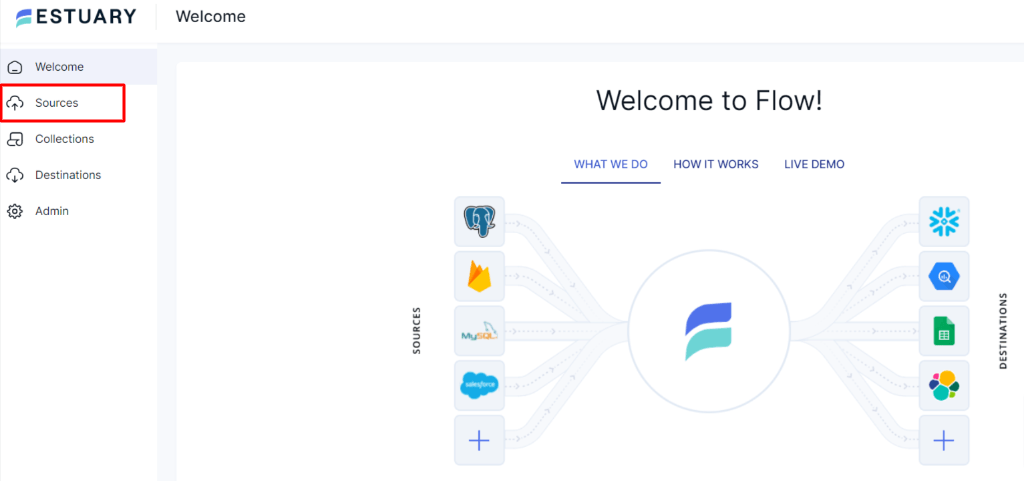

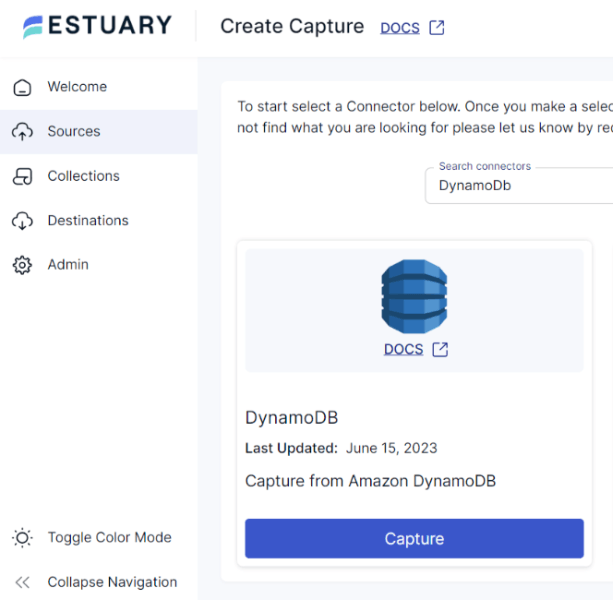

Step 1: Connect to DynamoDB Source

- Log in to your Estuary account or register for a new account if you don’t already have one.

- On the Estuary dashboard, click Sources in the left-hand navigation.

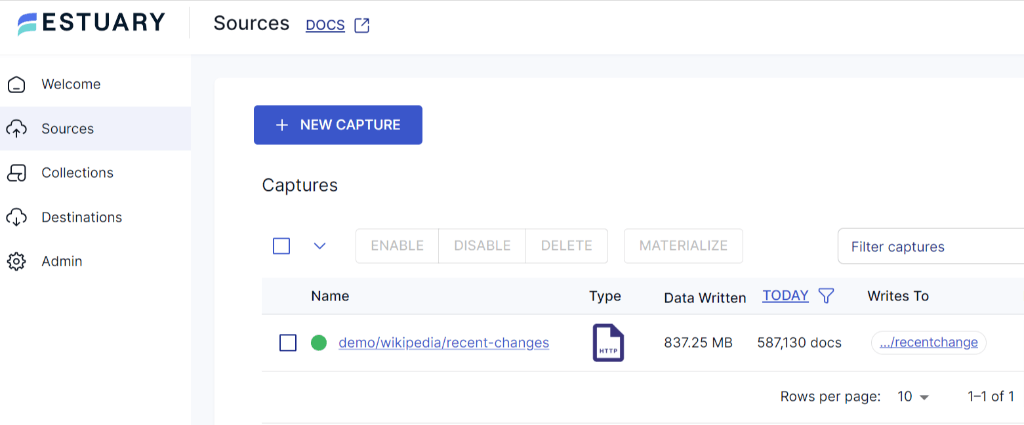

- Within the Sources page, locate and click on the + NEW CAPTURE button.

- On the Create Capture page, use the Search connectors box to find the DynamoDB connector. When you see the connector in the search results, click on the Capture button.

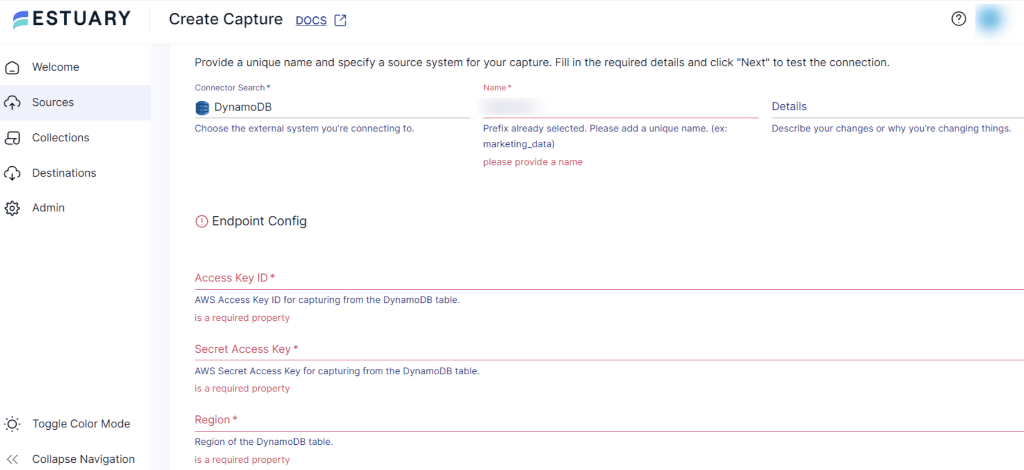

- On the DynamoDB Create Capture page, provide a unique Name for your connector. Specify the mandatory Endpoint Config details, including Access Key ID, Secret Access Key, and Region.

- Once you fill in all the required fields, click on Next > Save and Publish.

Step 2: Connect to PostgreSQL Destination

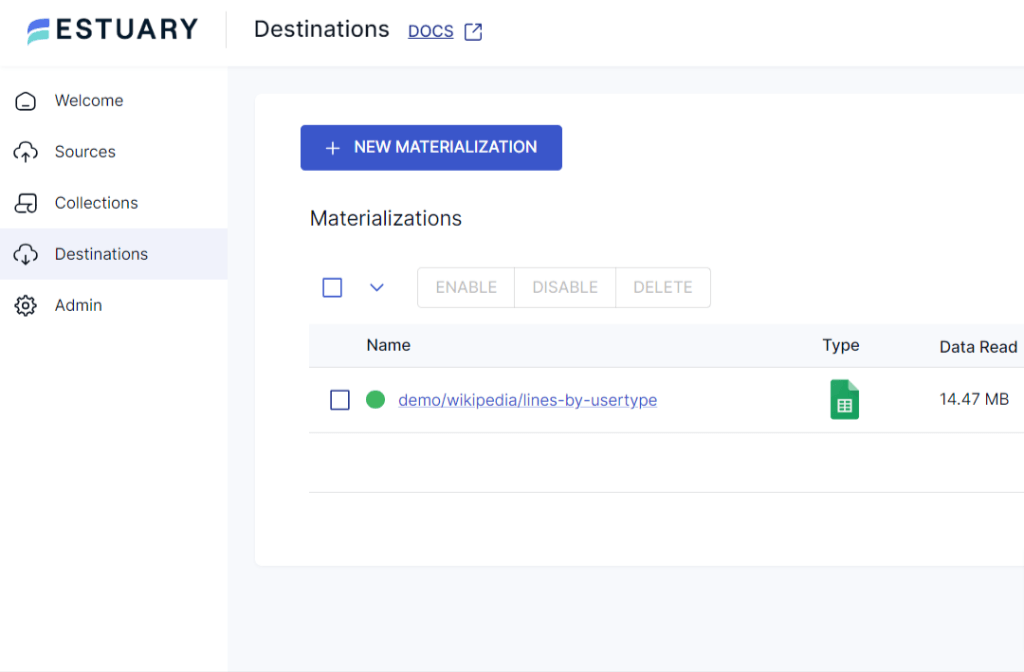

- Navigate back to the Estuary dashboard and click on Destinations to configure PostgreSQL.

- Within the Destinations page, click on the + NEW MATERIALIZATION button.

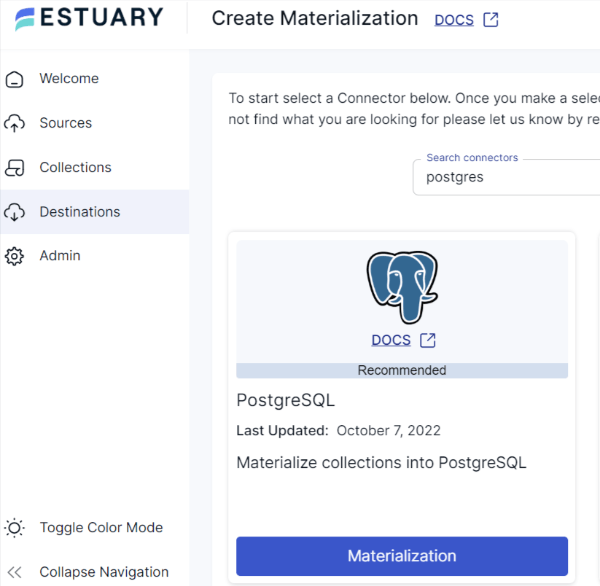

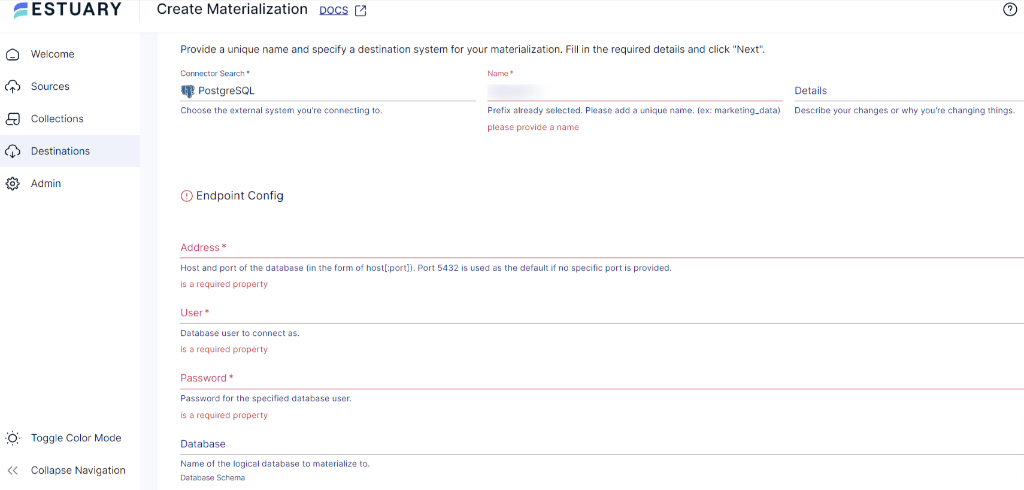

- On the Create Materialization page, use the Search connectors box to locate the PostgreSQL connector. Once you locate it, click on the Materialization button within the same tile.

- On the PostgreSQL Create Materialization page, provide a distinct Name for the connector. Provide all the necessary information, including Database Username, Password, and Address.

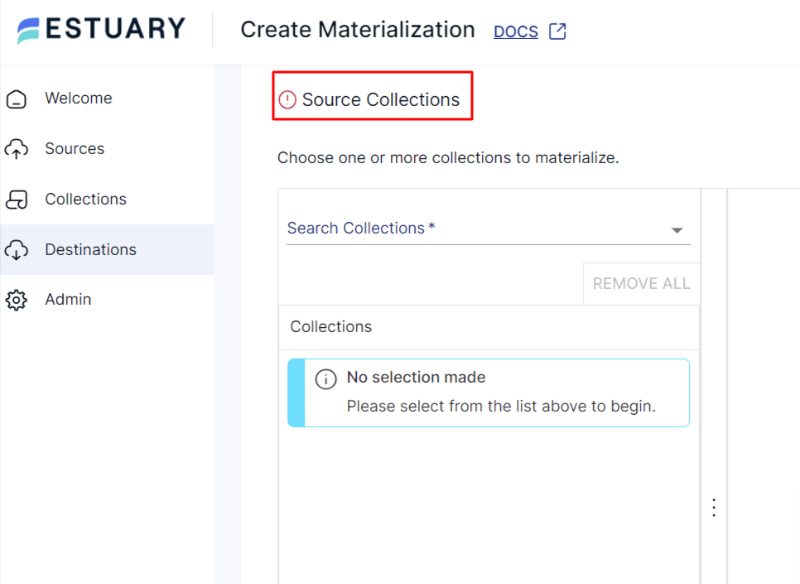

- In case the data from DynamoDB hasn’t been filled in automatically, you can manually add it from the Source Collections section.

- After filling in all the details, click on the NEXT button. Then click on SAVE AND PUBLISH.

After completing these two steps, Estuary will continuously replicate your DynamoDB data to Postgres in real time, keeping the target database up to date.

For a complete walkthrough of this flow, refer to Estuary's documentation.

Benefits of Using Estuary

- No-Code: Estuary eliminates the need for coding during extraction and loading, allowing you to perform data transfers without requiring advanced programming skills. This enables technical as well as non-technical users to effectively manage the migration process.

- Pre-built Connectors: With a wide range of 150+ inbuilt connectors, Flow facilitates effortless integration between multiple sources and destinations. This reduces the overall time and complexity required to set up the migration process.

- Change Data Capture with sub-100ms latency: Flow uses CDC to detect and replicate only the modified data, applying changes to PostgreSQL in under 100ms. This minimizes transfer volume and keeps the target database up to date in real time.

Method 2: Manually Move Data from DynamoDB to PostgreSQL

There are two main steps to manually load data from DynamoDB into PostgreSQL:

- Exporting data from DynamoDB to Amazon S3 using AWS Glue.

- Importing data from S3 into PostgreSQL using a tool like AWS Database Migration Service (DMS) or custom Python scripts.

Prerequisites:

- AWS account

- Amazon DynamoDB table

- S3 bucket

- IAM roles with appropriate permissions for Glue, S3, and DynamoDB

Step 1: Export DynamoDB Data to Amazon S3 Using AWS Glue

AWS Glue is a fully managed ETL service that can extract, transform, and load data across AWS services. You can use Glue jobs to export DynamoDB tables into S3 in formats like CSV, JSON, or Parquet.

Here’s how to set it up:

- Sign in to the AWS Management Console and open AWS Glue.

- Click Jobs > Add job to create a new ETL job.

- In the Data source, select DynamoDB and choose the table you want to export.

- For the Target, select Amazon S3 and specify the bucket and folder path (e.g., s3://my-bucket/dynamodb-export/).

- Configure the output format (JSON, CSV, or Parquet).

- Assign an IAM role that has permissions for both DynamoDB and S3.

- Run the job — AWS Glue will export your DynamoDB table to S3.
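Once the job exists, it can also be triggered and monitored from a script rather than the console. The following is a minimal sketch using Boto3's Glue client calls `start_job_run` and `get_job_run`. The job name is hypothetical, and the client is passed in so the function can be exercised without AWS access.

```python
import time

# Job run states after which Glue will not change the run any further.
TERMINAL_STATES = {"SUCCEEDED", "FAILED", "STOPPED", "TIMEOUT", "ERROR", "EXPIRED"}


def run_glue_job(glue, job_name: str, poll_seconds: float = 30.0) -> str:
    """Start a Glue job run and block until it reaches a terminal state.

    `glue` is expected to be a boto3 Glue client, e.g. boto3.client("glue").
    Returns the final JobRunState string.
    """
    run_id = glue.start_job_run(JobName=job_name)["JobRunId"]
    while True:
        run = glue.get_job_run(JobName=job_name, RunId=run_id)["JobRun"]
        state = run["JobRunState"]
        if state in TERMINAL_STATES:
            return state
        time.sleep(poll_seconds)
```

A call such as `run_glue_job(boto3.client("glue"), "dynamodb-export")` would block until the export finishes, which makes it easy to chain the load step after a successful run.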

Step 2: Replicate Data From S3 to PostgreSQL

Once your DynamoDB data is exported to S3, you can load it into PostgreSQL. There are two approaches:

Option 1: Custom Python Scripts

- Use the Boto3 library to download data from S3.

- Transform the data to match your PostgreSQL schema (e.g., reformatting timestamps, handling data types).

- Use Psycopg2 (or SQLAlchemy) to insert the data into PostgreSQL.
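The three steps above can be sketched as a short script. Everything specific here is an assumption for illustration: the export is newline-delimited JSON, each item has an `id` and a `created_at` field holding epoch seconds, and the target table is a hypothetical `items (id text primary key, created_at timestamptz, doc jsonb)`.

```python
import json
from datetime import datetime, timezone


def parse_rows(body: str) -> list[tuple]:
    """Turn newline-delimited JSON into (id, created_at, doc) tuples."""
    rows = []
    for line in body.splitlines():
        if not line.strip():
            continue  # skip blank lines
        item = json.loads(line)
        # Reformat the epoch-seconds timestamp as a timezone-aware datetime.
        created = datetime.fromtimestamp(item["created_at"], tz=timezone.utc)
        rows.append((item["id"], created, json.dumps(item, sort_keys=True)))
    return rows


def load_object(bucket: str, key: str, dsn: str) -> int:
    """Download one exported S3 object and upsert its rows into Postgres."""
    import boto3  # third-party imports are lazy so parse_rows() has no dependencies
    import psycopg2
    from psycopg2.extras import execute_values

    obj = boto3.client("s3").get_object(Bucket=bucket, Key=key)
    rows = parse_rows(obj["Body"].read().decode("utf-8"))
    conn = psycopg2.connect(dsn)
    try:
        # The connection context manager commits on success, rolls back on error.
        with conn, conn.cursor() as cur:
            execute_values(
                cur,
                "INSERT INTO items (id, created_at, doc) VALUES %s "
                "ON CONFLICT (id) DO UPDATE "
                "SET created_at = EXCLUDED.created_at, doc = EXCLUDED.doc",
                rows,
                template="(%s, %s, %s::jsonb)",
            )
    finally:
        conn.close()
    return len(rows)
```

Keeping the full item in a `jsonb` column alongside a few typed columns is one way to preserve DynamoDB's flexible attributes while still allowing indexed SQL queries on the fields you care about.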

Option 2: AWS Database Migration Service (DMS)

- Open the AWS DMS Console and create a replication instance.

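- Set up a source endpoint pointing to your S3 bucket. DMS reads S3 source data as CSV files described by an external table definition, so export in CSV format if you plan to use this option.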

- Set up a target endpoint pointing to your PostgreSQL database.

- Define table mappings to match S3 data to PostgreSQL tables.

- Run the migration task — DMS will move data from S3 into PostgreSQL automatically.
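Table mappings for a DMS task are supplied as a JSON document of rules. As a rough sketch, the helper below generates selection rules that include a list of tables. The schema and table names are hypothetical and would need to match what your S3 source endpoint's table definition exposes.

```python
import json


def selection_rule(schema: str, table: str, rule_id: int = 1) -> dict:
    """Build one DMS selection rule that includes a single table."""
    return {
        "rule-type": "selection",
        "rule-id": str(rule_id),
        "rule-name": f"include-{schema}-{table}",
        "object-locator": {"schema-name": schema, "table-name": table},
        "rule-action": "include",
    }


def table_mappings(schema: str, tables: list[str]) -> str:
    """Return the table-mappings JSON string for the given tables."""
    rules = [selection_rule(schema, t, i) for i, t in enumerate(tables, start=1)]
    return json.dumps({"rules": rules}, indent=2)
```

The resulting string can be pasted into the console's JSON editor or passed as the `TableMappings` argument when creating a replication task with Boto3.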

Limitations of the Manual Method

- Human Error: Manual setups increase the risk of data mapping issues, schema mismatches, or inconsistencies.

- Data Delays: Multiple steps (Glue job → S3 → Postgres) mean delays; this is not real-time.

- Resource Intensive: Requires AWS Glue jobs, S3 storage, and migration configurations, which increase overhead compared to no-code SaaS solutions.

Conclusion

To sum up, we've explored two ways to integrate DynamoDB and Postgres. To migrate data from DynamoDB into Postgres, you can either move the data manually or use a SaaS tool like Estuary. The manual method uses an S3 bucket together with custom scripts or an AWS tool such as DMS to build the DynamoDB to Postgres data pipeline. However, this method is prone to human error and data delays.

Estuary provides a user-friendly interface and streamlines the data integration process. With Flow, you can automate the integration in just two steps, without writing extensive code. This lets you quickly run real-time analysis and reporting on up-to-date data.

Experience seamless data replication between DynamoDB and Postgres with Estuary and unlock the power of effortless automation. Sign up to build your first pipeline for free.

FAQs

Can I replicate DynamoDB data to PostgreSQL in real time?

Does Estuary support schema changes when migrating DynamoDB to PostgreSQL?

About the author

Jeffrey is a data engineering professional with over 15 years of experience, helping early-stage data companies scale by combining technical expertise with growth-focused strategies. His writing shares practical insights on data systems and efficient scaling.