Most modern enterprises rely on search and AI systems, and these systems operate on data that is constantly changing.

For LLM-powered applications, the main question is not how data gets into the warehouse. It is whether the data powering these systems is fresh enough for correct decision-making.

This is exactly the point where right-time data comes into play.

In this article, we will go through how organizations can power reliable Cortex Search pipelines in Snowflake using Estuary and Snowpipe Streaming. In this setup, Estuary acts as a unified data movement layer that supports both real-time and batch workloads at scale.

We will discuss why right-time data is the correct choice and where traditional architectures fall short.

Why Does “Right-Time” Matter?

Right-time is the idea that data should be delivered at the moment it’s actually needed. Ask yourself: when does this data need to arrive to be useful? The answer depends on context:

- Fraud detection systems → data is needed within milliseconds

- A sales dashboard for a daily standup → hourly or nightly refresh is fine

- A monthly executive report → batch processing works here as well

- An IoT sensor on a factory floor → maybe every few seconds
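One way to make the idea concrete is to attach a freshness budget to each use case and check data age against it. The following is a minimal sketch. The budget values and the names `FRESHNESS_BUDGETS` and `is_fresh_enough` are illustrative assumptions, not recommendations or part of any product API.

```python
from datetime import datetime, timedelta, timezone

# Illustrative freshness budgets per use case (assumed values, tune for your context).
FRESHNESS_BUDGETS = {
    "fraud_detection": timedelta(milliseconds=500),
    "iot_sensor": timedelta(seconds=5),
    "sales_dashboard": timedelta(hours=1),
    "monthly_report": timedelta(days=1),
}


def is_fresh_enough(use_case, last_updated, now):
    """Return True when the data's age is within the budget for the use case."""
    age = now - last_updated
    return age <= FRESHNESS_BUDGETS[use_case]


now = datetime(2025, 1, 1, 12, 0, 0, tzinfo=timezone.utc)
last_updated = now - timedelta(minutes=10)

# The same 10-minute-old data is fine for a dashboard but far too old for fraud detection.
print(is_fresh_enough("sales_dashboard", last_updated, now))
print(is_fresh_enough("fraud_detection", last_updated, now))
```

The point of the sketch is that "fresh" is not a property of the data alone. It depends on which consumer is asking.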

What Is Cortex Search in Snowflake?

Snowflake Cortex Search is a managed semantic search service. It allows companies to query structured or unstructured data using vector embeddings and natural language.

Rather than relying on keyword matching alone, it ranks results by semantic similarity. Internally, it combines vector embeddings with Snowflake-native storage and uses a Snowflake warehouse to build and refresh its index. Typical use cases include RAG systems, AI assistants, and enterprise search.

A typical pipeline has four stages. First, source data is ingested continuously into Snowflake. Then, embeddings are generated from text columns. Next, the search service indexes those embeddings. Finally, users query the service in natural language.

Every stage depends on the ingested data, which is why freshness is the critical requirement for Cortex Search.
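To illustrate what ranking by semantic similarity means, here is a toy sketch that ranks documents by cosine similarity between vectors. The three-dimensional vectors are hand-made assumptions for illustration; real embedding models produce hundreds of dimensions, and this is not how Cortex Search is implemented internally.

```python
import math


def cosine_similarity(a, b):
    """Cosine of the angle between two vectors of equal length."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)


# Hand-made "embeddings" (assumed values): the first axis loosely means "audio gear",
# the last axis loosely means "kitchenware".
documents = {
    "Noise cancelling headphones": [0.9, 0.1, 0.0],
    "Bluetooth earbuds": [0.8, 0.2, 0.1],
    "Stainless steel kettle": [0.0, 0.1, 0.9],
}


def search(query_vector, k=2):
    """Return the k document titles most similar to the query vector."""
    ranked = sorted(
        documents,
        key=lambda title: cosine_similarity(query_vector, documents[title]),
        reverse=True,
    )
    return ranked[:k]


# A query vector pointing toward "audio gear" ranks both audio products above the kettle.
print(search([1.0, 0.0, 0.0]))
```

If the `documents` dictionary is out of date, the ranking is computed over stale content no matter how good the similarity function is.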

Cortex Search with Fresh Data

Cortex Search in Snowflake brings semantic search and vector-based retrieval to enterprise data. It powers AI assistants and recommendation systems.

When the underlying data is stale, search results get worse: AI assistants give outdated responses and recommendation systems become unreliable. To make sure your AI always works with the latest information, AI search should usually fall on the "real-time" side of the right-time spectrum.

With Snowpipe Streaming offering second-level latency on ingestion, stale data is a result of fragile or limited pipelines, not Snowflake itself.

The Hidden Cost of Too Many Tools

While the data powering your AI may be real-time, not all of your analytics require such low latency.

Most teams build streaming and batch pipelines separately and then connect them with custom orchestration.

In the end, all these tools bring maintenance overhead, fragile ingestion layers, and unpredictable data freshness. Teams lose engineering time debugging pipelines that they could have spent optimizing data movement.

Right-time data requires a trusted foundation, not a collection of tools. This foundation is the data movement layer.

How Cortex Search Pipelines Break With Traditional Architectures

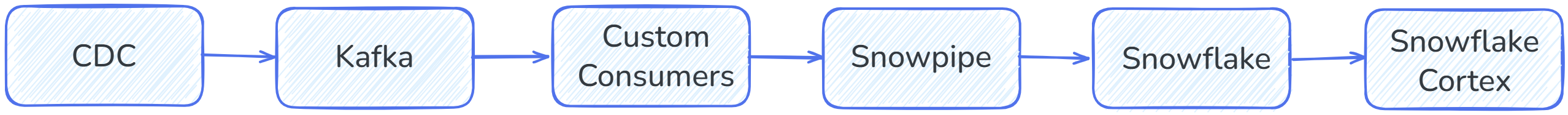

We can consider a common architecture that structures a search pipeline:

When it comes to production pipelines, this system is very open to failures.

Lag Builds Up

As data volume increases:

- The number of Kafka partitions grows.

- Consumers struggle to keep up.

- Backlogs accumulate.

As a result, Snowflake starts receiving data later than expected.

For search systems specifically, newly created records are missing from results, and existing content shows outdated values.

Over time, search freshness drifts away from business reality.
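The dynamic is simple arithmetic: whenever events arrive faster than consumers process them, the backlog grows every second. A minimal sketch, with made-up rates and a hypothetical `simulate_backlog` helper:

```python
def simulate_backlog(produce_rate, consume_rate, seconds):
    """Return the backlog size (in events) at the end of each second."""
    backlog = 0
    history = []
    for _ in range(seconds):
        # The backlog cannot go below zero: an idle consumer has nothing to drain.
        backlog = max(0, backlog + produce_rate - consume_rate)
        history.append(backlog)
    return history


# Assumed rates in events per second, for illustration only.
healthy = simulate_backlog(produce_rate=1000, consume_rate=1200, seconds=5)
overloaded = simulate_backlog(produce_rate=1500, consume_rate=1200, seconds=5)

print(healthy)
print(overloaded)

# Approximate delay before the newest event is processed, at the current consume rate.
lag_seconds = overloaded[-1] / 1200
print(lag_seconds)
```

In the overloaded case the backlog grows by 300 events per second and never recovers on its own, which is the drift in freshness described above.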

Duplicate Events Appear

Most streaming pipelines are distributed, which introduces duplicates from retries and partial failures, as well as out-of-order event delivery. Without reliable exactly-once semantics, these problems corrupt search indexes.

Once duplicate records appear, search indexes contain multiple entries for the same entity, which causes inconsistent results.
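The difference between blindly appending events and applying each event once can be sketched as follows. The `event_id` field and both helper functions are hypothetical, and this is a generic illustration of idempotent processing rather than a description of any specific product's internals.

```python
# A retry after a partial failure delivers event "e1" twice (at-least-once delivery).
events = [
    {"event_id": "e1", "product_id": 1, "description": "Wireless headphones"},
    {"event_id": "e2", "product_id": 2, "description": "USB-C cable"},
    {"event_id": "e1", "product_id": 1, "description": "Wireless headphones"},
]


def append_all(events):
    """Naive sink: every delivery becomes an index entry, duplicates included."""
    return [event["product_id"] for event in events]


def apply_once(events):
    """Idempotent sink: skip event IDs already seen, keep one entry per product."""
    seen = set()
    index = {}
    for event in events:
        if event["event_id"] in seen:
            continue
        seen.add(event["event_id"])
        index[event["product_id"]] = event["description"]
    return index


print(append_all(events))  # product 1 is indexed twice
print(apply_once(events))  # one entry per product
```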

Schema Changes Break Pipelines

As datasets evolve, columns are added or removed.

In traditional pipelines, each schema change requires consumer updates, repeated transformation deployments, and backfills.

The result is ingestion downtime, during which search pipelines stop receiving updates downstream.
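A consumer does not have to fail when the shape of a record changes. One generic approach is to project each record onto the fields the pipeline knows about, fill in defaults for missing ones, and set unknown fields aside instead of raising an error. The sketch below assumes a hypothetical `EXPECTED_FIELDS` mapping and is not a description of how any particular platform implements schema evolution.

```python
# Fields the downstream table expects, with defaults for when a column is missing (assumed).
EXPECTED_FIELDS = {"product_id": None, "description": ""}


def normalize(record):
    """Split a record into known fields (with defaults) and unexpected extras."""
    known = {name: record.get(name, default) for name, default in EXPECTED_FIELDS.items()}
    extras = {key: value for key, value in record.items() if key not in EXPECTED_FIELDS}
    return known, extras


new_shape = {"product_id": 2, "description": "USB-C cable", "color": "black"}  # added column
missing = {"product_id": 3}  # dropped column

print(normalize(new_shape))
print(normalize(missing))
```

Keeping the extras separate means a new column can be adopted later without having stopped ingestion in the meantime.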

Batch Backfills Overlap With Streaming

Teams need backfills when they ship a new feature, update a model, or fix a bug.

While a backfill runs, the streaming pipeline keeps running too, so batch loads overlap with streaming ingestion. This breaks ordering and produces duplicated data.

For Cortex Search systems in particular, this leads to index instability.
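One common guard against this overlap is to carry a monotonically increasing version with each record and ignore any write that is older than what the table already holds. The sketch below assumes a hypothetical `version` field (for example, a source sequence number) and a hypothetical `upsert_if_newer` helper.

```python
def upsert_if_newer(table, record):
    """Apply the record only if it is newer than the row the table already holds."""
    current = table.get(record["product_id"])
    if current is not None and current["version"] >= record["version"]:
        return False  # stale write, for example from a backfill, so ignore it
    table[record["product_id"]] = record
    return True


table = {}
streamed = {"product_id": 1, "version": 3, "description": "Noise cancelling headphones"}
backfilled = {"product_id": 1, "version": 1, "description": "Headphones"}

upsert_if_newer(table, streamed)    # applied: the table was empty
upsert_if_newer(table, backfilled)  # skipped: older than the streamed row

print(table[1]["description"])
```

Without the version check, the late-arriving backfill row would overwrite the newer streamed value.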

Estuary as the Data Movement Layer for Right-Time Search

Estuary is designed as a unified platform for both real-time and batch pipelines. It connects event streams with analytics platforms while maintaining consistency and reliability.

Event Streams as the Foundation

Estuary acts as a right-time data movement layer. It streams events continuously instead of running isolated batch jobs:

- Changes in the source get reflected to the destination continuously.

- Teams can replay events based on their needs.

- Systems can recover quickly without losing state.

This means consistent updates and predictable freshness for Cortex Search pipelines.

Exactly-Once Semantics

Since Estuary applies exactly-once semantics, it avoids duplicate data, so you don’t need to handle deduplication manually. This results in:

- Accurate search indexes.

- Clean Snowflake tables.

- Fewer operational incidents.

As a result, AI systems become more stable and search results more accurate.

Operational costs also drop, and data flows become auditable.

Powering Snowflake Cortex Search With Estuary + Snowpipe Streaming

In this section, we will walk through a modern pipeline with Estuary.

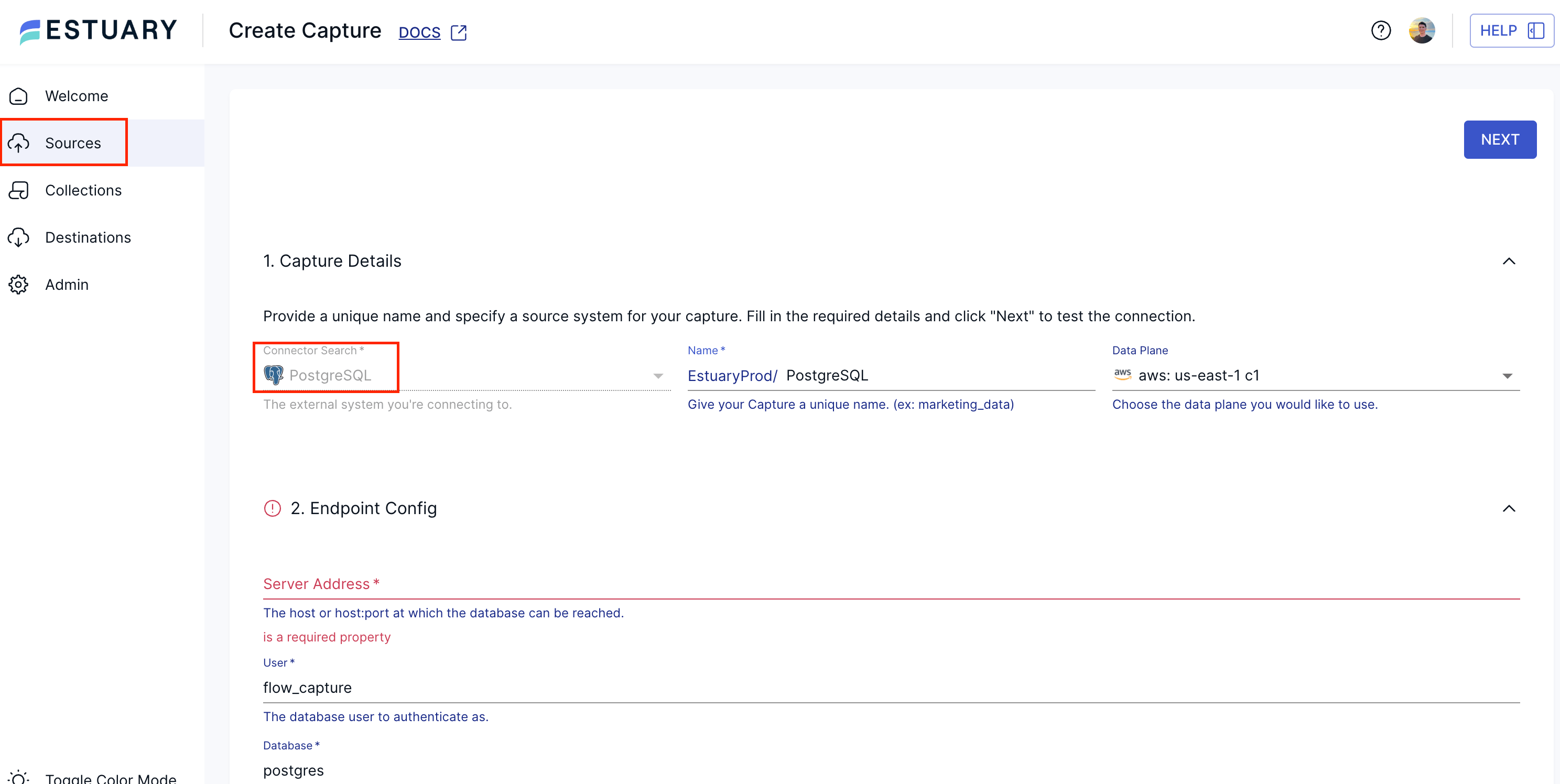

Step 1 — Capture Change Data

Suppose our source is a PostgreSQL database with a products table. Estuary connects to PostgreSQL through a connector that captures changes continuously.

Under the Sources section, search for PostgreSQL and create the capture using your source’s connection details.

Estuary’s CDC captures don’t rely on polling. Changes flow to materializations as they occur instead of being exported in batches, which keeps your search indexes up to date.

Step 2 — Normalize Events

Inside Estuary’s data movement layer:

- Events are validated.

- Record ordering stays aligned with PostgreSQL.

- Transformations can optionally be applied to your data.

All of this matters because search indexes should reflect the latest, accurate version of each record and should not conflict with historical updates.

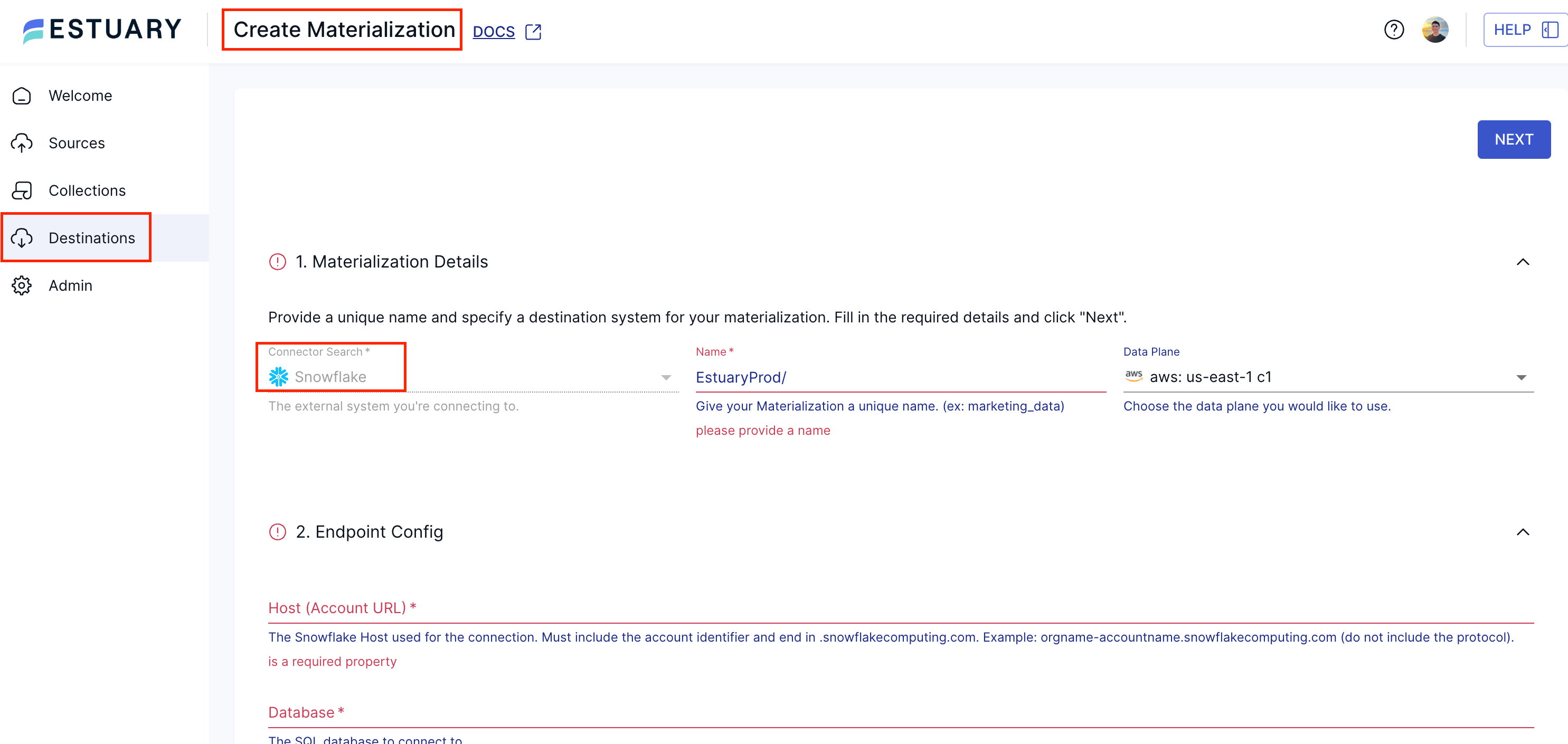

Step 3 — Stream Data Into Snowflake

Finally, deliver your data into Snowflake using Snowpipe Streaming, which ingests data with low latency and without micro-batches.

This reduces both ingestion latency and complexity, and updates are reflected in Snowflake continuously. To set this up, create a Snowflake Materialization in Estuary.

Step 4 — Set Up Parameters for Streaming

When configuring ingestion behavior, we want to use Snowpipe Streaming because it runs on serverless compute and offers the lowest latency of the available options.

In Estuary, Snowpipe Streaming is enabled through Delta Updates mode, which skips merge operations and writes updates directly into Snowflake tables.

When we add the source collection to our Snowflake materialization, we can enable Delta Updates for the collection binding.

💡 Estuary applies exactly-once semantics where possible. Note that with delta updates, however, rows do not get fully reduced: events are ingested as a real-time stream. This means that exactly-once semantics apply to events, not primary keys. You will only receive one row in your destination per create, update, or delete event, but multiple rows may relate to the same key from the original source.

For real-time delivery, we should also set the Sync Schedule to 0s.
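Because Delta Updates can leave several rows per key in the destination, as the note above explains, downstream consumers that need one row per entity have to fold the event rows into the latest state. The sketch below shows that folding logic in plain Python. The field names `seq` and `op`, and the operation codes `c`, `u`, and `d`, are assumptions for illustration and may not match the columns in your materialized table.

```python
def latest_state(events):
    """Fold create/update/delete events into the current row per product_id."""
    state = {}
    for event in sorted(events, key=lambda e: e["seq"]):
        if event["op"] == "d":
            # A delete removes the key; ignore deletes for keys we never saw.
            state.pop(event["product_id"], None)
        else:
            # Creates and updates both overwrite the current value.
            state[event["product_id"]] = event["description"]
    return state


# Four event rows relating to two source keys (assumed sample data).
events = [
    {"seq": 1, "op": "c", "product_id": 1, "description": "Headphones"},
    {"seq": 2, "op": "c", "product_id": 2, "description": "USB-C cable"},
    {"seq": 3, "op": "u", "product_id": 1, "description": "Noise cancelling headphones"},
    {"seq": 4, "op": "d", "product_id": 2, "description": None},
]

print(latest_state(events))
```

In Snowflake, the same reduction would typically be expressed in the query or view that feeds the search service, so that the index sees one current row per product.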

Step 5 — Create a Cortex Search Service

So far, we have ingested data from PostgreSQL into Snowflake in real time. Now, we can create a Cortex Search service.

At this point, Estuary is materializing source data into Snowflake tables, and we can use one of those tables for our Cortex Search service.

Suppose Estuary continuously materializes a products table that contains the product descriptions we want to make searchable.

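Using Snowflake Cortex functions, we can optionally generate embeddings ourselves, for example to run custom similarity queries:

```sql
-- Optional: Cortex Search computes its own embeddings, so the search service does not read this table.
CREATE OR REPLACE TABLE product_embeddings AS
SELECT
    product_id,
    SNOWFLAKE.CORTEX.EMBED_TEXT_768('snowflake-arctic-embed-m', description) AS embedding
FROM products;
```

Then, we can create a Cortex Search service on top of the products table: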

```sql
CREATE OR REPLACE CORTEX SEARCH SERVICE product_search
    ON description
    ATTRIBUTES product_id
    WAREHOUSE = cortex_search_wh
    TARGET_LAG = '1 minute'  -- required parameter; the value here is illustrative
AS (
    SELECT product_id, description
    FROM products
);
```

Then, we can query the service with natural-language text:

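```sql
-- Cortex Search services are queried through SEARCH_PREVIEW (or the Python and REST APIs), not SELECT ... FROM.
SELECT PARSE_JSON(
    SNOWFLAKE.CORTEX.SEARCH_PREVIEW(
        'product_search',
        '{"query": "Noise Cancelling Headphones", "columns": ["product_id", "description"], "limit": 5}'
    )
)['results'] AS results;
```

The query returns up to five products whose descriptions are semantically closest to the search text. In this way, we can find what we need in our tables with semantic queries instead of exact keywords.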

With the help of this modern architecture, we can eliminate cluster management and fragile custom consumers.

Built-in Best Practices

Estuary enforces several guarantees that benefit Cortex Search services.

- Estuary keeps events in the same order as the source, so index updates are applied in the correct sequence.

- Estuary ensures that updates are consistent by applying exactly-once semantics. This keeps search indexes stable and reliable.

- Estuary can seamlessly handle schema evolution so that pipelines keep operating even as the shape of your data changes.

- Estuary provides observability so that teams can track freshness and pipeline health.

There are also several cost advantages:

- Teams can manage data flows with fewer disparate tools.

- Engineering time dedicated to troubleshooting and fixing pipelines is reduced.

- Snowflake doesn’t need to reprocess data.

- Compute usage is optimized.

Together, these often result in significant cost savings at scale.

Deployment Flexibility, Compliance, and Enterprise Trust

Enterprise data movement is not only about performance. It is also tightly linked to governance and compliance.

Estuary supports the deployment models that enterprises require, such as Bring Your Own Cloud (BYOC), hybrid environments, and multi-region architectures.

This flexibility is critical for organizations in regulated industries that face frequent audits.

Data Security

With a unified data movement platform like Estuary, you can address common audit requirements:

- You can trace where data originates. If your pipeline requires data to stay within specific regions, you can deploy accordingly.

- You can inspect each step of the pipeline and how data flows through it, which helps demonstrate alignment with compliance frameworks.

- You can control data access and audit it.

Why This Matters for AI and Search

AI regulations are in a state of flux. Enterprises that can trace and control how data reaches their AI systems are better positioned to avoid compliance risks, financial penalties, and reputational damage as the legal landscape changes.

Reliable, auditable pipelines reduce these risks.

A Trusted Partnership With Snowflake

Estuary is a premier partner of Snowflake with a deep integration. More than 90 shared customers trust Estuary and Snowflake for enterprise-grade data movement.

This recognized partnership reflects Estuary’s role as a core enterprise infrastructure layer.

Performance and Cost

Streaming ingestion has many advantages compared to batch ingestion patterns.

| Metric | Batch Pipelines | Snowpipe Streaming |
|---|---|---|
| Data latency | 5–60 minutes | Seconds |
| Infrastructure | Batch jobs + staging | Fully streaming |
| Warehouse usage | Required for ingestion | Not required |
| Operational complexity | High | Low with a managed integration |

Real Outcomes: From Better Search to Better Business Decisions

We can obtain real business outcomes with right-time search systems.

Fraud Detection

Financial systems must detect anomalies quickly, so they depend on fresh data.

With a unified streaming pipeline, risk models are updated continuously.

AI and LLM Applications

AI applications should work with the most up-to-date data, and they sometimes need immediate access to it.

With Estuary’s right-time ingestion:

- LLM-powered applications provide more accurate responses.

- Since data is fed continuously, recommendations become context-aware.

- Automations become reliable.

Marketing Personalization

Events can be streamed into Snowflake and indexed quickly.

Marketing teams can adjust campaigns in real time, and search results across platforms can be personalized.

The Strategic Impact

With Estuary, teams can spend more time building impactful products and less time on maintenance.

The data movement layer becomes a strategic layer rather than just infrastructure.

If your organization is building Cortex Search pipelines on Snowflake, it’s worth exploring how Estuary can serve as their backbone.

What matters now is delivering the right data, at the right time, reliably.

FAQs

What is the best way to stream CDC data into Snowflake for low-latency search and RAG?

How can I measure and monitor Cortex Search refresh lag and freshness in Snowflake?

About the author

Senior Data Engineer with over four years of experience delivering end-to-end analytical solutions across industries including e-commerce, marketplaces, SaaS, proptech, enterprise platforms, and supply chain operations. Strong expertise in AI, machine learning, cloud engineering, and modern data and DataOps architectures, with a focus on scalable analytics, real-time data processing, and production-grade AI systems.