Syncing MySQL to Pinecone lets you convert structured relational data into vector embeddings for use in semantic search, recommendation engines, and retrieval-augmented generation (RAG) workflows. There are two ways to do this: using Estuary for automated, real-time CDC-based replication, or writing custom Python scripts with CSV exports for a manual approach.

What Is MySQL to Pinecone Integration and Why Does It Matter?

MySQL stores structured relational data. Pinecone is a vector database built for similarity search and AI-powered applications. Connecting the two lets teams use existing operational data to power faster, more accurate AI search experiences, including semantic search tools, recommendation engines, and RAG pipelines.
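To make "similarity search" concrete, here is a minimal, self-contained sketch of ranking stored vectors by cosine similarity to a query vector. The vectors and labels are made-up toy values, and the brute-force ranking only illustrates the idea; it is not how Pinecone is implemented internally.

```python
import math

def cosine_similarity(a, b):
    """Cosine of the angle between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)

def top_k(query, vectors, k=2):
    """Return the ids of the k stored vectors most similar to the query."""
    ranked = sorted(
        vectors,
        key=lambda vid: cosine_similarity(query, vectors[vid]),
        reverse=True,
    )
    return ranked[:k]

# Toy 3-dimensional "embeddings" (hypothetical values for illustration only).
vectors = {
    "red shoes": [0.9, 0.1, 0.0],
    "crimson sneakers": [0.8, 0.2, 0.1],
    "garden hose": [0.0, 0.1, 0.9],
}

print(top_k([1.0, 0.0, 0.0], vectors, k=2))
```

A vector database performs the same kind of nearest-neighbor lookup, but over far more vectors than a linear scan like this could handle efficiently.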

Method 1: How to Sync MySQL to Pinecone Using Estuary

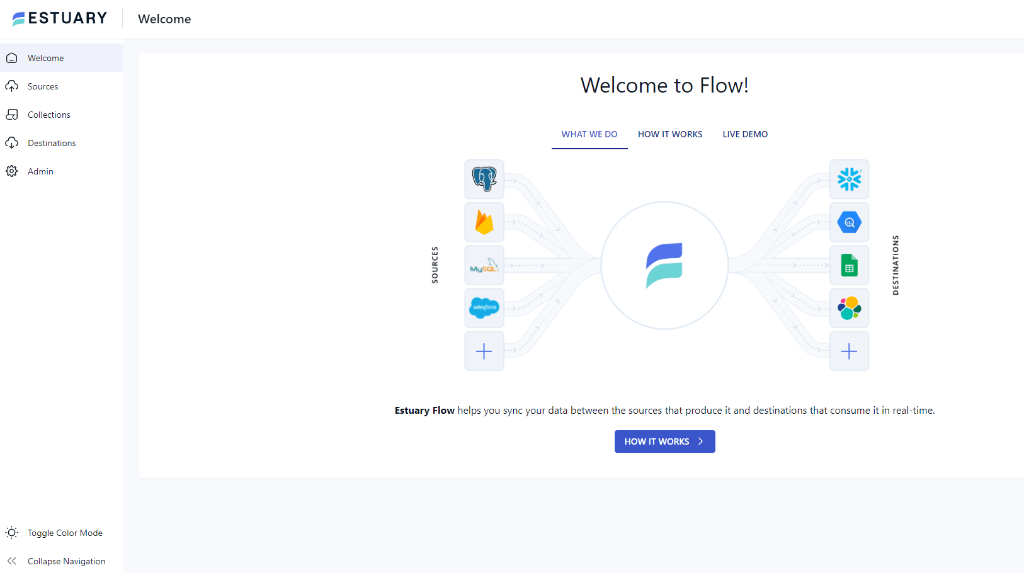

Estuary is a real-time CDC platform with pre-built connectors for both MySQL and Pinecone. It requires no custom code and keeps data in sync continuously using log-based change data capture.

Prerequisites

- An active MySQL database

- A Pinecone account and API key

- An Estuary account

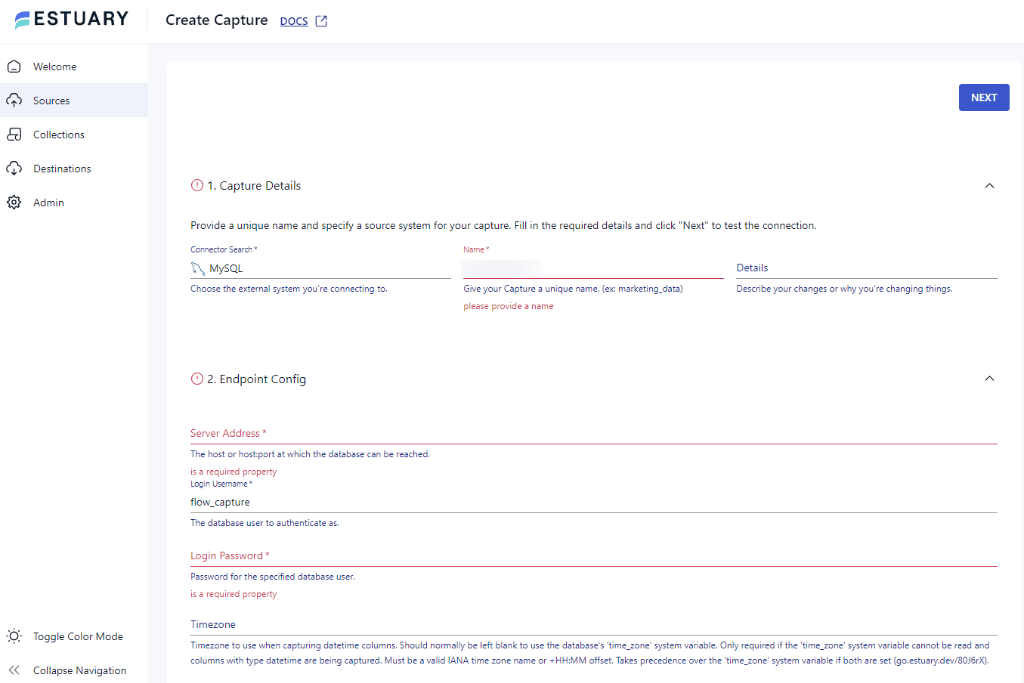

Step 1: Connect MySQL as a Source Connector

- Log in to your Estuary account and open the dashboard

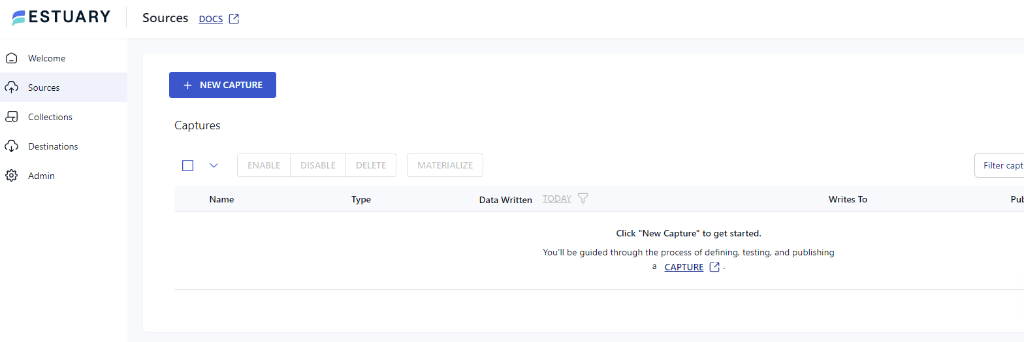

- Click Sources in the left navigation, then +New Capture

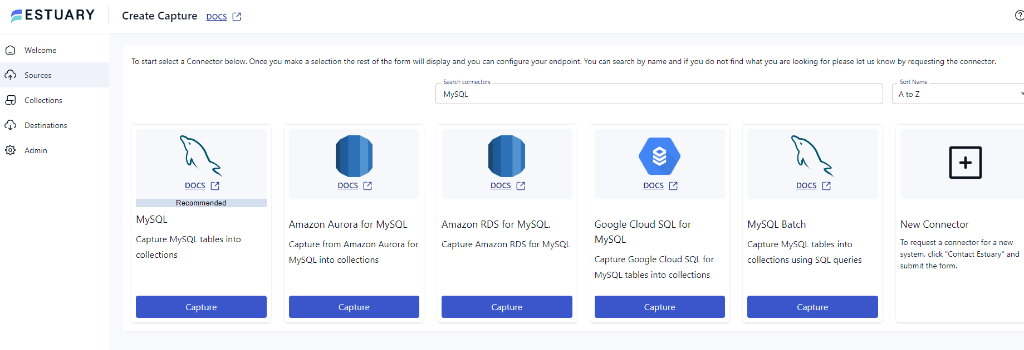

- In the Search connectors box, type MySQL, and you will see the connector in the search results. Click on its Capture button.

- This takes you to the Create Capture page for the MySQL connector. Fill in the required details, including Name, Server Address, Login Username, Password, and Database. Then click NEXT, followed by SAVE and PUBLISH.

Step 2: Connect to Pinecone as Destination

After a successful capture, a pop-up displaying the capture details will appear. Click the MATERIALIZE CONNECTIONS button in this pop-up to start setting up the pipeline's destination end.

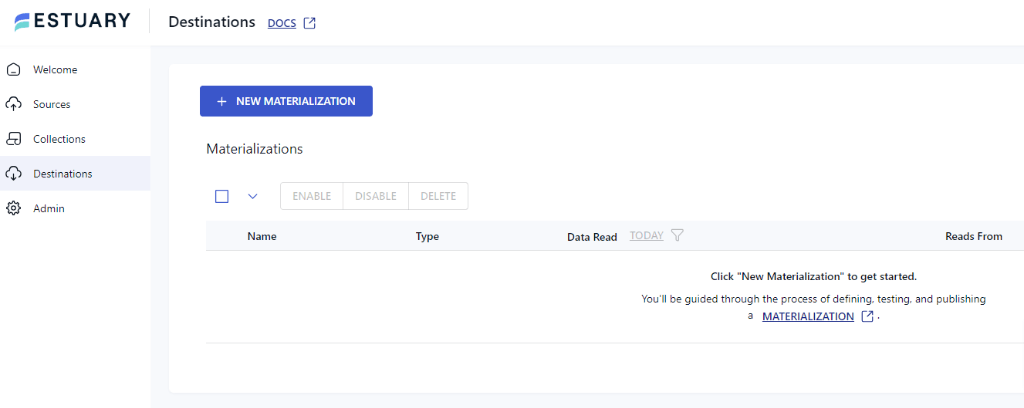

Alternatively, after configuring the source, click the Destinations option on the left side of the dashboard. You will be redirected to the destination page.

- On the Destinations page, click on the +NEW MATERIALIZATION button.

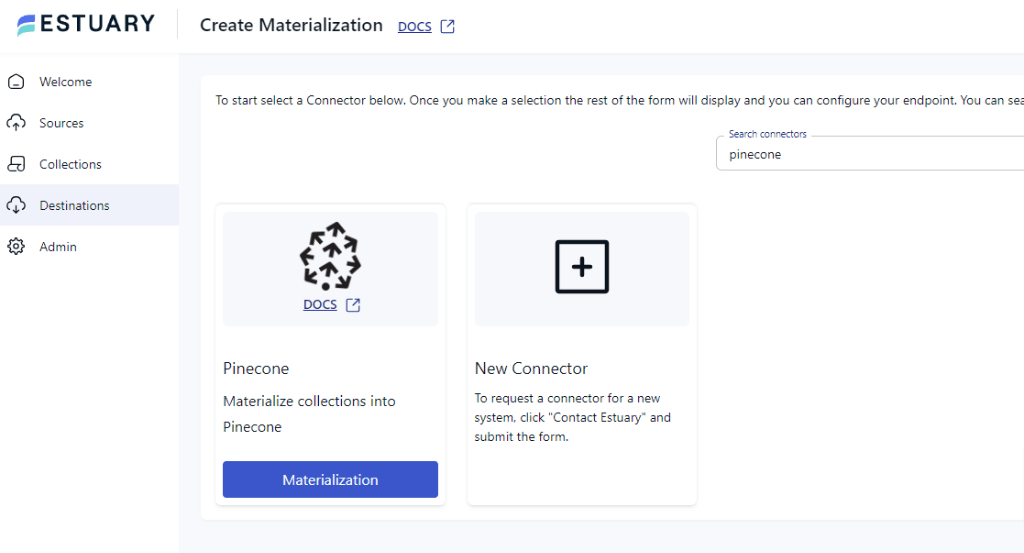

- Type Pinecone in the Search connectors box. When you see the Pinecone connector in the search results, click on its Materialization button.

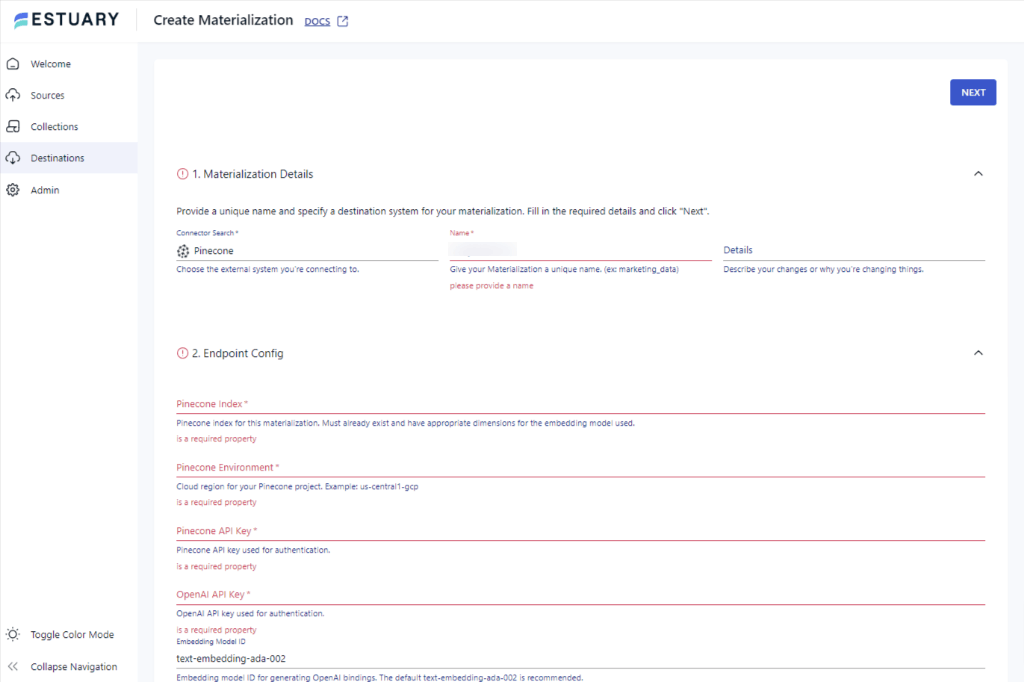

- You will see the Create Materialization page. Fill in the required fields, including Pinecone Index, Pinecone API Key, and OpenAI API Key, then click NEXT. Finally, click SAVE and PUBLISH.

This concludes the integration from MySQL to Pinecone.

Why Use Estuary for MySQL to Pinecone Sync

- Pre-built connectors for both MySQL and Pinecone eliminate custom code

- Log-based CDC captures row-level changes in real time, minimizing latency

- No technical background required to configure or maintain the pipeline
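To make the second point concrete, the following conceptual sketch shows how a stream of row-level change events can keep a destination in step with its source. The event shape (`op`, `key`, `row`) and the `apply_change` helper are hypothetical illustrations, not Estuary's actual event format or API, and a plain dictionary stands in for the destination index.

```python
def apply_change(index, event):
    """Apply one row-level change event to an in-memory 'index' (a dict)."""
    op, key = event["op"], event["key"]
    if op in ("insert", "update"):
        # A real pipeline would re-embed the row and upsert the vector here.
        index[key] = event["row"]
    elif op == "delete":
        index.pop(key, None)
    else:
        raise ValueError(f"unknown operation: {op!r}")
    return index

# Hypothetical change events, in the order they were committed at the source.
events = [
    {"op": "insert", "key": 1, "row": {"title": "Blue mug"}},
    {"op": "insert", "key": 2, "row": {"title": "Red mug"}},
    {"op": "update", "key": 1, "row": {"title": "Navy mug"}},
    {"op": "delete", "key": 2},
]

index = {}
for event in events:
    apply_change(index, event)

print(index)
```

Because each event carries only the row that changed, the destination stays current without re-exporting the whole table.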

Method 2: How to Sync MySQL to Pinecone Using Python and CSV Exports (Manual)

This approach uses MySQL Workbench to export data as CSV files, then a Python script to generate vector embeddings and upsert them into Pinecone. It works for one-time migrations or teams comfortable managing their own pipeline.
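As a rough sketch of the script side of this flow, the example below reads CSV rows, turns each row into an `(id, vector, metadata)` record, and groups the records into batches. Everything named here is an assumption for illustration: `toy_embedding` is a hash-based placeholder with no semantic meaning (a real pipeline would call an embedding model), and the column names, sample data, and batch size are made up.

```python
import csv
import hashlib
import io

DIMENSION = 8  # assumed; must match the dimension of the target index

def toy_embedding(text, dimension=DIMENSION):
    """Placeholder embedding: a deterministic pseudo-vector derived from a hash.

    NOT semantically meaningful; replace with a real embedding model.
    """
    digest = hashlib.sha256(text.encode("utf-8")).digest()
    return [digest[i] / 255.0 for i in range(dimension)]

def rows_to_records(csv_file, id_column, text_columns):
    """Turn CSV rows into (id, vector, metadata) tuples."""
    records = []
    for row in csv.DictReader(csv_file):
        text = " ".join(row[col] for col in text_columns)
        records.append((str(row[id_column]), toy_embedding(text), dict(row)))
    return records

def batches(items, size):
    """Yield successive chunks of at most `size` items."""
    for start in range(0, len(items), size):
        yield items[start:start + size]

# In-memory stand-in for an exported CSV file (hypothetical columns and rows).
sample_csv = io.StringIO(
    "id,name,description\n"
    "1,Mug,Blue ceramic mug\n"
    "2,Lamp,Brass desk lamp\n"
    "3,Rug,Wool area rug\n"
)
records = rows_to_records(sample_csv, id_column="id", text_columns=["name", "description"])

for batch in batches(records, size=2):
    # With a Pinecone index handle, each batch could be written with index.upsert(batch).
    print([record_id for record_id, _, _ in batch])
```

Batching keeps each request small; the size of 2 is only for demonstration, and a suitable value depends on vector dimension and metadata size.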

Step 1: Export CSV Files from MySQL

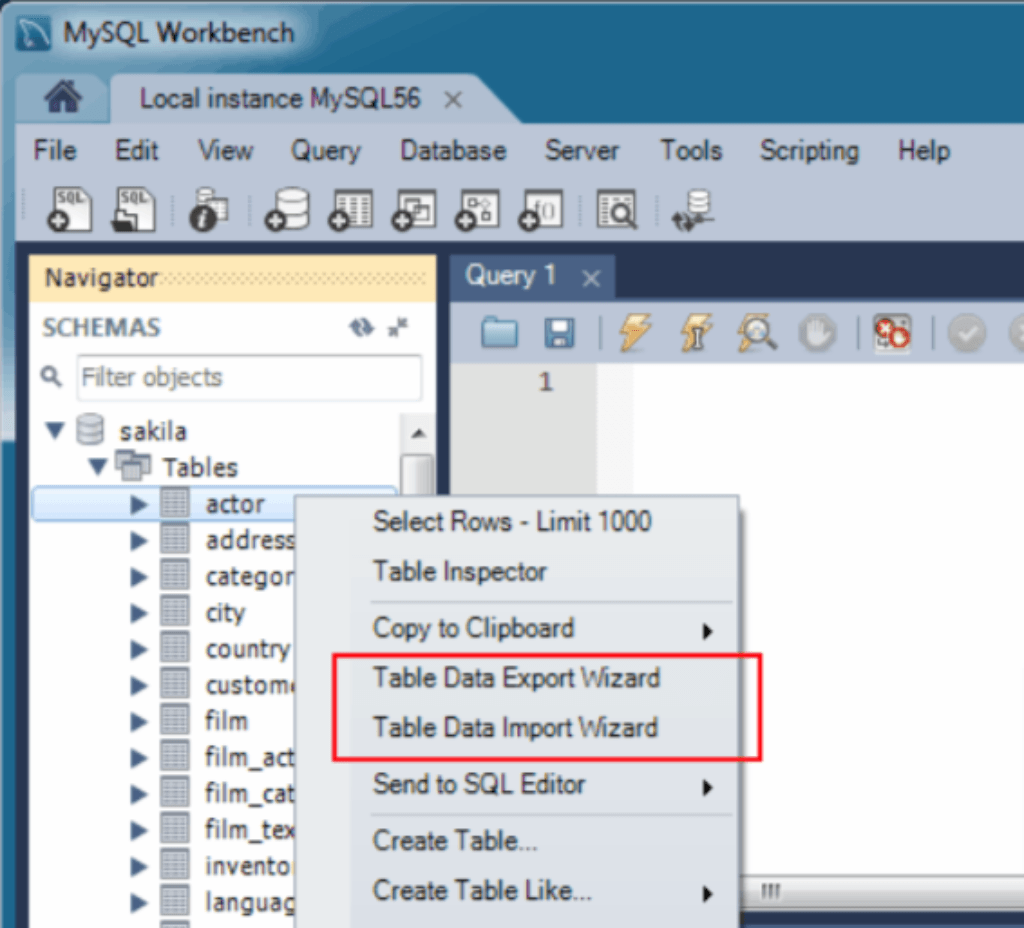

- Open MySQL Workbench and connect to your database. In the Navigator's schema list, right-click the table you want to export and select Table Data Export Wizard from the context menu.

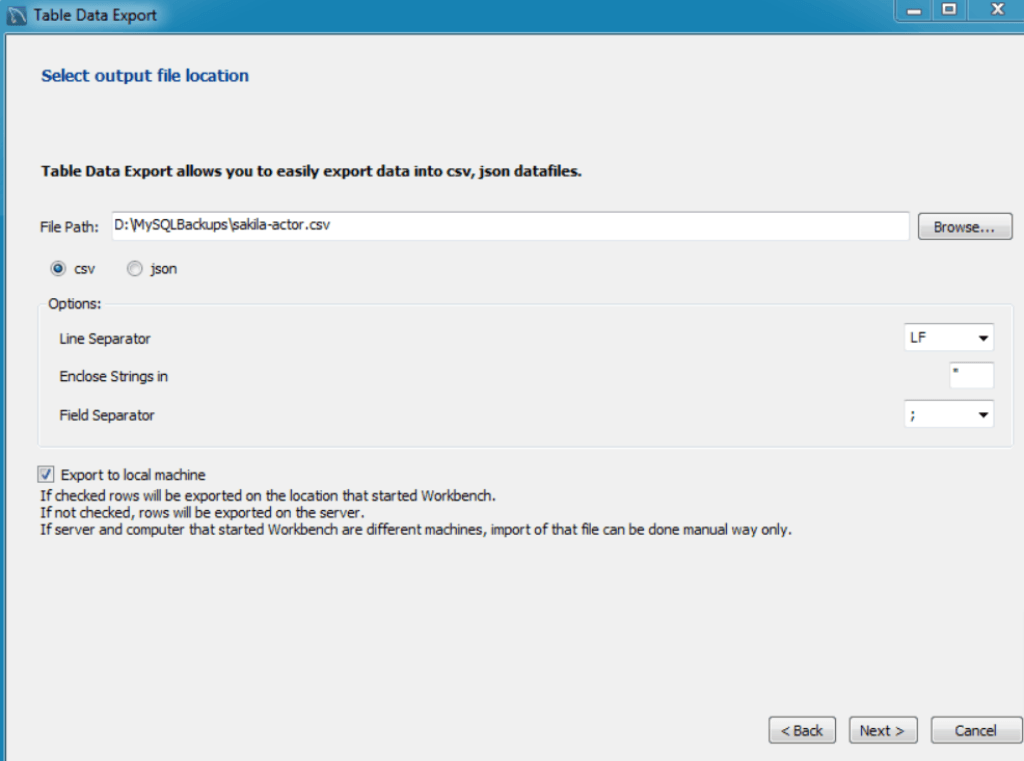

- In the next step, the Table Data Export window will appear. Browse the path to store your file and select CSV Files. Click on Next.

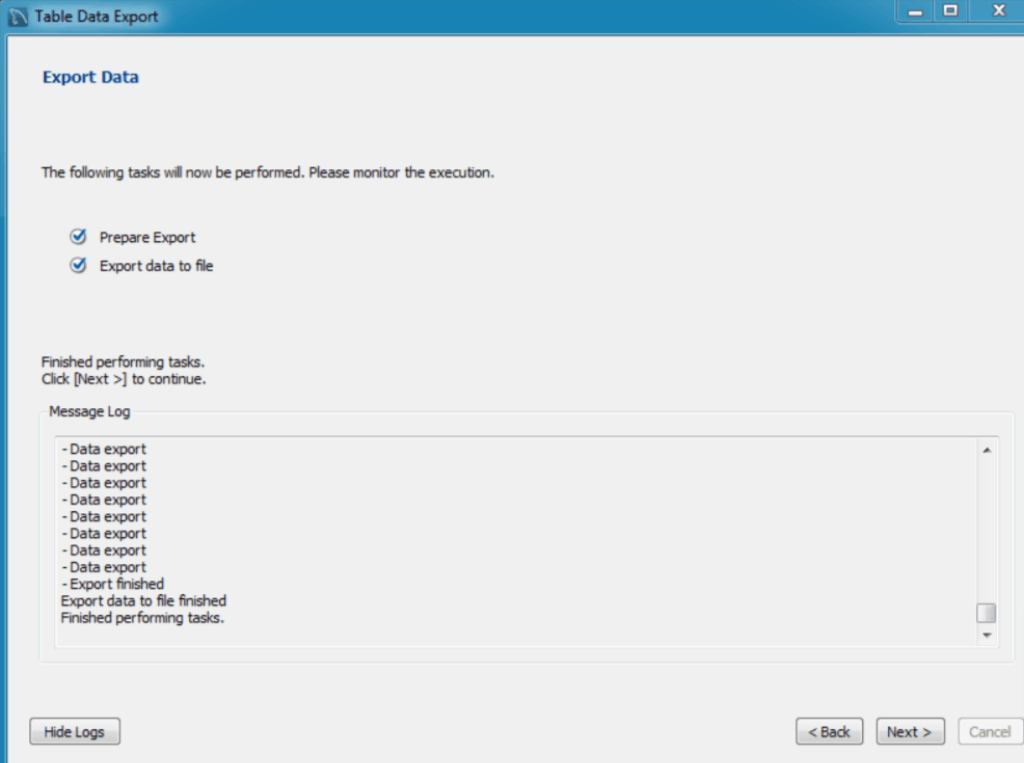

- Select Prepare Export and Export data to file in the Export Data window. Now click on the Next button at the bottom right. The export process will begin, and you can monitor the progress through logs.

Step 2: Import the CSV Files to Pinecone Using Python

- Ensure that your CSV files contain the necessary features that you want to transform into vectors.

- Use the following shell command to install the Pinecone Python client, which requires Python 3.6 or later.

```plaintext
pip3 install pinecone-client
```

- Create a Pinecone index. The following example creates an index without a metadata configuration, in which case Pinecone indexes all metadata by default. The dimension must match the length of the vectors you plan to insert; it is set to 8 here to match the sample vectors used below.

```python
import pinecone

# pinecone.init() belongs to the legacy pinecone-client (v2.x) API;
# later releases replace it with a Pinecone class.
pinecone.init(api_key="YOUR_API_KEY", environment="YOUR_ENVIRONMENT")

pinecone.create_index("example-index", dimension=8)
```

- Once you create a Pinecone index, you can insert vector embeddings and metadata by creating a client instance that targets the index.

```python
index = pinecone.Index("example-index")
```

- Now, use the upsert operation to write records into the index. Here is an example.

```python
# Insert sample data (five 8-dimensional vectors)
index.upsert([
    ("A", [0.1, 0.1, 0.1, 0.1, 0.1, 0.1, 0.1, 0.1]),
    ("B", [0.2, 0.2, 0.2, 0.2, 0.2, 0.2, 0.2, 0.2]),
    ("C", [0.3, 0.3, 0.3, 0.3, 0.3, 0.3, 0.3, 0.3]),
    ("D", [0.4, 0.4, 0.4, 0.4, 0.4, 0.4, 0.4, 0.4]),
    ("E", [0.5, 0.5, 0.5, 0.5, 0.5, 0.5, 0.5, 0.5]),
])
```

Limitations of the Manual Python Approach

- No real-time sync: CSV exports are point-in-time snapshots; any changes to MySQL after the export are not reflected in Pinecone until you re-run the script

- Engineering overhead: Writing, testing, and maintaining custom code takes significant time and requires deep familiarity with both databases

- Higher risk of data errors: Manual scripting introduces opportunities for data loss, schema mismatches, and performance issues that managed tools are designed to handle
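To illustrate the first two limitations, here is a minimal sketch of the change detection a manual pipeline would need in order to avoid re-embedding every row on each re-run. It compares two point-in-time exports keyed by primary key. The function names and sample rows are hypothetical, and a real script would also need to handle schema changes and failed writes.

```python
import hashlib

def row_fingerprint(row):
    """Stable hash of a row's contents, independent of column order."""
    canonical = "|".join(f"{key}={row[key]}" for key in sorted(row))
    return hashlib.sha256(canonical.encode("utf-8")).hexdigest()

def diff_snapshots(old_rows, new_rows):
    """Compare two exports keyed by primary key.

    Returns (ids_to_upsert, ids_to_delete).
    """
    old_prints = {key: row_fingerprint(row) for key, row in old_rows.items()}
    to_upsert = sorted(
        key for key, row in new_rows.items()
        if old_prints.get(key) != row_fingerprint(row)
    )
    to_delete = sorted(key for key in old_rows if key not in new_rows)
    return to_upsert, to_delete

# Two hypothetical exports of the same table, taken at different times.
old = {"1": {"name": "Mug"}, "2": {"name": "Lamp"}, "3": {"name": "Rug"}}
new = {"1": {"name": "Mug"}, "2": {"name": "Desk lamp"}, "4": {"name": "Vase"}}

to_upsert, to_delete = diff_snapshots(old, new)
print(to_upsert, to_delete)
```

Even with this in place, the destination is only as fresh as the most recent export, which is the gap that log-based CDC closes.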

Estuary vs. Custom Scripts: Which Method Should You Use?

| | Estuary (Automated) | Custom Python Scripts |
|---|---|---|
| Setup time | Minutes | Hours to days |
| Real-time sync | Yes, via CDC | No |
| Technical skill required | Low | High |
| Ongoing maintenance | Minimal | Significant |
| Best for | Production pipelines, continuous sync | One-time migrations, small datasets |

For most teams building AI applications on top of live operational data, Estuary is the faster and more reliable option. Custom scripts remain useful for simple, one-time data transfers where ongoing sync is not required.

Next Steps: Start Streaming MySQL to Pinecone

Connecting MySQL to Pinecone enables the AI search and retrieval capabilities modern applications depend on. Estuary makes this connection fast, reliable, and low-maintenance with pre-built connectors and real-time CDC. Custom Python scripts are a viable fallback for simple, one-time transfers but require significantly more engineering effort and do not support continuous sync.

Get started with Estuary for free or book a demo to see how Estuary handles your MySQL to Pinecone pipeline.

FAQs

Can I sync MySQL to Pinecone in real time?

How is Pinecone different from a traditional database like MySQL?

About the author

Jeffrey is a data engineering professional with over 15 years of experience, helping early-stage data companies scale by combining technical expertise with growth-focused strategies. His writing shares practical insights on data systems and efficient scaling.