Welcome to the comprehensive guide on PostgreSQL Change Data Capture (CDC). As businesses and organizations increasingly rely on real-time data, it’s essential to maintain synchronization across multiple systems, ensuring up-to-date information is always available for decision-making. This is where CDC plays a crucial role.

PostgreSQL is widely used as the backbone for transactional systems, powering applications that require consistent, high-performance data. However, when it comes to analytics, reporting, or integrating data with other systems, a challenge arises: PostgreSQL alone isn't always optimal for these workflows. That's where PostgreSQL CDC comes into play, enabling seamless and efficient real-time data replication to other storage systems, like data warehouses.

In this guide, we’ll dive deep into PostgreSQL CDC, explaining how it works, the benefits of using it, and how you can implement it effectively in your environment. Whether you're a business leader looking to understand the strategic advantages, a data engineer working to implement CDC pipelines, or an IT professional looking to streamline your organization’s data integration, this guide will provide the tools and insights you need.

By the end of this article, you will have a clear understanding of how to capture changes in PostgreSQL and sync them in real-time with other systems, helping you improve the accuracy, speed, and reliability of your data architecture.

What is Change Data Capture (CDC)?

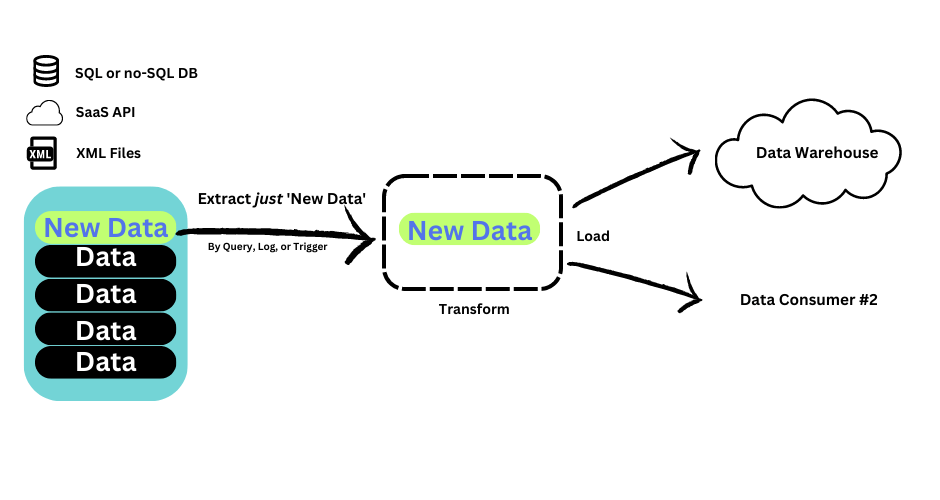

Change Data Capture (CDC) is a method used to track and capture changes made to data in a database in real-time. It helps ensure that data is kept synchronized across various systems, allowing businesses to act on up-to-date information without delay.

In simpler terms, CDC monitors and records changes in a database — such as inserts, updates, and deletes — as they happen. The captured changes are then transmitted to other systems or applications, ensuring consistency across data sources and providing real-time insights.

CDC is commonly used for:

- Data replication: Keeping multiple systems updated with changes from a source database.

- Real-time analytics: Providing up-to-date data to analytics platforms without batch updates.

- Data migration: Ensuring continuous data flow when migrating between different systems or databases.

In PostgreSQL, CDC can be implemented through various methods, including query-based, trigger-based, and log-based approaches. We will cover these methods in detail in the sections below.

Who Should Read This Guide?

This guide is designed for professionals and teams who work with or are considering PostgreSQL as their relational database solution and want to implement Change Data Capture (CDC). Whether you are new to CDC or looking to enhance your existing knowledge, this guide provides insights for a variety of roles:

- Business leaders: You need a foundational understanding of Postgres CDC to make informed decisions. We explain the core concepts of CDC, the pros and cons of different methods, and how they impact your organization.

- Data engineers and IT professionals: If you're responsible for setting up CDC for your organization, this guide will walk you through the technical details and key considerations for effective integration.

- Database Administrators: If you're responsible for ensuring the integrity and performance of PostgreSQL databases, you’ll appreciate the technical depth offered here. We’ll discuss the considerations necessary to minimize performance impacts when implementing CDC, as well as best practices for scaling this solution.

Why Choose PostgreSQL for Change Data Capture (CDC)?

PostgreSQL is one of the most popular relational database management systems (RDBMS) in the world, known for its reliability, flexibility, and high performance. While PostgreSQL excels in transactional environments, it often needs to be integrated with other systems for real-time data processing, analytics, and reporting. This is where Change Data Capture (CDC) becomes critical.

PostgreSQL is often used as the transactional database for a wide variety of applications. For instance, consider an e-commerce platform that relies on PostgreSQL to store customer information, process transactions, and manage inventory. In these scenarios, PostgreSQL CDC plays a key role in ensuring that the transactional data is efficiently synchronized with other systems.

However, in modern data environments, PostgreSQL is rarely the only database in use. Many organizations utilize multiple data stores, including data warehouses, for analytics and reporting. While PostgreSQL is highly effective for transactional workflows, it is not optimized for analytical workloads. That’s where CDC comes in.

By implementing CDC in PostgreSQL, you can seamlessly sync transactional data in real-time to external systems such as data warehouses, helping ensure that analytical systems always have up-to-date, accurate data for reporting and decision-making. This integration between transactional and analytical systems is essential for organizations that rely on both types of data to drive their operations.

Key Benefits of Using PostgreSQL for CDC:

- Reliability: PostgreSQL is a highly stable and secure system, ensuring data integrity and reducing the risk of data loss during CDC processes.

- Flexibility: PostgreSQL offers multiple ways to implement CDC, such as using SQL queries, triggers, and write-ahead logs (WAL), giving you flexibility in how to capture and synchronize data.

- Scalability: With PostgreSQL’s robust architecture, CDC can scale effectively as your data grows, ensuring real-time updates and synchronization even with large datasets.

- Integration: PostgreSQL easily integrates with a wide range of external systems, such as data warehouses, analytics platforms, and other data stores, making it an ideal choice for CDC.

What is Postgres CDC and Its Implementation Benefits?

PostgreSQL Change Data Capture (CDC) refers to the process of identifying and capturing changes made to a PostgreSQL database and making those changes available to other systems in real-time or in batches. It enables efficient data synchronization across various platforms, ensuring that all systems are working with the latest data without the need for full data replication.

In a typical architecture, your PostgreSQL database serves as the source of truth for transactional data. However, to support other systems like analytics platforms, data warehouses, or search engines, you need a way to propagate these changes to those systems as they occur. CDC solves this problem by capturing every update, deletion, or insert operation and synchronizing the changes with external systems in real-time or on a scheduled basis.

When implemented correctly, CDC in PostgreSQL provides several key benefits:

- Real-Time Data Synchronization: One of the major benefits of CDC is that it enables real-time updates across systems. This ensures that your data warehouse, business intelligence tools, or other downstream applications always have the latest information, allowing for more accurate reporting, decision-making, and analytics.

- Reduced Data Latency: Traditional methods of data replication, like periodic batch uploads, often introduce significant delays in updating downstream systems. CDC ensures that the data is continuously synchronized, minimizing latency and ensuring your data is up-to-date.

- Minimal Impact on Database Performance: When using PostgreSQL's native features, such as write-ahead logs (WAL) or triggers, CDC can be implemented with minimal impact on the performance of the source database. This allows for the efficient movement of data without taxing your production systems.

- Scalable and Reliable: As your data grows, CDC allows you to scale your synchronization processes effectively. Whether you're dealing with small amounts of data or terabytes of information, Postgres CDC methods can be optimized to handle large-scale data flows without significant performance degradation.

- Efficient Data Integration: CDC helps bridge the gap between transactional databases like PostgreSQL and other systems that need access to up-to-date data, such as data lakes or NoSQL databases. It provides a reliable way to integrate data from various systems while maintaining consistency and accuracy across the board.

Overall, Postgres CDC is an essential tool for organizations that require timely, accurate, and reliable data synchronization. By using CDC, you can ensure that your systems work with the latest data, improve operational efficiency, and support real-time analytics.

What Are the Different PostgreSQL Change Data Capture (CDC) Methods?

When implementing Postgres Change Data Capture (CDC), there are several methods to consider, each with its own advantages and challenges. The right method depends on factors such as your latency requirements, system resources, and the complexity of the integration. Broadly, Postgres CDC can be divided into batch-based methods and real-time methods. Here, we’ll explore three popular approaches:

- CDC using queries (Batch)

- CDC using triggers (Batch)

- CDC using logs (Real-time)

1. CDC Using Queries (Batch)

Batch CDC is one of the simplest and most common methods for implementing change data capture in PostgreSQL. It involves running regular SQL queries to capture and sync changes from the source database to a target system. This method is typically used when real-time synchronization is not required, and a certain degree of latency can be tolerated.

Your SQL query will look something like:

plaintextSELECT * FROM my_table WHERE time_updated > time_last_query’;How Queries-Based Postgres CDC works:

- You create an SQL query that selects records based on a timestamp or version number that indicates when a record was last updated.

- This query fetches new or modified records since the last query run.

- The results are then passed through an ETL (Extract, Transform, Load) pipeline to the target system.

Advantages of Queries-Based Postgres CDC:

- Simple to set up: Requires no special configuration beyond writing SQL queries.

- Low resource usage: Has minimal impact on the source system since it only pulls data at scheduled intervals.

Disadvantages of Queries-Based Postgres CDC:

- High latency: Changes are not captured in real-time, leading to potential delays in data synchronization.

- Cannot capture deletions: Queries often cannot detect DELETE operations unless explicitly handled.

- Performance impact: Large queries on high-volume tables can slow down the source database if not properly managed.

2. Triggers for Postgres Change Data Capture

Another common method for implementing CDC in PostgreSQL is through triggers. Triggers are functions that automatically execute in response to insert, update, or delete operations on the source database. These changes are captured and written to a secondary table (or changelog table), which serves as a log of modifications.

To run this function, you’d use the query:

plaintextSELECT audit.audit_table('target_table_name');How Trigger-Based Postgres CDC works:

- You define triggers that listen for changes to a table (inserts, updates, deletes).

- When changes occur, the triggers fire and write the changes to a dedicated table.

- The captured changes can then be extracted and moved to the target system.

Advantages of Trigger-Based Postgres CDC:

- Capture all change types: Triggers can detect INSERT, UPDATE, and DELETE operations, ensuring comprehensive tracking of changes.

- Real-time data capture: Changes are immediately recorded when they happen, offering low-latency synchronization.

Disadvantages of Trigger-Based Postgres CDC:

- Performance impact: Triggers can slow down database operations, especially on high-traffic tables, as each data modification needs to trigger an action.

- Complex setup: Setting up triggers requires careful planning, especially when dealing with large tables or complex business logic.

- Changelog management: The log of changes can grow quickly, necessitating cleanup and management to avoid performance issues.

3. CDC Using Write-Ahead Log (WAL) (Real-time)

The most advanced and efficient method for implementing real-time CDC in PostgreSQL involves utilizing the Write-Ahead Log (WAL). The WAL records every change made to the database and is used for recovery and data integrity purposes. By leveraging logical replication and decoding features, you can stream these WAL changes to external systems in near real-time.

How WAL for Postgres CDC works:

- Logical replication enables you to capture changes from the WAL.

- Logical decoding converts the WAL entries into a consumable format (like JSON or SQL).

- The changes are then streamed to a target system using tools like Kafka, RabbitMQ, or custom connectors

Advantages of Using WAL for Postgres CDC:

- Real-time data synchronization: Changes are captured and propagated to target systems almost immediately.

- Minimal impact on database performance: The use of WAL allows for efficient data capture with minimal overhead on the source database.

- Scalability: Ideal for large-scale systems with high-volume transactional data.

Disadvantages of Using WAL for Postgres CDC:

- Complex setup: Requires setting up logical replication, decoding, and potentially integrating third-party tools like Kafka, Debezium, or Estuary. Estuary offers an intuitive alternative for managing CDC pipelines with easy integration into PostgreSQL systems.

- Requires advanced PostgreSQL knowledge: Configuring WAL for CDC requires familiarity with PostgreSQL internals, which can be a steep learning curve for beginners.

Summary of CDC Methods Comparison

| Method | Latency | Performance Impact | Easy to Setup |

|---|---|---|---|

| CDC using Queries (Batch) | High | Low | Easy |

| CDC using Triggers (Batch) | Medium | Medium | Moderate |

| CDC using WAL (Real-time) | Low | Low | Complex |

Choosing the right Postgres CDC method depends on your specific requirements, such as data freshness, scalability, and ease of implementation. If real-time data updates are crucial, the WAL-based method is often the best choice. For less time-sensitive data integration, query-based or trigger-based methods may be more appropriate.

Steps for Setting Up Logical Replication for Postgres CDC

Implementing real-time Postgres Change Data Capture (CDC) with logical replication requires configuring several key components. Below are the steps involved in setting up logical replication for CDC, ensuring that your database captures changes efficiently and streams them to external systems like data lakes, warehouses, or analytical tools.

Step 1: Set 'wal_level' to Logical

- For logical replication to work, you need to set the wal_level to logical. This allows the database to record the necessary change details that will be captured during replication.

plaintextALTER SYSTEM SET wal_level = logical;- Explanation: This command adjusts the WAL level to ensure that it captures the logical changes required for replication. This is a crucial first step before proceeding to the replication setup.

Step 2: Ensure the Database User Has Replication and Read Privileges

- To perform logical replication, the user connecting to the database must have replication privileges and the ability to read all the necessary data.

plaintextALTER ROLE your_user_name WITH REPLICATION;

GRANT pg_read_all_data TO your_user_name; -- Useful for Postgres 14+

- Explanation: The first command grants the necessary replication privileges to the user. The second command grants read access to all tables, which is important for logical replication, especially in PostgreSQL 14+.

Step 3: Ensure the Source Tables Have a Primary Key or Set REPLICA IDENTITY FULL

- Logical replication requires that source tables have a primary key to identify rows uniquely. If a table does not have a primary key, you can set its REPLICA IDENTITY to FULL. However, this comes with performance implications.

plaintextALTER TABLE your_table_name REPLICA IDENTITY FULL;

- Explanation: The REPLICA IDENTITY setting ensures that the necessary information for UPDATEs and DELETEs is captured in the WAL. Tables without a primary key should be assigned this setting to ensure consistency in the replicated data.

Step 4: Create a Publication for the Source Tables

- A publication in PostgreSQL is a set of changes from source tables that you wish to replicate. You need to create a publication for the relevant tables.

plaintextCREATE PUBLICATION my_publication FOR TABLE my_table;

- Explanation: This creates a publication named my_publication for the selected tables. It essentially marks the changes made to these tables for replication to external systems.

Step 5: Perform Logical Decoding

Logical decoding converts the WAL into a format that can be consumed by external systems, such as JSON, SQL, or Protobuf. For this, you might need to use or install a plugin like wal2json or pgoutput (which is built into Postgres 10+).

Once logical replication is set up, you need to perform logical decoding to stream the changes to external consumers, such as Estuary.

You may need to install a plugin, such as wal2json or pgoutput, to help in the decoding process.

plaintextLOAD 'wal2json';

- Explanation: Plugins like wal2json or pgoutput are used to convert WAL records into a format that can be consumed by downstream systems. The decoded changes can be streamed in formats such as JSON, SQL, or Protobuf.

Step 6: Set Up a Messaging Queue or Event Bus

- Once logical decoding is in place, you can set up a messaging queue or event bus to relay the decoded event logs to the target system. This might involve setting up a Kafka topic, an event bus like RabbitMQ, or a custom implementation.

For example, with Kafka, you can use a producer script to stream the decoded data:

plaintextkafka-console-producer.sh --broker-list localhost:9092 --topic my_topic

- Explanation: This step involves routing the CDC data from the PostgreSQL source to a messaging system like Kafka, where it can be consumed by other services or databases.

Step 7: Monitor and Maintain Your Setup

Once your logical replication and CDC pipeline are up and running, it's essential to continuously monitor the system for errors, performance issues, and data consistency. You should also manage replication slots and keep an eye on resource usage to prevent system overload.

Why Consider Managed CDC Solutions?

While PostgreSQL’s native logical replication is powerful, it can be challenging to set up and manage, especially when scaling to handle large volumes of real-time data. This is where managed CDC solutions come in.

CDC using logical replication requires significant data engineering work. Setting up the infrastructure to capture and decode changes, configure replication, and push data to downstream systems like Kafka or a data warehouse can be complex. Not to mention, maintaining such a setup requires monitoring replication lag, error handling, and scaling the system.

Fortunately, managed CDC platforms have emerged to simplify these processes. These services abstract away much of the complexity involved in setting up CDC pipelines and offer a more user-friendly experience for data teams.

Managed CDC services like Estuary take the heavy lifting out of setting up real-time data pipelines. These platforms handle the technical complexity of replication slots, Kafka, and event bus setup, allowing you to focus on processing and using the data, not on managing the infrastructure.

Managed Solution: Estuary for Real-Time PostgreSQL CDC

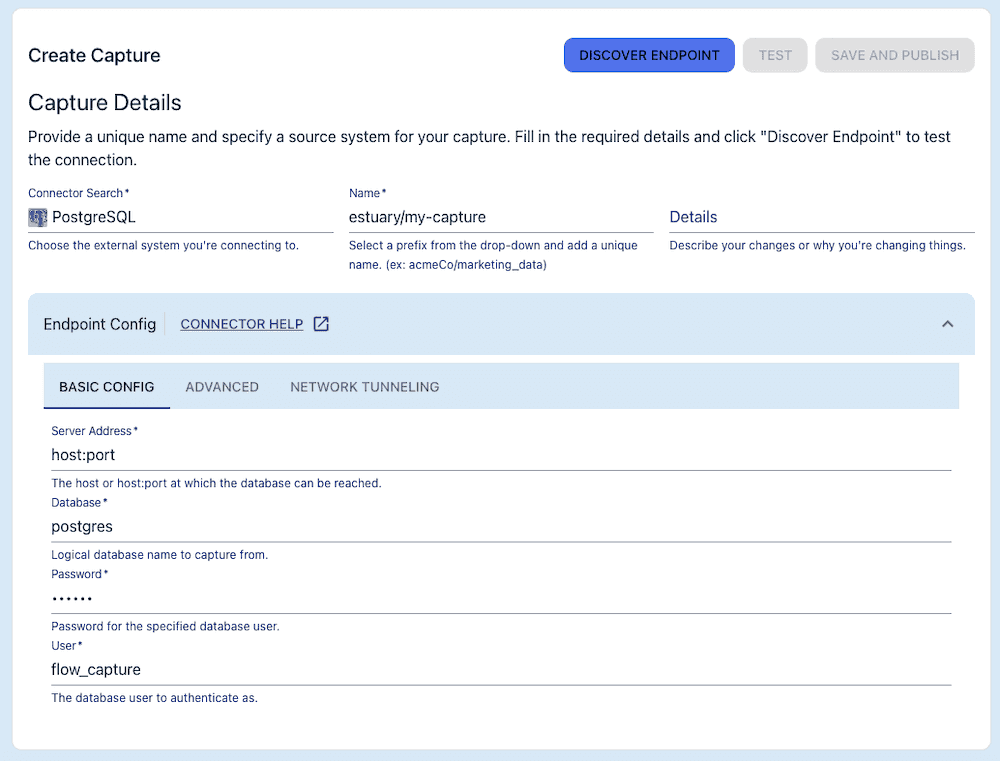

For teams looking for a simpler, more automated solution, Estuary offers an event-driven platform that simplifies the entire process of capturing and streaming PostgreSQL changes. Flow handles most of the manual setup for you, offering real-time CDC with minimal configuration.

When you use Estuary’s Postgres source connector, Estuary acts as a log-driven, real-time CDC pipeline.

After running some basic configuration on your database, as per the steps above, the Postgres connector essentially performs steps 5 and 6. It captures change events from the WAL and writes them to Estuary data collection. Cloud-storage-backed collection data is streamed through the Estuary runtime, which is built on Gazette, an open-source streaming broker that was also built by Estuary’s engineering team.

Your data collections captured from Postgres are automatically backed by a data lake in cloud storage. From there, they can be streamed to one or more target systems using any available materialization — or destination — connector. This occurs with millisecond latency.

Key Features of Estuary for Postgres CDC:

- High degree of customization using the intuitive web app or the CLI. Add additional data sources or multiple destinations to your CDC pipeline, and combine or separate streams of data without ever having to engineer the streaming broker directly.

- Ability to add transformations without an additional tool: aggregations, joins, and stateful stream transformations are all possible.

- Minimize performance impact of historical backfills. Backfilling data from Postgres tables is important for data integrity, even in event-driven CDC, but re-reading large tables can cause performance impacts or even errors like data duplication. Estuary avoids having to re-read the whole Postgres table by writing a checkpoint of where it left off in that source table.

By using Estuary, you avoid the complexity of setting up and maintaining Kafka, replication slots, and other event bus tools. You can focus on downstream applications and leverage the platform’s ease of use.

Looking for something else? Check out our CDC Guides for other systems:

Conclusion

PostgreSQL Change Data Capture (CDC) is an invaluable tool for businesses that rely on real-time data synchronization across systems. Whether you opt for query-based, trigger-based, or log-based CDC methods, each offers its own set of advantages that can be tailored to meet your specific needs.

In particular, logical replication offers a robust method for capturing changes, though it requires proper setup and maintenance. For teams looking to streamline this process and reduce the engineering overhead, managed solutions like Estuary are an excellent choice. Flow offers an intuitive, automated pipeline that eliminates the need for complex setups such as Kafka or manual replication slots, while also supporting data transformation and backfilling with ease.

By choosing the right CDC solution based on your team's capabilities and infrastructure, you can significantly enhance your data architecture, ensuring real-time insights and greater business agility.

Ready to Implement PostgreSQL CDC? Explore Estuary’s real-time, scalable CDC solution to effortlessly capture and stream changes from PostgreSQL to any destination system. Sign up today for a free trial and take the first step towards revolutionizing your data pipelines.

Key Takeaways

- PostgreSQL CDC enables real-time data synchronization, essential for modern data-driven applications.

- Logical replication is a powerful, flexible method for capturing data changes in Postgres, though it requires significant configuration.

- Managed solutions like Estuary offer a simplified, automated approach to CDC, eliminating complex setups and making it easier to scale.

- Estuary reduces the need for manual setup, with features like real-time streaming, transformations, and automated backfilling.

- Choosing the right CDC solution depends on your data volume, infrastructure, and engineering resources.

Additional Resources

Estuary resources:

- Quick Postgres CDC demo

- Blog series on CDC

- Estuary documentation

- Flow and the Postgres CDC connector code on GitHub

- Get started with Estuary today

Other resources:

- PostgreSQL documentation for topics mentioned: triggers, WAL, logical decoding.

- Custom Postgres CDC use-cases: Netflix

About the author

With over 15 years in data engineering, a seasoned expert in driving growth for early-stage data companies, focusing on strategies that attract customers and users. Extensive writing provides insights to help companies scale efficiently and effectively in an evolving data landscape.