From customer preferences to transaction records, every bit of information plays an important role in decision-making and strategy creation. Snowflake data types ensure that your data is stored, processed, and analyzed with the precision it deserves. Get them right and you are setting yourself up for data-driven success.

Snowflake's influence in the industry is undeniable. By the end of October 2022, the platform had 7,820 customers. Out of these, 330 customers were spending more than a million dollars each. Do you know what these savvy businesses had in common? They understood the potential of Snowflake data types.

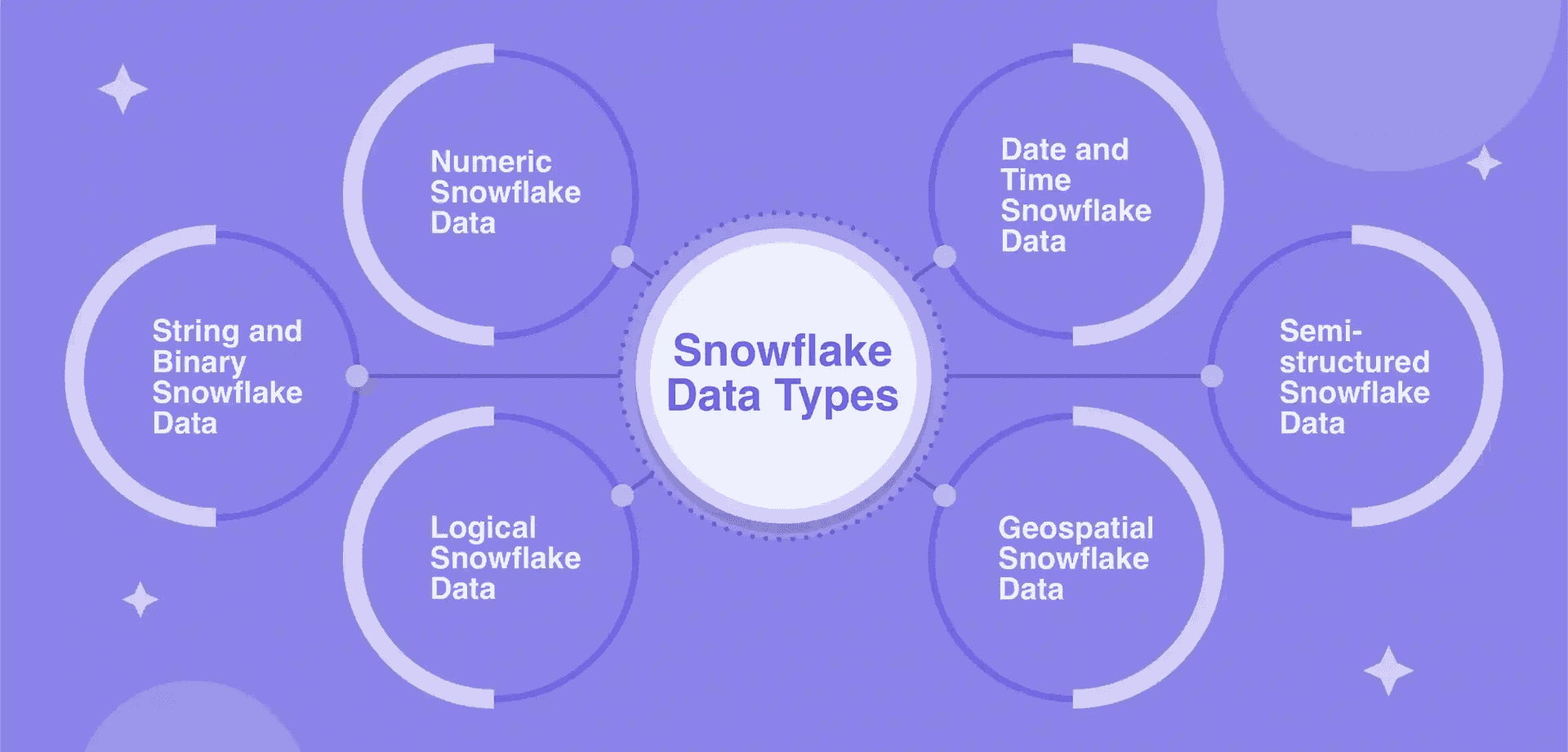

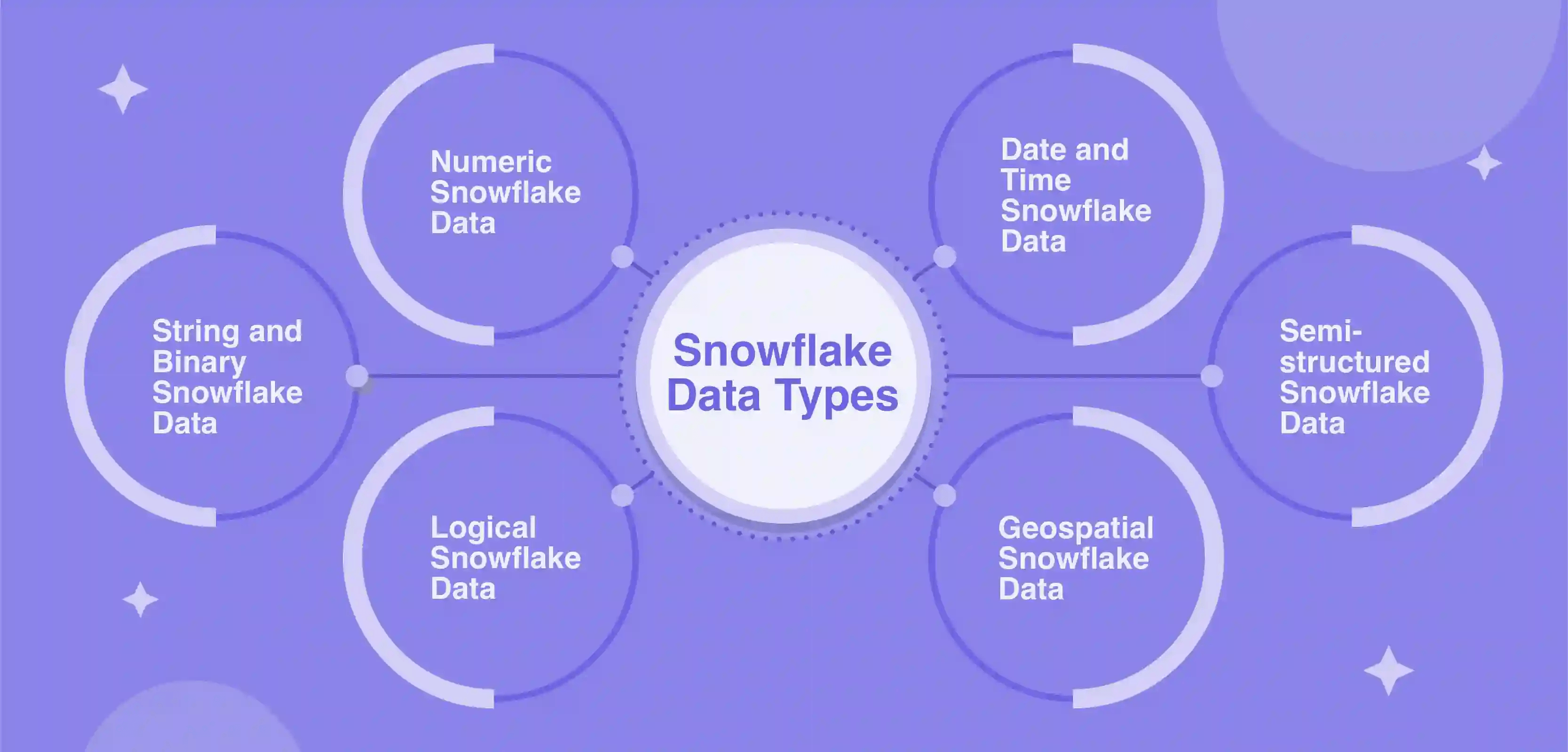

This guide explores six crucial Snowflake data types and the process of data type conversion, helping you enhance your expertise and optimize data storage, processing, and analysis for better decision-making and strategy implementation.

What Is Snowflake?

Snowflake is a cloud-native elastic data warehouse solution that was built specifically for the cloud. Rather than running on traditional on-premise hardware, Snowflake uses the power, scalability, and flexibility of cloud platforms like Amazon Web Services (AWS), Microsoft Azure, and Google Cloud Platform (GCP).

Key features that set Snowflake apart include:

- Multi-cluster architecture: Snowflake can use multiple cloud platforms and regions at once for more flexibility.

- Near-zero maintenance: Snowflake handles all infrastructure maintenance and optimization behind the scenes.

- Flexible data structure: Snowflake uses a flexible semi-structured data model to accommodate different data types and structures.

- Secure data sharing: Snowflake provides tightly controlled and audited access to data that can be securely shared across business units and even third parties.

- Powerful performance: Snowflake uses a unique combination of SQL query optimization, caching, and other innovations to provide fast performance.

- Separation of storage and computing: Snowflake separates storage and computing functions. This lets storage and computing to scale independently, reducing costs.

For integration, check out our guides on:

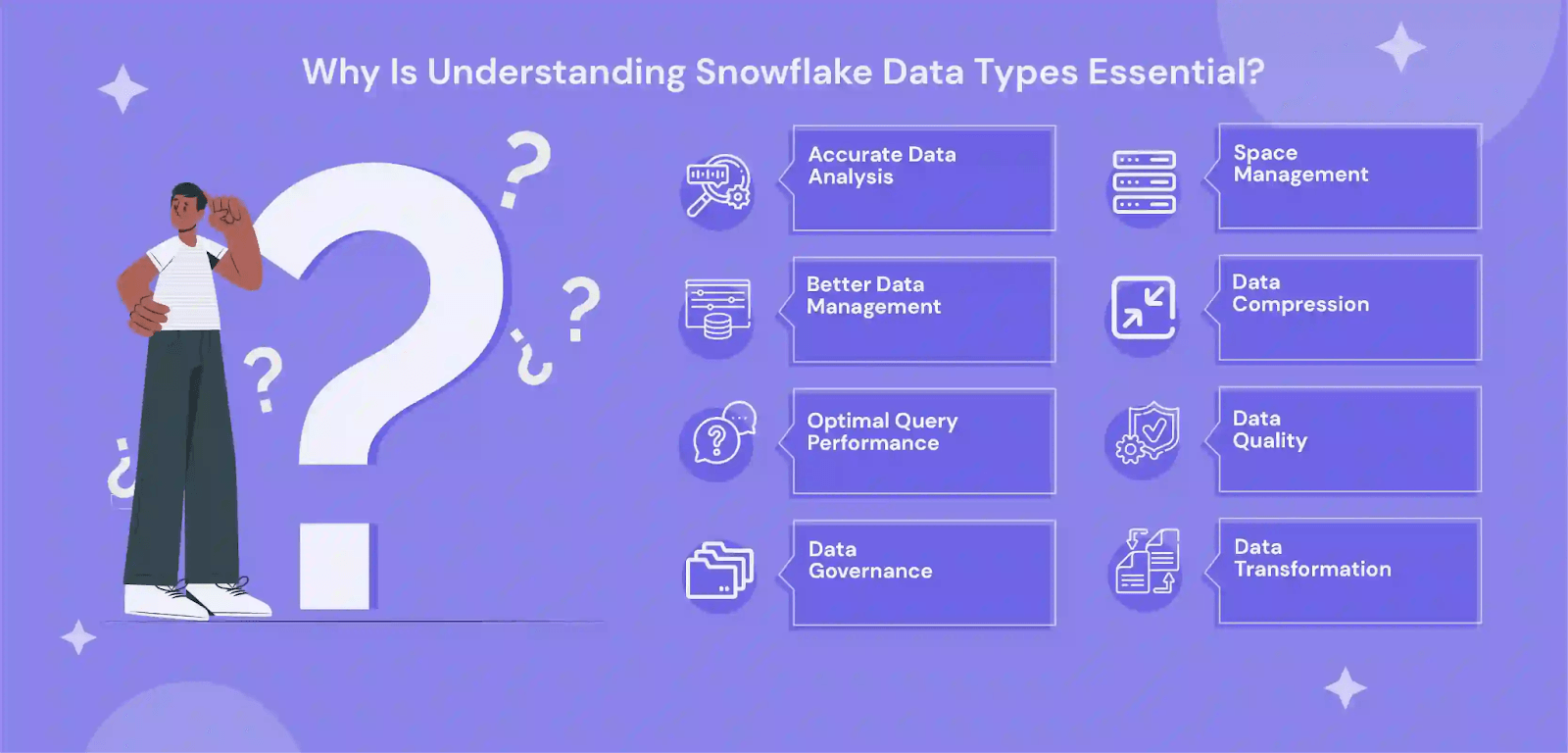

Why Understanding Snowflake Data Types Is Important

Snowflake offers a wide range of data types, all designed to suit different data storage and processing needs. Now why does understanding the ins and outs of these data types matter? Well, there are quite a few compelling reasons:

- Enable Data Portability: Snowflake supports familiar SQL data types like VARCHAR and TIMESTAMP. This makes migrating data into Snowflake easier from other systems.

- Avoid Pitfalls: Type conversion errors, precision loss, and other issues can occur without a solid understanding of data types. This knowledge helps you avoid problems.

- Unlock Functionality: Certain Snowflake features and functions are only supported for specific data types. You need to know the types available to maximize your capabilities.

- Simplify Data Management: Understanding the available data types makes data modeling and schema design more straightforward. You can map data appropriately to Snowflake types.

- Ensure Data Accuracy: Picking data types that match your data’s characteristics guarantees accurate storage and processing. Data quality issues can arise from data type mismatches.

- Optimize Storage & Performance: Choosing the right data type minimizes storage costs and maximizes query performance. Data types impact how data is compressed and indexed.

6 Essential Snowflake Data Types

Snowflake has a range of data types for use in columns, local variables, expressions, and parameters. Let’s discuss all the major data types supported in Snowflake – and also what isn’t supported in Snowflake.

1. Numeric Data Types

Numeric data types in Snowflake can be used to store numeric values like whole numbers, decimals, and floating point numbers. There are 2 important concepts to understand when dealing with numeric type data in Snowflake:

- Precision: This refers to the total number of digits a number can have. In Snowflake, the maximum precision for a numeric data type is 38.

- Scale: This is the count of digits after the decimal point. The maximum scale is 37 – so you can have up to 37 digits to the right of the decimal.

When storing the same value, precision does not affect storage but the scale does. Additionally, larger scales may slow down processing and use more memory.

Common numeric data types include:

- NUMBER: The primary data type for storing both integers and decimals. It comes with a default precision of 38 and a scale of 0.

- DECIMAL and NUMERIC: Both are interchangeable with NUMBER.

- INT, INTEGER, BIGINT, SMALLINT, TINYINT, BYTEINT: These types represent integers. Their precision is fixed at 38, with a scale of 0.

- FLOAT, FLOAT4, FLOAT8: Represented as 64-bit floating-point numbers.

- DOUBLE, DOUBLE PRECISION, REAL: These are other names for the FLOAT type.

Numeric types are versatile. They can hold both discrete and continuous values, support calculations, and are handy for columns like price, quantity, and ratings.

For instance, if you want to define a column for price with an optional precision parameter and scale parameter, you can use the following:

plaintextCREATE TABLE sales (price NUMBER(5,2));This sets up the price column to hold numbers up to 5 digits, with 2 digits after the decimal.

Special Considerations

- When storing large numbers, rounding errors might occur. Be cautious, especially when performing mathematical operations on these numbers.

- While the FLOAT data type provides flexibility, it comes with special values like 'NaN' (Not A Number), 'inf' (infinity), and '-inf' (negative infinity).

2. String & Binary Data Types

String and binary data types in Snowflake are used for textual and binary data storage and processing. These data types are very important when dealing with character strings, Unicode characters, or binary data.

When working with these data types in Snowflake, remember 2 fundamental points:

- Character Set: Snowflake uses the Unicode UTF-8 character set. This provides worldwide compatibility across various languages and symbols.

- Length Limitation: The maximum length varies depending on the data type. For example, VARCHAR can store up to 16,777,216 characters or bytes, while BINARY has an 8MB byte limit.

The essential string and binary data types in Snowflake are:

- VARCHAR: The quintessential data type for textual data, VARCHAR is equipped to store Unicode UTF-8 characters. Its length can range up to 16MB.

- CHAR, CHARACTER, NCHAR: These are synonymous with VARCHAR but with a twist. If no length is assigned, they default to storing just one character.

- STRING, TEXT, NVARCHAR, NVARCHAR2, CHAR VARYING, NCHAR VARYING: These are basically the variants of VARCHAR.

- BINARY: Tailored for binary data, BINARY doesn't recognize Unicode characters and is always byte-centric. The length can extend up to 8MB.

- VARBINARY: This is a flexible variant of BINARY. It retains the characteristics of BINARY but offers variable-length storage.

Columns with these data types can be created just like what we saw for the numeric data types. So to create a column for names, you can use:

plaintextCREATE TABLE names (first_name VARCHAR(50));This means the first_name column can store names with up to 50 characters.

Special Considerations

- Snowflake's CHAR doesn't pad shorter strings with spaces, deviating from common CHAR behavior.

- BINARY type is optimal for pure binary storage but remember to account for its hexadecimal display in Snowflake.

- In Snowflake, string constants should always be encapsulated between delimiter characters, either single quotes or dollar symbols.

- While VARCHAR supports a massive length, remember that multi-byte Unicode characters can reduce the actual number of characters storable.

3. Logical Data Type

Logical data types in Snowflake focus on storing Boolean values, catering to binary logical storage needs. It’s perfect for flags, indicators, condition evaluations, and binary state representations.

The main Logical data type in Snowflake is Boolean. This is the principal data type to represent logical states. Its core purpose is to capture either a TRUE or FALSE value but it can also represent an indeterminate or unknown state with NULL. The BOOLEAN data type can represent 3 distinct values:

- TRUE

- FALSE

- NULL (indicating an unknown state)

BOOLEAN is pivotal when discerning truth values, choices, or binary states in datasets. Adding logical data columns in your Snowflake database is very straightforward.

For example, when setting up a logical column, use:

plaintextCREATE TABLE user_activity (is_logged_in BOOLEAN);To populate the user_activity table, you can use:

INSERT INTO user_activity VALUES (TRUE), (FALSE), (NULL);

And, to filter records based on the BOOLEAN column:

SELECT * FROM user_activity WHERE is_logged_in = TRUE;

Special Considerations

- Be careful when converting other data types to BOOLEAN to ensure meaningful representation and avoid potential conversion errors.

- BOOLEAN data type doesn't support arithmetic operations. Therefore, arithmetic on BOOLEAN values like addition or multiplication is not possible.

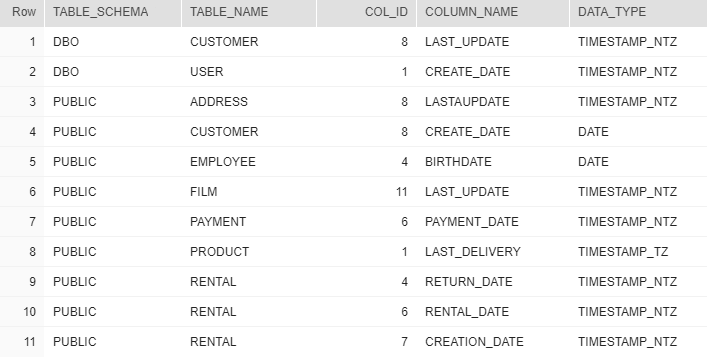

4. Date & Time Data Types

Date and time data types in Snowflake are integral for storing and managing chronological information. They play an important role when you need to capture moments, durations, and intervals. This makes them a must for a wide range of applications.

Here are the necessary concepts for working effectively with Snowflake's date and time data types:

- Time Zone Handling: Some timestamp data types in Snowflake come with or without time zone details. Knowing the difference confirms accurate data storage and retrieval.

- Calendar System: Snowflake operates on the Gregorian Calendar. Note that while years before 1582 are recognized, dates before this aren't adjusted to match the Julian Calendar.

The primary date and time data types in Snowflake are:

- DATE: Focused solely on capturing the date. Represented in formats like 'YYYY-MM-DD' or 'DD-MON-YYYY'.

- DATETIME: An alias for TIMESTAMP_NTZ. It combines both date and time but without time zone information.

- TIME: Stores the time of day, represented as 'HH:MI:SS', with optional fractional seconds.

- TIMESTAMP: Depending on the session settings, this type acts as a user-specified alias for TIMESTAMP_* variants.

- TIMESTAMP_LTZ (Local Time Zone): This type stores the UTC but operates and presents data in the current session’s time zone.

- TIMESTAMP_NTZ (No Time Zone): A representation of "wallclock" time, it stores both date and time but disregards any time zone data.

- TIMESTAMP_TZ (Time Zone): It captures the UTC and includes a time zone offset, denoted as 'YYYY-MM-DD HH:MI:SS +HH:MI'. If the time zone is not specified, it defaults to the session time zone offset.

To make a table with various date and time fields, specify the data type beside the column name:

plaintextCREATE TABLE event_log (

event_date DATE,

start_time TIME,

event_stamp TIMESTAMP_NTZ

);Special Considerations

- The TIMESTAMP_TZ type only stores the offset, not the actual time zone. This means it doesn't track daylight saving time changes.

- Always be conscious of the time zone settings in your Snowflake session as they can affect how TIMESTAMP_LTZ values are presented.

5. Semi-Structured Data Types

With semi-structured data types in Snowflake, you can manage and process non-relational data. These data types are specifically designed to handle data formats such as JSON, Avro, ORC, and Parquet.

To work efficiently with semi-structured data in Snowflake, you need to understand two key concepts:

- Data Extraction: Snowflake offers special operators and functions to extract, flatten, and navigate the complex hierarchies present in semi-structured data.

- Storage Mechanism: Unlike structured data, semi-structured data doesn't rely on predefined schemas. Instead, the data carries its own structure which Snowflake interprets dynamically.

Common Semi-Structured Data Types in Snowflake:

- VARIANT: This is the most versatile data type for storing semi-structured data. It can store arrays, objects, or a mix of both. Given its nature, VARIANT columns can hold data in multiple formats, like JSON or Avro.

- OBJECT: This type is used for storing key-value pairs. It's a subset of the VARIANT type.

- ARRAY: As the name suggests, this data type is for JSON arrays.

These data types process and query fluid or schema-less data to provide flexibility for data that doesn't fit neatly into traditional tables. Snowflake also provides advanced functions such as FLATTEN and PARSE_JSON tailored for these data types.

Let’s look at an example to create a VARIANT type column:

plaintextCREATE TABLE user_data (info VARIANT);Here, the info column can accommodate a diverse data set, from JSON objects to Avro data.

Special Considerations

- Snowflake does not support explicitly typed objects as of now.

- While VARIANT is powerful, it's important to confirm that the data stored in a VARIANT column is of the same format to avoid complications during querying.

- Extracting nested data from semi-structured types might require the use of specialized functions and might be more resource-intensive than querying structured data.

6. Geospatial Data Types

Geospatial data types in Snowflake cater to spatial data storage and processing which you can use for location-based information.

The primary Geospatial data types are:

- GEOMETRY: Handles spatial features in an Euclidean or Cartesian coordinate system.

- GEOGRAPHY: Represents the Earth in line with the WGS 84 standard, a global reference system for Earth.

There are a few fundamental things to know when working with geospatial types:

- They align with the GeoJSON format for spatial object representation.

- They capture spatial data necessary for applications like mapping services and logistics.

- They support specific spatial objects like Point, MultiPoint, LineString, MultiLineString, Polygon, and MultiPolygon.

Here’s how to define a GEOGRAPHY column:

plaintextCREATE TABLE geospatial_table (id INTEGER, location GEOGRAPHY);For data entry and querying, you can use:

plaintextINSERT INTO geospatial_table VALUES (1, 'POINT(-122.35 37.55)');

SELECT location FROM geospatial_table WHERE id = 1;Special Considerations

- Only 2D coordinates are supported for GEOMETRY.

- The GEOGRAPHY data type doesn't support altitude.

- Direct casting between GEOGRAPHY and GEOMETRY isn't straightforward and often requires GeoJSON as a middle step.

Data Type Conversion in Snowflake

Data type conversion, often termed as 'casting', is a crucial aspect of managing and manipulating data in Snowflake. It's the process of converting a value from one data type to another.

Snowflake provides both explicit and implicit methods for this conversion. Let’s discuss both of these.

Explicit Casting

This method requires users to specify the desired data type. Snowflake offers multiple options for this:

- CAST Function: Used as CAST(value AS type).

- Cast Operator (::): Used as value::type.

- SQL Functions: Functions like TO_DOUBLE(value).

Examples:

plaintextSELECT CAST(’2022-04-01’ AS DATE);

SELECT CAST(’2022-04-01’ AS DATE);

SELECT ’2022-04-01’::DATE;

SELECT ’2022-04-01’::DATE;Implicit Casting

Snowflake automatically converts data types in certain situations. This is mainly seen when a function or operator expects a specific type but receives a different, albeit compatible, type.

For example, if you use:

plaintextSELECT 17 || ’76’;This converts the INTEGER 17 to VARCHAR and outputs ’1776’.

Limitations

While Snowflake does allow various methods to cast data from one type to another, it has some limitations as well:

- Not all data types support implicit casting.

- Some conversions can result in a loss of precision. For instance, converting a FLOAT to INTEGER will round the value.

- Snowflake doesn't permit conversions that might truncate a VARCHAR value or result in the loss of significant digits.

Conclusion

Snowflake data types can empower your business to efficiently store and process diverse data. But only when you understand the true potential of each of these data types, can you actually ensure that your data is represented accurately, maximizing query performance and preventing issues.

To efficiently ingest data into Snowflake for analysis without worrying about data types, consider using Estuary. It offers no-code, real-time data pipelines that simplify data ingestion into Snowflake.

If you're curious about how Estuary can help you with Snowflake integration and setting up data pipelines, sign up here for free or get in touch with our team.

About the author

Jeffrey is a data engineering professional with over 15 years of experience, helping early-stage data companies scale by combining technical expertise with growth-focused strategies. His writing shares practical insights on data systems and efficient scaling.