Poor data quality has measurable consequences for organizations of every size. According to Gartner, large enterprises lose an average of $12.9 million per year to poor data quality, and 59% of organizations do not even measure that cost. What cannot be measured cannot be improved.

Data quality refers to the degree to which data is accurate, complete, consistent, timely, and fit for its intended use. It is always context-dependent: the same dataset can be high quality for one use case and inadequate for another, depending on the decisions it needs to support.

This guide covers the core dimensions of data quality, the standards organizations use to measure and enforce it, and real-world case studies showing what good data quality practice looks like in action. It also covers where tools like Estuary fit into keeping data clean as it moves across systems.

What Is Data Quality?

As defined above, data quality is the degree to which data is accurate, complete, consistent, timely, and fit for its intended use, and it is always judged against that use. A dataset that is good enough for one decision or process can be inadequate for another.

The Gartner figures cited in the introduction show why this matters in practice. Bad data costs large organizations an average of $12.9 million annually, yet 59% of organizations do not measure that cost at all, which makes it nearly impossible to build a business case for fixing it.

Organizations prioritize data quality for three core reasons. First, reliable data reduces the time teams spend on manual fixes and rework, which, according to multiple industry surveys, consume a significant portion of a data practitioner's working time. Second, decisions made on inaccurate or incomplete data produce inaccurate or incomplete outcomes, regardless of how sophisticated the analytics layer is. Third, customer-facing systems such as CRMs, recommendation engines, and billing platforms depend on clean data to function properly. A single duplicate or outdated record in a customer database can result in failed communications, billing errors, or compliance violations under regulations like GDPR and CCPA.

Enhancing Data Quality: Exploring 8 Key Dimensions For Reliable & Valuable Data

Understanding what makes data "good" requires a framework. The following eight dimensions give data teams a practical lens for assessing where data quality problems exist and how to prioritize fixing them. This approach aligns with how DAMA International and Gartner recommend organizations structure their data quality programs.

Accuracy

Accuracy is the degree to which data correctly reflects the real-world entity or event it represents. If your data is accurate, the systems and decisions relying on it will behave as expected. Inaccurate data, such as a wrong date of hire or an incorrect product price, can have downstream consequences across every system that consumes it.

Accuracy is typically measured by comparing data against a trusted reference source or through direct physical verification. Industries with regulatory requirements, such as healthcare under HIPAA and finance under SOX, treat accuracy as a compliance requirement, not just a best practice.
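As a rough illustration of the reference-comparison approach, the sketch below computes the share of records whose value matches a trusted source. The record layout, the `employee_id` key, the `hire_date` field, and the choice of signed contracts as the reference are all hypothetical.

```python
def accuracy_rate(records, reference, key, field):
    """Share of records whose `field` matches the trusted reference value.

    Records with no counterpart in the reference are skipped, because
    they cannot be verified either way.
    """
    ref_by_key = {row[key]: row[field] for row in reference}
    checked = [r for r in records if r[key] in ref_by_key]
    if not checked:
        return None  # nothing could be verified
    matches = sum(1 for r in checked if r[field] == ref_by_key[r[key]])
    return matches / len(checked)


hr_system = [
    {"employee_id": 1, "hire_date": "2021-03-01"},
    {"employee_id": 2, "hire_date": "2020-11-15"},
    {"employee_id": 3, "hire_date": "2019-07-30"},
    {"employee_id": 4, "hire_date": "2022-01-10"},  # not in the reference
]
signed_contracts = [  # treated here as the trusted reference
    {"employee_id": 1, "hire_date": "2021-03-01"},
    {"employee_id": 2, "hire_date": "2020-11-16"},
    {"employee_id": 3, "hire_date": "2019-07-30"},
]

rate = accuracy_rate(hr_system, signed_contracts, "employee_id", "hire_date")
print(f"accuracy: {rate:.0%}")
```

Here two of the three verifiable records match, so the measured accuracy is about 67%. The fourth record is excluded rather than counted as wrong, which is a design choice worth stating explicitly in any real report.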

Completeness

Completeness measures whether all required data values are present. It is not about having every optional field populated but about having enough data to support the intended use case. A customer record missing a billing address is incomplete for an invoicing system, even if all other fields are populated.

Completeness is measured as a percentage of required fields populated across a dataset. DAMA DMBOK treats completeness as one of the foundational dimensions because incomplete data directly limits what can be analyzed or acted upon.
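A minimal sketch of that percentage, assuming records are plain dictionaries and that `None` and empty strings both count as missing. The field names are illustrative only.

```python
def completeness(records, required_fields):
    """Fraction of required field slots that hold a usable value."""
    total = len(records) * len(required_fields)
    if total == 0:
        return None
    filled = sum(
        1
        for record in records
        for field in required_fields
        if record.get(field) not in (None, "")
    )
    return filled / total


customers = [
    {"name": "Ana", "email": "ana@example.com", "billing_address": "1 Main St"},
    {"name": "Bo", "email": "bo@example.com", "billing_address": ""},
    {"name": "Cy", "email": None, "billing_address": "9 Oak Ave"},
    {"name": "Di", "email": "di@example.com", "billing_address": "4 Elm Rd"},
]

# Only the fields the invoicing use case needs are treated as required.
score = completeness(customers, ["email", "billing_address"])
print(f"completeness: {score:.0%}")
```

Six of the eight required slots are populated, so the score is 75%. Changing the list of required fields changes the score, which reflects the point that completeness depends on the intended use.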

Consistency

Consistency checks whether the same data stored across multiple systems or instances aligns. If a customer's phone number appears differently in your CRM than in your billing system, you have a consistency problem, even if both values are individually plausible.

Consistency is especially critical in organizations running multiple platforms that share master data. Inconsistencies often surface during data integration projects and are one of the most commonly cited data quality challenges, according to Gartner.
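One simple way to surface such mismatches is to compare the shared records of two systems field by field. The sketch below assumes each system has been exported to a dictionary keyed by a common customer ID; the IDs and phone numbers are made up.

```python
def find_inconsistencies(system_a, system_b, field):
    """Return IDs present in both systems whose `field` values differ."""
    shared = system_a.keys() & system_b.keys()
    return sorted(
        cid for cid in shared if system_a[cid][field] != system_b[cid][field]
    )


crm = {
    "c1": {"phone": "555-0100"},
    "c2": {"phone": "555-0111"},
    "c3": {"phone": "555-0122"},
}
billing = {
    "c1": {"phone": "555-0100"},
    "c2": {"phone": "555-0199"},  # differs from the CRM value
    "c4": {"phone": "555-0133"},
}

mismatched = find_inconsistencies(crm, billing, "phone")
print(mismatched)
```

Only `c2` is reported. Records that exist in just one system (`c3`, `c4`) are a separate problem and are deliberately left out of this check.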

Uniqueness

Uniqueness is the absence of duplicate records within or across datasets. Every entity, whether a customer, product, or transaction, should appear exactly once. Duplicate records inflate metrics, corrupt aggregations, and create friction in customer-facing workflows such as marketing and support.

Uniqueness is measured by running deduplication checks and calculating the rate of duplicate records in a dataset. Tools like data observability platforms help catch duplicates before they propagate downstream.
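The duplicate rate can be sketched in a few lines. This example assumes exact matching on a normalized key (trimmed and lowercased); real deduplication often needs fuzzy matching, which is not shown here.

```python
from collections import Counter


def duplicate_rate(records, key_fields):
    """Fraction of records that are surplus copies of an earlier record."""
    keys = [
        tuple(str(r[f]).strip().lower() for f in key_fields) for r in records
    ]
    if not keys:
        return None
    counts = Counter(keys)
    surplus = sum(n - 1 for n in counts.values())
    return surplus / len(keys)


contacts = [
    {"email": "ana@example.com"},
    {"email": "Ana@Example.com"},   # same address, different casing
    {"email": "bo@example.com"},
    {"email": "cy@example.com"},
    {"email": " bo@example.com"},   # same address, stray whitespace
]

rate = duplicate_rate(contacts, ["email"])
print(f"duplicate rate: {rate:.0%}")
```

Two of the five records are surplus copies, giving a duplicate rate of 40%.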

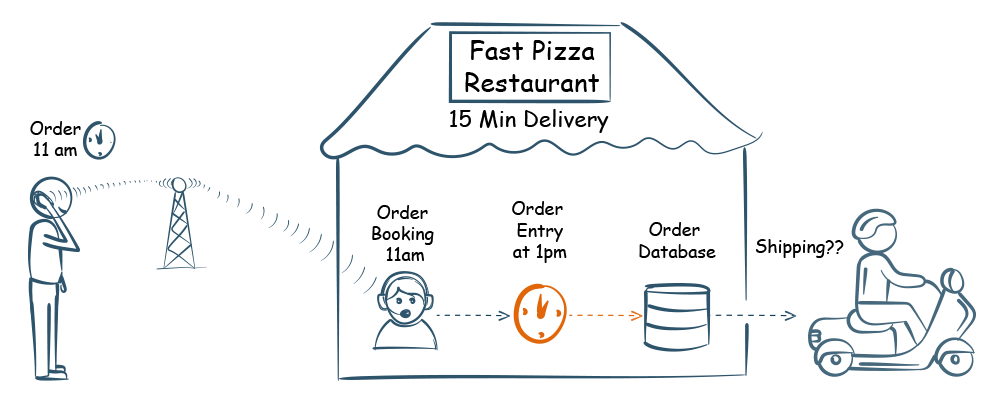

Timeliness

Timeliness measures whether data is available when it is needed, soon enough after the underlying event to be useful. Stale data is a timeliness failure, whether it shows up in a real-time analytics system or arrives through an overnight batch CRM sync, even if the data was accurate when it was captured.

Timeliness is directly tied to pipeline latency. The gap between when an event occurs at the source and when it is available for analysis determines how current your data is. This is one of the primary reasons organizations move from batch ETL to real-time CDC pipelines.
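To make that gap concrete, the following sketch computes the lag between source event time and availability for each row and flags rows that exceed a target. The `event_at` and `available_at` field names and the five-minute target are assumptions for illustration.

```python
from datetime import datetime, timedelta


def late_rows(rows, max_lag):
    """Return (id, lag) pairs for rows that arrived later than `max_lag`.

    Lag is the time between the event occurring at the source
    (`event_at`) and the row becoming queryable (`available_at`).
    """
    late = []
    for row in rows:
        lag = row["available_at"] - row["event_at"]
        if lag > max_lag:
            late.append((row["id"], lag))
    return late


rows = [
    {"id": "o-1", "event_at": datetime(2024, 5, 1, 9, 0, 0),
     "available_at": datetime(2024, 5, 1, 9, 0, 2)},
    {"id": "o-2", "event_at": datetime(2024, 5, 1, 9, 5, 0),
     "available_at": datetime(2024, 5, 2, 2, 0, 0)},  # nightly batch load
]

late = late_rows(rows, max_lag=timedelta(minutes=5))
for row_id, lag in late:
    print(f"{row_id} arrived {lag} after the event")
```

The first row lands two seconds after its event and passes; the second waits for a nightly load and is flagged.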

Validity

Validity is the extent to which data conforms to defined business rules, formats, and acceptable value ranges. A date of birth entered as February 30 is invalid. A six-digit ZIP code for a US address is invalid, because US ZIP codes have five digits (or nine in ZIP+4 form). Data can be complete and consistent but still invalid if it violates domain constraints.

Validity is enforced through schema validation rules and data contracts at the point of ingestion. dbt and similar transformation tools allow teams to define and test validity rules as part of their pipeline.
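The two examples above (an impossible calendar date and a malformed US ZIP code) can be expressed as small rule functions. This is a generic sketch using only the Python standard library, not a dbt test or any specific tool's rule syntax.

```python
import re
from datetime import date

# Five digits, optionally followed by a hyphen and four more (ZIP+4).
US_ZIP = re.compile(r"[0-9]{5}(-[0-9]{4})?")


def is_valid_date(year, month, day):
    """True if the parts form a real calendar date."""
    try:
        date(year, month, day)
        return True
    except ValueError:
        return False


def is_valid_us_zip(value):
    """True if `value` is formatted as a US ZIP or ZIP+4 code."""
    return US_ZIP.fullmatch(value) is not None


print(is_valid_date(1990, 2, 30))     # February 30 does not exist
print(is_valid_us_zip("123456"))      # six digits
print(is_valid_us_zip("12345-6789"))  # ZIP+4 form
```

Note that these checks only confirm the format. A well-formed ZIP code can still point to the wrong place, which is an accuracy problem rather than a validity problem.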

Currency

Currency refers to how up-to-date data is relative to the real-world state it represents. Unlike timeliness, which is about availability, currency is about whether the data still reflects reality. A customer's address that was accurate two years ago may no longer be current if they have moved.

Currency is typically managed through scheduled refresh cycles or event-driven updates that propagate changes from source systems to downstream consumers. Change Data Capture is one of the most effective mechanisms for keeping data current across distributed systems.
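Where currency is managed with refresh cycles, a basic check is how long ago each record was last confirmed against the source. The sketch below assumes a hypothetical `last_verified` date on each record and a one-year tolerance; both are illustrative choices.

```python
from datetime import date


def stale_records(records, as_of, max_age_days):
    """Return IDs of records not confirmed within `max_age_days`."""
    return [
        r["id"]
        for r in records
        if (as_of - r["last_verified"]).days > max_age_days
    ]


addresses = [
    {"id": "c-1", "last_verified": date(2024, 3, 1)},
    {"id": "c-2", "last_verified": date(2022, 3, 1)},  # over two years old
]

stale = stale_records(addresses, as_of=date(2024, 6, 1), max_age_days=365)
print(stale)
```

Passing `as_of` explicitly, rather than reading the system clock inside the function, keeps the check reproducible when it is rerun on historical snapshots.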

Integrity

Data integrity refers to the preservation of relationships between data entities as data moves across systems. In a relational database, referential integrity means that a foreign key in one table always corresponds to a valid primary key in another. When that relationship breaks, queries return incorrect results and downstream systems behave unpredictably.

Integrity failures often occur during data migrations, pipeline transformations, or system integrations where relationships are not explicitly enforced. ISO 8000 addresses data integrity as part of its broader framework for data quality governance.
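A referential integrity check can be sketched as a search for orphaned rows, meaning child rows whose foreign key has no matching parent. The `orders` and `customers` tables below are hypothetical, and in a relational database the same check would normally be a foreign key constraint or an anti-join query.

```python
def orphaned_rows(child_rows, fk_field, parent_rows, pk_field):
    """Return child rows whose foreign key matches no parent primary key."""
    parent_keys = {p[pk_field] for p in parent_rows}
    return [c for c in child_rows if c[fk_field] not in parent_keys]


customers = [{"customer_id": 1}, {"customer_id": 2}]
orders = [
    {"order_id": 10, "customer_id": 1},
    {"order_id": 11, "customer_id": 3},  # customer 3 does not exist
]

orphans = orphaned_rows(orders, "customer_id", customers, "customer_id")
print(orphans)
```

Running a check like this after a migration or pipeline transformation catches broken relationships before downstream queries silently drop or miscount the affected rows.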

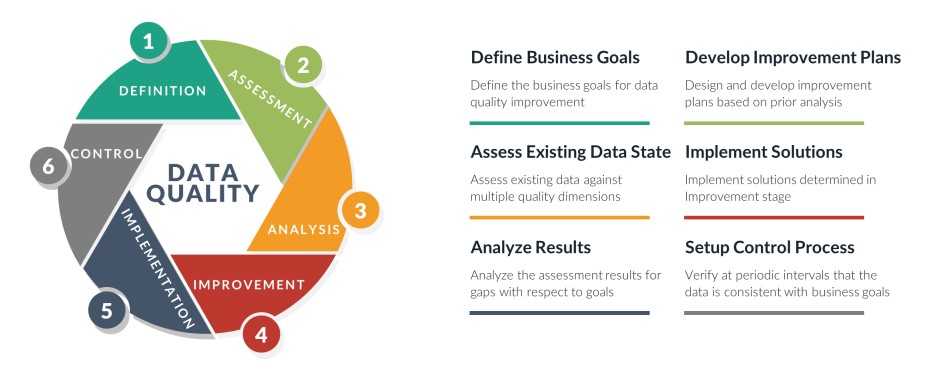

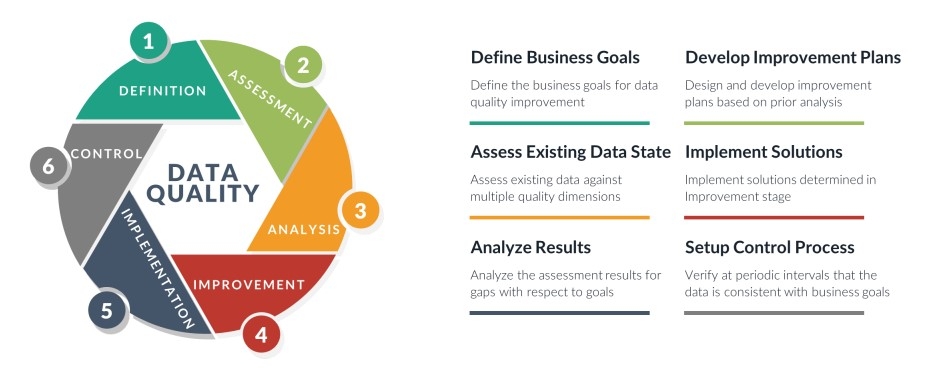

Understanding 6 Data Quality Standards

Here are 6 prominent data quality standards and frameworks.

ISO 8000

ISO 8000 is the international standard specifically focused on data quality. Developed by the International Organization for Standardization, it provides a framework for defining, measuring, and improving data quality across industries and organizational sizes.

ISO 8000 is notable for treating data quality as context-dependent rather than absolute. Data can meet quality requirements for one purpose but not another, depending on the requirements defined. Within the series, ISO 8000-61 defines a process reference model for data quality management, while the ISO 8000-100 parts address master data quality, which is especially relevant to supply chain and product data. The standard also covers data governance, maturity assessment, and portability, making it one of the most comprehensive frameworks available for enterprise data quality programs.

Total Data Quality Management (TDQM)

Total Data Quality Management (TDQM), developed at MIT, is an end-to-end approach to managing data quality from creation to consumption. Rather than treating data quality as a one-time cleanup exercise, TDQM applies continuous improvement principles borrowed from manufacturing quality management to data processes.

Key features of TDQM include root cause analysis to prevent recurring issues rather than just fixing symptoms, and coverage of the full data lifecycle including creation, collection, storage, maintenance, transfer, and use. Organizations that implement TDQM typically see improvements in operational efficiency, decision-making quality, and customer satisfaction because data quality is managed systematically rather than reactively.

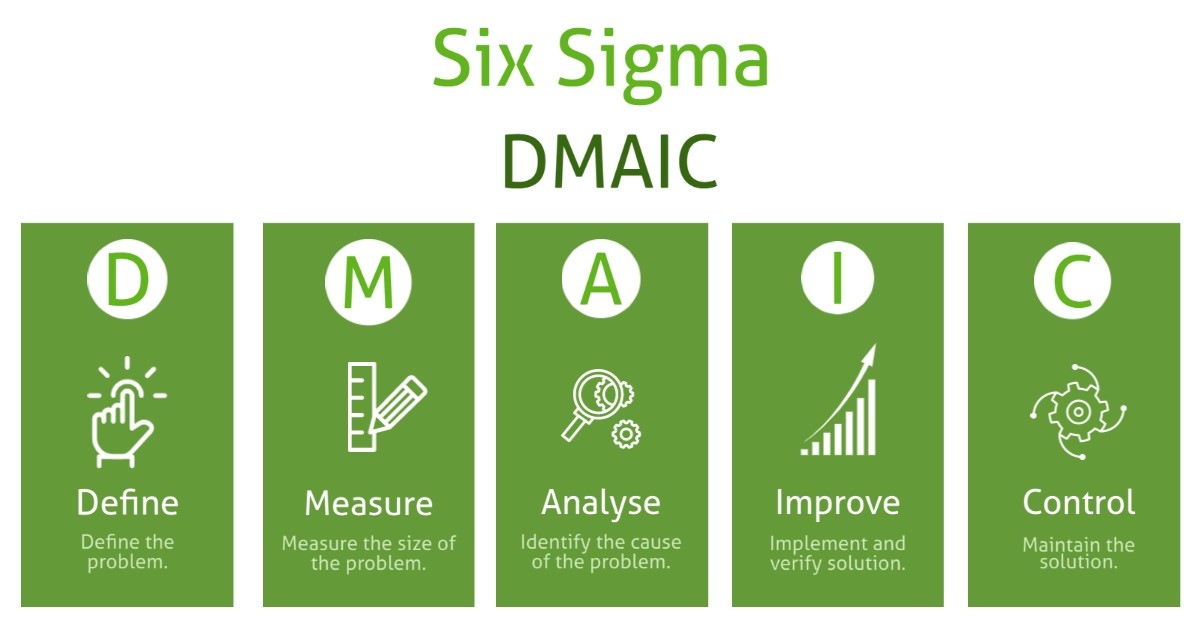

Six Sigma

Six Sigma is a process improvement methodology that applies statistical analysis to reduce defects and variation. Applied to data quality, it uses the DMAIC model (Define, Measure, Analyze, Improve, Control) to systematically identify and address quality issues.

- Define: identify the data quality problem and understand the needs of data consumers.

- Measure: assess the current state of data quality across relevant dimensions.

- Analyze: investigate root causes of identified quality issues.

- Improve: implement solutions including process redesign, system changes, or one-time data remediation.

- Control: monitor data quality continuously using metrics to sustain improvements over time.

Six Sigma is particularly effective in regulated industries where data quality defects have direct compliance consequences, such as financial services and pharmaceutical manufacturing.
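For the Measure and Control steps, Six Sigma programs commonly express defect levels as defects per million opportunities (DPMO). That metric is not discussed above, so treat the sketch below as one possible way to quantify data defects, with each checked field counted as an opportunity. The sample counts are invented.

```python
def dpmo(defects, units, opportunities_per_unit):
    """Defects per million opportunities.

    For data quality, a unit can be a record and an opportunity can be
    one field that is checked against a rule.
    """
    opportunities = units * opportunities_per_unit
    if opportunities == 0:
        raise ValueError("no opportunities to measure")
    return defects * 1_000_000 / opportunities


# Measure: 450 failed field checks across 10,000 records with 5 checked fields.
baseline = dpmo(defects=450, units=10_000, opportunities_per_unit=5)

# Control: the same measurement repeated after an improvement.
after_fix = dpmo(defects=90, units=10_000, opportunities_per_unit=5)

print(f"baseline: {baseline:.0f} DPMO, after fix: {after_fix:.0f} DPMO")
```

Tracking the same figure over time gives the Control step a single number to monitor, as long as the definition of a defect stays fixed between measurements.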

DAMA DMBOK

The Data Management Body of Knowledge (DAMA DMBOK), published by DAMA International, is a comprehensive reference framework covering all aspects of data management across 11 knowledge areas including data governance, data architecture, data quality, metadata management, master data management, and data warehousing.

For data quality specifically, DAMA DMBOK provides a structured approach to defining quality dimensions, establishing measurement processes, assigning data stewardship responsibilities, and embedding quality controls into pipelines and governance programs. It is not a prescriptive standard but a knowledge framework that organizations adapt to their specific context and maturity level.

ISO/IEC 25012

ISO/IEC 25012 is an international standard that defines a data quality model for data held in software and data systems. It defines 15 data quality characteristics viewed from two perspectives: inherent quality (properties of the data itself, such as accuracy, completeness, and consistency) and system-dependent quality (properties that depend on the system context, such as availability and portability). Some characteristics are relevant from both perspectives.

ISO/IEC 25012 is more technically focused than ISO 8000 and is commonly used by software engineering and data architecture teams to define quality requirements for data products and information systems. It provides a common vocabulary for specifying and evaluating data quality that bridges business and technical stakeholders.

IMF Data Quality Assessment Framework (DQAF)

The IMF Data Quality Assessment Framework (DQAF) is a tool developed by the International Monetary Fund to assess the quality of statistical systems, processes, and outputs. It is built on the United Nations Fundamental Principles of Official Statistics and is used primarily in the context of national and international statistical reporting.

The DQAF evaluates data quality across five dimensions:

- assurances of integrity (objectivity in data collection and dissemination),

- methodological soundness (alignment with international standards),

- accuracy and reliability (sound source data and statistical techniques),

- serviceability (consistency, timeliness, and predictable revision policies),

- accessibility (ease of access to data and metadata).

While most relevant to government statistical agencies and international organizations, the DQAF's dimensional framework is also referenced by research institutions and policy-driven organizations managing large public datasets.

3 Real-life Examples Of Data Quality Practices

Let's explore some examples and case studies that highlight the significance of good data quality and its impact in different sectors.

IKEA

IKEA Australia's loyalty program, IKEA Family, aimed to personalize communication with its members to build loyalty and engagement.

To better understand and target its customers, the company recognized the need for data enrichment, particularly regarding postal addresses. Manually entered addresses resulted in poor data quality, including errors, incomplete data, and formatting issues, leading to a match rate of only 83%.

To address the challenges with data quality, IKEA ensured accurate data entry and improved data enrichment for their loyalty program. The solution streamlined the sign-up process and reduced errors and keystrokes.

The following are the outcomes:

- The enriched datasets led to a 7% increase in members' annual spending.

- The improvement in data quality enabled more targeted communications with customers.

- IKEA Australia's data quality match rate rose from 83% to 95%, an increase of 12 percentage points.

- The system of validated address data minimized the risk of incorrect information entering IKEA Australia's Customer Relationship Management (CRM) system.

Hope Media Group (HMG)

Hope Media Group was experiencing a significant data quality issue due to the continuous influx of contacts from various sources, resulting in numerous duplicate records. The transition to a centralized platform from multiple disparate solutions further highlighted the need for advanced data quality tools.

HMG implemented a data management strategy that involved scanning for thousands of duplicate records and creating a 'best record' for a single donor view in their CRM. They automated the process using rules to select the 'best record' and populate it with the best data from other duplicates. This saved review time and created a process for automatically reviewing duplicates. Ambiguous records were sent for further analysis and processing.
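The rule-based 'best record' idea can be sketched as follows. The specific rule used here (prefer the most recently updated record, then fill empty fields from older duplicates) and the field names are assumptions for illustration, not HMG's actual rules or tooling.

```python
def build_best_record(duplicates, fields):
    """Merge duplicate contact records into one 'best record'.

    Illustrative rule: the most recently updated record wins, and any
    field it leaves empty is filled from the next most recent duplicate
    that has a value.
    """
    ranked = sorted(duplicates, key=lambda r: r["updated_at"], reverse=True)
    best = {}
    for field in fields:
        best[field] = next(
            (r[field] for r in ranked if r.get(field) not in (None, "")),
            None,
        )
    return best


duplicates = [
    {"name": "A. Diaz", "email": "", "phone": "555-0100",
     "updated_at": "2023-05-01"},
    {"name": "Ana Diaz", "email": "ana@example.org", "phone": None,
     "updated_at": "2024-02-10"},
]

best = build_best_record(duplicates, ["name", "email", "phone"])
print(best)
```

The merged record takes the name and email from the newer entry and the phone number from the older one. Cases where two duplicates disagree on a populated field are exactly the ambiguous records that would be routed to manual review.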

HMG has successfully identified, cleansed, and merged over 10,000 duplicate records to date, with the process ongoing. As they expand their CRM to include more data capture sources, the need for their data management strategy is increasing. This approach allowed them to clean their legacy datasets.

Northern Michigan University

Northern Michigan University faced a data quality issue when they introduced a self-service technology for students to manage administrative tasks, including address changes. This caused incorrect address data to be entered into the school's database.

The university implemented a real-time address verification system within the self-service technology. This system verified the address information entered over the web and prompted users to provide missing address elements when an incomplete address was detected.

The real-time address verification system has given the university confidence in the usability of the address data students entered. The system validates addresses against official postal files in real time before a student submits them.

If an address is incomplete, the student is prompted to augment it, reducing the resources spent on manually researching undeliverable addresses and ensuring accurate and complete address data for all students.
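The prompting step can be sketched as a check for missing address elements before submission. This simplified example only tests for presence; it does not validate against postal files the way the university's system does, and the required element names are assumed.

```python
REQUIRED_ELEMENTS = ("street", "city", "state", "postal_code")


def missing_elements(address):
    """Return the required address elements that are absent or blank."""
    return [
        name
        for name in REQUIRED_ELEMENTS
        if not str(address.get(name) or "").strip()
    ]


def prompt_for(address):
    """Return a prompt for the user, or None if nothing is missing."""
    missing = missing_elements(address)
    if not missing:
        return None
    return "Please provide: " + ", ".join(missing)


submitted = {
    "street": "100 Example St",
    "city": "Marquette",
    "state": "",
    "postal_code": None,
}
print(prompt_for(submitted))
```

Blocking submission until the prompt is resolved moves the correction to the person who knows the right answer, instead of leaving it for staff to research later.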

How Estuary Supports Data Quality in Motion

Most data quality problems do not originate in the analytics layer. They originate in transit, when data moves between source systems, pipelines, and destinations without proper validation, deduplication, or schema enforcement. This is the specific problem Estuary is built to address.

Estuary is a real-time CDC and data integration platform that helps maintain data quality across the pipeline, not just at the point of collection.

- Capturing only committed changes. Rather than re-copying full datasets on a schedule, Estuary uses Change Data Capture to detect and propagate only committed inserts, updates, and deletes from source systems. This eliminates the stale data problem that batch pipelines introduce and ensures your destination reflects the current state of the source.

- Exactly-once delivery. Duplicate records are one of the most common data quality issues in high-volume pipelines. Estuary's pipeline architecture guarantees exactly-once semantics, meaning each change event is written to the destination once and only once, without requiring a downstream deduplication step.

- Built-in schema validation. Estuary validates incoming data against defined schemas as it flows through the pipeline. When a source system introduces a schema change, Estuary detects it, flags the discrepancy, and supports schema evolution rather than silently passing malformed records downstream.

- Streaming transformations. Teams can apply SQL-based transformations to data in flight before it reaches the destination, enforcing business rules, standardizing formats, and filtering invalid records as part of the pipeline rather than as a separate cleanup job.

- Sub-second latency to destinations. Timeliness is a core data quality dimension. Estuary materializes data to destinations like Snowflake, BigQuery, and Redshift within milliseconds of the source event, keeping your analytics layer current without manual intervention.

For teams managing data quality across distributed systems, the pipeline layer is where quality is either preserved or degraded. Estuary is designed to preserve it.
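To show why change-based delivery and duplicate protection matter for quality, here is a generic sketch of applying change events idempotently at a destination. It is not Estuary's API or implementation. It illustrates a common, different technique (ignoring replayed events by sequence number), and the event shape with `key`, `seq`, `op`, and `row` is hypothetical.

```python
def apply_change(table, applied_seq, event):
    """Apply one change event to `table` (a dict keyed by primary key).

    Events carry a per-key increasing `seq`. An event whose `seq` is not
    newer than the last one applied for that key is ignored, so replays
    do not create duplicates or resurrect old values.
    """
    key, seq = event["key"], event["seq"]
    if seq <= applied_seq.get(key, -1):
        return False  # already applied, or older than current state
    if event["op"] == "delete":
        table.pop(key, None)
    else:  # insert or update
        table[key] = event["row"]
    applied_seq[key] = seq
    return True


events = [
    {"key": 1, "seq": 1, "op": "insert", "row": {"status": "new"}},
    {"key": 1, "seq": 2, "op": "update", "row": {"status": "shipped"}},
    {"key": 1, "seq": 1, "op": "insert", "row": {"status": "new"}},  # replay
    {"key": 2, "seq": 1, "op": "insert", "row": {"status": "new"}},
    {"key": 2, "seq": 2, "op": "delete", "row": None},
    {"key": 2, "seq": 1, "op": "insert", "row": {"status": "new"}},  # replay
]

table, applied_seq = {}, {}
results = [apply_change(table, applied_seq, e) for e in events]
print(table)
```

Both replayed events are rejected, so the destination ends with a single current row for key 1 and no row for the deleted key 2.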

Conclusion

Data quality is not a project with an end date. It is an operational standard that requires consistent enforcement across every system, pipeline, and process that touches your data.

The dimensions covered in this guide, from accuracy and completeness to timeliness and integrity, give you a practical framework for assessing where your data stands and what needs attention. The standards covered, including ISO 8000, TDQM, Six Sigma, and DAMA DMBOK, provide the governance structures to make those improvements systematic rather than reactive.

For organizations managing data across multiple source systems and destinations, maintaining quality in transit is as important as maintaining it at rest. Estuary helps data teams enforce quality standards at the pipeline level, where data is most at risk of becoming stale, duplicated, or malformed.

If you are building or improving a data pipeline and want to understand how Estuary can support your data quality standards, sign up for free to get started or contact the team to discuss your use case.

About the author

Jeffrey is a data engineering professional with over 15 years of experience, helping early-stage data companies scale by combining technical expertise with growth-focused strategies. His writing shares practical insights on data systems and efficient scaling.