Data lakes are robust solutions for storing, organizing, and analyzing vast amounts of raw data. However, with the increasing volume, variety, and velocity of big data, businesses increasingly need to access and analyze this data in real time. That’s where the concept of a real-time data lake comes in.

Chances are your organization is comfortable with the current state of its data repository. But industry experts warn that you are likely to outgrow your data infrastructure much sooner than you expect.

So in this article, we will explore the concept of real-time data lakes in depth and look at their purpose, architecture, and varied use cases across industries. All of this information will be supported by real-world examples from industry giants to paint a clearer picture for you.

By the end of this read, you will understand how real-time data lakes work and how they can keep your business at the forefront of data-driven decision-making.

Understanding Real-Time Data Lakes

Real-time data lakes store data as soon as it is generated, without preconceptions about its structure or type. By not making assumptions about the data before storing it, real-time data lakes provide businesses with the flexibility to adapt their data strategies as market conditions and business needs evolve.

For a better understanding of real-time data lakes, let’s discuss what a traditional data lake is and how it differs from a data warehouse.

Traditional Data Lakes

A traditional data lake, in simple terms, is a vast storage system where organizations dump all of their data in its raw form.

The major benefit here is flexibility. Data lakes accept all kinds of data without the need for prior organization or structuring. This way, you can add the structure later based on the specific analysis requirements or business questions you want to answer.

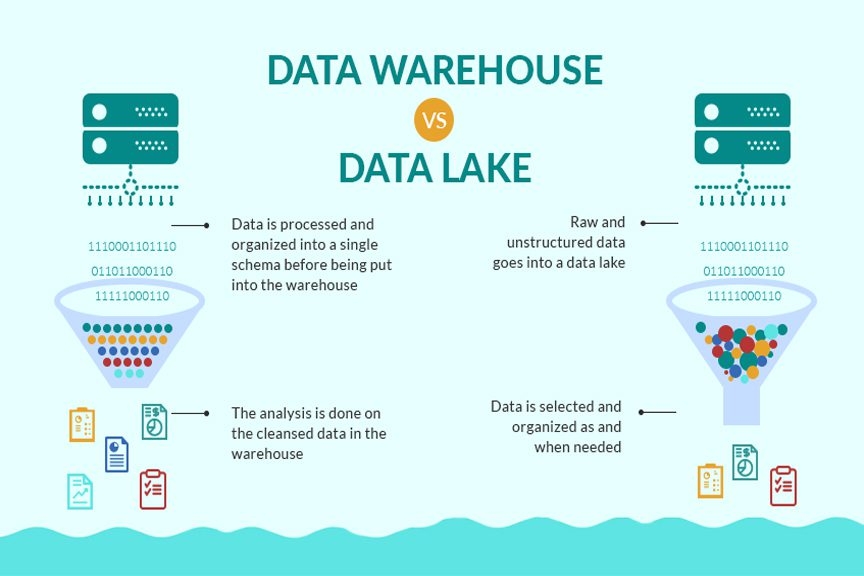

Differences Between A Data Warehouse & A Data Lake

Drawing a line between a data warehouse and a data lake can be a little tricky. So, let’s cut through the tech jargon and get to the heart of how these two differ from each other:

- Data Quality: Data in a warehouse is highly curated whereas a data lake stores raw, possibly unedited data.

- Data Use: Data in a warehouse has a defined purpose while a data lake can store data whose purpose isn’t determined yet.

- Cost & Speed: Data warehouses provide quick results at a higher cost. On the other hand, data lakes offer relatively slower results but cost much less.

- Users: Business analysts primarily use data warehouses, while data lakes attract a wider audience including data scientists, developers, and business analysts.

- Data Type: While a data lake accommodates structured and unstructured data from various sources, a data warehouse handles structured data from business apps.

- Data Organization: Data warehouses require organized data upfront (schema-on-write) while data lakes are more flexible, organizing data when needed (schema-on-read).

- Analytics: Warehouses are great for batch reporting and business intelligence applications. Data lakes, on the other hand, shine in machine learning, predictive analytics, and data discovery tasks.
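
The schema-on-write versus schema-on-read distinction above can be illustrated with a minimal sketch. Everything here is a simplified assumption made for illustration: the sample events, the `REQUIRED_FIELDS` rule, and the function names are hypothetical, and real warehouses and lakes enforce structure with far richer tooling.

```python
import json

# Hypothetical raw events, as they might arrive from different sources.
RAW_EVENTS = [
    '{"user": "ana", "amount": "19.90", "channel": "web"}',
    '{"user": "ben", "amount": 5}',
    '{"user": "cy", "note": "no amount recorded"}',
]

# Schema-on-write (warehouse style): check structure before storing.
REQUIRED_FIELDS = {"user": str, "amount": (int, float)}

def write_to_warehouse(raw_events):
    """Store only records that already match the schema; reject the rest."""
    table, rejected = [], []
    for raw in raw_events:
        record = json.loads(raw)
        matches = all(
            isinstance(record.get(name), kinds)
            for name, kinds in REQUIRED_FIELDS.items()
        )
        if matches:
            table.append({"user": record["user"], "amount": float(record["amount"])})
        else:
            rejected.append(raw)
    return table, rejected

# Schema-on-read (lake style): store everything as-is, add structure when querying.
def write_to_lake(raw_events):
    """Keep every raw event untouched."""
    return list(raw_events)

def read_amounts(lake):
    """Apply structure at read time, skipping records that cannot be interpreted."""
    amounts = {}
    for raw in lake:
        record = json.loads(raw)
        try:
            amounts[record["user"]] = float(record["amount"])
        except (KeyError, TypeError, ValueError):
            continue
    return amounts

table, rejected = write_to_warehouse(RAW_EVENTS)
lake = write_to_lake(RAW_EVENTS)
amounts = read_amounts(lake)
```

In this sketch the warehouse path rejects two of the three events up front, while the lake keeps all three and lets the reader decide later how to interpret the string-typed amount.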

The Evolution Of Data Lakes Into Real-Time Data Lakes

Traditional data lakes are best for storing and analyzing historical data. But businesses today need real-time insights to make quicker decisions and to compete in the current market. This is the role real-time data lakes were designed for.

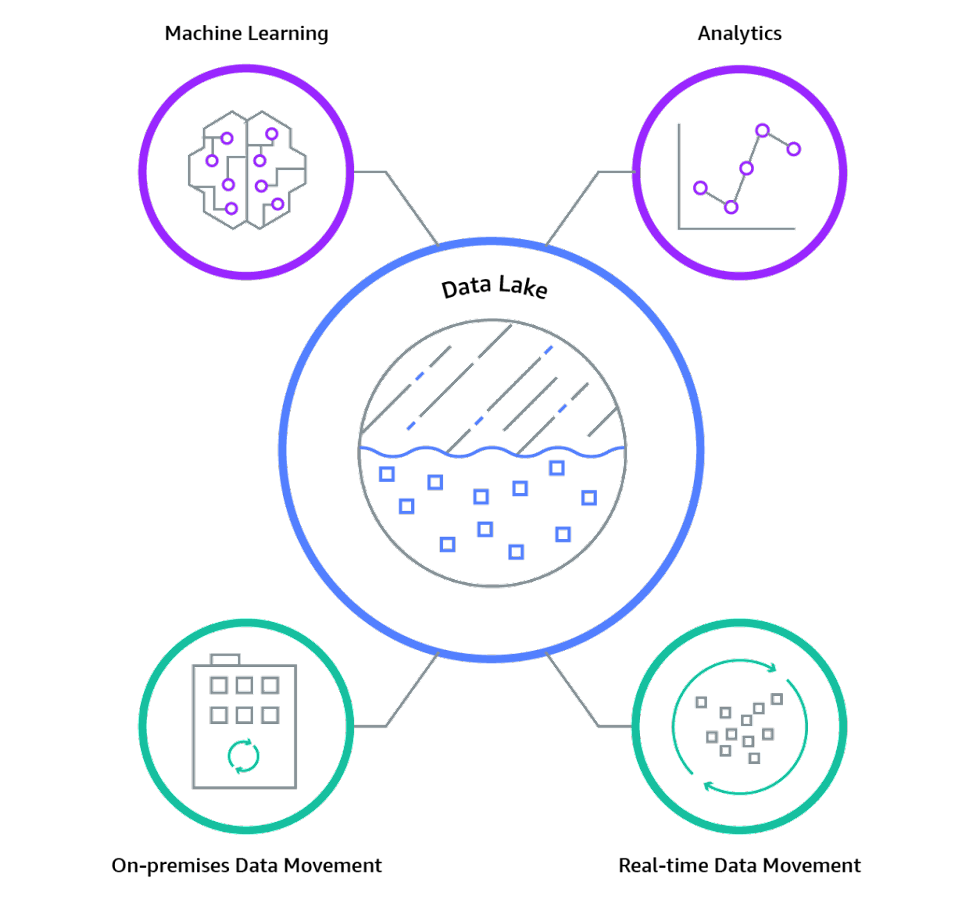

A real-time data lake, as the name suggests, is a data storage system designed to process and analyze data in real time. It’s an evolution of the traditional data lake, equipped to handle streaming data from sources such as IoT devices, social media feeds, and weblogs, and to provide instant insights.

Here’s how it works:

- Data Streaming: Real-time data lakes ingest streaming data. They can process and analyze data as it arrives without the need to store it first. This benefits businesses that need to respond to events as they occur.

- Real-Time Analysis: They enable real-time analytics so that businesses can get insights and make data-driven decisions in near real-time. This is particularly beneficial in areas like fraud detection, monitoring, and real-time personalization.

- Data Agility: Real-time data lakes still maintain the advantages of traditional data lakes. They can handle all kinds of data, structured or unstructured, and can define the schema at the time of analysis, not before.
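
As a rough illustration of processing data as it arrives, the sketch below reacts to each event the moment it is read instead of waiting for a batch. The event feed, the `factor` threshold, and the "running average" rule are assumptions chosen for the example, not a recommended fraud model.

```python
from collections import defaultdict

def event_stream():
    """Stand-in for a live feed; yields hypothetical payment events one at a time."""
    yield {"card": "A", "amount": 20.0}
    yield {"card": "A", "amount": 25.0}
    yield {"card": "B", "amount": 300.0}
    yield {"card": "A", "amount": 400.0}
    yield {"card": "B", "amount": 310.0}

def flag_unusual(events, factor=3.0):
    """Yield an alert as soon as an amount exceeds `factor` times the card's running average."""
    totals = defaultdict(float)
    counts = defaultdict(int)
    for event in events:
        card, amount = event["card"], event["amount"]
        # Compare against what has been seen so far for this card, then update the state.
        if counts[card] > 0 and amount > factor * (totals[card] / counts[card]):
            yield {"card": card, "amount": amount, "reason": "above running average"}
        totals[card] += amount
        counts[card] += 1

alerts = list(flag_unusual(event_stream()))
```

Because `flag_unusual` is a generator, an alert is produced while the stream is still being consumed, which is the property that matters for use cases like fraud detection and monitoring.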

Real-Time Data Lake Architecture

To better understand the functionality of real-time data lakes, let’s break down the key architectural components and how they handle real-time data.

A highly functional real-time data lake requires more than the simple “dump it now, process it later” approach we see in some traditional data lakes. That’s because, for data to be processed immediately, we can’t create technical debt that will take time to unravel.

Real-time data lake architecture must balance the advantages of a data lake (the ability to accept a variety of structured and unstructured data) with the advantages of a data product (for example, validation against a defined schema, a unique name, and metadata).

In Estuary, your data collections are real-time data lakes as well as highly functional data products. As we go through each of the general architectural components below, we’ll show how each one plays out in the real-world system of Estuary.

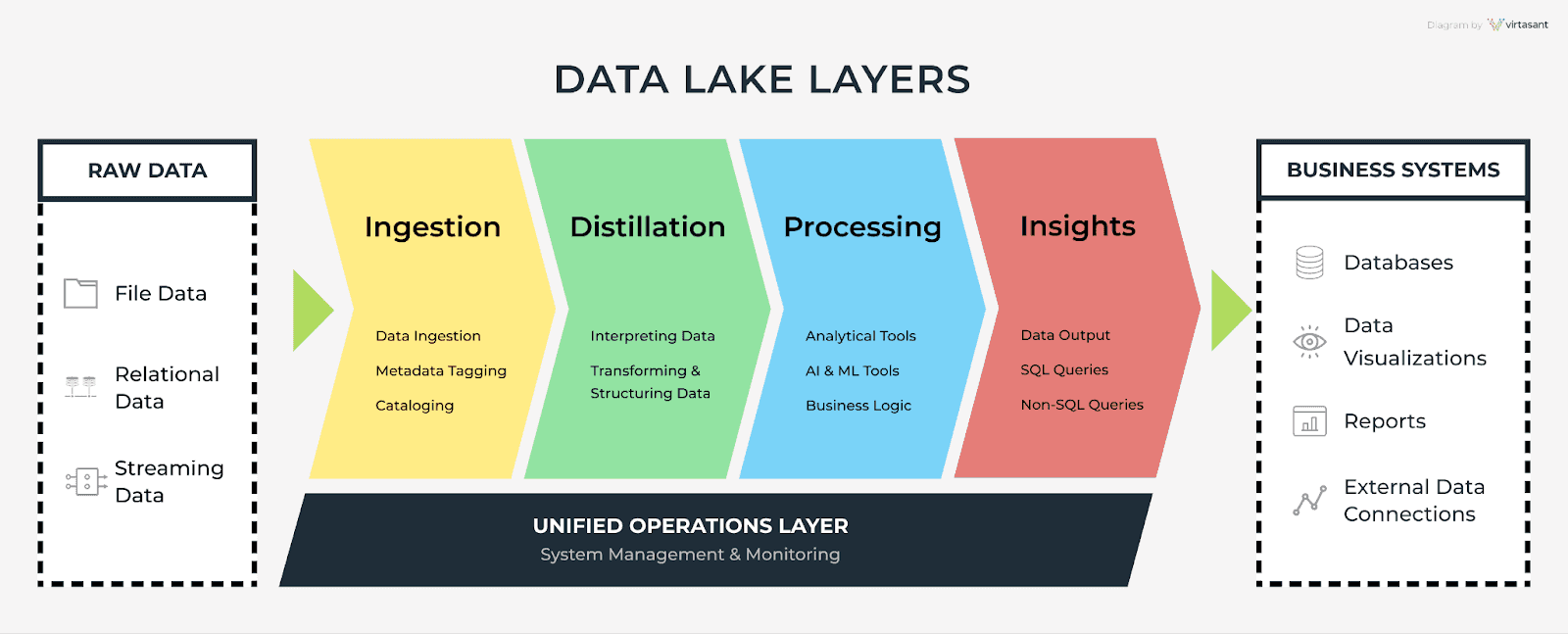

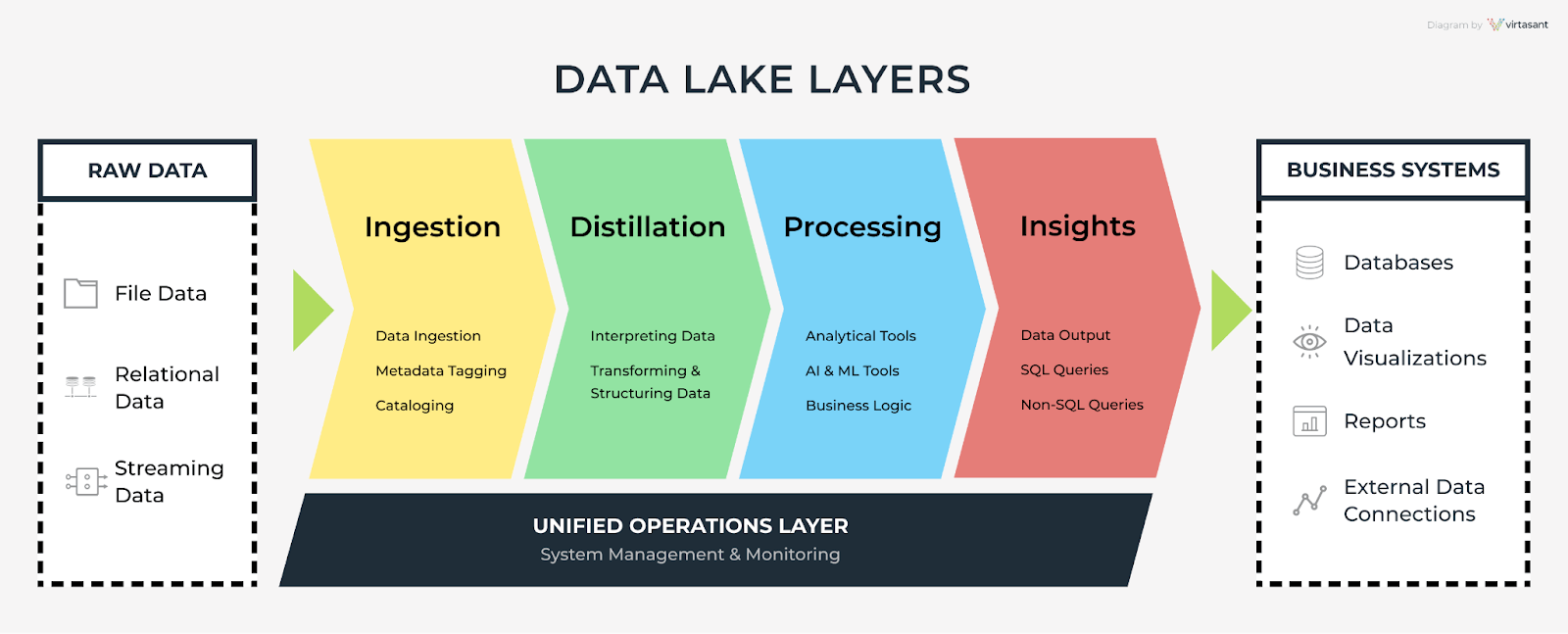

Ingestion Layer

The ingestion layer acquires data from various sources. In a real-time data lake, this layer continuously receives and processes data streams, so organizations can act on time-sensitive information.

The first layer has the following important functions:

- Real-time data ingestion

- Real-time data streaming from multiple sources

- Handling various data formats (structured, unstructured, and semi-structured)

In Estuary, data captures comprise the ingestion layer. These tasks ingest data in real time from a variety of sources — from APIs to database Change Data Capture.

Distillation Layer

The distillation layer plays a vital role in preparing data for analysis. In real-time data lakes, this layer ensures the rapid conversion of raw data into a structured format.

Key functions of this layer include:

- Real-time data cleansing and normalization

- Enriching data with additional context or metadata

- Indexing data to support faster retrieval

In Estuary, captures are also the distillation layer. They apply a basic JSON schema and a key to the ingested data, as well as metadata. The result is a data collection. Collections are stored in your cloud storage bucket, so you can access them for other workflows (they don’t just belong to Estuary!)
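
To make the idea of applying a schema, a key, and metadata more concrete, here is a minimal, generic sketch. It is not Estuary's implementation or API: `SCHEMA`, `KEY`, `distill`, and the `_meta` field are hypothetical names, and the type check is a much simpler stand-in for real JSON schema validation.

```python
from datetime import datetime, timezone

# Hypothetical, deliberately loose schema: required field names and their Python types.
SCHEMA = {"order_id": str, "status": str}
KEY = "order_id"

def distill(raw_documents, source_name):
    """Validate each document, attach metadata, and index it by its key."""
    collection, invalid = {}, []
    for doc in raw_documents:
        if not all(isinstance(doc.get(field), kind) for field, kind in SCHEMA.items()):
            invalid.append(doc)
            continue
        enriched = dict(doc)  # extra fields are kept, so the schema stays loose
        enriched["_meta"] = {
            "source": source_name,
            "ingested_at": datetime.now(timezone.utc).isoformat(),
        }
        collection[doc[KEY]] = enriched  # a later document replaces an earlier one per key
    return collection, invalid

raw = [
    {"order_id": "o-1", "status": "placed", "coupon": "SPRING"},
    {"order_id": "o-2", "status": "placed"},
    {"status": "shipped"},  # missing the key field, so it fails validation
    {"order_id": "o-1", "status": "shipped"},
]
collection, invalid = distill(raw, source_name="orders_api")
```

The design point is that validation happens at ingestion time, so downstream consumers can rely on every stored document having a key and the required fields.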

Processing Layer

The processing layer is where data gets analyzed and transformed into valuable insights. Real-time data lakes employ advanced data processing techniques to deliver results at an impressive speed.

Key features in the context of real-time data include:

- Real-time data analytics and processing

- Stream processing for time-sensitive insights

- Scalable and distributed computing resources for large datasets

In Estuary, derivations are the processing layer. These are stateful or stateless real-time transformations, allowing you to re-shape, filter, join, and aggregate collections.
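
The difference between stateless and stateful transformations can be sketched in a few lines. This is a generic illustration rather than Estuary's derivation syntax; the event shape and the names `to_cents`, `is_purchase`, and `RunningTotals` are assumptions.

```python
from collections import defaultdict

def to_cents(event):
    """Stateless reshape: the output depends only on the event itself."""
    return {"customer": event["customer"], "cents": round(event["amount"] * 100)}

def is_purchase(event):
    """Stateless filter."""
    return event["type"] == "purchase"

class RunningTotals:
    """Stateful aggregate: each output depends on everything seen so far for that key."""

    def __init__(self):
        self.totals = defaultdict(int)

    def update(self, event):
        self.totals[event["customer"]] += event["cents"]
        return {
            "customer": event["customer"],
            "total_cents": self.totals[event["customer"]],
        }

events = [
    {"type": "purchase", "customer": "ana", "amount": 12.50},
    {"type": "page_view", "customer": "ana", "amount": 0.0},
    {"type": "purchase", "customer": "ben", "amount": 3.00},
    {"type": "purchase", "customer": "ana", "amount": 7.25},
]

totals = RunningTotals()
outputs = [totals.update(to_cents(e)) for e in events if is_purchase(e)]
```

The filter and reshape steps could run on any event in isolation, while the running total has to remember prior events, which is what makes it stateful.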

Insights Layer

The insights layer is crucial for delivering advanced analytics to support decision-making. This layer ensures that you have access to the most recent and relevant information.

Key aspects include:

- Real-time visualization and reporting

- Dashboards and alerts for immediate action

- Integration with other business intelligence tools

In Estuary, data materializations allow you to create the insights layer. These tasks push collections to a variety of destination systems, like data warehouses and SaaS tools. From there, you can use your data to meet business needs.
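
Pushing keyed documents into a destination system usually amounts to an upsert, so the destination always reflects the latest state. The sketch below uses an in-memory SQLite database as a stand-in destination; the table name, columns, and `materialize` function are hypothetical and do not represent how Estuary's connectors are written.

```python
import sqlite3

def materialize(connection, documents):
    """Upsert each keyed document so the table always holds the latest state per key."""
    connection.execute(
        "CREATE TABLE IF NOT EXISTS order_status (order_id TEXT PRIMARY KEY, status TEXT)"
    )
    connection.executemany(
        "INSERT OR REPLACE INTO order_status (order_id, status) VALUES (?, ?)",
        [(doc["order_id"], doc["status"]) for doc in documents],
    )
    connection.commit()

connection = sqlite3.connect(":memory:")
# First batch creates two rows; the second batch updates one of them in place.
materialize(connection, [
    {"order_id": "o-1", "status": "placed"},
    {"order_id": "o-2", "status": "placed"},
])
materialize(connection, [{"order_id": "o-1", "status": "shipped"}])
rows = connection.execute(
    "SELECT order_id, status FROM order_status ORDER BY order_id"
).fetchall()
```

A dashboard or BI tool querying `order_status` would then see one current row per order rather than a history of raw events.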

Unified Operations Layer

The unified operations layer manages and monitors the entire real-time data lake to keep data flowing smoothly and the system healthy.

Key functions of this layer include:

- Real-time monitoring and auditing

- Ensuring data security and compliance

- Workflow management and orchestration

In Estuary, you interact with the operations layer via the web app. Estuary takes care of the nuts and bolts of security, compliance, and processing guarantees, but you can monitor your data pipelines, view logs, and manage workflows in the web app.

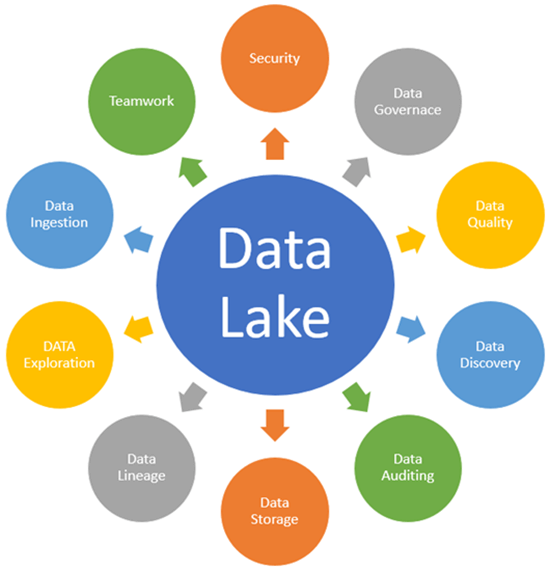

The Value Of Real-Time Data Lakes: A Closer Look At 7 Major Functions

Let’s get straight into why you need a real-time data lake in your operations.

- Cutting Costs: A real-time data lake can separate storage from computing resources, so you can scale and pay for each independently. This can result in substantial savings.

- Providing Instant Insights: Real-time data lakes give you immediate access to the stored raw data. This enables quicker, more informed decisions.

- Scaling With Your Business: As your business expands, so does your data. You need a system that grows with you and a real-time data lake is built for that.

- Fostering Team Collaboration: An important purpose of real-time data lakes is to break down data silos within your organization. They foster data-driven teamwork and an inclusive data culture.

- Centralizing Data Control: With so much data at hand, having a centralized governance system becomes crucial. A real-time data lake gives you a single control point for all data-related activities.

- Facilitating Data Strategies and Machine Learning: If you plan to implement data strategies and accelerate machine learning initiatives, a real-time data lake provides a rich and diverse data resource for your innovative solutions.

- Managing Different Data Types: You’ll come across various data types in your business. Real-time data lakes offer a flexible solution that can process diverse data formats using an array of tools and languages.

7 Use Cases Of Real-Time Data Lakes

Real-time data lakes are transforming how industries operate by providing a centralized storage system for continuously generated data. Let’s discuss how different sectors leverage real-time data lakes.

Healthcare Industry

Real-time data lakes store vast amounts of healthcare data, such as patient records and test results, allowing analysts to easily identify patterns like disease outbreaks or patient risks.

Retail Industry

Retailers can use real-time data lakes to store everything from sales transactions and customer data to inventory updates. They can use this information for real-time inventory monitoring and quick responses to sudden demand surges.

Financial Industry

In the finance industry, real-time data lakes act as repositories for transactional data and market movements, helping analysts with tasks such as monitoring for potential fraud.

Manufacturing Industry

Manufacturers use real-time data lakes to store varied data from machine performance to supply chains. This data allows analysts to perform advanced process optimization and quickly spot signs of machine failure.

Telecommunication Industry

The telecom sector generates large amounts of data, ranging from network traffic and customer usage to service disruptions and customer feedback. Real-time data lakes can store this data for later analysis.

Transportation Industry

In the transportation sector, real-time data lakes store diverse data including GPS tracking, traffic updates, vehicle maintenance records, and passenger info, allowing officials to access and analyze the latest information for improving the transit experience.

Energy Sector

Utility and energy providers use real-time data lakes to store everything from energy consumption and grid performance to weather patterns and equipment status. This data helps them respond faster to sudden spikes in energy use or equipment glitches to ensure a steady supply.

3 Real-World Examples Of Real-Time Data Lakes

Nothing brings the real-time data lakes concept to life like real-world examples. So let’s take a look at 3 examples.

Coca-Cola Andina

Let’s start with the case of Coca-Cola Andina. This beverage giant uses a data lake to manage its vast amounts of data. Its massive operation involves catering to more than 54 million consumers spread across Chile, Argentina, Brazil, and Paraguay.

The company faced a common challenge in the CPG industry - vast quantities of data stored in separate, disconnected systems. This fragmented data made it difficult for the company to effectively analyze information and make informed decisions.

Realizing the need for a more streamlined approach to data management, Coca-Cola Andina decided to build a data lake - a single, unified source for all their relevant business data.

Here’s how it unfolded:

- They chose Amazon Web Services (AWS) for their data lake, needing an accessible and reliable system with unlimited storage and processing capacity.

- They successfully established a data lake as the single source for data generated by various systems, such as their ERP system, CSV files, and legacy databases.

- They utilized AWS services like Amazon S3, QuickSight, Athena, and SageMaker.

- They trained a multidisciplinary team with AWS Professional Services, turning the company into a data-driven decision-maker.

And the results were impressive:

- They saw an 80% productivity boost within their analytics team.

- Over 95% of the data from different business areas was unified in the data lake.

- Reliable and unified data improved decision-making and increased revenue through efficient promotions, reduced stock shortages, and an enhanced customer shopping experience.

C4ADS

Now let’s take a look at C4ADS, an organization focused on global conflict and transnational security issues, which had a tough task at hand. They were dealing with diverse data from numerous sources which made it hard to digest and use effectively.

Their solution? A data lake. Here were the primary goals:

- Scalability

- Robust security

- Speedy implementation

For them, the main obstacle was consolidating various file formats like PDFs, emails, Microsoft Word and Excel files, logs, XML, and JSON files. They did this by tagging all uploaded file content with consistent metadata, allowing faster search results and enhanced visibility.
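
The general idea of tagging files of many formats with consistent metadata can be sketched as follows. This is only an illustration of the technique, not C4ADS's actual system: the extension mapping, field names, file paths, and source labels are all hypothetical.

```python
from collections import defaultdict
from pathlib import PurePosixPath

# Hypothetical mapping from file extension to a coarse content category.
KIND_BY_EXTENSION = {
    ".pdf": "document", ".docx": "document", ".xlsx": "spreadsheet",
    ".eml": "email", ".log": "log", ".xml": "data", ".json": "data",
}

def tag_file(path, source):
    """Build the same metadata fields for every file, whatever its format."""
    suffix = PurePosixPath(path).suffix.lower()
    return {
        "path": path,
        "format": suffix.lstrip(".") or "unknown",
        "kind": KIND_BY_EXTENSION.get(suffix, "unknown"),
        "source": source,
    }

def build_index(records):
    """Map each (field, value) pair to the paths that carry it, for quick lookups."""
    index = defaultdict(list)
    for record in records:
        for field in ("format", "kind", "source"):
            index[(field, record[field])].append(record["path"])
    return index

records = [
    tag_file("uploads/shipping_manifest.PDF", source="partner_a"),
    tag_file("uploads/ledger.xlsx", source="partner_a"),
    tag_file("uploads/memo.docx", source="partner_b"),
    tag_file("uploads/events.json", source="partner_b"),
]
index = build_index(records)
documents = index[("kind", "document")]
```

Because every record carries the same fields, a search such as "all documents" or "everything from partner_a" becomes a single lookup instead of a scan over files in different formats.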

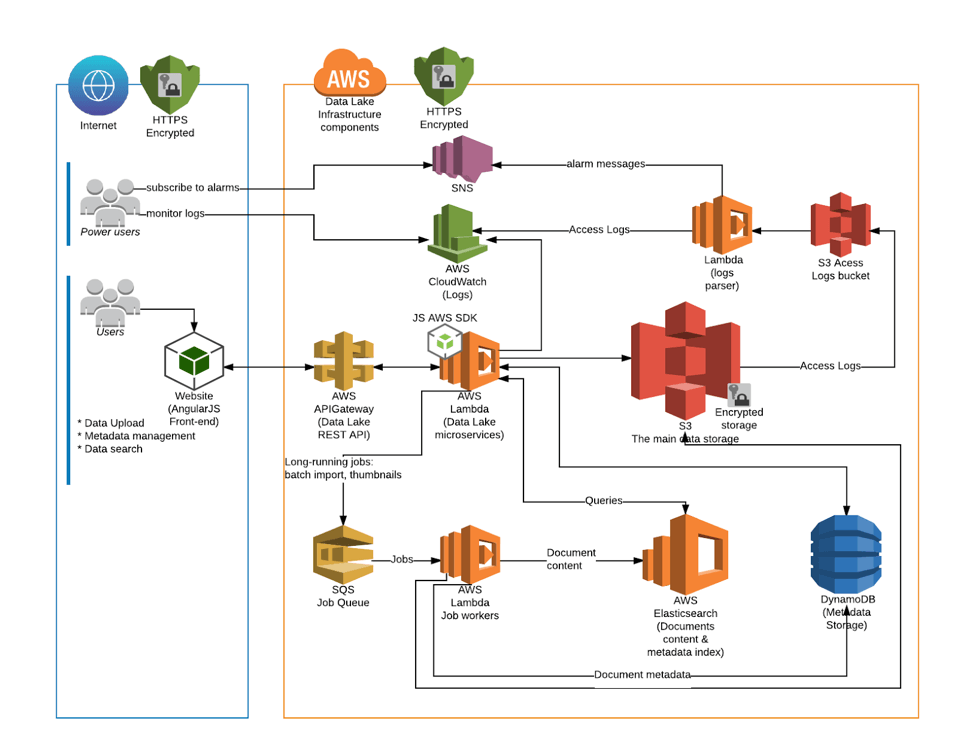

To make this data lake a reality, they decided to implement a cloud data lake in an Amazon Virtual Private Cloud (VPC) and designed a web-based user interface (UI) for the management of data sources. They employed Amazon S3 for data storage, Amazon DynamoDB for file metadata storage, and Amazon Elasticsearch Service for robust search queries.

AstraZeneca

AstraZeneca is a global pharmaceutical company that operates in over 70 countries. Their challenge was to improve the speed and consistency in performance reporting across their entire group.

AstraZeneca decided to leverage a data lake approach. This move replaced their time-consuming, manual reporting methods with a more agile, consistent, and quicker platform. AstraZeneca’s data lake pulled together internal data assets from around the world and eliminated the need for tedious manual interventions.

So what did this mean for AstraZeneca?

- They leveraged superior data management technology to manipulate, conform, cleanse, and structure large volumes of data.

- A shared toolset, metrics, and screens were utilized by business customers, shared service staff, and the BPO provider, providing everyone with the same information and insights.

- Standardized performance scorecards and metrics were implemented for every customer group worldwide. This made it much easier to identify issues and potential opportunities.

How Can Estuary Help?

A real-time data lake offers significant advantages to your business, from immediate insights to rapid decision-making. But architecting and managing your own real-time data lake is a massive engineering challenge.

That’s where we can help. Our streaming ETL (Extract, Transform, Load) solution, Estuary, uses a real-time data lake as its central storage, while helping you move data between your mission-critical systems. You get:

- A single source of truth: With Estuary, you capture data from all your source systems into collections. You can use Estuary to materialize these collections to other systems, or you can access them directly — they live in a cloud storage bucket that you own.

- Robust data products: Each Estuary collection has a globally unique name, a JSON schema, metadata, and a key. Estuary uses precise rules to reduce data based on its key, for highly controllable and predictable behavior. By applying a loose schema, you can still retain control over unstructured data.

- Transformations: With Estuary, you have the power of real-time SQL and TypeScript transformations at your fingertips so that you can modify and refine your data as it travels through the data lake.

- Extensive Range of Connectors: Estuary connectors serve as the glue connecting your many data systems to the Estuary platform by providing a consistent and uninterrupted data flow. With our open protocol, adding new connectors is also very easy.

- Scalability and Resilience: Estuary is built to grow alongside your data with a track record of handling data volumes up to 7 GB/s. It comes equipped with built-in testing for data accuracy and robust resilience across regions and data centers while keeping the load on your systems minimal.

Conclusion

Real-time data lakes play a big role in how we use data today. They offer fast access to data, which helps businesses make quick and smart choices. You can use a real-time data lake to manage different kinds of data, generate immediate insights, and ultimately save money and grow your business.

By collecting all your data in one location, data lakes also improve data transparency and promote more effective team collaboration. For these reasons, this innovative storage solution has found wide-ranging applications in sectors such as healthcare, manufacturing, retail, and many more.

If you are thinking about setting up a real-time data lake to give your business a boost, use Estuary to skip the engineering legwork. Estuary ensures effortless and cost-effective handling of real-time data in a cloud-backed data lake without compromising quality.

Try Estuary for free by signing up here or talking to our team to see how we can help with your use case!

About the author

Jeffrey is a data engineering professional with over 15 years of experience, helping early-stage data companies scale by combining technical expertise with growth-focused strategies. His writing shares practical insights on data systems and efficient scaling.