Connecting Salesforce to Snowflake unlocks analytics that are impossible inside Salesforce alone. Your sales team manages customers and pipeline in Salesforce. Your data team needs that data in Snowflake to join it with finance, marketing, and product data, run complex queries, and power dashboards that Salesforce reports cannot produce.

This guide covers four methods for moving Salesforce data to Snowflake, including step-by-step setup for each, a comparison of trade-offs, and the technical details that matter in production, including API limits, formula field handling, and authentication setup.

Methods covered

- Estuary (recommended for continuous sync)

- Tableau CRM Sync Out (native, if licensed)

- Salesforce Bulk API 2.0 (DIY, no additional cost)

- Data Loader (manual or scripted exports)

Before You Start: Salesforce API Limits

Every method that reads from Salesforce consumes API calls. Understanding the limits before choosing a method prevents production surprises.

Salesforce enforces daily API call limits based on your edition and license count. Enterprise Edition includes 100,000 calls per day as a base, plus an additional 1,000 calls per user license. Bulk API 2.0 has separate limits from the REST API, which is why connectors that use Bulk API for backfills (including Estuary) preserve your REST API quota for incremental updates.

The formula field problem

Formula fields add an important wrinkle. Salesforce calculates formula fields on read and does not update a record's SystemModStamp when an underlying field changes the formula result. This means any connector that syncs incrementally by polling SystemModStamp will miss formula field updates silently.

The only solutions are either a full daily refresh of affected objects, or a connector that explicitly schedules formula field refreshes independently. Estuary handles this automatically on a configurable schedule (daily by default). If formula fields matter to your analytics, this is a meaningful selection criterion.

Salesforce API access requires Enterprise Edition or higher. Professional Edition does not expose the REST or Bulk API by default. All methods in this guide assume Enterprise Edition or above.

What You Need Before Connecting

Regardless of method, two things must be in place before connecting Salesforce to Snowflake.

On the Salesforce side

Create a dedicated Salesforce integration user with read-only access to the objects you need. Do not use an admin account for connector authentication. Then create a Connected App in Salesforce Setup under App Manager. The Connected App provides the OAuth credentials (Consumer Key and Consumer Secret) that connectors use to authenticate.

Enable the following OAuth scopes on the Connected App: api, refresh_token, and offline_access at minimum.

On the Snowflake side

Create a dedicated database, schema, virtual warehouse, role, and user for the integration. Run the following script in your Snowflake SQL editor. Check the All Queries checkbox before running.

plaintextset database_name = 'SALESFORCE_DB';

set warehouse_name = 'SALESFORCE_WH';

set estuary_role = 'ESTUARY_ROLE';

set estuary_user = 'ESTUARY_USER';

set estuary_schema = 'SALESFORCE_SCHEMA';

create role if not exists identifier($estuary_role);

grant role identifier($estuary_role) to role SYSADMIN;

create database if not exists identifier($database_name);

use database identifier($database_name);

create schema if not exists identifier($estuary_schema);

create user if not exists identifier($estuary_user)

default_role = $estuary_role

default_warehouse = $warehouse_name;

grant role identifier($estuary_role) to user identifier($estuary_user);

grant all on schema identifier($estuary_schema) to identifier($estuary_role);

create warehouse if not exists identifier($warehouse_name)

warehouse_size = xsmall

warehouse_type = standard

auto_suspend = 60

auto_resume = true

initially_suspended = true;

grant USAGE on warehouse identifier($warehouse_name)

to role identifier($estuary_role);

grant CREATE SCHEMA, MONITOR, USAGE on database identifier($database_name)

to role identifier($estuary_role);

grant CREATE INTEGRATION on account to role identifier($estuary_role);

COMMIT;

This script is adapted from the Estuary Snowflake connector documentation. The database, warehouse, and schema setup is the same regardless of which connector you use.

Method 1: Estuary (Recommended for Continuous Sync)

Estuary's Salesforce connector uses Salesforce's Bulk API 2.0 for initial backfills and the REST API for incremental updates. This dual-API approach means large historical loads do not consume your daily REST API quota. The connector supports standard and custom Salesforce objects, custom field types, and formula fields.

What makes Estuary technically distinct

- Formula field refresh: The connector automatically refreshes formula fields on a configurable schedule (daily by default). This addresses the SystemModStamp limitation and is a documented connector capability.

- Snowpipe Streaming: When delta updates are enabled on a Snowflake binding, Estuary automatically uses Snowpipe Streaming, Snowflake's lowest-latency ingestion method. This writes rows directly to Snowflake tables without keeping a warehouse running between loads, reducing cost.

- Dual API strategy: Bulk API 2.0 for backfills (high throughput, separate rate limits), REST API for incremental updates (lower volume, preserves daily quota).

- Standard and custom objects: All Salesforce standard objects and custom objects, including custom field types and formula fields, are captured automatically after connection.

Key-pair authentication is required on the Snowflake side to use Snowpipe Streaming for delta update bindings.

Password-based authentication is deprecated in the Estuary Snowflake connector. Use JWT key-pair auth.

Here's a step-by-step guide to integrating Salesforce with Snowflake using Estuary:

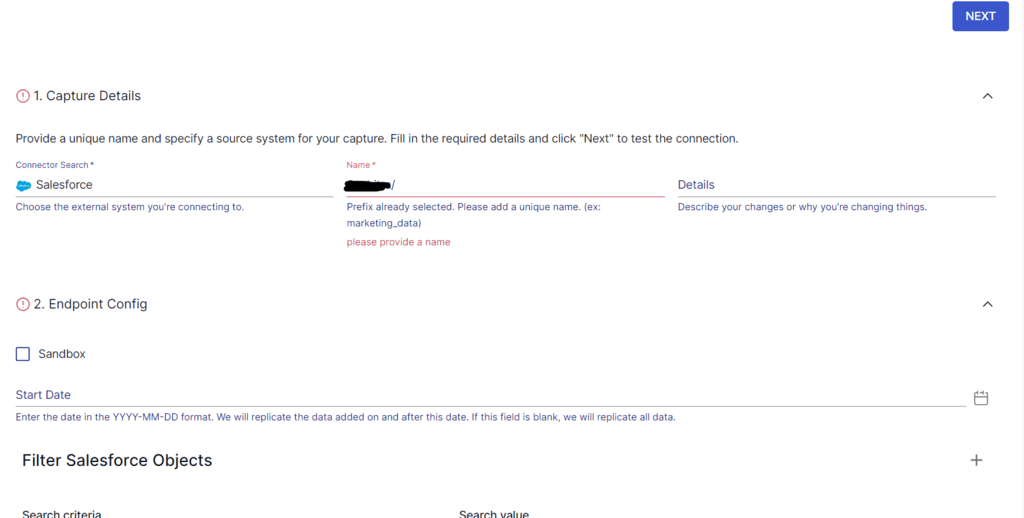

Step 1: Capturing Data from Salesforce with Estuary

- Sign in to your Estuary account or sign up for free. Once logged in, click on Sources.

- In the Sources window, click on + NEW CAPTURE.

- On the Create Capture page, search for Salesforce. Select the Salesforce connector.

- Fill in the required connection details:

- Authentication Method: OAuth Credentials

- Client ID: Consumer Key from your Salesforce Connected App

- Client Secret: Consumer Secret from your Connected App

- Refresh Token: Obtained after completing the OAuth flow in your Connected App

- Instance URL: Your Salesforce instance URL, for example https://yourcompany.salesforce.com

- Start Date: Optional. Limits the initial backfill to records created after this date. Useful for large orgs where a full historical backfill is impractical.

- After entering these details, click Next. Estuary connects to your Salesforce org and automatically discovers all available objects, both standard and custom. Because most Salesforce orgs contain more objects than you need to sync, disable bindings for objects you do not use before saving.

The connector maintains an internal list of known standard objects for discovery efficiency. If a standard object does not appear after connecting, contact Estuary support via Slack or email at support@estuary.dev to have it added.

- Click Save and Publish to start the capture. The initial backfill runs using Bulk API 2.0. Once the backfill completes, the connector switches to incremental sync using the REST API.

Step 2: Generate key-pair credentials for Snowflake

Key-pair authentication is required for the Snowflake materialization. Run the following in your terminal:

plaintext# Generate the private key

openssl genrsa -out rsa_key.pem 2048

# Extract the public key

openssl rsa -in rsa_key.pem -pubout -out rsa_key.pub

Register the public key with your Snowflake user. In the Snowflake SQL editor, run:

plaintext-- Replace the key value with the contents of rsa_key.pub

-- Remove the header/footer lines (-----BEGIN PUBLIC KEY----- etc.)

ALTER USER ESTUARY_USER SET RSA_PUBLIC_KEY='MIIBIjANBgkq...(your public key)...';

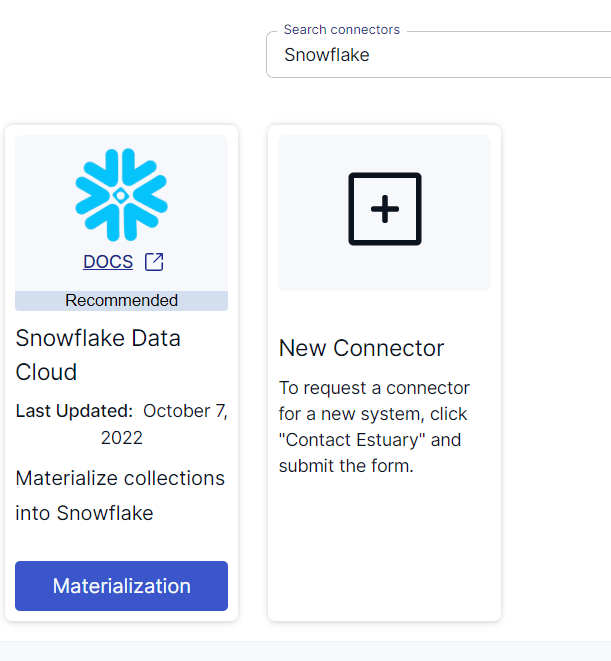

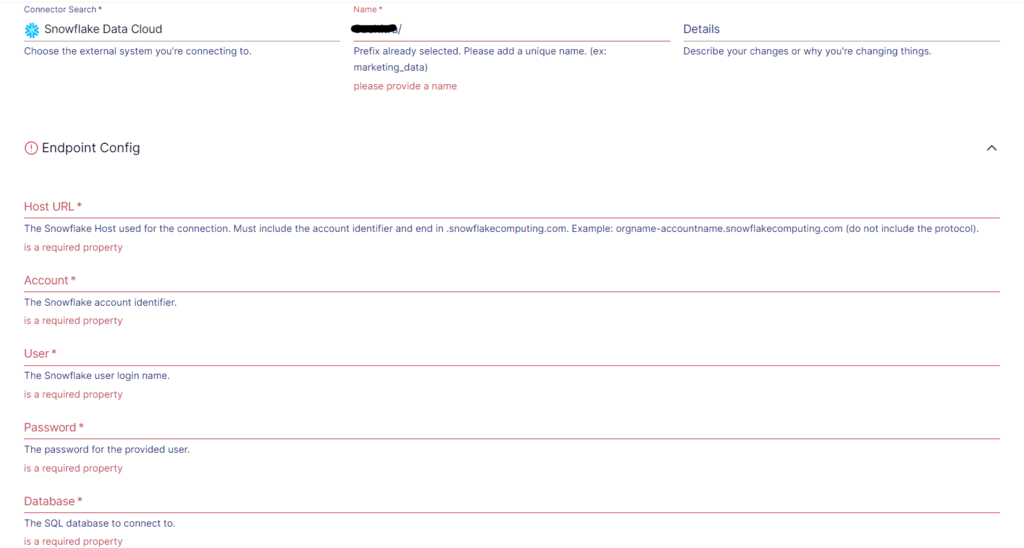

Step 3: Configure the Snowflake materialization

- After a successful capture, you can either click on Materialize Collections in the pop-up or go to the Estuary dashboard and click on Destinations on the left-side pane.

- Click New Materialization to set up the data destination. On the Create Materialization page, search for Snowflake and click on Materialize.

- Provide the endpoint configuration:

- Host: Your Snowflake account host URL. Format: orgname-accountname.snowflakecomputing.com

- Database, Schema, Warehouse: The values from the SQL setup script above

- Auth type: jwt

- User: ESTUARY_USER (or the username you set in the script)

- Private Key: Contents of rsa_key.pem including the header and footer lines

The YAML configuration for the Snowflake connector looks like this:

plaintextmaterializations:

your-prefix/salesforce-to-snowflake:

endpoint:

connector:

config:

database: SALESFORCE_DB

host: orgname-accountname.snowflakecomputing.com

schema: SALESFORCE_SCHEMA

warehouse: SALESFORCE_WH

credentials:

auth_type: jwt

user: ESTUARY_USER

privateKey: |

-----BEGIN PRIVATE KEY-----

MIIEv....

-----END PRIVATE KEY-----

image: ghcr.io/estuary/materialize-snowflake:dev

bindings:

- resource:

table: opportunity

delta_updates: true # enables Snowpipe Streaming

source: your-prefix/salesforce/Opportunity

The Source Collections browser will show the Salesforce collections captured in Step 1. Each collection maps to a Snowflake table. You can rename the table in the binding configuration.

For each binding, decide between standard updates (merge/upsert, always reflects current Salesforce state) and delta updates (append-only, activates Snowpipe Streaming for lowest-latency delivery). Set delta_updates: true per binding for Snowpipe Streaming.

- Click Save and Publish. Estuary will continuously sync Salesforce changes to Snowflake.

Snowflake warehouse cost tip

If you do not need minute-level data freshness, configure an update delay in the Snowflake materialization settings. An update delay pauses the materialization task for the configured duration before processing new data. This allows the Snowflake warehouse to auto-suspend between batches. Snowflake's minimum billing granularity is one minute, so a 1-2 hour update delay can substantially reduce compute costs for non-real-time use cases.

Further reading:

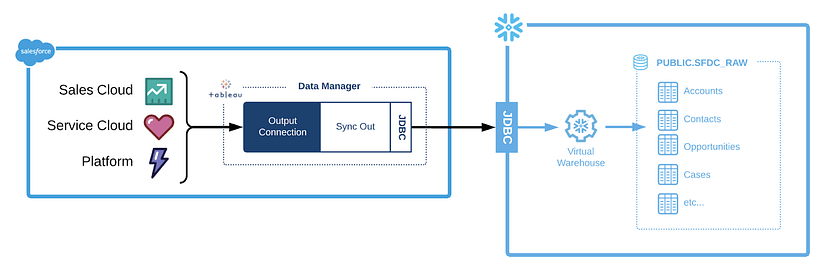

Method 2: Using Tableau CRM Sync Out (Native, If Licensed)

This method uses Salesforce's CRM Analytics (formerly Tableau CRM) Sync Out feature to push data from CRM Analytics datasets to Snowflake. It requires a CRM Analytics license, which is a paid add-on to Salesforce.

Sync Out transfers CRM Analytics datasets, not raw Salesforce object records. It is not a general-purpose Salesforce-to-Snowflake connector. Consider this method only if your team already uses CRM Analytics and wants to push curated analytics datasets to Snowflake for cross-system reporting.

To connect Salesforce to Snowflake, follow these steps:

Step 1: Configuring the Snowflake Output Connection in Salesforce

To use the Sync Out feature with Snowflake, you need to set up the following Snowflake objects in your Snowflake account:

- Database and Schema: You must create a database and schema to store Salesforce data.

- Warehouse: A warehouse in Snowflake is a computational resource that allows you to load and process data. For Sync Out, it is recommended to use an XSMALL or SMALL warehouse size, which provides sufficient resources for the task.

- Role: A role in Snowflake defines a set of permissions and privileges for users. You need to create a role with the necessary permissions on the warehouse and privileges on the database and schema.

- User: Finally, you must create a user and grant them the role as mentioned above. This user will be associated with the role and have the privileges and permissions necessary to perform data synchronization activities with Snowflake.

Here's a simplified script to create the Snowflake objects:

plaintextUSE ROLE SECURITYADMIN;

CREATE ROLE SYNCOUT;

CREATE USER TCRMSYNC PASSWORD = '<your password>' LOGIN_NAME = 'TCRMSYNC' DISPLAY_NAME = 'TCRMSYNC'

DEFAULT_ROLE = SYNCOUT DEFAULT_WAREHOUSE = 'SYNC_WH' DEFAULT_NAMESPACE = 'SFDC_DB.PUBLIC' MUST_CHANGE_PASSWORD = TRUE;

GRANT ROLE SYNCOUT TO USER TCRMSYNC;

USE ROLE SYSADMIN;

CREATE OR REPLACE WAREHOUSE SYNC_WH

WITH WAREHOUSE_SIZE = 'XSMALL'

AUTO_SUSPEND = 60

INITIALLY_SUSPENDED = TRUE

AUTO_RESUME = TRUE;

GRANT ALL ON WAREHOUSE SYNC_WH TO ROLE SYNCOUT;

CREATE DATABASE SYNC_DB;

CREATE SCHEMA SYNC_DB.SYNCOUT;

GRANT USAGE ON DATABASE SYNC_DB TO ROLE SYNCOUT;

GRANT USAGE, CREATE TABLE, CREATE STAGE ON SCHEMA SYNC_DB.SYNCOUT TO ROLE SYNCOUT;

GRANT SELECT, INSERT, UPDATE, DELETE, TRUNCATE ON FUTURE TABLES IN SCHEMA SYNC_DB.SYNCOUT TO ROLE SYNCOUT;This script will create a role, a user associated with that role, a warehouse, a database, and a schema. It grants the role and user-appropriate permissions for accessing and manipulating the Snowflake objects.

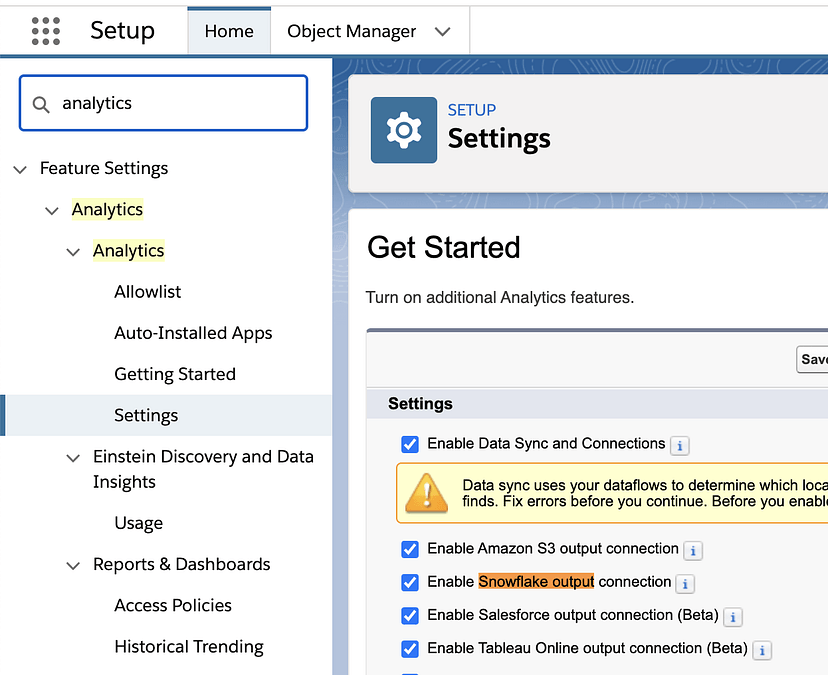

Step 2: Enabling Sync Out for Salesforce Objects

Firstly, you have to enable the Sync Out connection in Salesforce. Go to Setup > Analytics > Settings and check the Enable Data Sync, Connections, and Enable Snowflake output connection checkboxes.

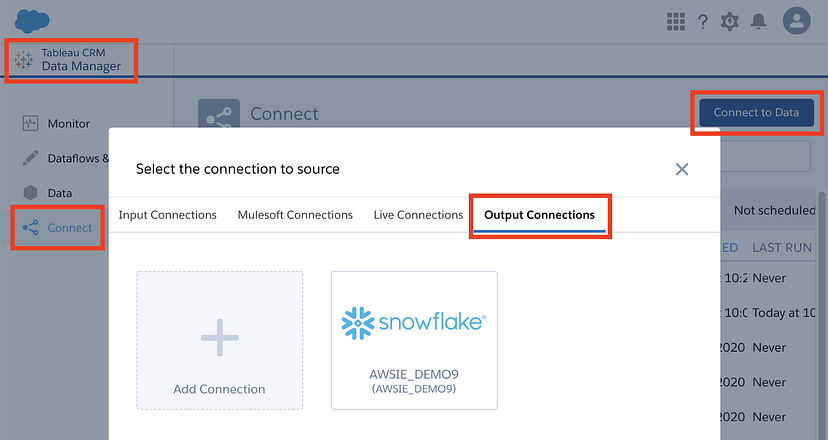

Step 3: Set up the Output Connection

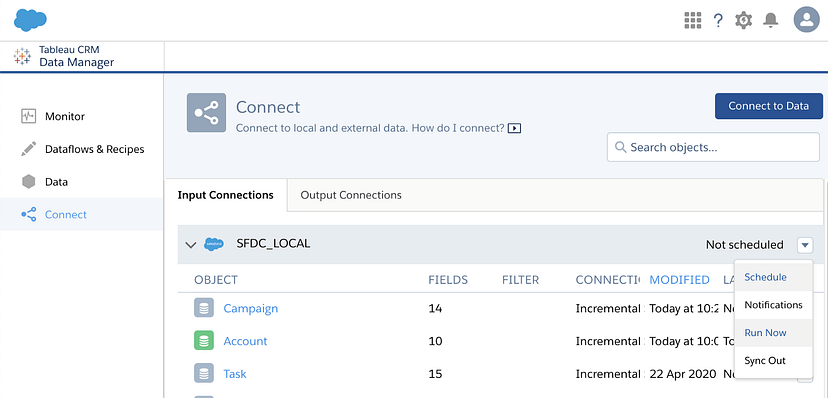

Go to Connect > Connect to Data > Output Connections in Tableau CRM Data Manager. Click on Add Connection, select the Snowflake Output Connector, and enter the Snowflake credentials.

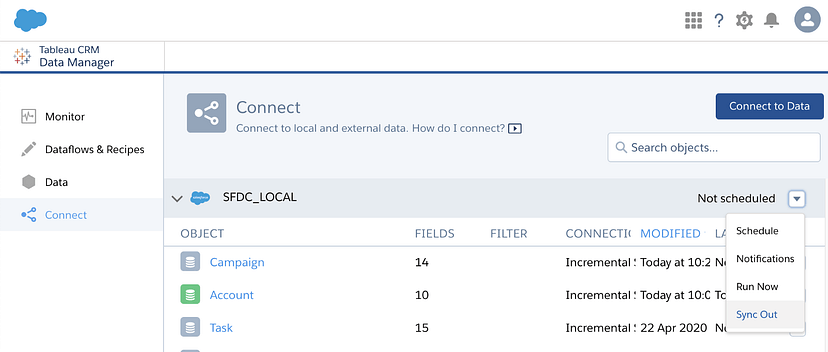

Step 4: Enable Sync Out for SFDC_LOCAL objects

Now, you must inform Tableau CRM that you want to push data to Snowflake. Open Tableau CRM Data Manager, go to Connect and click the down arrow on the right in the SFDC_LOCAL bar. Click on Sync Out and enable it by providing appropriate details. If you can't see the option, contact Salesforce Support for assistance.

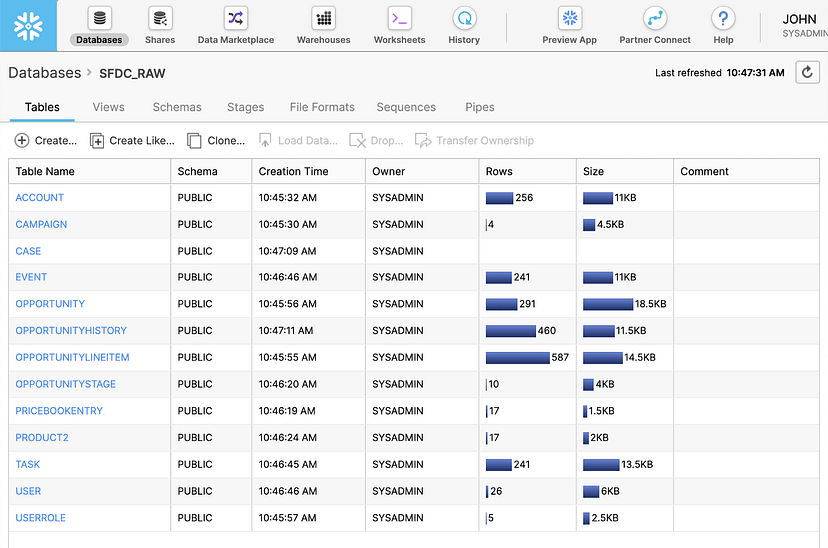

Step 5: Sending and Verifying Data Transfer to Snowflake

In the SFDC_LOCAL bar, click the down arrow and choose Run Now to send all data to Snowflake. To sync a specific object, click the down arrow on that object and select Run Now. Your data from Tableau CRM will be sent to Snowflake.

You can navigate back to your Snowflake account, where your Salesforce data is readily available. After Salesforce and Snowflake have been successfully integrated, you can proceed to perform advanced analytics on your data.

Limitations of Data Synchronization Using Tableau CRM Sync Out Feature

- Transfers CRM Analytics datasets, not raw Salesforce records.

- Complex formula fields spanning multiple objects may not read correctly through the CRM Analytics API.

- Local data source structures can cause table limitation errors.

- Sync schedules are managed within CRM Analytics, not independently.

Method 3: Salesforce Bulk API 2.0 (DIY, No Additional Cost)

If you want to move Salesforce data to Snowflake without a third-party connector, the Salesforce Bulk API 2.0 is the right choice for large data volumes. It handles high-throughput exports efficiently, supports CSV and JSON output, and has separate rate limits from the REST API. The trade-off is that you build and maintain the pipeline yourself: scheduling, error handling, schema drift detection, incremental watermarking, and delete tracking are all your responsibility.

Step 1: Extract data from Salesforce using Bulk API 2.0

Create a query job using an HTTP POST:

plaintextPOST /services/data/v59.0/jobs/query

Authorization: Bearer <access_token>

Content-Type: application/json

{

"operation": "query",

"query": "SELECT Id, Name, Amount, CloseDate, StageName, LastModifiedDate

FROM Opportunity

WHERE LastModifiedDate > 2024-01-01T00:00:00Z",

"contentType": "CSV"

}

Poll the job status endpoint until state shows JobComplete:

plaintextGET /services/data/v59.0/jobs/query/<jobId>

Authorization: Bearer <access_token>

Download the results once complete:

plaintextGET /services/data/v59.0/jobs/query/<jobId>/results

Authorization: Bearer <access_token>

For incremental syncs: track the maximum LastModifiedDate from the previous run and use it as the filter in your next query. Store this watermark in a metadata table or your orchestration layer (Airflow, dbt, etc.).

Step 2: Stage and load the CSV into Snowflake

Create a file format and stage in Snowflake:

plaintextCREATE OR REPLACE FILE FORMAT salesforce_csv

TYPE = 'CSV'

FIELD_OPTIONALLY_ENCLOSED_BY = '"'

SKIP_HEADER = 1;

CREATE OR REPLACE STAGE salesforce_stage

FILE_FORMAT = salesforce_csv;

Upload the CSV and load it:

plaintextPUT file:///local/path/to/opportunity.csv @salesforce_stage;

COPY INTO SALESFORCE_DB.SALESFORCE_SCHEMA.OPPORTUNITY

FROM @salesforce_stage/opportunity.csv

FILE_FORMAT = (FORMAT_NAME = 'salesforce_csv')

ON_ERROR = 'CONTINUE';

For incremental loads, use MERGE instead of COPY to upsert rather than replace:

plaintextMERGE INTO SALESFORCE_DB.SALESFORCE_SCHEMA.OPPORTUNITY AS target

USING (

SELECT * FROM @salesforce_stage/opportunity.csv

(FILE_FORMAT => 'salesforce_csv')

) AS source

ON target.Id = source.Id

WHEN MATCHED THEN UPDATE SET

target.Name = source.Name,

target.Amount = source.Amount,

target.StageName = source.StageName,

target.CloseDate = source.CloseDate

WHEN NOT MATCHED THEN INSERT (Id, Name, Amount, StageName, CloseDate)

VALUES (source.Id, source.Name, source.Amount,

source.StageName, source.CloseDate);

Limitations of the DIY approach

- Schema drift: A Salesforce admin adding or renaming a custom field breaks your pipeline silently unless you add schema detection logic.

- Soft deletes: Records moved to the Salesforce Recycle Bin (IsDeleted = true) do not appear in standard query results. You must query IsDeleted = true separately and handle deletes in your Snowflake table manually.

- Formula fields: SystemModStamp does not update when formula inputs change, so incremental queries miss formula field updates. A separate full-refresh job for formula-heavy objects is required.

- Maintenance: API authentication tokens expire, Salesforce API versions deprecate, and schema changes accumulate. All of this requires ongoing engineering attention.

Method 4: Using Data Loader for Salesforce to Snowflake Integration

Salesforce Data Loader is a client application for bulk import and export of Salesforce data. It supports up to 5 million records per operation and runs on Windows and macOS. This method is appropriate for one-time historical exports or for populating initial data in Snowflake before switching to a continuous sync method.

Data Loader is not suitable for ongoing automated pipelines without significant scripting effort. Use it for one-time exports, not production sync.

Steps

- Install Data Loader from the Salesforce downloads page. On macOS, run the installer.command file after download.

- Authenticate using your Salesforce integration user credentials and the Connected App OAuth settings.

- Use the Export operation to run a SOQL query against the objects you need. Export results to CSV.

- Load the exported CSV into Snowflake using the file staging approach from Method 2, or use the Snowflake web interface to upload files directly into a table.

For automation, Data Loader supports batch mode and a CLI interface. However, error recovery for partial failures and schema change handling require custom scripting on top.

Method Comparison

| Method | Sync type | Setup complexity | Formula fields | Maintenance | Best for |

|---|---|---|---|---|---|

| Estuary | Continuous incremental | Low (no-code UI) | Auto-refreshed daily | Minimal | Production pipelines, real-time dashboards |

| Bulk API (DIY) | Scheduled batch | High (custom code) | Manual refresh required | High | Engineering teams wanting full control |

| Tableau CRM Sync Out | Scheduled batch | Medium (CRM Analytics license) | Limited support | Low (but license cost) | Teams already on CRM Analytics |

| Data Loader | Manual / scripted | Low to configure | Not supported | High (manual runs) | One-time exports, historical loads |

Competitive landscape note

For teams evaluating managed connectors, the main alternatives to Estuary are Fivetran and Airbyte. Fivetran's Salesforce connector is mature and fully managed but pricing is consumption-based (Monthly Active Rows) and has become significantly more expensive in 2025-2026 for high-volume Salesforce orgs. Salesforce is one of the connectors where MAR costs are frequently higher than expected because Activity, Event, and EmailMessage objects generate far more rows than core CRM objects.

Airbyte offers a lower-cost alternative with a self-hosted option, though its Salesforce connector requires more configuration and schema drift handling is less automatic.

Estuary's free tier is available without a credit card. For teams evaluating Fivetran or Airbyte, running Estuary in parallel on a subset of objects is a practical way to compare before committing.

Common Issues and Fixes

Authentication fails after initial setup

OAuth tokens expire. Verify your Connected App has the refresh_token scope enabled. If using username/password authentication with a security token, confirm the token has not been reset by a Salesforce password change (Salesforce resets the security token any time the account password changes).

Formula fields show stale values in Snowflake

This is expected behavior when using any connector that syncs incrementally by SystemModStamp. Salesforce does not update SystemModStamp when formula inputs change. Use Estuary (auto-schedules formula field refreshes) or add a separate full-refresh job for formula-heavy objects alongside your incremental sync.

Soft-deleted records appear in Salesforce but not in Snowflake

Salesforce moves deleted records to the Recycle Bin with IsDeleted = true. Standard queries exclude them. To capture deletes, you must explicitly query IsDeleted = true objects or use a connector that handles delete tracking. Estuary's standard update mode handles deletes: when a record is deleted in Salesforce, the Snowflake row is updated accordingly.

Snowflake MERGE performance is slow on large tables

Snowflake's micro-partition architecture means merge performance depends heavily on the key used for the materialization. A key that is primarily chronological (such as a combination of date and record ID) performs significantly better than a single ID key, because Snowflake can prune micro-partitions that are not relevant to the transaction. This applies to all connectors. If you are using Estuary, review partition key guidance in the Snowflake materialization connector docs.

Snowflake warehouse running continuously and increasing compute costs

Configure an update delay in the Estuary Snowflake materialization settings. This pauses the materialization task for the configured duration between processing cycles. With a 1-2 hour update delay, the warehouse auto-suspends between batches. Snowflake's minimum billing granularity is one minute, so this reduces compute costs substantially for non-real-time use cases.

Standard object missing from Estuary discovery

The Estuary Salesforce connector maintains an internal list of standard objects for discovery efficiency. If a standard object you need does not appear after connecting, contact Estuary support via Slack at go.estuary.dev/slack or email support@estuary.dev. The object will be added to the connector's discovery list.

Conclusion

The right method for connecting Salesforce to Snowflake depends on what you are optimizing for.

If you need a production pipeline that stays in sync continuously, handles schema changes automatically, captures formula field updates, and requires minimal ongoing maintenance, Estuary is the most complete no-code option. The free tier is available at dashboard.estuary.dev with no credit card required.

If you have engineering resources and want full control over the pipeline, the Salesforce Bulk API 2.0 is capable and adds no licensing cost. The trade-off is everything that managed connectors handle for free: scheduling, error recovery, schema drift, formula field handling, and delete tracking.

If you are already on CRM Analytics, Tableau CRM Sync Out provides a native push to Snowflake for your analytics datasets without additional tooling, though it is not a general-purpose Salesforce object replication solution.

Ready to connect Salesforce to Snowflake? Start free at dashboard.estuary.dev

Related Articles

FAQs

Does connecting Salesforce to Snowflake require Salesforce Enterprise Edition?

How does Estuary handle formula fields when syncing Salesforce to Snowflake?

How do I handle Salesforce soft-deleted records in Snowflake?

About the author

With over 15 years in data engineering, a seasoned expert in driving growth for early-stage data companies, focusing on strategies that attract customers and users. Extensive writing provides insights to help companies scale efficiently and effectively in an evolving data landscape.