Data integration has become one of the most operationally critical activities in modern organizations. As businesses adopt more SaaS tools, cloud platforms, and database systems, the number of disconnected data sources grows faster than most teams can manage. The result is fragmented data, inconsistent reporting, and decisions made on incomplete information.

The pressure to fix this has intensified. More than half of surveyed executives in an IBM Institute for Business Value study said difficulties integrating AI infrastructure with legacy systems derailed their target outcomes. Data integration challenges are no longer just a pipeline problem. They are a direct barrier to AI adoption, real-time analytics, and the kind of cross-system visibility that modern businesses depend on.

Every business faces these challenges differently. The specific mix of source systems, data volumes, latency requirements, and compliance obligations varies, but the underlying problems recur across organizations of every size and industry.

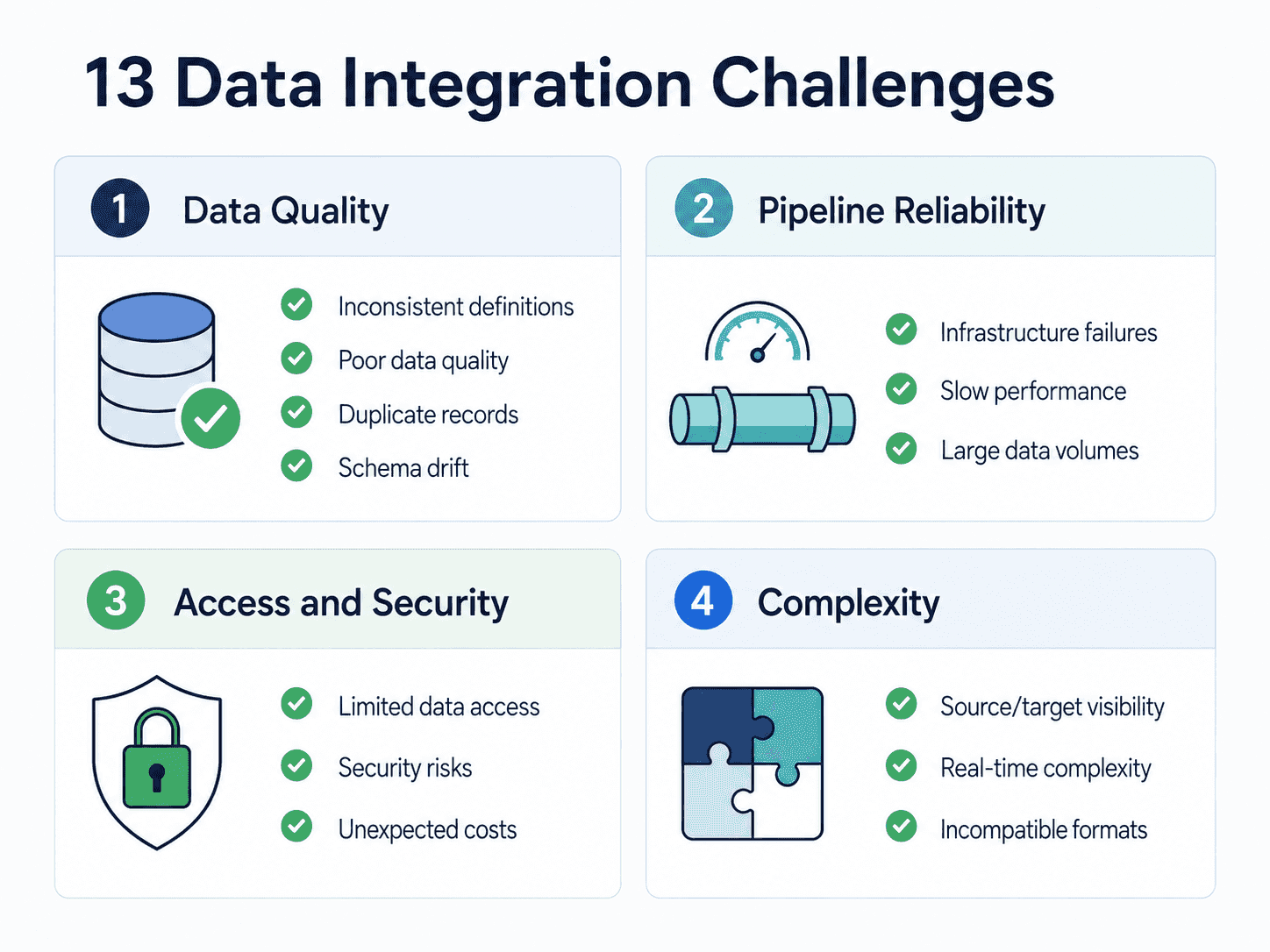

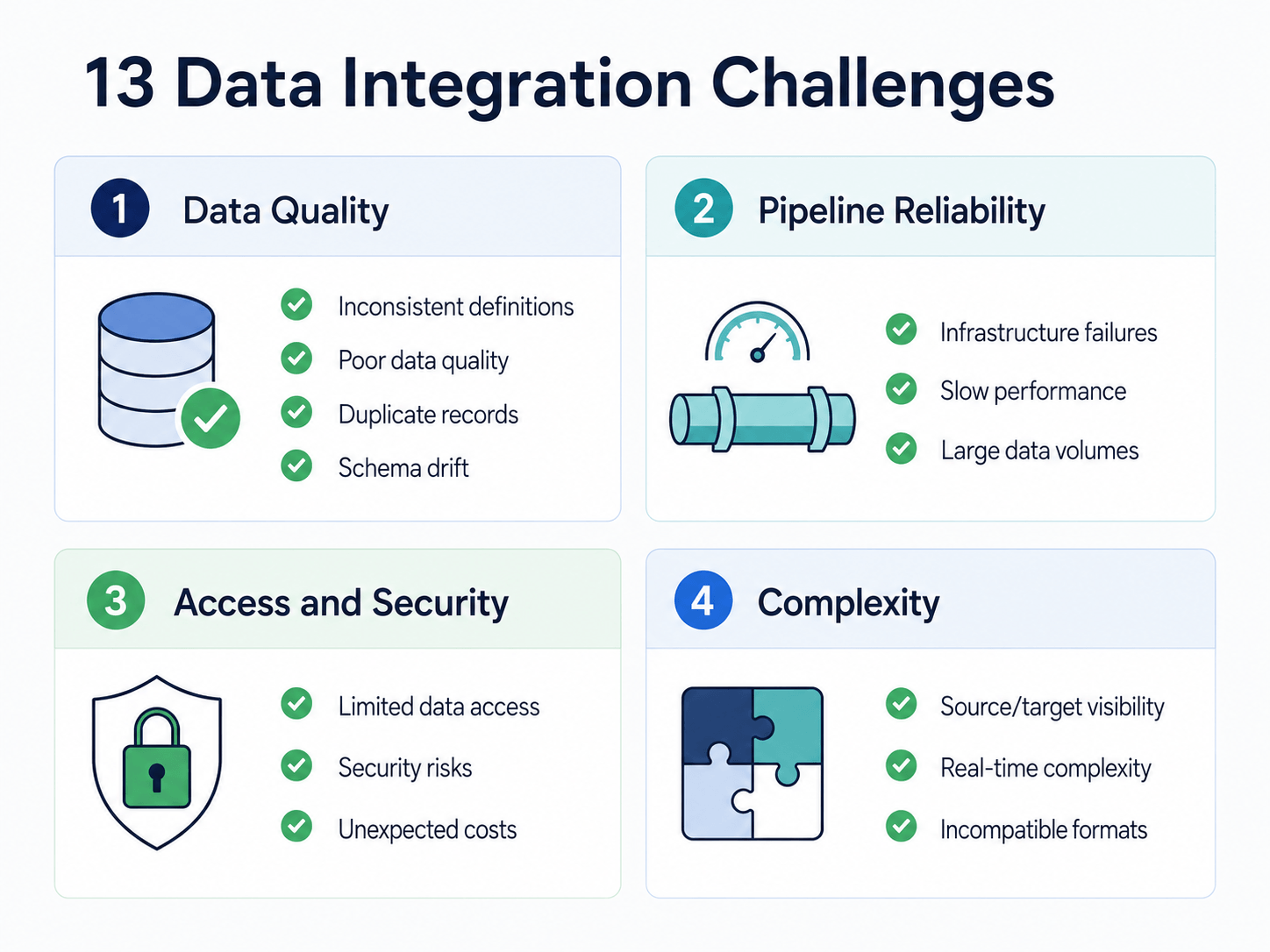

This guide covers the 13 most common data integration challenges, why each one occurs, and what teams can do to resolve or prevent them. The challenges span fragmented sources, inconsistent data quality, real-time complexity, schema drift, duplicate records, security gaps, and the growing difficulty of building pipelines that can support AI workloads. Each section includes practical solutions rather than generic advice.

Data Integration Challenges at a Glance

Before going into each challenge in detail, here’s a quick view of the most common data integration problems, why they matter, and how teams can prevent or solve them.

| Challenge | Why it matters | How to solve it |
|---|---|---|
| Inconsistent data definitions | Teams interpret the same metrics differently, causing conflicting reports and dashboards. | Create shared definitions, assign data owners, and document key metrics. |
| Poor visibility into source and target systems | Pipelines can miss records, map fields incorrectly, or fail when data changes. | Document schemas, keys, update patterns, API limits, and destination requirements. |
| Incompatible schemas and data formats | Different formats, data types, and structures can cause failed loads or incorrect joins. | Profile source data, map fields carefully, standardize formats, and monitor schema changes. |
| Large data volumes | Full reloads become slow, expensive, and unreliable as data grows. | Use incremental loading, CDC, partitioning, parallel processing, checkpoints, and recovery. |
| Pipeline reliability and infrastructure failures | Failed pipelines can delay dashboards, AI workflows, and operational systems. | Add monitoring, alerts, retries, checkpointing, ownership, and recovery plans. |
| Unexpected costs | Compute, storage, egress, retries, and maintenance can grow quickly at production scale. | Track usage, avoid unnecessary full refreshes, set alerts, and match sync frequency to business need. |
| Limited data access across systems | Important data remains locked in databases, SaaS tools, APIs, or legacy systems. | Map critical sources, permissions, owners, API limits, and use governed connectors. |
| Poor data quality | Missing, duplicate, stale, or inconsistent data leads to unreliable reports and AI outputs. | Profile data, validate records, standardize fields, monitor quality metrics, and fix issues at the source. |
| Security, privacy, and compliance risks | Sensitive data moves across systems, clouds, teams, and regions. | Use encryption, least-privilege access, masking, audit logs, and compliant deployment models. |
| Duplicate and conflicting records | Duplicate customers, accounts, or transactions distort metrics and customer views. | Define entity ownership, matching rules, deduplication logic, lineage, and source-of-truth rules. |
| Slow pipeline performance | Delayed pipelines reduce the value of reporting, AI, and operational workflows. | Replace full reloads with incremental sync, optimize queries, partition data, and monitor latency. |
| Real-time integration complexity | Low-latency pipelines are harder to operate reliably than batch jobs. | Use CDC, checkpoints, recovery, lag monitoring, schema handling, and right-time freshness decisions. |
| Schema drift and breaking source changes | Source changes can break pipelines or silently produce incorrect downstream data. | Use schema-aware tools, alert on schema changes, maintain data contracts, and test downstream models. |

What Is Data Integration?

Data integration is the process of combining data from multiple sources into a consistent, usable view for analytics, AI, operations, and reporting. It connects systems such as databases, SaaS applications, files, APIs, warehouses, and event streams so teams can work from trusted data instead of isolated snapshots.

Understanding which method fits your use case, whether ETL, ELT, CDC, or streaming, is the starting point for solving most of the challenges below. For a deeper breakdown of methods, architectures, tools, and best practices, see our full guide to data integration.

13 Data Integration Challenges & Their Tested Solutions

Most data integration challenges trace back to the same root causes: data that comes from too many different places, moves at different speeds, uses different formats, and is owned by different teams with different priorities. The 13 challenges below cover the most common points of failure, why they happen, and what to do about them.

1. Inconsistent Data Definitions Across Teams

One of the most common data integration challenges is that different teams define and use the same data differently. Sales may define an “active customer” one way, finance may define it another way, and product may use a completely different source of truth.

When these definitions are not aligned before integration, the result is inconsistent reporting, conflicting dashboards, and teams debating numbers instead of acting on them.

Why it happens:

Data is often created and managed inside separate systems owned by different teams. Each team builds its own naming conventions, business rules, metrics, and reporting logic. When that data is later integrated, those differences become visible.

How to solve it:

- Define shared business terms before building pipelines.

- Create ownership for key datasets and metrics.

- Document metric definitions, source systems, update frequency, and transformation rules.

- Use data governance and stewardship to align business and technical teams.

- Build a data catalog or glossary for important fields, entities, and KPIs.
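
As a rough illustration of the glossary idea, the sketch below keeps one definition per business term and rejects a conflicting redefinition instead of silently overwriting it. The `MetricDefinition` and `Glossary` names, the fields, and the sample rule are all hypothetical; a real data catalog would add versioning, review, and persistence.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class MetricDefinition:
    name: str            # shared business term, e.g. "active_customer"
    owner: str           # team accountable for the definition
    source_system: str   # where the underlying data lives
    rule: str            # human-readable business rule


class Glossary:
    """In-memory glossary that refuses silent redefinition of a term."""

    def __init__(self) -> None:
        self._terms: dict[str, MetricDefinition] = {}

    def register(self, definition: MetricDefinition) -> None:
        existing = self._terms.get(definition.name)
        if existing is not None and existing != definition:
            # A second, different definition is a conflict to resolve, not a write.
            raise ValueError(
                f"'{definition.name}' is already defined by {existing.owner}"
            )
        self._terms[definition.name] = definition

    def lookup(self, name: str) -> MetricDefinition:
        return self._terms[name]


glossary = Glossary()
glossary.register(
    MetricDefinition(
        name="active_customer",
        owner="finance",
        source_system="billing_db",
        rule="at least one paid invoice in the last 90 days",
    )
)
```

If sales later tries to register "active_customer" with a different rule, `register` raises, which forces the conversation about definitions to happen before the pipeline is built.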

Prevention tip:

Do not treat data integration as only a technical pipeline project. Align definitions, ownership, and business rules first so the integrated data actually means the same thing to every team.

2. Poor Visibility Into Source and Target Systems

Data integration becomes difficult when teams do not fully understand how source and target systems store, update, and expose data. A source database may update records continuously, a SaaS app may limit API access, and a target warehouse may require different data types, keys, or loading patterns.

Without this visibility, teams can build pipelines that miss records, overload source systems, map fields incorrectly, or fail when data changes.

Why it happens:

Source and target systems are often owned by different teams, documented inconsistently, or built for different purposes. Operational systems are optimized for transactions, while analytical systems are optimized for querying and aggregation. These differences affect how data should be extracted, transformed, and loaded.

How to solve it:

- Document source schemas, primary keys, update patterns, and API limits.

- Understand how deletes, updates, timestamps, and soft-deleted records are represented.

- Review target system requirements for data types, keys, deduplication, and merge behavior.

- Use data mapping tools or schema discovery to understand relationships between fields.

- Involve system owners early so pipeline assumptions can be validated before production.

Prevention tip:

Before building the pipeline, map how data is created, changed, deleted, and consumed. Most integration issues come from assumptions about systems that were never checked.

3. Incompatible Schemas and Data Formats

Data integration gets harder when source systems store similar information in different structures. One system may store customer IDs as numbers, another as text. One API may return nested JSON, while another database uses relational tables. Date formats, naming conventions, currencies, and field types may also vary across systems.

If these differences are not handled carefully, pipelines can produce incorrect joins, failed loads, broken dashboards, or unreliable analytics.

Why it happens:

Different systems are designed for different purposes. CRMs, databases, SaaS apps, APIs, and file exports all have their own schemas, data types, validation rules, and update patterns. As those systems evolve, formats can drift even further apart.

How to solve it:

- Profile source data before integration.

- Map fields, data types, keys, and relationships before loading data into the target system.

- Standardize naming conventions, timestamp formats, currencies, and IDs.

- Use ETL, ELT, or managed integration tools to handle mapping and transformations consistently.

- Test transformations with sample records before running full production syncs.

- Monitor for schema changes so new or altered fields do not break downstream systems.
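
The standardization step can be sketched in a few lines. The example below assumes two hypothetical sources, a CRM that stores numeric IDs and US-style dates and a billing system that stores text IDs and ISO-like dates, and normalizes both to the same shape. It also assumes source timestamps are already in UTC, which would need checking against real systems.

```python
from datetime import datetime, timezone


def normalize_customer_id(raw) -> str:
    """Represent IDs as trimmed strings so 42 and ' 42 ' join correctly."""
    return str(raw).strip()


def normalize_timestamp(raw: str, fmt: str) -> str:
    """Parse a source-specific format and emit ISO 8601 with a UTC offset.

    Assumes the source value is already in UTC; adjust if it is not.
    """
    parsed = datetime.strptime(raw, fmt).replace(tzinfo=timezone.utc)
    return parsed.isoformat()


# Hypothetical rows describing the same customer in two systems.
crm_row = {"customer_id": 42, "created": "03/15/2024 14:30"}
billing_row = {"customer_id": " 42 ", "created": "2024-03-15 14:30:00"}

crm_clean = {
    "customer_id": normalize_customer_id(crm_row["customer_id"]),
    "created_at": normalize_timestamp(crm_row["created"], "%m/%d/%Y %H:%M"),
}
billing_clean = {
    "customer_id": normalize_customer_id(billing_row["customer_id"]),
    "created_at": normalize_timestamp(billing_row["created"], "%Y-%m-%d %H:%M:%S"),
}
```

After normalization both rows carry the same ID and timestamp representation, so a join on `customer_id` matches where the raw values would not.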

Prevention tip:

Do not assume that fields with similar names mean the same thing. Validate structure, meaning, type, and business logic before merging data from different systems.

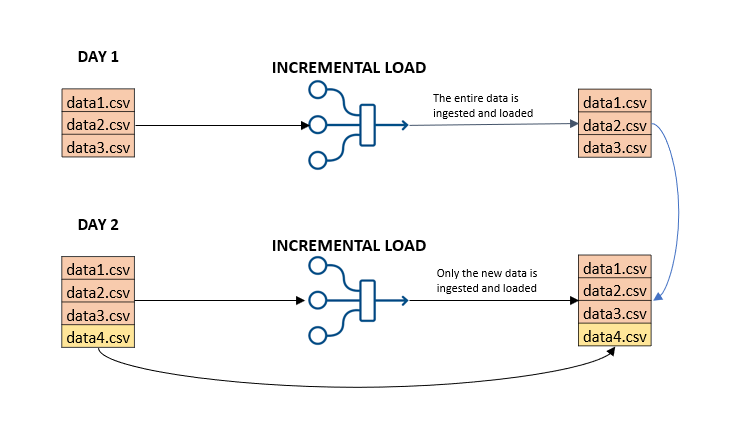

4. Scaling Pipelines for Large Data Volumes

As data volume grows, integration pipelines can slow down, miss processing windows, or fail under load. Full-table extracts that worked for small datasets can become expensive and unreliable when tables reach millions or billions of rows.

Large volumes also increase the risk of timeout errors, duplicate loads, delayed dashboards, and higher warehouse or infrastructure costs.

Why it happens:

Many pipelines are designed around full reloads, scheduled batch jobs, or single-threaded processes. As source data grows, these approaches require more compute, longer processing time, and more manual tuning.

How to solve it:

- Use incremental loading instead of repeatedly copying full datasets.

- Use CDC when updates, inserts, and deletes need to be captured continuously.

- Partition large datasets by time, key range, or logical grouping.

- Use parallel processing where supported.

- Monitor throughput, lag, retries, and failed records.

- Choose tools that support backfills, checkpoints, and recovery for large datasets.
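
To make incremental loading concrete, here is a minimal sketch that uses an `updated_at` watermark as its checkpoint. The `orders` table, its columns, and the in-memory SQLite source are assumptions chosen so the example runs on its own. Note that a timestamp watermark does not see hard deletes and can miss rows that share the checkpoint timestamp, which is one reason log-based CDC is often preferred.

```python
import sqlite3

source = sqlite3.connect(":memory:")
source.execute(
    "CREATE TABLE orders (id INTEGER PRIMARY KEY, amount REAL, updated_at TEXT)"
)
source.executemany(
    "INSERT INTO orders VALUES (?, ?, ?)",
    [(1, 10.0, "2024-01-01T00:00:00"), (2, 25.0, "2024-01-02T00:00:00")],
)

target: dict[int, tuple] = {}   # stand-in for a destination table keyed by id
state = {"watermark": ""}       # checkpoint: highest updated_at already loaded


def incremental_sync() -> int:
    """Copy only rows changed since the last checkpoint; return rows moved."""
    rows = source.execute(
        "SELECT id, amount, updated_at FROM orders "
        "WHERE updated_at > ? ORDER BY updated_at",
        (state["watermark"],),
    ).fetchall()
    for row in rows:
        target[row[0]] = row            # upsert by primary key, so reruns are safe
        state["watermark"] = row[2]     # advance the checkpoint as rows are applied
    return len(rows)


first_run = incremental_sync()          # loads both existing rows
source.execute(
    "UPDATE orders SET amount = 30.0, updated_at = '2024-01-03T00:00:00' WHERE id = 2"
)
second_run = incremental_sync()         # loads only the changed row
```

The second run moves one row instead of the whole table, which is the property that keeps cost and runtime roughly proportional to change volume rather than table size.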

Real-world example: Hayden AI reduced replication lag from 24 hours to about 1 hour, completed a 5TB backfill, and reduced monthly replication costs by 60% with Estuary. This is a strong example of why incremental movement, backfills, and reliable recovery matter when data volume grows.

Prevention tip:

Design for growth from the beginning. A pipeline that only works at today’s data volume may become a bottleneck as soon as usage, sources, or historical retention grows.

5. Pipeline Reliability and Infrastructure Failures

Data integration pipelines depend on several moving parts: source systems, networks, connectors, processing jobs, destination systems, credentials, and monitoring. If any part fails, data can arrive late, partially load, duplicate, or stop syncing entirely.

This becomes especially risky when pipelines support dashboards, AI workflows, operational systems, or customer-facing applications that depend on fresh data.

Why it happens:

Integration infrastructure often grows over time through scripts, schedulers, connectors, queues, and manual fixes. Without clear ownership, monitoring, retries, and recovery logic, small failures can become production incidents.

How to solve it:

- Monitor pipeline health, latency, throughput, and error rates.

- Add alerting for failed syncs, delayed data, authentication errors, and destination load failures.

- Use checkpointing and recovery so pipelines can resume safely after interruptions.

- Avoid relying on one-off scripts without logging, retries, or ownership.

- Validate network access, credentials, source permissions, and destination limits before production.

- Document who owns each pipeline and what happens during failure or rollback.
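
Retries and logging are the pieces most often missing from one-off scripts. The sketch below wraps a task in exponential backoff and re-raises after the final attempt so that alerting can pick up the failure. `run_with_retries` and `flaky_sync` are hypothetical names, and the tiny delay is only there to keep the example fast.

```python
import logging
import time

logging.basicConfig(level=logging.INFO)
log = logging.getLogger("pipeline")


def run_with_retries(task, max_attempts: int = 4, base_delay: float = 0.01):
    """Run task(); on failure wait base_delay * 2**(attempt - 1) and retry."""
    for attempt in range(1, max_attempts + 1):
        try:
            return task()
        except Exception as exc:
            log.warning("attempt %d/%d failed: %s", attempt, max_attempts, exc)
            if attempt == max_attempts:
                raise                   # surface the failure so alerting can fire
            time.sleep(base_delay * 2 ** (attempt - 1))


calls = {"count": 0}


def flaky_sync() -> str:
    """Hypothetical sync step that fails twice before succeeding."""
    calls["count"] += 1
    if calls["count"] < 3:
        raise ConnectionError("destination unavailable")
    return "synced"


result = run_with_retries(flaky_sync)
```

Retrying is only safe when the task is idempotent, which is why retries are usually paired with checkpointing or upserts rather than blind appends.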

Prevention tip:

Treat data pipelines like production systems. If a pipeline affects reporting, AI, operations, or customer workflows, it needs monitoring, alerting, retries, and a clear recovery plan.

6. Unexpected Data Integration Costs

Data integration costs can grow quickly when pipelines move more data than necessary, reprocess full datasets, run inefficient warehouse queries, or require constant engineering maintenance. Costs also increase when teams add new sources, destinations, transformations, or monitoring after the pipeline is already live.

Why it happens:

Many teams underestimate the cost of compute, storage, egress, connector pricing, retries, failed jobs, and manual maintenance. A pipeline that looks cheap during a proof of concept can become expensive at production scale.

How to solve it:

- Track data volume, sync frequency, compute usage, and destination costs.

- Use incremental loading or CDC instead of full refreshes where possible.

- Match sync frequency to business need instead of making every pipeline real-time.

- Set budgets and alerts for warehouse, cloud, and integration platform usage.

- Review pipelines regularly to remove unused fields, tables, or destinations.

- Include maintenance time and engineering overhead in total cost calculations.
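
A back-of-the-envelope calculation shows why full refreshes dominate cost. The function below estimates rows transferred in a 30-day month; the table size, change rate, and sync frequency are made-up inputs, and real pricing depends on the platform and on bytes rather than rows.

```python
def rows_moved_per_month(
    table_rows: int,
    daily_change_rate: float,
    syncs_per_day: int,
    full_refresh: bool,
) -> int:
    """Estimate rows transferred in a 30-day month."""
    if full_refresh:
        # Every sync copies the whole table, so frequency multiplies volume.
        return table_rows * syncs_per_day * 30
    # Incremental: only changed rows move, regardless of sync frequency.
    return round(table_rows * daily_change_rate * 30)


# Illustrative inputs: 10M-row table, 2% of rows change per day, hourly syncs.
full = rows_moved_per_month(10_000_000, 0.02, 24, full_refresh=True)
incremental = rows_moved_per_month(10_000_000, 0.02, 24, full_refresh=False)
ratio = full / incremental
```

With these assumed inputs the full-refresh pipeline moves 1,200 times as many rows as the incremental one, and the gap widens every time sync frequency increases.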

Real-world example: Xometry reduced data integration costs by 60% after moving to Estuary’s private deployment, while gaining real-time streaming into Snowflake and better visibility across analytics, sales, and operations.

Prevention tip:

Choose the right freshness level for each use case. Real-time is valuable when freshness affects decisions, but batch or micro-batch may be more cost-effective for lower-urgency reporting.

7. Limited Data Access Across Systems

Data integration becomes difficult when important data is locked inside separate databases, SaaS tools, spreadsheets, APIs, or legacy systems. Teams may know the data exists, but they cannot access it reliably or combine it with other sources.

This leads to manual exports, delayed reports, incomplete analysis, and duplicated work across departments.

Why it happens:

Different systems have different owners, permissions, APIs, data models, and security rules. Some legacy systems are hard to connect to, while SaaS tools may have API rate limits or restricted export options.

How to solve it:

- Identify the systems that contain business-critical data.

- Document access requirements, owners, permissions, and API limits.

- Use connectors or integration tools that support the required sources and destinations.

- Create governed access patterns instead of relying on ad hoc exports.

- Centralize important data where appropriate, or use virtualization when data cannot be moved.

- Review permissions so teams can access the data they need without exposing sensitive information.

Prevention tip:

Do not wait until a reporting or AI project starts to solve access issues. Map critical data sources and permissions early so teams are not blocked later.

8. Poor Data Quality

Poor data quality is one of the most common data integration challenges. When data comes from different systems, it may include missing values, duplicates, inconsistent formats, stale records, invalid IDs, or conflicting definitions.

If low-quality data flows into dashboards, AI models, or operational systems, teams may make decisions based on incorrect or incomplete information.

Why it happens:

Source systems often use different validation rules, naming conventions, update frequencies, and business logic. Some systems allow free-text entry, manual edits, or incomplete records, which creates quality problems before integration even begins.

How to solve it:

- Profile source data before building pipelines.

- Standardize field names, formats, IDs, timestamps, and units.

- Add validation checks for nulls, duplicates, invalid values, and broken relationships.

- Monitor quality metrics like freshness, completeness, uniqueness, and accuracy.

- Define ownership for key datasets and quality issues.

- Fix recurring problems at the source where possible, not only downstream.
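
The validation checks in the list above can be expressed as a small function that runs before data is loaded. This sketch checks a hypothetical `orders` batch for nulls, duplicate IDs, invalid values, and references to unknown customers; the field names and rules are assumptions for illustration.

```python
def validate(orders: list[dict], known_customer_ids: set[str]) -> dict[str, list]:
    """Return the offending order IDs for each failed check."""
    issues: dict[str, list] = {
        "null_amount": [],
        "duplicate_id": [],
        "negative_amount": [],
        "orphan_customer": [],
    }
    seen: set[str] = set()
    for order in orders:
        oid = order["order_id"]
        if oid in seen:
            issues["duplicate_id"].append(oid)
        seen.add(oid)
        amount = order.get("amount")
        if amount is None:
            issues["null_amount"].append(oid)
        elif amount < 0:
            issues["negative_amount"].append(oid)
        if order.get("customer_id") not in known_customer_ids:
            # Broken relationship: the order points at a customer we do not have.
            issues["orphan_customer"].append(oid)
    return issues


orders = [
    {"order_id": "o1", "customer_id": "c1", "amount": 20.0},
    {"order_id": "o2", "customer_id": "c9", "amount": None},   # null + orphan
    {"order_id": "o1", "customer_id": "c1", "amount": 20.0},   # duplicate
    {"order_id": "o3", "customer_id": "c2", "amount": -5.0},   # invalid value
]
report = validate(orders, known_customer_ids={"c1", "c2"})
```

Counting the entries in each list over time gives simple completeness and uniqueness metrics that can be monitored alongside pipeline health.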

Prevention tip:

Treat data quality as part of pipeline design, not a cleanup step after integration. Bad data moves faster when pipelines are automated.

9. Security, Privacy, and Compliance Risks

Data integration pipelines often move sensitive information across systems, clouds, teams, and regions. This can create security and compliance risks if access controls, encryption, masking, and auditability are not handled properly.

The risk is higher for customer data, financial records, healthcare data, employee information, and regulated workloads.

Why it happens:

Data moves through multiple systems during integration: sources, connectors, processing layers, destinations, logs, and monitoring tools. Each handoff can create exposure if permissions, credentials, encryption, or governance controls are weak.

How to solve it:

- Encrypt data in transit and at rest.

- Use role-based access control and least-privilege permissions.

- Store credentials in secret managers, not scripts or shared files.

- Mask or exclude sensitive fields when they are not needed downstream.

- Maintain audit logs for data access, pipeline changes, and failures.

- Confirm data residency, retention, and compliance requirements before choosing a deployment model.
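
Masking or excluding sensitive fields can happen inside the pipeline, before data reaches the destination. The sketch below drops one field entirely and replaces another with a keyed hash so it can still be used for joins. The field names and the hard-coded key are placeholders; as the list above says, a real key would come from a secret manager.

```python
import hashlib
import hmac

# Assumption: in practice this key comes from a secret manager, never source code.
PSEUDONYM_KEY = b"example-key-do-not-use"

DROP_FIELDS = {"ssn"}            # not needed downstream, so it never leaves the pipeline
PSEUDONYMIZE_FIELDS = {"email"}  # needed for joins, but not in readable form


def mask_record(record: dict) -> dict:
    """Return a copy of record with sensitive fields dropped or pseudonymized."""
    masked = {}
    for field, value in record.items():
        if field in DROP_FIELDS:
            continue
        if field in PSEUDONYMIZE_FIELDS:
            digest = hmac.new(PSEUDONYM_KEY, str(value).encode(), hashlib.sha256)
            masked[field] = digest.hexdigest()
        else:
            masked[field] = value
    return masked


safe = mask_record(
    {"id": 7, "email": "ana@example.com", "ssn": "000-00-0000", "plan": "pro"}
)
```

A keyed hash (HMAC) is used rather than a plain hash so that someone without the key cannot confirm a guess by hashing candidate emails themselves.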

Prevention tip:

Build security into the integration design from the beginning. Retrofitting access controls and compliance rules after data is already moving is harder and riskier.

10. Duplicate and Conflicting Records

Duplicate records make integrated data unreliable. The same customer, product, transaction, or account may appear multiple times with slight variations across systems. Conflicting records are even harder because two systems may disagree about which value is correct.

This can break reporting, inflate metrics, create poor customer experiences, and make AI or analytics outputs less trustworthy.

Why it happens:

Duplicates often come from manual data entry, inconsistent IDs, system migrations, separate departmental tools, or weak matching logic. Conflicts happen when different systems update the same entity independently.

How to solve it:

- Define matching rules for key entities like customers, accounts, products, and transactions.

- Use stable identifiers where possible, such as customer IDs, account IDs, or transaction IDs.

- Add deduplication logic before data is used in reporting or operations.

- Track data lineage so teams know which system produced each record.

- Define source-of-truth rules for conflicting fields.

- Monitor duplicate rates and investigate recurring causes.
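
Matching rules and source-of-truth rules can be combined in a short deduplication pass. This sketch treats records with the same normalized email as one customer and keeps the version from the most trusted source. The priority order and the email-only matching rule are assumptions; real entity resolution usually needs more than one attribute.

```python
# Assumed trust order: lower number wins when two systems disagree.
SOURCE_PRIORITY = {"billing": 0, "crm": 1, "support": 2}


def match_key(record: dict) -> str:
    """Matching rule: same customer if emails match ignoring case and whitespace."""
    return record["email"].strip().lower()


def deduplicate(records: list[dict]) -> list[dict]:
    """Keep one record per match key, preferring the most trusted source."""
    best: dict[str, dict] = {}
    for record in records:
        key = match_key(record)
        current = best.get(key)
        if (
            current is None
            or SOURCE_PRIORITY[record["source"]] < SOURCE_PRIORITY[current["source"]]
        ):
            best[key] = record
    return list(best.values())


records = [
    {"email": "Ana@Example.com ", "name": "Ana P.", "source": "crm"},
    {"email": "ana@example.com", "name": "Ana Perez", "source": "billing"},
    {"email": "li@example.com", "name": "Li", "source": "support"},
]
golden = deduplicate(records)
```

Each surviving record keeps its `source` field, which gives a basic form of lineage: anyone reading the result can see which system produced the value.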

Prevention tip:

Decide which system owns each core entity before integration. Without ownership rules, duplicates and conflicts will keep returning.

11. Slow Pipeline Performance

Slow pipelines delay reports, dashboards, AI workflows, and operational systems. Even if the data eventually arrives, long processing times can make it less useful for time-sensitive decisions.

Performance issues can also increase cost when jobs run longer, consume more compute, or repeatedly fail and retry.

Why it happens:

Common causes include full-table reloads, inefficient queries, poor partitioning, large transformations, source system bottlenecks, destination load limits, network latency, and under-provisioned infrastructure.

How to solve it:

- Replace full reloads with incremental loading or CDC where possible.

- Partition large datasets by time, key range, or business domain.

- Optimize queries and transformations before scaling infrastructure.

- Monitor pipeline latency, throughput, retries, and destination load times.

- Use parallel processing where supported.

- Separate heavy transformation workloads from source systems when possible.
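
Partitioning and parallel processing work together: once a large extract is split into independent ranges, the ranges can be loaded concurrently. The sketch below splits a date range into daily partitions and processes them with a thread pool. `load_partition` is a hypothetical stand-in that returns a fake row count, and the worker count is arbitrary.

```python
from concurrent.futures import ThreadPoolExecutor
from datetime import date, timedelta


def daily_partitions(start: date, end: date) -> list[tuple[date, date]]:
    """Split [start, end) into one half-open range per day."""
    days = (end - start).days
    return [(start + timedelta(d), start + timedelta(d + 1)) for d in range(days)]


def load_partition(bounds: tuple[date, date]) -> int:
    """Hypothetical loader: a real one would extract rows in this range.

    Here it returns a fake row count so the sketch stays runnable.
    """
    lower, upper = bounds
    return 100 * (upper - lower).days


partitions = daily_partitions(date(2024, 1, 1), date(2024, 1, 8))
with ThreadPoolExecutor(max_workers=4) as pool:
    # map preserves partition order even though work runs concurrently.
    counts = list(pool.map(load_partition, partitions))
total_rows = sum(counts)
```

Half-open ranges avoid loading boundary rows twice, and a failed partition can be retried on its own instead of restarting the whole job. Worker count should respect source and destination limits, since parallelism can overload either side.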

Prevention tip:

Measure performance before production and keep measuring after launch. Pipeline performance changes as data volume, schemas, and business usage grow.

12. Real-Time Integration Complexity

Real-time integration is valuable when data freshness affects decisions, customer experiences, fraud detection, inventory, AI workflows, or operational systems. But real-time pipelines are harder to operate than simple batch jobs because they run continuously and must handle constant change.

The challenge is not just moving data quickly. It is moving data reliably, in order, without missing updates, duplicating records, or breaking when schemas change.

Why it happens:

Real-time pipelines must handle source changes, retries, ordering, deletes, backfills, schema evolution, network interruptions, and destination failures. Event-streaming systems can also require specialized infrastructure and operational expertise.

How to solve it:

- Use CDC for database changes instead of polling full tables.

- Choose the right freshness level; not every pipeline needs sub-second latency.

- Use checkpointing and recovery to avoid missing or duplicating data.

- Monitor lag, failure rates, throughput, and schema changes.

- Plan for backfills, replays, and destination outages.

- Use managed platforms when your team does not want to operate Kafka, Debezium, or custom streaming infrastructure.
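
Checkpointing is what keeps a continuous pipeline from duplicating or missing changes after a restart. The sketch below applies ordered change events to an in-memory table and skips anything at or below the last applied offset. The event shape (`offset`, `op`, `key`, `row`) is an assumption for illustration and is not the format of any particular CDC tool.

```python
class Materializer:
    """Applies ordered change events and remembers the last applied offset."""

    def __init__(self) -> None:
        self.table: dict[int, dict] = {}
        self.checkpoint = -1          # offset of the last event applied

    def apply(self, event: dict) -> bool:
        if event["offset"] <= self.checkpoint:
            return False              # already applied: a replay after a retry
        if event["op"] == "delete":
            self.table.pop(event["key"], None)
        else:                         # "insert" and "update" are both upserts
            self.table[event["key"]] = event["row"]
        self.checkpoint = event["offset"]
        return True


events = [
    {"offset": 0, "op": "insert", "key": 1, "row": {"status": "new"}},
    {"offset": 1, "op": "update", "key": 1, "row": {"status": "paid"}},
    {"offset": 2, "op": "insert", "key": 2, "row": {"status": "new"}},
    {"offset": 3, "op": "delete", "key": 2, "row": None},
]

sink = Materializer()
for event in events:
    sink.apply(event)

# Simulate a reconnect that redelivers the last two events.
replayed = [sink.apply(event) for event in events[2:]]
```

In a real destination the table write and the checkpoint update need to commit together; otherwise a crash between the two reintroduces the gap or duplicate the checkpoint was meant to prevent.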

Real-world example: Connect&Go reduced latency from 45 minutes to 15 seconds after replacing batch-based ELT with Estuary, giving attraction operators near-real-time visibility for museums, amusement parks, and festivals.

Prevention tip:

Real-time should be a business requirement, not a default setting. Use it where freshness changes the outcome, and use batch or micro-batch where it does not.

13. Schema Drift and Breaking Source Changes

Source systems change over time. New fields are added, columns are renamed, data types change, APIs update, and nested structures evolve. These changes can break pipelines or silently produce incorrect downstream data.

How to solve it:

- Use schema-aware integration tools that detect and adapt to source changes rather than failing silently.

- Set up alerts when new columns are added, types change, or fields are removed.

- Version important datasets and maintain data contracts between producers and consumers.

- Test downstream models, dashboards, and transformations when source schemas change.

- Use tools that support schema evolution rather than requiring manual intervention for every upstream change.

- Document expected schemas and flag deviations as part of pipeline monitoring.
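
Flagging deviations from an expected schema does not require heavy tooling to get started. The sketch below compares a documented schema against one inferred from a sample of rows and reports added, removed, and retyped fields. The expected schema, the sample row, and the type-name convention are all hypothetical.

```python
EXPECTED_SCHEMA = {"id": "int", "email": "str", "created_at": "str"}


def observed_schema(rows: list[dict]) -> dict[str, str]:
    """Infer field -> type name from a sample of rows (first value seen wins)."""
    schema: dict[str, str] = {}
    for row in rows:
        for field, value in row.items():
            schema.setdefault(field, type(value).__name__)
    return schema


def diff_schema(
    expected: dict[str, str], observed: dict[str, str]
) -> dict[str, list[str]]:
    """Report fields that were added, removed, or changed type."""
    return {
        "added": sorted(observed.keys() - expected.keys()),
        "removed": sorted(expected.keys() - observed.keys()),
        "type_changed": sorted(
            f for f in expected.keys() & observed.keys() if expected[f] != observed[f]
        ),
    }


# A renamed column shows up as one removal plus one addition.
sample = [{"id": "1001", "email_address": "ana@example.com", "created_at": "2024-03-15"}]
drift = diff_schema(EXPECTED_SCHEMA, observed_schema(sample))
```

A non-empty diff can feed the same alerting path as pipeline failures, so a renamed column is noticed when it happens rather than when a dashboard looks wrong.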

Real-world context: Schema drift is one of the most common reasons teams move away from custom-built pipelines to managed platforms. A single renamed column in a source database can silently corrupt weeks of downstream data if the pipeline has no schema validation or alerting built in.

Prevention tip:

Plan for schema change from the beginning. Treat source systems as external dependencies that will change, not stable contracts that will stay fixed.

How These Challenges Show Up in Practice

These challenges also appear consistently in practitioner communities. Data engineers frequently discuss schema drift, pipeline failures, data quality issues, Airflow and ETL maintenance, and the tradeoffs between custom pipelines and managed tools.

Stack Overflow threads show engineers troubleshooting schema drift in data pipelines, including cases where incoming files have changing columns or dynamic schemas. One such example involves Azure Data Factory, but the underlying problem is tool-agnostic: pipelines break when source schemas change without warning.

Hacker News discussions around Airflow and ETL highlight recurring frustrations: pipelines that are hard to debug when they fail, orchestration complexity that grows as teams add more jobs, and the ongoing maintenance burden of keeping batch workflows running reliably in cloud environments.

A Reddit r/dataengineering discussion on data quality challenges shows practitioners asking which quality issues teams face most often in day-to-day work. The answers line up with the challenges covered in this guide: poor data quality, duplicate records, and inconsistent definitions.

These are not edge cases. They are the day-to-day reality for data engineering teams working at production scale, and they are exactly the problems that well-designed integration platforms and practices are built to reduce.

How Estuary Helps Solve Data Integration Challenges

Estuary helps teams reduce common data integration problems by combining real-time CDC, batch backfills, schema-aware pipelines, and many-to-many data movement in one platform.

Where Estuary helps most:

- Large data volumes: Capture changes incrementally instead of repeatedly copying full datasets.

- Real-time complexity: Use CDC to keep warehouses, lakes, applications, and event streams updated with lower operational overhead.

- Schema drift: Detect and handle source schema changes so pipelines are less likely to fail silently.

- Duplicate records and reliability: Use checkpointing, recovery, and consistent materialization patterns to reduce duplicate or missing data.

- Infrastructure overhead: Avoid managing Kafka, Debezium, custom scripts, and one-off connector infrastructure for common CDC and integration workloads.

- Hybrid and private requirements: Use Estuary Cloud, BYOC, private deployment, or self-hosted options depending on security and compliance needs.

Estuary is not a replacement for governance, data quality ownership, or shared business definitions. It fits into the stack as the data movement layer that helps keep downstream warehouses, lakes, applications, and AI workflows supplied with current, reliable data.

Conclusion

Data integration challenges do not disappear with a single tool purchase. They require the right architecture, consistent governance, ongoing monitoring, and pipelines that can handle schema changes, scale, failures, and changing business requirements.

The 13 challenges covered in this guide are addressable. Most are cheaper to prevent during pipeline design than to fix after they reach production.

Estuary helps teams reduce integration complexity with real-time CDC, batch backfills, schema-aware pipelines, and many-to-many data movement across modern data stacks.

Start building with Estuary for free or talk to our team about your use case.

About the author

Jeffrey is a data engineering professional with over 15 years of experience, helping early-stage data companies scale by combining technical expertise with growth-focused strategies. His writing shares practical insights on data systems and efficient scaling.