The quick answer:

The best Debezium alternatives in 2026 depend on your deployment model and operational capacity:

- For a fully managed CDC platform with exactly-once delivery: Estuary.

- For managed Debezium with deeper Kafka integration: Confluent Cloud.

- For enterprise on-prem and cloud CDC with exactly-once: Striim.

- For pure open-source self-hosted CDC without Kafka: Apache NiFi (limited scale).

Most teams looking for a Debezium alternative want the same log-based CDC capability without operating Kafka, ZooKeeper, and Kafka Connect themselves.

Debezium is a commonly used CDC solution, but it requires consistent management, which is why you may want to explore alternatives. We cover several of them, both open-source and paid, including managed CDC platforms, open-source options, and on-prem-focused tools. Estuary is one of these; we explain how Estuary's architecture addresses Debezium's specific pain points in the section below.

What is Change Data Capture?

Change Data Capture (CDC) is the process of capturing every new event from a source system: any insert, update, or other modification to the source database that ultimately needs to be reflected in a downstream application.

Data engineers build CDC pipelines so that downstream systems do not drift out of sync with the source. This matters most to teams working in eCommerce, fraud, logistics, and financial services.

Implementation options range from simply querying the database on a periodic basis (query-based CDC), to complex implementations that stream database changes in real-time.
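To make the simple end of that range concrete, here is a minimal sketch of query-based CDC. It assumes a hypothetical `orders` table with an `updated_at` column that the application bumps on every write, and it uses an in-memory SQLite database purely so the example is self-contained.

```python
import sqlite3

def poll_changes(conn, last_seen):
    """Return rows modified after `last_seen`, plus the new high-water mark."""
    rows = conn.execute(
        "SELECT id, status, updated_at FROM orders "
        "WHERE updated_at > ? ORDER BY updated_at",
        (last_seen,),
    ).fetchall()
    new_mark = rows[-1][2] if rows else last_seen
    return rows, new_mark

# Hypothetical source table; `updated_at` is a plain integer clock here.
conn = sqlite3.connect(":memory:")
conn.execute(
    "CREATE TABLE orders (id INTEGER PRIMARY KEY, status TEXT, updated_at INTEGER)"
)
conn.execute("INSERT INTO orders VALUES (1, 'new', 100), (2, 'new', 101)")

first_batch, mark = poll_changes(conn, 0)

# An update is picked up by the next poll; a delete leaves no row to find.
conn.execute("UPDATE orders SET status = 'shipped', updated_at = 102 WHERE id = 1")
conn.execute("DELETE FROM orders WHERE id = 2")

second_batch, mark = poll_changes(conn, mark)
print(first_batch)
print(second_batch)
```

Note that the second poll sees the update to order 1 but has no way to notice that order 2 was deleted. That blind spot, together with the load that frequent polling puts on the source, is a large part of why log-based CDC exists.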

What is Debezium?

Debezium is an open-source distributed framework for creating streaming change data capture pipelines.

Debezium is most often used with the Apache Kafka streaming framework (though Kafka is not a hard requirement). It enables you to abstract away much of the coding work that would otherwise be required to connect, say, changes in a Postgres database to Kafka.

For most database connectors (Postgres, MySQL, MongoDB), events are read from the database's write-ahead or transaction log into Debezium, which then pushes them downstream into Kafka in real time and in the exact order in which they were generated. For some others (SQL Server), Debezium is ‘polling’ the database.
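For a sense of what "connecting Postgres to Kafka" looks like in practice, the sketch below builds the kind of JSON document that is registered with Kafka Connect (typically via a POST to its `/connectors` REST endpoint). The property names follow the Debezium 2.x PostgreSQL connector documentation as we understand it (older releases used `database.server.name` where 2.x uses `topic.prefix`), so check them against the version you run. The connector name, host, credentials, database, and table are all placeholders.

```python
import json

# Hypothetical connector registration; every value below is a placeholder.
connector = {
    "name": "inventory-connector",
    "config": {
        "connector.class": "io.debezium.connector.postgresql.PostgresConnector",
        "plugin.name": "pgoutput",            # logical decoding plug-in
        "database.hostname": "postgres.internal.example",
        "database.port": "5432",
        "database.user": "cdc_user",
        "database.password": "change-me",
        "database.dbname": "inventory",
        "topic.prefix": "inventory",          # prefix for the Kafka topic names
        "table.include.list": "public.orders",
    },
}

payload = json.dumps(connector, indent=2)
print(payload)
```

The configuration itself is short. The operational work discussed in the rest of this article comes from running and scaling the Kafka Connect cluster that executes it.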

Debezium is a popular project with more than 8K GitHub stars, and users' experiences with it are documented extensively online.

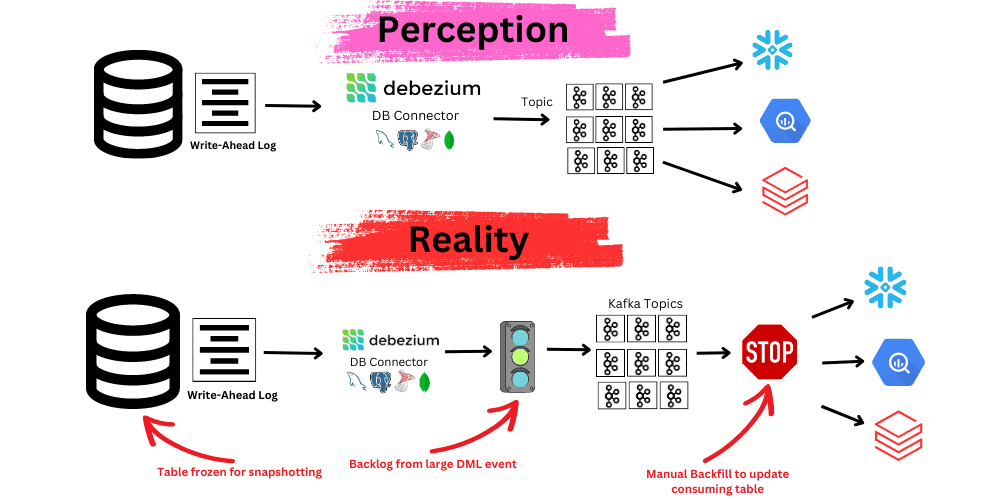

The Challenges with Debezium

Debezium is a popular open-source choice for streaming change data from databases like PostgreSQL, MySQL, and MongoDB into a Kafka topic, and on to downstream consumers. It was one of the first open-source CDC frameworks to gain popularity, and thousands of data teams large and small still choose Debezium and contribute to the project.

But Debezium is also a project with many complications that will require considerable time and effort to overcome when working with it at scale, namely:

1. Debezium and associated packages require specific expertise. Data teams will need to become intimately familiar with Kafka, Kafka Connect, and ZooKeeper to begin standing up CDC pipelines. ZooKeeper itself is fairly heavy and requires a large tech stack. Needless to say, teams will have to be highly proficient in Java to manage the implementation.

2. Continuous DevOps. It takes continuous work to provision the appropriate amount of CPU resources so data doesn’t get throttled while moving into a Kafka Topic. If data is throttled and the Kafka Topic retention is not set long enough, data may be lost.

3. When working with large tables, performance, availability, and data loss become issues.

There are a few problems that can arise when trying to scale Debezium:

- When large DML operations occur, it can take many hours to finish the operation. (“Large” here meaning “affecting tens of millions of rows,” which is more common than it might seem).

- Snapshotting is incremental and without concurrency. Tables with tens of millions of rows will take hours to snapshot. Snapshotting also locks a table from access until complete.

- The Postgres connector can manage 7,000 change events per second. You can get to a higher scale and avoid backlogging using partitioning, but that requires you to create multiple connectors for the same table and then re-join all the data again. If the load is unbalanced between tables, one table may have to wait to be processed until the first larger operation finishes.

- It takes time to spin up or turn down a new connector as there is no ‘hot standby’ in Kafka Connect.

- Logical decoding plug-ins like wal2json can run out of memory.

4. Debezium provides at-least-once delivery rather than exactly-once. You’ll likely have to implement deduplication at the consumer level so that duplicates in the log do not reach downstream systems.

5. Complex schema changes and migrations are manual. Debezium will propagate row-level changes into the Kafka topic (provided it isn’t currently snapshotting), but more complex schema changes will have to be manually managed.

For example:

- If the primary key is changed, Debezium will need to be stopped and the database will need to be put into read-only mode and then turned back on.

- If the DDL is modified in Postgres, downstream consumers will not be notified of the change.

6. Backfills are manual. If you spin up a new consumer that needs both historical and real-time change events, you will have to trigger backfills manually, unless the data is retained in the Kafka log forever (which is cost-inefficient) or the topic can be compacted sufficiently.

7. Transformations are limited. Debezium and Kafka enable single message transforms. This is powerful for formatting a message (for example, to modify a timestamp or change type) as it flows to the topic and some other scenarios. However, the limitation is in the name; the transform only applies to the single message as it flows to the topic. For more complex transformations like streaming joins, splitting a message, or applying a transformation against the whole dataset, a secondary system like Flink will be needed.
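To put the throughput figure from item 3 in perspective, here is a back-of-the-envelope calculation. It assumes one change event per affected row and a single connector sustaining the quoted 7,000 events per second the whole time; real workloads will vary, so treat the result as an order-of-magnitude estimate rather than a benchmark.

```python
def backlog_hours(rows_changed, events_per_second=7_000):
    """Hours needed to drain `rows_changed` events at a steady rate."""
    return rows_changed / events_per_second / 3600

for rows in (10_000_000, 50_000_000, 100_000_000):
    print(f"{rows:>11,} rows -> {backlog_hours(rows):.1f} h")
```

Under those assumptions, a single DML statement that touches 50 million rows keeps one connector busy for roughly two hours, and every change that arrives in the meantime queues up behind it.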

If none of the above fazes you, and the team has the time and resources to take on the challenges above, great! Just note that for teams working with very high-volume datasets, it’s not uncommon for companies like Netflix, Robinhood, and WePay to allocate 4-6 full-time data professionals just to deal with Debezium.

All hope is not lost of course, and we don’t want to point out problems without pointing out some alternatives. So we won’t stay in this sphere of negativity.

There are many alternatives to Debezium for real-time replication of change events from a database to the cloud warehouse or beyond. Choices range across open-source and third-party vendors.

Alternatives to Debezium

The table below is a high-level round-up of popular, real-time CDC tools that are alternatives to Debezium. Before you dive in, a few notes and caveats:

- We can’t be experts on every solution, and we don’t claim to be! So while the table doesn’t go into great technical detail, it does cover major characteristics of each Debezium alternative.

- There are more solutions than listed here. For simplicity, we omitted tools that:

- Are purely enterprise-focused, such as Qlik, Talend, and GoldenGate.

- Are native and confined to a specific cloud ecosystem, such as Amazon DMS and Google Datastream.

- In general, lack self-serve developer access.

| Solution | Open-Source / Paid | Company Summary | Advantages / Disadvantages |
| --- | --- | --- | --- |
| Estuary | Hybrid | Open-source connectors and fully managed no-code connectors for real-time CDC. | +Fully managed no-code or open-source +In-flight SQL transforms +Exactly-Once delivery -No on-prem |
| Confluent Cloud | Hybrid | Company created by founders of Apache Kafka offering fully-managed Debezium. Provide a no-code UI with sinks and sources for Kafka. | +Fully managed no-code +On-cloud or on-prem -Requires Kafka usage |
| Striim | Paid | Fully-managed no-code connectors for both on-prem and cloud | +Fully managed no-code -Requires windowing on joins -Expensive |
| Apache NiFi | Open-Source | Open-source real-time replication project with drivers for Postgres and MySQL | +UI +Open-source -Not widely used -Limited scalability |

Estuary as a Debezium Alternative

Allow us a quick introduction before getting into the nitty-gritty details of how we fully manage a streaming CDC implementation and, in our humble view, make up for some of the inherent shortcomings of Debezium that require intervention.

Estuary is a right-time data integration platform for building and transforming streaming data pipelines. We offer open-source as well as fully managed connectors so you can ingest data in real time.

Estuary writes its own open-source connectors for each database. These connectors use the same mechanism as Debezium (reading from the write-ahead or transaction log) to avoid hammering the production database. Estuary is built on top of the open-source Gazette distributed pub-sub streaming framework. Data flows from the write-ahead log connector and is ingested into a real-time data lake of JSON files that store both batch and real-time data.

Estuary vs. Debezium

Estuary provides both open-source connectors and a no-code platform for creating cloud-based CDC pipelines.

As a fully managed solution, there is no need for Kafka or managing compute resources. (Note: While it's possible to use Debezium without Kafka, for example, by using Debezium with Kinesis, this is relatively uncommon. By building our CDC on top of Gazette instead of Kafka, we are able to more easily enable more powerful transformations and backfilling while retaining low latency.)

Estuary can also be self-hosted for those willing to take on a bit more management. At a more nuanced technical design level, here is how we implemented CDC to work around some of Debezium’s pitfalls.

- Exactly-Once Delivery

Debezium provides at-least-once delivery, which means downstream consumers can receive the same change event multiple times. Estuary provides exactly-once delivery, so deduplication is not needed at the destination.

Estuary connectors run data through the Gazette streaming framework. A benefit of using this framework is that it guarantees exactly-once delivery by de-duplicating messages stored in the real-time data lake of JSON files. The method is described in-depth here.

At a high level, each change event has a UUID, timestamp, and clock sequence. Estuary de-duplicates using a combination of these properties to track the largest clock seen for a given producer. Messages that are read with smaller clocks are presumed to be duplicates and are discarded.
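The clock-tracking idea can be sketched in a few lines. This is an illustration of the general technique only, not Gazette's actual implementation: the `Message` and `Deduplicator` names are hypothetical, the clock is simplified to a single integer per producer, and a message whose clock equals the largest one already seen is also treated as a duplicate (an assumption on our part).

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class Message:
    producer: str  # identifies the writer that produced the message
    clock: int     # increases with every message from that producer
    payload: str

class Deduplicator:
    """Tracks the largest clock seen per producer and drops anything older."""

    def __init__(self):
        self._max_clock = {}

    def accept(self, msg):
        seen = self._max_clock.get(msg.producer)
        if seen is not None and msg.clock <= seen:
            return False  # presumed duplicate (assumption: equal clocks too)
        self._max_clock[msg.producer] = msg.clock
        return True

stream = [
    Message("a", 1, "insert row 1"),
    Message("a", 2, "update row 1"),
    Message("a", 2, "update row 1"),  # immediate redelivery
    Message("b", 1, "insert row 9"),
    Message("a", 1, "insert row 1"),  # late redelivery
    Message("a", 3, "delete row 1"),
]

dedup = Deduplicator()
delivered = [m.payload for m in stream if dedup.accept(m)]
print(delivered)
```

The state kept per producer is a single number, so the check stays cheap no matter how many messages have already passed through.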

- Scaling large changes

Debezium's main scaling pain points are memory exhaustion during large DML operations and hour-long snapshots that lock tables. The underlying challenge at scale is that the database can run out of memory while data is being pulled from the database log. Estuary handles this differently: its database connectors acknowledge data that has already been consumed, which avoids the DML issues when working with millions of rows.

- Schema Changes

Debezium propagates simple row-level changes automatically but requires manual intervention for primary key changes, DDL modifications, and other schema evolution. Estuary handles schema changes as a platform feature. Every collection in Estuary has a JSON Schema that defines its structure and constraints. Estuary validates every document on both read and write, ensuring clean, consistent data.

Schemas are automatically generated during capture, and Estuary uses continuous schema inference to adapt as upstream structures change. You can also define separate write and read schemas — permissive for ingest, strict for downstream use — giving you control over how data evolves. For complex changes like keys or partitions, Estuary provides tools such as backfill and autoDiscover to keep pipelines consistent without manual rework.
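As a rough illustration of what schema inference means, the sketch below widens a JSON-Schema-style description as new documents arrive. It is a toy written for this article, not Estuary's inference engine: the `widen` and `json_type` helpers are hypothetical, only top-level fields are inspected, and nothing is ever narrowed or removed.

```python
def json_type(value):
    """Map a Python value to its JSON Schema type name."""
    if value is None:
        return "null"
    if isinstance(value, bool):  # check bool first: bool is a subclass of int
        return "boolean"
    if isinstance(value, int):
        return "integer"
    if isinstance(value, float):
        return "number"
    if isinstance(value, str):
        return "string"
    if isinstance(value, list):
        return "array"
    return "object"

def widen(schema, doc):
    """Add any field or type seen in `doc` that the schema does not yet allow."""
    props = schema.setdefault("properties", {})
    for field, value in doc.items():
        types = props.setdefault(field, {"type": []})["type"]
        observed = json_type(value)
        if observed not in types:
            types.append(observed)
    return schema

schema = {"type": "object"}
for doc in [
    {"id": 1, "email": "a@example.com"},
    {"id": 2, "email": None},                           # email becomes nullable
    {"id": 3, "email": "c@example.com", "plan": "pro"}, # new column appears
]:
    widen(schema, doc)

print(schema)
```

A permissive schema like this one suits the write side, where nothing should be rejected. A stricter read schema can then be layered on top for downstream consumers.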

- Backfills

Debezium backfills require manually replaying the Kafka log from a chosen point in time, which depends on log retention settings. Estuary backfills run automatically against the underlying data lake. Because Estuary is built on the Gazette streaming framework, all data is stored as a real-time data lake of JSON files that have already been reduced and de-duplicated, so backfilling a given consumer from a point in time is complete by default and has exactly-once semantics.
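The reason a durable, already de-duplicated log makes backfills simple can be shown with a toy model. The `Collection` and `Consumer` classes below are hypothetical stand-ins invented for this sketch (an in-memory list plays the role of cloud storage): a consumer that joins late starts at offset zero, reads the full history, and then keeps reading new documents from where it left off.

```python
class Collection:
    """Append-only log; stands in for a durable, de-duplicated collection."""

    def __init__(self):
        self._log = []

    def append(self, doc):
        self._log.append(doc)

    def read_from(self, offset):
        """Return documents at or after `offset`, plus the next offset."""
        return self._log[offset:], len(self._log)

class Consumer:
    """Remembers its offset, so each document is handed over once."""

    def __init__(self, collection):
        self.collection = collection
        self.offset = 0

    def poll(self):
        docs, self.offset = self.collection.read_from(self.offset)
        return docs

orders = Collection()
orders.append({"id": 1, "status": "new"})
orders.append({"id": 1, "status": "shipped"})

late_consumer = Consumer(orders)  # created after the data was written
history = late_consumer.poll()    # backfill: the full history

orders.append({"id": 2, "status": "new"})
live = late_consumer.poll()       # then only what is new
print(history, live)
```

History and live data come from the same log through the same read path, so there is no separate replay step to trigger.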

- Transforms

Debezium's Single Message Transforms (SMTs) can only modify one message at a time. For streaming joins, message splitting, or whole-dataset transformations, Debezium requires a secondary system like Flink. Estuary supports stateful, in-flight SQL transformations natively: any real-time or historical data ingested into an Estuary collection (the real-time data lake in cloud storage) can be statefully joined or transformed in-flight with SQL. Again, this is a function of having a Kappa architecture in which derivations can be run cheaply over all of the data stored in the cloud.
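The difference between the two kinds of transform is easiest to see side by side. The sketch below is plain Python written for illustration, not Kafka Connect's SMT API or Estuary's SQL syntax, and the event shapes and names are made up. The first function needs nothing but the message in front of it; the join has to remember earlier messages.

```python
def mask_email(event):
    """Stateless, SMT-style: the output depends on this one message only."""
    return {**event, "email": "***"}

class OrderCustomerJoin:
    """Stateful: keeps the latest customer rows so later orders can be enriched."""

    def __init__(self):
        self._customers = {}

    def on_customer(self, event):
        self._customers[event["id"]] = event

    def on_order(self, event):
        customer = self._customers.get(event["customer_id"], {})
        return {**event, "customer_name": customer.get("name")}

customer = {"id": 7, "name": "Ada", "email": "ada@example.com"}
masked = mask_email(customer)

join = OrderCustomerJoin()
join.on_customer(customer)
enriched = join.on_order({"id": 100, "customer_id": 7, "total": 42})
print(masked)
print(enriched)
```

The state inside `OrderCustomerJoin` is exactly what a single message transform has nowhere to keep, which is why joins end up in a secondary system when they are built on SMTs.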

Your next step in streaming Change Data Capture

As noted above, you have many choices across paid and open-source for capturing change data with low latency. Beyond the options covered here, for smaller databases with less strict latency requirements, you could still explore high-frequency query-based or trigger-based CDC. Keep in mind that most batch software can only get down to five-minute syncs, which is hardly ‘streaming’.

We welcome you to use the Estuary platform, which is free up to 10 GB/month, for creating streaming change data capture pipelines from sources like Postgres, MongoDB, MySQL, Salesforce, and more to destinations like Snowflake, S3, Redshift, BigQuery, and more!
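For readers who have not seen trigger-based CDC, here is a minimal sketch using SQLite so that it runs anywhere. The `orders` table, the `orders_changes` table, and the trigger names are hypothetical; a production database would use its own trigger dialect, and the pattern adds write overhead to every transaction on the source table.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.executescript("""
CREATE TABLE orders (id INTEGER PRIMARY KEY, status TEXT);

-- Every change to `orders` is copied into this table by the triggers below.
CREATE TABLE orders_changes (
    seq    INTEGER PRIMARY KEY AUTOINCREMENT,
    op     TEXT,
    id     INTEGER,
    status TEXT
);

CREATE TRIGGER orders_ins AFTER INSERT ON orders BEGIN
    INSERT INTO orders_changes (op, id, status)
    VALUES ('insert', NEW.id, NEW.status);
END;

CREATE TRIGGER orders_upd AFTER UPDATE ON orders BEGIN
    INSERT INTO orders_changes (op, id, status)
    VALUES ('update', NEW.id, NEW.status);
END;

CREATE TRIGGER orders_del AFTER DELETE ON orders BEGIN
    INSERT INTO orders_changes (op, id, status)
    VALUES ('delete', OLD.id, OLD.status);
END;
""")

conn.execute("INSERT INTO orders VALUES (1, 'new')")
conn.execute("UPDATE orders SET status = 'shipped' WHERE id = 1")
conn.execute("DELETE FROM orders WHERE id = 1")

changes = conn.execute(
    "SELECT op, id, status FROM orders_changes ORDER BY seq"
).fetchall()
print(changes)
```

Unlike periodic querying, the change table records deletes and every intermediate state. A downstream job still has to poll `orders_changes`, though, so latency is bounded by how often that job runs.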

Getting Started!

Use these resources:

- Get started for free.

- Estuary Docs.

- Tutorial: streaming Postgres CDC to ElasticSearch through Estuary (many more tutorials are on the blog!)

FAQs

Why are teams moving off Debezium?

Is Debezium really free?

Can you use Debezium without Kafka?

What is the difference between Debezium and Estuary?

Is there a managed version of Debezium?

About the author

Jeffrey is a data engineering professional with over 15 years of experience, helping early-stage data companies scale by combining technical expertise with growth-focused strategies. His writing shares practical insights on data systems and efficient scaling.