Moving data from Postgres to Snowflake is essential for organizations leveraging Snowflake’s cloud-native analytics and AI capabilities. Whether you're optimizing data pipelines for real-time insights, machine learning, or operational reporting, selecting the right migration method is crucial.

This guide explores three proven approaches to move data from PostgreSQL to Snowflake:

- Real-time CDC & batch pipelines with Estuary (best for automation & low-latency analytics)

- Batch processing with Apache Airflow (best for scheduled data loads)

- Manual migration via CSV exports (best for one-time transfers)

We compare these options—including tools like Estuary, Apache Airflow, and Snowflake's native features—so you can choose the best method for your data integration needs.

🚀 Ready to move data seamlessly? Let’s dive in!

PostgreSQL: A Quick Overview

PostgreSQL is an open-source object-relational database management system (RDBMS). Originally called POSTGRES as the successor to Ingres, it became PostgreSQL to emphasize its focus on SQL. People love open-source Postgres because it’s proven, has an extensive feature set, and is very extensible.

Why do people love Postgres?

- Data support: It supports several built-in data types such as a character, date and time, numeric, boolean, JSON, and more.

- Extensibility: With Postgres, you can change just about anything from functions, aggregates, and data types to core modules. There are plenty of options you can choose from.

- Query optimizer: Postgres is better than most in optimizing queries so that you don’t have to spend time re-writing queries manually.

- Security: PostgreSQL provides strong security features, including user roles and privileges, SSL certificates, and encryption choices to protect the confidentiality and integrity of data.

- Superior Indexing: PostgreSQL supports numerous indexing techniques, such as B-tree, hash, GIN (Generalised Inverted Index), and GiST (Generalized Search Tree), which enable effective data retrieval and query optimization.

A pre-built connector to PostgreSQL makes replication, transformation, and data migration from Postgres to Snowflake easier. The best connectors take care of most aspects of the data migration, from translating schema to the removal of duplicate records to data type mapping for loading into Snowflake.

Snowflake: A Quick Overview

Snowflake is one of the most popular cloud data warehouses. Founded in 2012, it was one of the first cloud data warehouses with decoupled storage and compute, and consumption-based pricing. Snowflake has evolved into a broader cloud data platform that supports other compute workloads for analytics, data science, machine learning, and AI. It is the only major cloud data warehouse that is independent of, and can be hosted on Microsoft Azure, Amazon Web Services, and Google Cloud Platform.

Snowflake's architecture is structured into three decoupled multi-tenant layers that scale independently—database storage, query processing, and cloud services.

- Database storage is a proprietary columnar storage format for tables, with each table composed of micro-partitions between 50-150MB in size. Each time you write anything to Snowflake, you’re rewriting an entire micro-partition. This makes Snowflake more suited for (micro-) batch than real-time. It’s one reason why most writes happen in 1+ minute intervals. But Snowflake is becoming more real-time.

- Data type support. Snowflake supports a rich set of data types, including semi-structured data types like JSON and XML, which is important for loading data directly into Snowflake and for supporting different types of analytics and machine learning.

- Compute (query processing) consists of virtual data warehouses and compute clusters that range from x-small (1 credit/hour, 8 VCPUs) to 6-xlarge (512 credit/hour, 4096 VCPUs). They are dedicated, independent compute clusters that load data from storage into a local “cache” as needed by their consumers. This reduces the data size, making compute faster and more efficient. There are also Snowpark-optimized warehouses for general-purpose compute. Compute is kept “warm” in a shared pool for fast startup.

- Cloud services are all the control services you need to support storage and compute, including security, metadata, and infrastructure management.

Why Move Data from Postgres to Snowflake Data Warehouse?

Snowflake has been an innovator in the market with several key features that make companies choose to adopt Snowflake.

- Scalability: Snowflake has a true elastic scale. You can quickly move from one to another size cluster with a click. Snowflake allows you to automatically scale up and down additional instances of the same size warehouse (multi-cluster warehouses.)

- Data sharing: Snowflake's innovative data-sharing capabilities help in sharing live, up-to-date data in a secure and efficient manner with internal and external people.

- Zero-copy cloning: Snowflake's zero-copy cloning feature helps to create, test, and deploy data without impacting your storage or performance. It makes it easier to develop and test new features, run experiments, or troubleshoot issues.

- Ecosystem support: Snowflake's large number of integrations and compatibility help connect with various popular BI, ETL, and data visualization tools. Its cloud architecture effortlessly integrates with any of your preferred cloud providers, be it Azure, AWS, or GCS.

- Virtual private cloud: Snowflake does not let you run on your own on premises or cloud infrastructure. But you can run Virtual Private Snowflake in a separate dedicated Snowflake account that accomplishes the same goal. With Virtual Private Snowflake, you have your own dedicated pool to ensure fast scaling.

- Time travel: Snowflake not only archives data. It allows you to view and modify historical data for up to 90 days.

Set Up Postgres

Before transferring data from Postgres to Snowflake, you’ll first need some data to transfer. In this section, we’ll go over a setup example so you can follow along for this tutorial. If you already have a PostgreSQL instance, feel free to skip ahead to the methods for loading data from Postgres to Snowflake.

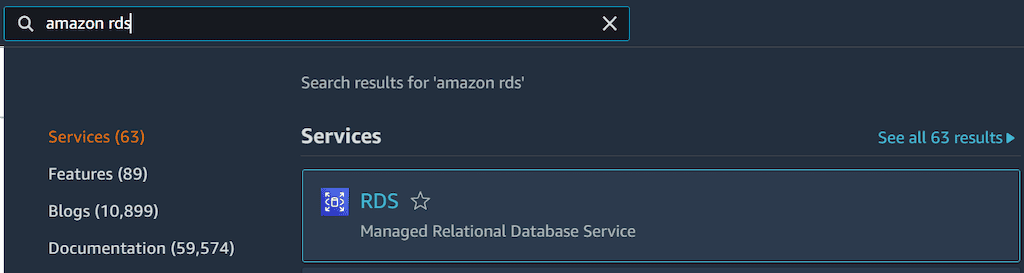

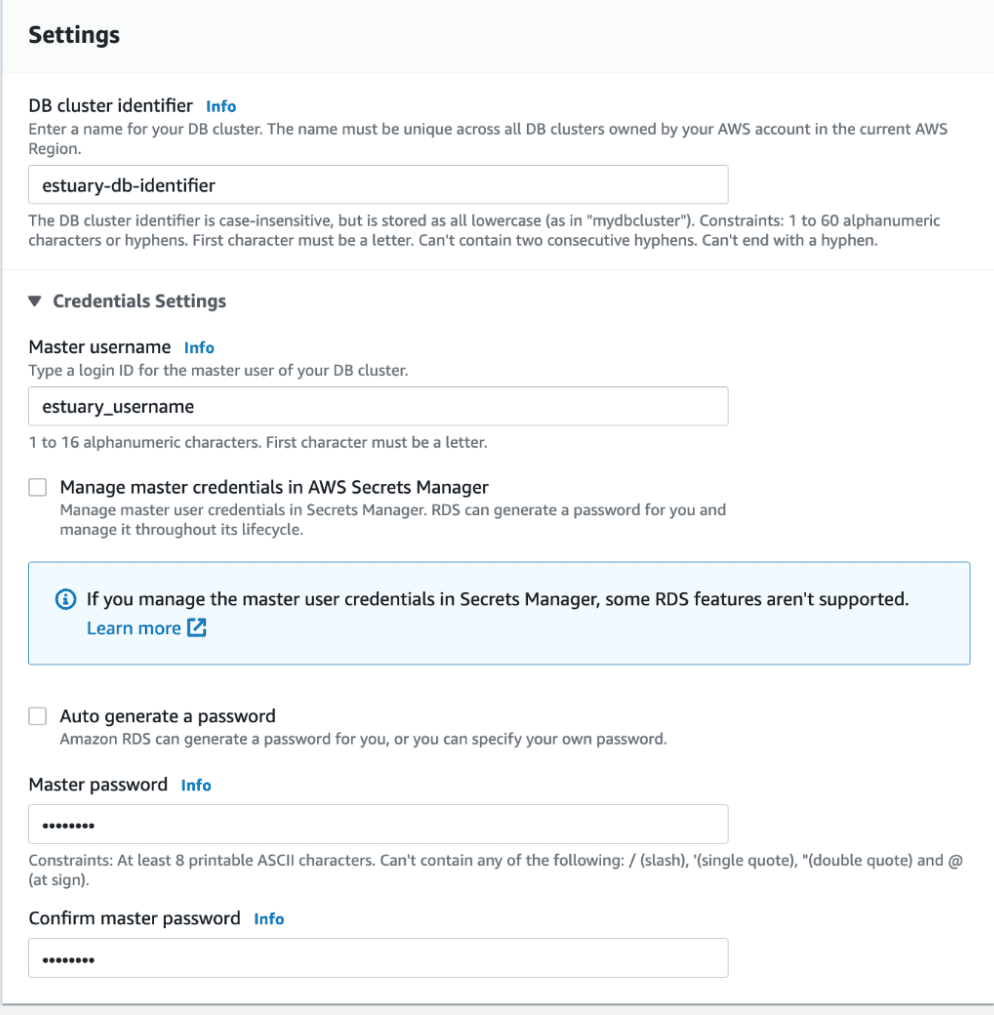

Step 1: Configure an AWS RDS for PostgreSQL

Set up a PostgreSQL instance using Amazon RDS or another cloud database service like Google Cloud SQL or Azure Database. For this guide, we’ll use Amazon RDS:

- Log in to your AWS account and search for "Amazon RDS" in the AWS search bar.

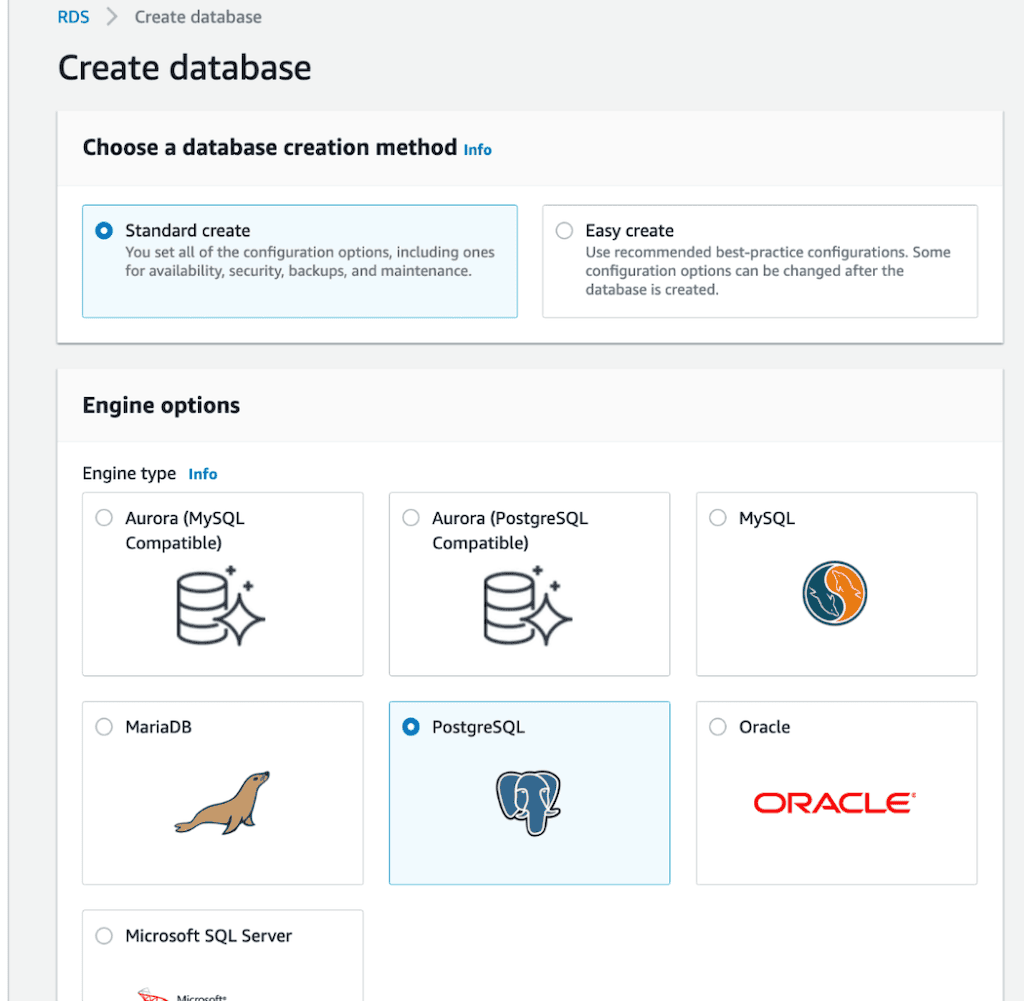

- Navigate to Databases > Create Database and select PostgreSQL as the database type.

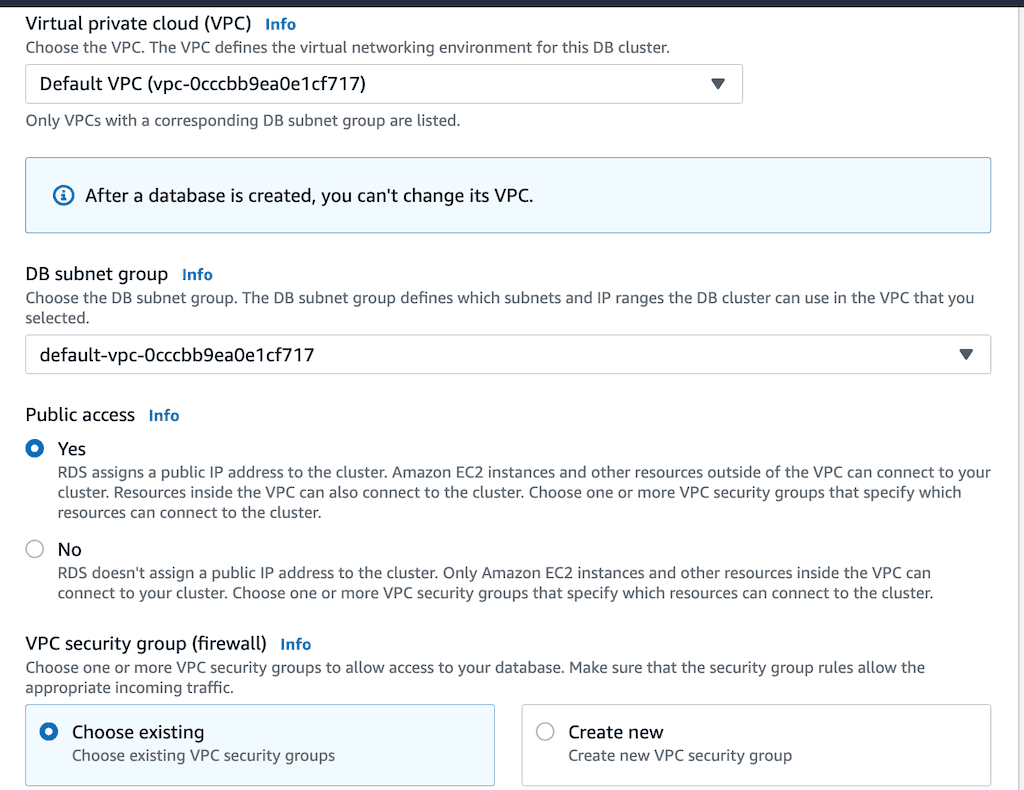

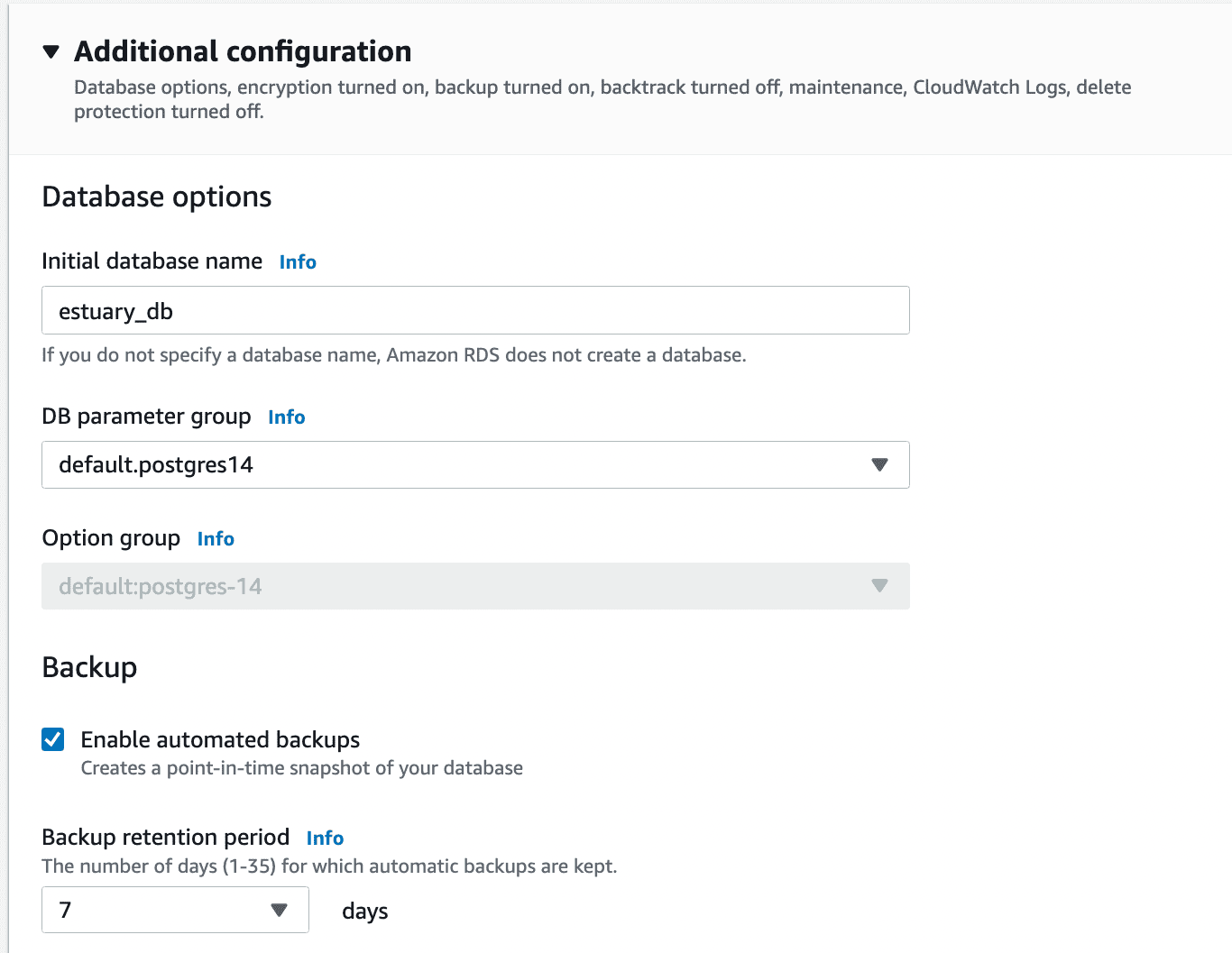

- Configure the following settings:

- Username

- Database name

- Public access: Enabled

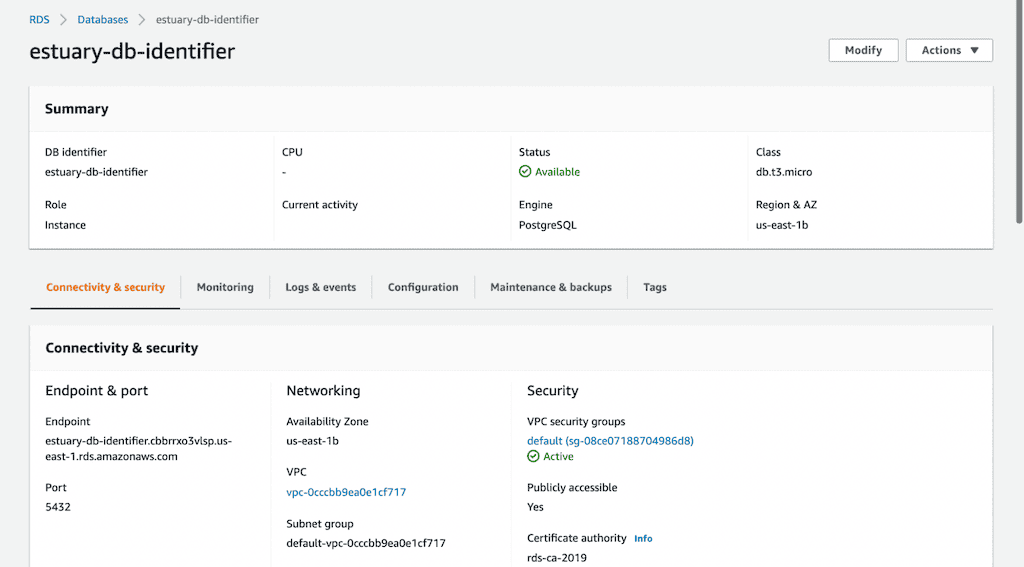

AWS will take some time to create the database. Once ready, note the endpoint for later use.

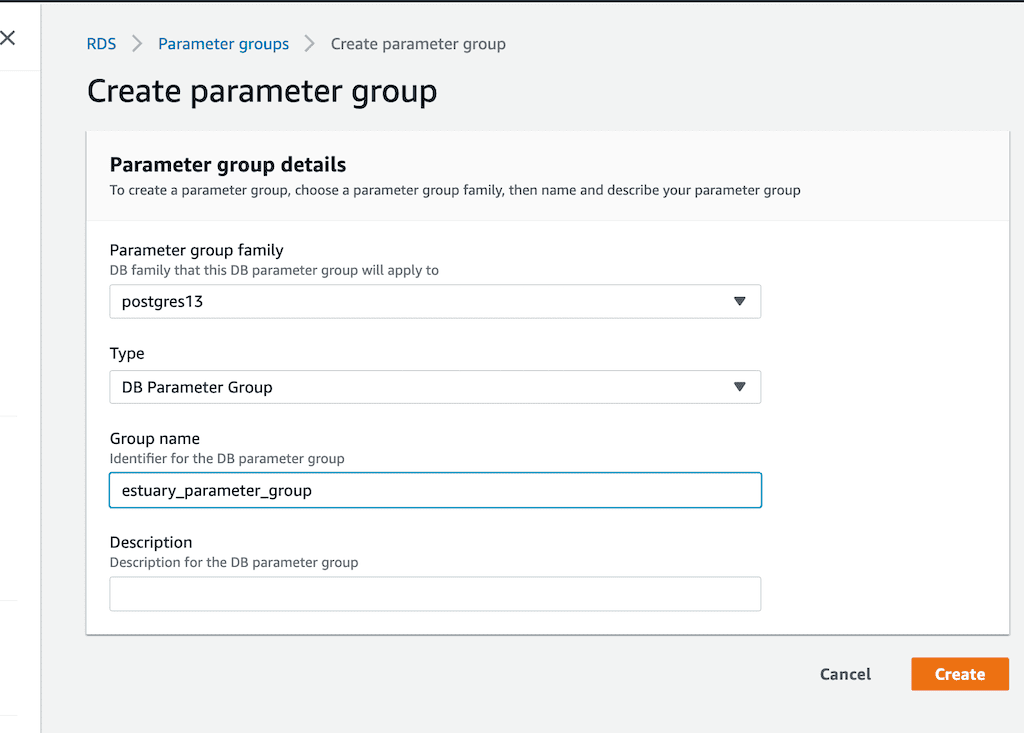

- Create a Parameter Group:

- Go to RDS > Parameter Groups and create a new group named, for example, estuary_parameter_group.

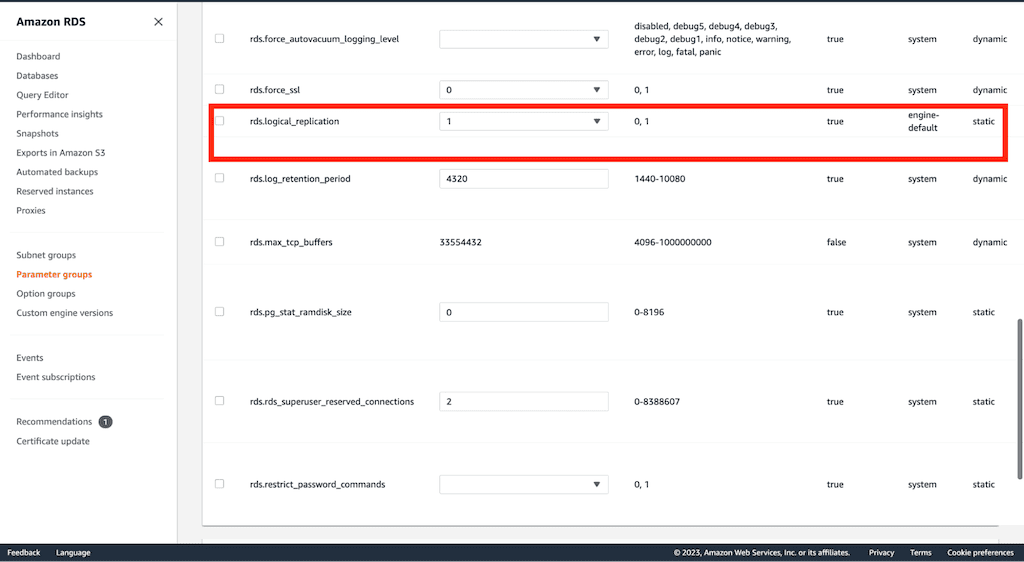

Edit this group to enable logical replication by setting rds.logical_replication=1.

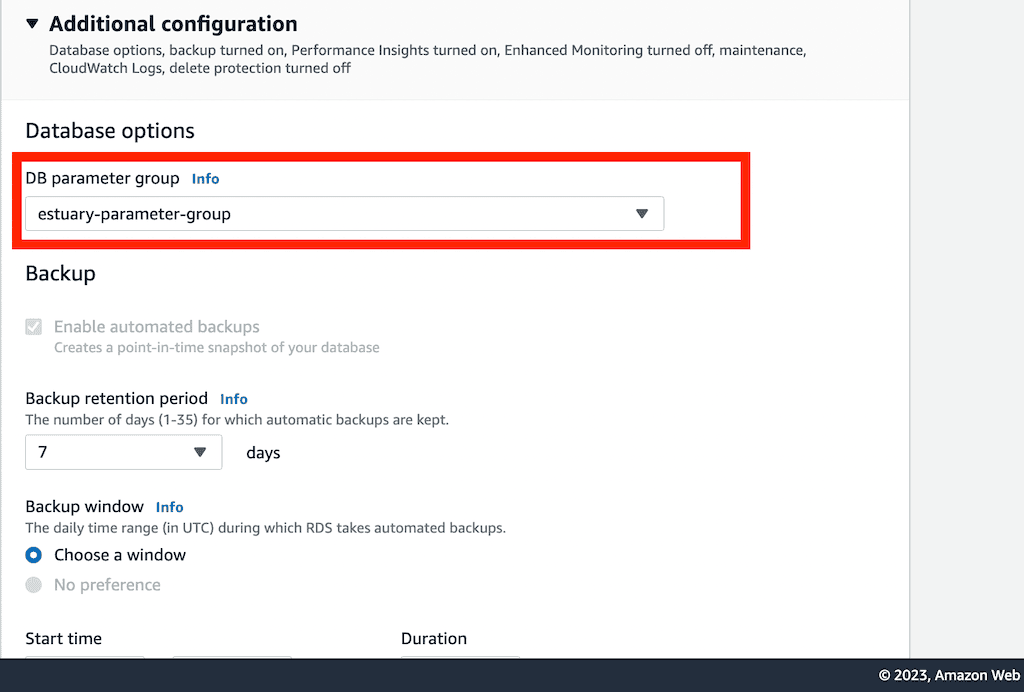

- Associate the Parameter Group with your database:

- Navigate to RDS > Databases > Modify, and change the DB parameter group to estuary_parameter_group.

- Reboot the database to apply changes.

rds.logical_replication for CDC.Step 2: Set Up a Local Postgres Client

To finalize your PostgreSQL setup, install and configure a local Postgres client:

- Download the client from the official PostgreSQL website.

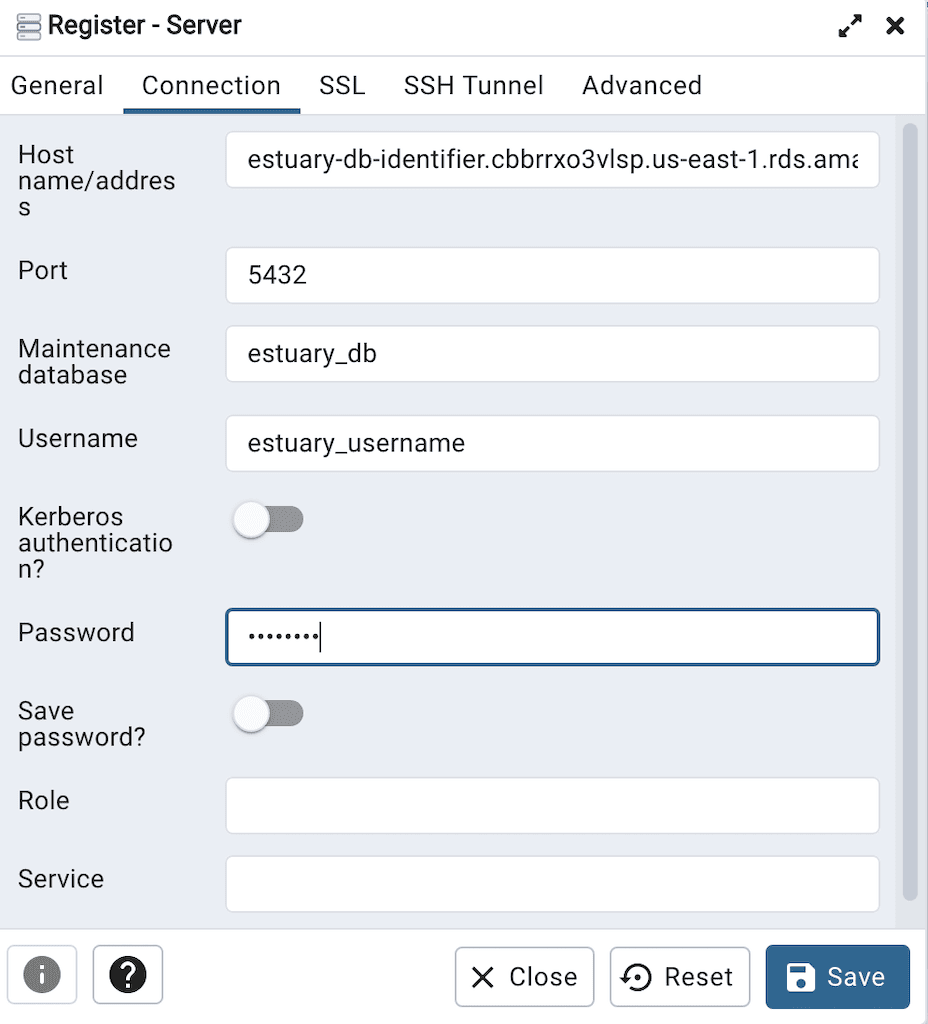

- Open the Postgres client and register a new server.

- Enter the following details from your Amazon RDS instance:

- Hostname

- Port number

- Username

- Database name

- Password

These are the same parameters you configured when you created your remote Amazon RDS database instance. When all the parameters have been entered, you should be able to successfully connect your local Postgres client to the remote Postgres database instance.

Step 3: Create a Table and Populate Data

Prepare a sample table and populate it with data:

- Create a schema and table in your database by running the following SQL:

plaintextcreate schema iot_schema;

create table iot_schema.iot_table(

id varchar(100) PRIMARY KEY,

room_id varchar(100),

noted_date varchar(100),

temp integer,

"out/in" varchar(50)

);- Populate the table with sample data:

plaintext\COPY iot_schema.iot_table FROM '/path-to-file/IOT-temp.csv' WITH (FORMAT CSV, HEADER TRUE, DELIMITER ',');- After executing this command, the data will be successfully loaded into your Postgres table. You can confirm by extracting the first ten records.

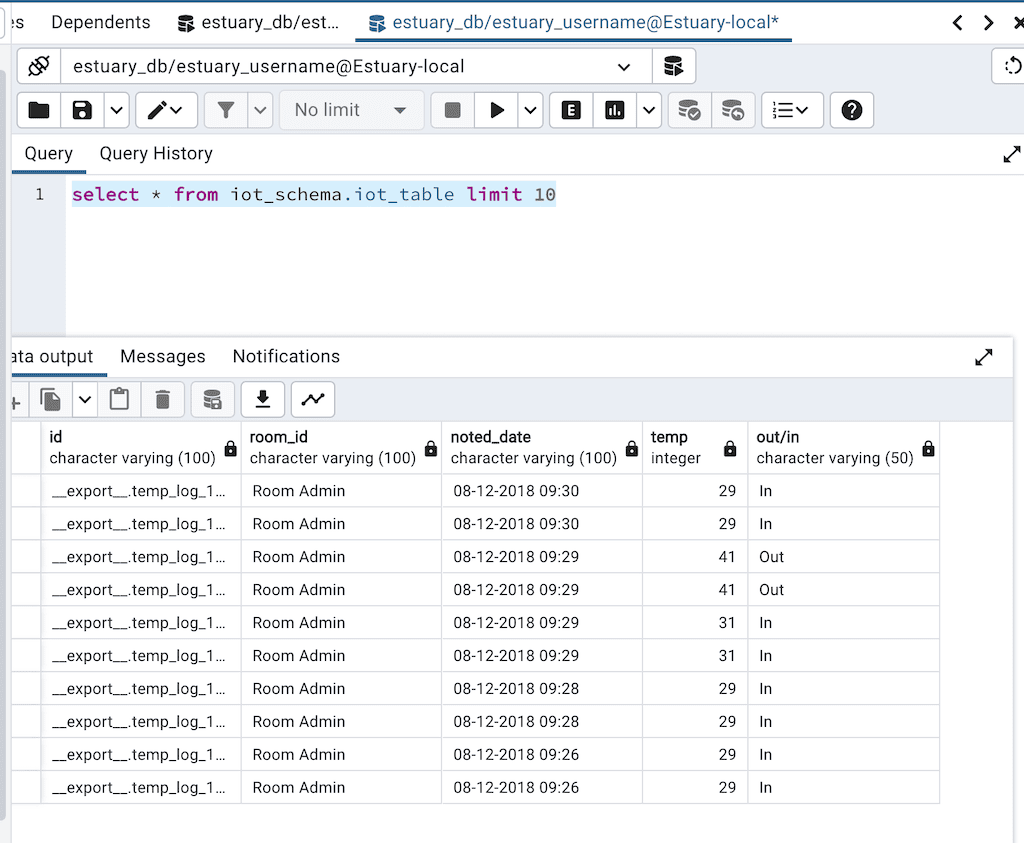

plaintextselect * from iot_schema.iot_table limit 10;The output of this query is shown in the image below.

iot_schema.iot_table table.You can also get the total records in the table by executing the command:

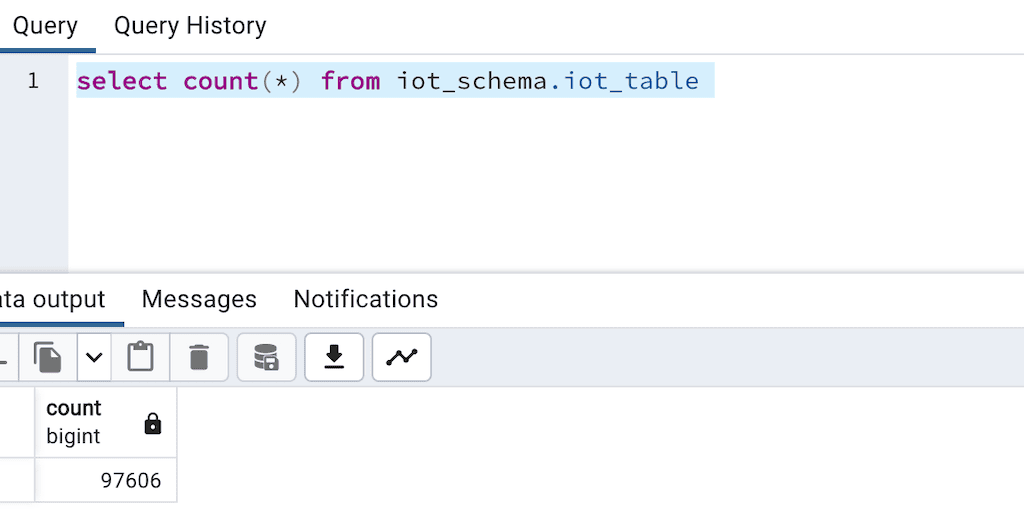

plaintextselect count(*) from iot_schema.iot_table iot_schema.iot_table table.The table should now contain your full sample dataset. You’re ready to stream this data to Snowflake!

3 Methods to Load Data from Postgres to Snowflake

You have a few options to load data from Postgres to Snowflake, from completely manual approaches or scripts to using pre-built connectors and data integration products.

- Method 1: Using Estuary (Recommended)

- Method 2: Use Apache Airflow for batch orchestration

- Method 3: Manual Method

Method 1: Steps to Move Data from Postgres to Snowflake Using Estuary

Estuary is the first platform built from the ground up to deliver the most real-time and reliable change data capture (CDC), streaming, and batch data using a single data pipeline for analytics, operations, and AI.

Ready to move your data from PostgreSQL to Snowflake? Follow this comprehensive guide to set up your pipeline in just minutes. If you have any questions or need assistance, our team is always available to help—just reach out on Slack.

Prerequisites

- An Estuary account

- A Postgres database

- A Snowflake account

Step 1: Create an Estuary account

To begin, create a free account in the Estuary web app:

- Sign up using your GitHub or Google account.

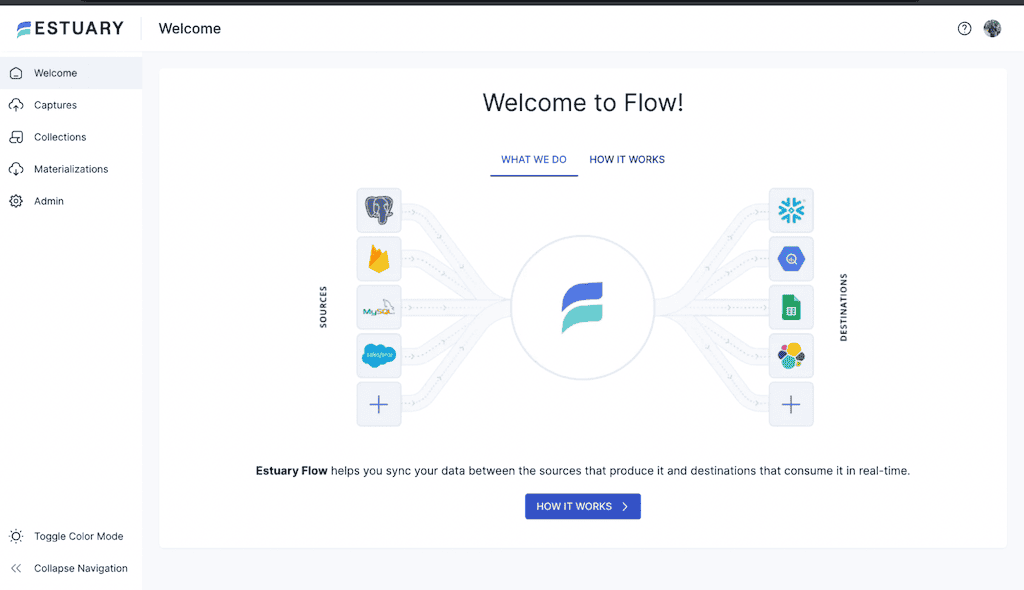

- After logging in, you’ll see a welcome page, indicating you’re ready to build your pipeline from PostgreSQL to Snowflake.

Step 2: Configure Snowflake

Set up your Snowflake environment:

- If you are new to Snowflake, follow Snowflake’s guide to get started. On the Snowflake classic console, you can create a Snowflake database by running the command:

plaintextcreate database estuary_snowflake_db

You can also create a schema in Snowflake by running the command:

plaintextuse "ESTUARY_SNOWFLAKE_DB";

create schema estuary_snowflake_schema;- You also need to create a user for Estuary and grant the user data warehouse permissions. You can do that by executing the block of code below, making sure to update the password in the example.

plaintextset estuary_role = 'ESTUARY_ROLE';

set estuary_user = 'ESTUARY_USER';

set estuary_password = 'password';

create database if not exists identifier($database_name);

use database identifier($database_name);

create schema if not exists identifier($estuary_schema);

create role if not exists identifier($estuary_role);

grant role identifier($estuary_role) to role SYSADMIN;

create user if not exists identifier($estuary_user)

password = $estuary_password

default_role = $estuary_role;

grant role identifier($estuary_role) to user identifier($estuary_user);

grant all on schema estuary_snowflake_schema to identifier($estuary_role);

grant USAGE on warehouse estuary_wh to role identifier($estuary_role);

-- grant Estuary access to database

grant CREATE SCHEMA, MONITOR, USAGE on database estuary_snowflake_db to role identifier($estuary_role);

-- change role to ACCOUNTADMIN for STORAGE INTEGRATION support to Estuary (only needed for Snowflake on GCP)

use role ACCOUNTADMIN;

grant CREATE INTEGRATION on account to role identifier($estuary_role);

use identifier($estuary_role);

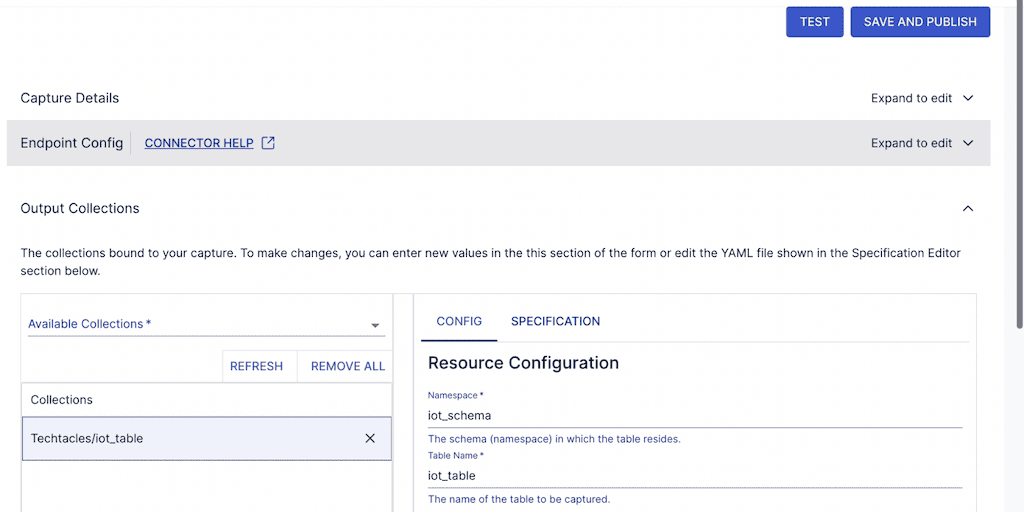

COMMIT;Step 3: Create a Capture in Estuary

Estuary reads data changes from PostgreSQL using Change Data Capture (CDC). To set up:

- Grant permissions in PostgreSQL, making sure to update the password in the example below:

plaintextCREATE USER flow_capture WITH PASSWORD ‘password’;

GRANT rds_replication TO flow_capture;

GRANT SELECT ON ALL TABLES IN SCHEMA iot_schema TO flow_capture;

GRANT ALL ON ALL TABLES IN SCHEMA iot_schema TO flow_capture;

ALTER DEFAULT PRIVILEGES IN SCHEMA iot_schema GRANT SELECT ON TABLES TO flow_capture;

GRANT USAGE ON SCHEMA iot_schema TO flow_capture ;

CREATE TABLE IF NOT EXISTS public.flow_watermarks (slot TEXT PRIMARY KEY, watermark TEXT);

GRANT ALL PRIVILEGES ON TABLE public.flow_watermarks TO flow_capture;

GRANT ALL PRIVILEGES ON TABLE iot_schema.iot_table TO flow_capture;

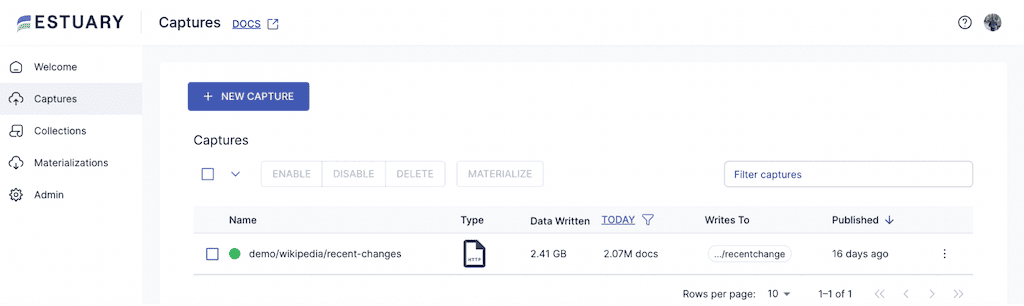

CREATE PUBLICATION flow_publication FOR ALL TABLES;- Log in to Estuary and create a New Capture

- Select PostgreSQL as the source.

- Enter connection details such as hostname, database name, username, and password.

Note that the hostname and database name were set when you created your Amazon RDS instance in the previous section.

When you click on NEXT, you will see the tables and schema you also created in the previous section.

- Save and publish the capture to start reading your data.

Estuary will then start to read the WAL and also start to execute an incremental snapshot of the database in parallel. Estuary is the only product that does both in real-time and in parallel. As it reads both, Estuary combines this into a stream with exactly-once guaranteed delivery that it also commits as a durable append-only log called a collection. A collection allows you to replay and reuse the stream at any time as a stream or batch load.

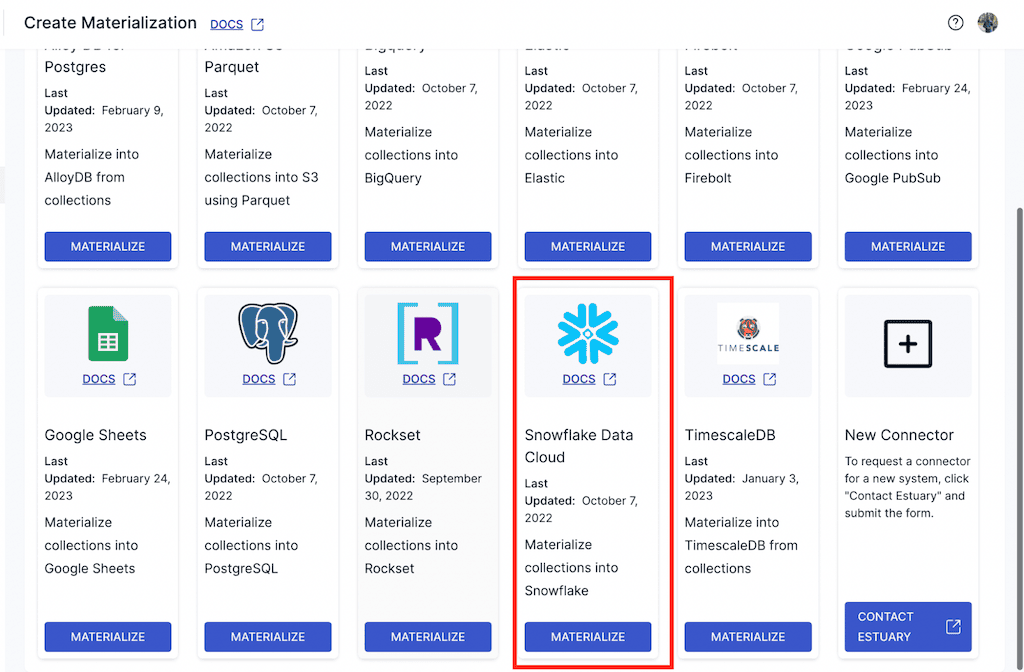

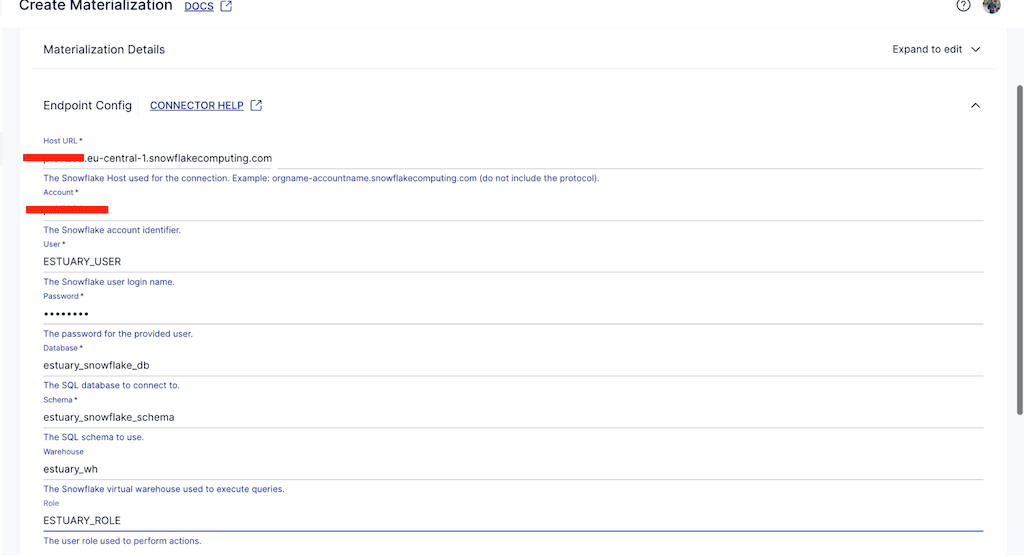

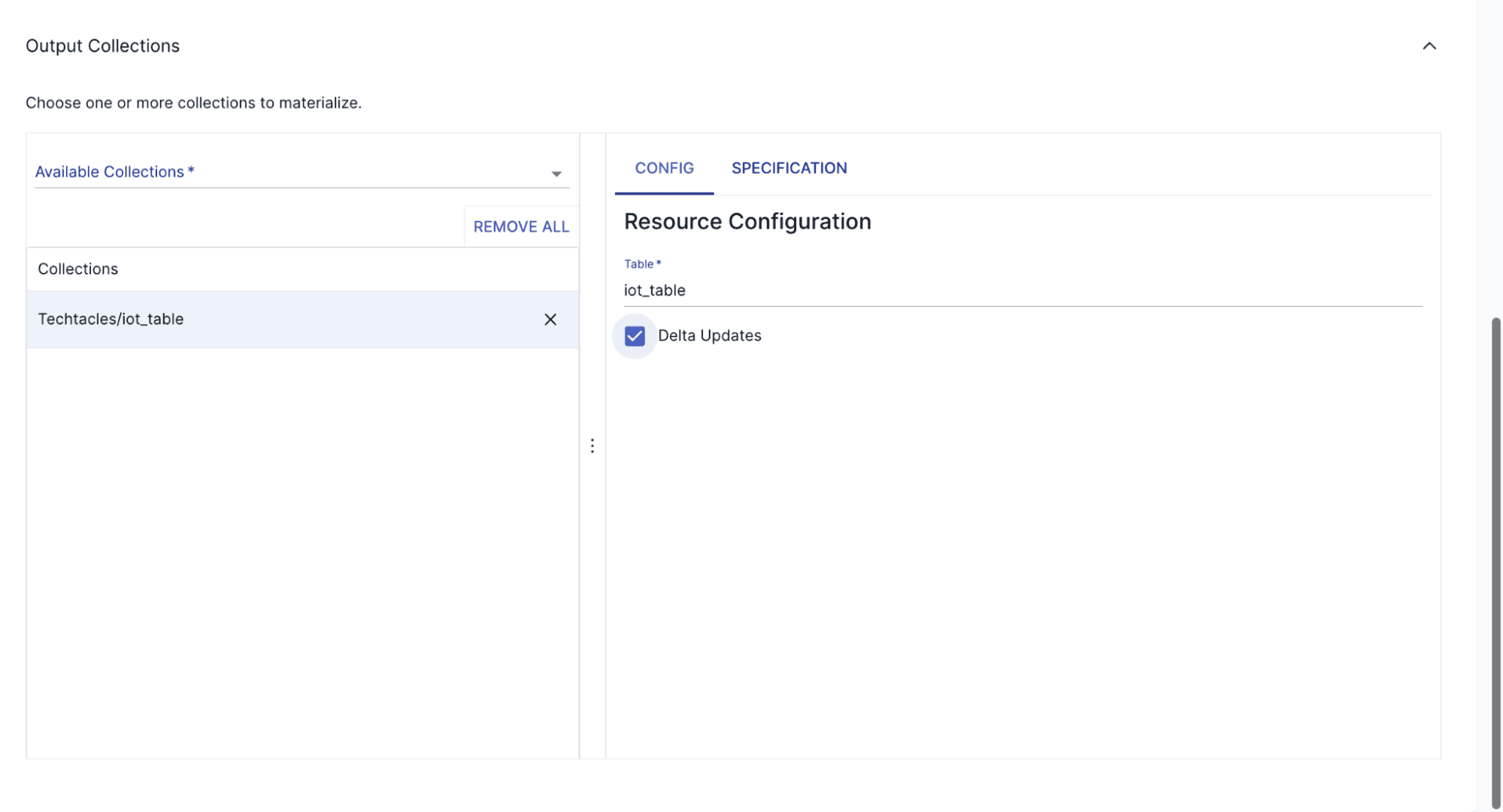

Step 4: Create a Materialization in Snowflake

Materializations transfer data from Estuary to a destination. To create one for Snowflake:

In the Estuary UI, navigate to Materialization and click New Materialization.

- Select Snowflake as the destination and configure connection details (e.g., Host URL, Database name, Warehouse name, Username, and Password). Many of these details can be found in the Snowflake setup script from Step 2.

- Specify the collection representing the IoT data from PostgreSQL.

- When you enable the materialization, you will notice that data gets streamed from Postgres to Snowflake in real-time.

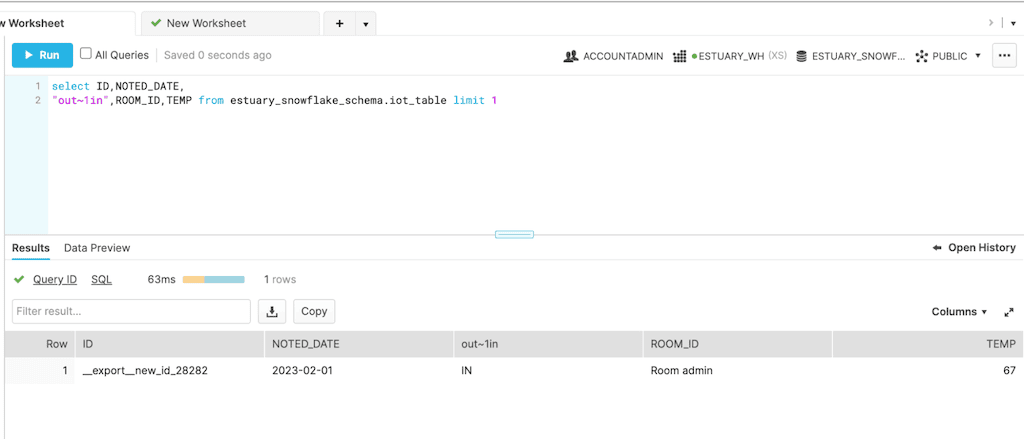

Step 8: Verify Data Transfer

Finally, test the pipeline by inserting new data into PostgreSQL and confirming it appears in Snowflake:

- On your local PostgreSQL server, you can run the following command:

plaintextinsert into iot_schema.iot_table values ('__export__new_id_28282',

'Room admin','2023-02-01',67,'IN')- Check Snowflake to ensure the record is present:

plaintextSELECT * FROM estuary_snowflake_schema.iot_table WHERE id = 'new_id_28282';Congratulations! Your data pipeline is now successfully moving data from PostgreSQL to Snowflake in real-time.

Key Benefits of Using Estuary

- Estuary allows you to build data pipelines in minutes, making it simple to get started without extensive training or setup time.

- Effortlessly manage both real-time streaming and batch data within a single pipeline.

- Recommended for real-time CDC to reduce source database load but flexible enough for batch processing when needed.

- Customize destination sync frequency to help manage Snowflake costs.

- Data is stored as it moves, ensuring recoverability and preventing data loss.

- Enables reuse of data streams for backfilling, stream processing, or time travel at any time.

One of the most affordable data integration tools on the market. Flexible pricing options, with a calculator available on the pricing page to estimate costs based on usage.

Estuary’s combination of speed, reliability, and cost-effectiveness makes it an excellent choice for building modern data pipelines.

Method 2: Using Apache Airflow to Load Data from Postgres to Snowflake

If you decide to use Apache Airflow to replicate data from Postgres to Snowflake, you will need to set up and maintain your own Apache Airflow deployment. There are several articles with instructions on how to connect Snowflake to Postgres once you’re all set up, like this Medium article.

Step 1: Set up Airflow

Assuming you already have a Postgres database set up, if you’re using Linux you can follow this article for setting up Airflow. You will also need to install your Airflow packages on your client.

Step 2: Set up your Airflow Postgres and Snowflake connectors

From there, you will have to configure the connectors to Postgres and Snowflake. While this can take a little time, Airflow does make it easier.

Step 3: Define your Airflow DAG

Next, you’ll need to write your DAG (Directed Acyclic Graph) as a Python script. Airflow uses DAGs to define orchestrations. This means you need to be familiar with Python and the AIrflow syntax for DAGs.

Step 4: Run and monitor your Airflow DAG

Once you start the Airflow scheduler and Web server, you can log in, find the specific DAG, and start it. You can then start to monitor its progress.

Summary: Airflow Benefits and Challenges

Airflow is one of the most mature open source tools you can use for building your own batch data pipelines. It has been proven to work even in some of the largest environments.

But there are still some major challenges that make the majority of companies choose other options:

- Expertise Required: You need a team of Airflow experts to install and manage Airflow, and developers to code your pipelines in Python. The more sources and destinations, the more complex your DAGs can become to develop, manage, monitor, and scale.

- Open Source Limitations: While there are commercially available options like Astronomer, open-source tools come with their own set of challenges.

- Batch-Only Processing: If you need real-time data movement, you’ll need to use another tool for real-time data, resulting in two different technologies.

Method 3: Sync Postgres to Snowflake Destination Manually

For organizations that prefer a hands-on approach, a manual migration method can be implemented using standard PostgreSQL and Snowflake tools. However, unless you’re looking for a one-off method of transferring data, an automated tool like Estuary should be used to make use of CDC and batch processing.

Step 1: Export Data from PostgreSQL

Use the COPY command to export data to a CSV file:

plaintextCOPY my_table TO '/path/to/export.csv' WITH CSV HEADER;Alternatively, use pg_dump to export the entire database:

plaintextpg_dump -U my_user -h my_host -d my_database -F c -f my_backup.dumpStep 2: Upload Data to Cloud Storage

Upload the exported CSV file to an AWS S3, Google Cloud Storage, or Azure Blob Storage bucket:

plaintextaws s3 cp /path/to/export.csv s3://my-bucket-name/Ensure the Snowflake instance has the necessary permissions to access the bucket.

Step 3: Create a Table in Snowflake

Before loading data, create a table that matches the structure of your PostgreSQL table:

plaintextCREATE TABLE my_table (

id INT,

name STRING,

created_at TIMESTAMP

);

Step 4: Load Data into Snowflake

Create a Snowflake stage to reference the cloud storage:

plaintextCREATE OR REPLACE STAGE my_stage

URL = 's3://my-bucket-name/'

STORAGE_INTEGRATION = my_s3_integration;Load data into Snowflake using the COPY INTO command:

plaintextCOPY INTO my_table

FROM @my_stage/export.csv

FILE_FORMAT = (TYPE = 'CSV' FIELD_OPTIONALLY_ENCLOSED_BY='"');Step 5: Verify the Data

After loading, verify the data integrity by running queries in Snowflake:

plaintextSELECT COUNT(*) FROM my_table;

SELECT * FROM my_table LIMIT 10;Challenges with Manual Migration

While the manual method gives full control, it comes with challenges:

- Time-consuming: Requires multiple steps and hands-on intervention.

- Error-prone: Schema mismatches, incorrect data formats, or permission issues can cause failures.

- No CDC support: Doesn't support real-time change tracking, meaning only full dataset migrations are feasible.

Repetitive: Needs to be executed manually every time new data needs to be transferred.

Comparison Table of Methods: Batch, Real-Time Streaming, or Manual Migration

Selecting the right approach for moving data from PostgreSQL to Snowflake is crucial. The following table outlines the summary of three primary methods based on different needs and priorities.

| Method | Best For | Pros | Cons |

|---|---|---|---|

| Batch Processing | Periodic analytics, cost-conscious use cases |

|

|

| Real-time Streaming (CDC) | Real-time analytics, AI, machine learning |

|

Estuary is one of the lowest-cost options compared to alternatives |

| Manual Migration | One-time migrations, small datasets, full control |

|

|

Build vs. Buy: Data Pipeline Considerations

Many organizations debate whether to build their own data pipeline or use a managed ELT/CDC tool. Consider these factors:

- Expertise Needed: Building requires skilled engineers, while a managed tool handles complexity for you.

- Cost: Managed solutions (like Estuary) operate on pay-per-use pricing, whereas in-house solutions demand significant infrastructure investment.

- Flexibility: Custom-built pipelines offer maximum control but require ongoing maintenance.

- Real-Time Streaming: If you need real-time CDC, using a dedicated tool is often easier than managing Kafka or Debezium yourself.

- Batch Processing: Tools like Airflow can help automate batch jobs, but require monitoring and troubleshooting.

Conclusion

Hopefully, you’ve learned a few ways to load data from Postgres to Snowflake and figured out which method is best for your needs.

This guide has outlined three ways to move data from Postgres to Snowflake: using Estuary for real-time and batch pipelines, Apache Airflow for scheduled batch processing, and a manual approach for smaller, ad-hoc migrations. While Airflow is effective for batch processes and the manual method offers full control, Estuary provides a faster, fully managed solution that supports both batch and real-time CDC.

Ready to simplify your Postgres to Snowflake data pipeline? Try Estuary for free and start moving your data in minutes.

FAQs

What are the top tools for integrating PostgreSQL into Snowflake?

Estuary is the best choice for connecting PostgreSQL with Snowflake, offering both real-time streaming and batch processing with minimal setup. Other tools like Fivetran and Stitch provide ELT capabilities but can become costly, while Airbyte offers an open-source option requiring technical expertise.

What are my options for real-time data movement using Postgres to Snowflake CDC?

Estuary and Debezium are the two main options. Debezium is only for the CDC and, as open source, will require you to set it up, maintain it, and support it on your own. With Estuary, you’re set up in minutes and can seamlessly mix CDC with other real-time or batch connections.

What are the risks of using CDC with Postgres?

The main risks include misconfigurations in Postgres settings and increased load when reading the WAL in batch intervals instead of real-time. These can be mitigated by using tools like Estuary, which simplifies CDC setup and ensures reliable, low-latency data streaming.

Why do I need to provide write access to a CDC connector?

If a CDC connection fails for any reason, both the Postgres connection and the remote client should keep track of the position where the WAL was last read. The most reliable way to do that in Postgres is to use Postgres to track the offset as a database write.

Next Steps: Get Started with Estuary Today

- Create Your Free Account: Sign up now and start your journey with Estuary.

- Explore the Documentation: Check out our Getting Started guide to familiarize yourself with the platform.

- Join the Community: Connect with other users and get expert support in our Estuary Slack community.

Need Help? Our team is here for you—contact us anytime for assistance.

Related Articles: Sync with Postgres

About the author

Jeffrey is a data engineering professional with over 15 years of experience, helping early-stage data companies scale by combining technical expertise with growth-focused strategies. His writing shares practical insights on data systems and efficient scaling.