You can connect Kafka to Snowflake using either the Snowflake Kafka Connector or Estuary, a right-time data platform that automates streaming pipelines without manual setup. Both methods let you continuously move event data from Kafka topics into Snowflake tables for analytics, AI, and real-time insights.

Kafka acts as the central message backbone for streaming data, while Snowflake provides scalable storage and processing for analysis. Integrating the two allows organizations to centralize continuous data flows, reduce latency, and unlock right-time analytics across their entire stack.

This guide explains both methods in detail, so you can choose the best approach for your architecture and data requirements.

What is Kafka: Key Features and Use Cases

Apache Kafka is an open-source, distributed event-streaming platform used for publishing and subscribing to streams of records. In a Kafka to Snowflake pipeline, it is the source that collects and buffers events before they are loaded into Snowflake for real-time analytics.

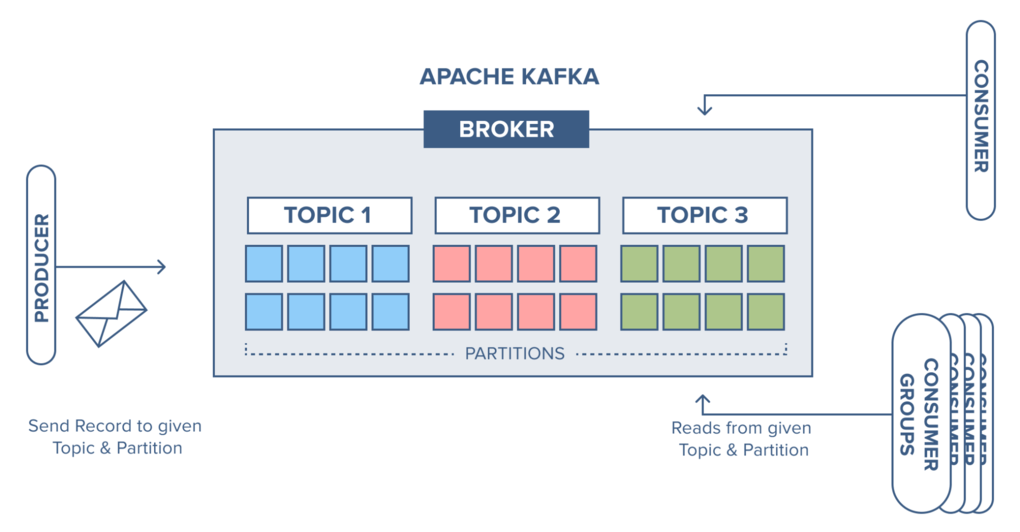

Kafka uses a message broker system that processes a massive inflow of continuous data streams sequentially and incrementally. Source systems, called producers, send streams of data to Kafka brokers, and target systems, called consumers, read and process that data from the brokers. The data isn’t limited to a single destination: multiple consumers can read the same data from a Kafka broker.
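To make the producer and consumer roles concrete, here is a minimal in-memory sketch in Python. It does not use a real Kafka client; `TopicLog` and `Consumer` are hypothetical names that only illustrate how an append-only log lets several consumers read the same records independently, each tracking its own offset.

```python
from dataclasses import dataclass, field


@dataclass
class TopicLog:
    """Toy stand-in for one Kafka topic partition: an append-only list."""
    records: list = field(default_factory=list)

    def append(self, value):
        """Producer side: add a record and return its offset."""
        self.records.append(value)
        return len(self.records) - 1


@dataclass
class Consumer:
    """Each consumer keeps its own read position (offset) in the log."""
    log: TopicLog
    offset: int = 0

    def poll(self):
        """Return every record this consumer has not seen yet."""
        batch = self.log.records[self.offset:]
        self.offset = len(self.log.records)
        return batch


log = TopicLog()
for event in ("signup", "login", "purchase"):
    log.append(event)

# Two independent consumers read the same records without affecting each other.
analytics = Consumer(log)
alerting = Consumer(log)
analytics_batch = analytics.poll()
alerting_batch = alerting.poll()
```

Because reading does not remove records from the log, adding a second destination is only a matter of adding another consumer with its own offset.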

Here are some of Apache Kafka’s key features:

- Scalability: Apache Kafka is massively scalable since it allows data distribution across multiple servers. It can be scaled quickly without any downtime.

- Fault Tolerance: Kafka is a distributed system in which several nodes serve the cluster together, so it tolerates the failure of an individual node or machine.

- Durability: Kafka persists messages to disk and replicates them across brokers within the cluster, which makes it a highly durable messaging system.

- High Performance: Kafka decouples producers from consumers, so it can process messages at very high speed, with rates exceeding 100,000 messages per second. It maintains stable performance even with terabytes of data.
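The scalability point rests on spreading a topic's records across partitions that live on different brokers. The sketch below shows the general idea of key-based partitioning, assuming a three-partition topic. Note that `zlib.crc32` is only a stand-in here: Kafka's default partitioner hashes keys with murmur2, and `choose_partition` is a hypothetical helper, not a Kafka API.

```python
import zlib
from collections import defaultdict


def choose_partition(key: bytes, num_partitions: int) -> int:
    """Map a record key to a partition. crc32 is a stand-in for Kafka's murmur2."""
    return zlib.crc32(key) % num_partitions


# Records with the same key always land in the same partition,
# which preserves per-key ordering while spreading load across brokers.
keys = [b"user-1", b"user-2", b"user-3", b"user-1"]
assignments = defaultdict(list)
for key in keys:
    assignments[choose_partition(key, 3)].append(key)
```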

Learn more: What is a Kafka Data Pipeline?

Why Snowflake is Ideal for Kafka Data Streams

Snowflake is a fully managed, cloud-based data warehousing platform. It runs on cloud infrastructure such as Azure, AWS, or GCP to manage big data for analytics. Snowflake supports ANSI SQL and handles both structured and semi-structured data formats, such as JSON, Parquet, and XML. You can run SQL queries on your Snowflake data to manage it and generate insights.

Key Capabilities that Make Snowflake Ideal for Kafka Data:

- Centralized Repository: Snowflake consolidates diverse data types and sources into a single platform, eliminating silos and simplifying analytics.

- Native Support for Semi-Structured Data: It easily handles formats such as JSON, Avro, and Parquet—common in Kafka event payloads.

- Elastic Compute and Storage: Snowflake’s architecture allows you to scale compute independently from storage, optimizing cost and performance for variable workloads.

- Data Sharing and Collaboration: Built-in secure data sharing enables teams and partners to analyze Kafka data without copying it across systems.

- High Reliability and Fail-Safe Recovery: Snowflake offers multi-cluster compute, automatic recovery, and time-travel features to ensure uninterrupted operations.

Methods for Kafka to Snowflake Integration

There are two primary ways to move data from Kafka to Snowflake:

- Using the Snowflake Kafka Connector, a native integration provided by Snowflake.

- Using a modern right-time integration platform like Estuary for a no-code, real-time approach.

Both methods achieve the same goal—streaming continuous event data into Snowflake—but they differ in complexity, latency, and maintenance requirements.

Method #1: Using Snowflake's Kafka Connector

Snowflake provides a Kafka connector, an Apache Kafka Connect plugin that handles the Kafka to Snowflake data transfer. Kafka Connect is a framework that connects Kafka to external systems for reliable and scalable data streaming. You can use Snowflake’s Kafka connector to ingest data from one or more Kafka topics into Snowflake tables. Currently, there are two versions of this connector: a Confluent version and an open-source version.

The Kafka to Snowflake connector lets you stay within the Snowflake ecosystem and removes the need for external data migration tools. It uses Snowpipe or the Snowpipe Streaming API to ingest Kafka data into Snowflake tables in near real time.

It sounds very promising. But how does it work? Before you use the Kafka to Snowflake connector, here’s a list of the prerequisites:

- A Snowflake account with Read-Write access to the tables, schema, and database.

- A running Apache Kafka cluster, or a Confluent account with a Kafka cluster.

- Kafka Connect installed, from either the Apache Kafka or the Confluent distribution.

The Kafka connector subscribes to one or more Kafka topics based on configuration file settings. You can also configure it using the Confluent command line.
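As a rough illustration of what such a configuration can look like, the sketch below builds a JSON payload of the kind accepted by the Kafka Connect REST API in distributed mode. The property names follow Snowflake's connector documentation as best I know it, so verify them against the connector version you run. Every value (topic names, user, database, schema, role, thresholds) is a hypothetical placeholder.

```python
import json

# All values below are placeholders; replace them with your own settings.
connector_config = {
    "name": "snowflake-sink-example",
    "config": {
        "connector.class": "com.snowflake.kafka.connector.SnowflakeSinkConnector",
        "tasks.max": "2",
        # One or more Kafka topics to subscribe to, comma-separated.
        "topics": "orders,clicks",
        "snowflake.url.name": "orgname-accountname.snowflakecomputing.com:443",
        "snowflake.user.name": "KAFKA_CONNECTOR_USER",
        "snowflake.private.key": "<private key body>",
        "snowflake.database.name": "ANALYTICS",
        "snowflake.schema.name": "KAFKA",
        "snowflake.role.name": "KAFKA_CONNECTOR_ROLE",
        # Buffer thresholds: record count, seconds, and bytes.
        "buffer.count.records": "10000",
        "buffer.flush.time": "60",
        "buffer.size.bytes": "5000000",
        "key.converter": "org.apache.kafka.connect.storage.StringConverter",
        "value.converter": "com.snowflake.kafka.connector.records.SnowflakeJsonConverter",
    },
}

# Serialized form, suitable for saving to a file or sending to Kafka Connect.
payload = json.dumps(connector_config, indent=2)
```

In standalone mode the same keys would typically go into a properties file instead of a JSON document.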

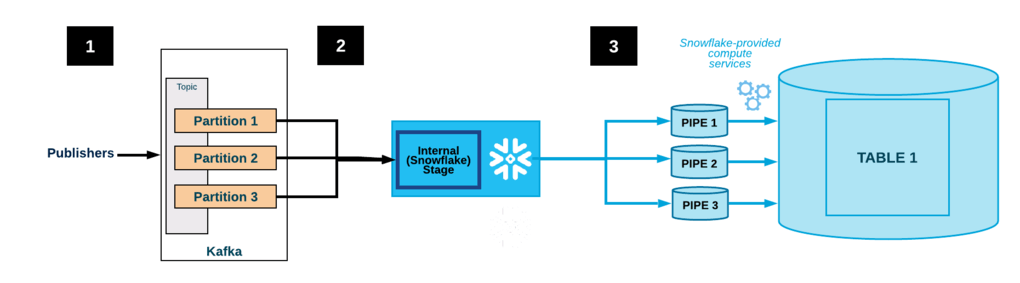

The Kafka to Snowflake connector creates the following objects for each topic:

- Internal stage: Temporarily stores data files for each topic.

- Pipe: One Snowpipe pipe per topic partition ingests that partition’s data files.

- Table: One Snowflake table for each Kafka topic.

Here’s an example of how data flows with the Kafka to Snowflake connector:

- Applications publish Avro or JSON records to a Kafka cluster, where Kafka divides each topic into partitions.

- The Kafka connector buffers messages from the Kafka topics. Once a threshold of time, memory, or message count is met, it writes the buffered messages to a temporary file in the internal stage.

- The connector triggers Snowpipe to ingest this temporary file into Snowflake.

- The connector monitors Snowpipe and, after confirming successful data loading, deletes the temporary files from the internal stage.
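The buffering step in this flow can be sketched as a small class that flushes when any one of the three thresholds (message count, size, or age) is reached. This is a simplified illustration, not the connector's actual code: `FlushBuffer` and its default limits are hypothetical, and a real connector would also flush on a timer even when no new message arrives.

```python
import time
from dataclasses import dataclass, field


@dataclass
class FlushBuffer:
    max_records: int = 3
    max_bytes: int = 1_000
    max_age_seconds: float = 60.0
    records: list = field(default_factory=list)
    size_bytes: int = 0
    opened_at: float = field(default_factory=time.monotonic)

    def add(self, message: bytes, now=None):
        """Buffer one message; return the flushed batch if a threshold is hit, else None."""
        now = time.monotonic() if now is None else now
        self.records.append(message)
        self.size_bytes += len(message)
        if (len(self.records) >= self.max_records
                or self.size_bytes >= self.max_bytes
                or now - self.opened_at >= self.max_age_seconds):
            return self.flush(now)
        return None

    def flush(self, now=None):
        """Hand back the buffered messages (the 'temporary file') and reset the buffer."""
        batch = self.records
        self.records = []
        self.size_bytes = 0
        self.opened_at = time.monotonic() if now is None else now
        return batch
```

Each returned batch corresponds to one staged file that would then be handed to Snowpipe for loading.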

Method #2: SaaS Alternatives for Kafka to Snowflake Integration

If you want to move Kafka data to Snowflake without managing Kafka Connect or writing code, Estuary provides a fully managed alternative. It captures event streams from Kafka and materializes them into Snowflake in real time—automatically handling schema evolution, scaling, and recovery.

With Estuary, you can stream, transform, and load Kafka data continuously, ensuring Snowflake always reflects the latest state of your event data. This approach is ideal for teams that need real-time analytics with minimal operational overhead.

Follow these steps to start streaming data seamlessly from Kafka into Snowflake.

Step 1: Capture Data from Kafka as the Source

- To start using Estuary, register for a free account. If you already have one, log in to your Estuary account.

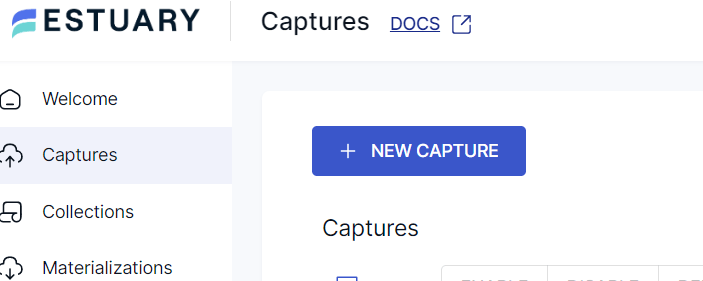

- On the Estuary dashboard, navigate to the Captures section and click on New Capture.

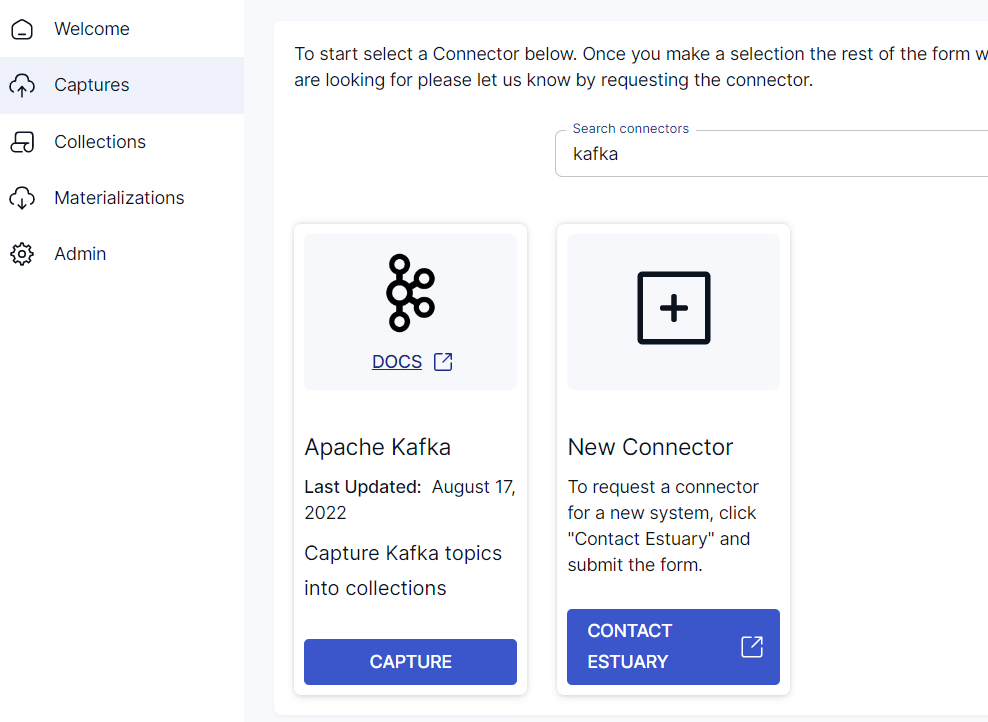

- Search for Kafka and click Capture.

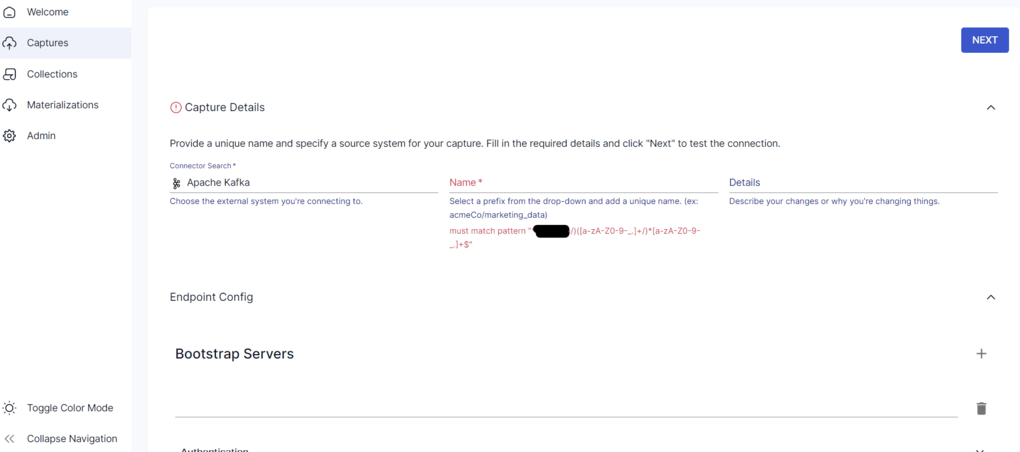

- On the configuration page, provide your Kafka connection details:

  - Bootstrap Servers: Comma-separated list of broker addresses (for example, server1:9092,server2:9092).

  - Authentication: Choose a supported SASL mechanism: PLAIN, SCRAM-SHA-256, or SCRAM-SHA-512. You can also use AWS IAM authentication if you’re connecting to MSK.

  - TLS Encryption: Recommended for production environments to ensure secure data transmission.

  - Schema Registry (optional): If you’re using Avro messages, specify your Confluent Schema Registry endpoint and credentials. JSON messages can be read without it.

- Click Next, then Save and Publish to start capturing data.
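Before pasting a broker list into the Bootstrap Servers field, it can help to check that it is well formed. The helper below is a small sketch of such a check; `parse_bootstrap_servers` is a hypothetical name, not part of Estuary or any Kafka client, and it does not handle bracketed IPv6 addresses.

```python
def parse_bootstrap_servers(value: str) -> list[tuple[str, int]]:
    """Split 'host1:9092,host2:9092' into (host, port) pairs, rejecting malformed entries."""
    brokers = []
    for entry in value.split(","):
        entry = entry.strip()
        if not entry:
            continue  # tolerate stray commas and whitespace
        host, sep, port = entry.rpartition(":")
        if not sep or not host or not port.isdigit():
            raise ValueError(f"expected host:port, got {entry!r}")
        brokers.append((host, int(port)))
    if not brokers:
        raise ValueError("no brokers given")
    return brokers


brokers = parse_bootstrap_servers("server1:9092,server2:9092")
```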

Estuary will automatically discover your Kafka topics and continuously stream Avro or JSON records into Flow collections. These collections form the live data feed for your downstream destinations like Snowflake.

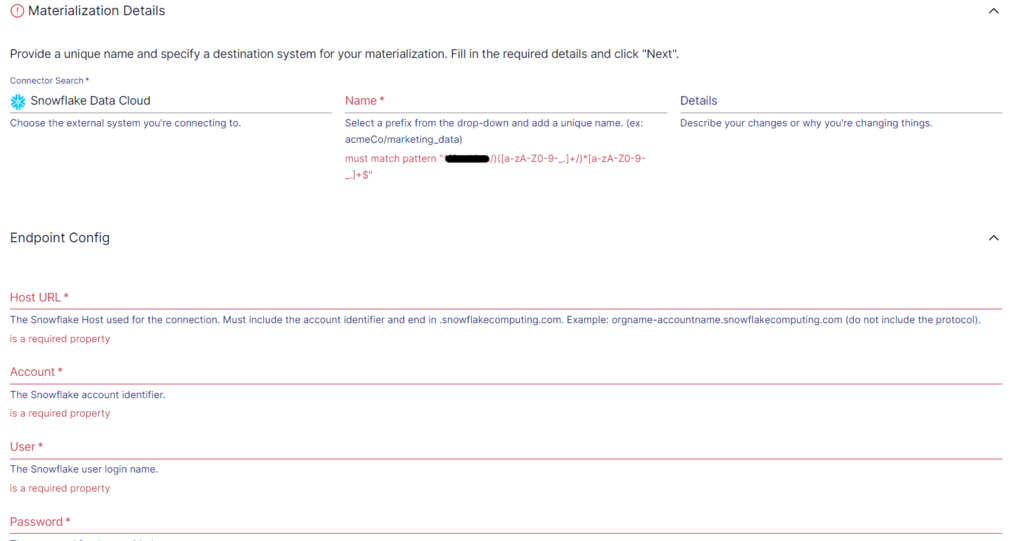

Step 2: Configure Snowflake as the Destination

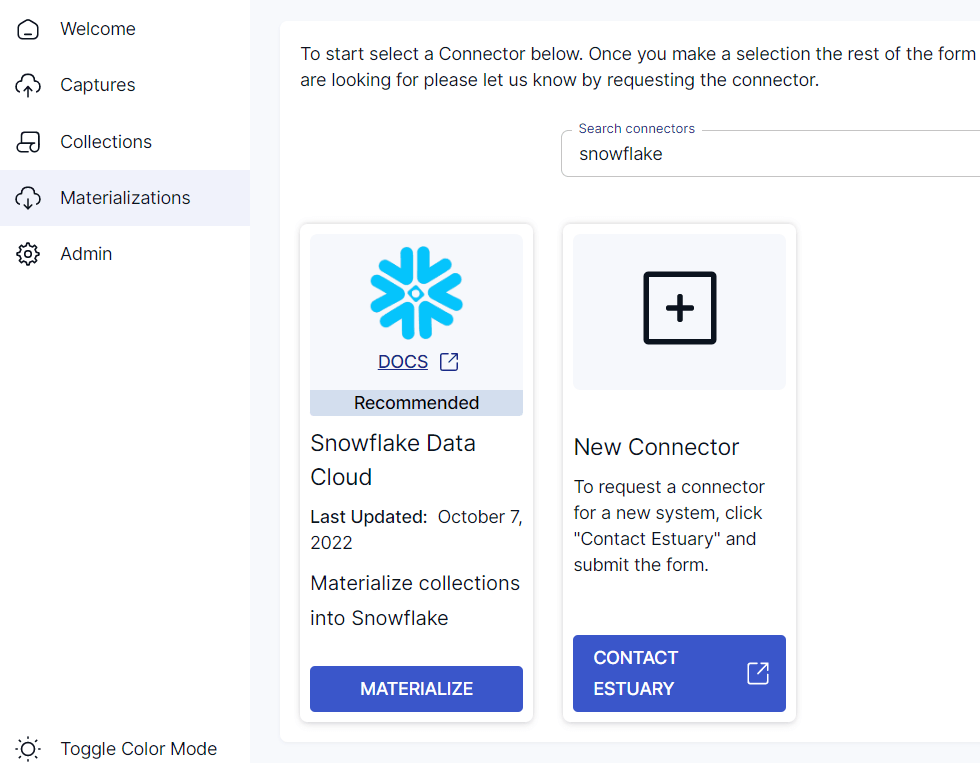

- From the sidebar, navigate to Materializations and click + New Materialization.

- Search for Snowflake and click Materialize.

- Fill in your connection details:

  - Host (Account URL): For example, orgname-accountname.snowflakecomputing.com

  - Database: Target Snowflake database name

  - Schema: Target schema for storing tables

  - Warehouse: Virtual warehouse to handle compute

  - Role: Snowflake role with the required privileges

- Set up Key-Pair Authentication (JWT), which is required since password authentication was deprecated in 2025:

  - Generate a key pair using OpenSSL:

    ```plaintext
    openssl genrsa 2048 | openssl pkcs8 -topk8 -inform PEM -out rsa_key.p8 -nocrypt

    openssl rsa -in rsa_key.p8 -pubout -out rsa_key.pub
    ```

  - Assign the public key to your Snowflake user with:

    ```plaintext
    ALTER USER ESTUARY_USER SET RSA_PUBLIC_KEY='MIIBIj...';
    ```

  - Paste the private key (contents of rsa_key.p8) in the Estuary Snowflake connector under Private Key.

- Select the Flow collections (from Kafka) to materialize and click Next, then Save and Publish.
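When assigning the public key in the key-pair step, Snowflake expects the key body without the `-----BEGIN PUBLIC KEY-----` and `-----END PUBLIC KEY-----` delimiter lines. The following sketch shows one way to extract that body from the contents of `rsa_key.pub` and build the statement. Both function names are hypothetical helpers, and the PEM text in the example is a shortened fake, not a real key.

```python
def public_key_body(pem_text: str) -> str:
    """Return the base64 body of a PEM public key, without header and footer lines."""
    lines = [line.strip() for line in pem_text.strip().splitlines()]
    body = [line for line in lines if line and not line.startswith("-----")]
    return "".join(body)


def alter_user_statement(user: str, pem_text: str) -> str:
    """Build the ALTER USER statement that registers the public key."""
    return f"ALTER USER {user} SET RSA_PUBLIC_KEY='{public_key_body(pem_text)}';"


# Shortened fake key, for illustration only.
example_pem = (
    "-----BEGIN PUBLIC KEY-----\n"
    "MIIBIjAN\n"
    "BgkqhkiG\n"
    "-----END PUBLIC KEY-----\n"
)
statement = alter_user_statement("ESTUARY_USER", example_pem)
```

In practice you would read `rsa_key.pub` from disk and run the resulting statement with a role that is allowed to alter the user.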

Estuary’s Snowflake connector automatically manages schema mapping, applies data changes transactionally, and ensures consistent delivery to Snowflake tables.

Note: Estuary supports both standard (merge-based) and delta updates for Snowflake. Delta updates use Snowpipe Streaming, which provides the lowest latency by writing rows directly to Snowflake tables and scaling compute automatically.
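The difference between the two modes can be shown with a small conceptual sketch. It models only the resulting table contents, not how Estuary or Snowflake implement either mode. The function names and the `id` key field are assumptions made for the example.

```python
def apply_standard(table: dict, events: list[dict], key: str = "id") -> dict:
    """Merge-style: keep one row per key, overwritten by the latest event."""
    for event in events:
        table[event[key]] = event
    return table


def apply_delta(table: list, events: list[dict]) -> list:
    """Delta-style: append every event as its own row, with no merge step."""
    table.extend(events)
    return table


events = [
    {"id": 1, "status": "new"},
    {"id": 2, "status": "new"},
    {"id": 1, "status": "shipped"},
]
merged = apply_standard({}, events)   # two rows, id 1 reflects the latest status
appended = apply_delta([], events)    # three rows, one per event
```

Standard updates suit tables that should mirror current state, while delta updates suit append-only event histories where lower latency matters more than deduplicated rows.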

Step 3: Verify and Monitor the Pipeline

Once published, Estuary begins streaming data from Kafka to Snowflake in real time. You can monitor and manage your pipeline directly from the Estuary dashboard:

- Monitor pipeline status: Check task health, latency, and throughput.

- Track record counts: View messages captured and rows written to Snowflake.

- Handle schema evolution: Estuary automatically adapts to new fields or type changes in Kafka messages.

- View logs and alerts: Quickly identify and resolve configuration or data issues.
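To illustrate what adapting to new fields or type changes means in general, the sketch below tracks the set of fields and observed types as records arrive. It is a simplified model for intuition only and does not describe Estuary's actual schema inference. `evolve_schema` is a hypothetical helper.

```python
def evolve_schema(schema: dict[str, set[str]], record: dict) -> dict[str, set[str]]:
    """Add unseen fields and note each newly observed type for known fields."""
    for name, value in record.items():
        schema.setdefault(name, set()).add(type(value).__name__)
    return schema


schema: dict[str, set[str]] = {}
evolve_schema(schema, {"id": 1, "amount": 10})
# A later message adds a field and changes the type of an existing one.
evolve_schema(schema, {"id": 2, "amount": 10.5, "coupon": "SPRING"})
```

A destination column can then be added for `coupon` and widened for `amount` instead of failing the pipeline.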

The result is a continuously updated Snowflake table reflecting your Kafka topics with sub-second latency — without managing brokers, batch jobs, or custom scripts.

Why Choose Estuary for Kafka to Snowflake Integration

Estuary simplifies Kafka to Snowflake integration with dependable, right-time data movement. Instead of maintaining connectors or managing Snowpipe scripts, you can set up a streaming pipeline in minutes — secure, scalable, and continuously up to date.

Key advantages:

- Real-time performance: Uses Snowpipe Streaming for delta updates to deliver Kafka data into Snowflake with sub-second latency.

- Exactly-once reliability: Every Kafka message is captured and written once, ensuring clean and consistent data.

- Zero-code setup: Configure everything through a simple UI or YAML specs — no Spark, Airflow, or SQL scripts needed.

- Automatic schema handling: Adapts to evolving Kafka topics without breaking downstream pipelines.

- Scalable and secure: Supports TLS for Kafka, key-pair authentication for Snowflake, and private or BYOC deployment options.

- Full visibility: Built-in monitoring tracks latency, throughput, and task health from a single dashboard.
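One common way to achieve an exactly-once effect at a destination is to record the last offset written per partition and skip anything at or below it when a message is redelivered. The sketch below shows that general technique under simplifying assumptions (in-memory state, ordered delivery within a partition). It is not a description of Estuary's internals, and `IdempotentSink` is a hypothetical class.

```python
class IdempotentSink:
    def __init__(self):
        self.rows = []
        self.committed = {}  # partition -> highest offset already written

    def write(self, partition: int, offset: int, value) -> bool:
        """Write a record unless its offset was already applied; return True if written."""
        if offset <= self.committed.get(partition, -1):
            return False  # redelivered record, skip it
        self.rows.append(value)
        self.committed[partition] = offset
        return True


sink = IdempotentSink()
results = [
    sink.write(0, 0, "a"),
    sink.write(0, 1, "b"),
    sink.write(0, 1, "b"),  # redelivery after a retry
]
```

In a real system the rows and the committed offsets would be stored in the same transaction so the two cannot drift apart.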

With Estuary, Kafka events flow continuously into Snowflake for analytics, AI, or reporting — no manual steps, no data loss, and no maintenance burden.

For detailed guidance, refer to our documentation on the Kafka source connector and Snowflake materialization connector.

Conclusion

Connecting Kafka to Snowflake allows you to centralize event data for analytics, AI, and real-time decision-making. While the native Kafka to Snowflake connector works for basic streaming, it often requires complex setup, maintenance, and scaling effort.

Estuary provides a simpler, right-time alternative. With automated streaming pipelines, Snowpipe Streaming support, and exactly-once delivery, Estuary keeps your Kafka and Snowflake environments in sync — without code or manual configuration.

Whether you’re building dashboards, powering machine learning, or monitoring operations, Estuary helps you move data dependably and in real time.

Next Steps

- Try the interactive demo – See how right-time data pipelines work in action.

- Start for free – Create your first Kafka to Snowflake pipeline in minutes.

- Contact Estuary – Talk to our data integration experts about your architecture or compliance needs.



About the author

The author has over 15 years of experience in data engineering and is a seasoned expert in driving growth for early-stage data companies, focusing on strategies that attract customers and users. The author’s extensive writing provides insights to help companies scale efficiently and effectively in an evolving data landscape.