Data pipelines have become the backbone of modern enterprises. They ensure a smooth transfer of data across applications, databases, and other data systems, guaranteeing real-time, secure, and reliable information exchange. When it comes to designing one, the Kafka data pipeline takes center stage.

That said, understanding Kafka can be quite challenging. Besides the intricate architecture, it also involves learning about its operational principles, configuration specifics, and the potential pitfalls in its deployment and maintenance.

In this guide, we'll discuss the benefits of the Kafka data pipeline and walk through its architecture and inner workings. We'll also look at the components that make up a Kafka data pipeline and share real-world examples and use cases that show what it can do.

By the time you complete this article, you will have a solid understanding of the Kafka data pipeline, its architecture, and how it can solve complex data processing challenges.

What Is A Kafka Data Pipeline?

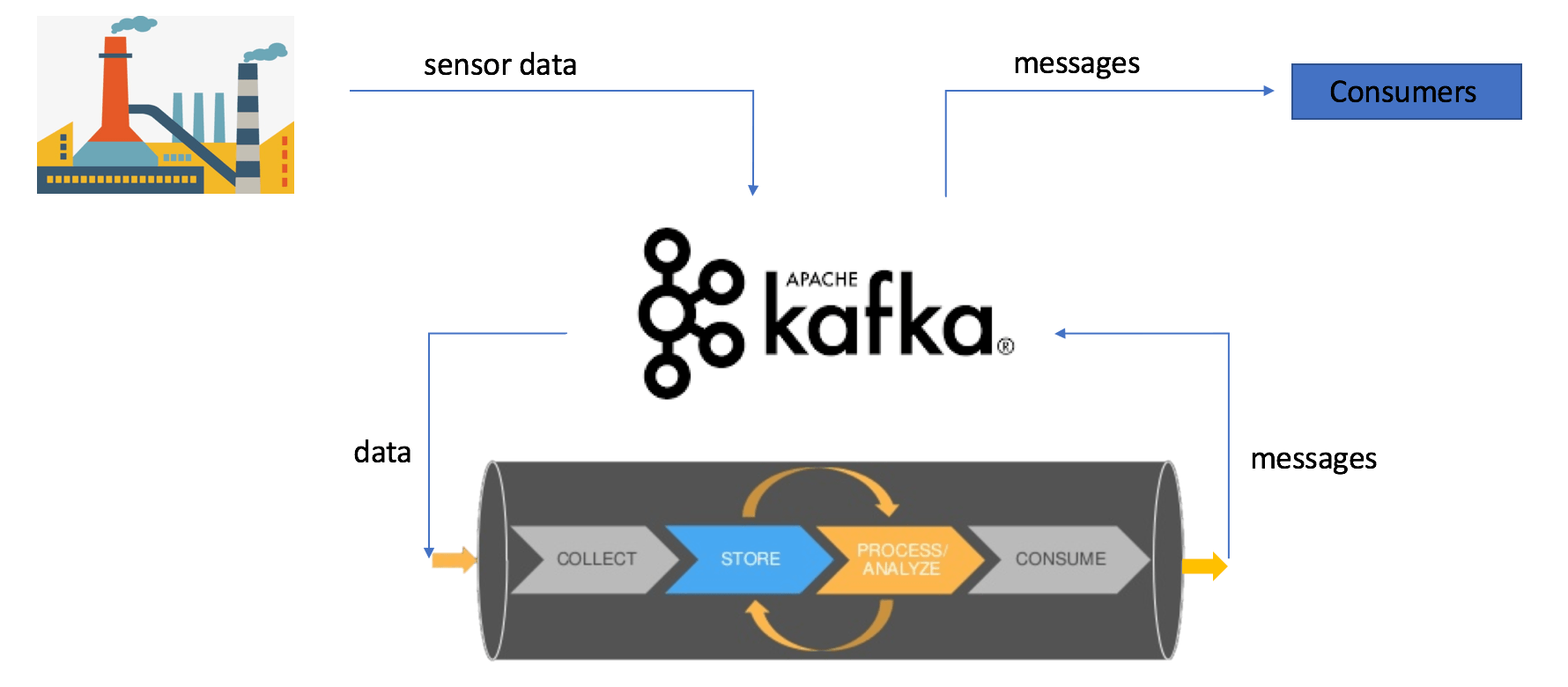

A Kafka data pipeline is a system that uses Apache Kafka, together with ecosystem components such as Kafka Connect, to stream and process data across different applications, data systems, and data warehouses.

It acts as a central hub for data flow and allows organizations to ingest, transform, and deliver data in real time.

Within a Kafka data pipeline, a series of events takes place:

- Data from various sources is collected and sent to Kafka for storage and organization.

- Kafka, acting as a high-throughput, fault-tolerant message broker, distributes that data across topics.

- Data is consumed by different applications or systems for processing and analysis.

With a Kafka data pipeline, you can use streaming data for streaming analytics, fraud detection, and log processing, unlocking valuable insights and driving informed decision-making.

Revolutionizing Data Processing: 9 Key Benefits Of Kafka Data Pipelines

Here are 9 major benefits of a Kafka data pipeline:

Real-time Data Streaming

A Kafka data pipeline ingests, processes, and delivers streaming data. It acts as a central nervous system for your data and handles records as they arrive in real time.

Scalability & Fault Tolerance

Kafka's distributed architecture provides exceptional scalability and fault tolerance. By using a cluster of nodes, Kafka handles large data volumes and accommodates increasing workloads effortlessly.

Data Integration

Kafka acts as a bridge between various data sources and data consumers. It integrates across different systems and applications and collects data from diverse sources such as databases, sensors, and social media feeds. It also integrates with data processing solutions like Apache Hadoop and cloud-based data warehouses like Amazon Redshift and Google BigQuery.

High Throughput & Low Latency

Kafka data pipelines are designed to handle very high message rates, from thousands up to millions of messages per second depending on cluster size and configuration, with minimal latency. This makes Kafka a good fit for applications that need near-instantaneous data delivery, like real-time reporting and monitoring systems.

Durability & Persistence

Kafka stores data in a fault-tolerant and distributed manner, replicating it across brokers to protect against loss during transit or processing. Because messages are persisted for a configurable retention period, you can replay a data stream from any retained offset, which helps with data recovery, debugging, and reprocessing.

Data Transformation & Enrichment

With Kafka's stream processing tools, such as Kafka Streams and ksqlDB (formerly KSQL), you can apply powerful transformations to your data streams in real time. This way, you can cleanse, aggregate, enrich, and filter your data as it flows through the pipeline.
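As a rough illustration of cleansing, enriching, and aggregating records in flight, the sketch below uses plain Python generators rather than Kafka Streams or ksqlDB. The record fields (`user_id`, `amount`), the `REGIONS` lookup table, and all function names are hypothetical.

```python
from collections import defaultdict
from typing import Dict, Iterable, Iterator

# Hypothetical lookup table used to enrich each record.
REGIONS: Dict[str, str] = {"u1": "EU", "u2": "US"}


def cleanse(records: Iterable[dict]) -> Iterator[dict]:
    """Drop records that are missing required fields."""
    for rec in records:
        if rec.get("user_id") and rec.get("amount") is not None:
            yield rec


def enrich(records: Iterable[dict]) -> Iterator[dict]:
    """Attach a region to each record from the lookup table."""
    for rec in records:
        yield {**rec, "region": REGIONS.get(rec["user_id"], "unknown")}


def total_by_region(records: Iterable[dict]) -> Dict[str, float]:
    """Aggregate amounts per region."""
    totals: Dict[str, float] = defaultdict(float)
    for rec in records:
        totals[rec["region"]] += rec["amount"]
    return dict(totals)


events = [
    {"user_id": "u1", "amount": 10.0},
    {"user_id": None, "amount": 5.0},  # dropped by cleanse()
    {"user_id": "u2", "amount": 7.5},
    {"user_id": "u1", "amount": 2.5},
]
totals = total_by_region(enrich(cleanse(events)))
```

Because each stage is a generator, records flow through one at a time, which loosely mirrors how a stream processor handles data in motion instead of in batches.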

Decoupling Of Data Producers & Consumers

Data producers can push data to Kafka without worrying about the specific consumers and their requirements. Similarly, consumers can subscribe to relevant topics in Kafka and consume the data at their own pace.

Exactly-Once Data Processing

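Kafka supports exactly-once processing semantics through idempotent producers and transactions. When these are configured end to end, each message in the data stream is processed exactly once, even if a producer retries a send, which removes duplicate or missing data concerns inside Kafka. Pipelines that write to external systems need idempotent or transactional sinks to extend the guarantee beyond Kafka.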
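To make the idea of discarding duplicate retries concrete, here is a minimal sketch of a partition log that tracks a sequence number per producer. It is a simplified model of the principle behind idempotent producers, not Kafka's actual implementation, and the class and field names are hypothetical.

```python
from typing import Dict, List, Tuple


class DedupLog:
    """Toy partition log that rejects duplicate producer retries."""

    def __init__(self) -> None:
        self.records: List[str] = []
        # Highest sequence number accepted so far, per producer id.
        self._last_seq: Dict[str, int] = {}

    def append(self, producer_id: str, seq: int, value: str) -> bool:
        """Append value unless this producer already wrote this sequence number."""
        if seq <= self._last_seq.get(producer_id, -1):
            return False  # duplicate caused by a retry; ignore it
        self._last_seq[producer_id] = seq
        self.records.append(value)
        return True


log = DedupLog()
sends: List[Tuple[str, int, str]] = [
    ("p1", 0, "order-created"),
    ("p1", 1, "order-paid"),
    ("p1", 1, "order-paid"),  # retry after a lost acknowledgment
    ("p1", 2, "order-shipped"),
]
accepted = [log.append(pid, seq, value) for pid, seq, value in sends]
```

The retried send is acknowledged as already handled but is not written twice, so downstream readers see each record once.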

Change Data Capture

Kafka Connect provides connectors that capture data changes from various sources such as databases, message queues, and file systems. These connectors continuously monitor the data sources for any changes and capture them in real time. Once the changes are captured, they are transformed into Kafka messages, ready to be consumed and processed by downstream systems.
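The shape of a change event can be sketched by comparing two snapshots of a table. Real CDC connectors usually read the database's transaction log instead of diffing snapshots, so treat this as an illustration only; the event fields (`key`, `op`, `before`, `after`) and the function name are hypothetical.

```python
from typing import Dict, List, Optional

Row = Dict[str, object]


def diff_snapshots(before: Dict[int, Row], after: Dict[int, Row]) -> List[dict]:
    """Emit one change event per inserted, updated, or deleted row."""
    events: List[dict] = []
    for key in sorted(before.keys() | after.keys()):
        old: Optional[Row] = before.get(key)
        new: Optional[Row] = after.get(key)
        if old == new:
            continue  # row unchanged
        if old is None:
            op = "create"
        elif new is None:
            op = "delete"
        else:
            op = "update"
        events.append({"key": key, "op": op, "before": old, "after": new})
    return events


snapshot_before = {1: {"name": "Ada"}, 2: {"name": "Bob"}}
snapshot_after = {1: {"name": "Ada L."}, 3: {"name": "Cy"}}
changes = diff_snapshots(snapshot_before, snapshot_after)
```

Each event in `changes` could then be published as a Kafka message keyed by the row's primary key, so downstream consumers can apply the changes in order.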

Now that we know what a Kafka data pipeline is and what its benefits are, let's look at the architecture behind it to understand how it works.

Goodbye ZooKeeper: Kafka 4.0’s KRaft-Based Architecture

With the release of Apache Kafka 4.0, the platform runs exclusively in KRaft mode. The legacy ZooKeeper-based coordination system has been removed.

Why It Matters:

- Simplified Operations: No more managing ZooKeeper clusters.

- Improved Scalability: KRaft handles metadata internally with better performance.

- Faster Rebalancing: The new consumer group protocol from KIP-848 avoids “stop-the-world” rebalances.

- Cleaner Failover: KIP-996 introduces pre-vote logic to reduce unnecessary elections.

- New Capabilities: KIP-932 introduces share groups for queue-style semantics (available as early access in 4.0).

If you're still running Kafka with ZooKeeper, you must first migrate the cluster to KRaft on a 3.x bridge release such as Kafka 3.9 before upgrading to 4.0.

Kafka Data Pipeline Components (Post-ZooKeeper Era)

Let’s first understand how Kafka works before getting into the details of the architecture.

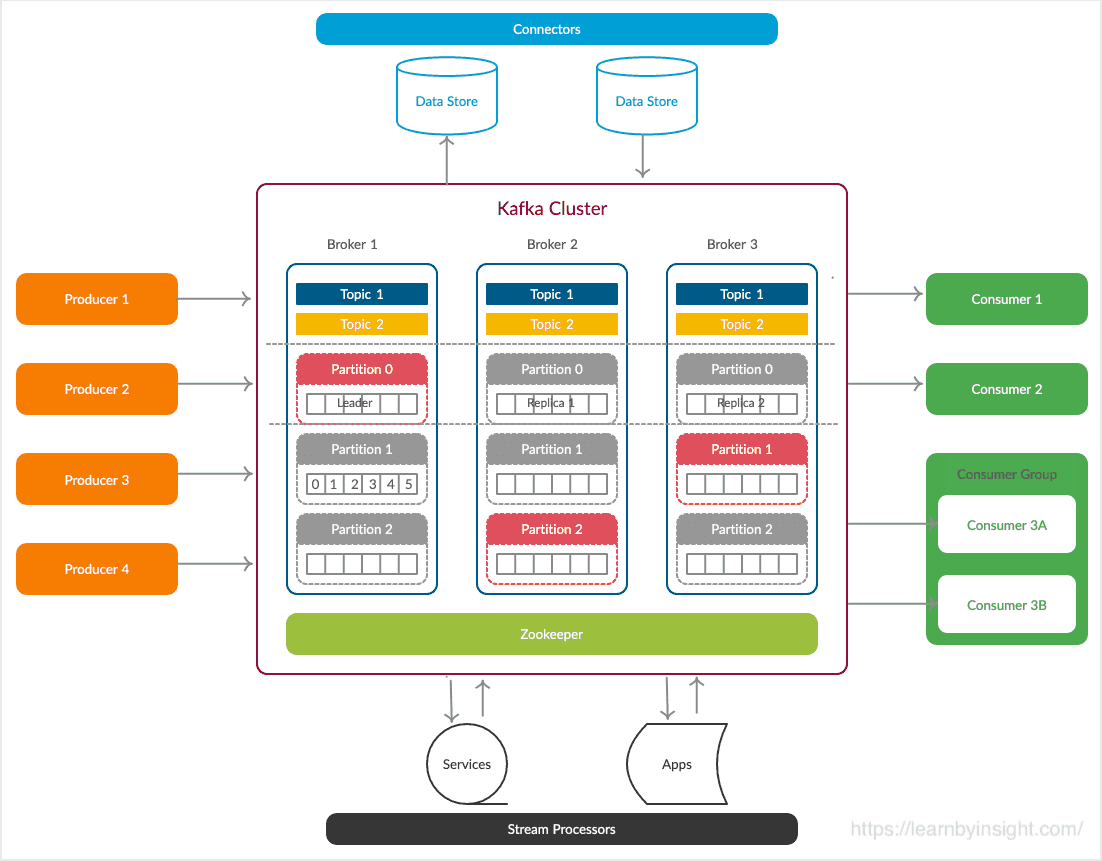

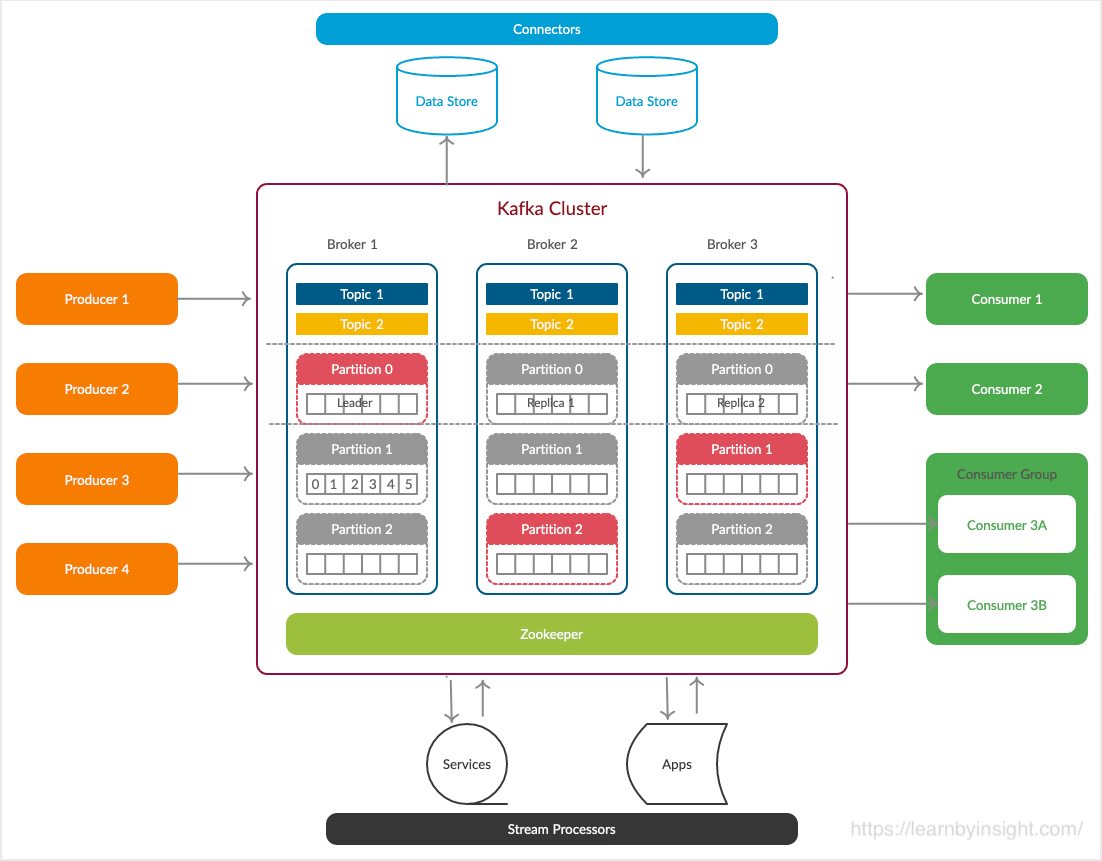

Kafka works by receiving and transmitting data as events, known as messages. These messages are organized into topics and are published by data producers, while data consumers subscribe to these topics. This structure is often referred to as a pub/sub system because of its core principle of 'publishing' and 'subscribing'.
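The pub/sub model described above can be sketched with a small in-memory stand-in for a broker. This is a teaching aid under simplifying assumptions (no partitions, no persistence, no network); `MiniBroker` and `Subscriber` are hypothetical names, not part of any Kafka client library.

```python
from collections import defaultdict
from typing import DefaultDict, Dict, List


class MiniBroker:
    """In-memory stand-in for a broker: one append-only list per topic."""

    def __init__(self) -> None:
        self._topics: DefaultDict[str, List[str]] = defaultdict(list)

    def publish(self, topic: str, message: str) -> None:
        self._topics[topic].append(message)

    def read(self, topic: str, position: int) -> List[str]:
        """Return every message at or after the given position."""
        return self._topics[topic][position:]


class Subscriber:
    """Pulls messages from topics and tracks its own position per topic."""

    def __init__(self, broker: MiniBroker) -> None:
        self._broker = broker
        self._positions: Dict[str, int] = {}

    def poll(self, topic: str) -> List[str]:
        position = self._positions.get(topic, 0)
        messages = self._broker.read(topic, position)
        self._positions[topic] = position + len(messages)
        return messages


broker = MiniBroker()
dashboard, archiver = Subscriber(broker), Subscriber(broker)

broker.publish("orders", "order-1")
first_dashboard = dashboard.poll("orders")   # sees order-1
broker.publish("orders", "order-2")
second_dashboard = dashboard.poll("orders")  # sees only order-2
all_archiver = archiver.poll("orders")       # sees both, at its own pace
```

The publisher never references a subscriber, and each subscriber advances independently, which is the decoupling that makes the pattern useful.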

What Is A Kafka Cluster?

A Kafka cluster is a group of brokers working together. Brokers can be added to scale the cluster without downtime, and the cluster manages the replication of messages across them. If a broker fails, other brokers that hold replicas of its partitions step in and continue serving the same data.

Now let's dissect the Kafka Cluster. A Kafka cluster typically comprises 5 core components:

- Kafka Topics and Partitions

- Producers

- Kafka Brokers

- Consumers

- KRaft Controllers (Replaces ZooKeeper in Kafka 4.0 and beyond)

Let's discuss these components individually.

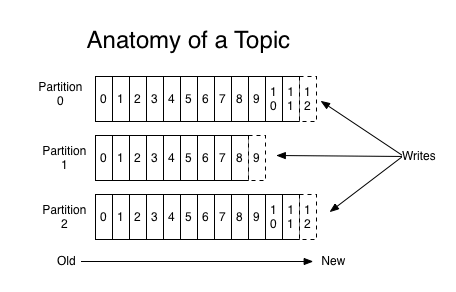

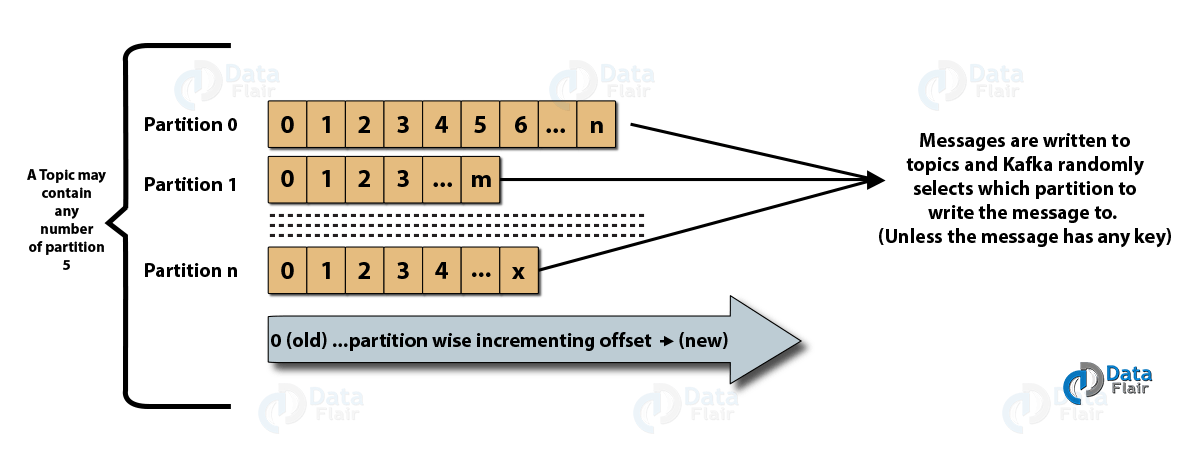

Kafka Topic & Partition

A Kafka topic is a collection of messages grouped under a specific category or feed name. It serves as a way to organize all the records in Kafka. Consumer applications read data from topics while producer applications write data to them.

Topics are divided into partitions so that multiple consumers can read data in parallel. Each partition is an ordered, append-only log, and the number of partitions can be increased later as needed. The partitions are distributed across the brokers in the cluster, with each broker handling requests for the partitions it hosts.

Messages with a key are routed to a partition based on a hash of that key, so records with the same key land in the same partition and keep their relative order, while different consumers read from the same topic concurrently.
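Key-based routing can be sketched in a few lines. Kafka's default partitioner hashes the key bytes with murmur2; the example below substitutes CRC32 from the standard library as a stand-in, so the partition numbers it produces will not match a real cluster. The function name is hypothetical.

```python
import zlib
from collections import defaultdict
from typing import DefaultDict, List, Tuple


def partition_for(key: str, num_partitions: int) -> int:
    """Map a key to a partition; equal keys always map to the same one."""
    # Assumption: CRC32 stands in for the murmur2 hash Kafka uses by default.
    return zlib.crc32(key.encode("utf-8")) % num_partitions


messages = [("user-1", "login"), ("user-2", "login"), ("user-1", "purchase")]
partitions: DefaultDict[int, List[Tuple[str, str]]] = defaultdict(list)
for key, value in messages:
    partitions[partition_for(key, 3)].append((key, value))
```

Both `user-1` events end up in the same partition in the order they were sent, which is why per-key ordering holds even though the topic as a whole is read in parallel.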

Producers

In Kafka clusters, producers send (publish) messages to topics, which lets applications store large volumes of data in Kafka. When a producer sends a record to a topic, it is delivered to the leader broker of the target partition.

The leader appends the record to its commit log and assigns it the next offset, which lets Kafka track the position of every record in the partition. Whether a producer waits for acknowledgment depends on its acks setting: with acks=0 it does not wait at all, while acks=all waits until the in-sync replicas have the record.

Producers fetch metadata about the Kafka cluster from a broker before sending any records. In Kafka 4.0, this metadata is managed directly within Kafka's built-in controller quorum, eliminating the previous dependency on ZooKeeper.

Kafka Brokers

A broker in Kafka is essentially a server that receives messages from producers, assigns them unique offsets, and stores them on disk. Offsets are crucial for maintaining data consistency and allow consumers to return to the last-consumed message after a failure.
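Here is a minimal sketch of how offsets let a consumer pick up where it left off. It keeps committed offsets next to the log for brevity, whereas Kafka stores them in an internal topic; `OffsetLog` and the group name `billing` are hypothetical.

```python
from typing import Dict, List, Tuple


class OffsetLog:
    """Toy single-partition log with per-group committed offsets."""

    def __init__(self) -> None:
        self._records: List[str] = []
        self._committed: Dict[str, int] = {}  # group id -> next offset to read

    def append(self, value: str) -> int:
        """Store a record and return the offset assigned to it."""
        self._records.append(value)
        return len(self._records) - 1

    def fetch(self, offset: int, max_records: int = 10) -> List[Tuple[int, str]]:
        """Return (offset, value) pairs starting at the given offset."""
        chunk = self._records[offset:offset + max_records]
        return list(enumerate(chunk, start=offset))

    def commit(self, group: str, next_offset: int) -> None:
        self._committed[group] = next_offset

    def committed(self, group: str) -> int:
        return self._committed.get(group, 0)


log = OffsetLog()
offsets = [log.append(v) for v in ["a", "b", "c", "d"]]

# A consumer processes the first two records, commits, then crashes.
batch = log.fetch(log.committed("billing"), max_records=2)
log.commit("billing", batch[-1][0] + 1)

# After restarting, it resumes from the committed offset.
resumed = log.fetch(log.committed("billing"))
```

Committing the offset of the next record to read, rather than the last one processed, matches the convention Kafka consumers use.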

Brokers respond to fetch requests from consumers and return committed messages. A Kafka cluster consists of multiple brokers to distribute the load and ensure availability. Brokers persist message data on disk, but they rely on the KRaft controller quorum rather than ZooKeeper for cluster metadata and leadership management.

When setting up a Kafka system, plan for topic replication. Replication ensures that if a broker goes down, replicas of the topic's partitions on other brokers can take over. Topics can have a replication factor of 2 or more (3 is a common choice in production), with each copy stored on a separate broker.
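Replication and failover can be sketched as follows. The model copies every record synchronously to all live replicas and promotes a survivor when the leader fails, which glosses over in-sync replica tracking and controller-driven elections in real Kafka. The class and broker names are hypothetical.

```python
from typing import Dict, List


class ReplicatedPartition:
    """Toy partition whose records are copied to every live replica."""

    def __init__(self, brokers: List[str]) -> None:
        self.leader = brokers[0]
        self.logs: Dict[str, List[str]] = {b: [] for b in brokers}

    def append(self, value: str) -> None:
        # Simplification: replication is synchronous to all live replicas.
        for log in self.logs.values():
            log.append(value)

    def fail(self, broker: str) -> None:
        """Remove a broker; promote a surviving replica if it was the leader."""
        del self.logs[broker]
        if broker == self.leader:
            if not self.logs:
                raise RuntimeError("no replicas left")
            self.leader = sorted(self.logs)[0]

    def read_all(self) -> List[str]:
        """Read the full log from the current leader."""
        return list(self.logs[self.leader])


partition = ReplicatedPartition(["broker-1", "broker-2", "broker-3"])
partition.append("a")
partition.append("b")
partition.fail("broker-1")  # the leader goes down
after_failover = partition.read_all()
```

With a replication factor of 3, the partition in this sketch survives the loss of two brokers before data becomes unavailable.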

Consumers

In a Kafka data pipeline, consumers are responsible for reading and consuming messages from Kafka clusters. They can choose the starting offset for reading messages, allowing them to join Kafka clusters at any time.

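Kafka tutorials traditionally describe 2 types of consumers. The names come from older Kafka client APIs; the modern consumer client supports both styles through manual partition assignment and group subscription: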

Low-Level Consumer

- Specifies topics, partitions, and the desired offset to read from.

- The starting offset can be set explicitly, which gives fine-grained control over message consumption.

High-Level Consumer (Consumer Groups)

- Consists of one or more consumers working together.

- Consumers in a group share the work of consuming a topic: each partition is read by only one consumer in the group at a time.

These different consumer categories provide flexibility and efficiency when consuming messages from Kafka clusters, catering to various use cases in data pipelines. Kafka 4.0 introduces a new consumer group protocol (KIP-848) that improves rebalance speed and reliability in large deployments.
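The way a consumer group divides partitions among its members can be sketched with a simple round-robin assignment. Kafka ships several assignment strategies and the real protocol is more involved; this function is a hypothetical illustration of the outcome, not of the mechanism.

```python
from typing import Dict, List


def assign_partitions(partitions: List[int], members: List[str]) -> Dict[str, List[int]]:
    """Spread partitions across group members round-robin."""
    if not members:
        raise ValueError("a consumer group needs at least one member")
    ordered = sorted(members)
    assignment: Dict[str, List[int]] = {m: [] for m in ordered}
    for i, partition in enumerate(sorted(partitions)):
        assignment[ordered[i % len(ordered)]].append(partition)
    return assignment


topic_partitions = list(range(6))
two_members = assign_partitions(topic_partitions, ["c1", "c2"])
# A third consumer joins, so the partitions are rebalanced.
three_members = assign_partitions(topic_partitions, ["c1", "c2", "c3"])
```

If a group has more members than the topic has partitions, the extra members receive an empty assignment and sit idle, which is why partition count caps the parallelism of a group.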

KRaft Controllers (Required in Kafka 4.0)

In place of ZooKeeper, Kafka 4.0 relies entirely on KRaft (Kafka Raft) controllers, which first appeared in earlier releases, to manage cluster metadata, leadership elections, and state coordination. These controllers form a quorum and handle all administrative tasks internally.

KRaft simplifies the deployment architecture, eliminates the need for external coordination systems, and provides better fault tolerance and observability.
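Because the controllers form a Raft-style quorum, decisions need agreement from a majority of voters. The arithmetic below shows how many controller failures a quorum of a given size can tolerate; the function names are hypothetical.

```python
def majority(voters: int) -> int:
    """Smallest number of votes that forms a majority."""
    if voters < 1:
        raise ValueError("a quorum needs at least one voter")
    return voters // 2 + 1


def tolerated_failures(voters: int) -> int:
    """How many voters can fail while a majority is still reachable."""
    return voters - majority(voters)


def can_elect(votes_received: int, voters: int) -> bool:
    """True if a candidate has enough votes to become the active controller."""
    return votes_received >= majority(voters)
```

A 3-controller quorum tolerates 1 failure and a 5-controller quorum tolerates 2. Going from 3 to 4 controllers adds no extra tolerance, which is why odd quorum sizes are the usual choice.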

Driving Data Innovation: 5 Inspiring Kafka Data Pipeline Applications

Here are some examples that showcase the versatility and potential of Kafka in action.

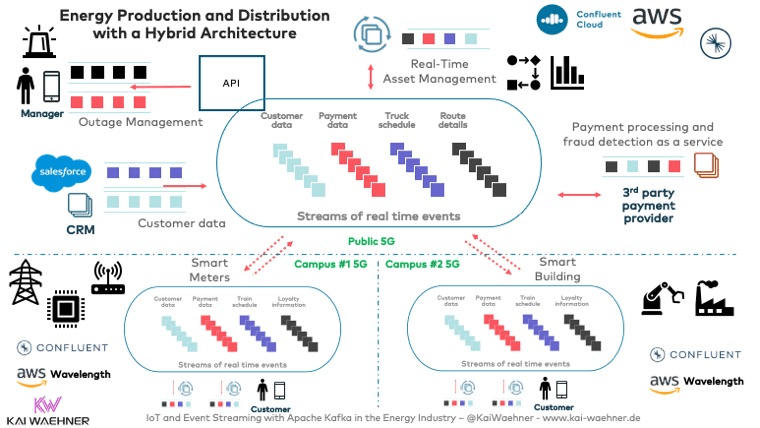

Smart Grid-Energy Production & Distribution

A Smart Grid is an advanced electricity network that efficiently integrates the behavior and actions of all stakeholders. Technical challenges of load adjustment, pricing, and peak leveling in smart grids can’t be handled by traditional methods.

One key challenge in managing a smart grid is handling the massive influx of streaming data from millions of devices and sensors spread across the grid. A smart grid needs a cloud-native infrastructure that is flexible, scalable, elastic, and reliable. Real-time data integration and processing are also needed in these smart setups.

That's why an increasing number of energy companies are turning to event streaming with the Kafka data pipeline and its ecosystem. With Kafka, we have a high-throughput, fault-tolerant, and scalable platform that acts as a central nervous system for the smart grid.

Whether it's managing the massive influx of data, enabling real-time decision-making, or empowering advanced analytics, Kafka data pipelines make smart grids truly smart.

Healthcare

Kafka is playing an important role in enabling real-time data processing and automation across the healthcare value chain. Kafka's ability to offer a decoupled and scalable infrastructure improves the functionality of healthcare systems. It allows unimpeded integration of diverse systems and data formats.

Let's explore how Kafka is used in healthcare:

- Use Cases in Healthcare: Kafka finds applications for pharmaceuticals, health cybersecurity, patient care, and insurance.

- Real-time Data Processing: Kafka's real-time data processing revolutionizes healthcare by improving efficiency and reducing risks.

- Real-World Deployments: Many healthcare companies are already using Kafka for data streaming. Examples include Optum/UnitedHealth Group, Centene, Bayer, Cerner, Recursion, and Care.com, among others.

Fraud Detection

Kafka plays a central role in many modern anti-fraud management systems. By processing data in real time, Kafka helps detect and respond to fraudulent activities promptly, avoiding revenue loss and improving customer experience.

Traditional approaches that rely on batch processing and analyzing data at rest are often too slow for fraud prevention. Kafka's data pipeline capabilities, including Kafka Streams, ksqlDB, and integration with machine learning frameworks, allow you to analyze data in motion and identify anomalies and patterns indicative of fraud in real time.
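One simple form of analyzing data in motion is a velocity check: flag a card when it is used too many times within a short window. The sketch below is a hypothetical rule written in plain Python, not a Kafka Streams or ksqlDB job, and the thresholds are made up for illustration.

```python
from collections import defaultdict, deque
from typing import Deque, Dict, List, Tuple


def flag_bursts(
    events: List[Tuple[float, str]], window_seconds: float, max_events: int
) -> List[Tuple[float, str]]:
    """Return the events that push a card above max_events within the window."""
    recent: Dict[str, Deque[float]] = defaultdict(deque)
    flagged: List[Tuple[float, str]] = []
    for ts, card in events:  # events are assumed to be sorted by timestamp
        times = recent[card]
        times.append(ts)
        # Evict timestamps that have fallen out of the sliding window.
        while times and ts - times[0] > window_seconds:
            times.popleft()
        if len(times) > max_events:
            flagged.append((ts, card))
    return flagged


# (timestamp in seconds, card id)
transactions = [
    (0.0, "A"), (10.0, "A"), (20.0, "A"), (25.0, "B"), (30.0, "A"), (200.0, "A"),
]
suspicious = flag_bursts(transactions, window_seconds=60.0, max_events=3)
```

Because the check runs as each event arrives, a suspicious burst can be flagged within the same window in which it happens instead of in a later batch job.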

Here we have some real-world deployments in different industries:

- PayPal uses Kafka on transactional data for fraud detection.

- ING Bank has implemented real-time fraud detection using Kafka, Flink, and embedded analytic models.

- Kakao Games, a South Korean gaming company, employs a data streaming pipeline and Kafka platform to detect and manage anomalies across 300+ patterns.

- Capital One leverages stream processing to prevent an average of $150 of fraud per customer annually by detecting and preventing personally identifiable information violations in real-time data streams.

Supply Chain Management

Supply Chain Management (SCM) optimizes the flow of goods and services across many interconnected processes. Apache Kafka helps address several of the challenges that modern supply chains face.

Kafka provides global scalability, a cloud-native architecture, data integration, and real-time analytics capabilities. With these features, organizations can overcome challenges such as:

- Rapid change

- Lack of visibility

- Outdated models

- Shorter time frames

- Integration of diverse tech

Let's see some real-world cases of Kafka data pipeline solutions in the supply chain.

- BMW has developed a Kafka-based robust NLP service framework that enables smart information extraction.

- Walmart has successfully implemented a real-time inventory system by using Kafka data pipeline services.

- Porsche also uses Kafka data streams for warranty and sales, manufacturing and supply chain, connected vehicles, and charging stations.

Modern Automotive Industry & Challenges

The modern automotive industry is all about agility, innovation, and pushing the boundaries of what's possible. Hundreds of robots generate an enormous amount of data at every step of the manufacturing process, and Kafka's data pipelines provide the fuel that powers these advancements.

Kafka's lightning-fast data pipelines enable manufacturers to monitor and optimize their production processes like never before. Real-time data from sensors, machines, and systems is streamed into Kafka, providing invaluable insights into manufacturing operations. With Kafka, manufacturers can detect anomalies and bottlenecks immediately. They can identify and resolve issues before they become major problems.

But it doesn't end there. Kafka's real-time data streaming capabilities also extend beyond the factory floor and into the vehicles themselves. By using Kafka's data pipelines, automakers gather a wealth of information and turn it into actionable insights. They can analyze driving patterns to improve fuel efficiency, enhance safety features, and even create personalized user experiences.

Kafka’s Advantages - Without the Engineering Overhead

Apache Kafka has long been a pillar of real-time data infrastructure. With its pub/sub architecture, high throughput, and now, a simplified KRaft-based core in version 4.0, it continues to power mission-critical systems across industries.

But despite its strengths, Kafka’s architecture—topics, brokers, replication, schema management, and consumer groups—is notoriously hard to set up and scale. Running a production-grade Kafka pipeline still demands a robust, specialized engineering team.

Enter Estuary: Kafka Power, Minus the Complexity

Estuary gives you everything you love about Kafka-style streaming, without having to build and manage Kafka itself.

It’s a fully managed, cloud-native data movement platform built on top of a high-performance event broker (like Kafka), designed to remove the operational burden while expanding what’s possible with streaming pipelines.

With Estuary, You Get:

- No infrastructure management — no ZooKeeper, no KRaft clusters, no brokers to babysit

- Built-in connectors to dozens of source and destination systems (Kafka included!)

- Exactly-once semantics, real-time replication, and schema validation out of the box

- Powerful SQL-like transformations to enrich and shape your data midstream

- Web UI + API + CLI workflows that scale with your team

Real-World Examples: Estuary in Action

Here’s how Estuary replicates and improves on Kafka pipelines:

- Already using Kafka? Use Estuary to bridge Kafka with downstream systems like Snowflake or BigQuery in real time, which is great for ML models, dashboards, and fraud analytics.

- Need real-time transformations? Estuary supports inline deduplication, type casting, key reshaping, and filtering, with no need for Kafka Streams or ksqlDB setup.

- Streaming to cloud storage or a data lake? Materialize Kafka streams directly into Amazon S3, Google Cloud Storage, or Apache Iceberg with built-in connectors.

- Need observability and debugging tools? Monitor end-to-end delivery, track schema changes, and view stream health, all from Estuary’s unified dashboard.

TL;DR: You get the reliability, speed, and stream-first architecture of Kafka — but with a modern, managed interface and broader integrations.

⚡ Build your first pipeline in minutes. Start free or book a demo.

Conclusion

Apache Kafka remains one of the most powerful and scalable architectures for real-time data pipelines. Its event-driven design enables organizations to ingest, process, and deliver high-throughput data with fault tolerance and reliability, making it a critical piece of modern data infrastructure.

With the release of Kafka 4.0, the platform has become even more streamlined thanks to its new KRaft-based architecture, which removes the ZooKeeper dependency and reduces operational complexity.

But even with these improvements, managing Kafka at scale still requires significant engineering investment.

That’s where Estuary comes in.

Estuary offers the power of Kafka-style pipelines in a fully managed, low-code platform — no brokers, no coordination layers, no operational headaches. In minutes, you can deploy robust, real-time pipelines across your data ecosystem and start unlocking actionable insights.

👉 Ready to simplify your data streaming strategy? Start free or book a demo to see what Estuary can do for you.

About the author

Jeffrey is a data engineering professional with over 15 years of experience, helping early-stage data companies scale by combining technical expertise with growth-focused strategies. His writing shares practical insights on data systems and efficient scaling.