Stream data from Dropbox to MySQL

Sync your Dropbox data with MySQL in minutes using Estuary for real-time, no-code integration and seamless data pipelines.

- No credit card required

- 30-day free trial

- 200+ connectors

- 5,500+ active users

- <100 ms end-to-end latency

- 7+ GB/sec single dataflow

Dropbox connector details

The Dropbox connector captures data from a specified Dropbox folder into Estuary collections, enabling automated ingestion of structured and semi-structured files for analysis and downstream use.

- Folder-based ingestion: Continuously reads and captures files from a designated Dropbox folder into Flow, keeping data pipelines updated as new files are added.

- Multi-format support: Automatically detects and parses multiple file types, including Avro, CSV, JSON, Protobuf, and W3C Extended Log formats.

- Automatic compression handling: Supports ZIP, GZIP, and ZSTD compression with optional manual override for custom configurations.

- Custom parsing control: Advanced users can explicitly define parsing rules such as delimiter type, headers, compression, and encoding for CSV or other formats.

- Efficient incremental sync: The optional Ascending Keys mode improves performance by reading only new files added since the last sync, based on lexicographic key order.

- Secure authentication: Uses OAuth2 for account access, automatically managed within the Flow web app.

💡 Tip: For the fastest incremental syncs, enable Ascending Keys only if your Dropbox data is organized with timestamped or sequentially ordered filenames.
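To see why filename ordering matters for Ascending Keys, here is a minimal conceptual sketch in Python. It is not Estuary's implementation: the function `new_files_since`, the sample file keys, and the checkpoint value are all hypothetical, and the sketch only assumes that "new" means "sorts after the last key read" in lexicographic order.

```python
from bisect import bisect_right


def new_files_since(sorted_keys, last_key):
    """Return the keys that sort strictly after last_key (lexicographic order).

    Hypothetical helper for illustration only; sorted_keys must already be sorted.
    """
    if last_key is None:
        # No checkpoint yet: every file counts as new.
        return list(sorted_keys)
    return sorted_keys[bisect_right(sorted_keys, last_key):]


# Hypothetical folder listing with date-stamped filenames.
keys = sorted([
    "exports/2024-01-01.csv",
    "exports/2024-01-02.csv",
    "exports/2024-01-03.csv",
])
checkpoint = "exports/2024-01-02.csv"  # last key read by the previous sync

print(new_files_since(keys, checkpoint))

# A file that arrives later but sorts BEFORE the checkpoint is never picked up,
# which is why this mode only suits ordered filenames.
late = sorted(keys + ["exports/2023-12-31.csv"])
print(new_files_since(late, checkpoint))
```

Both calls return only `exports/2024-01-03.csv`. The late-arriving `2023-12-31` file is skipped in the second call, which illustrates the trade-off described in the tip above.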

MySQL connector details

The Estuary MySQL connector materializes Flow collections into MySQL tables for downstream analytics or application use. It supports standard and delta update modes, ensuring efficient incremental writes. The connector works with both self-hosted and managed MySQL services like Amazon RDS, Google Cloud SQL, and Azure Database for MySQL, with optional SSH tunneling for secure connectivity.

- Writes Flow collections to MySQL tables in real time

- Supports standard and delta updates for optimized performance

- Compatible with RDS, Cloud SQL, and Azure Database for MySQL

- Handles date-time normalization and time zone alignment automatically

- Enables secure connections via SSL or SSH tunneling
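The difference between standard and delta updates can be pictured with a small Python sketch. This is a conceptual illustration under simplifying assumptions, not the connector's actual code: the functions `apply_standard` and `apply_delta`, the `id` key, and the sample documents are hypothetical, and the standard mode is modeled as a plain last-write-wins upsert.

```python
def apply_standard(table, docs, key="id"):
    """Standard-style update (assumed model): keep one row per key, latest wins."""
    for doc in docs:
        table[doc[key]] = doc
    return table


def apply_delta(rows, docs):
    """Delta-style update (assumed model): append every document as a new row."""
    rows.extend(docs)
    return rows


# Hypothetical documents arriving from a collection.
changes = [
    {"id": 1, "status": "new"},
    {"id": 2, "status": "new"},
    {"id": 1, "status": "done"},
]

standard_table = apply_standard({}, changes)
delta_rows = apply_delta([], changes)

print(len(standard_table))  # one row per key
print(len(delta_rows))      # one row per change
```

In this model the standard table ends with two rows (one per `id`), while the delta table ends with three (one per change). Which mode fits depends on whether the destination table should hold current state or a log of changes.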

How to integrate Dropbox with MySQL in 3 simple steps using Estuary

Connect Dropbox as Your Real-Time Data Source

Set up a real-time source connector for Dropbox in minutes. Estuary captures change data (CDC), events, or snapshots — no custom pipelines, agents or manual configs needed.

Configure MySQL as Your Target

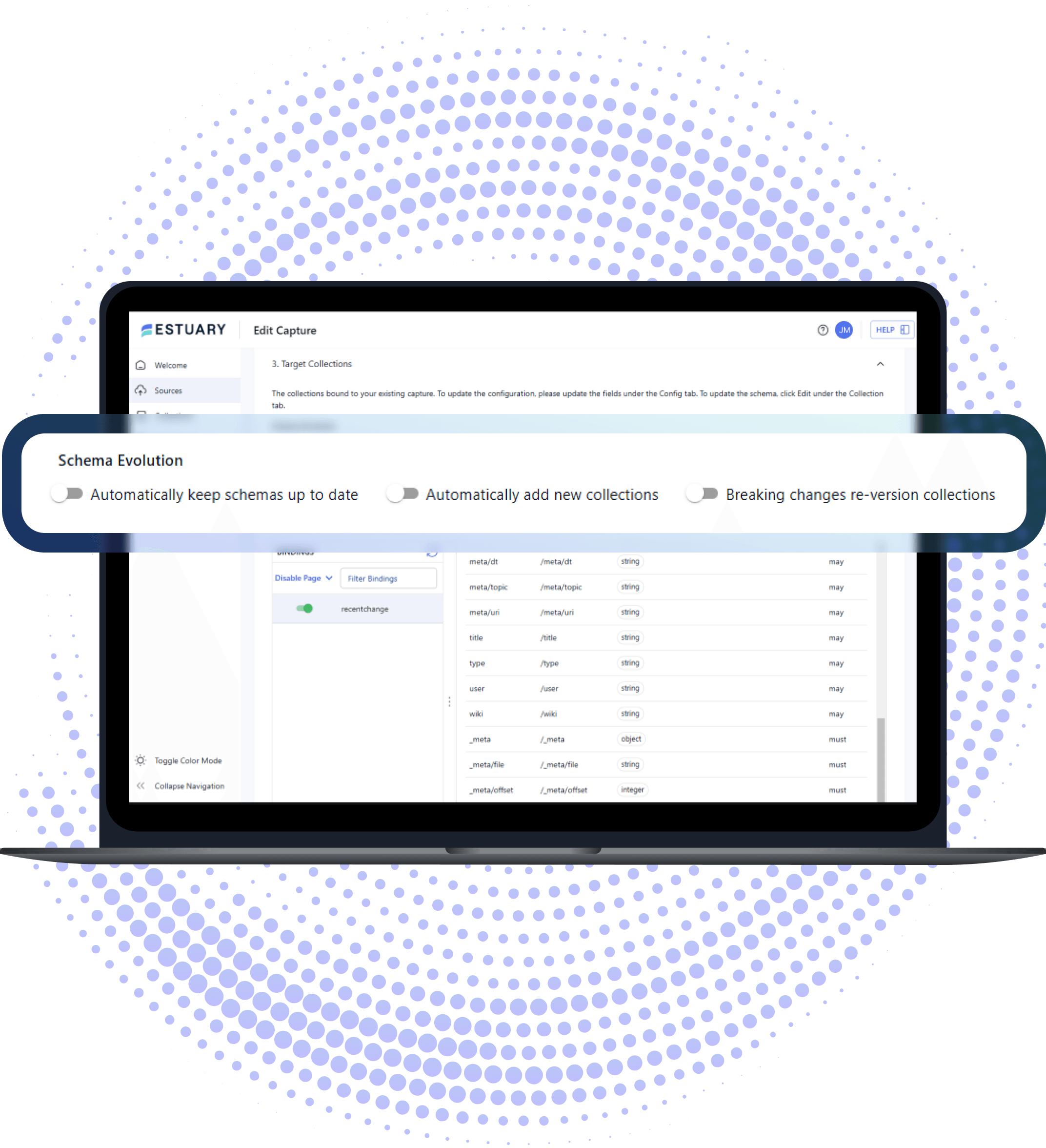

Choose MySQL as your target system. Estuary intelligently maps schemas, supports both batch and streaming loads, and adapts to schema changes automatically.

Deploy and Monitor Your End-to-End Data Pipeline

Launch your pipeline and monitor it from a single UI. Estuary guarantees exactly-once delivery, handles backfills and replays, and scales with your data — without engineering overhead.

Estuary in action

See how to build end-to-end pipelines using no-code connectors in minutes. Estuary does the rest.

Why Estuary is the best choice for data integration

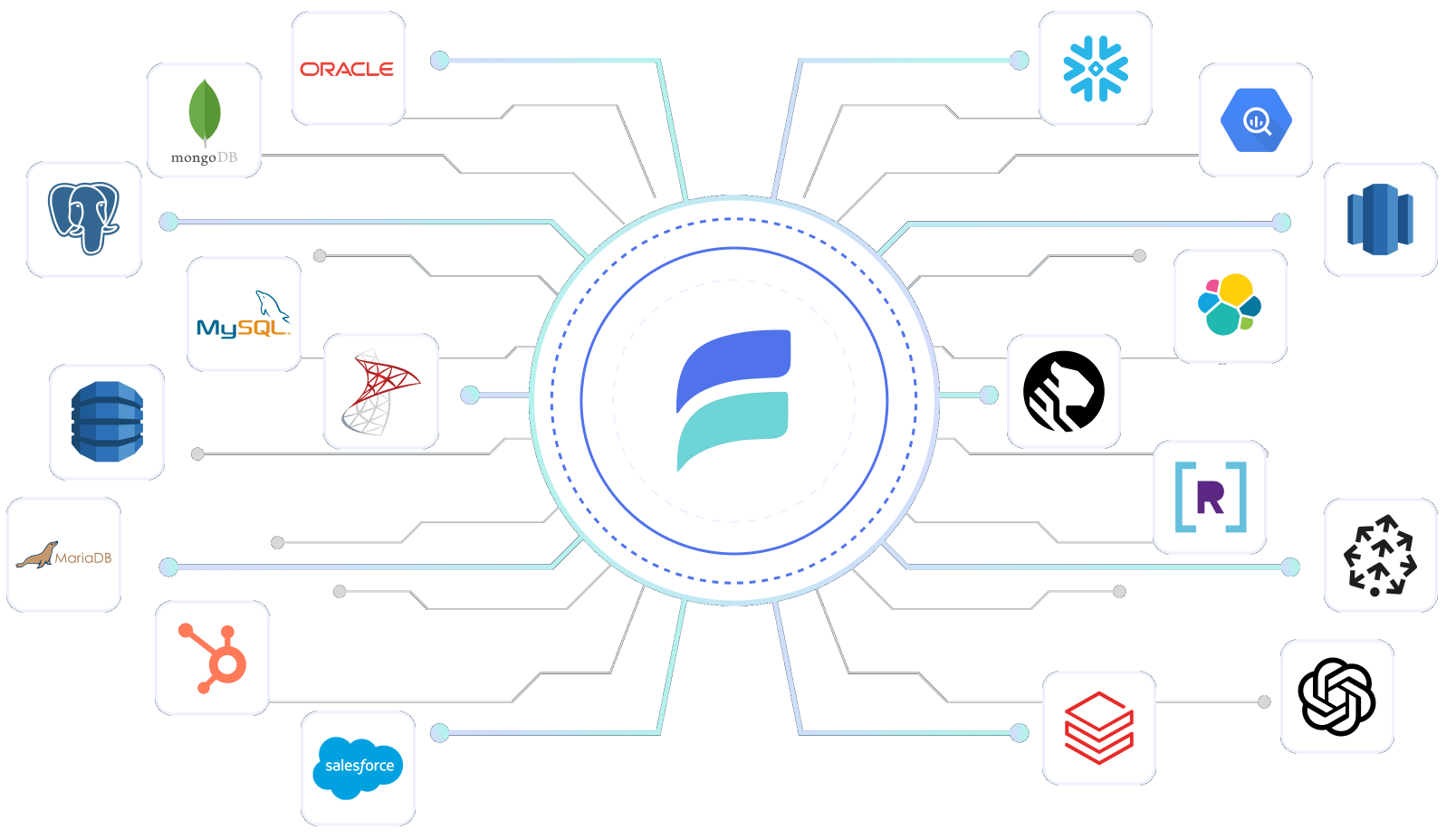

Estuary combines real-time streaming, change data capture (CDC), and batch connectors in a single, unified data pipeline.

What customers are saying

Increase productivity 4x

With Estuary, companies increase productivity 4x and deliver new projects in days, not months. Spend much less time troubleshooting and much more time building new features. Estuary decouples sources and destinations so you can add and change systems without impacting others, and share data across analytics, apps, and AI.

Spend 2-5x less

Estuary customers not only get 4x more done; they also spend 2-5x less on ETL and ELT. Estuary's ability to mix and match streaming and batch loading has also helped customers save as much as 40% on data warehouse compute costs.

Dropbox to MySQL pricing estimate

Estimated monthly cost to move 800 GB from Dropbox to MySQL is approximately $1,000.

Why pay more?

Move the same data for a fraction of the cost.

Frequently Asked Questions

What is Dropbox?

How do I transfer data from Dropbox?

- Set Up Capture: In Estuary, go to Sources, click + NEW CAPTURE, and select the Dropbox connector.

- Enter Details: Add your Dropbox connection details and click SAVE AND PUBLISH.

- Materialize Data: Go to Destinations, choose your target system, link the Dropbox capture, and publish.

What are the pricing options for Estuary?

Estuary offers competitive and transparent pricing, with a free tier that includes 2 connector instances and up to 10 GB of data transfer per month. Explore our pricing options to see which plan fits your data integration needs.

Getting started with Estuary

Free account

Getting started with Estuary is simple. Sign up for a free account.

Sign up

Docs

Make sure you read through the documentation, especially the get started section.

Learn more

Community

I highly recommend you also join the Slack community. It's the easiest way to get support while you're getting started.

Join Slack Community

Estuary 101

Watch

DataOps made simple

Add advanced capabilities like schema inference and evolution with a few clicks. Or automate your data pipeline and integrate into your existing DataOps using Estuary's rich CLI.