Stream data from Airtable to Amazon S3 CSV

Move data from Airtable to Amazon S3 CSV in minutes using Estuary. Stream, batch, or continuously sync data with control over latency from sub-second to batch.

- No credit card required

- 30-day free trial

- 200+ connectors

- 5500+ active users

- <100ms end-to-end latency

- 7+ GB/sec single dataflow

How to integrate Airtable with Amazon S3 CSV in 3 simple steps

Connect Airtable as your data source

Set up a source connector for Airtable in minutes. Estuary supports streaming (including CDC where available) and batch data capture through events, incremental syncs, or snapshots — without custom pipelines, agents, or manual configuration.

Configure Amazon S3 CSV as your destination connector

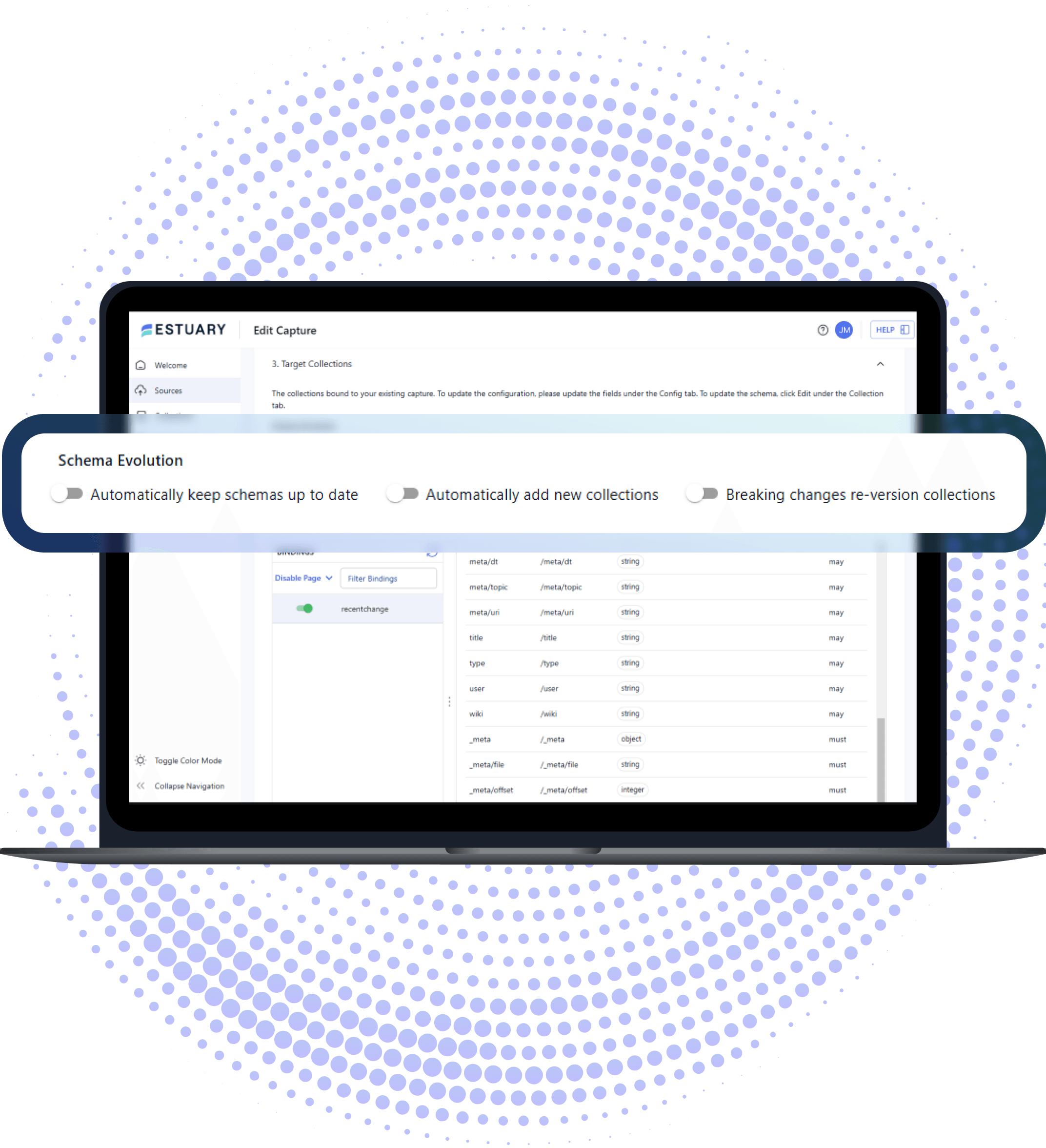

Estuary supports intelligent schema handling, with schema inference and evolution tools that help align source and destination structures over time. It supports both batch and streaming data movement, reliably delivering data to Amazon S3 CSV.

Deploy and monitor your end-to-end data pipeline

Launch your pipeline and monitor it from a single UI. Estuary guarantees exactly-once delivery, handles backfills and replays, and scales with your data — without engineering overhead.

Airtable connector details

- Log-based CDC for high-performance, low-impact data capture

- Automatic schema evolution to handle changes in source structure without manual intervention

- Unified streaming and batch ingestion in the same pipeline

- Hybrid deployment and BYOC support for security and control

- Fault-tolerant pipelines that resume automatically from the last checkpoint

- Kafka API connectivity for direct integration into streaming ecosystems

Amazon S3 CSV connector details

The Amazon S3 CSV connector exports Flow collection data as compressed CSV files into your S3 bucket, offering a reliable and scalable way to persist real-time updates in an analytics-friendly format.

- Delta-based materialization: Writes only changed records (delta updates) from Flow collections as CSV files, efficiently keeping your data lake up to date.

- Configurable batching: Aggregates changes in Flow and uploads them to S3 at a defined interval, with support for custom file size limits.

- Flexible authentication: Supports both AWS access keys and IAM roles for secure access management.

- Structured file naming: Automatically organizes files with versioned, lexically sortable naming for easy tracking and replay.

- Customizable storage paths: Lets you define prefixes and per-collection paths for better data organization.

- Compatible and extensible: Can also connect to S3-compatible APIs using a custom endpoint if needed.

💡 Tip: Use shorter upload intervals for near-real-time analytics, or increase them to reduce storage and API costs when dealing with large batch updates.
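As a rough illustration of how a downstream job might consume delta files with lexically sortable names, the sketch below folds a set of files into the latest row per key. It rests on several assumptions that are not confirmed here: that the files are gzip-compressed, that each file has a header row, and that an `id` column identifies a record. The object keys and the `replay_delta_files` and `make_file` helpers are hypothetical.

```python
import csv
import gzip
import io


def replay_delta_files(files, key_column="id"):
    """Fold delta CSV files into the latest row per key.

    `files` maps object key -> gzip-compressed CSV bytes (assumed format).
    Keys are processed in lexical order, so later files overwrite earlier ones.
    """
    latest = {}
    for name in sorted(files):
        text = gzip.decompress(files[name]).decode("utf-8")
        for row in csv.DictReader(io.StringIO(text)):
            latest[row[key_column]] = row  # the most recent delta wins
    return latest


def make_file(rows):
    """Build one gzip-compressed CSV payload for the demo below."""
    buf = io.StringIO()
    writer = csv.DictWriter(buf, fieldnames=["id", "status"])
    writer.writeheader()
    writer.writerows(rows)
    return gzip.compress(buf.getvalue().encode("utf-8"))


# Hypothetical object keys, listed out of order on purpose.
files = {
    "tasks/v1/00000001.csv.gz": make_file([{"id": "a", "status": "open"}]),
    "tasks/v1/00000000.csv.gz": make_file(
        [{"id": "a", "status": "new"}, {"id": "b", "status": "new"}]
    ),
}
state = replay_delta_files(files)
print(state["a"]["status"], state["b"]["status"])
```

In a real job the bytes would come from S3 object reads rather than an in-memory dict; the sorting and folding logic would stay the same.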

Spend 2-5x less

Estuary customers not only do 4x more, they also spend 2-5x less on ETL and ELT. Estuary's ability to mix and match streaming and batch loading has also helped customers save as much as 40% on data warehouse compute costs.

Airtable to Amazon S3 CSV pricing estimate

Estimated monthly cost to move 800 GB from Airtable to Amazon S3 CSV is approximately $1,000.

Why pay more?

Move the same data for a fraction of the cost.

Estuary in action

See how to build end-to-end pipelines using no-code connectors in minutes. Estuary does the rest.

What customers are saying

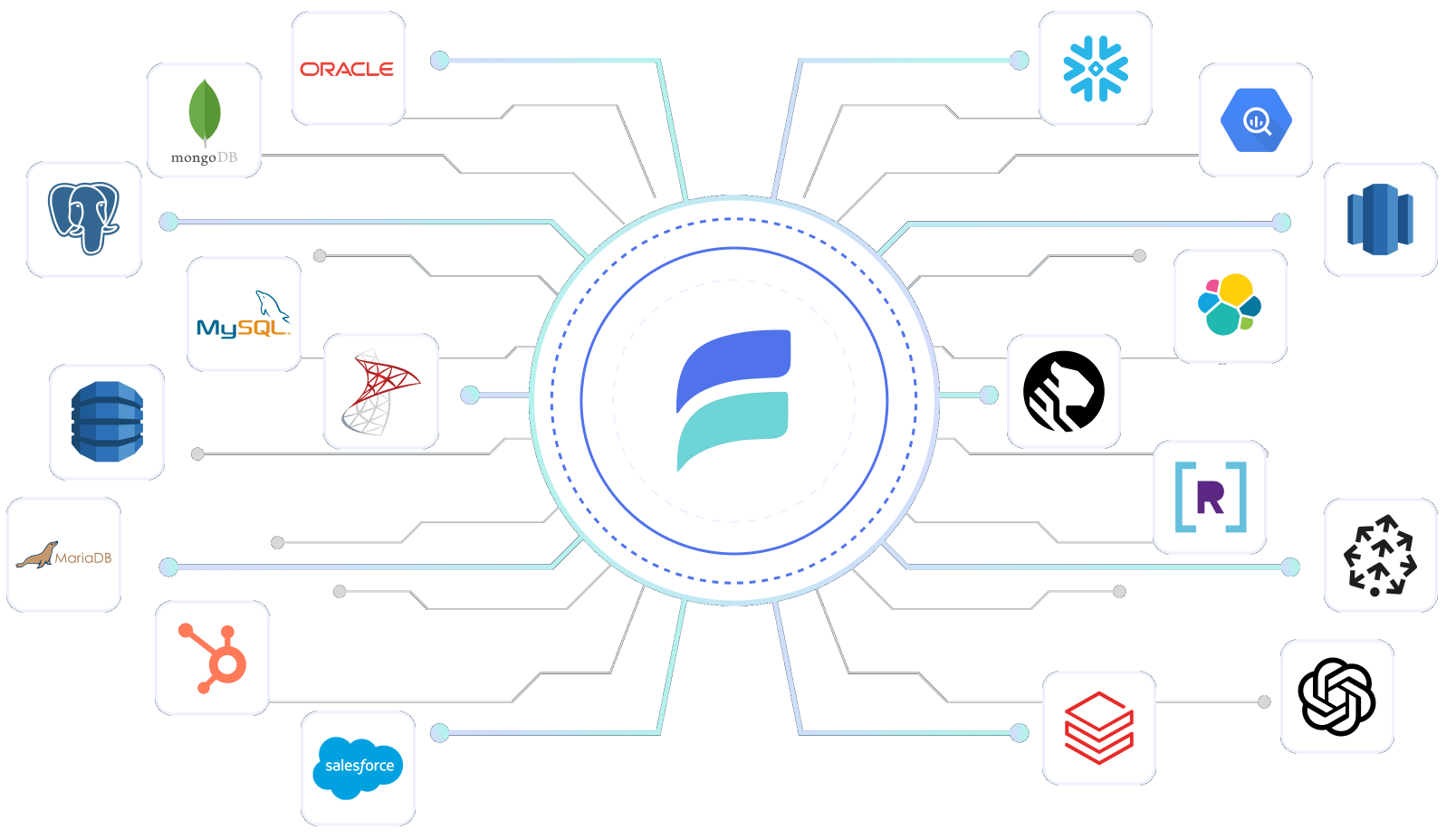

Why Estuary is the best choice for data integration

Estuary combines streaming and batch data movement capabilities into a unified modern data pipeline. This approach simplifies building and operating pipelines like Airtable to Amazon S3 CSV without custom code or orchestration.

Increase productivity 4x

With Estuary, companies increase productivity 4x and deliver new projects in days, not months. Spend less time troubleshooting and more time building new features. Estuary decouples sources and destinations so you can add and change systems without impacting others, and share data across analytics, apps, and AI.

Getting started with Estuary

Free account

Getting started with Estuary is simple. Sign up for a free account.

Sign up

Docs

Make sure you read through the documentation, especially the get started section.

Learn more

Community

I highly recommend you also join the Slack community. It's the easiest way to get support while you're getting started.

Join Slack Community

Estuary 101

Watch

Frequently Asked Questions

Is this integration suitable for production workloads?

Yes. Estuary pipelines are designed for production use, with exactly-once delivery semantics, automated backfills, and continuous operation at scale.

Can I control where my data runs and is processed?

Yes. Estuary offers multiple deployment options, including fully managed SaaS, private deployments, and bring-your-own-cloud (BYOC). This allows teams to control where their data plane runs and meet security, compliance, and networking requirements. Learn more about Estuary's security and deployment options.

Can I build this Airtable to Amazon S3 CSV integration manually?

Yes, it's possible to build a manual pipeline using custom scripts, scheduled jobs, or open-source tools. However, manual approaches typically require ongoing maintenance, custom error handling, schema management, and operational overhead. Estuary simplifies this by providing a managed pipeline with built-in reliability, scaling, and monitoring.
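To give a sense of what the manual route involves, here is a minimal sketch of its first two steps: paging through records and flattening them to CSV. It assumes records arrive as dicts with an `id` and a `fields` mapping, and that each page carries an `offset` value when more pages remain. The `fetch_page` callable is a hypothetical stand-in for real HTTP calls; uploading to S3, scheduling, retries, and schema changes are all left out.

```python
import csv
import io


def fetch_all_records(fetch_page):
    """Collect records from a paginated API.

    `fetch_page(offset)` is a hypothetical callable returning a dict with a
    "records" list and, when more pages remain, an "offset" value.
    """
    records, offset = [], None
    while True:
        page = fetch_page(offset)
        records.extend(page.get("records", []))
        offset = page.get("offset")
        if not offset:
            return records


def records_to_csv(records):
    """Flatten records into CSV text, using the union of all field names."""
    columns = sorted({name for r in records for name in r["fields"]})
    buf = io.StringIO()
    writer = csv.DictWriter(buf, fieldnames=["id"] + columns, restval="")
    writer.writeheader()
    for r in records:
        writer.writerow({"id": r["id"], **r["fields"]})
    return buf.getvalue()


# Stand-in for real HTTP calls: two fake pages keyed by offset.
pages = {
    None: {"records": [{"id": "rec1", "fields": {"Name": "Ada"}}], "offset": "p2"},
    "p2": {"records": [{"id": "rec2", "fields": {"Name": "Lin", "Team": "Ops"}}]},
}
csv_text = records_to_csv(fetch_all_records(pages.get))
print(csv_text)
```

Even in this small sketch, records with differing fields force a decision about the column set, which is one of the schema management costs mentioned above.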

DataOps made simple

Add advanced capabilities like schema inference and evolution with a few clicks. Or automate your data pipeline and integrate into your existing DataOps using Estuary's rich CLI.