Organizations often use HubSpot to manage their marketing, sales, operations, and customer support. However, all this data provides better insights when combined to create a single source of truth. By transferring data from HubSpot to BigQuery, a powerful data warehouse, businesses can perform advanced data analysis, driving informed decision-making.

In this guide, we’ll explore the top methods to connect HubSpot to BigQuery, ensuring you can choose the most efficient approach for your business needs.

Want the fastest, real-time way to sync HubSpot to BigQuery — no code required? Jump to the Estuary method →

Quick Summary

This guide walks you through four effective ways to move HubSpot data to BigQuery, depending on your integration needs:

- HubSpot Private App — Use HubSpot’s private app authentication to fetch CRM data via API and manually load it into BigQuery.

- Manual CSV Export/Import — Export HubSpot data as CSV and load it manually into BigQuery using the web UI or command line.

- Custom Integration — Build your own pipeline using code, cloud functions, and HubSpot/BigQuery APIs.

- Estuary (Real-Time, No Code) — Use a fully managed, native HubSpot connector that streams data into BigQuery in real time, with no scripts or maintenance required.

Whether you’re an analyst or a data engineer, this guide will help you pick the best method to unify your customer data and power deeper analytics.

What is HubSpot?

HubSpot is a cloud-based platform that helps organizations streamline sales, marketing, support, and more. The platform is organized into five hubs (Marketing, Sales, Service, CMS, and Operations), each targeting a different aspect of your business.

Features of HubSpot Hubs

- HubSpot Marketing hub: Helps you collect leads, automate marketing campaigns, and more.

- HubSpot Sales hub: Enables you to automate sales pipelines, get a 360° view of contacts, and more.

- HubSpot Service hub: Allows you to add a knowledge base, collect customer feedback, and generate support tickets.

- HubSpot CMS hub: Assists you in building websites with a drag-and-drop editor, optimizing SEO strategies, and more.

- HubSpot Operations hub: Helps you sync data across different platforms, improve data quality, and more.

What is BigQuery?

BigQuery is Google’s fully-managed, serverless, cloud-based enterprise data warehouse. BigQuery is built on Dremel technology. Dremel turns SQL queries into execution trees. This tree architecture model makes for easy and efficient querying and aggregating results.

BigQuery supports columnar storage to provide superior analytics query performance. For analytics, you can either run simple SQL queries or connect BigQuery with business intelligence tools.

BigQuery is built to manage petabyte-scale analytics. Hence, it can collect vast amounts of data from different sources to support your big data analytics.

Features of BigQuery

- BigQuery Data Transfer: The BigQuery Data Transfer Service automates data movement into BigQuery on a schedule you define.

- BigQuery ML: You can use simple SQL queries to create and execute ML models in BigQuery.

- BigQuery BI Engine: An in-memory analysis service that accelerates SQL queries, so large queries behind dashboards and reports can return in seconds.

- BigQuery GIS: The Geographic Information Systems (GIS) feature provides information about location and mapping through geospatial analysis.

Why Move Data From HubSpot to BigQuery?

Moving data from HubSpot to BigQuery brings several benefits:

- Data Analytics: When you move HubSpot data to BigQuery tables, you can create a single source of truth for analytics requirements. It helps you generate insights into your customer and business operations data for better decision-making.

- Scalability: BigQuery can handle massive amounts of data. As your business generates more data, you can easily accommodate it on BigQuery.

- Security and Compliance: BigQuery, built on Google Cloud, offers security and compliance features, like auditing, access controls, and data encryption. When you move your data to BigQuery, it’s stored securely and in compliance with regulations.

- Cost Savings: BigQuery offers a flexible pricing model. You pay only for the storage and processing power you use, which can cost significantly less than maintaining your own on-premises data warehouse.

Here’s a list of the HubSpot data you can back up to BigQuery:

- Activity data (clicks, views, opens, URL redirects, etc.)

- Calls-to-action (CTA) analytics

- Contact lists

- CRM data

- Customer feedback

- Form submission data

- Marketing emails

- Sales data

How to Connect HubSpot to BigQuery (4 Methods)

Now that you understand the value of both platforms, let’s dive into how to connect them. There are four primary ways to move data from HubSpot to BigQuery, ranging from manual methods to fully automated real-time pipelines.

Before choosing a method, make sure your accounts and environments are properly set up.

Prerequisites for Any HubSpot to BigQuery Integration

Before starting any of the methods below, ensure you have the following:

- A Google Cloud Platform (GCP) account with billing enabled

- A HubSpot account with admin access

- The BigQuery API enabled within your GCP project

- BigQuery dataset and table creation permissions

- Google Cloud SDK installed (for CLI-based tasks)

- (For OAuth-based tools like Estuary) A HubSpot account you can sign in to when authorizing OAuth2 access

- (For custom integrations) API credentials and access tokens from HubSpot

With these in place, you’re ready to explore the integration options.

Method #1: HubSpot Private App for Moving Data to BigQuery

One of the most reliable methods for transferring data from HubSpot to BigQuery is through HubSpot’s private app integration.

Steps to Transfer HubSpot Data to BigQuery Using HubSpot’s Private App:

1. Create a Private App

Previously, internal integrations authenticated with a HubSpot API key, which granted read and write access to all of your HubSpot CRM data. HubSpot has since discontinued API keys as a way to authenticate against its APIs.

As part of that mandatory migration, all API keys were deactivated and existing API key integrations were migrated to private apps. You now call HubSpot’s APIs through a private app, which can access only the specific data you grant it.

Once you’ve authorized what each private app can request or change in your account, a unique access token gets generated for your app.

- Log in to your HubSpot account. Click on the Settings icon in the main navigation bar.

- From the left sidebar menu, navigate to Integrations → Private Apps.

- Click on Create a private app.

- On the Basic Info tab, provide basic app details:

- Enter an app name or click on Generate a new random name.

- Hover over the logo placeholder and click the upload icon. You can upload a square logo unique to your app.

- Enter your app’s description.

- Click on the Scopes tab.

- For each scope you’d like to enable for your private app’s access, select the Read or Write checkbox. To search for a specific scope, go to the Find a Scope search bar.

- When you have finished configuring the app, click Create app at the top right.

- A dialog box will appear. Review the information about the access token, then click on Continue creating.

After creating your app, you can use the app’s access token to make API calls. To edit your app’s info or change its scopes later, click Edit details.

2. Generate Access Token:

- Once your private app is created, HubSpot will generate a unique access token for the app.

- To view the token, go to the app's details page and click Show token. Copy this token to authenticate future API requests.

3. Make API Calls Using the Access Token:

- Use HubSpot’s APIs with the access token to fetch data. HubSpot’s API endpoints return JSON data, which you can transform and load into BigQuery.

- For example, to retrieve all contacts, use this API request in Node.js:

```javascript
const axios = require('axios');

axios.get('https://api.hubapi.com/crm/v3/objects/contacts', {
  headers: {
    // Replace YOUR_TOKEN with the actual token from HubSpot
    Authorization: 'Bearer YOUR_TOKEN',
    'Content-Type': 'application/json'
  }
})
  .then(response => {
    // response.data holds the parsed JSON body returned by HubSpot
    console.log(response.data);
  })
  .catch(error => {
    console.error(error);
  });
```

- Note: The "Bearer" prefix is part of the OAuth protocol, and it tells the API that the value following it (YOUR_TOKEN) is the access token. Replace YOUR_TOKEN with the actual token you generated from HubSpot.
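A single request to the contacts endpoint returns only one page of results, so a full export has to follow the paging cursor. The sketch below shows one way to do that, assuming Node.js 18+ (for the global `fetch`) and a token stored in an environment variable named `HUBSPOT_TOKEN`. That variable name and the helper names `fetchContactsPage` and `fetchAllContacts` are illustrative, not part of HubSpot's API.

```javascript
// Sketch: page through the CRM v3 contacts endpoint until no cursor is returned.
// HUBSPOT_TOKEN is a hypothetical environment variable holding the private app token.
const BASE_URL = 'https://api.hubapi.com/crm/v3/objects/contacts';

// Fetch one page; `after` is the cursor taken from the previous page's paging.next.after.
async function fetchContactsPage(after, token = process.env.HUBSPOT_TOKEN) {
  const url = new URL(BASE_URL);
  url.searchParams.set('limit', '100');
  if (after) url.searchParams.set('after', after);
  const res = await fetch(url, { headers: { Authorization: `Bearer ${token}` } });
  if (!res.ok) throw new Error(`HubSpot request failed with status ${res.status}`);
  return res.json();
}

// Collect every contact. fetchPage is injectable so the loop can run without network access.
async function fetchAllContacts(fetchPage = fetchContactsPage) {
  const contacts = [];
  let after;
  do {
    const page = await fetchPage(after);
    contacts.push(...page.results);
    after = page.paging && page.paging.next ? page.paging.next.after : undefined;
  } while (after);
  return contacts;
}
```

Calling `fetchAllContacts()` with no arguments would hit the live API. Passing a stub function instead makes it easy to check the loop logic before pointing it at a real account.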

4. Set Up BigQuery Dataset:

- Create a new dataset in BigQuery to store the HubSpot data.

- Run the following command in your Google Cloud CLI:

```shell
bq mk --dataset hs_data
```

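- Next, create an empty table for your contacts data. Note that `--expiration 86400` tells BigQuery to delete the table automatically after 86,400 seconds (24 hours); omit the flag if the table should persist:

```shell
bq mk --table --expiration 86400 --description "Contacts table" hs_data.hs_contacts_table
```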

5. Load Data Into BigQuery:

- After fetching data from HubSpot, upload it to BigQuery. For example, if the contacts data is saved as newline-delimited JSON (one JSON object per line), use this command:

```shell
bq load --source_format=NEWLINE_DELIMITED_JSON hs_data.hs_contacts_table ./contacts_data.json ./contacts_schema.json
```

- Ensure the schema file is prepared to match the JSON data structure.
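HubSpot returns contacts as a JSON array of nested objects, while the load command above expects one flat JSON object per line plus a matching schema file. The following sketch shows one way to produce both files. It assumes each contact has the shape `{ id, properties, createdAt, updatedAt }` and keeps only three properties (`email`, `firstname`, `lastname`); the column list and helper names are illustrative choices, not requirements.

```javascript
const fs = require('fs');

// Properties kept in this sketch; adjust to whichever properties you request from HubSpot.
const COLUMNS = ['email', 'firstname', 'lastname'];

// Flatten one contact into a single-level row that maps cleanly onto table columns.
function toRow(contact) {
  const row = { id: contact.id, created_at: contact.createdAt, updated_at: contact.updatedAt };
  for (const name of COLUMNS) {
    const value = contact.properties ? contact.properties[name] : undefined;
    row[name] = value === undefined ? null : value;
  }
  return row;
}

// NEWLINE_DELIMITED_JSON means one JSON object per line, not a JSON array.
function toNdjson(contacts) {
  return contacts.map((c) => JSON.stringify(toRow(c))).join('\n') + '\n';
}

// A BigQuery JSON schema file is an array of { name, type, mode } entries.
function buildSchema() {
  return [
    { name: 'id', type: 'STRING', mode: 'REQUIRED' },
    { name: 'created_at', type: 'TIMESTAMP', mode: 'NULLABLE' },
    { name: 'updated_at', type: 'TIMESTAMP', mode: 'NULLABLE' },
    ...COLUMNS.map((name) => ({ name, type: 'STRING', mode: 'NULLABLE' })),
  ];
}

// Write both files to the paths used by the bq load command above.
function writeLoadFiles(contacts, dataPath = './contacts_data.json', schemaPath = './contacts_schema.json') {
  fs.writeFileSync(dataPath, toNdjson(contacts));
  fs.writeFileSync(schemaPath, JSON.stringify(buildSchema(), null, 2));
}
```

Keeping the column list in one place means the data file and the schema file cannot drift apart, which is a common cause of failed load jobs.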

6. Automate the Data Transfer:

- For ongoing data synchronization, create a script that fetches data regularly and loads it into BigQuery. You can automate the script using cron jobs or other scheduling tools.

- Example of scheduling a daily load at 6 PM:

```text
0 18 * * * /bin/scripts/backup.sh
```
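What the scheduled script runs is up to you. As a rough sketch, the load step it triggers could look like the Node.js helper below, which shells out to the `bq` CLI. It assumes `bq` is on the `PATH` and that the data and schema files already exist. The helper names are illustrative, and `--replace` is used here on the assumption that each run is a full refresh that should overwrite the previous day's table.

```javascript
const { execFileSync } = require('child_process');

// Build the argument list for `bq load`. With replace=true the table is overwritten on each run.
function buildBqLoadArgs({ table, dataPath, schemaPath, replace = true }) {
  const args = ['load', '--source_format=NEWLINE_DELIMITED_JSON'];
  if (replace) args.push('--replace');
  args.push(table, dataPath, schemaPath);
  return args;
}

// Run the load. execFileSync throws on a non-zero exit code, so a failed load fails the job.
// `exec` is injectable so the function can be checked without the bq CLI installed.
function runLoad(options, exec = execFileSync) {
  return exec('bq', buildBqLoadArgs(options), { stdio: 'inherit' });
}
```

A call such as `runLoad({ table: 'hs_data.hs_contacts_table', dataPath: './contacts_data.json', schemaPath: './contacts_schema.json' })` would then be the last step of the scheduled job, after the fetch and file-writing steps.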

Additional Features of HubSpot’s Private App:

- OAuth-Based Security: Private app access tokens are built on OAuth, and every request sends the token as a Bearer credential, so no account-wide API key is exposed.

- Monitoring and Error Handling: Implement error handling in your API calls and monitor them for data consistency.

- Scopes and Permissions: Choose specific scopes (e.g., read-only access) to control what data your app can interact with.

This method provides a secure and customizable way to transfer data from HubSpot to BigQuery by leveraging HubSpot’s API and Google Cloud tools.

Method #2: Move HubSpot Data to BigQuery Using Manual CSV Files

Among the easier options to move HubSpot data to BigQuery is to download and upload CSV files manually.

HubSpot lets you import and export data from your account. You can export the following records:

- Calls

- Contacts

- Companies

- Deals

- Payments

- Tickets

- Custom objects

When you export records, you can select the file format (XLS, XLSX, or CSV). You can also choose whether to include only the properties in the current view or all properties on the records. After the export runs, you’ll receive an email with a download link to your export file. These download links expire after 30 days.

Once you’ve downloaded the file, you can move it to your Google BigQuery platform.
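For the command-line route, the export's header row usually needs attention first, because labels such as "First Name" contain spaces. The sketch below is one possible approach, not a required step: it converts header labels into simple column names and composes a `bq load` command with an inline schema. It assumes you pass in the header labels yourself, loads every column as `STRING` for simplicity, and uses placeholder table and file names.

```javascript
// Turn an export header label such as "First Name" into a simple column name.
function toColumnName(label) {
  let name = label.trim().toLowerCase().replace(/[^a-z0-9]+/g, '_').replace(/^_+|_+$/g, '');
  // Prefix names that are empty or start with a digit so they begin with an underscore.
  if (name === '' || /^[0-9]/.test(name)) name = `_${name}`;
  return name;
}

// Build the inline schema string that `bq load` accepts: name:TYPE,name:TYPE,...
function toInlineSchema(labels) {
  return labels.map((label) => `${toColumnName(label)}:STRING`).join(',');
}

// Compose the full command. --skip_leading_rows=1 skips the CSV header row.
function csvLoadCommand(labels, table = 'hs_data.hs_contacts_table', file = './contacts.csv') {
  return `bq load --source_format=CSV --skip_leading_rows=1 ${table} ${file} ${toInlineSchema(labels)}`;
}

console.log(csvLoadCommand(['Email', 'First Name', 'Last Name']));
```

Two labels that reduce to the same column name would collide, so it is worth checking the generated schema before running the command. Columns can be cast from `STRING` to richer types later in SQL.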

Pros of moving data from HubSpot to BigQuery with manual CSV files

- It’s a relatively simple method.

- There is no entry barrier, and you can do it quickly.

Cons of moving data from HubSpot to BigQuery with manual CSV files

- Every time the data changes, you must export it from HubSpot and upload it to BigQuery again by hand.

- There is no continuous data flow.

Method #3: Build Custom Integrations for HubSpot to BigQuery Data Transfer

If your team is up to the task, you can build your own in-house data pipeline to move data from HubSpot to BigQuery. However, this involves spending a considerable amount of time to get the integration up and running. You also need to spend time, resources, and money to manage and maintain data pipelines.

HubSpot and BigQuery constantly change or update their APIs. This might translate into upstream or downstream failures unless properly addressed.

The custom integration option is worthwhile if your data needs are very specific to your business. However, this option is not a scalable process for syncing data to additional SaaS apps. It will require spending more time to build or maintain data pipelines. You must also take care of data security with custom integrations.
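Much of the maintenance burden in a custom pipeline comes from handling transient failures such as rate limiting (HTTP 429) or temporary server errors. As a minimal sketch, a retry wrapper with exponential backoff might look like this. It assumes the wrapped operation throws an error carrying a numeric `status` property, which is a convention of this example rather than something guaranteed by any particular HTTP client.

```javascript
// Default sleep based on setTimeout; injectable so callers can substitute their own.
const defaultSleep = (ms) => new Promise((resolve) => setTimeout(resolve, ms));

// Retry an async operation with exponential backoff on 429 and 5xx errors.
// Errors with any other status (or no status) are rethrown immediately.
async function withRetry(operation, { retries = 3, baseDelayMs = 500, sleep = defaultSleep } = {}) {
  for (let attempt = 0; ; attempt += 1) {
    try {
      return await operation();
    } catch (err) {
      const retryable = err.status === 429 || (err.status >= 500 && err.status < 600);
      if (!retryable || attempt >= retries) throw err;
      // Wait 500 ms, 1000 ms, 2000 ms, ... before the next attempt.
      await sleep(baseDelayMs * 2 ** attempt);
    }
  }
}
```

Any API call in the pipeline can then be wrapped as `withRetry(() => someRequest())`, where `someRequest` stands for whatever function performs the call. Retries cover only short-lived failures, so breaking API changes still need monitoring and code updates.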

Method #4: Use Estuary’s Real-Time HubSpot Connector (No Code)

If you're looking for the fastest, most reliable, and real-time way to move HubSpot data into BigQuery without writing a single line of code, Estuary is the solution.

Estuary provides a native, first-party HubSpot connector that streams changes from HubSpot in real time (as fast as 1 second) and writes them into BigQuery with sub-10-second latency. It’s built for modern analytics, trusted by engineering and marketing teams alike, and requires zero infrastructure setup.

Why Estuary?

Estuary stands out as the most efficient and future-proof way to sync HubSpot to BigQuery.

It enables real-time streaming, so you never have to wait hours for your reports to update. Data flows continuously from HubSpot into BigQuery, ensuring your dashboards, customer insights, and campaign metrics are always up to date.

The platform is completely no-code; you can build and deploy a pipeline from your browser in minutes. Just authenticate your HubSpot account, select the resources you want to sync, and connect BigQuery as your destination.

Estuary also offers secure OAuth2 authentication, automatic schema evolution, and fully managed infrastructure, so you never have to worry about API rate limits, failed scripts, or data consistency. Whether you're centralizing marketing data, powering real-time dashboards, or enriching a data lake, Estuary gives you a powerful foundation for real-time analytics without the engineering lift.

Steps to Use Estuary for HubSpot to BigQuery Integration:

Step 1: Sign In or Register to Estuary

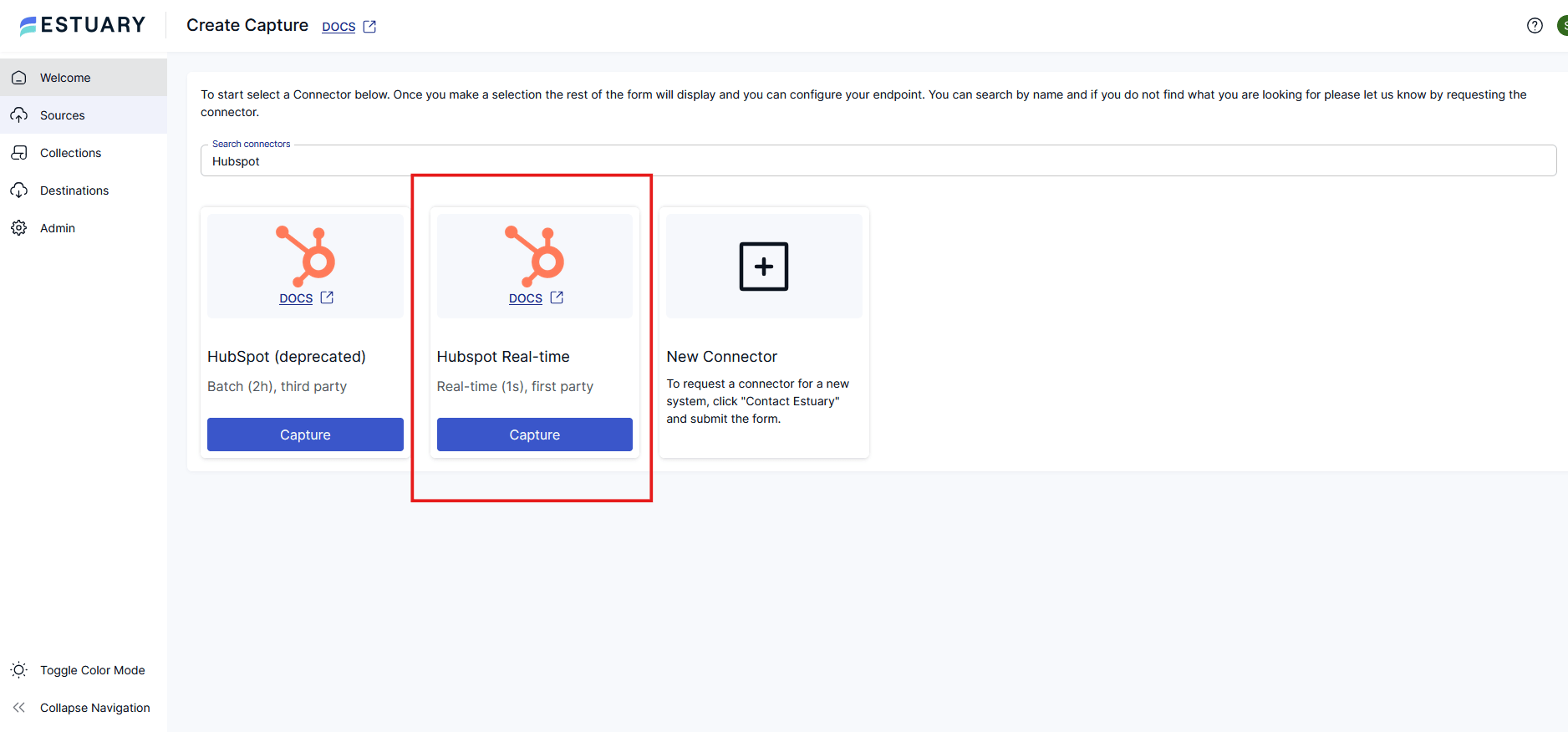

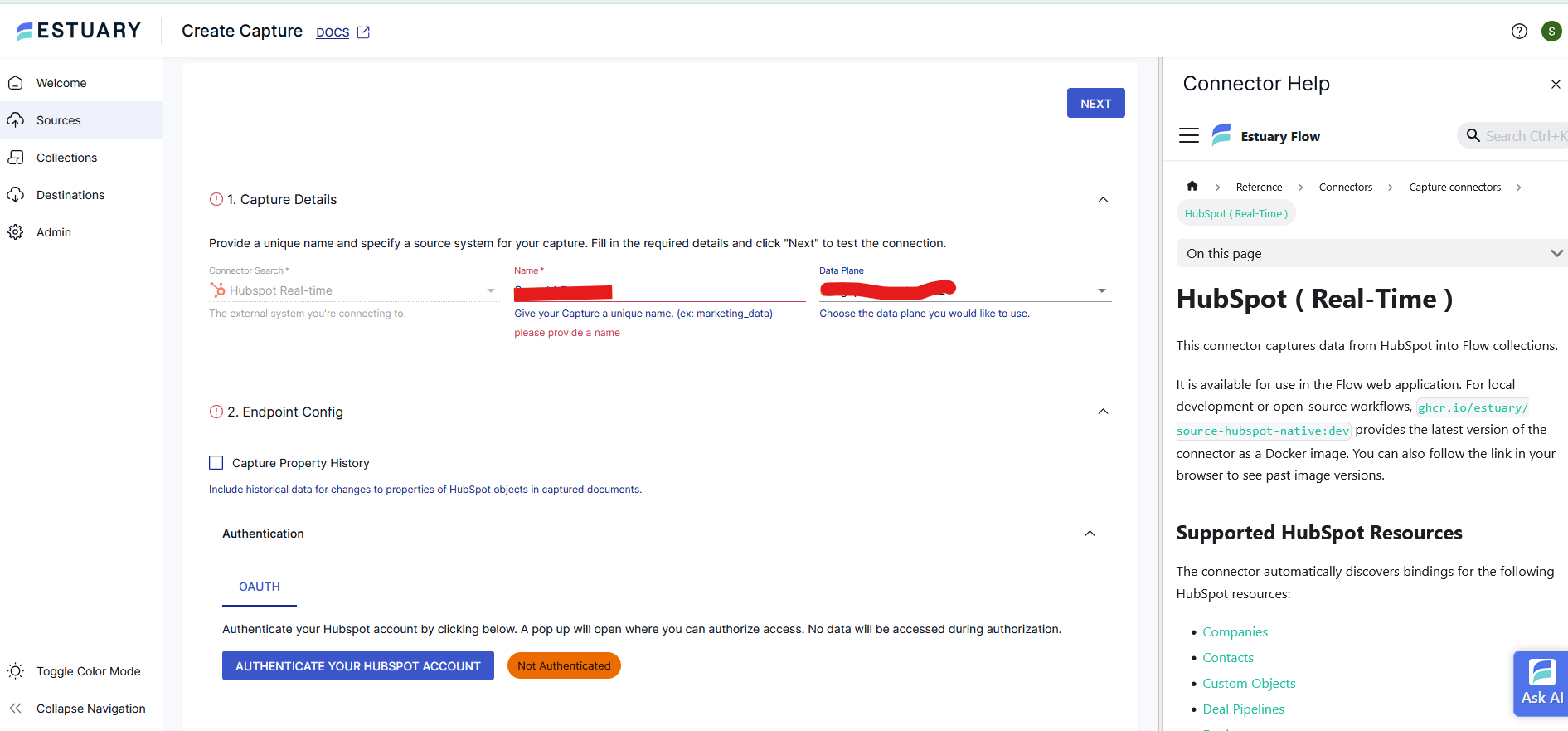

Step 2: Create a HubSpot Real-Time Capture

- In the sidebar, go to Sources → click New Capture.

- Search for HubSpot, then select “HubSpot Real-time” — this is Estuary’s first-party, low-latency connector.

- Give your capture a name and choose a Data Plane.

- Under Authentication, click Authenticate Your HubSpot Account. You’ll be prompted to authorize OAuth2 access.

- Estuary auto-discovers available HubSpot data like:

- Contacts

- Deals

- Companies

- Engagements

- Email Events

- Tickets

- Custom Objects, and more.

- Select the resources you want to capture. Then click Next, and Save and Publish to activate the capture.

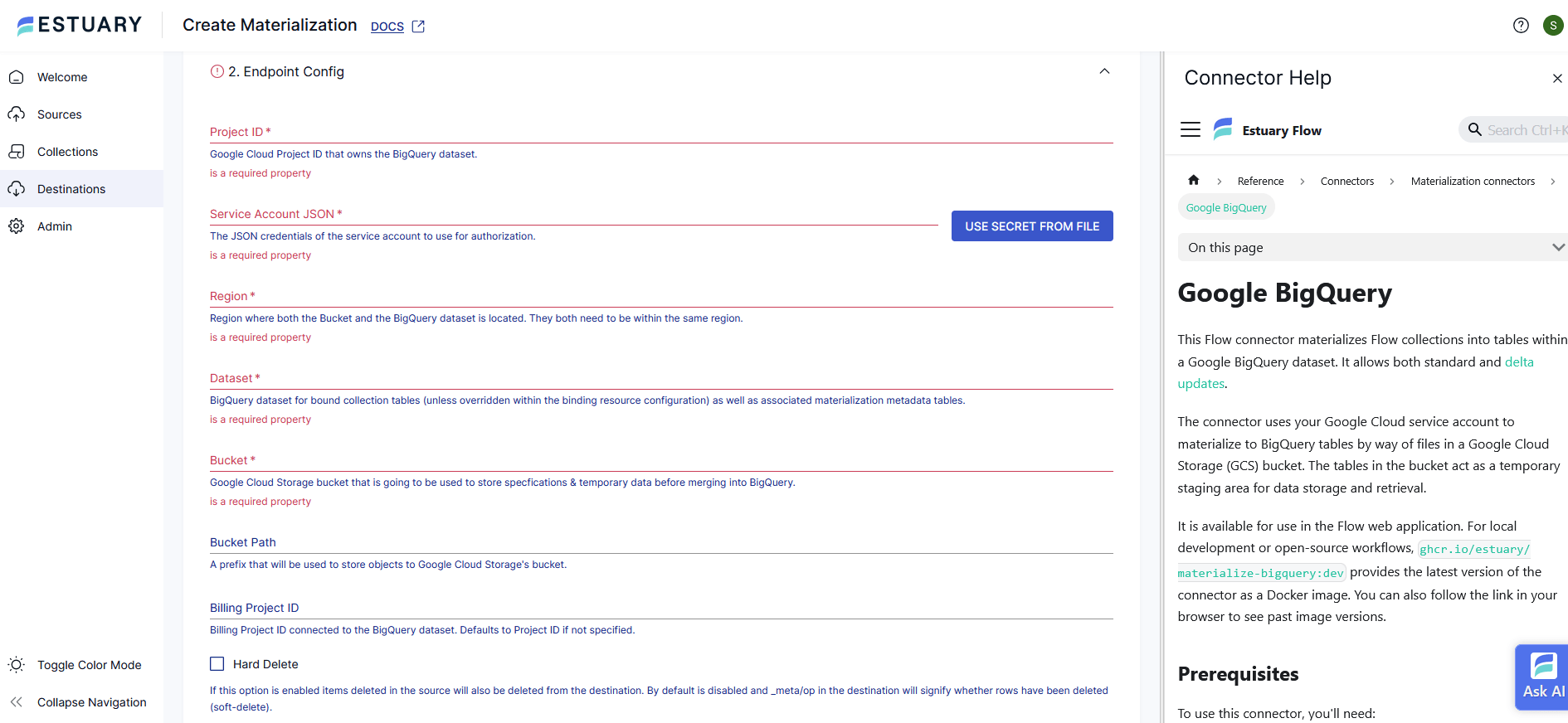

Step 3: Set Up BigQuery as a Destination

- Navigate to Destinations → click New Materialization.

- Search and select Google BigQuery.

- Enter your configuration details:

- Project ID

- Service Account JSON

- Region

- Dataset

- Google Cloud Storage Bucket (used for temporary file staging)

- Use the Collection Selector to bind the HubSpot resources you captured.

- Click Save and Publish — your pipeline is now live and syncing in real time!

Note that BigQuery has a few more prerequisites you’ll need to meet before it can connect to Estuary Flow successfully. Complete the setup steps in the Google BigQuery materialization docs listed below before you publish.

For a more detailed set of instructions, see the Estuary documentation on:

- HubSpot Real-Time Connector Docs →

- Google BigQuery Materialization Docs →

- Getting Started with Estuary →

Conclusion

HubSpot is a vital component of the tech stack for several businesses. With its many benefits, it empowers you to improve customer relationships and hone your communication strategies. However, when you extract and combine your HubSpot data with other data sources, the business growth potential increases exponentially. Moving your data from HubSpot to BigQuery allows you to reap the benefits of a single source of truth. This, in turn, boosts the growth of your business.

Ready to integrate your HubSpot data with BigQuery effortlessly? Start syncing your data with Estuary today for real-time insights. Get started for free!

FAQs

Can I transfer HubSpot data to BigQuery in real time?

What is the best tool for HubSpot to BigQuery integration?

About the author

With over 15 years in data engineering, a seasoned expert in driving growth for early-stage data companies, focusing on strategies that attract customers and users. Extensive writing provides insights to help companies scale efficiently and effectively in an evolving data landscape.