Traditional data architectures have benefited enterprises by centralizing their data, helping them make more informed decisions. However, there are several bottlenecks associated with these systems. This is where data mesh comes in handy.

Truly data-oriented organizations can use data mesh to overcome many of the challenges of monolithic architectures. With the right data mesh tools, it’s easier to drive innovation, scale smoothly, and simplify data governance without creating chaos or data silos.

But first, let’s take a closer look at data mesh.

What is Data Mesh? A Quick Look.

A data mesh is a decentralized data architecture with distributed ownership. The idea behind data mesh architecture is to organize data based on business processes like sales, marketing, finance, and more.

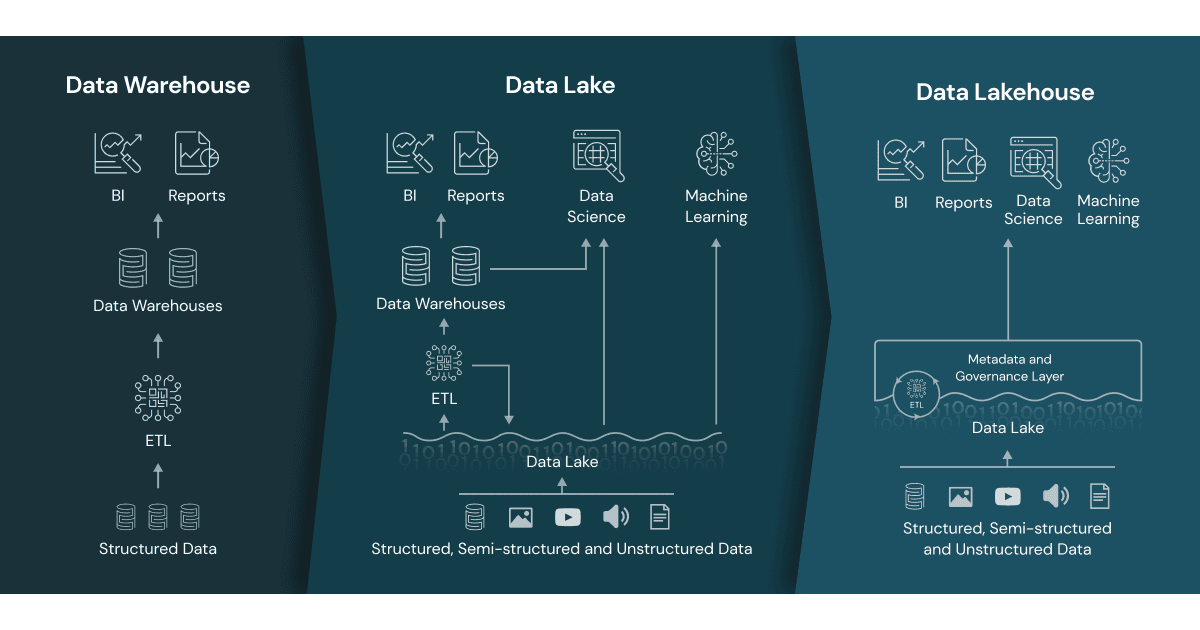

With data mesh, you can still use data warehouses and data lakes. The difference is that data and its ownership are no longer concentrated in a single central repository: they are split into multiple decentralized repositories, each owned and managed by an individual department. Each department can define, control, and access its own data without relying heavily on a central data team.

Even so, these decentralized repositories remain interconnected across the organization, so teams can still perform cross-domain data analysis independently.

The data mesh concept revolves around four core principles:

- Domain-oriented and decentralized data ownership and architecture

- Data is delivered as a product

- The data infrastructure is a self-serve data platform

- Federated computational governance
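To make the four principles more concrete, here is a minimal Python sketch. It assumes a hypothetical `DataProduct` descriptor and hypothetical policy functions; none of these names come from a real data mesh tool. Each domain publishes products it owns, and a small set of globally agreed policies is written as code and checked automatically against every product, which is the core idea behind federated computational governance.

```python
from dataclasses import dataclass, field


# Hypothetical descriptor for a domain-owned data product (field names are illustrative).
@dataclass
class DataProduct:
    name: str
    domain: str    # owning business domain (principle 1)
    owner: str     # accountable team contact
    schema: dict   # column name -> type name: the product's contract (principle 2)
    tags: set = field(default_factory=set)


# Federated computational governance (principle 4): rules agreed globally,
# expressed as code, and applied the same way to every domain's products.
def has_owner(p):
    return bool(p.owner)


def pii_is_tagged(p):
    # Hypothetical rule: a product with an email-like column must carry a "pii" tag.
    has_email = any("email" in col for col in p.schema)
    return not has_email or "pii" in p.tags


def name_is_namespaced(p):
    return p.name.startswith(p.domain + ".")


POLICIES = [has_owner, pii_is_tagged, name_is_namespaced]


def check(product):
    """Return the names of the policies the product violates."""
    return [policy.__name__ for policy in POLICIES if not policy(product)]


# A self-serve platform (principle 3) could run check() whenever a product is published.
orders = DataProduct("sales.orders", "sales", "sales-data@example.com",
                     {"order_id": "int", "customer_email": "str", "total": "float"})
print(check(orders))  # ['pii_is_tagged']
orders.tags.add("pii")
print(check(orders))  # []
```

Keeping the policies as plain functions means each domain retains autonomy over its products while the shared rules stay uniform and machine-checkable.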

Data mesh also helps address problems such as the high management costs of monolithic architectures and the overload placed on central data teams.

Top 6 Data Mesh Tools

Because data mesh depends on your business domains and your organization’s precise goals, no platform can provide an out-of-the-box data mesh solution that’s perfect for you. However, many tools are available that make it easy to design and manage your data mesh.

Here’s a list of the top 6 data mesh tools to help you decide which is suitable for your business needs.

Estuary

Estuary is a real-time DataOps tool designed for engineers, data scientists, and business stakeholders. By packaging the power of data streaming in an intuitive web UI, Estuary puts robust, scalable data pipelines within reach. Out-of-the-box connectors allow you to capture data from, and push it to, a variety of databases, data warehouses, filestores, SaaS apps, and more.

In a data mesh framework, Estuary acts as the centralized, self-serve infrastructure. It allows stakeholders from different domains to create and share data products, called collections. Estuary also enforces a universal standard of governance and acts as an intermediary between all the other data systems in the organization.

Pros

- Universal standard of governance, data validation, and monitoring.

- Real-time integrations between many popular data systems.

- On-the-fly transformations.

- Intuitive user interface.

Cons

- As a newer tool, Estuary is continuously evolving and changing.

Ready to implement a data mesh with real-time data integration? Build your first pipeline for free with Estuary!

Next Data

Any article on data mesh products would be remiss without mentioning Next Data, the company recently founded by Zhamak Dehghani, who coined the term “data mesh” and outlined its core concepts.

Next Data takes the containerization model that revolutionized software and applies it to data. It also introduces data-first APIs. Together, these ideas are meant to turn data into self-contained products. As of mid-2023, Next Data’s product is not yet available for use.

Pros

- Created by the originator of the data mesh concept.

- Containerized data products.

- Data-first APIs.

Cons

- Not yet available, and anticipated launch date not given.

K2View

K2View offers data mesh capabilities through its Data Product Platform, powered by its patented Micro-Database technology.

Let’s understand what a Micro-Database is.

You can think of a Micro-Database as a “mini data lake” for a single business entity. All the data for a business entity, such as a particular customer, order, or device, is stored in an encrypted and compressed Micro-DB, and each business entity instance can have its own Micro-DB. You can use K2View’s data products to create and manage billions of such Micro-DBs, and each one can be accessed instantly by any authorized data consumer.
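The one-store-per-entity idea can be illustrated with a toy Python sketch. It assumes a hypothetical `EntityStore` class that keeps one compressed JSON blob per business entity instance and checks the consumer before every read. This is not K2View’s implementation or API, and it omits encryption, which real Micro-DBs include.

```python
import json
import zlib


class EntityStore:
    """Hypothetical sketch: one compressed store per business entity instance.

    All data for one entity (e.g., one customer) is kept together as a single
    compressed unit. Encryption is deliberately left out of this sketch.
    """

    def __init__(self, authorized):
        self._blobs = {}                  # entity_id -> compressed JSON bytes
        self._authorized = set(authorized)

    def put(self, entity_id, record):
        # Serialize and compress the entity's complete data as one unit.
        self._blobs[entity_id] = zlib.compress(json.dumps(record).encode("utf-8"))

    def get(self, entity_id, consumer):
        # Only authorized data consumers may read an entity's data.
        if consumer not in self._authorized:
            raise PermissionError(f"{consumer} is not authorized")
        return json.loads(zlib.decompress(self._blobs[entity_id]).decode("utf-8"))


customers = EntityStore(authorized={"billing-app", "support-app"})
customers.put("cust-42", {
    "profile": {"name": "Ada", "tier": "gold"},
    "orders": [{"id": 1, "total": 99.5}],
    "tickets": [],
})
print(customers.get("cust-42", "support-app")["profile"]["tier"])  # gold
```

Because every read touches only one small blob, lookups for a single entity stay cheap regardless of how many other entities exist.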

In terms of data mesh support, K2View’s Data Integration helps you integrate data from different sources and organize it into Micro-DBs.

The K2View Data Catalog can visually describe how your organization collects, transforms, and stores its data inventory. You can use K2View’s data catalog to collect, analyze, and visualize the metadata of an enterprise’s data products.

Pros

- Supports both analytical and operational workloads

- Can be deployed on-premises, across hybrid environments, or as an iPaaS in the cloud

- Rapid deployment (in weeks) and seamless adaptation

- Supports high-volume workloads requiring real-time data integration/pipelining

Cons

- Deployments have so far focused on healthcare, telco, and financial services

- Primarily for large enterprises with relatively few mid-sized customers

Denodo

Denodo serves 30+ industries and is among the major companies in the data management landscape. Its customers include several mid-market companies and large enterprises that have largely benefited from the award-winning Denodo Platform.

But what is the Denodo Platform?

It’s a logical data management platform that you can use to access data in real time and break out of data silos. You can use it for data integration, management, and delivery. It enables hybrid/multi-cloud data integration, enterprise data services, self-service business intelligence, and data science.

Among its many use cases, the Denodo Platform can help you create a data mesh architecture that simplifies the design of your enterprise data infrastructure. It does this through data virtualization, which provides a logical data layer that:

- Integrates enterprise data from different sources/departments

- Manages the integrated data for centralized governance and security from a single point of control

- Delivers it in real time to business users

The data virtualization architecture is well suited to enabling data mesh because it centralizes only the critical metadata needed to access the different data sources, not the data itself. It also provides full-featured data catalogs that list the available data and offer real-time, self-service access.
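To illustrate how a virtual layer differs from copying data into a central store, here is a minimal Python sketch under simplifying assumptions: an in-memory SQLite database and a CSV string stand in for two domains’ source systems, and the hypothetical `VirtualLayer` class stores only how to reach each source. It is not Denodo’s API.

```python
import csv
import io
import sqlite3

# Two "physical" sources owned by different domains (stand-ins for real systems).
crm = sqlite3.connect(":memory:")
crm.execute("CREATE TABLE customers (id INTEGER, name TEXT)")
crm.executemany("INSERT INTO customers VALUES (?, ?)", [(1, "Ada"), (2, "Lin")])
finance_csv = "customer_id,balance\n1,120.0\n2,35.5\n"


class VirtualLayer:
    """Hypothetical logical data layer: it keeps only metadata (how to reach
    each source), never a copy of the data. Rows are fetched at query time."""

    def __init__(self):
        self.catalog = {}  # view name -> zero-argument function returning rows as dicts

    def register(self, name, fetch):
        self.catalog[name] = fetch

    def rows(self, name):
        return self.catalog[name]()  # live read from the underlying source


layer = VirtualLayer()
layer.register("crm.customers", lambda: [
    {"id": i, "name": n}
    for i, n in crm.execute("SELECT id, name FROM customers ORDER BY id")
])
layer.register("finance.balances", lambda: [
    {"customer_id": int(r["customer_id"]), "balance": float(r["balance"])}
    for r in csv.DictReader(io.StringIO(finance_csv))
])


def customer_balances(layer):
    # A cross-domain view, joined on the fly inside the virtual layer.
    balances = {r["customer_id"]: r["balance"] for r in layer.rows("finance.balances")}
    return [{"name": c["name"], "balance": balances.get(c["id"])}
            for c in layer.rows("crm.customers")]


print(customer_balances(layer))
# [{'name': 'Ada', 'balance': 120.0}, {'name': 'Lin', 'balance': 35.5}]
```

Because the layer reads from the sources at query time, a row added to the CRM table shows up in the next query without any reload step.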

Pros

- Greater automation of data management processes

- Seamless security and governance

- Gentle learning curve; doesn’t require extensive coding knowledge

Cons

- Limitations with large-scale data

- Not applicable for high-scale operational use cases

Databricks

Legacy systems and enterprise data warehouses have drawbacks such as high costs, slow performance, and data silos. The Databricks Lakehouse Platform helps overcome these drawbacks by combining the best of data lakes and data warehouses: the flexibility and openness of a data lake with the strong governance, reliability, and performance of a data warehouse.

Using the Databricks Lakehouse capabilities, you can build a data mesh that follows domain-driven design principles, in which data is treated as a product and managed by individual domain teams.

Its data governance solution, Unity Catalog, provides unified governance for all data and AI assets in your lakehouse. Its capabilities include cataloging features such as data discovery and lineage, along with fine-grained access control and auditing.
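The combination of fine-grained access control and auditing can be sketched in Python as follows. The `Catalog` class and its methods are hypothetical and only model the concepts; in Databricks, Unity Catalog privileges are managed with SQL `GRANT` statements and its own APIs. The sketch borrows Unity Catalog’s three-level `catalog.schema.table` naming.

```python
from datetime import datetime, timezone


class Catalog:
    """Hypothetical governed catalog: table-level grants plus an audit trail
    that records every access attempt, whether allowed or denied."""

    def __init__(self):
        self.grants = {}     # (principal, table) -> set of privileges
        self.audit_log = []  # (utc_time, principal, action, table, allowed)

    def grant(self, principal, privilege, table):
        self.grants.setdefault((principal, table), set()).add(privilege)

    def read(self, principal, table):
        allowed = "SELECT" in self.grants.get((principal, table), set())
        # Record the attempt before enforcing the decision, so denials are audited too.
        self.audit_log.append(
            (datetime.now(timezone.utc), principal, "SELECT", table, allowed)
        )
        if not allowed:
            raise PermissionError(f"{principal} lacks SELECT on {table}")
        return f"rows of {table}"  # placeholder for real data access


catalog = Catalog()
catalog.grant("marketing-analysts", "SELECT", "main.sales.orders")
print(catalog.read("marketing-analysts", "main.sales.orders"))  # rows of main.sales.orders
try:
    catalog.read("marketing-analysts", "main.finance.ledger")
except PermissionError as err:
    print(err)  # marketing-analysts lacks SELECT on main.finance.ledger
```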

With the Databricks Delta Sharing solution, you can also securely share data products between domains across organizational, technical, and regional boundaries. The solution is ideal for large, globally distributed organizations with deployments across clouds and regions.

Some other Databricks features that benefit the data mesh include:

- Databricks Feature Store: promotes sharing and reuse between ML and Data Science teams

- Databricks SQL: allows BI and SQL queries directly on the lake

- Workflows and Delta Live Tables: support high-quality self-service data pipelines

Pros

- Seamless integrations with existing tools

- Enhanced query performance at any scale

- Automated and real-time data lineage

Cons

- Development is heavily notebook-centric, which some teams find less production-friendly

- Limited insight into job failures and resource consumption

- Fine-grained access control for tables and views depends on adopting Unity Catalog

Talend

Do you rely on a platform like a data lake or data warehouse that holds data from disparate sources? Then you need a dependable data cataloging mechanism and clearly defined data owners. Talend Data Catalog is an excellent choice for this purpose.

You can use Talend to create a data mesh. With Data Catalog, you can create a centrally governed data catalog to share and collaborate seamlessly. It automatically crawls, profiles, organizes, links, and enriches all your data. It also helps you maintain a secure, single data control point. By using Data Catalog, you can improve data accessibility, accuracy, and business relevance.

That’s not all; it makes it easy to protect your data, manage data pipelines, govern your analytics, and accelerate your ETL processes. Data Catalog’s data compliance tracking and lineage tracing also support data privacy and regulatory compliance.

Data Catalog automatically documents up to 80% of the information associated with the data and uses machine learning to keep that documentation up to date, so you always work with the most current data.
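Automated documentation typically starts with profiling. The sketch below assumes a hypothetical `profile_table` helper and an in-memory SQLite table, and computes the kind of column-level statistics a catalog might record (inferred type, null count, distinct count). It illustrates a generic technique, not Talend’s algorithm.

```python
import sqlite3


def profile_table(conn, table):
    """Compute basic per-column statistics a catalog could store as documentation.

    `table` must be a trusted identifier, because it is interpolated into SQL.
    """
    cur = conn.execute(f"SELECT * FROM {table}")
    columns = [d[0] for d in cur.description]
    rows = cur.fetchall()
    profile = {}
    for i, col in enumerate(columns):
        values = [r[i] for r in rows]
        non_null = [v for v in values if v is not None]
        types = sorted({type(v).__name__ for v in non_null})
        if not types:
            inferred = "unknown"      # column is entirely NULL
        elif len(types) == 1:
            inferred = types[0]
        else:
            inferred = "mixed"
        profile[col] = {
            "type": inferred,
            "nulls": len(values) - len(non_null),
            "distinct": len(set(non_null)),
        }
    return profile


conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE orders (id INTEGER, country TEXT, total REAL)")
conn.executemany("INSERT INTO orders VALUES (?, ?, ?)",
                 [(1, "DE", 10.0), (2, "FR", None), (3, "DE", 7.5)])
for column, stats in profile_table(conn, "orders").items():
    print(column, stats)
```

A real catalog would also sample large tables rather than reading every row, and would store these results alongside business metadata.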

Pros

- Good connectivity to various databases, from classic relational (MySQL, Oracle, MSSQL, etc.) to NoSQL databases

- Broad data engineering capabilities

- Makes data easy to search and access, and verifies data validity before it is shared with peers

- Data integration possibilities span on-premises, multi-cloud, and hybrid environments

Cons

- Limited capabilities with regard to data products

- Better suited for analytics; not suitable for high-volume operational use cases

Conclusion

The best part is that you can implement a data mesh on platforms such as cloud data warehouses and on-premises data lakes. Adopt any of the six data mesh tools and companies listed above for faster data delivery, greater agility and scalability, and better support for data-driven insights. Whichever data mesh tool you choose, make sure it supports the four data mesh principles.

If you’d like to know the differences between data mesh and data fabric, you’ll find this article helpful.

Reworking your data architecture? Estuary is a flexible self-serve platform that helps you integrate all your data systems in real time. Whether you’re building a data mesh or taking an approach all your own, Estuary can help tie the components together. Build your first pipeline for free.

About the author

Jeffrey is a data engineering professional with over 15 years of experience, helping early-stage data companies scale by combining technical expertise with growth-focused strategies. His writing shares practical insights on data systems and efficient scaling.