SQL Server to Snowflake: 4 Ways to Load and Sync Data

Learn how to move SQL Server data to Snowflake using Estuary CDC, Snowpipe, SnowSQL, or SQLpipe. Compare real-time sync, batch loading, setup steps, Snowflake authentication, delta updates, cost controls, and validation best practices.

SQL Server to Snowflake integration lets teams move operational database data into Snowflake for analytics, reporting, machine learning, customer intelligence, and AI workflows. You can do this with a real-time CDC pipeline using Estuary, or with file-based methods such as Snowpipe, SnowSQL, or SQLpipe.

For production workloads, Estuary is often a strong fit when Snowflake needs to stay updated as SQL Server changes. Estuary can capture inserts, updates, and deletes from SQL Server using change data capture, backfill historical rows, and materialize the data into Snowflake tables.

Manual methods such as Snowpipe, SnowSQL, and SQLpipe can work for one-time transfers or scheduled batch jobs, but they require more setup, staging files, orchestration, monitoring, and error handling. In this guide, we’ll compare all four methods and explain when each one makes sense.

Key Takeaways

SQL Server to Snowflake integration can be handled with real-time CDC, Snowpipe, SnowSQL, SQLpipe, or custom file-based workflows.

Estuary is the strongest fit when you need Snowflake to stay updated as SQL Server inserts, updates, and deletes occur.

Manual methods can work for one-time loads, but they require staging, scheduling, schema mapping, retries, and monitoring.

Before production use, validate primary keys, CDC enablement, Snowflake authentication, update/delete handling, row counts, and downstream dashboards.

SQL Server to Snowflake: What Changes During Integration?

Moving data from SQL Server to Snowflake is not just a database copy. SQL Server is usually the operational system where transactional data changes constantly, while Snowflake is typically the analytical destination where teams query, model, and join data at scale.

Important integration decisions include:

| Area | What to decide |

|---|---|

| Capture method | Real-time CDC, Change Tracking, batch polling, Snowpipe, SnowSQL, or SQLpipe |

| Table eligibility | Whether SQL Server tables have primary keys and CDC enabled |

| Historical load | How existing rows will be backfilled before ongoing changes begin |

| Updates and deletes | Whether Snowflake should reflect SQL Server updates and deletes |

| Schema changes | How table changes, added columns, and altered source schemas will be handled |

| Destination update mode | Standard merges or delta updates in Snowflake |

| Snowflake authentication | JWT/key-pair authentication and required role permissions |

| Cost controls | Snowflake warehouse auto-suspend and sync schedule |

| Validation | Row counts, sample records, primary keys, duplicates, and downstream query behavior |

SQL Server to Snowflake Methods Compared

| Method | Best for | Handles ongoing changes? | Latency | Setup effort | Operational burden |

|---|---|---|---|---|---|

| Estuary CDC pipeline | Production replication, analytics, operational reporting, AI workflows | Yes | Real-time or low-latency | Low to medium | Lower |

| Snowpipe | File-based ingestion through cloud stages | Partially, if files are exported continuously | Near real time after staging | Medium to high | Medium |

| SnowSQL | One-time or scheduled command-line loads | No, unless scripted | Batch | Medium | Medium |

| SQLpipe | Simple command-line transfer using ODBC | Limited | Batch or scheduled | Medium | Medium |

| Custom scripts | Highly customized workflows | Only if built manually | Depends on implementation | High | High |

If Snowflake needs to stay current as SQL Server changes, use a CDC-based pipeline. If you only need a one-time transfer, SnowSQL, SQLpipe, or staged Snowpipe workflows may be enough.

Methods to Transfer Data from SQL Server to Snowflake

In this section, we provide comprehensive step-by-step methods for transferring data from SQL Server to Snowflake using managed CDC pipelines to file-based and command-line loading methods.

In this section, we’ll compare four practical ways to move SQL Server data into Snowflake, from managed CDC pipelines to file-based and command-line loading methods.

Method 1: SQL Server to Snowflake CDC with Estuary

Method 2: SQL Server to Snowflake Using Snowpipe

Method 3: Using SnowSQL

Method 4: Using SQLpipe

Method 1: SQL Server to Snowflake CDC with Estuary

Estuary is a managed data pipeline platform that can capture SQL Server changes using CDC, backfill historical rows, and materialize the resulting collections into Snowflake tables.

This method is a strong fit when Snowflake needs to stay current as SQL Server changes, especially for analytics, operational reporting, machine learning, customer intelligence, and AI workflows. Compared with file-based methods, a CDC pipeline reduces the need for recurring exports, staging jobs, batch scripts, and manual reconciliation.

Estuary SQL Server Connector Requirements

Before creating the SQL Server capture, confirm that:

- SQL Server is reachable from Estuary.

- The database and the individual tables you want to capture have CDC enabled.

- Each captured table has a primary key, or you manually specify a key in the Estuary collection definition.

- The capture user has the required database access.

- You know the SQL Server host, port, database name, username, and password.

- You have decided whether CDC, Change Tracking, or Batch capture is the right mechanism for your use case.

Estuary’s SQL Server CDC connector is best when you need real-time capture with full audit history. Change Tracking can be a better fit when you need lower storage overhead or need to capture computed columns. Batch capture is useful when SQL Server does not support CDC or Change Tracking, when you need views, or when you want custom queries.

SQL Server Capture Options in Estuary

| Capture option | How it works | Best for | Notes |

|---|---|---|---|

| SQL Server CDC | Log-based change capture | Real-time replication, full audit history, tables without primary keys if keys are specified manually | Requires CDC enabled on the database and tables |

| Change Tracking | Tracks changed primary keys | Real-time capture with lower source storage overhead | Good when all tables have primary keys or when computed columns matter |

| Batch Query | Periodic polling | Views, custom queries, or SQL Server instances without CDC/Change Tracking | Latency is minutes to hours |

Estuary recommends CDC when you need complete audit logging with full row history, Change Tracking when you need lower storage overhead or computed columns, and Batch when CDC or Change Tracking is not available.

Here’s how to set it up:

Step 1. Create an Estuary Account

- Start by signing up for a free Estuary account. You can register using Google or GitHub credentials. Once inside, you’ll be greeted by a dashboard with options to capture data and materialize it to your desired destination.

Step 2. Set Up Your SQL Server to Snowflake Environments

Before building the pipeline, make sure:

- SQL Server is network-accessible and allows CDC on the tables you want to sync

- You have login credentials with REPLICATION or equivalent privileges

- Your Snowflake account is ready to receive data. You’ll need the host, database, schema, warehouse, and a user configured with key-pair authentication.

[See full prerequisites for SQL Server and Snowflake →]

Step 3. Capture Data from SQL Server

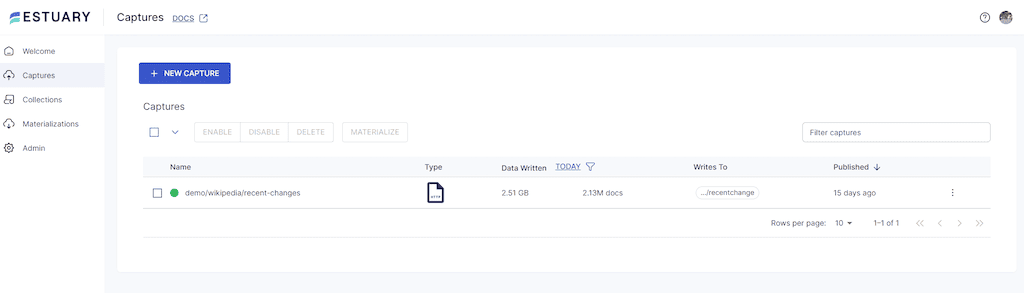

- Click Captures and select New capture. This will connect you to your data source— the SQL Server.

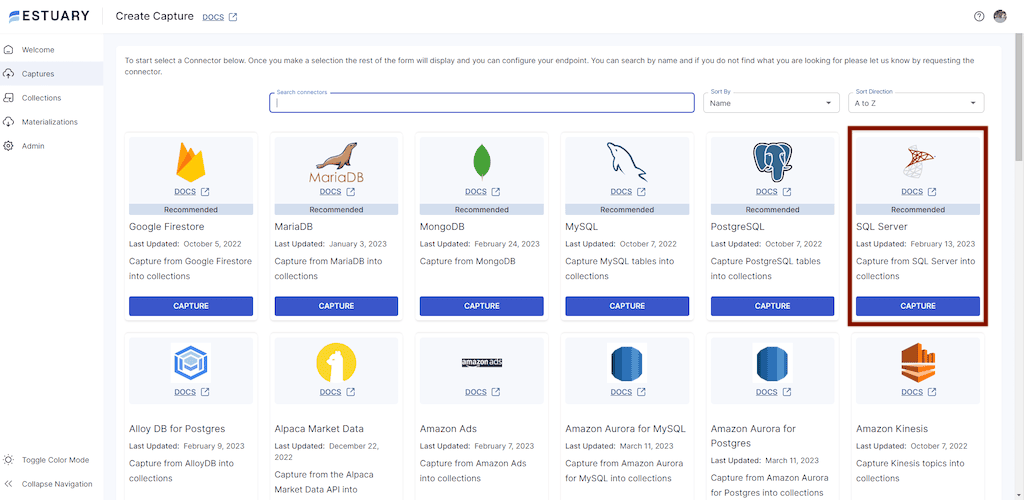

- Navigate to and select SQL Server as your data source connector.

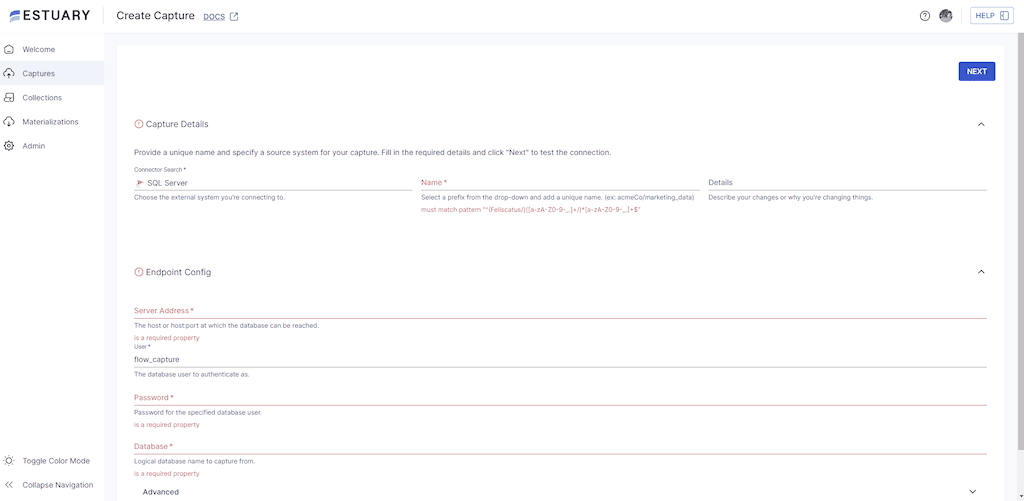

- Fill in the capture details with your SQL server address, database username, and password. Click Next.

- Estuary will connect to your database and auto-detect all tables that support CDC

- Choose which tables you want to capture (all are selected by default)

- Click Save and Publish

Estuary will now backfill historical rows and begin listening for real-time changes via SQL Server CDC. Each selected table becomes a versioned collection within Estuary.

Snowflake Connector Requirements

Before creating the Snowflake materialization, make sure you have:

- Snowflake host URL.

- Target database and schema.

- Virtual warehouse.

- Snowflake role with required access.

- Snowflake user configured for key-pair/JWT authentication.

- Private key for the configured Snowflake user.

- Timestamp type selected for materialized timestamp columns.

QUOTED_IDENTIFIERS_IGNORE_CASEset toFALSEfor the Estuary user.- Username/password authentication should not be used for new Snowflake materializations.

Snowflake username/password authentication was deprecated in April 2025, and Estuary’s Snowflake connector now uses key-pair/JWT authentication.

Step 4: Configure Snowflake as the Destination

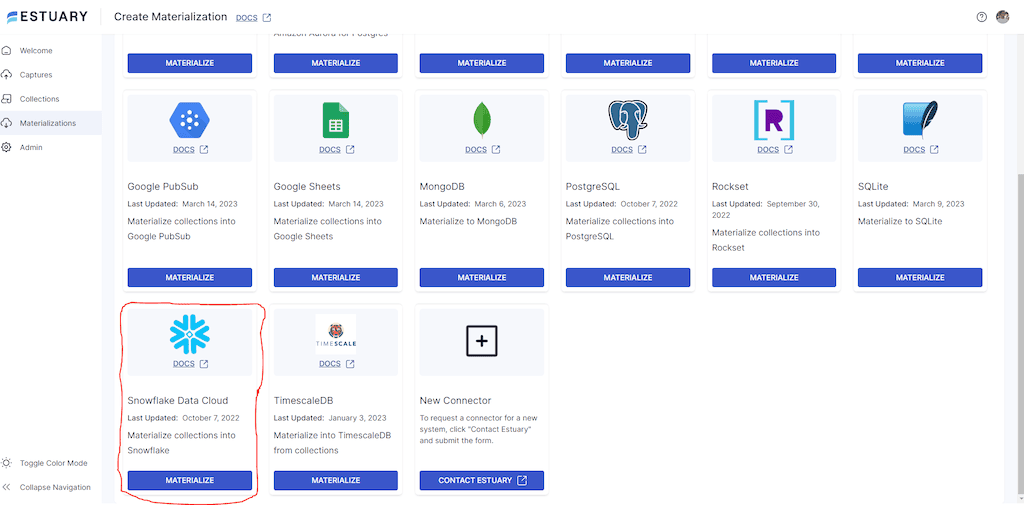

- Click the Materialize collections button to transfer your captured data.

- From the connector tile, select Snowflake Data Cloud as your destination.

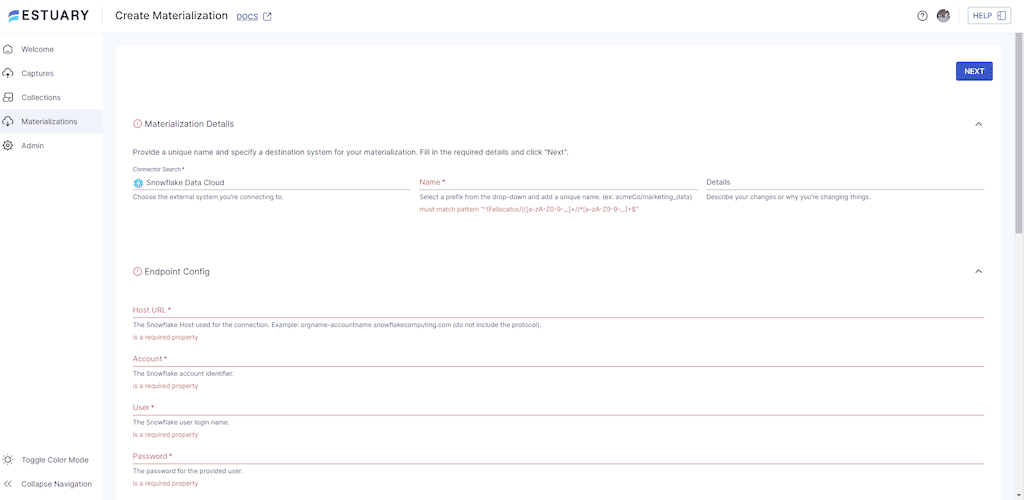

- On the Snowflake connector page, provide the following details:

- Host URL (your Snowflake account URL, e.g., orgname-accountname.snowflakecomputing.com)

- Database and Schema where the data will be materialized

- Warehouse (name of your Snowflake warehouse)

- User (Snowflake username)

- Private Key (for authentication)

- After entering the configuration, click Next.

Estuary maps each collection (from SQL Server) to a new or existing table in Snowflake. You can optionally:

- Rename the target tables

- Filter fields

- Apply in-flight transformations

By default, Estuary uses standard merges, but you can enable delta updates on a per-table basis. Delta updates reduce latency and costs for large datasets, and when enabled, Estuary writes data into Snowflake using Snowpipe Streaming for near real-time ingestion.

- Finally, click Save and Publish to activate the pipeline.

Snowflake Cost and Performance Tips

When materializing SQL Server data into Snowflake, consider both data freshness and warehouse cost. Snowflake compute is billed per second with a 60-second minimum, and inactive warehouses do not incur compute charges. Estuary recommends configuring the Snowflake warehouse to auto-suspend after 60 seconds and setting the materialization sync schedule based on freshness requirements.

For large datasets, collection keys can affect Snowflake update performance. Standard updates can perform better when keys help Snowflake prune micro-partitions efficiently. For example, chronological keys such as /date, /user_id may perform better than using only /user_id for large update-heavy tables.

Standard Updates vs Delta Updates in Snowflake

Estuary’s Snowflake materialization connector supports both standard updates and delta updates. Standard updates are the default and are better when the Snowflake table should reflect the latest fully reduced state of SQL Server records.

Delta updates can reduce latency and cost for large datasets because Estuary does not need to query existing rows in the Snowflake table. However, delta updates are not suitable for every workflow because the resulting table in Snowflake is not fully reduced. Snowpipe Streaming is used by default for delta update bindings and is the lowest-latency method to load data into Snowflake through Estuary.

Snowpipe is a Snowflake-native file ingestion option. To use it with SQL Server, you still need a separate process to export SQL Server data into files, upload those files to a cloud stage, and then let Snowpipe load them into Snowflake. This can work for scheduled batch pipelines, but it adds orchestration and monitoring work.

Method 2: SQL Server to Snowflake Using Snowpipe

Step 1. Set up your Snowflake account. Create a database and schema, ensuring you have the required privileges.

Step 2. Create an external stage using this SQL statement:

sql language-plaintextCREATE STAGE <stage_name>

URL = 's3://<your_s3_bucket>'

CREDENTIALS = (AWS_KEY_ID='<your_aws_access_key>', AWS_SECRET_KEY='<your_aws_secret_key>');Security note: For production environments, consider using a Snowflake storage integration instead of embedding cloud access keys directly in SQL. Storage integrations are generally easier to govern and rotate safely.

Replace <stage_name> with the name of your stage, <your_s3_bucket>, <your_aws_access_key>, and <your_aws_secret_key> with your S3 bucket name and AWS credentials.

Step 3. Create a pipe using this SQL statement:

bash language-plaintextCREATE PIPE <pipe_name>

AUTO_INGEST = TRUE

AS COPY INTO <your_table_name>

FROM '@<stage_name>/<your_file_prefix>'

FILE_FORMAT = (TYPE=CSV FIELD_DELIMITER=',' SKIP_HEADER=1);Replace <pipe_name> with the name of your pipe, <your_table_name> with the name of the table in your snowflake database and schema where you want to load the data, <stage_name> with the name of your external stage, and <your_file_prefix> with the prefix for your files in the external stage.

Step 4. Run this command to enable Snowpipe in your Snowflake database:

bash language-plaintextALTER ACCOUNT SET PIPE_EXECUTION_PAUSED = FALSE;This allows Snowpipe to ingest data when it arrives in the external stage automatically.

Step 5. Export SQL Server data to files and upload them to the stage.

Snowpipe loads files from a cloud stage into Snowflake. It does not directly extract rows from SQL Server. You need a separate process, such as BCP, SSIS, a scheduled script, or another export job, to write SQL Server data into CSV or another supported file format and place those files in the staged location.

To create a batch file, open a text file, and run this command:

bash language-plaintext@echo off

title [title of your batch script]

echo [first line]

pauseSave the text file with the extension .bat, double-click to run the batch file, right-click and select edit so you can paste the SQL statement in the file.

Step 6. Use a scheduling tool like Windows Task Scheduler or Cron to schedule the batch file to run regularly. If you implement the preceding steps correctly, your SQL data should be automatically loaded into Snowflake through Snowpipe.

For teams that want to avoid repeated file exports, staging, and scheduling, a CDC-based pipeline may be a better fit.

Method 3: SQL Server to Snowflake Using SnowSQL

SnowSQL is best for one-time loads or scheduled batch jobs. It does not automatically capture ongoing SQL Server inserts, updates, and deletes unless you build additional export, scheduling, and reconciliation logic.

Step 1. Download SnowSQL from the Snowflake website and install it on your local machine.

Step 2. Create a Snowflake account to create a new database and table in Snowflake.

Step 3. Run this command to connect to Snowflake using SnowSQL:

plaintextsnowsql -a <account_name> -u <username> -d <database_name> -s <schema_name> -w <warehouse_name>Replace <account_name>, <username>, <database_name>, <schema_name>, and <warehouse_name> with your Snowflake account details.

Step 4. Run this SQL command to create a Snowflake table to host the data in transit:

plaintextCREATE TABLE <table_name> (

<column1_name> <column1_data_type>,

<column2_name> <column2_data_type>,

...

)Replace <table_name>, <column1_name>, <column1_data_type>, <column2_name>, <column2_data_type>, and other blank values with your table and column names and data types.

Step 5. Run this command to export data from the SQL Server table using the BCP utility.

plaintextbcp <table_name> out <file_name> -c -t',' -S <server_name> -U <username> -P <password>Replace <table_name> with the name of the table you want to export, <file_name> with the name of the file you want to export to, <server_name> with the name of the SQL Server instance, <username> with your SQL Server username, and <password> with your SQL Server password

Step 6. Run this command to upload the data to Snowflake:

plaintextPUT file://<file_path> @<stage_name>/<file_name>Replace <file_path> with the path to the file you exported from SQL Server, <stage_name> with the name of the Snowflake stage you want to upload the file to, and <file_name> with the name of the file you want to upload.

Step 7. Execute this command to load the data from the file to the Snowflake table you created earlier:

plaintextCOPY INTO <table_name>

FROM @<stage_name>/<file_name>

FILE_FORMAT = (TYPE = CSV, FIELD_DELIMITER = ',', SKIP_HEADER = 1);Replace <table_name> with the name of the Snowflake table you created earlier, <stage_name> with the name of the Snowflake stage you uploaded the file to, and <file_name> with the name of the file you uploaded.

This completes the one-time process of transferring data from the SQL Server to Snowflake using SnowSQL. To automate the transfer of bulk data, you can either run a task for each of your SQL statements and build dependencies between them or wrap the entire SnowSQL script into a stored procedure triggered with your tasks.

Method 4: Using SQLpipe

Step 1. Download and install SQLpipe.

Step 2. Connect to your SQL Server instance using your computer’s ODBC Data Source Administrator. Search for “ODBC” in the Windows Start menu and supply the database name, username, password, schema name, hostname, and port.

Step 3. Connect to Snowflake using the ODBC Data Source Administrator using the same format.

Step 4. Write a SQL query to extract your data from SQL Server for onward transfer to Snowflake. You can use any SQL client, such as SQL Server Management Studio or Visual Studio Code.

Step 5. Save the SQL query as a file on your computer, and note the file path.

Step 6. Use SQLpipe to transfer the data.

Open your command prompt, navigate to the folder hosting SQLpipe, and run this command:

plaintextsqlpipe -s [source_odbc_data_source_name] -d [destination_odbc_data_source_name] -f [path_to_sql_query_file] -t [target_table_name]Replace [source_odbc_data_source_name] with the name of the ODBC data source that you set up for SQL Server, [destination_odbc_data_source_name] with the name of the ODBC data source that you set up for Snowflake, [path_to_sql_query_file] with the file path of the SQL query that you wrote in Step 4, and [target_table_name] with the name of the table in Snowflake where you want to store the data.

Assuming you want to transfer a table called “users” in the schema “dbo” from SQL Server to Snowflake, here is an example command:

plaintextsqlpipe transfer \

--source-ds-type "mssql" \

--source-hostname "your-mssql-hostname" \

--source-port 1433 \

--source-db-name "your-mssql-db-name" \

--source-username "your-mssql-username" \

--source-password "your-mssql-password" \

--target-ds-type "snowflake" \

--target-account-id "your-snowflake-account-id" \

--target-db-name "your-snowflake-db-name" \

--target-schema "your-snowflake-schema-name" \

--target-username "your-snowflake-username" \

--target-password "your-snowflake-password" \

--target-table "name-of-table-to-insert-into" \

--overwrite \

--query "select * from dbo.users"The command -c or --csv enables you to output the data in CSV instead of the default tab-separated format. This can be useful if you need to import the data into a tool that requires CSV format.

Step 7. After the data transfer is complete, verify that the data has been successfully transferred by querying the target table using an SQL client.

After the transfer completes, query the Snowflake target table to confirm that the expected rows were loaded.

Explore our guide for loading data from SQL Server to various other databases:

SQL Server to Snowflake Validation Checklist

Before using the pipeline in production, validate:

- SQL Server CDC is enabled on the database and selected tables.

- Each captured table has a primary key or a manually specified Estuary collection key.

- Historical backfill completed successfully.

- Inserts, updates, and deletes from SQL Server appear correctly in Snowflake.

- Snowflake tables are created in the expected database and schema.

- Timestamp columns use the expected Snowflake timestamp type.

- Standard or delta update mode matches your analytics requirements.

- Row counts match between SQL Server and Snowflake.

- Sample records match between the source and the destination.

- Duplicate records are not created during retries or backfills.

- Downstream dashboards, dbt models, notebooks, and applications still work.

- Snowflake warehouse auto-suspend and sync schedule are configured for cost control.

- Monitoring and alerts are configured for capture or materialization failures.

Common SQL Server to Snowflake Migration Mistakes

Treating File-Based Loads as Real-Time Replication

Snowpipe, SnowSQL, and SQLpipe can move data into Snowflake, but they require exported files, schedules, staging, and monitoring. They are not the same as continuously capturing SQL Server inserts, updates, and deletes through CDC.

Forgetting to Enable CDC on SQL Server Tables

For CDC-based replication, CDC must be enabled on both the database and the individual tables you want to capture.

Ignoring Primary Keys

Primary keys are important for deduplication, updates, and delete handling. If a SQL Server table does not have a primary key, you must define an appropriate key in the Estuary collection.

Choosing the Wrong Snowflake Update Mode

Standard updates and delta updates have different tradeoffs. Use standard updates when Snowflake should represent the latest state of each row. Use delta updates only when append-style event data is appropriate.

Skipping Snowflake Cost Controls

Configure warehouse auto-suspend and materialization sync schedules to avoid unnecessary compute costs.

Not Testing Downstream Queries

A successful data load does not guarantee that dashboards, transformations, or applications behave correctly. Validate downstream queries before cutover.

Conclusion

SQL Server to Snowflake integration can be handled with a managed CDC pipeline or with file-based methods such as Snowpipe, SnowSQL, and SQLpipe. The right method depends on your latency requirements, change volume, schema complexity, cost controls, and how much operational work your team wants to manage.

Manual methods can work for one-time transfers or scheduled batch loads, but they require separate export jobs, staging files, orchestration, monitoring, and validation. They are not the same as continuously capturing SQL Server inserts, updates, and deletes.

Estuary is a strong fit when Snowflake needs to stay current as SQL Server changes. It can capture historical rows and ongoing changes from SQL Server, then materialize them into Snowflake using standard updates or delta updates with Snowpipe Streaming. For production workloads, validate CDC enablement, keys, update mode, Snowflake authentication, warehouse cost settings, and downstream queries before cutover.

For teams evaluating production replication, the safest next step is to test the pipeline with a representative table, validate backfill and CDC behavior, and confirm Snowflake cost and query performance before cutover.

Further Reading:

- How to Migrate from SQL Server to Snowflake – An in-depth, practitioner-written guide from Seattle Data Guy covering real-world migration planning, architecture differences, and tooling considerations

FAQs

Can you automate data transfer from SQL Server to Snowflake?

What authentication method is supported for Snowflake?

What are some popular third-party tools for transferring data from SQL Server to Snowflake?

Can SQL Server changes be replicated to Snowflake in real time?

About the author

Jeffrey is a data engineering professional with over 15 years of experience, helping early-stage data companies scale by combining technical expertise with growth-focused strategies. His writing shares practical insights on data systems and efficient scaling.