For a long time, Snowflake users have relied on the typical COPY INTO commands for data ingestion, using Snowpipe for micro-batching or tools like Fivetran for ELT. But things have changed recently. With the surge of GenAI and an influx of unstructured data, the old “land, then transform” approach has started to crack.

In 2024, Snowflake acquired Datavolo, a company built by the creators of Apache NiFi (a system used to process and distribute data), shortly before announcing Snowflake OpenFlow. The acquisition has allowed Snowflake to redesign its data ingestion layer and lets users build and extend processors that move data from any source to any destination.

In this article, we’ll be focusing on Snowflake OpenFlow’s architecture, how it works, the ways in which you can use it, who its competitors are, and where its strengths and limitations lie, with a special focus on the 2026 data landscape.

Key Takeaways

Snowflake OpenFlow enables in-flight data processing. It allows teams to transform, enrich, and route data before it lands in Snowflake.

OpenFlow is built on Apache NiFi via the Datavolo acquisition. As such, it brings flow-based programming and visual orchestration into the Snowflake platform.

OpenFlow is best suited for unstructured data, streaming ingestion, and AI pipelines. This is where traditional ELT tools fall short.

OpenFlow complements tools like Estuary and Fivetran. It trades ultra-low latency and simplicity for flexibility and customization.

What Is Snowflake OpenFlow?

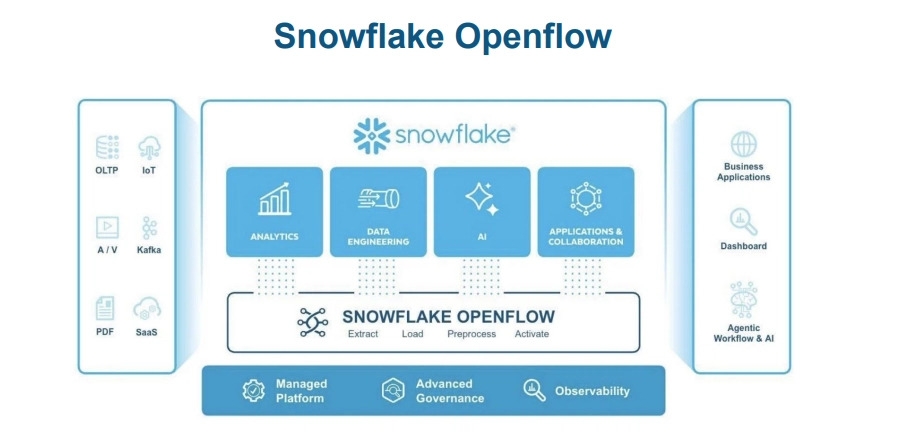

Snowflake OpenFlow is an integration service that connects virtually any data source to any destination. It features hundreds of processors that support structured and unstructured text, images, audio, video, and sensor data.

Most connectors simply move data from one place to another, but OpenFlow is a flow-based orchestration engine, which means it lets engineers process data while it’s still “flowing”, before it even touches a Snowflake table.

Let’s circle back to the classic ELT process. We start by loading the raw data into the Bronze layer. Then, we use SQL to transform it and clean it before moving it into the Silver layer. This setup works just fine for CSVs or JSON, but when we apply it to other types of data and processes, it starts to break down. Some examples include:

- Unstructured data: SQL won’t be able to extract what you need from a PDF or an image.

- Real-time routing: In some cases, the same data stream must be routed to multiple destinations, like a vector database and an Iceberg lake, and SQL is not capable of achieving that.

- Complex API logic: If you are dealing with multi-step OAuth2 flows or paginated REST APIs, SQL won’t be able to help you out.
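To make the paginated-API case concrete, here is a minimal Python sketch of the loop that SQL cannot express. It is not OpenFlow code: `fetch_page` is a hypothetical stand-in for an authenticated HTTP call, and the cursor-based response shape (`items`, `next_cursor`) is an assumption made for illustration.

```python
from typing import Callable, Iterator, Optional

# Hypothetical in-memory "API": three pages linked by a cursor.
FAKE_PAGES = {
    None: {"items": [1, 2], "next_cursor": "p2"},
    "p2": {"items": [3, 4], "next_cursor": "p3"},
    "p3": {"items": [5], "next_cursor": None},
}


def fetch_page(cursor: Optional[str]) -> dict:
    """Stand-in for an authenticated HTTP GET that returns one page."""
    return FAKE_PAGES[cursor]


def iter_records(fetch: Callable[[Optional[str]], dict]) -> Iterator[int]:
    """Follow next_cursor links until the API reports no further page."""
    cursor = None
    while True:
        page = fetch(cursor)
        yield from page["items"]
        cursor = page["next_cursor"]
        if cursor is None:
            break


records = list(iter_records(fetch_page))
```

The loop carries state (the cursor) from one request to the next, which is exactly the kind of step-by-step logic a flow engine handles and a set-based SQL query does not.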

Datavolo Acquisition and Flow-Based Programming (FBP) Model

Even though the acquisition was mentioned earlier, it’s worth revisiting, since it was a strategic move to bring flow-based programming (FBP) into the warehouse. It effectively brings Apache NiFi 2.0 into Snowflake. NiFi was originally developed at the NSA to manage massive, complicated data streams.

Now, with OpenFlow, you get all that NiFi power without the burden of managing servers, complex technical setups, or dedicated teams.

Architecture: Control Plane vs. Data Plane

Snowflake uses a modern split-plane architecture to keep your data secure while allowing your system to scale easily.

Control Plane

You can access the control plane directly through Snowsight (Snowflake’s web interface). Through your workspace, you can visually build your flows by dragging and dropping processors, connecting them, and managing versions with Git. This plane is in charge of all the instructions and metadata. However, I should note that the actual data never touches this plane, which helps keep information private and secure.

Data Plane (“Runtime”)

This is where the actual data processing occurs. It can be deployed in two ways:

- Managed (SPCS): The runtime runs inside Snowpark Container Services. It is a fully managed option operated by Snowflake, and it automatically scales based on your credit usage.

- BYOC (Bring Your Own Cloud): If your company needs to keep everything in-house due to strict data residency requirements (or other compliance reasons), you can deploy an OpenFlow Agent in your own VPC on AWS or Azure. Your data remains local, but instructions still come from the Snowflake Control Plane.

Image Registry

Snowflake uses a system image registry to ensure the platform remains secure. When you add a new processor (such as a Python tool to read PDFs), the data plane agent pulls a signed and verified container image from this registry. This guarantees that every piece of code running in your environment is authorized and kept up to date.

FlowFiles in Snowflake OpenFlow: Content, Metadata, and Backpressure

Every piece of information is wrapped in a FlowFile, which has two main components. The content refers to the actual data payload, such as the raw bytes of an image or a specific row from a spreadsheet. The attributes store key-value metadata attached to the payload, like the file’s origin, its unique ID, or the type of data it contains.
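As a rough mental model, a FlowFile can be pictured as a small record with a payload and a metadata dictionary. The Python sketch below is illustrative only and is not the NiFi or OpenFlow class; the `FlowFile` dataclass, the helper functions, and the attribute names are assumptions chosen for the example.

```python
import uuid
from dataclasses import dataclass, field
from typing import Dict


@dataclass(frozen=True)
class FlowFile:
    """Illustrative model: a payload plus key-value metadata."""
    content: bytes
    attributes: Dict[str, str] = field(default_factory=dict)


def make_flowfile(content: bytes, filename: str, mime_type: str) -> FlowFile:
    """Wrap a payload and attach identifying metadata (names are assumed)."""
    attrs = {
        "uuid": str(uuid.uuid4()),
        "filename": filename,
        "mime.type": mime_type,
    }
    return FlowFile(content=content, attributes=attrs)


def route_by_mime(ff: FlowFile) -> str:
    """Pick a destination from the metadata alone, without reading the payload."""
    if ff.attributes["mime.type"] == "application/pdf":
        return "documents"
    return "other"


ff = make_flowfile(b"%PDF-1.7 ...", "invoice.pdf", "application/pdf")
destination = route_by_mime(ff)
```

Keeping metadata separate from the payload is what lets routing decisions stay cheap: the example chooses a destination without touching the bytes of the file.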

Handling “Spiky” Data

Backpressure helps handle sudden surges of data. As such, it's one of OpenFlow's greatest assets.

In traditional pipelines, a spike in data can crash the consumer. With OpenFlow, however, every connection acts as a safety buffer. You can also set limits (for instance, “pause if the queue reaches 5,000 FlowFiles”) to give the ingestion engine room to breathe and prevent the runtime from crashing during peak loads.
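The idea behind a backpressure threshold can be sketched with a bounded queue. This is a simplified illustration in Python, not how the runtime is implemented: the `Connection` class and its method names are hypothetical, and the threshold is set to 3 rather than 5,000 to keep the example short.

```python
import queue


class Connection:
    """A bounded buffer between two processors (illustrative only)."""

    def __init__(self, max_flowfiles: int) -> None:
        self._queue = queue.Queue(maxsize=max_flowfiles)

    def offer(self, item: bytes) -> bool:
        """Enqueue if there is room; return False when the threshold is hit."""
        try:
            self._queue.put_nowait(item)
            return True
        except queue.Full:
            # The upstream processor should pause instead of overloading
            # the downstream consumer.
            return False

    def poll(self) -> bytes:
        """Remove one item, as the downstream processor would."""
        return self._queue.get_nowait()


conn = Connection(max_flowfiles=3)
accepted = [conn.offer(f"event-{i}".encode()) for i in range(5)]

# Once the consumer drains an item, the producer can resume.
conn.poll()
accepted_after_drain = conn.offer(b"event-5")
```

Here the first three offers succeed and the next two are refused; after the consumer takes one item, the producer is accepted again. The upstream side slows down instead of the downstream side falling over.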

Snowflake Connection Service

This service acts as a bridge between NiFi and Snowflake. It manages the key-pair authentication and ensures that the NiFi stream is mapped to the right Snowflake endpoint.

Let’s have a look at a JSON representation of a Controller Service config:

```json
{
  "controllerService": "SnowflakeConnectionService",
  "properties": {
    "accountUrl": "https://xy12345.snowflakecomputing.com",
    "user": "OPENFLOW_INGEST_USER",
    "privateKey": "${snowflake_private_key_secret}",
    "warehouse": "INGEST_WH",
    "database": "RAW_DB",
    "schema": "PUBLIC"
  }
}
```

Note: The privateKey is mapped directly to a Snowflake Secret to ensure credentials are not exposed in plaintext on the canvas.

High-Value Data Ingestion Patterns in Snowflake OpenFlow

OpenFlow is an ingestion and replication tool rather than a full-scale transformation (ETL) engine. It is designed to move raw or lightly processed data into Snowflake efficiently, with native AI integration, and it focuses on the "E" (Extract) and "L" (Load) phases so that data lands in a query-ready state. This section introduces five high-value patterns used in the industry today:

Pattern 1: Database Replication and CDC

With OpenFlow, you no longer need to poll your source database with repeated SELECT * queries. Instead, it uses log-based Change Data Capture (CDC) to stream changes as they happen.

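```sql
-- Run on the source Postgres database.
-- Note: a change to wal_level only takes effect after a server restart.
ALTER SYSTEM SET wal_level = logical;

-- Publish changes for every table in the database.
CREATE PUBLICATION snowflake_export FOR ALL TABLES;

-- Create a logical replication slot that uses the built-in pgoutput plugin.
SELECT * FROM pg_create_logical_replication_slot('snowflake_slot', 'pgoutput');
```

The CaptureChangePostgreSQL processor reads the database logs and creates FlowFiles for every insert, update, and delete. These then stream directly into Snowflake.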
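Conceptually, a log-based CDC consumer replays a stream of change events against a copy of the table. The Python sketch below illustrates that idea only; the event shape (`op`, `key`, `row`) and the `apply_changes` helper are assumptions for the example, not the format OpenFlow or Postgres actually emits.

```python
from typing import Dict, List


def apply_changes(replica: Dict[int, dict], events: List[dict]) -> Dict[int, dict]:
    """Replay insert/update/delete events, in order, against a keyed replica."""
    for event in events:
        key = event["key"]
        if event["op"] in ("insert", "update"):
            # Merge changed columns into the existing row (or create it).
            replica[key] = {**replica.get(key, {}), **event["row"]}
        elif event["op"] == "delete":
            replica.pop(key, None)
        else:
            raise ValueError(f"unknown operation: {event['op']}")
    return replica


# Hypothetical change events, in commit order.
events = [
    {"op": "insert", "key": 1, "row": {"name": "Ada", "plan": "free"}},
    {"op": "insert", "key": 2, "row": {"name": "Grace", "plan": "pro"}},
    {"op": "update", "key": 1, "row": {"plan": "pro"}},
    {"op": "delete", "key": 2},
]

replica = apply_changes({}, events)
```

After replaying the four events, the replica holds a single row for key 1 with the updated plan. Only the changes cross the wire, so the source table is never rescanned.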

Pattern 2: Streaming Event Ingestion

Snowpipe Streaming is Snowflake’s low-latency ingestion API designed for real-time event data. This method sends data directly to a table and skips the staging step entirely.

```sql
CREATE OR REPLACE TABLE raw_events (
  -- Snowflake has no native UUID type, so the ID is stored as a 36-character string
  event_id VARCHAR(36),
  payload VARIANT,
  processed_at TIMESTAMP_NTZ DEFAULT CURRENT_TIMESTAMP()
);
```

The PutSnowflakeStreaming processor sends NiFi records to the Snowflake table buffer and is capable of achieving latencies as low as 2 seconds.

Pattern 3: Internal Staging

If you’re building a RAG application, you’ll need a place to land PDFs or audio files. In this case, you can use PutSnowflakeInternalStage to upload these files directly to Snowflake.

```sql
CREATE OR REPLACE STAGE docs_stage
  DIRECTORY = (ENABLE = TRUE)
  ENCRYPTION = (TYPE = 'SNOWFLAKE_SSE');

-- Querying the metadata of the binary files
SELECT * FROM DIRECTORY(@docs_stage);
```

OpenFlow moves the files from the source (such as a local SFTP) into the stage. Snowflake’s Directory Tables make these files immediately available to your AI functions, no extra steps required.

Pattern 4: Managed Iceberg

Vendor lock-in is no fun, but you can avoid it by writing your data in Apache Iceberg format while letting Snowflake manage the catalog.

```sql
CREATE OR REPLACE ICEBERG TABLE customer_data (
  id INT,
  name STRING
)
  EXTERNAL_VOLUME = 'my_s3_volume'
  CATALOG = 'SNOWFLAKE'
  -- Directory under the external volume for this table's files (illustrative path)
  BASE_LOCATION = 'customer_data';
```

The PutIcebergTable processor writes Parquet files to your S3 bucket and updates the table metadata. As a result, engines like Apache Spark can query the data immediately.

Pattern 5: The Cortex AI Transform

This is arguably OpenFlow’s best feature. Basically, you can extract entities from your data stream before it lands in a table, which allows you to clean or enrich your data in-flight.

Let’s have a look at the conceptual logic of the Cortex processor:

```sql
-- Logic executed in-flight by the Cortex Processor
-- Conceptual pseudo-SQL: the function name and the attribute.content
-- reference are illustrative, not a confirmed Cortex API
SELECT SNOWFLAKE.CORTEX.EXTRACT_ENTITIES(
  attribute.content,
  ['company', 'person', 'location']
);
```

We place a CortexProcessor between a source and a sink (destination). It then sends the content of each FlowFile to an LLM, extracts entities, and stores them as attributes that can be used in your table.
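The in-flight enrichment step can be sketched as a function that takes a FlowFile, calls an extractor, and returns a new FlowFile with extra attributes. This Python example is a simplified illustration: the `FlowFile` dataclass is a hypothetical model, and `stub_extract_entities` is a hard-coded placeholder standing in for the LLM call, not a real Cortex function.

```python
from dataclasses import dataclass, field, replace
from typing import Callable, Dict, List


@dataclass(frozen=True)
class FlowFile:
    """Illustrative model: text content plus key-value attributes."""
    content: str
    attributes: Dict[str, str] = field(default_factory=dict)


def stub_extract_entities(text: str) -> Dict[str, List[str]]:
    """Placeholder for a model call: matches a tiny hard-coded word list."""
    known = {"company": ["Snowflake", "Datavolo"], "location": ["Berlin"]}
    return {
        label: [name for name in names if name in text]
        for label, names in known.items()
    }


def enrich(ff: FlowFile, extractor: Callable[[str], Dict[str, List[str]]]) -> FlowFile:
    """Return a copy of the FlowFile with extracted entities as attributes."""
    new_attrs = dict(ff.attributes)
    for label, values in extractor(ff.content).items():
        new_attrs[f"entities.{label}"] = ",".join(values)
    return replace(ff, attributes=new_attrs)


ff = FlowFile("Snowflake acquired Datavolo.", {"filename": "news.txt"})
enriched = enrich(ff, stub_extract_entities)
```

The payload is left untouched and the results travel alongside it as attributes, so a downstream sink can map them to table columns. Passing the extractor in as a parameter makes it easy to swap the stub for a real model call.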

Security and Governance in Snowflake OpenFlow

OpenFlow integrates directly into Snowflake’s security framework, so ingestion pipelines follow the same role-based access control and encryption standards as your data warehouse. Let’s look at the main mechanisms in more detail:

RBAC (Role-Based Access Control)

To build a flow, you need to have the OPENFLOW_ADMIN role. Moreover, for it to run successfully, the data plane must have a role that has the USAGE grant on the specific warehouse and the INSERT grant on the target table. Otherwise, the flow may encounter permission issues.

Secrets Management

When your code or app needs to connect to an external system (like Salesforce or Stripe), it typically requires an API_KEY. Typing this secret value directly into your code is risky, especially if the code is publicly available. A safer approach is to reference the secret value using Snowflake secrets management, such as: SECRET_VALUE = ${secrets.api_key}.

That way, the secret value isn’t stored in your code.
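The mechanism behind a `${...}` reference is simple placeholder substitution at run time. The Python sketch below shows the idea under stated assumptions: the `resolve` helper, the config keys, and the in-memory `runtime_secrets` dictionary are all hypothetical, and a real deployment would fetch the value from Snowflake's secret store instead.

```python
import re
from typing import Dict

# Matches references of the form ${name}.
PLACEHOLDER = re.compile(r"\$\{([^}]+)\}")


def resolve(config: Dict[str, str], secrets: Dict[str, str]) -> Dict[str, str]:
    """Return a copy of config with every ${name} replaced by its secret."""

    def lookup(match: re.Match) -> str:
        name = match.group(1)
        if name not in secrets:
            raise KeyError(f"unknown secret reference: {name}")
        return secrets[name]

    return {key: PLACEHOLDER.sub(lookup, value) for key, value in config.items()}


# What gets stored and versioned: only the reference, never the value.
stored_config = {
    "endpoint": "https://api.example.com",
    "api_key": "${secrets.api_key}",
}

# Hypothetical secret store, available only at run time.
runtime_secrets = {"secrets.api_key": "s3cr3t-value"}

resolved = resolve(stored_config, runtime_secrets)
```

The stored configuration never contains the real value, so it can be committed or shared safely, and an unknown reference fails loudly instead of silently sending an empty key.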

Data Lineage

Every action in OpenFlow is recorded and can be reviewed in Snowflake Trail. You can access these logs at any time and trace what happened in case something has gone wrong.

Snowflake OpenFlow vs. Estuary vs. Fivetran: The 2026 Data Integration Landscape

The right tool for your data pipeline depends on whether you prioritize simplicity, real-time speed, or native AI capabilities. In this section, we compare OpenFlow against its biggest competitors:

OpenFlow vs. Estuary

Estuary is good for moving data instantly. Even though it combines batch and streaming into a single platform, it’s designed for streaming above all else. You should choose it if you need your data to be identical in two places at the same time (subsecond latency).

On the other hand, OpenFlow is a great choice if you are dealing with “messy” data, such as files and complex APIs, or when you need to run AI models on the data during ingestion.

OpenFlow vs. Fivetran

Fivetran is very easy to set up. You just provide your login details and watch it automatically move your data into your storage. It’s ideal for standard SaaS-to-warehouse pipelines where you don’t want to manage a canvas. It works particularly well with popular apps everyone uses, like Salesforce or Zendesk.

As in the previous comparison, OpenFlow is the better fit for unstructured data, or for situations where Fivetran’s settings aren’t flexible enough for your use case.

Estuary vs. OpenFlow vs. Fivetran

To summarize, the following table compares Estuary, OpenFlow, and Fivetran across use cases, latency, pricing, transformation style, AI readiness, CDC, unstructured data handling, and governance and security.

| | Estuary | Snowflake OpenFlow | Fivetran |
|---|---|---|---|
| Ideal use cases | Real-time dashboards, operational syncing, database data migration | Complex AI pipelines, unstructured data ingestion | Standard marketing/sales analytics (Salesforce, Google Ads, and similar) |
| Minimum latency | Sub-100 ms | ~2 s | 1 minute (real-time requires self-hosted HVR) |
| Pricing model | Volume-based | Compute-based | Row-based |
| Transformation style | Streaming ETL | Visual flow (300+ drag & drop processors) | Post-load ELT (primarily uses dbt once data is already in the warehouse) |
| AI/LLM readiness | High (native vector database sinks with Pinecone and AI API calling) | Excellent (access to Snowflake Cortex AI) | Limited (provides data models for RAG, but doesn’t have real-time AI processing) |
| CDC method | Log-based (WAL/Binlog); highly efficient for databases | Log-based via NiFi processors (CaptureChangePostgreSQL, and similar) | Log-based; very reliable but can be expensive at high volumes |
| Unstructured data handling | Good (handles files and JSON streams well) | Excellent (specifically built for this kind of data) | Minimal (primary focus is on structured/tabular data from SaaS APIs) |
| Governance and security | SOC2, HIPAA; external to warehouse | Native (inherits Snowflake RBAC, secrets, and Horizon governance) | SOC2, HIPAA, ISO; external to warehouse |

Pricing and Lock-In

Every company takes vendor lock-in into account. Although OpenFlow is powered by Apache NiFi, the managed features are Snowflake-only, which means you have to be a Snowflake user.

Refer to pattern 4 earlier in this article to see how to avoid vendor lock-in.

You will have to pay for OpenFlow using Snowflake Credits, but there’s also the option of bringing your own cloud (BYOC) if that’s what you prefer. This may also help you save on high-bandwidth jobs since you bypass Snowflake’s compute markup and only pay for your own vCPU and RAM. In any case, pricing is based on the runtime size (small, medium, or large nodes, which we will discuss further in the following section) and how long those nodes are active. Essentially, you are paying for the service to stay active regardless of your data volume.

By contrast, Fivetran uses a Monthly Active Rows (MAR) model, which charges for the rows inserted or updated each month. If your data volume is low, Fivetran is often cheaper, but if you have massive datasets, the per-row cost can escalate quickly.

Similarly, Estuary uses a “pay-for-what-you-use” model; however, it bills you based on how much data you move (GB per month) rather than the number of rows or the size of the machine.
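The difference between the three billing models is easiest to see with some arithmetic. The Python sketch below compares them for a light and a heavy workload. Every rate in it is a made-up placeholder chosen for round numbers, not a vendor price, and the function names are hypothetical.

```python
def compute_based_cost(hours_active: float, credits_per_hour: float,
                       price_per_credit: float) -> float:
    """Runtime billing: cost depends on uptime, not on data volume."""
    return hours_active * credits_per_hour * price_per_credit


def row_based_cost(active_rows: int, price_per_million_rows: float) -> float:
    """Row billing: cost grows with the number of rows touched."""
    return active_rows / 1_000_000 * price_per_million_rows


def volume_based_cost(gb_moved: float, price_per_gb: float) -> float:
    """Volume billing: cost grows with the gigabytes moved."""
    return gb_moved * price_per_gb


def monthly_costs(active_rows: int, gb_moved: float) -> dict:
    # All rates below are placeholders for illustration, NOT real prices.
    return {
        "compute_based": compute_based_cost(720, 1.0, 3.0),  # always-on runtime
        "row_based": row_based_cost(active_rows, 20.0),
        "volume_based": volume_based_cost(gb_moved, 0.5),
    }


light = monthly_costs(active_rows=5_000_000, gb_moved=50)
heavy = monthly_costs(active_rows=500_000_000, gb_moved=5_000)
```

With these placeholder rates, the compute-based figure is identical for both workloads, while the row-based and volume-based figures grow a hundredfold. That is the trade-off in a nutshell: a fixed cost for keeping the runtime active versus a cost that tracks how much data you move.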

Snowflake OpenFlow Performance, Scaling, and Limitations

Scaling the Runtime

There are three runtime options available, which you can choose from depending on your specific use case:

- Small: 1 vCPU / 2GB RAM (normally a good option for light API polling)

- Medium: 4 vCPU / 16GB RAM (works well for standard CDC and Snowpipe Streaming)

- Large: 16 vCPU / 64GB RAM (when dealing with heavy AI/ML or image processing)

Limitations

OpenFlow may be very powerful, but there are a few things to keep in mind before deciding if it’s the right fit for your team.

First, its regional availability is still limited to AWS and Azure, with many Google Cloud (GCP) regions still in the preview phase. If you are on GCP, I suggest that you wait until your region is fully available.

Second, there’s a steep learning curve since the platform is built on Apache NiFi. You will need some basic knowledge of flow-based programming to be able to use this "hands-on" tool.

Finally, not all connectors are available. The most popular ones are, but if you need a less common or niche ELT connector, you may be out of luck. You can still build your own logic, but it won’t be as fast or as convenient as using one of the provided and maintained connectors.

Conclusion

Snowflake OpenFlow represents arguably the most significant change to the Snowflake ingestion story in a decade. With it, Snowflake’s ingestion layer has gone from a basic loader to a powerful orchestrator that sits at the center of the AI pipeline. Beyond data movement, it provides a visual, well-governed, and scalable infrastructure for building pipelines.

For data engineers in 2026, the question is no longer “How do I move this data?” but “How much value can I add to this data before it lands?” If this issue has been on your mind lately, OpenFlow might just be what you’re looking for.

FAQs

What are the planes in Snowflake OpenFlow?

What is a FlowFile?

How does OpenFlow handle surges of data?

Does OpenFlow integrate with LLMs?

Is OpenFlow better than Fivetran or Estuary?

What’s OpenFlow’s pricing model?

About the author

Ana is a results-driven Data Platform Engineer with a focus on building scalable, high-performance architectures. Combining a passion for emerging technologies with a commitment to continuous technical evolution, she specializes in engineering the foundational platforms that power (real-time) data initiatives. She's dedicated to the philosophy that 'the path is made by walking', continuously upskilling to solve complex engineering challenges.