Introduction

MongoDB and Elasticsearch are built for different things. MongoDB stores documents and handles transactional reads and writes at scale. Elasticsearch indexes those documents and makes them searchable across millions of records in milliseconds. Using them together is a common and well-established pattern: MongoDB as the system of record, Elasticsearch as the search and analytics layer on top of it.

The challenge is keeping them in sync. Every time a document is created, updated, or deleted in MongoDB, that change needs to reach Elasticsearch quickly enough to keep search results accurate. For a product catalog, a user profile system, or a content platform, stale search results directly affect user experience. For a fraud detection or monitoring system, stale data affects business outcomes.

If you have searched for how to do this and found articles recommending the MongoDB River plugin or Mongo Connector, ignore them. Rivers were deprecated in Elasticsearch 1.5 and removed entirely in 2.0, both released in 2015, so the River plugin has not worked for a decade. Mongo Connector was deprecated by MongoDB in 2020 and the repository is now archived. Neither is a viable option today, and any guide still recommending them is outdated and will waste your time.

This guide covers the four methods that actually work in 2026, what each one is suited for, and how to set each one up correctly.

What this guide covers:

- The native Elastic MongoDB Connector for straightforward self-managed sync

- Monstache for real-time open source sync using MongoDB change streams

- Logstash with the MongoDB input plugin for teams already using the Elastic stack

- Estuary for fully managed real-time CDC with no infrastructure to operate

- How MongoDB BSON documents map to Elasticsearch index mappings

- Troubleshooting the most common sync failures

Why Syncing MongoDB to Elasticsearch is Harder Than It Looks

On the surface, moving data from MongoDB to Elasticsearch seems straightforward. Both store JSON-like documents, both are schema-flexible, and both have mature client libraries in every major language. The reality is more complicated.

- The data model mismatch is significant. MongoDB documents can contain deeply nested objects, arrays of mixed types, and fields that change type between documents in the same collection. Elasticsearch requires an explicit or inferred index mapping where every field has a defined type. When a nested MongoDB document arrives in Elasticsearch without a correctly configured mapping, Elasticsearch either rejects it, flattens it incorrectly, or maps it as a generic object that cannot be queried efficiently.

- Deletes do not propagate automatically. When a document is deleted in MongoDB, nothing in Elasticsearch knows about it unless your sync mechanism explicitly handles delete events. Batch sync tools that only read current documents will never catch deletes, leaving stale documents in your Elasticsearch index indefinitely. For search use cases this means deleted products, deactivated users, or removed content continue appearing in search results.

- Change streams require a replica set. The most reliable real-time sync methods for MongoDB use change streams, which require MongoDB to be running as a replica set or on MongoDB Atlas. A standalone MongoDB instance does not support change streams. If you are running a standalone instance and need real-time sync, you either need to convert it to a replica set first or use a batch polling approach with its inherent latency tradeoffs.

- Index mappings conflict with schema evolution. MongoDB collections have no enforced schema, so new fields appear in documents over time as the application evolves. In Elasticsearch, adding a new field type that conflicts with an existing mapping causes indexing failures. Without a strategy for handling schema evolution, a sync pipeline that works today breaks silently when a developer adds a new field to a MongoDB document next month.

Understanding these four problems upfront helps you choose the right sync method and configure it correctly the first time.
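The delete problem above comes down to whether the sync layer turns delete events into delete requests. Here is a minimal sketch in JavaScript, assuming events shaped like MongoDB change stream events (`operationType`, `documentKey`, `fullDocument`) and output in the newline-delimited format of the Elasticsearch bulk API. The function name `toBulkLines` and the index name are hypothetical and do not come from any tool covered in this guide.

```javascript
// Sketch: translate change-stream-style events into Elasticsearch bulk API lines.
// toBulkLines and the index name are illustrative, not part of any real tool.
const UPSERT_TYPES = ["insert", "update", "replace"];

function toBulkLines(events, indexName) {
  const lines = [];
  for (const ev of events) {
    if (ev.operationType === "delete") {
      // Delete events carry only the document key, never the document body.
      lines.push(JSON.stringify({ delete: { _index: indexName, _id: String(ev.documentKey._id) } }));
    } else if (UPSERT_TYPES.includes(ev.operationType) && ev.fullDocument) {
      // _id travels in the action line; Elasticsearch rejects it inside the body.
      const { _id, ...source } = ev.fullDocument;
      lines.push(JSON.stringify({ index: { _index: indexName, _id: String(ev.documentKey._id) } }));
      lines.push(JSON.stringify(source));
    }
    // Any other event type (drop, invalidate, ...) is ignored in this sketch.
  }
  return lines;
}

const sampleEvents = [
  { operationType: "insert", documentKey: { _id: "p1" }, fullDocument: { _id: "p1", name: "Lamp" } },
  { operationType: "delete", documentKey: { _id: "p2" } },
];
console.log(toBulkLines(sampleEvents, "products").join("\n"));
```

A batch tool that only reads current documents never sees the second event, which is why the deleted document would stay in the index.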

Choosing the Right Sync Method

The right method depends on three things: whether your MongoDB instance supports change streams, how much infrastructure you want to operate, and how fresh your Elasticsearch data needs to be.

- Native Elastic MongoDB Connector is the simplest option for teams that want an officially supported tool without writing code. It handles both initial sync and ongoing updates. Best for teams already on Elastic Cloud or running a managed Elastic deployment.

- Monstache is the most widely used open source option for real-time sync. It reads from MongoDB change streams, handles deletes correctly, and supports index mapping configuration. Best for teams that want real-time sync, are comfortable operating a lightweight Go daemon, and want full control over the sync behavior.

- Logstash with the MongoDB input plugin suits teams already running the ELK stack who want to add MongoDB as a data source. It is a batch polling approach rather than true real-time sync, which makes it appropriate for use cases where data freshness of a few minutes is acceptable.

- Estuary is the fully managed option. It uses MongoDB change streams to capture inserts, updates, and deletes in real time and materializes them into Elasticsearch indices continuously. No infrastructure to provision, no daemon to operate, and no custom code to write or maintain.

| | Native Elastic Connector | Monstache | Logstash | Estuary |
|---|---|---|---|---|
| Real-time sync | No (scheduled, typically minutes) | Yes | No (batch) | Yes |
| Handles deletes | Yes | Yes | No | Yes |
| Requires replica set | Yes | Yes | No | Yes |
| Infrastructure to operate | Moderate | Low | Moderate | None |
| Supports MongoDB Atlas | Yes | Yes | Yes | Yes |
| Supports self-hosted MongoDB | Yes | Yes | Yes | Yes |
| Supports Amazon DocumentDB | No | Partial | Yes | Yes |
| Supports Azure Cosmos DB | No | No | Yes | Yes |
| Open source | Yes | Yes | Yes | No |
| Setup complexity | Low | Moderate | Moderate | Low |

Method 1 — Native Elastic MongoDB Connector

The native Elastic MongoDB Connector is an officially supported connector maintained by Elastic. It handles both the initial full sync and ongoing incremental updates, and it runs either as a managed connector on Elastic Cloud or as a self-hosted connector on your own infrastructure.

Prerequisites:

- MongoDB 3.6 or later running as a replica set, or MongoDB Atlas

- Elasticsearch 8.5.1 or later, or an Elastic Cloud deployment

- A MongoDB user with `read` permission on the databases you want to sync

- Network access between the connector and both MongoDB and Elasticsearch

Setting up the connector on Elastic Cloud:

Go to your Elastic Cloud deployment and navigate to Search, then Connectors. Click New Connector and select MongoDB from the connector catalog.

Fill in the connection details:

- MongoDB connection string in the format `mongodb+srv://username:password@cluster.mongodb.net` for Atlas, or `mongodb://username:password@hostname:27017` for self-hosted

- Database name to sync from

- Collection name, or leave blank to sync all collections in the database

Click Save and run a full sync to load existing documents into Elasticsearch. Once the full sync completes, enable incremental sync to capture ongoing changes using MongoDB change streams.

Elastic creates an index in Elasticsearch for each MongoDB collection, named using the format database-name.collection-name by default.

Setting up the connector as self-hosted:

If you are not on Elastic Cloud, you can run the connector as a service on your own infrastructure using the Elastic connector framework:

```bash
# Install the connector service
pip install elastic-connectors

# Configure and run
elastic-ingest --config config.yml
```

Your config.yml needs the Elasticsearch endpoint, API key, and MongoDB connection details. The full configuration reference is in Elastic's connector documentation.

Limitations to be aware of:

The native connector does not support MongoDB views or time series collections, only standard collections. It also does not support Amazon DocumentDB or Azure Cosmos DB, even though both use MongoDB-compatible APIs. For those environments use Estuary or Logstash instead.

Incremental sync frequency on the self-hosted version depends on your polling configuration. On Elastic Cloud the managed connector runs incremental syncs on a schedule you define, typically every few minutes rather than true real-time sub-second latency.

Method 2 — Monstache

Monstache is an open source sync daemon written in Go that reads from MongoDB change streams and indexes documents into Elasticsearch in real time. It is actively maintained, widely used in production, and handles the four core sync problems correctly: real-time updates, deletes, schema mapping, and resume after failure.

Unlike the native Elastic connector which runs on a sync schedule, Monstache listens to the MongoDB change stream continuously and pushes changes to Elasticsearch within milliseconds of them occurring in MongoDB.

Prerequisites:

- MongoDB 3.6 or later running as a replica set or on MongoDB Atlas

- Elasticsearch 7.x or 8.x

- Go 1.20 or later if building from source, or use the pre-built binary

Installation:

Download the latest release binary from the Monstache GitHub releases page:

```bash
# Linux
wget https://github.com/rwynn/monstache/releases/download/v6.7.12/monstache-linux-amd64.zip
unzip monstache-linux-amd64.zip
chmod +x monstache
```

Or build from source:

```bash
# The v6 module path is required; without it Go resolves an older major version
go install github.com/rwynn/monstache/v6@latest
```

Basic configuration:

Monstache uses a TOML configuration file. A minimal configuration that syncs a single MongoDB collection to Elasticsearch looks like this:

```toml
mongo-url = "mongodb://username:password@hostname:27017"
elasticsearch-urls = ["https://your-elasticsearch-host:9200"]
elasticsearch-user = "elastic"
elasticsearch-password = "your-password"

# Sync specific namespaces (database.collection format)
namespace-regex = '^mydb\.products$'

# Enable change stream mode (default, requires replica set)
change-stream-namespaces = ["mydb.products"]
```

Run Monstache with your config file:

```bash
./monstache -f config.toml
```

Handling deletes:

By default Monstache propagates deletes from MongoDB to Elasticsearch automatically. When a document is deleted in MongoDB, Monstache receives the delete event from the change stream and issues a delete request to Elasticsearch for the corresponding document. No additional configuration is required.

Configuring index naming:

By default Monstache creates Elasticsearch indices using the MongoDB namespace format database.collection. You can override this with a custom index mapping:

```toml
[[mapping]]
namespace = "mydb.products"
index = "products"
```

Handling resume after failure:

Monstache can persist its position in the MongoDB change stream so that it continues from where it left off after a restart. This is off by default. When resume is enabled, Monstache writes its progress to MongoDB, in the monstache.monstache collection unless you change the config database name. Restarting is then safe without data loss as long as the saved position is still inside the MongoDB oplog window.

Enable resume and name the resume record explicitly:

```toml
resume = true
resume-name = "monstache-resume"
```

Running Monstache in production:

For production deployments, run Monstache as a system service using systemd to ensure it restarts automatically after failures:

```ini
# Create a systemd service file at /etc/systemd/system/monstache.service
[Unit]
Description=Monstache MongoDB to Elasticsearch sync
After=network.target

[Service]
ExecStart=/usr/local/bin/monstache -f /etc/monstache/config.toml
Restart=always
RestartSec=5

[Install]
WantedBy=multi-user.target
```

Enable and start the service:

```bash
systemctl enable monstache
systemctl start monstache
```

Limitations:

Monstache requires a MongoDB replica set. It does not work with standalone MongoDB instances. It also does not officially support Amazon DocumentDB, which implements only a subset of the MongoDB change stream API. For DocumentDB use Estuary's dedicated DocumentDB connector instead.

Method 3 — Logstash with MongoDB Input Plugin

Logstash is part of the Elastic stack and the natural choice for teams already running ELK infrastructure who want to add MongoDB as a data source. It polls MongoDB on a defined schedule rather than using change streams, making it a batch sync approach rather than real-time. For use cases where data freshness of a few minutes is acceptable, it works reliably without requiring a MongoDB replica set.

Prerequisites:

- Logstash 7.x or 8.x

- MongoDB 3.6 or later, standalone or replica set

- Elasticsearch 7.x or 8.x

- The `logstash-input-mongodb` plugin

Install the MongoDB input plugin:

```bash
bin/logstash-plugin install logstash-input-mongodb
```

Verify the installation:

```bash
bin/logstash-plugin list | grep mongodb
```

Basic pipeline configuration:

Logstash pipelines are defined in .conf files with three sections: input, filter, and output. Here is a complete configuration that polls a MongoDB collection every 5 minutes and indexes documents into Elasticsearch:

```ruby
input {
  mongodb {
    uri => "mongodb://username:password@hostname:27017/mydb"
    placeholder_db_dir => "/tmp/logstash-mongodb"
    placeholder_db_name => "logstash_sqlite.db"
    collection => "products"
    batch_size => 5000
    generateId => true
    since_table => "products_since"
    schedule => "*/5 * * * *"
  }
}

filter {
  mutate {
    rename => { "_id" => "[@metadata][mongo_id]" }
  }
}

output {
  elasticsearch {
    hosts => ["https://your-elasticsearch-host:9200"]
    user => "elastic"
    password => "your-password"
    index => "products"
    document_id => "%{[@metadata][mongo_id]}"
  }
}
```

The key configuration fields are:

- `placeholder_db_dir` and `placeholder_db_name` define where Logstash stores its position tracking using a local SQLite database. This is how Logstash knows which documents it has already processed on subsequent runs.

- `schedule` uses cron syntax to define how often Logstash polls MongoDB. `*/5 * * * *` runs every 5 minutes.

- `batch_size` controls how many documents Logstash reads per poll. Increase this for larger collections but monitor memory usage.

- `generateId` set to `true` tells the plugin to use the MongoDB `_id` field as the Logstash document ID, which maps correctly to the Elasticsearch document ID and prevents duplicates on re-runs.

Option names and defaults differ between versions of this community plugin, so check the README of the version you installed before relying on any of them.

Handling nested MongoDB documents:

MongoDB documents with nested objects need a filter step to flatten them before indexing into Elasticsearch, otherwise they land as opaque objects that cannot be queried by field:

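```ruby
filter {
  ruby {
    # Single quotes around the script so the double-quoted Ruby string
    # inside it does not terminate the Logstash config string.
    code => '
      event.to_hash.each do |key, value|
        if value.is_a?(Hash)
          # Copy each sub-field to a top-level field (one level deep only)
          value.each do |subkey, subvalue|
            event.set("#{key}_#{subkey}", subvalue)
          end
          event.remove(key)
        end
      end
    '
  }
}
```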

This flattens a nested document like address.city into a top-level field address_city that Elasticsearch can index and query directly.

Running the pipeline:

```bash
bin/logstash -f /etc/logstash/conf.d/mongodb-to-elasticsearch.conf
```

For production, run Logstash as a managed service and place your pipeline config in /etc/logstash/conf.d/. Logstash reads all .conf files in that directory on startup.

Limitations:

Logstash with the MongoDB input plugin does not handle deletes. It only reads documents that exist in MongoDB at the time of each poll. Documents deleted from MongoDB remain in Elasticsearch until you manually remove them or rebuild the index. If your use case requires delete propagation, use Monstache or Estuary instead.

The polling approach also means changes that occur between poll intervals are not immediately visible in Elasticsearch. For most analytics and reporting use cases this is acceptable. For search experiences where users expect immediate results after a change, the latency is a meaningful limitation.

Method 4 — Sync MongoDB to Elasticsearch Using Estuary

Estuary is a managed data pipeline platform that connects MongoDB to Elasticsearch using change streams, capturing every insert, update, and delete in real time and materializing them into Elasticsearch indices continuously. Unlike Monstache or Logstash, there is no daemon to deploy, no infrastructure to operate, and no custom configuration to maintain when MongoDB schemas evolve.

Estuary's approach fits the right-time data model: you get sub-second latency when your use case demands it, or you configure a less frequent materialization cadence for workloads where that makes more sense, all within the same pipeline without changing tools.

Prerequisites:

- MongoDB 3.6 or later running as a replica set, MongoDB Atlas, Amazon DocumentDB, or Azure Cosmos DB

- An Elasticsearch cluster with a known endpoint, self-hosted or on Elastic Cloud

- An Estuary account at dashboard.estuary.dev

- Estuary IP addresses allowlisted in MongoDB and Elasticsearch if your instances restrict inbound connections

Supported MongoDB platforms:

This is where Estuary has a meaningful advantage over Monstache and the native Elastic connector. Estuary supports:

- Self-hosted MongoDB replica sets

- MongoDB Atlas

- Amazon DocumentDB via a dedicated connector variant

- Azure Cosmos DB for MongoDB via a dedicated connector variant

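If you are running DocumentDB or Cosmos DB, Estuary is the only real-time method in this guide that supports them natively. Logstash can poll them, but with the batch latency and missing delete propagation described in Method 3.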

Step 1: Create an Estuary account

Go to dashboard.estuary.dev and register for a free account using Google or GitHub credentials.

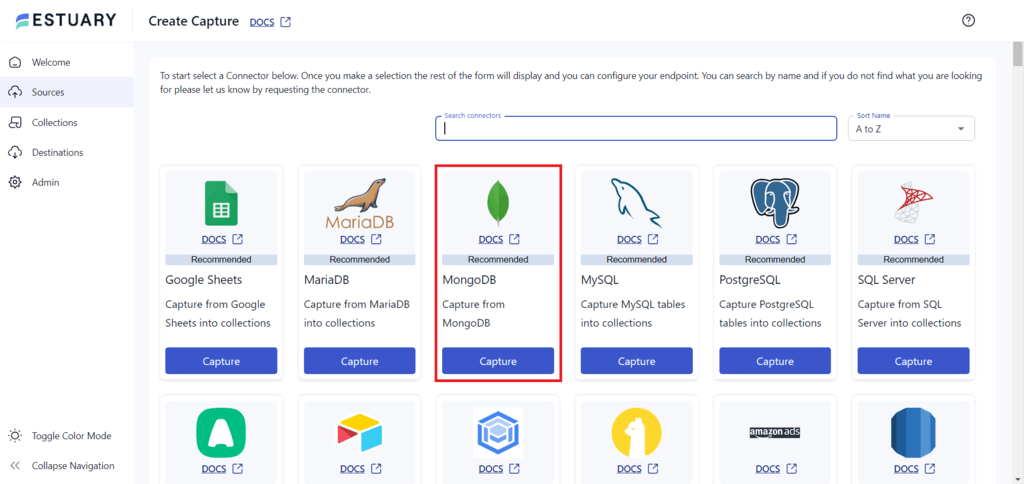

Step 2: Create a MongoDB capture

Click Sources in the left navigation, then New Capture. Search for MongoDB in the connector catalog and select the appropriate variant for your environment: MongoDB, Amazon DocumentDB, or Azure Cosmos DB.

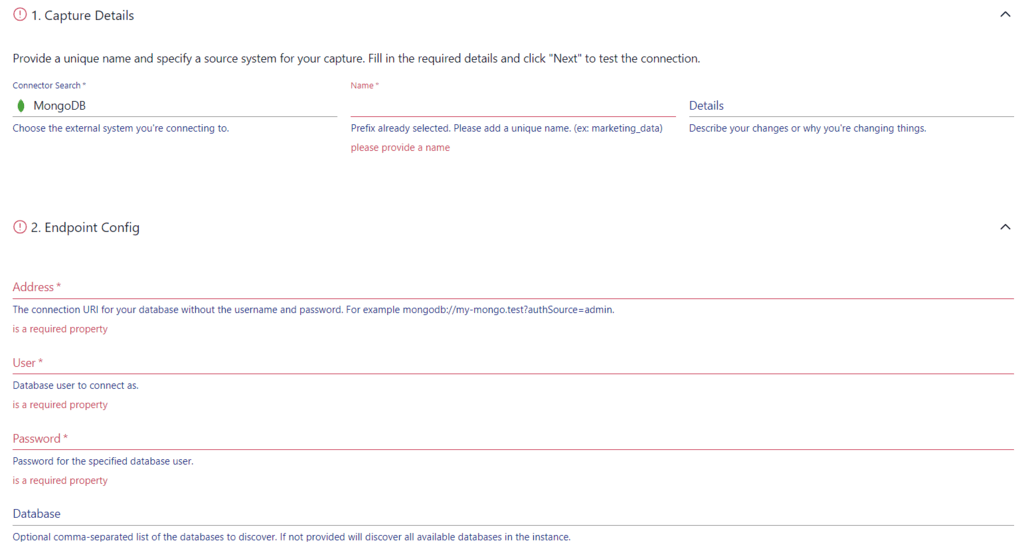

Fill in the connection details:

- Address: Your MongoDB connection string. For Atlas use the `mongodb+srv://` format. For self-hosted use `mongodb://hostname:27017`. If you are connecting with a user that has access to all databases, append `?authSource=admin` to authenticate through the admin database.

- User and Password: Credentials for a MongoDB user with read access on the databases you want to capture.

- Database: The specific database to capture from.

Click Next. Estuary connects to MongoDB and auto-detects all collections available for capture.

Step 3: Select capture mode per collection

This is a detail that matters for correctness. Estuary supports three capture modes, configured per collection:

- Change Stream Incremental is the default and preferred mode. It uses MongoDB change streams to capture inserts, updates, and deletes in real time as they occur. This requires a replica set or Atlas.

- Batch Snapshot performs a full collection scan on a defined schedule. Use this for MongoDB views or time series collections that do not support change streams, or for standalone MongoDB instances without replica set support.

- Batch Incremental scans only documents where the cursor field value is higher than the last observed value. Use this for append-only collections. For best performance, ensure the cursor field has an index in MongoDB.

For most production use cases, Change Stream Incremental is the right choice. Select your collections and click Save and Publish. Estuary immediately begins a backfill of existing documents and simultaneously listens for new change stream events so no changes are missed during the initial load.
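The reason Batch Incremental only suits append-only collections is easier to see in code. The following is a rough sketch of cursor-based scanning in general, not Estuary's implementation; the function `batchIncremental` and the field name `seq` are hypothetical.

```javascript
// Sketch of a cursor-based incremental scan (illustrative only).
// Returns the documents whose cursor value is above the last one seen,
// plus the new high-water mark to store for the next run.
function batchIncremental(docs, cursorField, lastSeen) {
  const fresh = docs
    .filter((d) => lastSeen === undefined || d[cursorField] > lastSeen)
    .sort((a, b) => (a[cursorField] < b[cursorField] ? -1 : a[cursorField] > b[cursorField] ? 1 : 0));
  const next = fresh.length > 0 ? fresh[fresh.length - 1][cursorField] : lastSeen;
  return { fresh, next };
}

// First run: everything is new.
const collection = [{ seq: 1, status: "new" }, { seq: 2, status: "new" }];
const firstRun = batchIncremental(collection, "seq", undefined);

// Between runs: one document is edited in place and one is appended.
collection[0].status = "shipped";
collection.push({ seq: 3, status: "new" });
const secondRun = batchIncremental(collection, "seq", firstRun.next);

console.log(secondRun.fresh); // only the appended document; the in-place edit is not picked up
```

Because the edit to the first document does not raise its cursor value, the scan never revisits it. Collections that are updated in place need change streams or a full snapshot instead.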

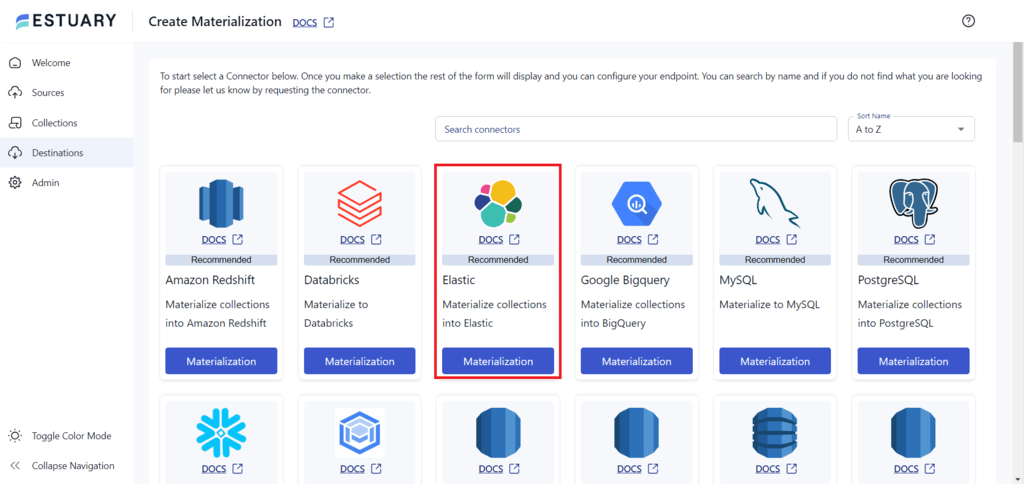

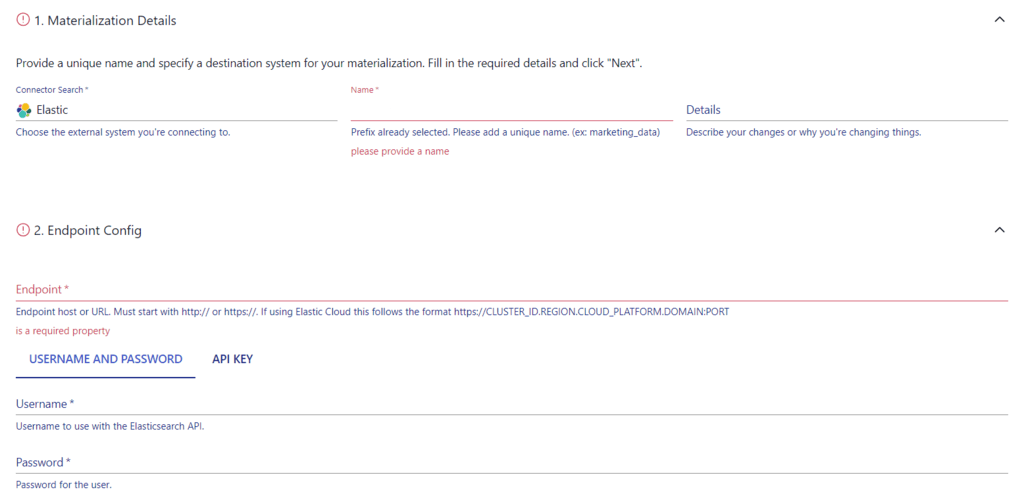

Step 4: Create an Elasticsearch materialization

Click Destinations, then New Materialization. Search for Elasticsearch in the connector catalog and select it.

Fill in the Elasticsearch connection details:

- Endpoint: Your Elasticsearch cluster URL, for example `https://your-cluster.es.io:9243` for Elastic Cloud or `https://hostname:9200` for self-hosted

- Username and Password: Credentials for an Elasticsearch user with the following index privileges on the target indices: `auto_configure`, `create_index`, `delete`, `delete_index`, `index`, `read`

Step 5: Configure index bindings

Estuary maps each MongoDB collection to an Elasticsearch index. By default, the index name matches the collection name. You can rename target indices at this stage.

For each binding, you can also select specific fields to include or exclude before data reaches Elasticsearch, which is useful for dropping internal MongoDB fields or sensitive data you do not want indexed.

Step 6: Save and publish

Click Save and Publish. Estuary begins materializing MongoDB documents into Elasticsearch indices immediately. Within minutes of publishing, you should see documents appearing in your Elasticsearch indices.

Verify the sync is working by querying Elasticsearch directly:

```bash
curl -X GET "https://your-elasticsearch-host:9200/your-index/_count" \
  -u elastic:your-password
```

The document count should match your MongoDB collection count once the initial backfill completes.

To verify real-time sync, insert a document in MongoDB and query Elasticsearch for it within seconds:

```bash
curl -X GET "https://your-elasticsearch-host:9200/your-index/_search" \
  -u elastic:your-password \
  -H "Content-Type: application/json" \
  -d '{"query": {"match": {"field_name": "test_value"}}}'
```

Why Estuary handles the hard parts automatically:

The sync problems described earlier in "Why Syncing MongoDB to Elasticsearch is Harder Than It Looks" are all handled without manual configuration. Deletes are propagated automatically via change stream delete events. Schema evolution in MongoDB is detected and the Elasticsearch index mapping is updated without pipeline intervention. Resume after failure uses the change stream resume token so no changes are lost during outages. And the backfill plus real-time sync run in parallel on first setup so you never have a gap between historical data and live changes.

MongoDB to Elasticsearch Data Mapping

Getting the pipeline running is only half the work. The other half is ensuring MongoDB documents map correctly to Elasticsearch index mappings so your data is queryable, searchable, and structured the way your application expects. This is where most sync pipelines fail silently after initial setup.

How Elasticsearch Index Mappings Work

When a document arrives in Elasticsearch for the first time, Elasticsearch either uses an existing mapping you have defined or infers one automatically through dynamic mapping. Dynamic mapping is convenient but produces incorrect field types surprisingly often when the source is MongoDB, because BSON types do not map cleanly to Elasticsearch field types in every case.

The safest approach for a MongoDB to Elasticsearch pipeline is to define your index mapping explicitly before the first document arrives, then disable dynamic mapping for fields you do not control. This prevents Elasticsearch from making incorrect type inferences that are painful to fix after data is already indexed.

Common MongoDB to Elasticsearch Type Mappings

| MongoDB BSON Type | Elasticsearch Type | Notes |
|---|---|---|
| String | text or keyword | Use text for full-text search fields. Use keyword for exact match, filtering, and aggregations. Use both with a multi-field mapping for fields that need both. |
| Int32, Int64 | integer, long | Map directly. Use long for MongoDB Int64 to avoid overflow. |
| Double | double | Maps directly. |
| Boolean | boolean | Maps directly. |
| Date | date | MongoDB stores dates as BSON Date. Elasticsearch expects ISO 8601 format by default. Verify your sync tool converts correctly. |
| ObjectId | keyword | ObjectId fields should be mapped as keyword in Elasticsearch since they are used for exact-match lookups, not full-text search. |
| Nested object | object or nested | Use object for simple nested documents. Use nested for arrays of objects whose sub-fields must be queried together. See below. |
| Array | Array of the element type | Elasticsearch handles arrays natively for most types. Arrays of objects need nested mapping when queries must match several sub-fields within the same element. |
| Null | Any type | Elasticsearch accepts null for any field type. No special handling needed. |
| Decimal128 | scaled_float or keyword | No native Elasticsearch equivalent. Use scaled_float for numeric operations or keyword if you only need to store and retrieve the value. |
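The table can be turned into a small lookup that drafts the `properties` section of a mapping from a list of field types. This is only a sketch: `BSON_TO_ES` and `buildProperties` are hypothetical helpers, the type names follow MongoDB's `$type` string aliases, and the `scaling_factor` of 100 assumes two decimal places, which may not fit your data.

```javascript
// Sketch: draft Elasticsearch "properties" from BSON type names (illustrative helper).
const BSON_TO_ES = {
  string: "keyword",       // default for strings; opt in to text per field
  int: "integer",
  long: "long",
  double: "double",
  bool: "boolean",
  date: "date",
  objectId: "keyword",
  decimal: "scaled_float", // Decimal128 has no native equivalent
  object: "object",
};

function buildProperties(fieldTypes, fullTextFields = []) {
  const properties = {};
  for (const [field, bsonType] of Object.entries(fieldTypes)) {
    const esType = BSON_TO_ES[bsonType];
    if (!esType) throw new Error(`no mapping rule for BSON type: ${bsonType}`);
    if (bsonType === "string" && fullTextFields.includes(field)) {
      // Full-text field with a keyword sub-field for exact match and aggregations.
      properties[field] = { type: "text", fields: { keyword: { type: "keyword", ignore_above: 256 } } };
    } else if (esType === "scaled_float") {
      // scaled_float requires a scaling_factor; 100 is an assumption (two decimals).
      properties[field] = { type: "scaled_float", scaling_factor: 100 };
    } else {
      properties[field] = { type: esType };
    }
  }
  return properties;
}

console.log(JSON.stringify(
  buildProperties({ name: "string", sku: "string", price: "decimal", created: "date" }, ["name"]),
  null, 2
));
```

Treat the output as a starting point to review by hand, particularly for nested arrays, which this sketch does not cover.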

The Object vs Nested Mapping Problem

This is the most common mapping mistake in MongoDB to Elasticsearch pipelines and the one most likely to produce silently incorrect query results.

MongoDB documents frequently contain arrays of embedded objects. For example an orders collection might have a line_items field containing an array of objects each with product_id, quantity, and price fields.

If you map line_items as an object type in Elasticsearch, which is the default, Elasticsearch flattens the array internally. This means a query for orders where line_items.product_id is ABC and line_items.quantity is greater than 5 may return incorrect results because Elasticsearch correlates field values across different array elements rather than within the same element.

If you map line_items as nested, Elasticsearch preserves the relationship between fields within each array element and queries produce correct results.

Define nested mappings explicitly before indexing:

```json
PUT /orders
{
  "mappings": {
    "properties": {
      "line_items": {
        "type": "nested",
        "properties": {
          "product_id": { "type": "keyword" },
          "quantity": { "type": "integer" },
          "price": { "type": "double" }
        }
      }
    }
  }
}
```

Nested mappings have a performance cost. Elasticsearch stores nested objects as hidden separate documents internally. For collections with large arrays of nested objects, monitor index size and query performance after enabling nested mapping.
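The cross-element matching described in this section can be reproduced without a cluster. The sketch below imitates the two behaviors in plain JavaScript; it is a simplified model of the semantics, not Elasticsearch code, and the function names are made up for illustration.

```javascript
// Simplified model of object vs nested semantics for an array of objects.

// Object mapping: each sub-field is stored as one flat list of values,
// so the link between product_id and quantity in the same element is lost.
function flattenAsObject(items) {
  const flat = {};
  for (const item of items) {
    for (const [key, value] of Object.entries(item)) {
      if (!flat[key]) flat[key] = [];
      flat[key].push(value);
    }
  }
  return flat;
}

// Object semantics: each condition may be satisfied by a different element.
function matchesObject(items, productId, minQuantity) {
  const flat = flattenAsObject(items);
  return (flat.product_id || []).includes(productId) &&
    (flat.quantity || []).some((q) => q > minQuantity);
}

// Nested semantics: both conditions must hold within the same element.
function matchesNested(items, productId, minQuantity) {
  return items.some((it) => it.product_id === productId && it.quantity > minQuantity);
}

const lineItems = [
  { product_id: "ABC", quantity: 1 },
  { product_id: "XYZ", quantity: 10 },
];
console.log(matchesObject(lineItems, "ABC", 5)); // true: a false positive
console.log(matchesNested(lineItems, "ABC", 5)); // false: the correct answer
```

The order contains ABC and contains some item with quantity above 5, but never both in the same line item, which is exactly the case the object mapping gets wrong.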

Text vs Keyword for String Fields

MongoDB stores all strings as BSON strings without distinguishing between fields used for search and fields used for filtering. Elasticsearch makes this distinction explicitly and it affects both indexing behavior and query performance significantly.

- Use `text` mapping for fields your users search against: product names, descriptions, article content, user bios. Text fields are analyzed, meaning Elasticsearch tokenizes and normalizes the content to support full-text search including stemming and relevance scoring.

- Use `keyword` mapping for fields you filter, sort, or aggregate on: status codes, category names, user IDs, country codes. Keyword fields are stored as-is and support exact match queries and aggregations efficiently.

For fields that need both, use a multi-field mapping:

```json
"product_name": {
  "type": "text",
  "fields": {
    "keyword": {
      "type": "keyword",
      "ignore_above": 256
    }
  }
}
```

This lets you run full-text search queries on product_name and exact match or aggregation queries on product_name.keyword from the same indexed field.
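As an illustration of how the two sub-fields are used together, the sketch below builds a search request body that runs a full-text `match` on `product_name`, optionally filters with an exact `term` on `product_name.keyword`, and aggregates on the keyword sub-field. The helper `buildProductSearch` and the aggregation name `by_name` are hypothetical; the query clauses themselves are standard Elasticsearch query DSL.

```javascript
// Sketch: one request body that uses both the text field and its keyword sub-field.
function buildProductSearch(searchText, exactName) {
  const query = {
    bool: {
      // Analyzed full-text match against the text field.
      must: [{ match: { product_name: searchText } }],
    },
  };
  if (exactName !== undefined) {
    // Exact, unanalyzed match against the keyword sub-field.
    query.bool.filter = [{ term: { "product_name.keyword": exactName } }];
  }
  return {
    query,
    // Aggregations need the keyword sub-field; text fields are not aggregatable by default.
    aggs: { by_name: { terms: { field: "product_name.keyword" } } },
  };
}

console.log(JSON.stringify(buildProductSearch("desk lamp", "Desk Lamp Pro"), null, 2));
```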

Handling MongoDB ObjectId Fields

The MongoDB _id field is an ObjectId by default, a 12-byte BSON type that looks like 507f1f77bcf86cd799439011 when serialized to a string. Most sync tools convert ObjectId to its string representation when writing to Elasticsearch and use it as the Elasticsearch document _id.

The Elasticsearch _id is a metadata field, so it is not declared under properties in the mapping. Map any other ObjectId reference fields as keyword:

```json
"user_id": { "type": "keyword" },
"product_id": { "type": "keyword" }
```

Do not map ObjectId fields as text. Text mapping applies full-text analysis, which is unnecessary for identifiers and prevents efficient exact-match lookups, sorting, and aggregations.

Managing Schema Evolution

MongoDB collections have no enforced schema, so new fields appear over time as your application evolves. Elasticsearch dynamic mapping handles new fields automatically by default, but this can produce incorrect type inferences for new fields.

The safest production configuration uses dynamic mapping templates to control how new fields are handled:

```json
PUT /products
{
  "mappings": {
    "dynamic_templates": [
      {
        "strings_as_keywords": {
          "match_mapping_type": "string",
          "mapping": {
            "type": "keyword"
          }
        }
      }
    ],
    "properties": {
      "description": { "type": "text" },
      "product_name": {
        "type": "text",
        "fields": {
          "keyword": { "type": "keyword" }
        }
      }
    }
  }
}
```

This template maps any new string fields that arrive without an explicit mapping as keyword by default, which is a safer default than text for unknown fields. Fields you know need full-text search are defined explicitly in the properties section.
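Dynamic templates control how new fields are typed, but they do not help when one field holds different types across documents. A pre-flight check over a sample of documents can surface those fields before they reach Elasticsearch. The sketch below is one hypothetical way to do it in plain JavaScript; `findTypeConflicts` is an illustrative name, and it only inspects top-level fields.

```javascript
// Sketch: report top-level fields whose values have more than one type across documents.
function typeLabels(value) {
  if (value === null || value === undefined) return []; // null fits any mapping
  if (Array.isArray(value)) return value.flatMap(typeLabels); // arrays take their element type
  if (value instanceof Date) return ["date"];
  return [typeof value];
}

function findTypeConflicts(docs) {
  const seen = new Map(); // field name -> Set of type labels observed
  for (const doc of docs) {
    for (const [field, value] of Object.entries(doc)) {
      if (!seen.has(field)) seen.set(field, new Set());
      for (const label of typeLabels(value)) seen.get(field).add(label);
    }
  }
  const conflicts = {};
  for (const [field, types] of seen) {
    if (types.size > 1) conflicts[field] = [...types].sort();
  }
  return conflicts;
}

const sample = [
  { price: 10, tags: ["a", "b"] },
  { price: "10.50", tags: "c" },
  { price: null },
];
console.log(findTypeConflicts(sample)); // { price: [ 'number', 'string' ] }
```

Arrays are reported by element type because Elasticsearch does not distinguish a single value from an array of the same type, so `tags` is not flagged here.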

Troubleshooting Common MongoDB to Elasticsearch Sync Problems

Problem 1: Change Streams Not Available

Error:

```text
Failed to watch change stream: not primary or secondary; cannot replicate
```

or

```text
The $changeStream stage is only supported on replica sets
```

Cause: Your MongoDB instance is running as a standalone server rather than a replica set. Change streams require a replica set or MongoDB Atlas.

Fix: Convert your standalone MongoDB instance to a single-node replica set. This does not require additional servers and works on a single machine:

```bash
# Start mongod with replica set enabled
mongod --replSet rs0 --bind_ip localhost

# Then, in a mongosh session connected to that instance, initiate the replica set
rs.initiate({
  _id: "rs0",
  members: [{ _id: 0, host: "localhost:27017" }]
})
```

After initiating, verify the replica set status:

```bash
rs.status()
```

The output should show the node as PRIMARY. Change streams are now available and your sync tool can connect normally.

If converting to a replica set is not possible in your environment, switch to Logstash with the MongoDB input plugin which uses polling and does not require a replica set, or use Estuary's Batch Snapshot capture mode.

Problem 2: Documents Rejected Due to Mapping Conflicts

Error in Elasticsearch logs:

```text
mapper_parsing_exception: failed to parse field [field_name] of type [integer]
```

or

```text
illegal_argument_exception: mapper [field_name] cannot be changed from type [keyword] to [text]
```

Cause: A document arrived in Elasticsearch with a field value that conflicts with the existing index mapping. This happens most often when a MongoDB collection contains documents where the same field holds different types across documents, for example some documents have price as an integer and others have it as a string.

Fix: First identify all documents in the affected MongoDB collection where the field type is inconsistent:

```javascript
db.products.find({
  price: { $type: "string" }
}).limit(10)
```

Then decide on the correct type and either clean the MongoDB data or handle the type conversion in your sync configuration. For Monstache, use a JavaScript mapping function to normalize the field before it reaches Elasticsearch:

```toml
# In monstache config (TOML); the script body itself is JavaScript
[[script]]
namespace = "mydb.products"
script = """
module.exports = function(doc) {
  // Normalize string prices to numbers before indexing
  if (typeof doc.price === 'string') {
    doc.price = parseFloat(doc.price);
  }
  return doc;
}
"""
```

For existing indices with a mapping conflict you cannot resolve through data cleaning, the only option is to delete the index, fix the mapping, and re-index:

```bash
# Delete the conflicting index
curl -X DELETE "https://your-elasticsearch-host:9200/products" \
  -u elastic:your-password

# Create with correct mapping then trigger a full re-sync
```

Problem 3: Deletes Not Propagating to Elasticsearch

Symptom: Documents deleted from MongoDB continue appearing in Elasticsearch search results.

Cause: Your sync method does not handle delete events. Logstash with the MongoDB input plugin does not capture deletes. Batch polling tools in general only read documents that currently exist and have no mechanism for detecting removals.

Fix options:

If you are using Logstash, the only reliable fix is to implement a soft delete pattern in MongoDB. Instead of deleting documents, add a deleted_at timestamp field and filter deleted documents in your application queries. In Logstash, sync the deleted_at field to Elasticsearch and handle filtering there. This avoids true deletes entirely.

If soft deletes are not acceptable, switch to Monstache or Estuary, both of which receive delete events from MongoDB change streams and propagate them to Elasticsearch automatically.
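For the soft delete option, the two halves of the pattern can be sketched as below: the MongoDB update that replaces a hard delete, and an Elasticsearch query wrapper that hides soft-deleted documents. Both function names are hypothetical, and the `name` field in the example query is an assumption.

```javascript
// MongoDB side: update document to use instead of deleteOne().
// Example: db.products.updateOne({ _id: id }, softDeleteUpdate())
function softDeleteUpdate(now = new Date()) {
  return { $set: { deleted_at: now } };
}

// Elasticsearch side: wrap any query so documents that carry a
// deleted_at value are excluded from the results.
function excludeSoftDeleted(userQuery) {
  return {
    bool: {
      must: [userQuery],
      must_not: [{ exists: { field: "deleted_at" } }],
    },
  };
}

const query = excludeSoftDeleted({ match: { name: "laptop" } });
console.log(JSON.stringify({ query }, null, 2));
```

Routing every search through one wrapper like this keeps the filter from being forgotten in individual queries.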

Problem 4: Resume Token Expired After Downtime

Error with Monstache:

```text
resume token not found in change stream
```

or

```text
ChangeStreamHistoryLost: Resume of change stream was not possible
```

Cause: Monstache stores a resume token from the MongoDB change stream to recover its position after a restart. MongoDB change streams have a retention window tied to the oplog size. If Monstache is down long enough for the oplog to rotate past the stored resume token position, the token becomes invalid and Monstache cannot resume.

Fix: Increase the MongoDB oplog size to give your sync tool more time to recover after outages. On a replica set:

```js
// Check current oplog size
db.getReplicationInfo()

// Increase oplog size to 10GB
db.adminCommand({
  replSetResizeOplog: 1,
  size: 10240
})
```
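The `size` value is in megabytes. One way to choose it is to work backwards from the outage window you want to survive. The sketch below is a rough estimate under the assumption that the write rate seen in the current oplog stays constant. `estimateOplogSizeMB` is a hypothetical helper, and the 1.5 headroom factor is an arbitrary safety margin. The `usedMB` and `timeDiffHours` fields are the ones returned by `db.getReplicationInfo()`.

```javascript
// Hypothetical helper: estimates the oplog size (MB) needed to cover a target
// outage window, assuming the recent write rate holds.
// `info` has the shape returned by db.getReplicationInfo() in mongosh.
function estimateOplogSizeMB(info, targetHours, headroom = 1.5) {
  if (!(info.timeDiffHours > 0)) {
    throw new Error("oplog window too short to estimate a write rate");
  }
  const mbPerHour = info.usedMB / info.timeDiffHours;
  return Math.ceil(mbPerHour * targetHours * headroom);
}

// Made-up numbers: 2048 MB written over 8 hours, target window of 24 hours.
const sizeMB = estimateOplogSizeMB({ usedMB: 2048, timeDiffHours: 8 }, 24);
console.log(sizeMB); // 9216
```

Write rates are rarely constant, so measure during a peak period before relying on the estimate.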

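For immediate recovery when the token has already expired, delete the stored resume state and trigger a full re-sync. By default Monstache keeps its resume state in MongoDB rather than in Elasticsearch: it lives in the `monstache` database (configurable with `config-database-name`), with timestamps in the `monstache` collection and tokens in the `tokens` collection, depending on `resume-strategy`. Stop Monstache, then clear the state in mongosh:

```js
// Run in mongosh while Monstache is stopped
const resumeDb = db.getSiblingDB("monstache")
resumeDb.getCollection("monstache").deleteMany({}) // timestamp-based resume state
resumeDb.getCollection("tokens").deleteMany({})    // token-based resume state
```

Then restart Monstache. If the affected collections are listed under `direct-read-namespaces`, it performs a full backfill of those collections before resuming change stream capture.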

For Estuary, resume token management is handled automatically. If a resume token expires during an extended outage, Estuary detects this and automatically triggers a re-backfill of the affected collections without manual intervention.

Problem 5: Elasticsearch Indexing Performance Degrading at Scale

Symptom: Sync latency increases over time, Elasticsearch CPU and memory usage climbs, and indexing throughput drops as the MongoDB collection grows.

Common causes and fixes:

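A shard count that does not fit the data volume is a frequent cause of Elasticsearch performance problems at scale. Too many small shards add per-shard overhead, while too few large shards limit parallelism and slow down recovery. The default of one primary shard per index works well for small indices but becomes inefficient as data grows. For indices expected to exceed 50GB, configure sharding explicitly when creating the index: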

```json
PUT /products
{
  "settings": {
    "number_of_shards": 3,
    "number_of_replicas": 1
  }
}
```
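How the shard count in a request like this might be derived is sketched below. It divides the expected index size by a target shard size. The 30 GB default is an assumption chosen from within Elastic's general guidance of roughly 10 to 50 GB per shard, and both helper names are hypothetical.

```javascript
// Hypothetical helper: suggests a primary shard count from the expected
// index size. The 30 GB target per shard is an assumed rule of thumb.
function suggestPrimaryShards(expectedIndexGB, targetShardGB = 30) {
  if (expectedIndexGB <= 0 || targetShardGB <= 0) {
    throw new RangeError("sizes must be positive");
  }
  return Math.max(1, Math.ceil(expectedIndexGB / targetShardGB));
}

// Hypothetical helper: settings body for the index creation request.
function buildIndexSettings(expectedIndexGB) {
  return {
    settings: {
      number_of_shards: suggestPrimaryShards(expectedIndexGB),
      number_of_replicas: 1,
    },
  };
}

// A 90 GB index at 30 GB per shard yields 3 primary shards.
console.log(JSON.stringify(buildIndexSettings(90)));
```

Size for the expected volume rather than the current one, since changing the primary shard count later means rebuilding the index.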

A refresh interval that is too low forces Elasticsearch to make new documents searchable more often than the workload needs, and every refresh costs indexing throughput. The default refresh interval of 1 second is aggressive for high-volume indexing. During bulk indexing operations, increase it temporarily:

```bash
curl -X PUT "https://your-elasticsearch-host:9200/products/_settings" \
  -u elastic:your-password \
  -H "Content-Type: application/json" \
  -d '{"index": {"refresh_interval": "30s"}}'
```

Reset to the default after bulk indexing completes.
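The reset is easy to forget when a bulk job fails partway through. A minimal sketch of one way to guard against that is shown below. `withRelaxedRefresh` is a hypothetical helper, and `putSettings` is an assumed caller-supplied async function that sends a body to the index `_settings` endpoint. Setting `refresh_interval` to `null` restores the Elasticsearch default.

```javascript
// Hypothetical helper: relaxes refresh_interval for the duration of a bulk
// job and restores the default afterwards, even if the job throws.
async function withRelaxedRefresh(putSettings, bulkJob, interval = "30s") {
  await putSettings({ index: { refresh_interval: interval } });
  try {
    return await bulkJob();
  } finally {
    // null resets the setting to the Elasticsearch default
    await putSettings({ index: { refresh_interval: null } });
  }
}
```

The `finally` block runs whether the job succeeds or fails, so the index does not stay on a 30 second refresh after an aborted backfill.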

Nested mappings on large arrays add significant indexing overhead because Elasticsearch stores each nested object as a hidden document. If you have nested mappings on fields with large arrays, evaluate whether object mapping with the cross-element query limitation is acceptable for your use case. The performance difference can be substantial at scale.
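The cross-element limitation is easier to judge with a concrete case. The sketch below is a simplified model, not Elasticsearch code: it mimics how an array of objects under a plain `object` mapping is flattened into one list of values per sub-field, which loses the link between values from the same element. The function names and the `variants` data are hypothetical.

```javascript
// Simplified model of object-mapping flattening: each sub-field becomes one
// flat list of values collected across all array elements.
function flattenObjectArray(fieldName, items) {
  const flat = {};
  for (const item of items) {
    for (const [key, value] of Object.entries(item)) {
      const path = `${fieldName}.${key}`;
      if (!flat[path]) flat[path] = [];
      flat[path].push(value);
    }
  }
  return flat;
}

// An AND across flattened fields matches if each value appears anywhere.
function matchesFlattened(flat, conditions) {
  return Object.entries(conditions).every(
    ([path, value]) => (flat[path] || []).includes(value)
  );
}

const variants = [
  { color: "red", size: "S" },
  { color: "blue", size: "L" },
];
const flat = flattenObjectArray("variants", variants);

// No single variant is both red and L, yet the flattened document matches.
console.log(matchesFlattened(flat, { "variants.color": "red", "variants.size": "L" })); // true
```

If false positives like this are acceptable for a field, object mapping avoids the hidden-document overhead. If they are not, the field needs to stay nested.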

Conclusion

Syncing MongoDB to Elasticsearch is a solved problem in 2026, but only if you are using tools that are still actively maintained. The MongoDB River plugin and Mongo Connector are both dead. Any guide still recommending them is outdated.

Here is a quick reference for choosing the right method for your situation:

| Situation | Recommended Method |
|---|---|
| Already on Elastic Cloud, want simplest setup | Native Elastic MongoDB Connector |
| Need real-time sync, comfortable operating a daemon | Monstache |
| Already running ELK stack, batch latency acceptable | Logstash with MongoDB input plugin |
| Need fully managed pipeline, no infrastructure to operate | Estuary |
| Running Amazon DocumentDB | Estuary |
| Running Azure Cosmos DB for MongoDB | Estuary |
| Standalone MongoDB without replica set | Logstash or Estuary Batch Snapshot mode |
| Need sub-second sync with delete propagation | Monstache or Estuary |

Whichever method you choose, the data mapping section of this guide is worth revisiting after your pipeline is running. Getting the connection working is straightforward. Getting the Elasticsearch index mappings right so your data is correctly queryable is where most pipelines need tuning.

Start Syncing MongoDB to Elasticsearch in Real Time

Estuary connects to your MongoDB instance using change streams, captures every insert, update, and delete as it happens, and keeps your Elasticsearch indices in sync continuously. It works across self-hosted MongoDB, MongoDB Atlas, Amazon DocumentDB, and Azure Cosmos DB without separate tools or custom configuration for each environment.

No daemon to deploy. No infrastructure to operate. No resume token management. No mapping conflicts to debug manually.

Setup takes under 30 minutes. Your first pipeline is free.

Start building at dashboard.estuary.dev.

Further Reading:

- MongoDB CDC: Complete Guide to Change Data Capture for a deeper technical reference on MongoDB change streams

- Elasticsearch as a Destination for full Estuary Elasticsearch connector documentation

- MongoDB Connector Documentation for full Estuary MongoDB connector prerequisites and configuration

