Amazon Redshift is one of the most widely used cloud data warehouses in the world. Getting data into it reliably, on schedule, and at scale is where most engineering teams run into real challenges. The wrong method can mean stale dashboards, brittle pipelines, or AWS bills that spiral out of control.

This guide covers four proven methods to load data into Amazon Redshift, each suited to a different use case:

- Method 1: The COPY Command (native bulk loading from S3)

- Method 2: AWS Glue (serverless ETL with complex transforms)

- Method 3: Amazon Zero-ETL Integration (near-real-time CDC from Aurora and RDS)

- Method 4: Estuary (real-time CDC from any database, SaaS tool, or API)

For each method, you will find an explanation of how it works, when to use it, setup steps, code examples, and an honest look at the trade-offs. The comparison table and decision guide at the end will help you choose the right approach for your situation.

What to Know Before You Start

Redshift Uses Massively Parallel Processing

Redshift distributes query and load work across multiple compute nodes and slices. This means loading data via bulk files is dramatically faster than inserting rows one at a time. The COPY command, which AWS explicitly recommends as the most efficient way to load a table, reads multiple files in parallel and distributes them across all slices simultaneously.

Redshift is a Destination, Not a Pipeline

Redshift does not extract or transform data on its own. Everything in this guide describes tools and services that move data from your source systems into Redshift. The pipeline lives outside of Redshift.

Two Deployment Options

- Provisioned clusters: You choose node types and counts. You pay for uptime whether the cluster is active or idle.

- Redshift Serverless: Scales automatically. You pay per RPU-second. Great for variable workloads, but continuous streaming pipelines can prevent auto-pause and raise costs unexpectedly.

S3 as the Staging Layer

Almost every method in this guide moves data through Amazon S3 at some point. The COPY command reads directly from S3. Estuary uses S3 as a high-performance staging area. For best results, your S3 bucket and Redshift cluster should always be in the same AWS region.

Supported File Formats

Redshift supports CSV, JSON, Parquet, ORC, and Avro, as well as Gzip and Zstandard compression. Parquet is the recommended format for analytical workloads because of its columnar structure and compression efficiency.

Maintenance After Large Loads

After any significant bulk load, run VACUUM to reclaim deleted space and ANALYZE to update table statistics. These commands help the query optimizer make better decisions and keep performance healthy over time.

Method 1: The COPY Command

Best for: bulk loading large files from Amazon S3, DynamoDB, Amazon EMR, or SSH remote hosts

What It Is

The COPY command is Redshift's native built-in bulk loading tool. It is the fastest and most cost-effective way to load a table because it uses Redshift's MPP architecture to read multiple files simultaneously across all compute slices. AWS documentation explicitly states it is the most efficient loading method available.

How It Works

You stage data files in S3 and run a COPY command that tells Redshift where to find them, what format they are in, and which IAM role to use for authentication. Redshift reads the files in parallel across all nodes and loads them into the target table in one operation.

Basic COPY Example

plaintextCOPY your_table

FROM 's3://your-bucket/data-prefix/'

IAM_ROLE 'arn:aws:iam::123456789012:role/RedshiftS3Role'

FORMAT AS PARQUET;

plaintext-- For CSV files with a header row:

COPY your_table

FROM 's3://your-bucket/data.csv'

IAM_ROLE 'arn:aws:iam::123456789012:role/RedshiftS3Role'

CSV IGNOREHEADER 1

REGION 'us-east-1';

Auto-COPY: Continuous File Ingestion

AWS launched continuous auto-copy for Redshift in 2024. A single SQL command sets up a job that automatically loads new files landing in an S3 path, without any scheduling infrastructure.

plaintextCOPY store_sales

FROM 's3://your-bucket/store_sales/'

IAM_ROLE 'arn:aws:iam::123456789012:role/RedshiftS3Role'

FORMAT AS PARQUET

JOB CREATE job_store_sales AUTO ON;

File Preparation Tips

- Split files into equal sizes between 1 MB and 1 GB. Redshift assigns one file per slice, so equal-sized files mean even parallel load distribution.

- Compress files with Gzip or Zstandard before uploading to reduce transfer time and cost.

- Run only one COPY command per target table at a time. Multiple concurrent COPY commands on the same table are serialized and offer no speed benefit.

- Use IAM roles for authentication rather than long-lived access keys where possible.

Pros and Cons

| Pros | Cons |

|---|---|

| Built into Redshift, no additional tools needed | No support for operational databases or SaaS sources directly |

| Extremely fast for large batch loads from S3 | No schema drift handling, manual changes required |

| Supports CSV, JSON, Parquet, ORC, and Avro | Error handling is manual via STL_LOAD_ERRORS system table |

| Auto-COPY enables hands-off continuous S3 ingestion | Not suitable for sub-minute data freshness requirements |

| Low cost, uses existing Redshift compute | S3 staging must be managed by your team |

Method 2: AWS Glue

Best for: complex data transformation within the AWS ecosystem before loading to Redshift

What It Is

AWS Glue is Amazon's serverless, fully managed ETL service. It runs Apache Spark under the hood, which gives it the ability to handle complex transformations, join datasets from multiple sources, and write to Redshift. You write transformation logic in Python or Scala, and Glue manages the compute infrastructure.

How It Works

An AWS Glue pipeline has three main components.

- The Crawler scans your source data and registers its schema in the Glue Data Catalog, a central metadata store.

- The ETL Job reads from the catalog, applies your PySpark or Scala transformation logic, and writes the result to Redshift.

- A Scheduler or event trigger kicks off the job on your chosen cadence.

Supported Sources

AWS Glue connects to over 70 data sources natively. These include Amazon S3, Amazon RDS, Amazon DynamoDB, Amazon Kinesis, MongoDB, and any JDBC-compatible database. Connecting to non-AWS SaaS tools typically requires custom development or third-party connectors in the AWS Marketplace.

PySpark Job Example

Manage imports:

pythonimport sys

from awsglue.transforms import *

from awsglue.utils import getResolvedOptions

from pyspark.context import SparkContext

from awsglue.context import GlueContext

from awsglue.job import JobInitialize contexts:

pythonargs = getResolvedOptions(sys.argv, ['JOB_NAME'])

sc = SparkContext()

glueContext = GlueContext(sc)

spark = glueContext.spark_session

job = Job(glueContext)

Read data from a table:

python# Read from source catalog

source_df = glueContext.create_dynamic_frame.from_catalog(

database='my_database',

table_name='orders'

)

Add mappings:

python# Apply transforms (filter, rename, cast)

transformed = ApplyMapping.apply(

frame=source_df,

mappings=[('order_id', 'long', 'order_id', 'long'),

('amount', 'double', 'revenue', 'double')]

)

Store transformed data to Redshift:

python# Write to Redshift

glueContext.write_dynamic_frame.from_jdbc_conf(

frame=transformed,

catalog_connection='redshift_connection',

connection_options={

'dbtable': 'analytics.orders_clean',

'database': 'analytics'

},

redshift_tmp_dir='s3://my-temp-bucket/glue-tmp/'

)

Pros and Cons

| Pros | Cons |

|---|---|

| Serverless, no cluster management | Cold start latency of several minutes per job run |

| Handles complex multi-step transforms | Requires Apache Spark expertise |

| Auto schema discovery via Glue crawler | Primarily AWS-ecosystem sources, weak SaaS support |

| Deep AWS Lake Formation and EMR integration | Expensive for high-frequency or continuous workloads |

| Supports 70+ AWS and JDBC data sources | Schema changes require re-running the crawler |

Method 3: Amazon Zero-ETL Integration

Best for: near-real-time CDC from Aurora, RDS, and DynamoDB sources already running in the same AWS region

What It Is

Amazon Zero-ETL integration is a native AWS feature that replicates data from Aurora and RDS databases directly into Redshift in near real time, without requiring any ETL pipeline. AWS manages the Change Data Capture at the storage layer. Data is available in Redshift within seconds of being written to the source database.

Supported Sources

- Aurora MySQL-Compatible Edition

- Aurora PostgreSQL-Compatible Edition

- Amazon RDS for MySQL

- Amazon RDS for PostgreSQL

- Amazon DynamoDB (15-30 minute refresh interval, not continuous CDC)

- Enterprise SaaS applications via AWS Glue Zero-ETL integrations: Salesforce, Zendesk, ServiceNow, SAP, and others

Setting Up Zero-ETL

- Enable binary logging on your Aurora or RDS source. For Aurora PostgreSQL, confirm you are running version 16.4 or higher.

- Open the AWS Console and navigate to your Aurora or RDS cluster.

- Choose Zero-ETL integrations and create a new integration, selecting your Redshift cluster or Serverless workgroup as the target.

- Configure a resource policy on the Redshift namespace to authorize the integration source.

- Create a destination database in Redshift to receive the replicated data.

- Monitor integration status via Redshift system views.

Note: All source tables must have a primary key. Tables without a primary key cannot be replicated and will be excluded from the integration. For Aurora PostgreSQL, you must also configure at least one data filter pattern specifying which databases and tables to replicate.

Key Limitations

- Source and target must be in the same AWS region. Cross-region replication is not supported.

- Non-AWS sources are out of scope. Oracle, SQL Server, MongoDB, Google Cloud, and on-premises databases cannot use Zero-ETL.

- Replicated tables in Redshift are read-only. You cannot run INSERT, UPDATE, or DELETE on them directly.

- DDL changes on source tables, such as adding or renaming columns, can trigger a full table resync. On large tables this can make the table unavailable for queries for 20 to 90 minutes.

- Continuous CDC activity on Redshift Serverless prevents auto-pausing, which can cause significantly higher RPU costs for teams on the serverless tier.

- DynamoDB Zero-ETL refreshes every 15-30 minutes at minimum, not continuously.

- Each Redshift data warehouse supports a maximum of 50 zero-ETL integrations.

Pros and Cons

| Pros | Cons |

|---|---|

| No pipeline to build or manage for supported sources | AWS sources only, no external databases or cross-cloud support |

| Near-real-time latency from Aurora and RDS sources | Replicated tables are read-only in Redshift |

| No additional cost beyond existing Aurora and Redshift charges | DDL changes can cause 20-90 minute table unavailability |

| Handles initial backfill automatically | Continuous CDC prevents Redshift Serverless from auto-pausing |

| Supports SaaS apps via AWS Glue integration | All tables must have a primary key |

| Max 50 integrations per Redshift warehouse |

Method 4: Estuary

Best for: real-time CDC from any database, SaaS tool, or API into Redshift, with automatic schema evolution and managed infrastructure

What It Is

Estuary is a right-time data platform that delivers sub-second Change Data Capture from databases and SaaS tools into Amazon Redshift. It is fully managed, meaning you configure pipelines through a web UI and Estuary handles the infrastructure, scaling, retries, and schema changes automatically.

Unlike Zero-ETL, Estuary works with any source regardless of cloud provider. The Redshift connector stages data in S3 for high-performance bulk loading, giving you real-time CDC with the loading efficiency of the COPY command.

How It Works

Estuary pipelines run as three connected stages:

- Capture: Estuary connects to your source and captures changes as a continuous stream of documents.

- Collection: Changes are stored in a durable, replayable, schema-enforced collection.

- Materialization: The Redshift connector reads from the collection and writes to your Redshift tables using S3 as a staging layer, then issues a COPY command.

Supported Sources

Estuary supports 120+ source connectors, including SQL databases (PostgreSQL, MySQL, SQL Server), NoSQL databases (MongoDB, Amazon DocumentDB), object storage (Amazon S3, GCS), SaaS sources (Salesforce, HubSpot, Shopify), and many more. See the full connector list.

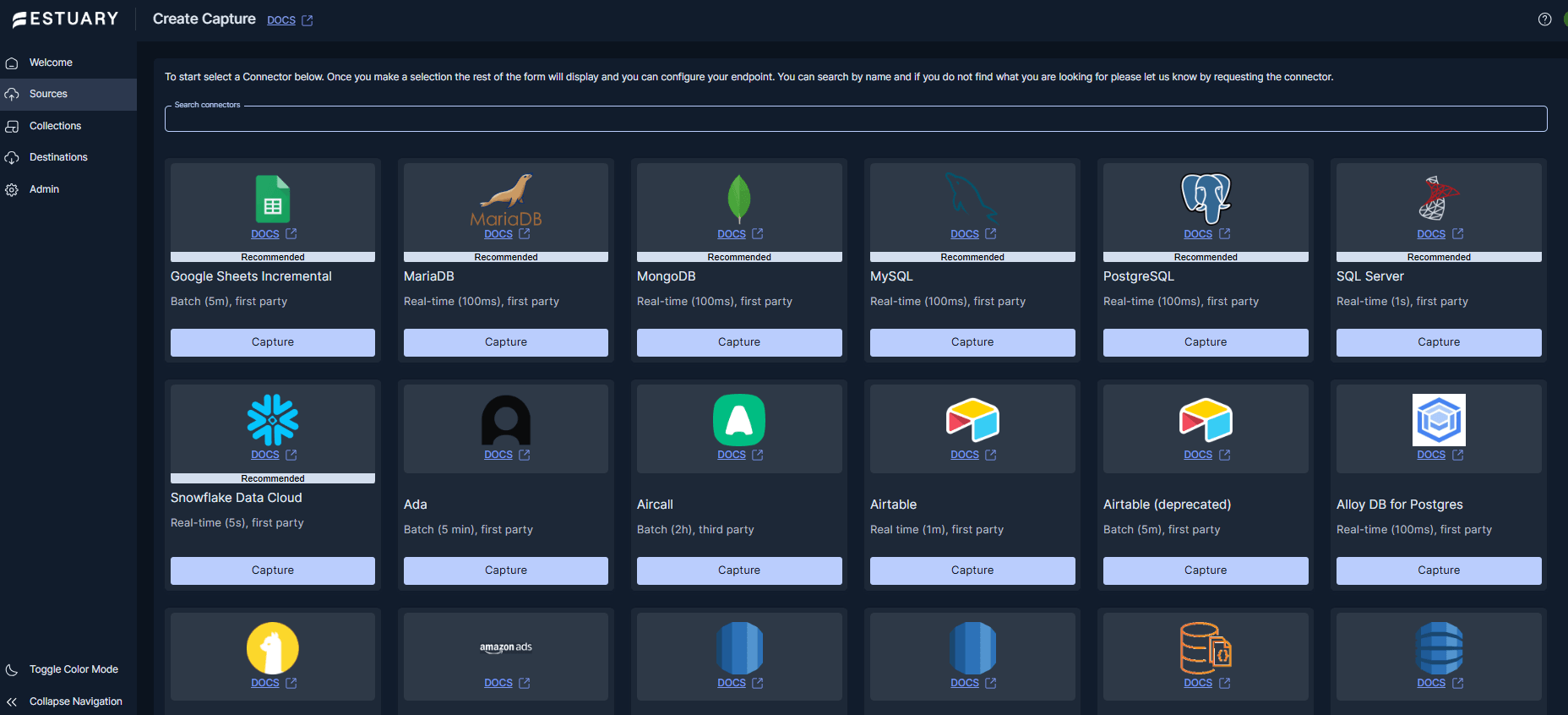

Step-by-Step Setup

Step 1: Sign up

Create a free account at dashboard.estuary.dev/register. The free tier includes 2 connector instances and up to 10 GB per month, letting you test out your pipeline without any commitment.

Step 2: Set up your source capture

In the Estuary dashboard, go to Sources and click + New Capture. Search for your source connector, enter your credentials, and click Next.

Estuary runs a discovery phase that automatically infers your source schema and proposes collections. Review auto-discovered collections and then Save and Publish your capture.

Note that some sources will require additional setup to connect. For example, PostgreSQL sources require logical replication to be enabled on your database. Estuary's capture connector docs walk through this per source.

Step 3: Configure network access for Redshift

Estuary connects to your Redshift cluster from a set of fixed egress IP addresses. You have two options:

- Public access with IP allowlist: make your cluster publicly accessible and allow Estuary's egress IPs on port 5439. All connections are SSL-only.

- SSH tunnel: if your cluster is in a private VPC, set up an EC2 bastion host and configure an SSH tunnel. Note that S3 staging traffic uses HTTPS and bypasses the tunnel regardless.

Step 4: Create your S3 staging bucket

Create an S3 bucket in the same region as your Redshift cluster. Create an IAM user with read and write access to the bucket and note its Access Key ID and Secret Access Key. The IAM policy should look something like:

json{

"Version": "2012-10-17",

"Statement": [{

"Effect": "Allow",

"Action": ["s3:GetObject","s3:PutObject","s3:DeleteObject","s3:ListBucket"],

"Resource": [

"arn:aws:s3:::your-staging-bucket",

"arn:aws:s3:::your-staging-bucket/*"

]

}]

}

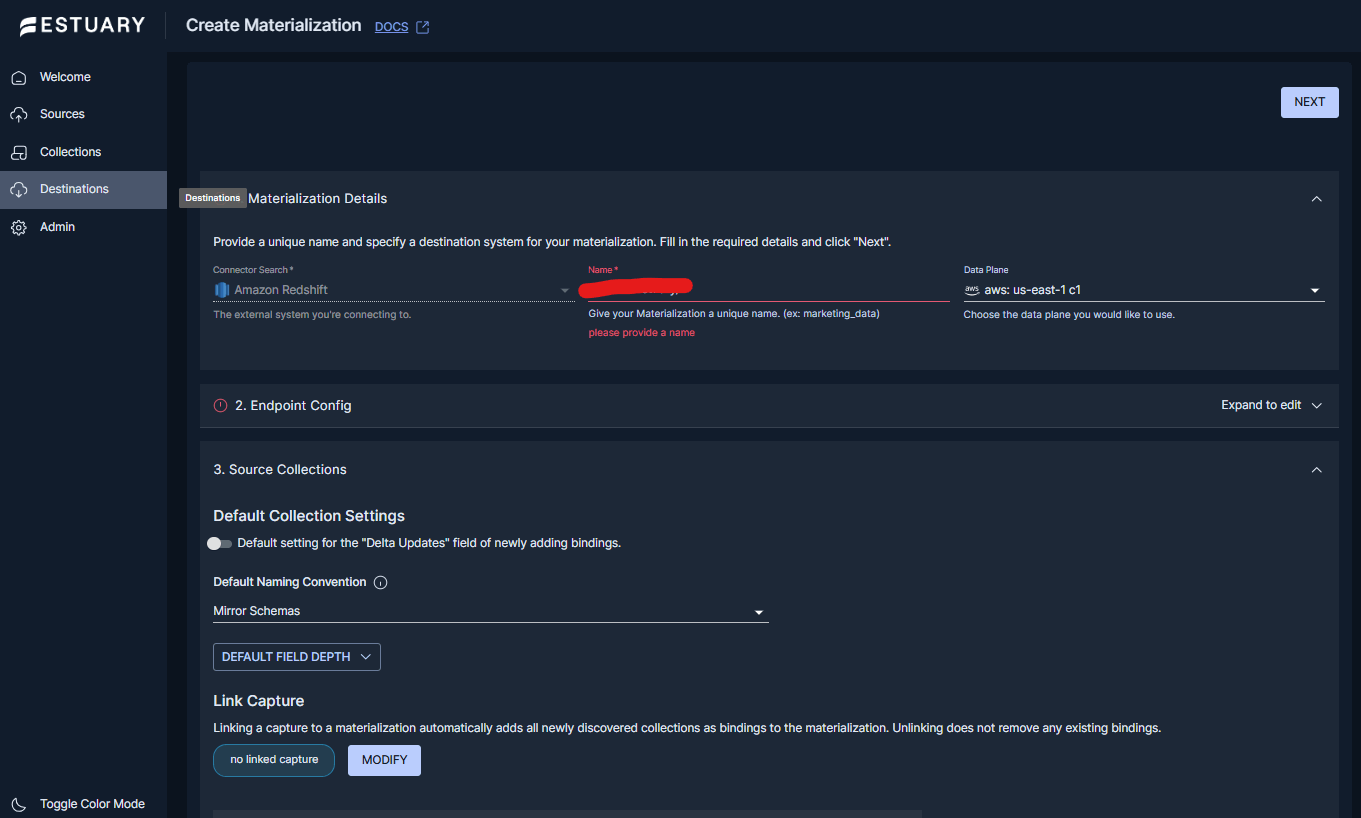

Step 5: Create the Redshift materialization

In the Estuary dashboard, go to Destinations and click + New Materialization. Search for Amazon Redshift and enter your connection details:

| Field | Description | Example |

|---|---|---|

| Address | Cluster host and port | my-cluster.abc123.us-east-1.redshift.amazonaws.com:5439 |

| User | Redshift database user | etl_user |

| Password | Database password | your_password |

| Database (optional) | Target database name | analytics |

| Schema (optional) | Database schema (defaults to public) | public |

| S3 Staging Bucket | Bucket name | my-redshift-staging |

| AWS Access Key ID | IAM key for S3 bucket | AKIAIOSFODNN7EXAMPLE |

| AWS Secret Access Key | IAM secret for S3 bucket | wJalrXUtnFEMI/K7MDENG... |

| Region | Bucket and cluster region | us-east-1 |

| Bucket Path (optional) | S3 key prefix for staged files | estuary/staging/ |

Click Source from Capture to link your capture, then click Save and Publish. Estuary performs an initial backfill of existing data and switches to continuous CDC mode.

Step 6: Verify the pipeline

In the dashboard, check that the materialization shows a Running status. Then run a quick verification in Redshift:

sql-- Confirm table was created

SELECT table_schema, table_name

FROM information_schema.tables

WHERE table_schema = 'public'

ORDER BY table_name;sql-- Check row count

SELECT COUNT(*) FROM public.orders;sql-- Inspect Estuary metadata table (for exactly-once bookkeeping)

SELECT * FROM public.flow_checkpoints_v1;YAML Configuration Reference

Estuary connectors use a backing specification file for configuration. If you want to manage your pipeline programmatically rather than through the UI, you can use flowctl to work with your connectors’ spec files.

Alternatively, you can edit the specification file directly in the UI using the Advanced Specification Editor.

plaintextmaterializations:

your_prefix/redshift-main:

endpoint:

connector:

image: ghcr.io/estuary/materialize-redshift:v2

config:

address: my-cluster.abc123.us-east-1.redshift.amazonaws.com:5439

user: etl_user

password: secret_password

database: analytics

schema: public

awsAccessKeyId: AKIAIOSFODNN7EXAMPLE

awsSecretAccessKey: wJalrXUtnFEMI/K7MDENG/bPxRfiCYEXAMPLEKEY

bucket: my-redshift-staging

region: us-east-1

bucketPath: estuary/staging/

bindings:

- resource:

table: orders

source: your_prefix/postgres/orders

- resource:

table: customers

source: your_prefix/postgres/customers

Update Modes

- Standard mode (default): Estuary reads the current document state, merges incoming changes, and writes the result as an upsert keyed on the document's primary key. This keeps Redshift tables as a current-state mirror of your source.

- Delta updates mode: Estuary appends each change event as a new row without merging. Useful for audit logs and change history. Enable per binding with delta_updates: true.

Performance Tips

Tip: Run at most one Redshift materialization per schema. Estuary uses a table-level lock on metadata tables during each transaction commit. Multiple materializations sharing a schema must take turns acquiring this lock, which serializes commits and reduces throughput. Add all collections as bindings to a single materialization instead.

Tip: For large initial backfills, set maxTxnDuration to 10-30 minutes in your shard configuration to allow large batches to accumulate before committing. This significantly improves backfill speed compared to many small transactions.

Tip: For steady-state streaming where data freshness of a few hours is acceptable, configure a syncFrequency (e.g., 4h) to batch updates. Fewer, larger syncs are much more efficient in Redshift than frequent small commits.

Schema Handling

Estuary creates the necessary Redshift tables automatically when you publish a materialization. Manually pre-created tables are not supported.

All table and column identifiers are treated as lowercase by default. If your source has fields that differ only by case, such as myField and MyField, the materialization will fail. Rename fields from the capture UI or use projections in your collection spec before they reach Redshift.

Source-Specific Guides

- PostgreSQL to Redshift: Stream PostgreSQL to Redshift in real time

- MySQL to Redshift: Sync MySQL to Redshift in real time

- MongoDB to Redshift: Connect MongoDB to Redshift

Ready to try Estuary? Connect your first source to Amazon Redshift in minutes. Start free

Pros and Cons

| Pros | Cons |

|---|---|

| Free tier | Free tier limited to 10 GB/month and 2 connectors |

| Works with 120+ sources across any cloud or on-premises | S3 staging bucket and IAM credentials required |

| Sub-second CDC latency from source to Redshift | One materialization per schema recommended for performance |

| Exactly-once delivery with automatic retry and recovery | IAM AssumeRole not yet supported; long-lived access keys required for S3 |

| Managed data movement platform | |

| Automatic schema evolution and table creation | |

| Supports both standard merge and delta update modes |

Side-by-Side Comparison: All 4 Methods

| Feature | COPY Command | AWS Glue | Zero-ETL | Estuary |

|---|---|---|---|---|

| Setup complexity | Medium | High | Medium | Low |

| Real-time CDC | No | No | Near-real-time (AWS only) | Yes, sub-second |

| Source types | S3, DynamoDB, EMR, SSH | 70+ AWS and JDBC sources | Aurora, RDS, DynamoDB, SaaS apps | 120+ databases, SaaS, APIs, files |

| Non-AWS sources | No | Limited via JDBC | No | Yes |

| SaaS connectors | No | Marketplace only | Limited (via AWS Glue) | Yes, built-in |

| Schema evolution | Manual | Semi-auto via crawler | Limited, DDL causes resyncs | Automatic |

| Exactly-once delivery | No | No | Yes | Yes |

| Latency | Batch (hours to days) | Minutes (cold start) | Seconds (Aurora/RDS) | Configurable, from seconds to batch syncs |

| Infrastructure needed | S3 + IAM role | Serverless (AWS manages) | None (AWS manages) | S3 + IAM role |

| Best use case | Bulk loads from S3 | AWS ETL with transforms | Aurora and RDS near-real-time | Any source, real-time, multi-connector |

Which Method Should You Choose?

| Your situation | Best method |

|---|---|

| You have data files in S3 and need scheduled bulk loads | COPY Command |

| You need complex multi-step data transformation before loading, all within AWS | AWS Glue |

| Your source is Aurora or RDS and you want near-real-time sync with no pipeline to build | Zero-ETL Integration |

| Your source is PostgreSQL, MySQL, MongoDB, Salesforce, or any non-AWS system | Estuary |

| You need real-time CDC with exactly-once semantics and automatic schema handling | Estuary |

| You have sources across multiple clouds or on-premises databases | Estuary |

| You want one tool to connect all your databases and SaaS tools to Redshift | Estuary |

| You want to avoid Spark expertise, cold starts, and AWS-only limitations | Estuary |

Best Practices for Loading Data into Redshift

- Split files into equal sizes between 1 MB and 1 GB. Redshift assigns one file to each node slice. Equal-sized files give every slice the same amount of work and maximize parallel load efficiency.

- Compress files before staging in S3. Gzip and Zstandard are supported natively and reduce both transfer time and cost without any extra configuration.

- Keep your S3 bucket in the same region as your Redshift cluster. Cross-region data transfer adds latency and cost with no benefit.

- Run VACUUM and ANALYZE after large loads. VACUUM reclaims deleted row space and restores sort order. ANALYZE updates statistics that the query optimizer uses to build efficient query plans.

- Use staging tables for bulk transformations. Load raw data into a staging table first, apply your transformation logic, then use ALTER TABLE APPEND to merge clean data into the production table. This is faster than INSERT INTO SELECT.

- Run only one COPY per table at a time. Multiple concurrent COPY commands targeting the same table are serialized by Redshift. Running them in parallel offers no speed benefit and can slow things down.

- Use IAM roles rather than static access keys wherever possible. Roles are easier to rotate, scope, and audit than long-lived access key pairs.

Troubleshooting Common Redshift Load Errors

| Error or Symptom | Likely Cause | Fix |

|---|---|---|

| Rows in STL_LOAD_ERRORS after COPY | Type mismatch, extra columns, or malformed data in source files | Query STL_LOAD_ERRORS for the file name and line number. Fix the source file or use COPY options like IGNOREHEADER, MAXERROR, or ACCEPTINVCHARS. |

| Schema mismatch fails the COPY | Source file has more or fewer columns than the target table | Update the table schema to match the source, or use a JSONPath or column list in the COPY command. |

| Serializable isolation violation in Estuary logs | Multiple Estuary materializations are sharing the same Redshift schema | Ensure each materialization uses a unique endpoint schema. Use per-binding schema overrides to still land tables in a shared target schema. |

| Zero-ETL table unavailable for 20-90 minutes | DDL change on source table triggered a full table resync | Plan schema changes during low-traffic windows. Monitor resync progress via Redshift system views. |

| Redshift Serverless bills higher than expected | Continuous CDC from Zero-ETL prevents auto-pause | Set RPU-hour limits in the console, or migrate continuous CDC workloads to a provisioned RA3 cluster. |

| Permission denied on COPY or staging | IAM role missing S3 read permissions or not attached to the cluster | Attach the IAM role with the correct S3 policy to the Redshift cluster in the AWS Console. |

| S3 region mismatch error | S3 staging bucket is in a different region than the Redshift cluster | Move or recreate the staging bucket in the same region as your Redshift cluster. |

Conclusion

There is no single right method for loading data into Amazon Redshift. Each approach covered in this guide fits a different set of requirements.

The COPY command remains the fastest and most cost-effective option when your data already lives in S3. AWS Glue is the right choice when you need complex transformation logic before data lands in Redshift and you are fully within the AWS ecosystem.

Zero-ETL is an excellent option if your source is Aurora or RDS and you want near-real-time sync without building and maintaining a pipeline, though its AWS-only constraint rules it out for most teams with diverse source systems.

Estuary covers what the other methods cannot: real-time CDC from any database or SaaS tool, regardless of cloud provider, with exactly-once delivery, automatic schema evolution, and managed infrastructure. If your team needs a single platform that connects all your sources to Redshift and keeps them in sync continuously, Estuary is the most operationally straightforward path to get there.

Start Loading Data into Amazon Redshift with Estuary

Stop building and maintaining batch pipelines that leave your analytics team waiting. Estuary delivers low-latency CDC from any database or SaaS tool into Amazon Redshift, exactly-once and ready to scale.

About the author

Dani is a data professional with a rich background in data engineering and real-time data platforms. At Estuary, Daniel focuses on promoting cutting-edge streaming solutions, helping to bridge the gap between technical innovation and developer adoption. With deep expertise in cloud-native and streaming technologies, Dani has successfully supported startups and enterprises in building robust data solutions.