Imagine this: you’re a newly appointed CTO of a thriving tech startup, and you’ve just accepted your first challenge. The goal is to move your company’s operational data from Postgres to Snowflake using CDC (Change Data Capture). The solution has to be scalable, cost-effective, and adaptable to business needs. Your data pipelines need to handle terabytes of data movement every day, and this data can come in batches or in streams of CDC (the foundation of Postgres to Snowflake CDC pipelines).

Someone on your team has mentioned using traditional SaaS solutions like Fivetran. Meanwhile, other senior engineers insist on building their own system to handle the traffic load. And now you're facing a classic build vs. buy data pipelines dilemma, where cost and convenience collide.

A managed SaaS solution would be quick to set up, and you’ll spend little effort to keep things up and running. Still, this convenience will come at a premium price and a loss of control.

A DIY solution will allow you and your team to optimize the cost and tune the performance to your needs. The senior engineers claim that they can deliver everything in just a couple of weeks. Still, you’re worried about the maintenance burden and the costs that may emerge over time.

But what if I told you that this binary perspective of build vs. buy is misleading and that there's a third, hybrid option?

Estuary is a unified and flexible data movement layer for enterprises. With it, you won’t have to sacrifice control for cost efficiency anymore.

We'll walk you through the advantages and inconveniences of each solution for moving data from Postgres to Snowflake, and we'll see together why a right-time data platform offers a new alternative.

Key Takeaways

Build vs. buy decisions for Postgres to Snowflake pipelines should be evaluated on total cost of ownership, not just setup speed or tooling cost.

DIY CDC pipelines offer control but introduce long-term maintenance, operational risks, and hidden engineering costs.

Traditional SaaS tools simplify ingestion but can become expensive and opaque in high-churn CDC scenarios.

A right-time data movement layer allows teams to balance batch and real-time needs without rebuilding pipelines.

Estuary provides a hybrid alternative that combines predictable cost, operational reliability, and flexibility.

Build vs. Buy: The Hidden Cost of Flexible Pipelines Development

This section explores the real trade-offs behind build vs. buy data pipelines, beyond surface-level cost comparisons.

The Build Trap: Why Control Is Expensive

So, back to the CTO’s story: one of the engineers on your team has examined the technical requirements, and she's positive she can make the DIY solution work.

Now you're curious about the technical specifications. Here are the inputs for your equation:

- Data source: Postgres production with 50 core tables

- Daily volume: 10 GB of changed data (mix of new inserts and high-frequency updates/CDC)

- Data churn: Medium to high (rows are updated multiple times a day)

- Schema evolution: 2-3

ALTER TABLEcommands per month from the business team. - Latency: <5 minutes for reporting dashboards

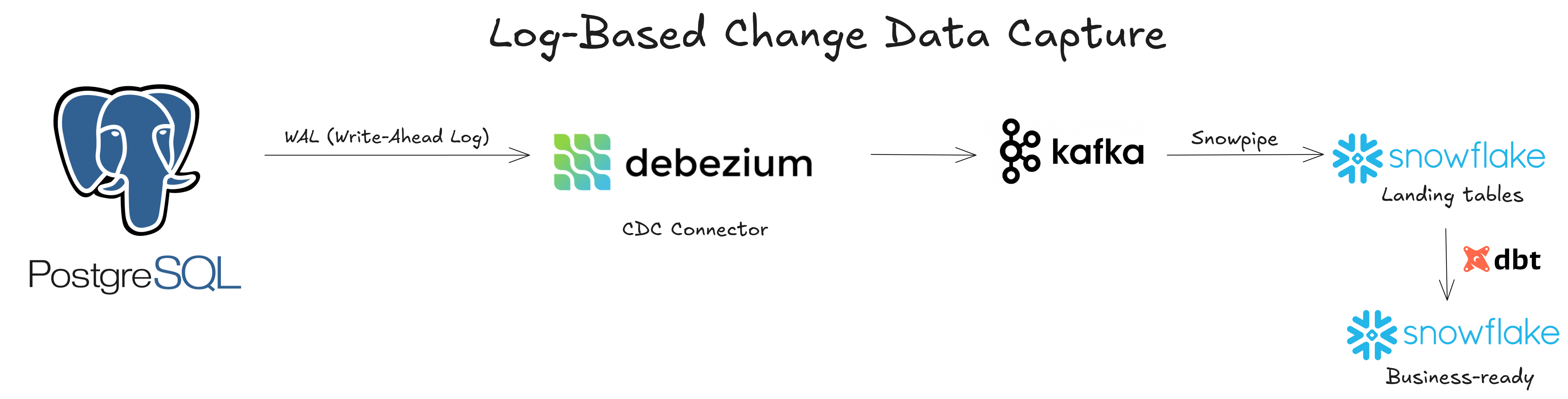

Eagerly, the said engineer explains her approach to building a log-based Change Data Capture (CDC) pipeline. She'll use a 100% open-source stack, which includes a CDC engine like Debezium, a message broker like Apache Kafka, and a Snowflake Kafka connector to handle landing data. Finally, she'll stick with dbt-core to transform the CDC stream into final, business-ready tables.

The engineering team’s enthusiasm to build something of their own could turn out to be a trap. Writing the code is often only 10% of the work, and DIY pipelines are far from set-it-and-forget-it. The maintenance and evolution can take up to 20% of an engineer’s time, and she'll have to manage things like exactly-once data delivery, schema changes, state management, and updates. After you factor in her annual salary, your total cost of ownership (TCO) becomes hard to ignore (but we’ll get to this later).

And of course, your team will have to spend a significant amount of time re-inventing the wheel.

Here’s an example of how your engineer should handle schema evolution caused by frequent ALTER TABLE commands from upstream.

pythonimport psycopg2

import snowflake.connector

def sync_schema(table_name):

# Fetch Postgres Schema

pg_conn = psycopg2.connect(...)

with pg_conn.cursor() as cur:

cur.execute(f"SELECT column_name, data_type FROM information_schema.columns WHERE table_name = '{table_name}'")

pg_columns = {row[0]: map_type(row[1]) for row in cur.fetchall()}

# Fetch Snowflake Schema

sn_conn = snowflake.connector.connect(...)

sn_columns = sn_conn.cursor().execute(f"DESC TABLE {table_name}").fetchall()

sn_col_names = [col[0].lower() for col in sn_columns]

# Identify missing columns

for col, dtype in pg_columns.items():

if col not in sn_col_names:

print(f"Adding missing column {col} to Snowflake")

sn_conn.cursor().execute(f"ALTER TABLE {table_name} ADD COLUMN {col} {dtype}")

def map_type(pg_type):

# Mapping layer

mapping = {"uuid": "VARCHAR", "timestamp with time zone": "TIMESTAMP_TZ", "jsonb": "VARIANT"}

return mapping.get(pg_type, "VARCHAR")

Among these logics, several (such as hard delete handling, state reconstruction logic, and monitoring and alerting) require substantial engineering effort. Many ambitious startups set out to build their custom engine, only to end up with a brittle system that requires a full-time babysitter.

Unless your product is a data integration tool, don’t build one yourself.

While full control over your data pipelines can keep your engineering team happy, it comes with the hidden cost of maintenance and evolution. At that point, it’s worth taking a closer look at a managed SaaS solution.

The Buy Dilemma: Trading Maintenance for Flexibility

Another engineer on your team has heard about a traditional data moving solution called Fivetran. It’s a managed SaaS that can help your team accelerate development and achieve a certain level of scalability.

Fivetran acts as an intermediary that orchestrates the entire EL (Extract-Load) process. It provides a native connector to Postgres Write-Ahead Log (WAL). Then, it stages the data in cloud storage before pushing it to Snowflake. Along the way, Fivetran handles tasks such as automatic schema drift, deduplication, idempotency, and retries.

At first glance, it sounds like a dream come true. You can save a lot of engineering effort, while your team can focus on more important things than babysitting your pipelines.

However, just like other traditional managed SaaS tools, Fivetran comes with several hiccups.

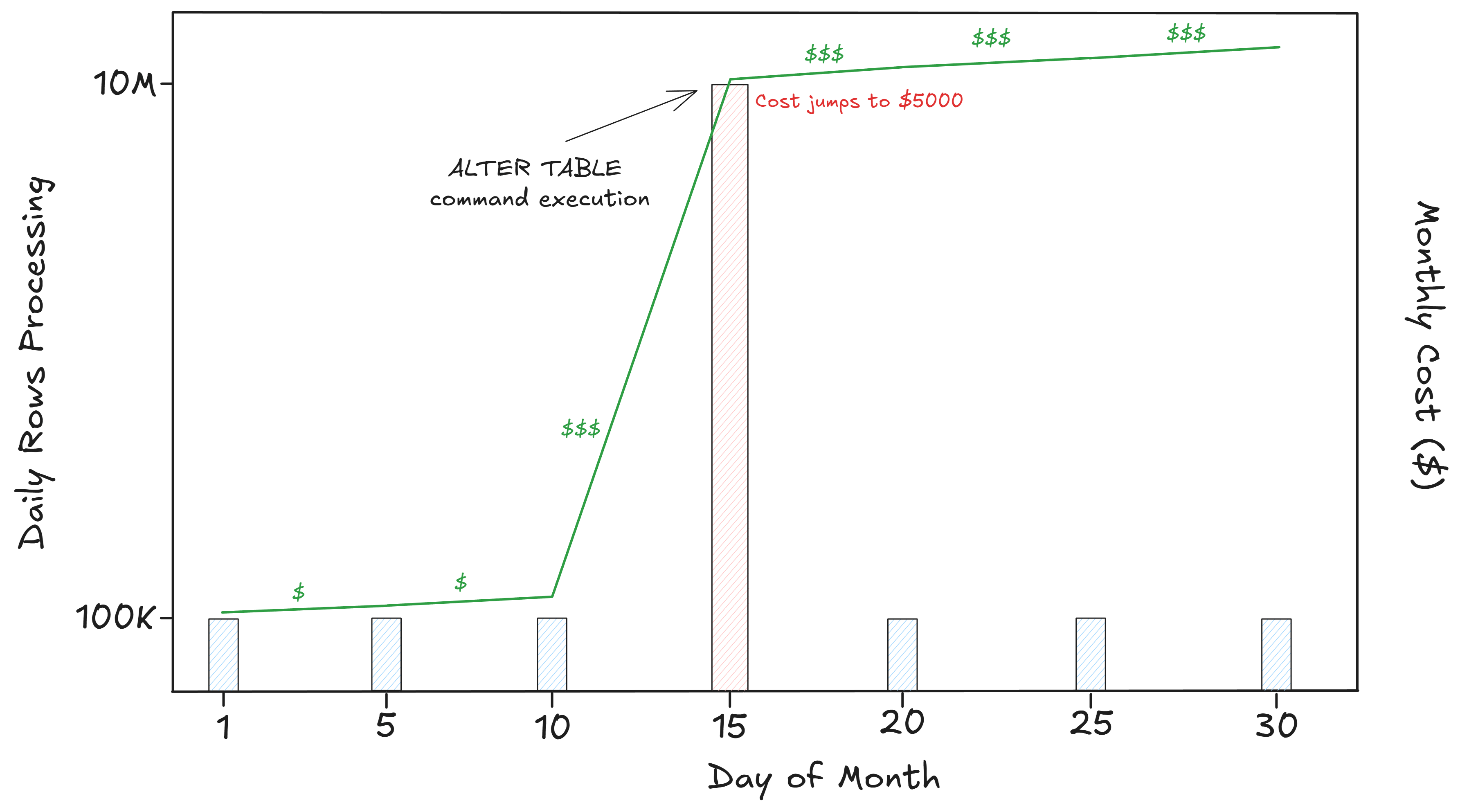

When you look at its pricing model, you’ll see that they charge your pipelines based on monthly active rows (MAR). In a high-churn scenario, where many rows are updated every day, Fivetran can become exponentially expensive. In turn, this means that the cost can be highly unpredictable.

Let’s go back to your initial inputs on Postgres to Snowflake pipelines. We'll break down the cost of how the MAR pricing model might hurt your company unexpectedly.

Here are those inputs:

- Total records in Postgres: 10 million rows

- Daily updates: 100,000 rows (1% of the database)

- Average row size: 1 KB

- Fivetran public pricing for the Enterprise tier: ~$500 per 1 million MAR

During a typical month, we can assume that only 10% of your users are active, which means that only 10% of your database is touched. That’s 1,000,000 MAR, or roughly $500 per month.

That sounds reasonable, until it doesn’t.

When your backend team decides to add a new column with a default value, they execute this simple command in the Postgres backend ALTER TABLE users ADD COLUMN country DEFAULT 'US'

Now, this will touch all of your 10 million rows, and even though it’s an instant operation, Fivetran sees this as 10,000,000 active rows.

The consequence: you’ll pay around $5,000 in the worst-case scenario where your 10 million rows are fragmented. That’s a 10X cost spike triggered by a single update.

Cost increase aside, with a paid vendor like Fivetran, you'll be operating in a “black box” model. You won’t have a fine-grained lineage, observability, and control over your data pipelines.

Plus, a managed data ingestion tool like Fivetran only lands data in raw schemas in your Snowflake. You still have to write the dbt models to transform these tables by joining them together and applying business logic. That’s when you might fall back into the build mode.

It feels like this struggle between building and buying never ends. Are these really the only two choices that you have?

“There is another,” said Yoda.

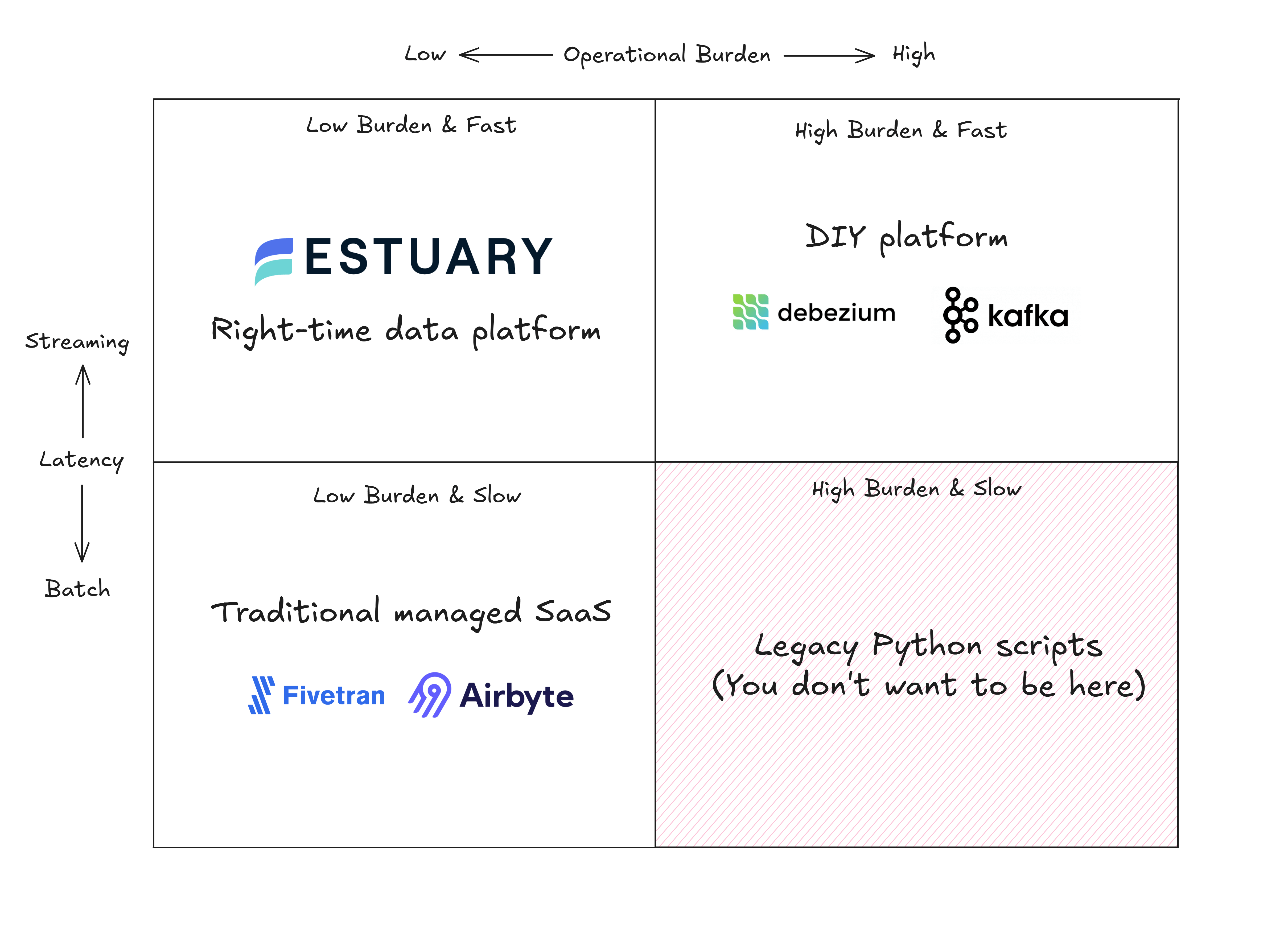

The Third Way: A Dependable, Right-Time Data Movement Layer

Estuary is the right-time data platform, purposefully built to become a dependable, unified data movement layer for enterprises.

For so long, data engineers had to choose between batch and streaming, sacrificing either throughput and latency. Estuary’s right-time approach enables your data team to do batch and real-time processing side by side, within a unified platform.

With Estuary, data captured from external systems (like CDC from a Postgres database) will be stored in collections, which act as an intermediate, durable storage layer that exists in cloud storage like AWS S3 or Google Cloud Storage.

From these collections, data can move to the destination on any cadence (batch or real-time) within the same platform. There’s even a Replay functionality where you don’t have to re-scan Postgres. You can “replay” the Estuary collection directly from cloud storage to Snowflake, for example.

Estuary's technical edge comes from Gazette, an open-source streaming broker similar to Kafka. However, while Kafka stores data on local disks, Gazette keeps data in fragmented files on cloud storage (which powers Estuary collections). With the decreasing cost of cloud storage, we can have a prolonged retention for a fraction of the cost of Kafka disks.

In addition, Estuary offers a strong alternative to both DIY and managed SaaS platforms thanks to its exactly-once semantics and in-stream transformation. This reduces the development overhead while maintaining a high reliability of your pipelines.

Your data engineer team can also choose to deploy Estuary on a public or private SaaS cloud. Heck, you can even bring your own cloud (BYOC).

As for Postgres connectors, Estuary supports a variety of versions and platforms. It ranges from self-hosted PostgreSQL, Amazon RDS and Aurora, to Supabase and Neon PostgreSQL.

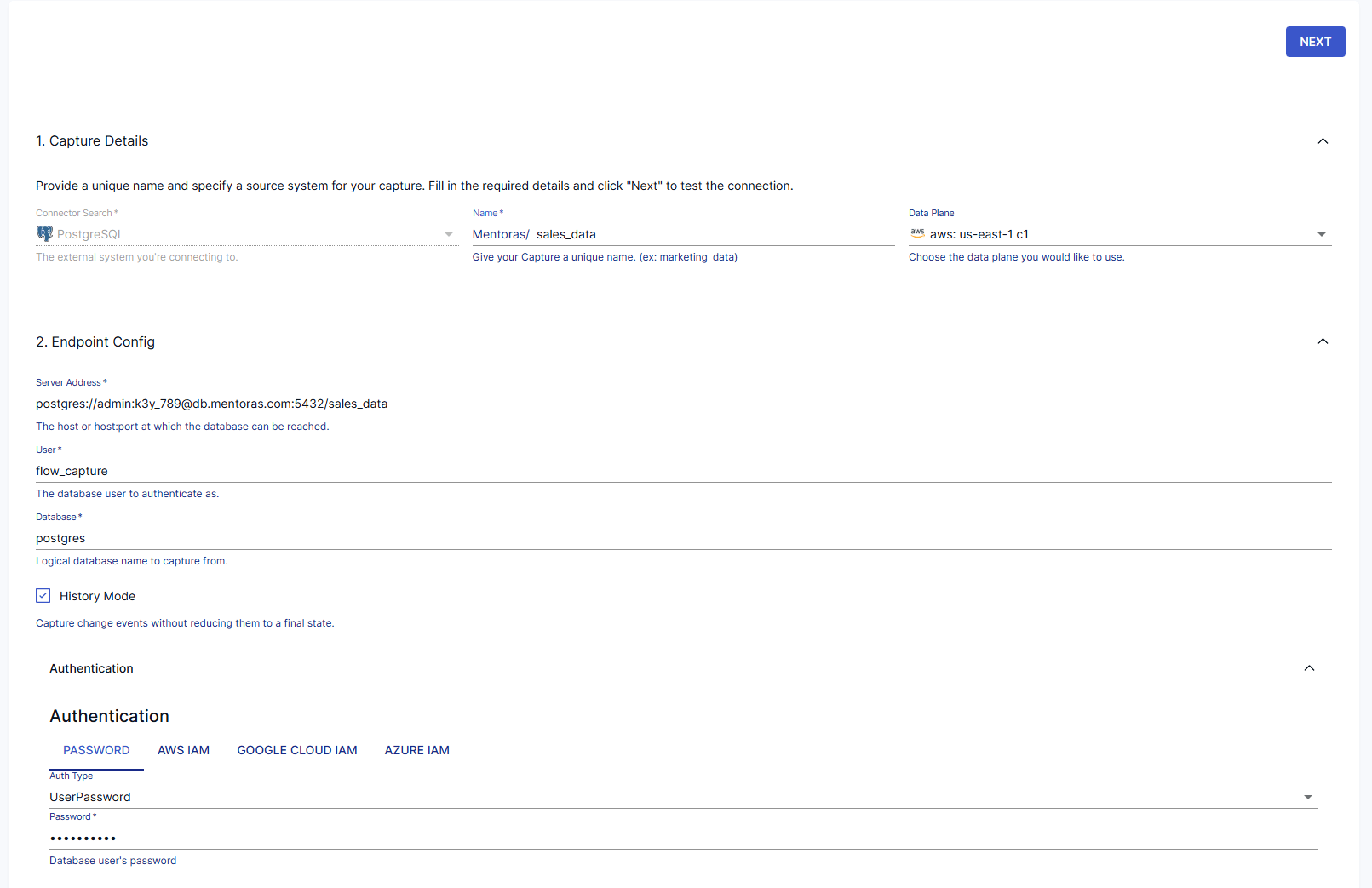

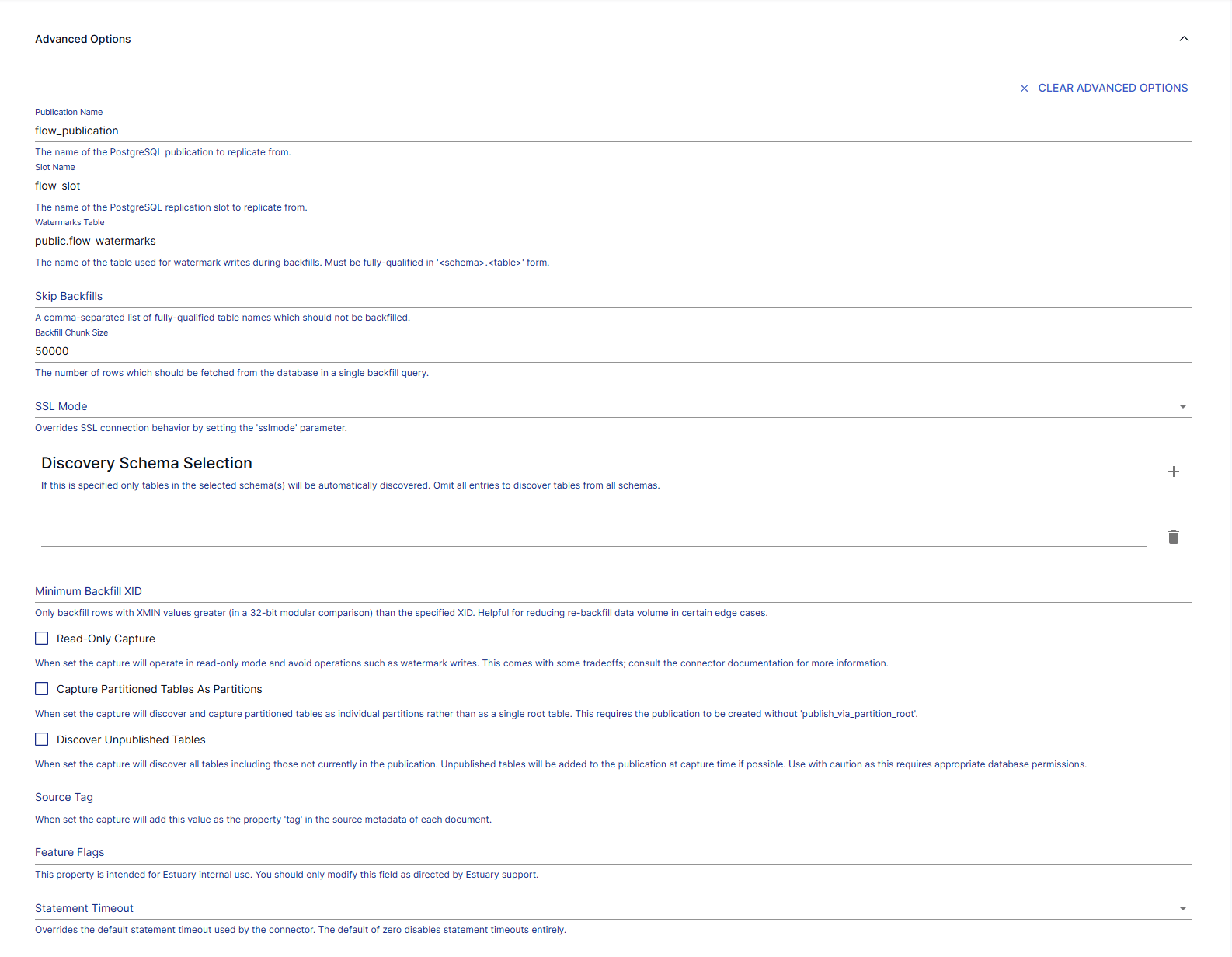

When creating a capture for PostgreSQL, Estuary allows you to set some advanced features rather easily, whereas, in a DIY solution, they would require heavy engineering work.

Some of the most important features include:

- Backfill chunk size: This corresponds to the number of rows Estuary will fetch in the initial snapshot phase. In a DIY solution, you’ll have to manually implement keyset pagination, which requires tuning to avoid OOM (Out of Memory) error. Estuary, on the other hand, handles the state management of these chunks for you.

- Minimum backfill XID: When using tools like Debezium, this ID is a specific transaction ID (XID) you start from. It’s extremely hard to get it right both with a DIY and managed solution like Fivetran. Estuary uses this to stitch together the history and the stream perfectly.

- Watermarks table: Estuary uses this tracking table to coordinate between the snapshotting and the WAL (write-ahead log). A DIY solution would require a separate state storage (like Redis) for this, while Estuary keeps the state in Postgres itself.

- Read-only capture: This mode enables Estuary to capture changes from a read-replica without having to write to a watermark table. It’s particularly difficult to implement since logical replication slots usually require being on the Primary.

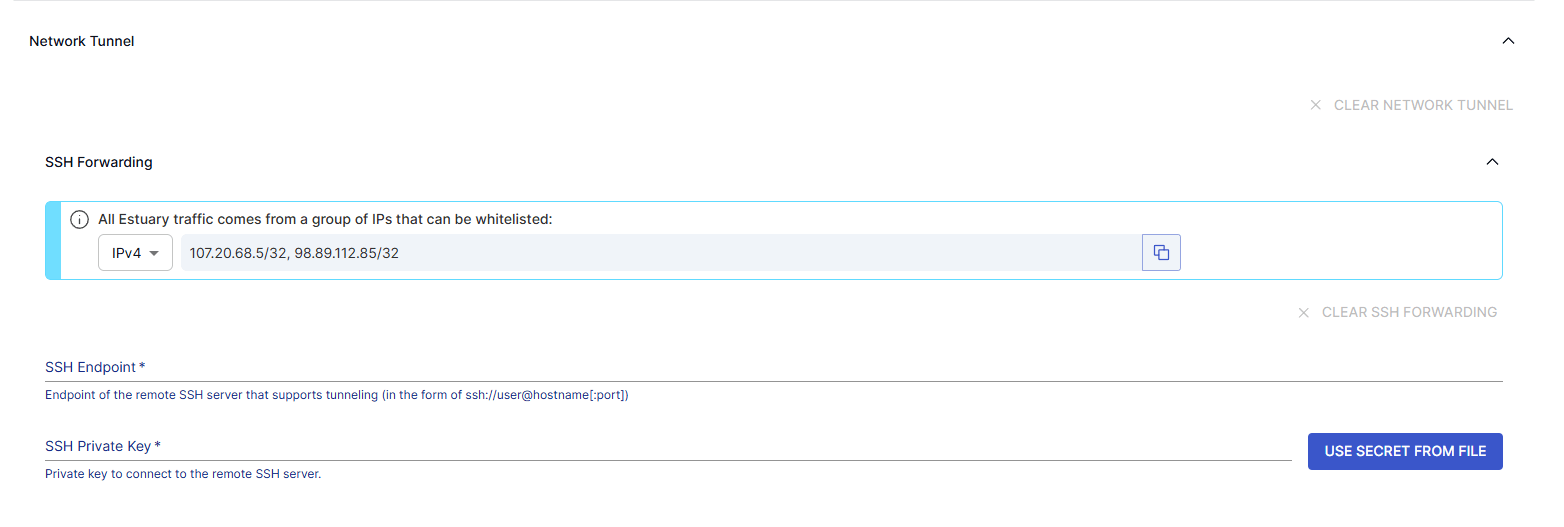

- SSH tunnel: We can set up an SSH tunnel while creating the PostgreSQL capture in Estuary, which will serve as a secure, encrypted bridge that allows Estuary to connect to a PostgreSQL instance sitting in a private network (think AWS VPC). Therefore, the data captures won't be exposed to the open internet. Obviously, this would require complex logic handling and a certain level of networking and security expertise on your team to get it right.

Here’s an example of how you can create a PostgreSQL capture in the Estuary UI:

You'll only need to specify the name and the endpoint configuration of the source system (in this case, PostgreSQL) to get started.

Then, in the advanced settings, you can set the parameters for additional options, which we have covered above: backfill chunk size, minimum backfill XID, read-only capture, and more.

We can, of course, configure the SSH connection in the Network Tunnel section.

You can also manipulate all of these configurations programmatically with Estuary CLI via a YAML format, which will allow you to define the parameters in a single file.

This is a demonstration of how your team can get the flexibility of a DIY platform (build) with the speed of a managed service (buy) with Estuary.

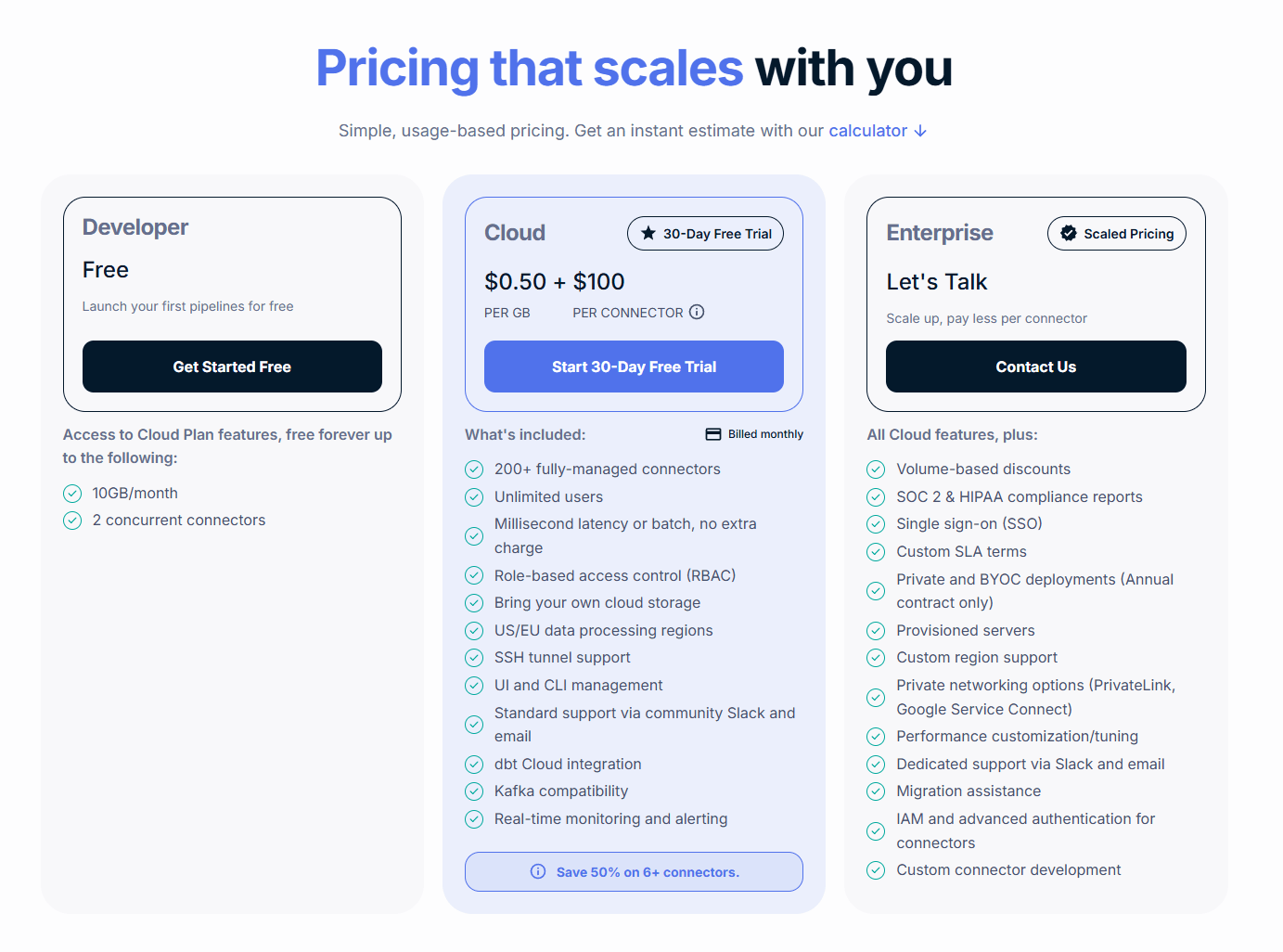

On the pricing note, Estuary has a more predictable TCO. It charges you based on the volume rather than the total number of rows.

Let’s revisit our earlier hypothesis to compare the cost of moving data from Postgres to Snowflake with Estuary vs. Fivetran.

With daily updates of around 100,000 rows and an average row size of 1 KB, you can expect to move 100k * 30 * 1KB = 3GB of data per month. Additionally, the initial load will include 10GB of data.

Estuary’s public pricing is $0.5 per GB and $100 per connector. So, the total cost for your Postgres data movement use case is $100 (Postgres connector) + $100 (Snowflake connector) + $0.5 * 3 = $201 per month.

Even in an extreme case, such as a column update affecting 10 million rows, which made Fivetran’s cost skyrocket, Estuary’s cost remains as expected.

10 million rows * 1KB = 10GB ⇒ a total cost of 10GB * $0.5 + $200 = $205 for a month with heavy activity

A mere 2% increase compared to 1,000% with Fivetran.

True TCO Comparison: When It Makes Sense to Build vs. to Buy

Up until this point, as a new CTO, you’ve heard the arguments from both sides of your data engineering team. Some are fond of the DIY approach, while others are keen on exploring the managed SaaS options. Both paths have their own strengths and weaknesses, and Estuary is an alternative worth considering.

Yet you have to pick only one.

When evaluating the true TCO behind build vs. buy data pipelines, you might want to think beyond the technical capabilities and short-term cost. How much will the solution set you back in the long run?

Here are a few decision signals you should focus on:

- Time to value (TTV): How quickly can business stakeholders benefit from this solution? You want dashboards to be ready next week or next month, not next quarter.

- Talent allocation: The best people on your team should be working on your core products, not plumbing your data pipelines. Unless you're a data integration company, your resources are better spent elsewhere.

- Scalability and maintenance: Your 10x growth shouldn’t lead to a 50x cloud bill. Another thing to keep in mind: when your lead engineer leaves the team, you don’t want the pipelines to collapse with them.

Let’s take a step back and put everything we’ve covered so far on the table.

| DIY (build) | Traditional SaaS (Fivetran) | Estuary (right-time data platform) | |

| Architecture | Debezium + Kafka Custom streaming logic | Point-to-point managed batch | Batch and streaming Capture → Collection → Streaming sink |

| Engineering effort | High Requires a lot of “plumbing” and a Kafka expertise | Low UI-driven | Low Developer first with managed infra |

| Latency | Sub-second but requires constant tuning | >15 minutes | Sub-second |

| Pricing model | Infrastructure cost + engineers salary Hard to track ROI | MAR (monthly active rows) Expensive in case of high-churn data | Volume-based Highly predictable and linear cost |

| Flexibility | Total ownership of the code | Low Vendor lock-in logic and destination types | High Capture once, replay many times |

| Best fit when | You’re operating at a massive scale with proprietary databases that require complex handlings. | You have a small, non-technical team with a lot of SaaS systems to connect to (Salesforce, Workday). Real-time is not your priority. | You're building a future solution for a fast-growing tech company. You're looking for a best price-to-performance ratio for Postgres CDC with real-time streaming. |

If you want to evaluate whether a right-time data movement layer fits your use case, you can explore Estuary with a free account or talk to the team to discuss your requirements.

Conclusion

Given the current pace of technology, build vs. buy is no longer a binary decision.

CTOs and Heads of Data must look beyond technical capabilities and short-term gains to see the true TCO and long-term evolution. It’s not just about which solution has the best technologies; it’s also about which one enables your team to strike the best balance.

Modern enterprises need a solution that scales like a managed service while still giving teams the flexibility to move fast.

Estuary, the right-time data movement platform, can offer a hybrid approach in the build vs. buy debate. With its technical flexibility, cost predictability, and low development overhead, it empowers data teams to deliver a technical and business ROI quickly.

Start building with Estuary today. Book a demo to explore how right-time data movement can support your growth.

About the author

Results-driven data professional with 7+ years of experience designing robust data platforms and AI solutions. Proven track record in building scalable pipelines, mentoring teams, and translating complex data into actionable insights. Fluent in Python, Spark, and other cloud technologies.