Introduction

MongoDB is one of the most popular NoSQL databases, known for its flexible document model, scalability, and ability to handle massive amounts of unstructured or semi-structured data. Many organizations use MongoDB as the backbone for applications, event tracking, and operational data.

To unlock the full value of this data, however, teams often need to move it into data warehouses, analytics platforms, or other operational systems. That’s where ETL tools for MongoDB come in. These platforms make it possible to extract data from MongoDB, transform it into structured formats, and load it into destinations like Snowflake, BigQuery, Databricks, or ClickHouse for deeper analytics and integration.

In this article, we’ll explore the five best ETL tools for MongoDB. We’ll cover modern streaming-first platforms, fully managed enterprise options, lightweight solutions, and even MongoDB’s own native utilities, so you can decide which approach fits your business needs.

What Makes ETL for MongoDB Unique

ETL for MongoDB comes with challenges that differ from traditional relational databases. Because MongoDB is a document-oriented database, its flexible schema and nested data structures require special handling when building pipelines. Here are a few unique considerations:

- Flexible, evolving schema: Unlike relational databases, MongoDB collections do not enforce a rigid schema by default. Fields can be added, removed, or changed over time, which can easily break pipelines without strong schema management.

- Nested and array data: MongoDB documents often contain deeply nested JSON and arrays. ETL tools need to flatten or map these structures properly to work with SQL-based warehouses and analytics tools.

- Change Streams for real-time: MongoDB’s Change Streams API enables continuous capture of inserts, updates, and deletes. Change Streams require a replica set or sharded cluster, so standalone deployments fall back to batch. Tools that leverage Change Streams can deliver real-time MongoDB ETL pipelines instead of relying only on batch exports.

- Time series and special collections: Time series collections and views require incremental batch approaches instead of change streams, so the ETL tool must support multiple capture modes.

- Indexing and cursor fields: Efficient incremental loads depend on properly indexed cursor fields (like _id or timeField). Without indexing, backfills and polling can be slow and resource-intensive.
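To make the nested and array data consideration concrete, here is a minimal Python sketch of one common approach: nested sub-documents become prefixed columns on a parent row, and each array element becomes a row in a child table. The function names `flatten` and `explode`, the `_` separator, the `parent_id` and `position` columns, and the sample `order` document are all illustrative assumptions, not the behavior of any particular tool.

```python
from typing import Any


def flatten(doc: dict[str, Any], parent: str = "", sep: str = "_") -> dict[str, Any]:
    """Flatten nested sub-documents into a single-level dict of column-like keys."""
    flat: dict[str, Any] = {}
    for key, value in doc.items():
        name = f"{parent}{sep}{key}" if parent else key
        if isinstance(value, dict):
            flat.update(flatten(value, name, sep))
        else:
            flat[name] = value  # arrays are kept whole here; explode() handles them
    return flat


def explode(doc: dict[str, Any], array_field: str) -> list[dict[str, Any]]:
    """Emit one flat row per element of a top-level array field (child-table style)."""
    rows = []
    for position, item in enumerate(doc.get(array_field, [])):
        row = {"parent_id": doc.get("_id"), "position": position}
        row.update(flatten(item) if isinstance(item, dict) else {"value": item})
        rows.append(row)
    return rows


# Hypothetical sample document.
order = {
    "_id": "o-1",
    "customer": {"name": "Ada", "address": {"city": "Oslo"}},
    "items": [{"sku": "A1", "qty": 2}, {"sku": "B7", "qty": 1}],
}

parent_row = flatten({k: v for k, v in order.items() if k != "items"})
child_rows = explode(order, "items")
```

Here `parent_row` holds the columns `_id`, `customer_name`, and `customer_address_city`, while `child_rows` holds one row per line item keyed back to the order. Whether arrays should become child tables, JSON columns, or repeated fields depends on the destination warehouse.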

These factors mean that not every ETL platform handles MongoDB equally well. Choosing the right solution depends on whether you need batch or streaming integration, how you want to manage schema drift, and how critical near real-time pipelines are to your business.

Some tools replicate changes for analytics (CDC), while others focus on batch exports and transformations. The best fit depends on whether freshness or transformation depth is your primary need.

How to Choose the Best MongoDB ETL Tools

Before selecting a tool, evaluate how well it supports MongoDB’s document model, scaling requirements, and real-time capabilities. Key factors to consider include:

- Real-time vs batch support: Does the tool leverage MongoDB Change Streams for continuous replication, or does it only provide scheduled batch exports? Real-time ETL ensures downstream systems are always up to date, while batch pipelines may be sufficient for periodic reporting.

- Handling schema drift: MongoDB’s flexible schema can introduce new fields or remove existing ones at any time. The best ETL tools automatically adjust or enforce schemas to prevent pipeline failures.

- Support for nested JSON structures: Because MongoDB documents often include arrays and nested objects, the ETL platform should support flattening or mapping these structures into relational-friendly formats.

- Deployment and scalability: Some teams prefer fully managed cloud services, while others require private deployments or bring-your-own-cloud options for compliance. Consider whether the tool can scale to large datasets and high-throughput pipelines.

- Security and governance: Features like SSH tunneling, VPC peering, PrivateLink, and access control are critical for organizations that need secure and compliant pipelines from MongoDB.

- Cost and pricing model: Tools vary widely in pricing models — from open-source to usage-based SaaS to enterprise subscriptions. Cost often scales with data volume, so it’s important to match the tool to your expected throughput.

Evaluating these criteria will help you choose the right MongoDB ETL solution — whether you need a streaming-first platform, a simple batch loader, or an enterprise-grade integration suite.
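As a rough illustration of what handling schema drift involves, the sketch below compares each incoming document against the set of field paths and types seen so far. The helpers `infer_schema` and `diff_schema`, the dotted-path convention, and the sample documents are assumptions made for this example; managed tools implement drift handling in their own ways.

```python
from typing import Any


def infer_schema(doc: dict[str, Any], prefix: str = "") -> dict[str, str]:
    """Map each dotted field path in a document to the name of its Python type."""
    schema: dict[str, str] = {}
    for key, value in doc.items():
        path = f"{prefix}.{key}" if prefix else key
        if isinstance(value, dict):
            schema.update(infer_schema(value, path))
        else:
            schema[path] = type(value).__name__
    return schema


def diff_schema(known: dict[str, str], doc: dict[str, Any]) -> dict[str, list[str]]:
    """Compare one incoming document against the schema seen so far."""
    seen = infer_schema(doc)
    return {
        "added": sorted(p for p in seen if p not in known),
        "missing": sorted(p for p in known if p not in seen),
        "retyped": sorted(p for p in seen if p in known and seen[p] != known[p]),
    }


# Hypothetical documents: the second adds plan.seats and nulls out email.
known = infer_schema({"_id": 1, "email": "a@example.com", "plan": {"tier": "free"}})
drift = diff_schema(known, {"_id": 2, "email": None, "plan": {"tier": "pro", "seats": 5}})
```

In this run `drift` reports `plan.seats` as added and `email` as retyped. A pipeline could use a report like this to widen a column, add a nullable column, or quarantine the document, depending on how strictly the destination schema is enforced.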

Best ETL Tools for MongoDB Integration

If you are looking to move or sync data from MongoDB into analytics platforms or data warehouses, the right ETL solution can save time and improve reliability. Below are five of the most effective ETL tools for MongoDB integration, covering native options, streaming-first platforms, and enterprise-grade services.

1. MongoDB Native Tools (Change Streams + mongoexport)

MongoDB provides native utilities and APIs that can serve as the foundation for ETL pipelines. While not full-featured ETL platforms, they allow developers to move or stream data directly from MongoDB without third-party dependencies.

Key Components

- mongoexport and mongoimport: Command-line utilities that export MongoDB collections to JSON or CSV and import them back. These tools are free and simple, making them cost-effective for small teams or one-time migrations.

- Change Streams API: Captures inserts, updates, and deletes in real time, enabling continuous pipelines without writing custom polling logic. This gives developers a way to build streaming ETL directly into downstream systems.

- MongoDB BI Connector / Atlas Data Federation (formerly Atlas Data Lake): Optional MongoDB products that allow SQL-based querying and federation with external storage like S3. These extend MongoDB’s usefulness for analytics without external ETL software.
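A minimal sketch of consuming Change Streams from Python might look like the following. It assumes the PyMongo driver, whose `Collection.watch()` method returns an iterable change stream. The function names `apply_change` and `replicate`, the in-memory `state` target, and the simulated events are hypothetical and stand in for a real destination.

```python
from typing import Any, Optional

DATA_OPS = ("insert", "update", "replace")


def apply_change(event: dict[str, Any], target: dict[Any, dict[str, Any]]) -> Any:
    """Apply one change event to a target keyed by _id and return its resume token."""
    op = event["operationType"]
    if op in DATA_OPS:
        # With full_document="updateLookup", update events carry the current document.
        # It can be missing if the document was deleted before the lookup ran.
        doc = event.get("fullDocument")
        if doc is not None:
            target[event["documentKey"]["_id"]] = doc
    elif op == "delete":
        target.pop(event["documentKey"]["_id"], None)
    # Other event types (drop, rename, invalidate, ...) are ignored in this sketch.
    return event["_id"]


def replicate(collection: Any, target: dict, resume_token: Optional[Any] = None) -> None:
    """Tail a PyMongo collection's change stream into the target (blocks until closed)."""
    with collection.watch(full_document="updateLookup", resume_after=resume_token) as stream:
        for event in stream:
            resume_token = apply_change(event, target)
            # A real pipeline would persist resume_token durably at this point.


# Simulated events, so the apply logic can be exercised without a database.
events = [
    {"_id": {"_data": "t1"}, "operationType": "insert",
     "documentKey": {"_id": 1}, "fullDocument": {"_id": 1, "status": "new"}},
    {"_id": {"_data": "t2"}, "operationType": "insert",
     "documentKey": {"_id": 2}, "fullDocument": {"_id": 2, "status": "new"}},
    {"_id": {"_data": "t3"}, "operationType": "update",
     "documentKey": {"_id": 1}, "fullDocument": {"_id": 1, "status": "shipped"}},
    {"_id": {"_data": "t4"}, "operationType": "delete", "documentKey": {"_id": 2}},
]

state: dict[Any, dict[str, Any]] = {}
token = None
for event in events:
    token = apply_change(event, state)
```

Against a live deployment, `replicate(MongoClient(uri)["shop"]["orders"], state)` would be the entry point, with `uri`, `shop`, and `orders` as placeholders. Persisting the resume token after each applied event is what lets a restarted process continue where it left off rather than re-reading the collection.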

Considerations

- Requires custom engineering to build full ETL pipelines (schema handling, flattening, retries).

- Does not include built-in monitoring, error handling, or governance features.

- Best suited for teams with development resources and custom pipeline needs.

- Lacks built-in connectors to warehouses—requires scripting or orchestration.
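Part of that custom engineering is incremental extraction. The sketch below shows one way to poll a collection in batches using an indexed cursor field and a checkpoint. It assumes a PyMongo-style collection exposing `find().sort().limit()`. The names `cursor_filter` and `poll` are illustrative, and `FakeCollection` is an in-memory stand-in included only so the example runs without a database.

```python
from typing import Any, Iterator, Optional


def cursor_filter(field: str, last_seen: Optional[Any]) -> dict[str, Any]:
    """Build a query filter selecting documents past the checkpoint."""
    return {} if last_seen is None else {field: {"$gt": last_seen}}


def poll(collection: Any, field: str, last_seen: Optional[Any],
         batch_size: int = 1000) -> Iterator[list[dict[str, Any]]]:
    """Yield batches in cursor-field order until no newer documents remain."""
    while True:
        query = cursor_filter(field, last_seen)
        batch = list(collection.find(query).sort(field, 1).limit(batch_size))
        if not batch:
            return
        yield batch
        last_seen = batch[-1][field]  # checkpoint; persist this in a real pipeline


class FakeCursor(list):
    """Stand-in for a driver cursor: supports only sort() and limit()."""

    def sort(self, field: str, direction: int) -> "FakeCursor":
        return FakeCursor(sorted(self, key=lambda d: d[field], reverse=direction < 0))

    def limit(self, n: int) -> "FakeCursor":
        return FakeCursor(self[:n])


class FakeCollection:
    """Stand-in for a collection: supports only {field: {"$gt": value}} filters."""

    def __init__(self, docs: list[dict[str, Any]]) -> None:
        self.docs = docs

    def find(self, query: dict[str, Any]) -> FakeCursor:
        matched = self.docs
        for field, cond in query.items():
            matched = [d for d in matched if d[field] > cond["$gt"]]
        return FakeCursor(matched)


docs = [{"_id": i} for i in range(1, 6)]
batches = [[d["_id"] for d in b] for b in poll(FakeCollection(docs), "_id", None, batch_size=2)]
resumed = [[d["_id"] for d in b] for b in poll(FakeCollection(docs), "_id", 3, batch_size=2)]
```

Two caveats apply to this pattern. A strict `$gt` comparison is only safe when the cursor field is unique, as `_id` is. With a non-unique field such as a timestamp, documents sharing the boundary value can be skipped between batches. Polling on `_id` also picks up new documents only, so updates and deletes still need Change Streams or a separately maintained modified-at field.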

2. Estuary

Estuary supports MongoDB as a source using Change Streams for continuous replication, along with snapshot backfills for historical data. It is designed for teams that want more than scheduled batch exports, especially when MongoDB data feeds real-time analytics or operational systems.

The platform handles standard collections, nested JSON documents, and evolving schemas. It supports multiple capture approaches, including streaming via Change Streams and polling-based methods for collections where streaming is not available.

Key Strengths

- Native Change Stream capture: Continuously stream inserts, updates, and deletes from MongoDB with exactly-once delivery, so downstream systems stay consistent with the source.

- Adaptive schema management: Automatically adjusts to evolving document structures, preserving nested and array fields with type-safe mapping into relational destinations.

- Hybrid capture modes: Choose between streaming Change Streams, snapshot backfills, or incremental polling for time-series or archival collections.

- Operational efficiency: Offload heavy reads from production databases by capturing through replicas or secondary nodes.

- Enterprise-grade security: Deploy Estuary in your own cloud or as a managed service with full network isolation via PrivateLink, VPC peering, or SSH tunneling.

- No-code data movement: Configure MongoDB sources and destinations visually, or manage pipelines declaratively via YAML — no connectors to code or maintain.

- Unified transformation layer: Apply lightweight SQL or TypeScript transformations in-line for filtering, normalization, or joins before data lands in your warehouse.

Considerations

- May be more than necessary for simple nightly exports or small reporting workloads.

- Streaming-style pipelines require planning around nested document flattening and downstream schema design.

- As with any MongoDB integration, performance and cost should be validated against your specific data volume and update frequency.

3. Fivetran

Fivetran is a fully managed ETL and ELT platform that provides automated pipelines for MongoDB and hundreds of other data sources. It is popular with enterprises that want hands-off data integration without building or maintaining their own pipelines.

Key Features for MongoDB

- MongoDB connector: Extracts collections from MongoDB (including MongoDB Atlas) and loads them into destinations such as Snowflake, BigQuery, Databricks, or Redshift.

- Automated schema management: Detects schema changes in MongoDB and adjusts pipelines automatically to prevent breakages.

- Prebuilt connectors: Supports hundreds of other SaaS and database sources, making it easy to blend MongoDB data with CRM, ERP, and marketing systems.

- Managed reliability: Monitoring, error handling, and retries are built in, reducing the need for dedicated engineering support.

Considerations

- Pipelines are batch-oriented: syncs run on a schedule, so data freshness is bounded by the sync interval rather than delivered as a continuous stream.

- Monthly Active Rows (MAR) pricing can become costly with high update volumes or frequent syncs.

- Less control over transformation logic in transit; transformations occur post-load in the warehouse.

- Still subject to the read performance and resource limits of the source MongoDB deployment.

4. Stitch Data

Stitch Data is a cloud-based ETL service built on top of Singer’s open-source connectors. It offers a simple and affordable way to replicate MongoDB data into popular data warehouses for analytics.

Key Features for MongoDB

- MongoDB connector: Supports extracting data from MongoDB collections and loading it into destinations such as Snowflake, BigQuery, Redshift, or PostgreSQL.

- Cloud-native simplicity: Fully managed service with an easy-to-use interface that reduces setup time.

- Singer ecosystem: Built on Singer taps and targets, which gives flexibility to extend or customize integrations if needed.

- Affordable entry point: Transparent pricing and lower costs than enterprise-first tools like Fivetran.

Considerations

- Limited to batch replication; no streaming CDC currently available.

- Advanced transformations and deep data modeling may require external tooling or engineering effort.

- Monitoring and error recovery are more basic compared to enterprise platforms.

- Best suited for straightforward, periodic syncs rather than complex production workloads.

5. Talend

Talend is an enterprise-grade data integration and transformation platform that supports MongoDB alongside a wide range of structured and unstructured data sources. It is often chosen by large organizations that require robust governance, compliance, and advanced transformation capabilities.

Key Features for MongoDB

- Native MongoDB connectors: Talend provides components to read from and write to MongoDB, including MongoDB Atlas. Data can be extracted from collections, transformed in Talend Studio, and loaded into destinations like Snowflake, Databricks, or on-prem databases.

- Powerful transformations: Offers a drag-and-drop UI in Talend Studio and support for complex transformations, making it possible to clean, enrich, and reshape MongoDB data before loading.

- Enterprise governance: Includes features like data quality checks, lineage tracking, and compliance reporting—important for regulated industries.

- Deployment flexibility: Available as Talend Open Studio (open-source), a commercial cloud offering, or self-hosted enterprise deployments.

Considerations

- Generally requires more engineering involvement and setup time than lightweight tools.

- Batch-oriented pipelines may not meet the needs of near real-time analytics use cases.

- The learning curve and total cost are higher, especially for enterprise editions.

- Self-managed or hybrid deployments can increase operational overhead.

Comparison of ETL Tools for MongoDB

| Tool | Real-Time Support | Ease of Use | Pricing Model | Best Fit |
| --- | --- | --- | --- | --- |
| MongoDB Native Tools | Change Streams enable real-time, mongoexport is batch-only | Developer-focused, requires scripts | Free with MongoDB | Teams building custom pipelines in-house |
| Estuary | Streaming-first with Change Data Capture | No-code, fast setup | Transparent volume-based | Teams needing low-latency or hybrid (stream + batch) MongoDB pipelines |
| Fivetran | Batch-first | Very easy, fully managed | MAR-based (costly at scale) | Teams wanting managed batch sync with minimal maintenance |
| Stitch Data | Batch-first | Simple, cloud-based | Usage-based, affordable | SMBs needing simple batch replication to a warehouse |
| Talend | Primarily batch | Moderate (GUI, transformations) | Open-source + commercial licensing | Teams needing heavier transformations and governance workflows |

Have specific compliance, scaling, or deployment questions? Contact us and we’ll help map out the right MongoDB ETL setup for you.

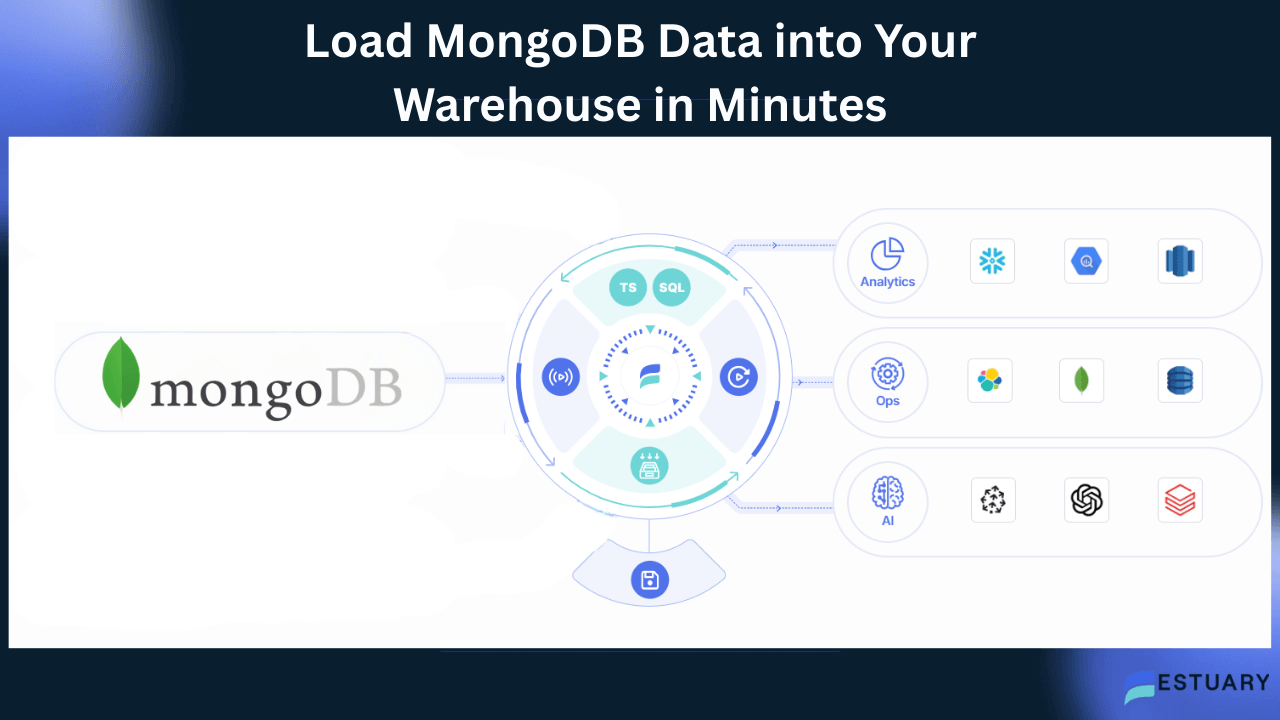

How to Load MongoDB Data into Your Warehouse in Minutes with Estuary

Step 1: Prerequisites

- Access to a MongoDB instance (self-hosted or Atlas).

- Credentials: database user, password, and connection URI (e.g., mongodb+srv://...).

- An Estuary account (sign up free).

Step 2: Create a MongoDB Capture

- In the Estuary dashboard, go to Sources → + New Capture.

- Select MongoDB from the connector list.

- Fill in the Endpoint Config fields:

  - Address → MongoDB URI (without credentials, e.g. mongodb://my-mongo.test?authSource=admin).

  - User → database username.

  - Password → database password.

  - Database (optional) → leave blank to discover all available DBs, or enter specific DB names.

- (Optional) Enable Capture Batch Collections in Addition to Change Stream Collections if you want to include views or time-series collections.

- (Optional) Adjust Default Batch Collection Polling Schedule (default = 24h).

👉 At this stage, Estuary will connect and discover your MongoDB collections.

Step 3: Backfill & Continuous Sync

- Estuary automatically performs a snapshot backfill of existing data.

- Simultaneously, it starts reading Change Streams (if available) so you don’t miss real-time updates during backfill.

- Once backfill completes, only incremental changes are streamed forward.

Step 4: Optional Transformations

- Navigate to Collections → New Transformation.

- Select one or more source collections.

- Choose a transformation language: SQL or TypeScript.

- Define your transformation (e.g., filtering, joins, field renaming).

- Save the derived collection — this becomes the pipeline output.

Step 5: Materialize Data to a Destination

- Go to Destinations → Create Materialization.

- Choose a warehouse or database destination (Snowflake, BigQuery, Databricks, ClickHouse, PostgreSQL, Elastic, etc.).

- Provide connection details for your target system.

- Map your Estuary collections to destination tables.

Estuary will now stream MongoDB changes into your warehouse in near real time, with exactly-once guarantees.

Key Takeaways

- MongoDB ETL pipelines must handle flexible document structures, nested JSON, and evolving schemas — challenges that traditional ETL tools are not designed for.

- Estuary is the Right-Time Data Platform built for document data, using MongoDB Change Streams for continuous CDC and exactly-once delivery to ensure downstream consistency.

- It simplifies complex MongoDB transformations, automatically detects schema drift, and provides enterprise security through Private Cloud and BYOC deployment options.

- Estuary connects MongoDB with modern destinations such as Snowflake, BigQuery, Databricks, ClickHouse, and PostgreSQL — combining historical backfills with real-time syncs.

- Fivetran offers managed batch ETL for MongoDB, but is limited in streaming capability and can become costly at scale.

- Stitch Data and MongoDB native tools are useful for lightweight workloads or developer-managed projects but require more manual effort.

- Talend remains best suited for enterprises with complex transformation and compliance needs, though it is heavier to maintain.

Conclusion

MongoDB ETL tools differ primarily in how they handle schema flexibility, nested documents, and incremental updates. Native MongoDB utilities can work well for developer-managed exports and lightweight use cases. Batch-first services are often sufficient for scheduled warehouse syncs. Enterprise ETL platforms provide deeper transformation and governance features for regulated or complex environments.

Teams that require lower-latency analytics or operational pipelines should prioritize tools that support Change Streams, reliable backfills, and safe schema evolution.

Rather than selecting a tool based only on feature lists, test one or two high-volume collections end-to-end. Compare freshness, nested data handling, error recovery, and total cost at your expected scale. The best MongoDB ETL tool is the one that fits your data model and operational requirements over time.

Next Step

Start a MongoDB ETL proof of concept by creating a free account in the Estuary dashboard, or contact our team to review your MongoDB architecture and data requirements.

FAQs

Can MongoDB ETL be done in real time?

How does Estuary compare to Airbyte for MongoDB ETL?

What are the challenges of DIY MongoDB ETL with custom scripts?

About the author

Team Estuary is a group of engineers, product experts, and data strategists building the future of real-time and batch data integration. We write to share technical insights, industry trends, and practical guides.