Why most CDC comparisons get it wrong

Most lists of CDC tools treat log-based CDC and incremental sync as if they were interchangeable options on a spectrum. They are not. They are fundamentally different approaches with different latency profiles, source database requirements, and failure modes. Choosing the wrong category does not mean picking a slightly slower tool; it means building the wrong kind of pipeline entirely.

This guide makes that distinction the organizing principle. The nine tools below are grouped by what they actually do, evaluated on criteria that matter in production, and compared honestly, including their real limitations and pricing realities. If you have already read three articles on this topic and felt like you were reading the same list with different logos, this one is different.

One important note on framing: CDC is a technique, not a product category. Some tools on this list are pure CDC engines. Others are broader data integration platforms that use CDC as one of several ingestion modes. Knowing which is which prevents a lot of architectural mistakes downstream.

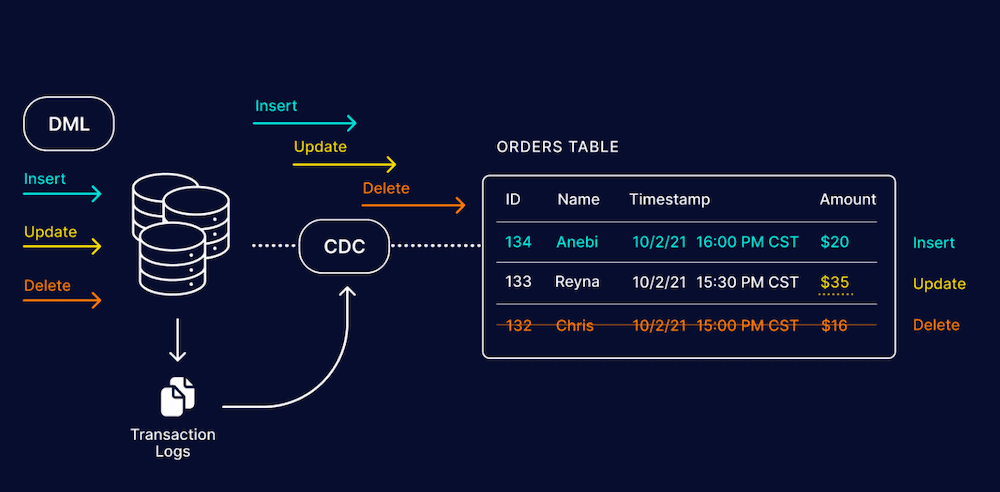

What is Change Data Capture (CDC)?

Change Data Capture is the process of identifying row-level changes in a source database -- inserts, updates, and deletes -- and propagating them to downstream systems without copying the entire table on every run.

The alternative is full table extraction: read everything, compare with the last snapshot, figure out what changed. That approach works until it doesn't, usually around the point where your tables hit a few hundred million rows and your batch window starts eating into business hours.
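For contrast, here is a minimal sketch of the full-extraction approach, assuming each snapshot fits in memory as a dict keyed by primary key (the `diff_snapshots` helper and the sample rows are hypothetical). Every run has to walk every row of both snapshots to infer the three kinds of change, which is why the cost tracks table size rather than the amount of change.

```python
from typing import Any

Row = dict[str, Any]


def diff_snapshots(
    previous: dict[int, Row], current: dict[int, Row]
) -> tuple[list[Row], list[Row], list[int]]:
    """Infer inserts, updates, and deletes by comparing two full snapshots.

    Both snapshots are keyed by primary key. The comparison touches every
    row, so its cost grows with table size, not with the number of changes.
    """
    inserts = [row for pk, row in current.items() if pk not in previous]
    updates = [
        row for pk, row in current.items() if pk in previous and previous[pk] != row
    ]
    deletes = [pk for pk in previous if pk not in current]
    return inserts, updates, deletes


yesterday = {1: {"id": 1, "status": "new"}, 2: {"id": 2, "status": "new"}}
today = {1: {"id": 1, "status": "paid"}, 3: {"id": 3, "status": "new"}}
inserts, updates, deletes = diff_snapshots(yesterday, today)
print(inserts)  # [{'id': 3, 'status': 'new'}]
print(updates)  # [{'id': 1, 'status': 'paid'}]
print(deletes)  # [2]
```

A side effect of this design: a row that changed and then changed back between two runs is invisible, because only the end states are compared.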

CDC solves this by reading what actually changed instead of inferring it after the fact. In log-based CDC (the gold standard), the tool reads the database transaction log directly. Every committed transaction is already recorded there, and the CDC engine replays those events to downstream systems, often within milliseconds of the original commit.
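Here is a toy model of that replay step, under loud assumptions: the log is a Python list of records tagged with a transaction ID, and the BEGIN/CHANGE/COMMIT/ROLLBACK record kinds are a simplification, not the on-disk format of any real WAL, binlog, or redo log. Changes are buffered per transaction and released only when the COMMIT record is reached, so downstream systems see whole transactions in commit order and never see work that was rolled back.

```python
from dataclasses import dataclass, field
from typing import Any


@dataclass
class LogRecord:
    lsn: int  # position in the log (monotonically increasing)
    txid: int  # transaction that wrote this record
    kind: str  # "BEGIN", "CHANGE", "COMMIT", or "ROLLBACK" (simplified model)
    change: dict[str, Any] = field(default_factory=dict)


def committed_changes(log: list[LogRecord]) -> list[dict[str, Any]]:
    """Return changes from committed transactions only, in commit order."""
    pending: dict[int, list[dict[str, Any]]] = {}
    out: list[dict[str, Any]] = []
    for rec in sorted(log, key=lambda r: r.lsn):
        if rec.kind == "BEGIN":
            pending[rec.txid] = []
        elif rec.kind == "CHANGE":
            pending.setdefault(rec.txid, []).append(rec.change)
        elif rec.kind == "COMMIT":
            # Release the whole transaction at its commit position.
            out.extend(pending.pop(rec.txid, []))
        elif rec.kind == "ROLLBACK":
            # Discard everything the aborted transaction wrote.
            pending.pop(rec.txid, None)
    return out


log = [
    LogRecord(1, 10, "BEGIN"),
    LogRecord(2, 10, "CHANGE", {"op": "insert", "id": 1}),
    LogRecord(3, 11, "BEGIN"),
    LogRecord(4, 11, "CHANGE", {"op": "insert", "id": 2}),
    LogRecord(5, 11, "ROLLBACK"),
    LogRecord(6, 10, "CHANGE", {"op": "update", "id": 1}),
    LogRecord(7, 10, "COMMIT"),
]
print(committed_changes(log))
# [{'op': 'insert', 'id': 1}, {'op': 'update', 'id': 1}]
```

Real engines differ in where this buffering happens (Postgres logical decoding, for example, already hands clients only committed transactions), but the ordering guarantee downstream consumers rely on is the same idea.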

The three CDC methods compared

| Method | How it works | Latency | Best for |
|---|---|---|---|
| Log-based CDC | Reads database transaction logs (WAL, binlog, redo log) directly | Sub-second to seconds | Real-time pipelines, high-volume, low source impact |
| Query/timestamp-based | Polls source tables using updated_at or primary key comparisons | Minutes (scheduled) | Simple syncs where latency tolerance is high |
| Trigger-based | Database triggers write changes to a shadow table; tool reads the shadow table | Near real-time | Legacy databases without accessible transaction logs |

When trigger-based CDC makes sense: it is mostly useful for legacy databases that do not expose transaction logs to external tools. The operational overhead is significant (triggers fire on every write, creating additional load) and you should migrate away from it as soon as the underlying database supports log access.
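A minimal sketch of the trigger-based pattern, using Python's built-in `sqlite3` module so it runs without a database server. The `orders` table, the `orders_changes` shadow table, and the trigger names are hypothetical. Note that every write to `orders` now performs a second write to the shadow table, which is the extra load described above.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.executescript(
    """
    CREATE TABLE orders (id INTEGER PRIMARY KEY, status TEXT NOT NULL);

    -- Shadow table: one row per change, in write order.
    CREATE TABLE orders_changes (
        seq INTEGER PRIMARY KEY AUTOINCREMENT,
        op TEXT NOT NULL,
        order_id INTEGER NOT NULL,
        status TEXT
    );

    -- Each trigger fires on every write and adds a second write.
    CREATE TRIGGER orders_ins AFTER INSERT ON orders BEGIN
        INSERT INTO orders_changes (op, order_id, status)
        VALUES ('insert', NEW.id, NEW.status);
    END;
    CREATE TRIGGER orders_upd AFTER UPDATE ON orders BEGIN
        INSERT INTO orders_changes (op, order_id, status)
        VALUES ('update', NEW.id, NEW.status);
    END;
    CREATE TRIGGER orders_del AFTER DELETE ON orders BEGIN
        INSERT INTO orders_changes (op, order_id, status)
        VALUES ('delete', OLD.id, NULL);
    END;
    """
)

conn.execute("INSERT INTO orders (id, status) VALUES (1, 'new')")
conn.execute("UPDATE orders SET status = 'paid' WHERE id = 1")
conn.execute("DELETE FROM orders WHERE id = 1")
conn.commit()


def read_changes(conn: sqlite3.Connection, after_seq: int) -> list[tuple]:
    """What the CDC tool does: read shadow-table rows past its last position."""
    return conn.execute(
        "SELECT seq, op, order_id, status FROM orders_changes "
        "WHERE seq > ? ORDER BY seq",
        (after_seq,),
    ).fetchall()


for change in read_changes(conn, after_seq=0):
    print(change)
# (1, 'insert', 1, 'new')
# (2, 'update', 1, 'paid')
# (3, 'delete', 1, None)
```

A production version would also need to prune shadow-table rows the CDC tool has already consumed, which is more write load on the source.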

Why teams switch to CDC (and what they give up with batch)

The honest version of this section is not just a list of benefits. Teams switch to CDC because batch pipelines have broken something important to them. Here are the real scenarios:

- Data freshness failures: a nightly ETL job means your analytics dashboard is always showing yesterday. Your fraud model is scoring transactions against 24-hour-old feature data. CDC closes that window to seconds.

- Database load problems: full table scans to detect changes hammer production databases, especially during business hours. Log-based CDC reads from the transaction log, which the database writes regardless, so the marginal load is small: mostly the cost of decoding and streaming the log, plus the disk needed to retain it until the consumer catches up.

- Scale breaking batch windows: a 4-hour ETL job that worked fine on 10GB tables breaks when the table hits 500GB. CDC pipelines do not get slower as tables grow because they only process what changed, not the whole table.

- Event-driven architecture adoption: teams building microservices or streaming analytics need a reliable change event stream, not a scheduled file. CDC is the standard way to turn a relational database into an event source.

What you give up: CDC is harder to operate than batch. You need to think about WAL retention limits, replication slot management, schema evolution, and delivery guarantees. The tools in this list handle varying amounts of that complexity for you, which is a core factor in the evaluation below.

How we evaluated these tools

The criteria below reflect what actually determines whether a CDC pipeline stays healthy in production, not just whether it passes a demo.

- CDC method accuracy: log-based CDC is the standard for real-time pipelines. Tools using incremental queries are noted clearly because the behavior difference is significant.

- End-to-end latency: measured from source commit to destination write, not just pipeline throughput. Sub-second, seconds, and minutes are meaningfully different for downstream use cases.

- Delivery guarantees: at-least-once delivery is the minimum. Exactly-once semantics matter for financial data, audit logs, and anything where duplicate processing has consequences.

- Operational complexity: how much do you need to know to keep it running? Kafka cluster management, connector offsets, and schema evolution are all real operational costs.

- Connector depth, not just count: a tool that lists 500 connectors but has shallow CDC support for your specific database version is worse than a tool with 20 connectors that are deeply tested.

- Pricing transparency: CDC tools that hide pricing behind sales calls are noted. Pricing that scales predictably with data volume is differentiated from pricing that can spike unexpectedly.

- Schema evolution handling: what happens when an upstream team adds a column, renames a field, or drops a table? Pipelines that break silently on DDL changes are a production risk.

The 9 best CDC tools in 2026

1. Estuary

Best for: teams that want real-time CDC, batch backfills, and streaming pipelines in a single managed platform without running Kafka or stream processors themselves

CDC method: Log-based (WAL/binlog) | Latency: Sub-second | Deployment: Fully managed, BYOC available

Estuary is a right-time data platform built around the idea that CDC pipelines and batch pipelines should not be separate systems requiring separate teams to operate. In practice, most organizations running Debezium for real-time CDC also run Airflow or dbt for historical backfills, and end up maintaining two codebases to solve one problem.

Estuary unifies those patterns. It captures changes from database transaction logs with sub-second latency and supports full historical backfills, replays, and scheduled batch ingestion through the same connector infrastructure. The platform is fully managed -- no Kafka cluster, no Connect workers, no offset management -- but it also offers private deployments and Bring Your Own Cloud (BYOC) options for teams with data residency or security requirements.

Under the hood, Estuary uses a persistent, append-only log called a collection as its central abstraction. Every change event is written to the collection first, which means downstream systems can read at whatever pace they can handle, and you can replay the full history of a pipeline without re-running CDC from scratch.
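The general pattern behind a log-first design can be sketched in a few lines. This is not Estuary's API; the `ChangeLog` class and its methods are hypothetical and only illustrate the idea: writers only append, each reader keeps its own offset, and a replay is just a reader rewinding to offset zero without touching the source database.

```python
from typing import Any


class ChangeLog:
    """Hypothetical append-only log with independent reader offsets."""

    def __init__(self) -> None:
        self._events: list[dict[str, Any]] = []
        self._offsets: dict[str, int] = {}

    def append(self, event: dict[str, Any]) -> int:
        """Append an event and return its offset. Events are never modified."""
        self._events.append(event)
        return len(self._events) - 1

    def read(self, reader: str, max_events: int = 100) -> list[dict[str, Any]]:
        """Return the next batch for one reader and advance only its offset."""
        start = self._offsets.get(reader, 0)
        batch = self._events[start : start + max_events]
        self._offsets[reader] = start + len(batch)
        return batch

    def replay(self, reader: str) -> None:
        """Rewind one reader to the beginning of the log."""
        self._offsets[reader] = 0


log = ChangeLog()
for i in range(5):
    log.append({"id": i, "op": "insert"})

fast = log.read("warehouse", max_events=5)  # reads everything
slow = log.read("search_index", max_events=2)  # reads at its own pace
log.replay("warehouse")
again = log.read("warehouse", max_events=5)  # full history, no new capture
print(len(fast), len(slow), len(again))  # 5 2 5
```

The slow reader is unaffected by the fast reader's progress or replay, which is the property that lets destinations consume at different speeds.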

Where Estuary performs well

- Exactly-once delivery into supported destinations, which matters for financial data, audit trails, and any pipeline where downstream deduplication is painful to implement correctly

- Schema evolution: Estuary detects upstream schema changes and handles column additions and type changes without requiring manual intervention or pipeline restarts

- Connector coverage that goes beyond databases -- S3, GCS, Kafka, Salesforce, and major SaaS systems alongside MySQL, Postgres, MongoDB, SQL Server, and others

- No infrastructure to operate. The operational overhead that makes Debezium hard to scale (Kafka cluster sizing, connector restarts, offset management) does not exist in Estuary's managed offering

- Free tier available at estuary.dev/register, with usage-based pricing for production workloads. No sales call required to get started.

Honest limitations

- CDC must be enabled on source databases (logical replication for Postgres, binlog for MySQL). If your DBA team restricts log access, Estuary cannot work around that

- Not a general-purpose message broker. If you need to publish arbitrary application events alongside database change events, you will still need Kafka or a similar system for the application event side

- The collection abstraction requires some conceptual adjustment if your team thinks primarily in terms of tables and jobs rather than streams

Estuary in production: what real teams report

★★★★★ 5/5 on G2: "Set up a real-time CDC pipeline for a database on a secured network in minutes. The data arrives at the destination in real-time, with schema changes properly handled. Running without issues for months." -- Verified User, Consulting (G2, Feb 2025)

Case study: Surface Ventures. A VC firm needed sub-second analytics on financial portfolio data moving from Neon Postgres into MotherDuck, including deeply nested joins. Results: production in 2 days, sub-second end-to-end latency, and 10x lower cost vs. alternatives.

"When I enter new data, I can immediately see the impact of changes on investments and portfolios." -- Gyan Kapur, Co-Managing Partner

Estuary's free tier covers 2 tasks and up to 10GB of data per month. For most teams evaluating CDC, this is enough to run a full proof of concept against a production database before committing to paid infrastructure.

2. Debezium

Best for: engineering teams running Kafka who want open-source, log-based CDC with full control over every component

CDC method: Log-based (WAL/binlog/redo) | Latency: Sub-second | Deployment: Self-hosted (Kafka required)

Debezium is the most widely deployed open-source CDC engine in the world. It runs as a Kafka Connect plugin, reads directly from database transaction logs, and publishes ordered change events to Kafka topics. Downstream consumers (Kafka Streams, Flink, Spark, or custom consumers) read those topics at their own pace.

The Debezium model gives you something important: the change stream and the processing layer are completely decoupled. You can add or remove consumers, replay events from any offset still within topic retention, and build completely different downstream pipelines against the same CDC stream. Few CDC tools give you this level of architectural flexibility.
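Each of those consumers ultimately applies change events to some target state. The sketch below assumes Kafka Connect's JSON converter, where each message value is the Debezium envelope: a `before` row, an `after` row, and an `op` code (`c` create, `u` update, `d` delete, `r` snapshot read), optionally wrapped in a `schema`/`payload` pair. The in-memory `table` dict and the `id` key column are hypothetical stand-ins for a real destination, and fields such as `source` and `ts_ms` are omitted for brevity.

```python
import json
from typing import Any, Optional


def apply_debezium_event(
    table: dict[Any, dict[str, Any]],
    raw_value: Optional[bytes],
    key_field: str = "id",
) -> None:
    """Apply one Debezium-style change event (JSON) to an in-memory table."""
    if raw_value is None:
        return  # tombstone emitted after a delete, used for topic compaction
    event = json.loads(raw_value)
    # With schemas enabled, the envelope is wrapped as {"schema", "payload"}.
    payload = event.get("payload", event)
    op = payload["op"]
    if op in ("c", "r", "u"):
        row = payload["after"]
        table[row[key_field]] = row  # upsert the new row image
    elif op == "d":
        table.pop(payload["before"][key_field], None)
    else:
        raise ValueError(f"unhandled op: {op!r}")


table: dict[Any, dict[str, Any]] = {}
messages = [
    b'{"before": null, "after": {"id": 1, "status": "new"}, "op": "c"}',
    b'{"before": {"id": 1, "status": "new"}, "after": {"id": 1, "status": "paid"}, "op": "u"}',
    b'{"before": {"id": 1, "status": "paid"}, "after": null, "op": "d"}',
    None,  # tombstone
]
for msg in messages[:2]:
    apply_debezium_event(table, msg)
print(table)  # {1: {'id': 1, 'status': 'paid'}}
for msg in messages[2:]:
    apply_debezium_event(table, msg)
print(table)  # {}
```

Raising on unknown op codes is a deliberate choice: an unexpected event type stops the consumer loudly rather than being silently skipped.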

Debezium supports MySQL (binlog), PostgreSQL (logical decoding via pgoutput or decoderbufs), SQL Server (CDC tables), Oracle (LogMiner or XStream), MongoDB (change streams), Db2 (ASN capture tables), and several others. Connector maturity varies: the MySQL and Postgres connectors are production-hardened across thousands of deployments, while the Oracle connector works but requires more careful configuration.

The real operational picture

This is the part that vendor-neutral guides often gloss over. Running Debezium in production is not just installing a JAR file. Here is what you are actually signing up for:

- Kafka cluster management: Debezium depends on Kafka Connect, which depends on Kafka. You need to size this correctly, manage topic retention, handle broker failures, and plan for upgrades. Managed Kafka (Confluent Cloud, Amazon MSK, Aiven) reduces this but does not eliminate it.

- Replication slot hygiene (Postgres): inactive replication slots will hold WAL files indefinitely, eventually filling your disk. Monitoring pg_replication_slots is not optional; it is a production requirement.

- Schema registry: Debezium change events include the schema of the row that changed. As schemas evolve, managing those schema versions via Confluent Schema Registry or Apicurio adds operational surface area.

- Connector restarts and offset recovery: when a connector fails and restarts, it needs to resume from the correct offset without creating duplicates or missing events. Testing this failure mode before it happens in production is critical.
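The restart failure mode is easiest to see in a small simulation. The sketch assumes a consumer that writes each event to the destination and only then records its offset, the usual at-least-once ordering; `run_consumer` and its `crash_after_write` fault-injection parameter are hypothetical. A crash between the write and the offset save means the restarted consumer delivers that event again.

```python
from typing import Optional


def run_consumer(
    events: list[str],
    committed_offset: int,
    destination: list[str],
    crash_after_write: Optional[int] = None,
) -> int:
    """Process events from committed_offset; return the last saved offset.

    The write happens before the offset is saved, so a crash in between
    causes the same event to be written again after restart.
    """
    offset = committed_offset
    for position in range(committed_offset, len(events)):
        destination.append(events[position])  # 1. write to the destination
        if crash_after_write == position:
            return offset  # simulated crash: new offset never saved
        offset = position + 1  # 2. save the offset
    return offset


events = ["e0", "e1", "e2", "e3"]
dest: list[str] = []
saved = run_consumer(events, 0, dest, crash_after_write=1)  # dies after writing e1
saved = run_consumer(events, saved, dest)  # restart from the saved offset
print(dest)  # ['e0', 'e1', 'e1', 'e2', 'e3']
```

Saving the offset before the write would flip the trade-off: no duplicates, but the event in flight during a crash would be lost.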

None of this is a reason to avoid Debezium. It is a reason to staff for it appropriately. Teams with strong Kafka expertise consistently run very reliable Debezium pipelines. Teams that underestimate the operational requirements consistently struggle.

Honest limitations

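- The standard deployment requires Kafka and Kafka Connect infrastructure. Debezium Server and the embedded engine can run without Kafka, but much of the documentation and ecosystem tooling assumes Kafka Connect

- In the Kafka Connect deployment, Debezium does not handle delivery to destinations; it only produces to Kafka topics. You need a separate Kafka consumer or Kafka Connect sink connector to move data to a warehouse or database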

- No built-in UI, monitoring, or alerting. You build observability yourself or use Kafka management tools

- Free, but total cost of ownership, including infrastructure and engineering time, is often higher than managed alternatives at scale

3. Fivetran (powered by HVR)

Best for: enterprises that need the widest possible connector coverage and are willing to pay for a fully managed, governance-rich platform

CDC method: Log-based via HVR acquisition | Latency: Sub-second to minutes depending on destination | Deployment: Fully managed SaaS

Fivetran acquired HVR in 2021, which gave it enterprise-grade log-based CDC capabilities on top of its already large connector library. HVR was built specifically for high-volume, low-latency database replication -- it reads directly from Oracle redo logs, SQL Server CDC tables, and other log mechanisms and delivers to targets with minimal lag.

The result is a platform that covers both the "I need to pull data from our Salesforce instance" use case and the "I need sub-second CDC from Oracle" use case through a single vendor. For organizations managing dozens of data sources, that consolidation has real value.

Where Fivetran earns its price

- More than 500 pre-built connectors, many with CDC support, covering a range of databases and SaaS applications that no other single vendor matches

- Automated schema migration: Fivetran detects upstream schema changes and applies them at the destination automatically, reducing the most common source of silent pipeline failures

- Strong data governance features including column-level encryption, access controls, and audit logging, which matter for compliance-sensitive industries

- 99.9% uptime SLA with 24/7/365 support and a global team. For teams without deep data engineering expertise in-house, this support quality is genuinely valuable

The pricing reality

Fivetran uses a Monthly Active Rows (MAR) pricing model. On low-volume pipelines, the cost is manageable. On high-change-rate databases -- say, a payments table with millions of transactions per day -- MAR costs can grow quickly in ways that are hard to predict before you instrument your actual change volume.

Evaluate Fivetran with your actual change rates, not estimated ones. Request a cost estimate based on a one-week sample of your production change log before signing a contract.
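MAR is hard to guess because it counts distinct rows, not change events. The sketch below assumes MAR counts each distinct primary key changed in a table once per month (check Fivetran's current pricing documentation for the exact definition); the `distinct_rows_changed` helper and the sample events are hypothetical. A hot row updated a thousand times counts once, while every new payment counts separately, which is why a sample of your real change log beats a raw event count.

```python
from collections import defaultdict


def distinct_rows_changed(events: list[tuple[str, int]]) -> dict[str, int]:
    """Count distinct primary keys changed per table in a sample of change events.

    Each event is a (table, primary_key) pair taken from your own change log.
    """
    keys: dict[str, set[int]] = defaultdict(set)
    for table, pk in events:
        keys[table].add(pk)
    return {table: len(pks) for table, pks in keys.items()}


# Hypothetical week: 3 new payments, plus one account row updated 1,000 times.
sample = [("payments", 101), ("payments", 102), ("payments", 103)]
sample += [("accounts", 7)] * 1000

week = distinct_rows_changed(sample)
print(len(sample), week)  # 1003 {'payments': 3, 'accounts': 1}

# If weeks look alike, scaling weekly distinct rows by 30/7 gives a rough
# upper bound, since rows that recur across weeks count once per month.
monthly_upper_bound = {t: round(n * 30 / 7) for t, n in week.items()}
print(monthly_upper_bound)  # {'payments': 13, 'accounts': 4}
```

For insert-heavy tables like payments the linear estimate is close; for tables where the same rows are updated every week, the true monthly figure sits well below it.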

Honest limitations

- Pricing can become expensive at high change volumes due to MAR model; understand your change rates before committing

- Limited ability to customize connector behavior or add proprietary transformation logic within the pipeline

- Some connectors have meaningful latency even with HVR backing -- the sub-second claim applies to the HVR-powered database connectors, not all 500+

4. Qlik Replicate

Best for: enterprises running high-volume, mission-critical replication across heterogeneous database environments including mainframes, SAP, and Oracle

CDC method: Log-based | Latency: Seconds | Deployment: Managed or self-hosted

Qlik Replicate (formerly Attunity Replicate) is one of the oldest and most battle-tested enterprise CDC platforms available. It was built from the ground up for the specific problem of replicating data across different database technologies at high volume, with the operational reliability requirements of a Fortune 500 data center.

Where Qlik Replicate differentiates itself is in the breadth of legacy and enterprise source support. It handles mainframe sources (IBM Db2 for z/OS, IMS), SAP applications (via HANA, ABAP, and other extraction methods), and legacy enterprise databases that other tools often struggle with. If your organization has a heterogeneous mix of enterprise systems built up over decades, Qlik Replicate is worth evaluating seriously.

Where it fits

- Large-scale Oracle, SQL Server, DB2, and SAP replication where you need very high throughput and proven enterprise support

- Regulated industries (banking, healthcare, insurance) where the tool's audit trail, change logging, and support history matter for compliance

- Organizations already in the Qlik ecosystem that want a CDC solution integrated with Qlik's broader data management and analytics platform

Honest limitations

- Commercial pricing is not publicly disclosed; expect enterprise-level costs

- Limited built-in transformation capabilities; complex data reshaping happens downstream

- Heavier to set up and operate than modern SaaS CDC platforms; benefits from experienced DBA involvement

- Less suited for teams that need rapid iteration or self-service pipeline creation

5. Oracle GoldenGate (OCI GoldenGate)

Best for: large enterprises running Oracle database environments who need mission-critical CDC with high availability and disaster recovery support

CDC method: Log-based (redo logs) | Latency: Seconds | Deployment: Managed (OCI) or self-hosted

GoldenGate has been doing log-based database replication longer than most of the other tools on this list have existed. It was the standard for Oracle-to-Oracle replication in large enterprises through the 2000s and 2010s, and OCI GoldenGate extends that to a managed cloud service with broader database support.

The tool's core strength is in scenarios where data correctness and uptime are non-negotiable. Active-active replication (both source and target accept writes and stay in sync), bidirectional sync, and near-zero downtime migrations are all production-tested capabilities. The complexity of setting these up is high, but for organizations that genuinely need them, there is no cleaner solution.

The Oracle-centricity trade-off

GoldenGate works best when Oracle is either the source or the target. The non-Oracle connectors (MySQL, PostgreSQL, SQL Server) work but have historically received less investment and testing depth than the Oracle-to-Oracle path. If you are not running Oracle databases, start with a different tool.

Honest limitations

- Among the highest licensing costs in the CDC market; typically requires an Oracle support contract alongside the GoldenGate license

- Requires experienced Oracle DBAs to configure and maintain; not self-service

- The best capabilities are Oracle-to-Oracle; non-Oracle use cases have more rough edges

- Modern SaaS CDC tools have narrowed GoldenGate's advantage significantly for teams not already invested in the Oracle ecosystem

6. Striim

Best for: enterprises that need CDC and real-time stream processing in a single runtime, without assembling a separate Flink or Spark cluster for in-flight transformations

CDC method: Log-based + streaming | Latency: Sub-second to seconds | Deployment: Managed cloud or self-hosted

Striim was founded by several engineers who worked on Oracle GoldenGate, and that heritage shows. The CDC capture engine is mature and reliable. What differentiates Striim from pure CDC tools is the built-in stream processing layer: you can filter rows before they reach the destination, join multiple change streams, enrich events with reference data lookups, and aggregate in-flight -- all within Striim, without routing the data through a separate Flink or Spark job.

This integration has real value for teams building operational analytics or event-driven architectures where you need to transform data close to the source before it lands in a warehouse or a downstream system. The alternative -- raw CDC into Kafka, then Flink for processing, then a sink to the destination -- involves three separate systems with three separate failure modes.
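To make "filter and enrich in flight" concrete, here is a plain-Python sketch of that kind of step. It is not Striim's query language; the event shape, the `customers` reference table, and the `min_amount` threshold are hypothetical, and the stream is assumed to contain only inserts of payment rows. The point is where the logic runs: on each change event before it reaches the destination, rather than in a separate Flink or Spark job.

```python
from collections.abc import Iterable, Iterator
from typing import Any

# Hypothetical reference data, e.g. loaded from a dimension table.
customers = {1: {"region": "EU"}, 2: {"region": "US"}}


def transform(
    events: Iterable[dict[str, Any]], min_amount: float
) -> Iterator[dict[str, Any]]:
    """Filter and enrich insert events one at a time, before delivery."""
    for event in events:
        row = event["after"]
        if row["amount"] < min_amount:
            continue  # filter: drop small payments before they reach the destination
        region = customers.get(row["customer_id"], {}).get("region", "UNKNOWN")
        yield {**row, "region": region}  # enrich with a reference lookup


stream = [
    {"after": {"id": 1, "customer_id": 1, "amount": 250.0}},
    {"after": {"id": 2, "customer_id": 2, "amount": 5.0}},
    {"after": {"id": 3, "customer_id": 9, "amount": 99.0}},
]
for out in transform(stream, min_amount=50.0):
    print(out)
```

Because `transform` is a generator, each event is processed as it arrives instead of waiting for a batch, which mirrors how a streaming runtime applies the same logic.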

Where Striim fits and where it does not

Striim is a strong fit when transformations are complex and latency-sensitive. If your CDC pipeline is mostly "replicate these tables as-is to Snowflake", Striim's processing layer adds cost without adding value. Use a simpler managed CDC tool for that pattern.

Honest limitations

- Commercial pricing; costs are not publicly listed and scale with data volume and feature usage

- Striim's SQL-based streaming query language has a learning curve, particularly for teams coming from batch ETL backgrounds

- Historical reprocessing is less flexible than on platforms designed around event log replay

- Self-hosted deployments require JVM-based infrastructure management

7. AWS Database Migration Service (DMS)

Best for: AWS-native teams that want managed CDC without running Kafka, primarily for database migrations or ongoing replication into AWS analytics services

CDC method: Log-based | Latency: Seconds to minutes | Deployment: Fully managed (AWS only)

AWS DMS is the path of least resistance for CDC within an AWS-first architecture. It captures changes from database transaction logs and delivers them to Amazon Redshift, S3, RDS databases, DynamoDB, Kinesis Data Streams, and other supported targets -- all without deploying any infrastructure yourself.

The setup experience is genuinely simple for standard use cases. If you are replicating a MySQL RDS instance to Redshift, you can have a working CDC pipeline in an afternoon. AWS handles the instance management, failover, and upgrades.

The limitations that matter in practice

AWS DMS is a replication tool, not a data integration platform. It does not support complex transformations during replication. The latency on some source/target combinations can drift into the minutes range during high-volume periods. And the tool is firmly tied to AWS -- if you have data sources on-premises or in another cloud, the integration path is more complex.

A pattern worth noting: AWS DMS works well as a bridge into Kinesis Data Streams, where you can then use more capable stream processing (Amazon Managed Service for Apache Flink, formerly Kinesis Data Analytics, or Flink on EMR) for transformations before landing in Redshift or S3. This is often a better architecture than relying on DMS alone for complex pipelines.

Honest limitations

- Primarily AWS ecosystem only; multi-cloud or on-prem-first architectures are awkward

- Latency can degrade under high load; DMS instances need to be right-sized for your change rate

- Limited transformation capabilities -- designed for replication, not enrichment or reshaping

- Pricing is per replication instance hour, which is predictable but can be more expensive than usage-based alternatives at low change volumes

8. Airbyte

Best for: teams that need broad SaaS and database connector coverage and can tolerate batch-default behavior for most sources in exchange for a rich open-source connector ecosystem

CDC method: Log-based via embedded Debezium for supported databases; incremental batch for others | Latency: Minutes (most connectors) | Deployment: Self-hosted or Airbyte Cloud

Airbyte started as an ELT platform and has grown into one of the most widely used open-source data integration tools. For CDC specifically, Airbyte uses Debezium as an embedded library for its database connectors, which means the underlying change capture mechanism is the same battle-tested engine -- but Airbyte manages the connector lifecycle and Kafka is not required.

The differentiation is connector breadth. Airbyte's community-maintained connector catalog covers SaaS applications, databases, and file systems that purpose-built CDC tools often skip. If you need to replicate data from a combination of a Postgres database, a Salesforce instance, and a HubSpot account into Snowflake, Airbyte handles all three through one platform.

The CDC caveat

Airbyte's CDC support is real but uneven. The Postgres and MySQL CDC connectors are well-tested and use true log-based capture. Many other connectors use incremental refresh (scheduled queries with a cursor), which is batch behavior regardless of how frequently you run it. If log-based CDC is a hard requirement for a specific source, verify that Airbyte's connector for that source actually uses it before committing.

Honest limitations

- Batch-default behavior for most non-database connectors; sub-minute latency is not the default experience

- Self-hosted Airbyte requires meaningful infrastructure management (Docker or Kubernetes); Airbyte Cloud removes that burden

- Complex stream processing is not supported within Airbyte pipelines; transformations happen at the destination

- The open-source version and the cloud version have meaningful feature gaps; enterprise features require Airbyte Cloud

9. Skyvia

Best for: small teams or non-technical users who need simple, scheduled data synchronization between systems and where latency of minutes is acceptable

CDC method: Incremental sync (not true log-based CDC) | Latency: Minutes (scheduled) | Deployment: Fully managed SaaS

Skyvia is included in this list with a clear caveat: it is not a log-based CDC tool. It detects data changes by querying source tables on a schedule, comparing results to the previous run using timestamps or primary keys, and syncing the differences. This is incremental replication, not event-driven change capture.
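A minimal sketch of cursor-based incremental sync in general (not Skyvia's implementation), using Python's `sqlite3` module and a hypothetical `customers` table with an `updated_at` column. It shows two properties of the approach: each run sees only rows whose timestamp moved past the saved cursor, and a hard delete never shows up at all, because the deleted row is no longer there to be queried.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.execute(
    "CREATE TABLE customers (id INTEGER PRIMARY KEY, name TEXT, updated_at INTEGER)"
)
conn.executemany(
    "INSERT INTO customers VALUES (?, ?, ?)",
    [(1, "Ada", 100), (2, "Grace", 105)],
)


def poll(conn: sqlite3.Connection, cursor: int) -> tuple[list[tuple], int]:
    """Fetch rows changed since the cursor and return them with the new cursor."""
    rows = conn.execute(
        "SELECT id, name, updated_at FROM customers "
        "WHERE updated_at > ? ORDER BY updated_at",
        (cursor,),
    ).fetchall()
    new_cursor = rows[-1][2] if rows else cursor
    return rows, new_cursor


first, cursor = poll(conn, cursor=0)  # initial run picks up both rows
conn.execute("UPDATE customers SET name = 'Ada L.', updated_at = 110 WHERE id = 1")
conn.execute("DELETE FROM customers WHERE id = 2")  # hard delete
second, cursor = poll(conn, cursor)
print(first)  # [(1, 'Ada', 100), (2, 'Grace', 105)]
print(second)  # [(1, 'Ada L.', 110)]; the delete of id 2 is invisible
```

The strict `>` comparison can also skip rows that share the cursor's timestamp but commit after a poll; a common mitigation is to re-read a small overlap window and deduplicate.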

That said, Skyvia fills a genuine need. Not every data synchronization problem requires sub-second latency. If you need to keep a reporting database roughly in sync with an operational system and can tolerate 15-minute delays, Skyvia's no-code setup and affordable pricing make it a reasonable choice. The setup experience is significantly simpler than any of the other tools on this list.

When to use Skyvia and when not to

Use Skyvia when: the data is not time-critical, the source database does not expose transaction logs, you need a quick integration without engineering resources, or the volume is low enough that scheduled queries do not create meaningful load.

Do not use Skyvia when: you need sub-second or even sub-minute data freshness, you are building event-driven pipelines, or your source tables are large enough that full scans would create performance problems.

Honest limitations

- Not true CDC: changes are detected by queries, not from transaction logs

- Latency depends on schedule frequency; true real-time is not achievable

- Does not guarantee ordering of changes the way log-based tools do

- Limited scalability for very high-volume or high-change-rate tables

Full comparison: 9 CDC tools side by side

| Tool | CDC method | Latency | Deployment | Key connectors (sources and destinations) | Pricing model | Best for |
|---|---|---|---|---|---|---|
| Estuary | Log-based + batch | Sub-second | Fully managed; BYOC available | MySQL, Postgres, MongoDB, S3, Snowflake, Redshift, BigQuery | $0 free tier; usage-based | Unified CDC + batch; exactly-once; low ops overhead |
| Debezium | Log-based | Sub-second | Self-hosted (Kafka) | MySQL, Postgres, SQL Server, Oracle, MongoDB, Db2 | Free (open source) | Open-source engine; Kafka-native; full control |
| Fivetran (HVR) | Log-based | Sub-second to minutes | Fully managed SaaS | 500+ connectors; Oracle, SAP, SQL Server | Per MAR; can escalate quickly | Widest connector catalog; strong governance; enterprise SLAs |
| Qlik Replicate | Log-based | Seconds | Managed or self-hosted | Oracle, SQL Server, DB2, SAP, Snowflake, Redshift, BigQuery | Commercial (opaque) | High-volume enterprise replication; strong monitoring |
| Oracle GoldenGate | Log-based | Seconds | Managed (OCI) or self-hosted | Oracle-first; SQL Server, MySQL, Postgres, others | Commercial (high) | Mission-critical Oracle environments; HA and DR scenarios |
| Striim | Log-based + streaming | Sub-second to seconds | Managed or self-hosted | Databases, cloud warehouses, messaging | Commercial (opaque) | CDC + in-flight stream processing; enterprise reliability |
| AWS DMS | Log-based | Seconds to minutes | Fully managed (AWS only) | RDS, Aurora, Redshift, S3, DynamoDB, others | Per instance/hour | Simple managed CDC within AWS; good for migrations |
| Airbyte | Log-based (via Debezium) + batch | Minutes (batch default) | Self-hosted or managed cloud | 300+ connectors; broad SaaS and DB coverage | Open source free; cloud usage-based | Wide connector coverage; good for SaaS sources; limited real-time |
| Skyvia | Incremental (not log-based) | Minutes (scheduled) | Fully managed SaaS | Databases, SaaS apps, files | Freemium; affordable tiers | Simple no-code sync; non-critical use cases where minutes of latency are acceptable |

How to choose the right CDC tool for your stack

The comparison table above tells you what each tool does. This section tells you which one to pick based on your actual situation.

Step 1: Determine whether you need true log-based CDC

Ask your DBA whether your source database exposes its transaction log to external tools. For Postgres, this means logical replication must be enabled (wal_level = logical). For MySQL, binary logging must be on (log_bin = ON). For Oracle, the database must run in ARCHIVELOG mode with supplemental logging enabled, and you need either LogMiner access or XStream configured.
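These checks are easy to script. The sketch below assumes you have already fetched the relevant settings (for example with `SHOW wal_level;` in Postgres and `SHOW VARIABLES LIKE 'log_bin';` in MySQL) into a dict of strings; the `preflight` function and its messages are hypothetical. It covers only the Postgres and MySQL settings named in this guide, including the binlog settings discussed in the configuration section below; Oracle checks are left out.

```python
def preflight(engine: str, settings: dict[str, str]) -> list[str]:
    """Return problems that would block log-based CDC (empty list if none)."""
    required = {
        "postgres": {"wal_level": "logical"},
        "mysql": {"log_bin": "ON", "binlog_format": "ROW", "binlog_row_image": "FULL"},
    }
    if engine not in required:
        raise ValueError(f"no checks defined for {engine!r}")
    problems = []
    for name, expected in required[engine].items():
        actual = settings.get(name)
        # Compare case-insensitively; a missing setting is always a problem.
        if actual is None or actual.upper() != expected.upper():
            problems.append(f"{name} is {actual!r}, expected {expected!r}")
    return problems


print(preflight("postgres", {"wal_level": "replica"}))
# ["wal_level is 'replica', expected 'logical'"]
print(preflight("mysql", {"log_bin": "ON", "binlog_format": "ROW", "binlog_row_image": "FULL"}))
# []
```

Running a check like this against each source before choosing a tool tells you early whether you are in log-based territory or falling back to incremental sync.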

If log access is available, you should use a log-based CDC tool. If it is not available and cannot be enabled (common in managed database services with restricted configurations or in legacy environments), then incremental sync tools or trigger-based approaches are your fallback options.

Step 2: Decide on managed versus self-hosted

Self-hosted CDC (Debezium) gives you full control and no ongoing licensing costs, but requires engineering bandwidth to operate. Managed CDC (Estuary, Fivetran, AWS DMS, Skyvia) trades control for operational simplicity. The right answer depends on your team's capacity, not on which approach is theoretically better.

A practical heuristic: if your team does not already run Kafka, starting with a managed CDC platform is almost always the right call. The operational complexity of running Kafka for the first time while simultaneously building CDC pipelines is a significant distraction from actually solving the business problem.

Step 3: Match the tool to your latency requirement

Be specific about what "real-time" means for your use case. Sub-second latency is necessary for fraud detection, live inventory systems, and operational dashboards that business users refresh continuously. Seconds-range latency is fine for most analytics pipelines. Minutes-range is acceptable for data warehouse syncs where dbt runs on an hourly schedule anyway, and overnight reporting can tolerate far more.

Over-engineering on latency is expensive. Do not pay for sub-second CDC infrastructure if your downstream consumers check for new data every five minutes.
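A back-of-the-envelope way to see this: under a simple additive model (an assumption that ignores query time), the worst-case age of data a consumer sees is the pipeline latency plus one full polling interval. The `worst_case_staleness_s` helper is hypothetical.

```python
def worst_case_staleness_s(pipeline_latency_s: float, consumer_poll_interval_s: float) -> float:
    """Worst-case data age at read time under a simple additive model.

    A change lands after pipeline_latency_s, then may wait up to one full
    poll interval before the downstream consumer next checks for new data.
    """
    return pipeline_latency_s + consumer_poll_interval_s


# Sub-second CDC vs. a 30-second pipeline, both read every 5 minutes.
fast = worst_case_staleness_s(0.5, 300)
slower = worst_case_staleness_s(30, 300)
print(fast, slower)  # 300.5 330
```

Here, cutting pipeline latency from 30 seconds to half a second improves worst-case freshness by only about 9 percent (330 s to 300.5 s), because the polling interval dominates.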

Quick decision guide

| Your situation | Condition | Recommended tool |
|---|---|---|
| Sub-second CDC to a cloud warehouse, managed | You want zero Kafka ops | Estuary |
| Open-source, Kafka already in stack | You need full control, no vendor | Debezium |
| 500+ SaaS and DB connectors, enterprise governance | MAR-based pricing is acceptable | Fivetran (HVR) |
| Heavy Oracle or SAP environment, enterprise SLAs | You have budget for commercial licensing | Qlik Replicate or GoldenGate |
| CDC + real-time stream processing in one tool | You need in-flight filtering and joins | Striim |
| Everything on AWS, simple migration CDC | AWS lock-in is fine | AWS DMS |
| Wide SaaS source coverage, batch is acceptable | Sub-minute latency not required | Airbyte |
| Small team, non-critical sync, no-code preferred | Latency of minutes is fine | Skyvia |

Getting CDC right: the configuration details that matter

These are the things that trip up most teams in their first production CDC deployment, regardless of which tool they use.

Postgres: wal_level and replication slots

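Set wal_level = logical in postgresql.conf before enabling CDC; the change only takes effect after a server restart. On managed Postgres services you set it indirectly: rds.logical_replication = 1 in the parameter group on RDS and Aurora, or the cloudsql.logical_decoding flag on Cloud SQL. Either way, plan for an instance restart.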

Every log-based CDC tool that connects to Postgres reads changes through a replication slot. Monitor pg_replication_slots and pg_stat_replication regularly. An inactive slot holds WAL files indefinitely. If your CDC consumer goes offline for an extended period and you do not clean up the slot, you risk filling the disk on your Postgres primary.
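The monitoring advice above comes down to one number per slot: how many bytes of WAL it forces the primary to keep. Postgres can compute this server-side with pg_wal_lsn_diff(pg_current_wal_lsn(), restart_lsn). The sketch below does the same arithmetic in Python on the text form of the LSNs, which is two hexadecimal halves of a 64-bit position written X/Y. That keeps the alert logic easy to test. The 10 GiB threshold, slot names and function names are hypothetical, so size the threshold to your free disk.

```python
# Query to collect the rows below (Postgres 10+); run it with any client library.
SLOT_QUERY = """
SELECT slot_name, active, restart_lsn::text, pg_current_wal_lsn()::text
FROM pg_replication_slots
"""

GIB = 1024 ** 3


def lsn_to_int(lsn: str) -> int:
    """Convert a text LSN such as '16/B374D848' into a 64-bit byte position."""
    high, low = lsn.split("/")
    return (int(high, 16) << 32) | int(low, 16)


def risky_slots(rows, max_retained_bytes: int = 10 * GIB) -> list:
    """Flag slots that force the primary to retain more WAL than the threshold.

    Each row is (slot_name, active, restart_lsn, current_lsn), matching SLOT_QUERY.
    Returns (slot_name, retained_bytes, active) tuples.
    """
    flagged = []
    for slot_name, active, restart_lsn, current_lsn in rows:
        if restart_lsn is None:
            continue  # no restart_lsn: slot has not reserved WAL yet (or was invalidated)
        retained = lsn_to_int(current_lsn) - lsn_to_int(restart_lsn)
        if retained > max_retained_bytes:
            flagged.append((slot_name, retained, active))
    return flagged


# Example rows: one healthy slot, one abandoned slot from a stopped connector.
rows = [
    ("cdc_orders", True, "16/B3000000", "16/B4000000"),
    ("old_connector", False, "10/0", "16/B4000000"),
]
for name, retained, active in risky_slots(rows):
    print(f"{name}: {retained / GIB:.1f} GiB retained, active={active}")
```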

MySQL: binary logging and row format

Ensure log_bin = ON and binlog_format = ROW in your MySQL configuration. Statement-based and mixed-format binary logs do not support CDC reliably because they do not record the actual row values for all statement types.

Set binlog_row_image = FULL (the MySQL default) so that the before and after images of every changed row include all columns. With MINIMAL, the binary log records only the columns needed to identify the row and the columns that actually changed. That makes it difficult to maintain the correct state at the destination.
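The three MySQL settings can be checked the same way. This sketch assumes you have loaded the output of SHOW VARIABLES WHERE Variable_name IN ('log_bin', 'binlog_format', 'binlog_row_image') into a name-to-value dictionary. The function name is hypothetical.

```python
# Settings log-based CDC expects, per the guidance above.
REQUIRED_MYSQL_SETTINGS = {
    "log_bin": "ON",
    "binlog_format": "ROW",
    "binlog_row_image": "FULL",
}


def mysql_cdc_preflight(variables: dict) -> list:
    """Compare SHOW VARIABLES output (name -> value) with the expected CDC settings."""
    problems = []
    for name, expected in REQUIRED_MYSQL_SETTINGS.items():
        actual = variables.get(name)
        # Compare case-insensitively; a missing variable counts as a problem.
        if actual is None or actual.upper() != expected:
            problems.append(f"{name} = {actual!r}, expected {expected!r}")
    return problems


# Example: a server still using mixed-format binary logging.
current = {"log_bin": "ON", "binlog_format": "MIXED", "binlog_row_image": "FULL"}
print(mysql_cdc_preflight(current))
```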

Schema evolution: plan for it, do not react to it

Schema changes are the most common cause of silent CDC pipeline failures. Adding a nullable column is usually fine. Renaming a column, changing a data type, or dropping a column can break downstream consumers that do not handle schema evolution gracefully.

Before choosing a CDC tool, test your specific schema evolution scenarios against it. Specifically: add a column to a source table with CDC running and verify that the destination handles it correctly without manual intervention. Most managed tools handle this; many self-managed setups require explicit handling.
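Alongside that live test, a simple schema diff helps you decide which changes need attention before they reach the pipeline. The sketch below assumes each schema is a mapping of column name to (type, nullable). The bucket names are hypothetical. Treating a new NOT NULL column as potentially breaking is a conservative assumption, not a rule from any tool. A rename shows up here as a drop plus an add, which is one reason renames are risky.

```python
def classify_schema_change(before: dict, after: dict) -> dict:
    """Bucket the differences between two schemas.

    Each schema maps column name -> (type, nullable). A rename appears as
    one dropped column plus one added column.
    """
    report = {"usually_safe": [], "may_break": []}
    for col, (col_type, nullable) in after.items():
        if col not in before:
            # New nullable columns are usually fine; NOT NULL additions are treated conservatively.
            bucket = "usually_safe" if nullable else "may_break"
            suffix = "" if nullable else " NOT NULL"
            report[bucket].append(f"added {col} {col_type}{suffix}")
        elif before[col][0] != col_type:
            report["may_break"].append(f"type of {col} changed {before[col][0]} -> {col_type}")
    for col in before:
        if col not in after:
            report["may_break"].append(f"dropped {col}")
    return report


before = {"id": ("bigint", False), "email": ("text", True), "amount": ("integer", True)}
after = {"id": ("bigint", False), "email_address": ("text", True), "amount": ("numeric", True), "plan": ("text", True)}
print(classify_schema_change(before, after))
```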

Delivery guarantees and destination idempotency

Log-based CDC tools deliver at-least-once by default, meaning duplicate events are possible during failure recovery and restarts. If your destination is a data warehouse loading via MERGE/UPSERT, duplicates are handled. If your destination is a queue or a system that processes events with side effects, you need to implement idempotency at the consumer.
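If you need idempotency at the consumer, one common pattern is to remember the last source position applied for each primary key and ignore anything at or before it. The sketch below assumes each event carries three things: a primary key, a source position from a single source log that increases with commit order (such as an LSN converted to an integer), and an operation code. The event shape and class names are hypothetical, and the in-memory dictionaries stand in for a real destination table.

```python
from dataclasses import dataclass, field
from typing import Optional


@dataclass(frozen=True)
class ChangeEvent:
    key: int                    # primary key of the source row
    position: int               # source log position; assumed to increase with commit order
    op: str                     # "c" = insert, "u" = update, "d" = delete (hypothetical codes)
    row: Optional[dict] = None  # row after the change; None for deletes


@dataclass
class IdempotentSink:
    rows: dict = field(default_factory=dict)           # stands in for the destination table
    last_position: dict = field(default_factory=dict)  # key -> last applied source position

    def apply(self, event: ChangeEvent) -> bool:
        """Apply an event once; return False for duplicates or stale replays."""
        if event.position <= self.last_position.get(event.key, -1):
            return False
        if event.op == "d":
            self.rows.pop(event.key, None)
        else:
            self.rows[event.key] = event.row  # upsert by primary key
        # Keep the position even after a delete so an old insert replay is still ignored.
        self.last_position[event.key] = event.position
        return True


sink = IdempotentSink()
events = [
    ChangeEvent(1, 100, "c", {"id": 1, "status": "new"}),
    ChangeEvent(1, 200, "u", {"id": 1, "status": "paid"}),
]
# Simulate a restart that redelivers everything (at-least-once).
results = [sink.apply(e) for e in events + events]
print(results, sink.rows)
```

In a warehouse, the same idea usually becomes a MERGE keyed on the primary key, with a condition on the source position.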

Exactly-once delivery (end-to-end) is technically harder and most tools either do not offer it or offer it only for specific source-destination combinations. Understand what your chosen tool guarantees before assuming duplicates cannot happen.

CDC troubleshooting: common problems and fixes

| Symptom | Likely cause | Fix |
|---|---|---|
| Pipeline lag spikes suddenly | WAL or binlog retention hitting its limit on the source | Raise max_slot_wal_keep_size (Postgres 13+) if it is capping slot retention, or binlog_expire_logs_seconds (MySQL 8.0+; expire_logs_days on older versions); monitor consumer lag dashboards |
| Schema change breaks the pipeline | Tool does not handle DDL automatically | Use tools with schema evolution support (Estuary, Fivetran); add DDL change alerts |
| Duplicate records at destination | At-least-once delivery with no deduplication at the sink | Use exactly-once tools where possible; add primary key upsert logic at destination |
| High CPU on source database | Query-based CDC polling too aggressively, or triggers adding work to every write | Switch to log-based CDC; Debezium and Estuary read the transaction log instead of querying the main tables |
| Kafka consumer lag growing | Underpowered Connect worker or insufficient partitions | Scale Connect workers; increase topic partition count; monitor using consumer group metrics |
| Replication slot bloat in Postgres | Inactive slot (for example, from a stopped Debezium connector) holding WAL indefinitely | Drop unused replication slots; set max_slot_wal_keep_size (Postgres 13+) to cap retained WAL; monitor pg_replication_slots |
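For the Kafka consumer lag row, a single lag reading says little. Lag that keeps rising across samples is the signal to scale. The sketch assumes you already collect per-partition log-end and committed offsets, for example the LOG-END-OFFSET and CURRENT-OFFSET columns printed by kafka-consumer-groups.sh --describe. The function names and the three-sample rule are hypothetical.

```python
def total_lag(end_offsets: dict, committed: dict) -> int:
    """Sum of (log end offset - committed offset) across partitions.

    Assumption: a partition with no committed offset counts from 0.
    """
    return sum(end - committed.get(partition, 0) for partition, end in end_offsets.items())


def lag_is_growing(samples: list, window: int = 3) -> bool:
    """True when the last `window` lag samples are strictly increasing."""
    recent = samples[-window:]
    return len(recent) == window and all(a < b for a, b in zip(recent, recent[1:]))


# Example: lag sampled once a minute for one consumer group.
samples = [
    total_lag({0: 1_000, 1: 900}, {0: 990, 1: 900}),
    total_lag({0: 1_500, 1: 1_400}, {0: 1_200, 1: 1_100}),
    total_lag({0: 2_100, 1: 2_000}, {0: 1_400, 1: 1_300}),
]
print(samples, lag_is_growing(samples))
```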

Conclusion

The decision between CDC tools comes down to three variables: whether you need true log-based capture, how much operational complexity you can absorb, and which sources and destinations you need to connect.

For teams that want to move fast without managing infrastructure, Estuary covers the most common use cases -- log-based CDC from major databases into cloud warehouses and data lakes -- with a free tier that makes it easy to validate the approach before committing to production. For teams already running Kafka who want full control, Debezium remains the open-source standard. For organizations with large Oracle or SAP environments and enterprise procurement processes, Qlik Replicate and Oracle GoldenGate are the proven options.

Whatever you choose, test the failure modes before going to production. The tool that looks simplest in a demo is not always the tool that stays operational at 3am when a connector drops its offset and your Postgres WAL starts growing.

Try Estuary for free

Set up a real-time CDC pipeline from your database to Snowflake, BigQuery, or Redshift in under 10 minutes. No Kafka, no brokers, no infrastructure to manage.


FAQs

Does CDC require changes to the source database?

Can CDC tools handle schema changes automatically?

How does CDC affect source database performance?

What is WAL and why does it matter for CDC?

What CDC tool is best for Postgres?

Is Debezium the same as Kafka?

About the author

The author has over 15 years in data engineering and is a seasoned expert in driving growth for early-stage data companies, focusing on strategies that attract customers and users. Their extensive writing offers insights to help companies scale efficiently and effectively in an evolving data landscape.