Today, capturing, analyzing, and using streaming data is revolutionizing industries across the board. With its live and ready-to-go nature, streaming data is changing the game in data science and analytics, empowering organizations to make rapid, on-the-fly decisions.

According to a recent study, the global streaming analytics market is projected to reach $175 billion by 2030, growing at a compound annual growth rate (CAGR) of 33.56% during the forecast period. This just goes to show the immense value and potential that streaming data offers.

However, alongside the opportunities brought by streaming data, organizations are faced with the challenge of effectively integrating and leveraging it within their data science and analytics practices. Limited awareness and understanding of streaming data technologies can hinder the adoption and utilization of real-time data analytics.

Explore this guide to understand what streaming data is, its role in data science and analytics, and how it transforms raw data into actionable insights that enhance business outcomes.

What Is Streaming Data?

Streaming data is a continuous flow of information generated and processed in real time. This data is produced by sources like applications, network devices, server log files, and different kinds of online activities.

Streaming data doesn’t require the complete data set to be collected before it can be processed. It’s immediately received and acted upon as soon as the data is generated, ensuring the most current information is available for analysis and decision-making.
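
As a minimal sketch of this idea, the Python generator below updates a running average each time a value arrives instead of waiting for the full data set. The function name `running_mean` and the sample readings are illustrative assumptions, not part of any particular streaming product.

```python
from typing import Iterable, Iterator


def running_mean(stream: Iterable[float]) -> Iterator[float]:
    """Yield the mean of all values seen so far, one result per incoming value."""
    count = 0
    total = 0.0
    for value in stream:
        count += 1
        total += value
        yield total / count  # a result is available before the stream ends


# Hypothetical readings arriving one at a time
readings = [10.0, 20.0, 30.0]
means = list(running_mean(readings))
print(means)
```

Because the generator keeps only a count and a total, it never needs to hold the whole stream in memory.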

With more devices connected to the internet, the use of streaming data has become pivotal. It’s a key component in modern technologies, ranging from health monitoring devices that provide real-time health metrics to home security systems that detect and report unusual activities immediately.

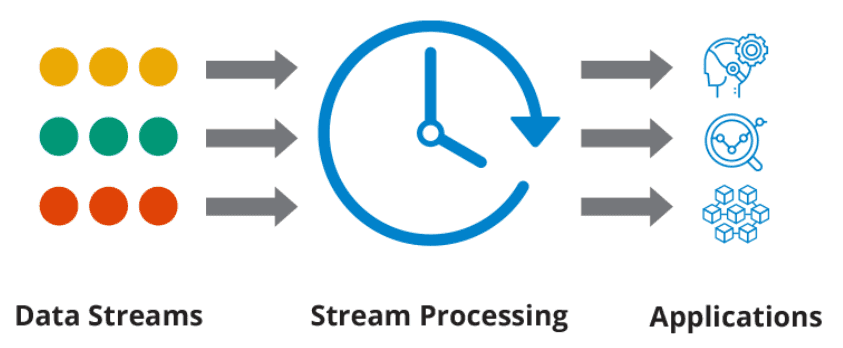

How Streaming Data Works: Your Gateway To Real-Time Data Processing & Analysis

Understanding the workings of streaming data not only provides a glimpse into its capabilities but also explains how it has become an important component in modern data management. Let’s look at its underlying process:

Step 1: Data Capture

This step involves collecting data in real time from a variety of streaming data sources. These can include sensors, data streaming applications, or databases. Rapid and continuous data capture forms the foundation of the real-time operation of data streaming.

Step 2: Data Processing

The next phase involves processing this raw information. This is achieved using data stream processing engines that can execute operations such as filtering, aggregation, and enhancement, transforming the raw data into a useful resource for subsequent processes.

Step 3: Data Delivery

After the data is processed, it is dispatched to a variety of destinations. These can be databases, analytics systems, or user applications. Timely data delivery ensures that the information can be put to use immediately and effectively.

Step 4: Data Storage

The final step of the data streaming process is the storage of the processed data. The data can be stored in various systems, such as in-memory stores, distributed file systems, or cloud-based storage services. Optimal data storage mechanisms are critical for maintaining the seamless flow and functionality of data streaming.
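
The four steps can be sketched as a chain of Python generators. This is a toy illustration under stated assumptions: the hard-coded sensor feed, the 60 °C plausibility threshold, and the in-memory lists standing in for a destination and a database are all hypothetical.

```python
import json
from typing import Iterable, Iterator


def capture() -> Iterator[dict]:
    """Step 1: emit raw events (a hypothetical hard-coded sensor feed)."""
    for temp in [21.5, 22.0, 95.0, 21.8]:
        yield {"sensor": "s1", "temp_c": temp}


def process(events: Iterable[dict]) -> Iterator[dict]:
    """Step 2: filter out implausible readings and enrich the rest."""
    for event in events:
        if event["temp_c"] > 60:  # assumed plausibility threshold
            continue
        yield {**event, "temp_f": event["temp_c"] * 9 / 5 + 32}


def deliver(events: Iterable[dict], sink: list) -> Iterator[dict]:
    """Step 3: hand each processed event to a destination as soon as it is ready."""
    for event in events:
        sink.append(json.dumps(event))  # stand-in for an API call or message send
        yield event


def store(events: Iterable[dict], storage: list) -> None:
    """Step 4: persist processed events (a list stands in for a database)."""
    for event in events:
        storage.append(event)


dashboard, storage = [], []
store(deliver(process(capture()), dashboard), storage)
print(len(storage), "events stored")
```

Each event flows through all four stages before the next one is read, which is what keeps end-to-end delay low in this sketch.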

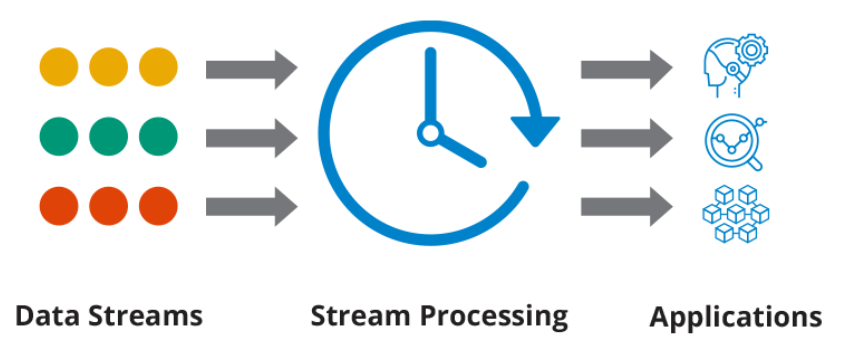

What Is Streaming Data Analytics?

Streaming data analytics is the process of extracting insights from a continuous flow of data, often referred to as a real-time data stream. To achieve this, continuous queries execute data analysis from a multitude of streaming sources, which could include health monitoring systems, financial transactions, or traffic monitors.

The power of streaming data analytics lies in its ability to enable rapid detection of significant events or changes. For instance, a sudden surge in web traffic or a sudden dip in financial transactions could be detected almost immediately. Organizations can then react effectively and efficiently, drastically reducing the time between event occurrence and response.
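
One simple way to picture such a continuous query is a sliding-window counter that is re-evaluated on every event. The sketch below is an assumption-laden illustration: the class name `SurgeDetector`, the 10-second window, and the limit of 3 events are hypothetical choices, not values from any real system.

```python
from collections import deque


class SurgeDetector:
    """Flag a surge when the event count in a sliding time window exceeds a limit."""

    def __init__(self, window_seconds: float, max_events: int) -> None:
        self.window_seconds = window_seconds
        self.max_events = max_events
        self._timestamps = deque()

    def observe(self, timestamp: float) -> bool:
        """Record one event; return True if the window now holds too many events."""
        self._timestamps.append(timestamp)
        # Evict events that have fallen out of the window
        while self._timestamps and self._timestamps[0] <= timestamp - self.window_seconds:
            self._timestamps.popleft()
        return len(self._timestamps) > self.max_events


detector = SurgeDetector(window_seconds=10, max_events=3)
arrivals = [0, 4, 8, 20, 21, 22, 23]  # hypothetical request times in seconds
alerts = [t for t in arrivals if detector.observe(t)]
print(alerts)
```

The same structure could detect a dip instead of a surge by checking for too few events in the window.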

The primary goal of streaming analytics is to empower organizations to promptly identify crucial events and respond to them effectively. It fundamentally changes how we approach data, shifting from traditional static data analysis to a more dynamic evaluation.

The Role of Streaming Data in Data Science

The intersection of data science and data streaming has opened new avenues in analyzing, interpreting, and making the most out of data in real time. Data streaming brings a dynamic edge to the static world of batch processing and has transformed the way data is processed and insights are derived.

Embracing data streaming in data science means we are now moving away from waiting for data to pile up for batch processing. Instead, the focus is on real-time data processing which equips businesses with timely and actionable insights and fuels faster and more efficient decision-making processes.

If you're interested in diving deeper into the world of data streaming and its applications, consider enrolling in a Data Science Course. This course will provide you with the tools and techniques needed to harness the power of streaming data for real-time analytics. For more information on how to get started with a Data Science Course, you can explore relevant offerings from educational institutions and online learning platforms.

How Streaming Data Fuels Predictive Modeling

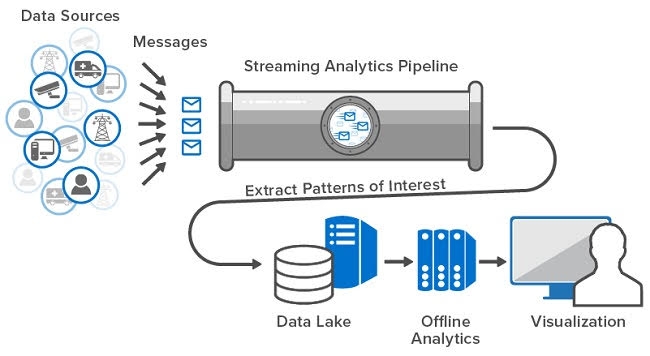

Data science is increasingly leaning towards real-time solutions and data streaming plays a pivotal role in this shift. One of its applications is predictive modeling.

Take the example of identifying phishing websites. Features such as URL length or domain age are first extracted from a historical dataset and used to train a model.

The process then involves deploying the model into the streaming pipeline and scoring every single data point as it streams in. This is a significant step forward from the traditional offline or batch prediction model, offering immediate insights as data comes in.
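
A rough sketch of per-record scoring might look like the following. The coefficients are made up for illustration and stand in for a model trained offline; the feature names `url_length` and `domain_age_days` and the `site` labels are likewise assumptions.

```python
import math
from typing import Iterable, Iterator, Tuple

# Hypothetical coefficients standing in for a model trained offline
WEIGHTS = {"url_length": 0.04, "domain_age_days": -0.01}
BIAS = -1.0


def score(features: dict) -> float:
    """Return the model's phishing probability for one feature record."""
    z = BIAS + sum(WEIGHTS[name] * features[name] for name in WEIGHTS)
    return 1.0 / (1.0 + math.exp(-z))


def score_stream(records: Iterable[dict]) -> Iterator[Tuple[str, float]]:
    """Score every record as it arrives instead of waiting for a batch."""
    for record in records:
        yield record["site"], score(record)


# Features are assumed to have been extracted upstream in the pipeline
incoming = [
    {"site": "site-a", "url_length": 90, "domain_age_days": 3},
    {"site": "site-b", "url_length": 20, "domain_age_days": 4000},
]
for site, probability in score_stream(incoming):
    print(f"{site}: {probability:.3f}")
```

In this toy model, a long URL on a very young domain scores high, while a short URL on an old domain scores close to zero.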

Online Learning With Data Streaming

One of the key areas where data streaming has made a marked difference in data science is model updating. In traditional approaches, organizations would update models offline by collecting all new observations and periodically retraining the model, typically overnight or weekly. But with data streaming, online learning is possible.

In online learning, the model gets updated for every new observation that comes in, helping the model adapt to new trends much more quickly. Also, when datasets are too large to train on in their entirety, online learning proves beneficial in training the model incrementally. This constant learning and adjusting give the model an added edge, as it continually evolves with the incoming data.
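
To make the per-observation update concrete, here is a minimal sketch of logistic regression trained with one stochastic gradient step per incoming example. The class name, learning rate, and synthetic stream are assumptions chosen for illustration; production systems typically rely on a dedicated online learning library.

```python
import math
from typing import List


class OnlineLogisticRegression:
    """Logistic regression updated with one gradient step per observation."""

    def __init__(self, n_features: int, learning_rate: float = 0.1) -> None:
        self.weights = [0.0] * n_features
        self.bias = 0.0
        self.learning_rate = learning_rate

    def predict_proba(self, x: List[float]) -> float:
        z = self.bias + sum(w * xi for w, xi in zip(self.weights, x))
        return 1.0 / (1.0 + math.exp(-z))

    def learn_one(self, x: List[float], y: int) -> None:
        """Nudge the parameters toward the label of a single new observation."""
        error = self.predict_proba(x) - y  # gradient of the log loss w.r.t. z
        self.weights = [
            w - self.learning_rate * error * xi for w, xi in zip(self.weights, x)
        ]
        self.bias -= self.learning_rate * error


model = OnlineLogisticRegression(n_features=1)
# Hypothetical stream: the label is 1 when the single feature is positive
stream = [([1.0], 1), ([-1.0], 0), ([2.0], 1), ([-2.0], 0)] * 50
for x, y in stream:
    model.learn_one(x, y)  # the model is usable after every single update

print(round(model.predict_proba([1.5]), 3))
```

No observation is stored after it has been used, which is why this style of training also suits datasets too large to fit in memory.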

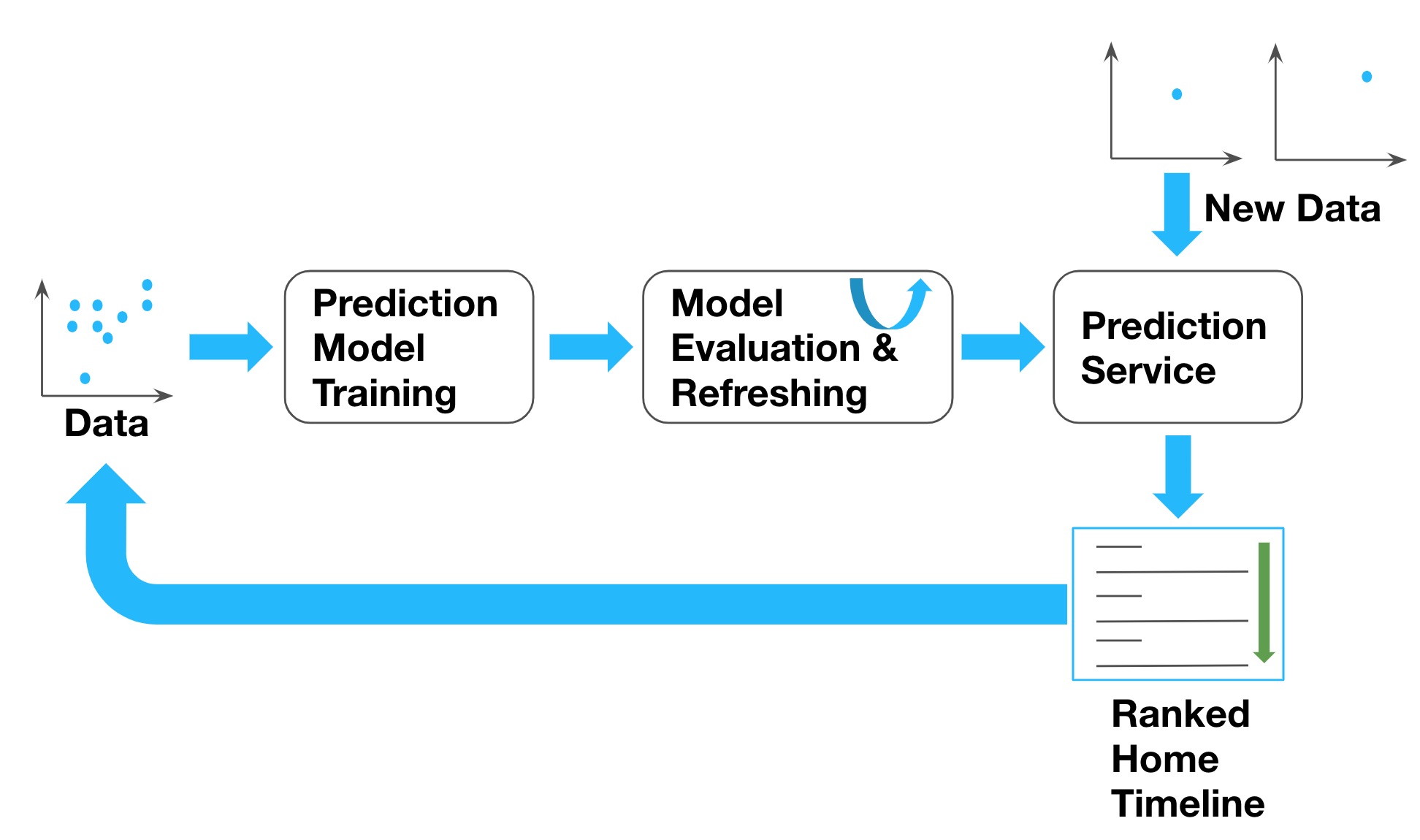

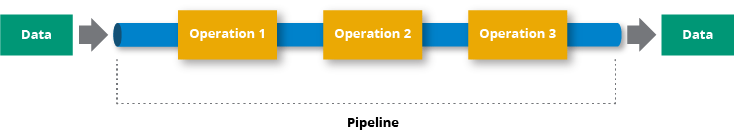

Building A Data Streaming Pipeline

Data scientists not only analyze and interpret complex digital data but also work on building data streaming pipelines. A typical pipeline includes:

- Database

- Streaming processing engine

- Queuing system that stores the messages

- Serving layer that could be an API or a front-end web application
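
The four components can be sketched in miniature with the Python standard library. Everything here is a stand-in chosen for illustration: `queue.Queue` plays the queuing system, an in-memory SQLite table plays the database, and the hypothetical `get_views` function plays the serving layer.

```python
import queue
import sqlite3

# Queuing system: buffers messages between producers and the processing engine
messages = queue.Queue()
for page in ["/home", "/pricing", "/home"]:  # hypothetical page-view events
    messages.put({"page": page})

# Database: an in-memory SQLite table holding the running aggregates
db = sqlite3.connect(":memory:")
db.execute("CREATE TABLE page_views (page TEXT PRIMARY KEY, views INTEGER NOT NULL)")


def run_engine() -> None:
    """Stream processing engine: drain the queue and update per-page counts."""
    while not messages.empty():
        page = messages.get()["page"]
        db.execute("INSERT OR IGNORE INTO page_views (page, views) VALUES (?, 0)", (page,))
        db.execute("UPDATE page_views SET views = views + 1 WHERE page = ?", (page,))
    db.commit()


def get_views(page: str) -> int:
    """Serving layer: the lookup an API endpoint or dashboard would call."""
    row = db.execute("SELECT views FROM page_views WHERE page = ?", (page,)).fetchone()
    return row[0] if row else 0


run_engine()
print(get_views("/home"))
```

In a real deployment each component would be a separate, independently scaled service, but the division of responsibilities stays the same.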

One essential aspect to consider when building your data streaming pipelines is time, particularly the difference between event time (when an event actually occurred) and processing time (when the pipeline handles it). Managing this difference is a crucial part of dealing with data in a streaming pipeline.
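
The difference matters most when events arrive late or out of order. In the sketch below, the order of the list represents arrival (processing) order, while each hypothetical event carries its own `event_time`; the 60-second window size is an assumption.

```python
from collections import defaultdict

WINDOW_SECONDS = 60  # assumed tumbling-window size

# List order represents processing time (when each event arrived);
# the event_time field records when it actually happened.
arrivals = [
    {"event_time": 5, "value": 1},
    {"event_time": 70, "value": 1},
    {"event_time": 50, "value": 1},  # arrived late, but happened in the first minute
]

counts_by_event_window = defaultdict(int)
for event in arrivals:
    # Assign the event to a window based on when it happened, not when it arrived
    window_start = (event["event_time"] // WINDOW_SECONDS) * WINDOW_SECONDS
    counts_by_event_window[window_start] += event["value"]

print(dict(counts_by_event_window))
```

Grouping by arrival order would have placed the late event in the second window. Stream processing engines commonly use watermarks to decide how long to wait for such late events before finalizing a window.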

Key Considerations for Streaming Data

Implementing data streaming in data science requires careful consideration of several factors. Some of the major ones include:

- Tolerance for latency in the pipeline: Real-time data processing can involve latency. Understanding how much latency is tolerable for your specific use case is crucial.

- Understanding the impact on model accuracy: With real-time streaming and updates, the model’s accuracy can vary. It’s important to measure and monitor this actively.

- Control over the model updating process: While online learning allows the model to adapt to new trends faster, you have less control over the data fed into the model than you do with batch processing.
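
One way to monitor accuracy actively is to track it over a rolling window of recent predictions, so that a drop shows up quickly. The sketch below is illustrative only: the class name `RollingAccuracy`, the window size of 4, and the prediction/label pairs are assumptions.

```python
from collections import deque


class RollingAccuracy:
    """Track accuracy over the most recent predictions only."""

    def __init__(self, window_size: int) -> None:
        self._hits = deque(maxlen=window_size)  # old results drop off automatically

    def update(self, predicted: int, actual: int) -> float:
        """Record one prediction outcome and return the current rolling accuracy."""
        self._hits.append(predicted == actual)
        return sum(self._hits) / len(self._hits)


monitor = RollingAccuracy(window_size=4)
# Hypothetical (prediction, true label) pairs; the model degrades halfway through
pairs = [(1, 1), (0, 0), (1, 1), (0, 0), (1, 0), (1, 0), (0, 1), (1, 0)]
history = [monitor.update(p, a) for p, a in pairs]
print(history[3], history[-1])
```

A threshold on this rolling value could trigger an alert or a rollback to a previous model version, which restores some of the control that online updating gives up.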

Use Cases of Streaming Data for Operational Efficiency and Insights

Data streaming is not confined to a single domain but has a wide range of applications across diverse industries. Let’s look at some examples of streaming data in the real world.

Health Monitoring Devices

In healthcare, real-time data processing can be used in health monitoring devices to provide instant metrics. For instance, data coming from a patient’s wearable device can be streamed and processed in real time. This enables healthcare professionals to monitor the patient’s health and provide immediate feedback or intervention if necessary.

Real-time Inventory Management

Data streaming is also crucial in modern supply chain and inventory management. IoT applications in this industry often deal with streaming real-time data. For instance, sensors on warehouse shelves stream data about the current inventory level. This information is then processed and analyzed to ensure accurate inventory management.

Personalized Advertisements

In the online advertising industry, data streaming is often used to track user behavior, clicks, and interests. This information is processed instantaneously to deliver personalized advertisements to users. This way, the likelihood of a user engaging with an ad is significantly increased, leading to more effective advertising campaigns and improved revenue for businesses.

Real-Time Log Analysis

Data streaming enables real-time log analysis, which is widely used in IT operations. Distributed processing of log streams surfaces insights as events are written, and out-of-the-box filters support in-depth analysis and quick visualization. Whether it’s analyzing network logs to detect anomalies or monitoring the internet activity of applications, data streaming plays a crucial role.
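
As a small single-process illustration, the sketch below parses log lines as they arrive and keeps per-level counts up to date. The log layout (`<timestamp> <LEVEL> <message>`) and the sample lines are assumptions; real log formats vary widely.

```python
import re
from collections import Counter
from typing import Iterable, Iterator

# Assumed log layout: "<timestamp> <LEVEL> <message>"
LOG_PATTERN = re.compile(r"^(?P<ts>\S+) (?P<level>[A-Z]+) (?P<message>.*)$")


def parse(lines: Iterable[str]) -> Iterator[dict]:
    """Turn raw log lines into structured records, skipping lines that do not match."""
    for line in lines:
        match = LOG_PATTERN.match(line)
        if match:
            yield match.groupdict()


log_lines = [
    "2024-01-01T00:00:01Z INFO service started",
    "2024-01-01T00:00:02Z ERROR upstream timeout",
    "not a log line",
    "2024-01-01T00:00:03Z ERROR upstream timeout",
]

level_counts = Counter()
for record in parse(log_lines):
    level_counts[record["level"]] += 1  # counts stay current after every line

print(level_counts["ERROR"])
```

In a distributed setup, many such parsers would run in parallel on partitions of the log stream and their counts would be merged downstream.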

Fraud Detection

Data streaming also serves an instrumental role in fraud detection. Machine learning (ML) models trained on streaming data help identify data anomalies, thereby preventing fraudulent transactions from occurring. Stream processing combined with automated alert systems via email, SMS, or social media provides a robust security measure against financial fraud.
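
The sketch below illustrates streaming anomaly detection with a simple statistical rule rather than a trained ML model: it keeps a running mean and standard deviation using Welford's online algorithm and flags amounts that deviate strongly. The threshold of 3 standard deviations, the minimum history of 5 transactions, and the sample amounts are assumptions.

```python
import math


class RunningStats:
    """Welford's online algorithm for the mean and variance of a stream."""

    def __init__(self) -> None:
        self.count = 0
        self.mean = 0.0
        self._m2 = 0.0  # running sum of squared deviations from the mean

    def update(self, value: float) -> None:
        self.count += 1
        delta = value - self.mean
        self.mean += delta / self.count
        self._m2 += delta * (value - self.mean)

    @property
    def std(self) -> float:
        return math.sqrt(self._m2 / (self.count - 1)) if self.count > 1 else 0.0


def is_anomalous(stats: RunningStats, amount: float, threshold: float = 3.0) -> bool:
    """Flag an amount more than `threshold` standard deviations from the mean so far."""
    if stats.count < 5 or stats.std == 0:  # too little history to judge
        return False
    return abs(amount - stats.mean) / stats.std > threshold


stats = RunningStats()
flagged = []
for amount in [20.0, 25.0, 22.0, 19.0, 24.0, 21.0, 950.0, 23.0]:  # hypothetical amounts
    if is_anomalous(stats, amount):
        flagged.append(amount)  # a real system would alert or hold the transaction
    else:
        stats.update(amount)

print(flagged)
```

Flagged amounts are deliberately kept out of the running statistics so that a single outlier does not distort the baseline used for later transactions.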

Cybersecurity

The use of streaming data to identify and isolate cyber threats is a significant aspect of modern cybersecurity. Analyzing incoming traffic as it arrives helps identify threats such as denial-of-service attacks, for example by checking whether an unusual volume of traffic originates from a single IP address or a single user profile.
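
A very rough sketch of the single-source check: within one short window of requests, find any IP address responsible for an outsized share of the traffic. The function name, the 50% share limit, and the minimum of 10 requests are hypothetical, and the addresses come from reserved documentation ranges.

```python
from collections import Counter
from typing import List


def suspicious_ips(
    window_requests: List[str], max_share: float = 0.5, min_requests: int = 10
) -> List[str]:
    """Return IPs responsible for more than `max_share` of requests in one window."""
    total = len(window_requests)
    if total < min_requests:  # ignore windows with too little traffic
        return []
    counts = Counter(window_requests)
    return [ip for ip, n in counts.items() if n / total > max_share]


# Hypothetical source IPs seen during one short window
window = ["203.0.113.7"] * 8 + ["198.51.100.1", "198.51.100.2", "192.0.2.44"]
print(suspicious_ips(window))
```

Real detection systems combine many more signals, but the per-window, per-source counting shown here is a common building block.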

Stream Processing Of Sensor Data

Data streaming is also pivotal in processing real-time data from sensors and devices. For instance, in the aviation industry, it helps spot potential faults before they escalate into significant issues, thereby reducing maintenance delays and improving flight safety. Another example could be modern IoT applications, which rely heavily on real-time data processing for efficient supply chain and inventory management.

Learning about abstract concepts and methodologies can certainly be educational but there is a unique value in examining real-world instances. Let’s discuss some case studies and see how these technologies were employed in actual scenarios.

Real-World Case Studies of Streaming Data

Here are two real-world scenarios in which organizations effectively incorporated data streams into their operations:

Case Study 1: Streaming Data In The Oil & Gas Industry

A Fortune 500 Oil & Gas company based in the U.S. was looking for ways to upgrade its monitoring systems, enhance worker safety, and boost operational efficiency.

Their existing system could not automate alerting or store sensor data for long-term analyses and predictive modeling. The major challenges included handling a massive volume of data, industry-specific complexities, and ensuring high fault tolerance due to remote drill site locations.

Solution

The company partnered with a data management firm to build a new data streaming framework.

- Performance Tuning: The system was optimized for processing large volumes of IoT data from multiple drilling sites.

- Fault tolerance: The solution included redundant pipelines and buffered messages in a distributed event streaming platform to minimize data loss and disruption.

- Data storage and ingestion: They opted for a distributed data storage engine for real-time data and HDFS for long-term storage. A custom origin was built to read the sensor data in the O&G industry’s standard WITSML format.

Result

The new system enables real-time automated alerts for anomalies and supports historical data analysis and future forecasting. The high-redundancy solution provides a more detailed view of the drilling sites, thereby improving worker safety and operational efficiency.

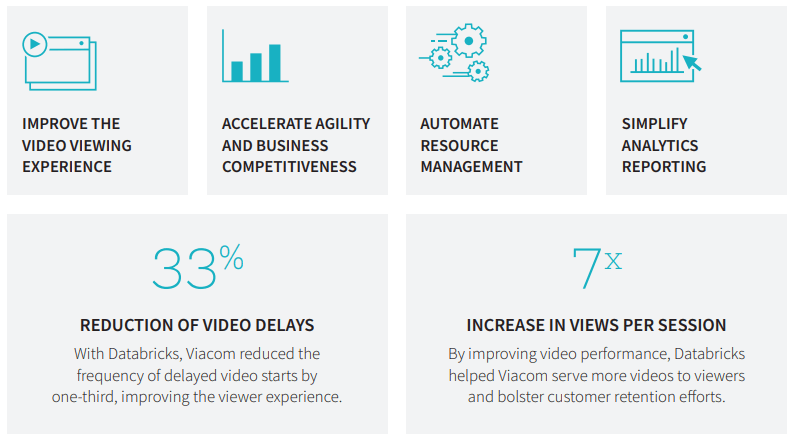

Case Study 2: Transforming Viewer Experience In The Broadcast Industry

Viacom, operating around 170 networks across 160 countries, was exploring ways to leverage data analytics to enhance viewer loyalty and increase revenue.

Their existing delivery systems were struggling with the worldwide streaming of petabytes of video data, causing issues like video loading failures and stuttering because of rebuffering. The major challenges were understanding and utilizing vast amounts of viewing data, handling the immense volume of network information, and creating effective advertising strategies amidst declining TV ad sales.

Solution

Viacom collaborated with a data analytics firm to construct a real-time analytics platform.

- Platform management: They utilized a unified analytics platform to reduce the burden of managing data processing clusters, monitor video feed quality, and automate resource reallocation.

- Data storage and ingestion: Streaming data was ingested and analyzed in real-time within a distributed processing cluster, then routed to a robust storage service, and presented as recommendations to viewers in the shortest possible time frame.

- Streamlined reporting: The unified analytics platform enabled Viacom to provide on-demand, self-service reporting at different levels of granularity, meeting the needs of both technical and non-technical staff.

- Integration with business intelligence tools: The system integrated seamlessly with visualization and technical monitoring tools to accelerate business insights and system monitoring.

Result

The implementation of the real-time analytics solution resulted in a 33% reduction in video start delays. It also led to a sevenfold increase in views per session, improving video performance and bolstering customer retention.

How Estuary Can Streamline Your Streaming Data Operations

Estuary is our dynamic platform that simplifies real-time data operations. It's a one-stop solution for organizations to execute real-time change data capture. It offers a comprehensive suite of tools for the collection, storage, and processing of raw data in real time.

Designed with user experience at its core, Estuary eliminates the complexities of managing streaming data architecture. Its intuitive web app and CLI make it accessible and straightforward for all users regardless of their technical expertise.

Estuary Features

Estuary is packed with features that make it a versatile tool to process data:

- Community and support: It provides a wealth of resources, including documentation, tutorials, and a supportive community on Slack.

- Scalable data pipelines: You can construct scalable, future-proof data pipelines within minutes, harmonizing batch and stream processing systems.

- Unified data operations: The platform integrates all systems used to produce, process, and consume data, creating a cohesive environment for data analysis.

- Low-Code UI and CLI: Estuary Flow offers a low-code UI for essential workflows and a CLI for fine-grained control over your pipelines. You can switch seamlessly between them as you build and refine your pipelines.

- Real-time data capture and transformation: With Flow pipelines, you can capture raw data from various sources into collections, materialize a collection as a view within another system, and derive new collections by transforming from other collections – all in real time.

- Integration with external data systems: A variety of connectors are available to integrate Flow with external data systems, focusing on high-scale technology systems and Change Data Capture. This feature allows for seamless integration with a data warehouse and can even work with legacy data processing methods.

Conclusion

Streaming data provides real-time insights that can steer business strategies toward success. Its wide array of applications across various industries is a testament to its versatility and potential.

However, you need to make sure you have robust data governance frameworks in place to harness the power of streaming data effectively. Without proper controls and security measures, the valuable insights derived from streaming data can be compromised.

If you are looking to upgrade your current data processing capabilities, Estuary is an ideal choice. Flow stands out as a resourceful tool and offers robust features to manage and analyze data effectively. With its focus on real-time data handling, it can significantly enhance your organization’s analytical capabilities.

Sign up for free and try the unmatched capabilities of Estuary. You can also connect with our team for more personalized assistance tailored to your unique needs.

About the author

Jeffrey is a data engineering professional with over 15 years of experience, helping early-stage data companies scale by combining technical expertise with growth-focused strategies. His writing shares practical insights on data systems and efficient scaling.