Quick Answer: SQL Server to PostgreSQL Migration

Migration approach: Two paths cover most scenarios. Use SSIS for a one-time batch transfer when you already run the Microsoft stack and can tolerate downtime. Use Change Data Capture (CDC) when you need continuous replication, zero-downtime cutover, or are moving a database larger than 100 GB.

Critical type mapping: SQL Server's TIMESTAMP is a row version counter, not a datetime. Map it to PostgreSQL BYTEA or drop it if it serves no business logic. Replace MONEY with NUMERIC(19,4) since PostgreSQL has no native equivalent.

Post-migration requirements: PostgreSQL lowercases all identifiers by default, so CustomerID in SQL Server becomes customerid in PostgreSQL. Audit application code for casing issues before cutover, and reset all identity sequences after bulk load to prevent duplicate key errors on the first insert.

This guide provides a technical walkthrough for migrating production workloads from SQL Server to PostgreSQL. We cover manual schema mapping, SSIS batch migration, and real-time synchronization using Change Data Capture (CDC) to ensure zero data loss.

What You Need Before Starting This Migration

Before you run a single command or open any tool, confirm the following technical requirements are in place. Skipping this pre-flight check is the leading cause of failed or corrupted migrations.

On the SQL Server side:

- SQL Server 2017 or later (Estuary's connector is regularly tested against SQL Server 2017 and up; SQL Server 2016 supports CDC but is not in the documented test matrix)

saor a user account withdb_ownerorCONTROL DATABASEpermissions- SQL Server Agent must be running (required for SSIS job scheduling and CDC)

- TCP/IP protocol enabled in SQL Server Configuration Manager

- Sufficient disk space for a full data export (at minimum 1.5x the current database size)

On the PostgreSQL side:

- PostgreSQL 12 or later recommended

- A target database already created:

CREATE DATABASE target_db; - A dedicated migration user with

CREATEprivileges on the target schema pg_hba.confconfigured to allow connections from your migration host

Network requirements:

- Firewall rules allowing outbound traffic from migration host to SQL Server port 1433

- Firewall rules allowing outbound traffic to PostgreSQL port 5432

- For Estuary CDC: outbound HTTPS (port 443) to Estuary's cloud endpoint

SQL Server to PostgreSQL: Data Type Mapping Reference

This is the most common source of migration failures. SQL Server and PostgreSQL use different type systems, and several types have no direct equivalent. Map every column type before you migrate, not after.

| SQL Server Type | PostgreSQL Equivalent | Notes |

|---|---|---|

INT | INTEGER | Direct equivalent |

BIGINT | BIGINT | Direct equivalent |

SMALLINT | SMALLINT | Direct equivalent |

TINYINT | SMALLINT | PostgreSQL has no 1-byte integer |

BIT | BOOLEAN | 1/0 maps to TRUE/FALSE |

DECIMAL(p,s) | NUMERIC(p,s) | Direct equivalent; use NUMERIC for financial data |

FLOAT | DOUBLE PRECISION | Minor precision differences possible |

REAL | REAL | Direct equivalent |

MONEY | NUMERIC(19,4) | No native MONEY type in PostgreSQL |

SMALLMONEY | NUMERIC(10,4) | No native SMALLMONEY type in PostgreSQL |

CHAR(n) | CHAR(n) | Direct equivalent |

VARCHAR(n) | VARCHAR(n) | Direct equivalent |

VARCHAR(MAX) | TEXT | PostgreSQL TEXT has no length limit |

NCHAR(n) | CHAR(n) | PostgreSQL stores all text as UTF-8 natively |

NVARCHAR(n) | VARCHAR(n) | PostgreSQL stores all text as UTF-8 natively |

NVARCHAR(MAX) | TEXT | Same UTF-8 treatment applies |

TEXT | TEXT | SQL Server TEXT is deprecated; use VARCHAR(MAX) as source |

NTEXT | TEXT | Deprecated in SQL Server; migrate to TEXT |

DATETIME | TIMESTAMP | No timezone info; precision differences possible |

DATETIME2 | TIMESTAMP | Higher precision (100ns vs 1ms); truncation may occur |

SMALLDATETIME | TIMESTAMP | 1-minute precision; safe to map to TIMESTAMP |

DATE | DATE | Direct equivalent |

TIME | TIME | Minor precision differences |

DATETIMEOFFSET | TIMESTAMPTZ | Includes timezone offset; maps cleanly |

BINARY(n) | BYTEA | Binary data; syntax changes required |

VARBINARY(n) | BYTEA | Binary data |

VARBINARY(MAX) | BYTEA | Binary data |

IMAGE | BYTEA | Deprecated in SQL Server; migrate away from this type |

UNIQUEIDENTIFIER | UUID | Direct equivalent |

XML | XML | Direct equivalent |

ROWVERSION / TIMESTAMP | BYTEA | SQL Server TIMESTAMP is NOT a date; it is a row version |

HIERARCHYID | No direct equivalent | Requires application-layer transformation |

GEOGRAPHY / GEOMETRY | PostGIS geometry | Requires PostGIS extension |

JSON (as VARCHAR) | JSONB | Use JSONB for indexable JSON in PostgreSQL |

IDENTITY column | SERIAL or GENERATED ALWAYS AS IDENTITY | Reset sequences after migration |

Critical gotcha: SQL Server's TIMESTAMP is not a datetime. It is a row version counter (ROWVERSION). Do not map it to a PostgreSQL timestamp type. Map it to BYTEA or drop it entirely if not used for business logic.

After migration, reset all sequences:

sql-- Run this in PostgreSQL for each table with a SERIAL/IDENTITY column

SELECT setval('schema.table_id_seq', (SELECT MAX(id) FROM schema.table));

Complexity Note: If manual mapping for hundreds of tables feels prone to error, you can use Estuary’s automated schema discovery to handle these conversions instantly. Try it Free

Common Migration Gotchas and How to Handle Them

These are the issues most migration guides skip. They surface in production.

1. Case sensitivity

SQL Server is case-insensitive by default. PostgreSQL is case-sensitive for identifiers unless quoted. A column named CustomerID in SQL Server becomes customerid in PostgreSQL (lowercased at DDL time). If your application code references CustomerID, it will break unless you either use quoted identifiers in PostgreSQL or lowercase all references in the application.

2. NULL handling in string comparisons

SQL Server treats empty strings and NULLs differently across some functions. Audit your stored procedures for ISNULL() usage; replace with COALESCE() in PostgreSQL.

3. Auto-increment identity gaps

After bulk loading data, PostgreSQL sequences are not automatically updated to reflect the imported maximum ID value. Any INSERT that relies on auto-increment will fail with a duplicate key error until you reset the sequence (see the setval command above).

4. Collation differences

SQL Server collations (e.g., SQL_Latin1_General_CP1_CI_AS) do not map directly to PostgreSQL collations. Sorting behavior for special characters may differ. Run sort-order tests on string columns after migration.

5. T-SQL stored procedures and functions

T-SQL does not run in PostgreSQL. All stored procedures, functions, and triggers must be rewritten in PL/pgSQL. This is a significant effort for databases with heavy server-side logic. Budget this separately from the data migration.

6. Linked servers

SQL Server linked server connections have no direct equivalent in PostgreSQL. If your database uses linked servers for cross-database queries, these must be replaced with postgres_fdw (Foreign Data Wrapper) or application-layer query routing.

Method 1: Manual Migration Using SSIS (SQL Server Integration Services)

SSIS is the built-in Microsoft ETL platform. It works well for one-time batch migrations where the team is already familiar with the Microsoft toolchain.

When to use SSIS:

- One-time, non-incremental migration

- SQL Server environment with existing SSIS infrastructure

- Team has SSIS experience and can maintain the packages

When not to use SSIS:

- You need continuous or near-real-time replication after the initial load

- The database is large (100GB+) and you cannot afford a long migration window

- No existing SSIS expertise on the team

Step 1: Install the PostgreSQL ODBC Driver

Download and install the PostgreSQL ODBC driver (psqlODBC) on the machine running SSIS. Configure a System DSN in Windows ODBC Data Source Administrator pointing to your PostgreSQL target database. Test the connection before proceeding.

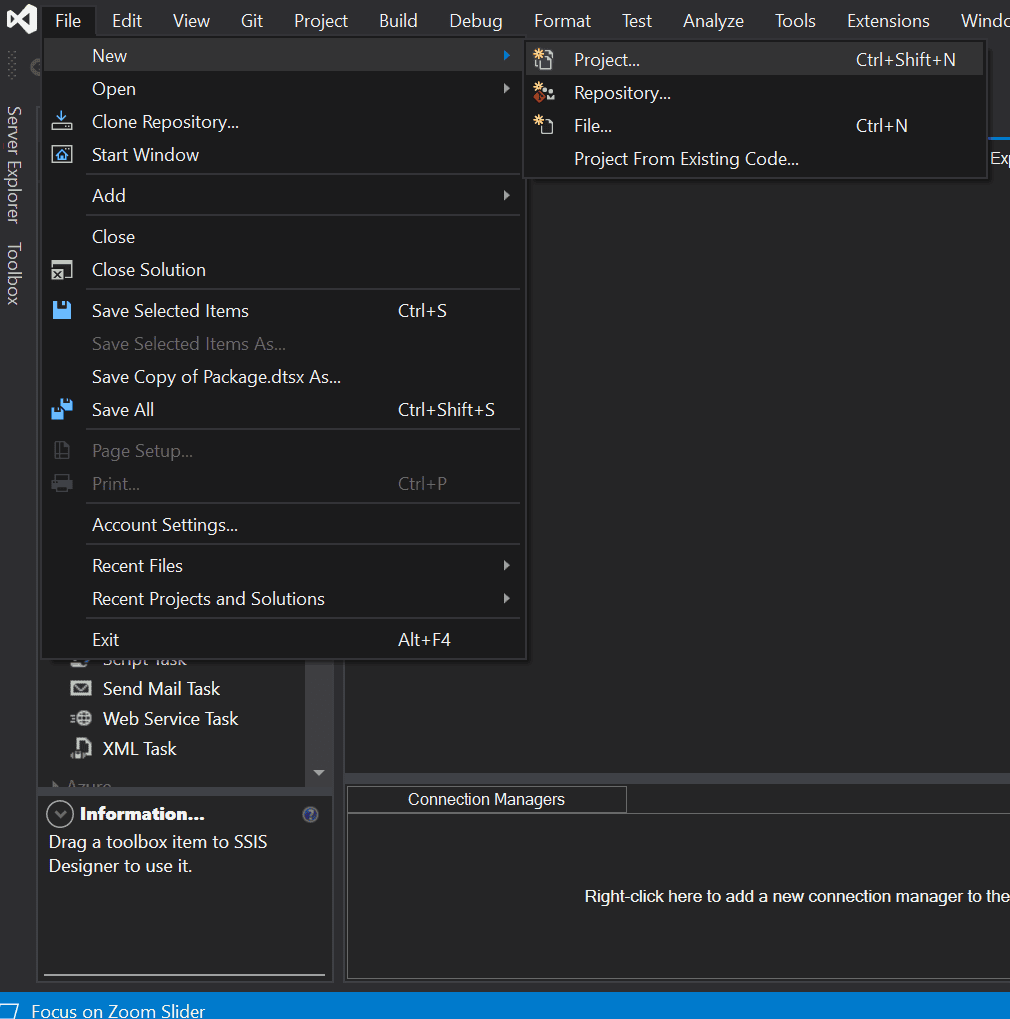

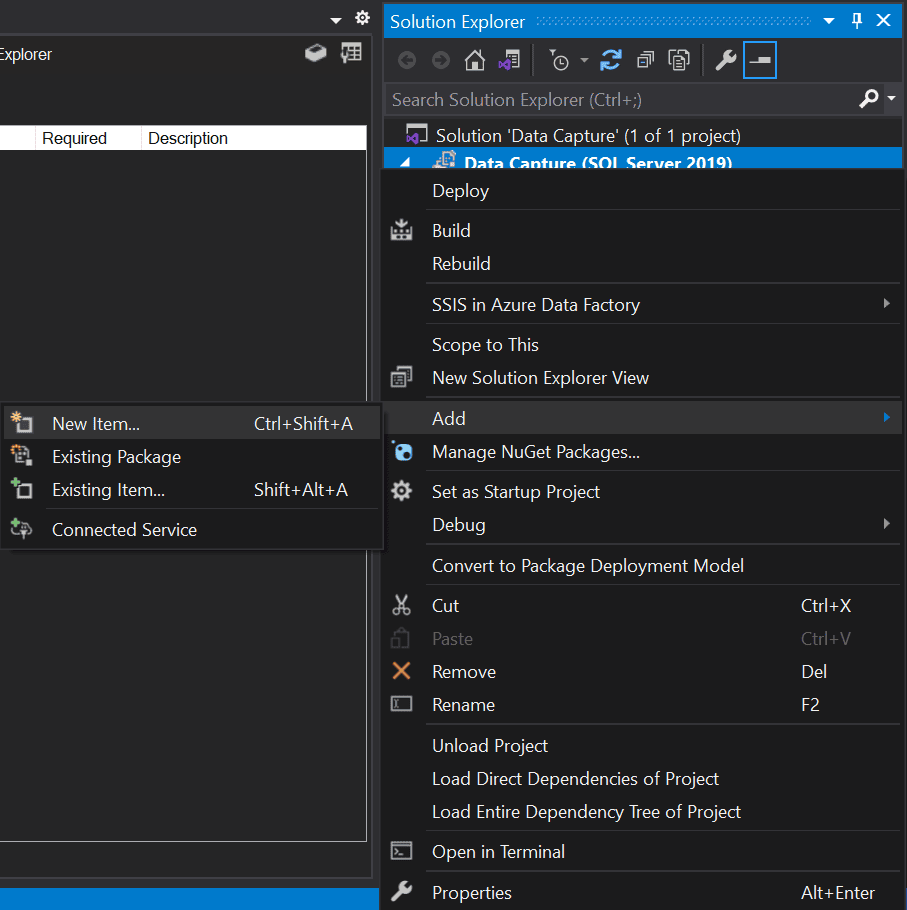

Step 2: Create a New SSIS Project

Open SQL Server Data Tools (SSDT). Select File > New > Project, then choose Integration Services Project. In Solution Explorer, right-click the project and select Add > New Item > SSIS Package.

Step 3: Add a Data Flow Task

In the Control Flow tab, drag a Data Flow Task from the SSIS Toolbox onto the design surface. Double-click to enter the Data Flow editor.

Step 4: Configure the SQL Server Source

Inside the Data Flow editor, drag an OLE DB Source component onto the canvas. Double-click to configure:

- Create a new OLE DB connection pointing to your SQL Server instance

- Select Table or view as the data access mode

- Choose the source table from the dropdown

- Test the connection before closing the editor

Step 5: Configure the PostgreSQL Destination

Drag an ODBC Destination component onto the canvas. Connect it to the OLE DB Source by dragging the blue arrow. Double-click to configure:

- Create a new ODBC connection using the DSN you configured in Step 1

- Select the target PostgreSQL table from the dropdown

- If the target table does not exist yet, create it manually in PostgreSQL first (DDL is not auto-generated by SSIS)

Step 6: Map Source Columns to Destination Columns

In the ODBC Destination editor, go to the Mappings tab. SSIS attempts auto-mapping by column name. Review every mapping manually, especially for the type pairs listed in the data type table above. Pay close attention to:

- Any

DATETIME2toTIMESTAMPmappings (precision truncation) - Any

MONEYorSMALLMONEYcolumns - Any

VARBINARYorIMAGEcolumns (may require a Script Component transformation)

Step 7: Configure Error Handling

Do not skip this step. In the Data Flow, right-click the connection arrow between source and destination and select Configure Error Output. Set the error behavior to Redirect Row rather than Fail Component. Connect the error output to a Flat File Destination to capture rejected rows for later review.

In the Control Flow, add an Email Task or a Script Task connected to the failure path of the Data Flow Task so the team is notified if the package fails.

Step 8: Execute and Validate

Run the package with F5 or the Execute button. Monitor the Progress tab for row counts and errors. After completion:

- Count rows in source:

SELECT COUNT(*) FROM source_table; - Count rows in destination:

SELECT COUNT(*) FROM destination_table; - Spot-check 10-20 random rows for data accuracy

- Run the sequence reset commands for all identity columns

SSIS limitations to know:

- SSIS does not support ongoing CDC. After the initial migration, changes in SQL Server are not automatically reflected in PostgreSQL.

- Large binary (

VARBINARY(MAX),IMAGE) columns require a Script Component transformation and add significant complexity. - SSIS packages require SQL Server Data Tools to edit and SQL Server Agent to schedule. Maintenance overhead is high.

Method 2: Continuous Real-Time Replication with Estuary

Estuary uses Change Data Capture (CDC) to read the SQL Server transaction log and replicate inserts, updates, and deletes to PostgreSQL continuously, with millisecond-level latency. Unlike SSIS, Estuary handles the ongoing sync, not just the initial load.

When to use Estuary:

- You need ongoing replication, not just a one-time migration

- You want to minimize downtime by syncing live changes during the migration window

- You cannot tolerate the maintenance overhead of SSIS package management

- You need a free tier to get started without licensing costs

Prerequisites for Estuary CDC on SQL Server

Before connecting Estuary, SQL Server must have CDC enabled at both the database and table level. Run all commands connected to your source database as a user with sysadmin or db_owner rights.

sqlUSE your_database;

GO

-- Enable CDC on the database

EXEC sys.sp_cdc_enable_db;

GO

-- Create a dedicated Estuary user

CREATE LOGIN estuary_user WITH PASSWORD = 'strong_password';

CREATE USER estuary_user FOR LOGIN estuary_user;

-- Grant SELECT on the schema(s) containing tables to capture (adjust if not dbo)

GRANT SELECT ON SCHEMA :: dbo TO estuary_user;

-- Grant SELECT on the CDC schema

GRANT SELECT ON SCHEMA :: cdc TO estuary_user;

-- Required: grant VIEW DATABASE STATE permission

GRANT VIEW DATABASE STATE TO estuary_user;

-- Enable CDC on each table you want to replicate (repeat for each table)

EXEC sys.sp_cdc_enable_table

@source_schema = N'dbo',

@source_name = N'your_table',

@role_name = N'estuary_user';

GO

Verify CDC is active:

sqlSELECT name, is_cdc_enabled FROM sys.databases WHERE name = 'your_database';

SELECT name, is_tracked_by_cdc FROM sys.tables;

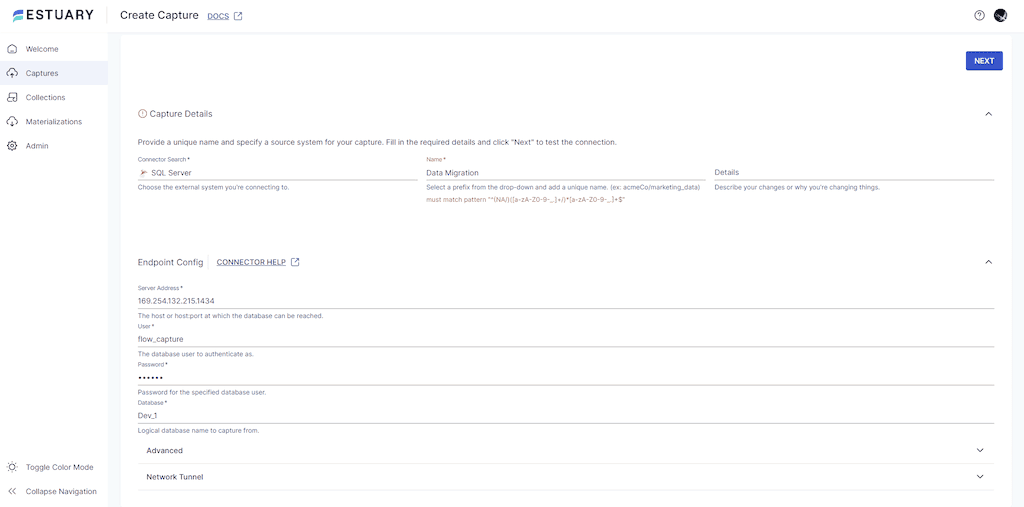

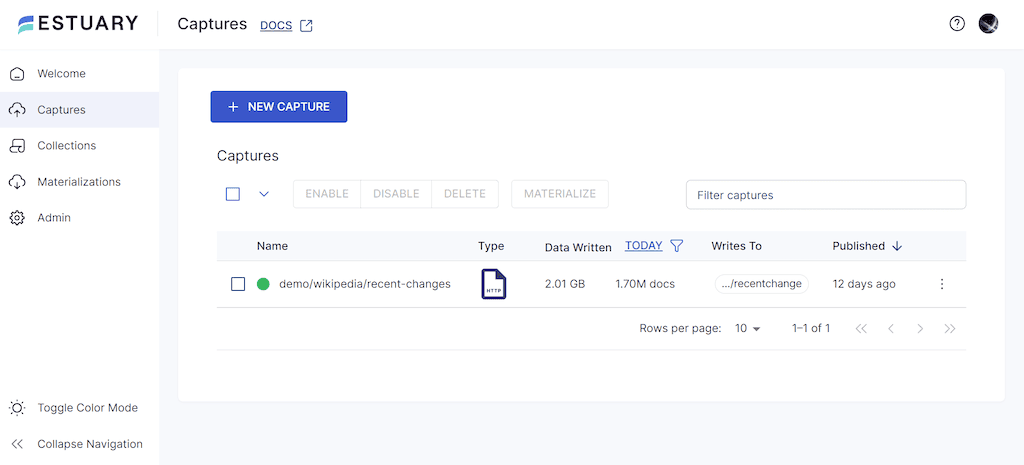

Step 1: Create the SQL Server Capture in Estuary

- Register or log in at dashboard.estuary.dev

- Go to Captures > New Capture

- Select the SQL Server connector

- Fill in the endpoint config:

- Server address: your SQL Server hostname or IP

- Port: 1433 (default)

- Database: your source database name

- User: the dedicated user created above

- Password: the password for that user

- Click Next. Estuary tests the connection and discovers all CDC-enabled tables.

- Review the auto-discovered collections. Deselect any tables you do not need to replicate.

- Click Save and Publish. The initial backfill begins immediately.

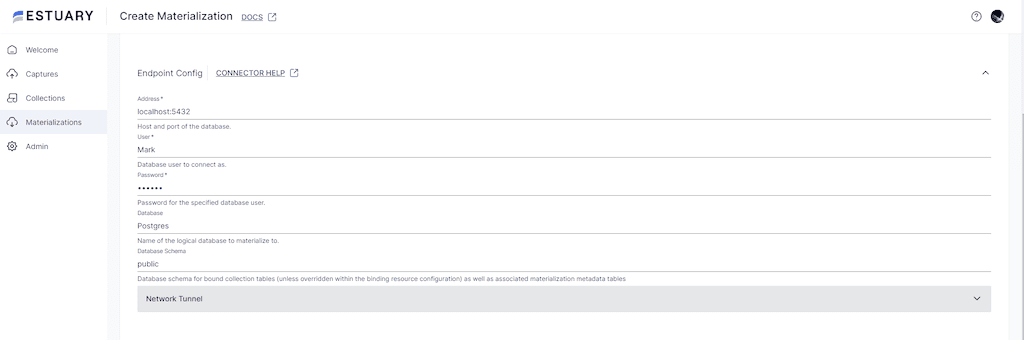

Step 2: Create the PostgreSQL Materialization

- After the capture is published, click Materialize Collections

- Select the PostgreSQL connector

- Fill in the endpoint config:

- Host: your PostgreSQL hostname

- Port: 5432 (default)

- Database: your target database name

- User: a user with CREATE TABLE privileges in the target schema

- Password

- Click Next. Estuary maps captured collections to destination tables automatically.

- Review the collection-to-table mapping. Column names are lowercased to match PostgreSQL conventions.

- Click Save and Publish. Estuary performs the initial full load, then switches to continuous CDC replication.

What Estuary handles automatically that SSIS does not:

- Schema changes (Estuary detects a new CDC capture instance and switches to it seamlessly; note that adding a column to a SQL Server table does not auto-update the CDC change table — a new capture instance must be created first, either manually or via Estuary's Automatic Capture Instance Management advanced option)

- DELETE operations (SSIS batch copies do not replicate deletes)

- Error recovery (failed rows are logged with full context, not silently dropped)

SSIS vs Estuary: Full Technical Comparison

| Capability | SSIS | Estuary |

|---|---|---|

| Initial full load | Yes | Yes |

| Ongoing CDC replication | No | Yes |

| DELETE propagation | No (batch copy only) | Yes |

| Schema change handling | Manual | Semi-automatic (detects new capture instances; source-side instance creation may be manual) |

| Setup time | 2-4 hours for simple tables | Under 15 minutes |

| Maintenance | High (package upgrades, SQL Server Agent monitoring) | Low (fully managed SaaS) |

| Binary/BLOB column support | Requires Script Component | Handled natively |

| Large table performance | Limited by SSIS buffer settings | Optimized for high-throughput streams |

| Cost | SQL Server license required | Free tier available; pay-as-you-go |

| Downtime required | Yes (for cutover) | No (sync live, cut over without downtime) |

| Stored procedure migration | Not applicable (DDL only) | Not applicable (DDL only) |

Pre-Migration Checklist

Use this checklist before starting either method.

Schema audit:

- [ ] Export full DDL from SQL Server:

INFORMATION_SCHEMA.COLUMNSfor all tables - [ ] Map every column type using the type mapping table above

- [ ] Identify all

IDENTITYcolumns and note their current maximum values - [ ] Identify all

ROWVERSION/TIMESTAMPcolumns and decide whether to migrate or drop them - [ ] List all stored procedures, functions, and triggers that will need PL/pgSQL rewrites

- [ ] List all linked server references that need replacement

Data quality:

- [ ] Check for NULL values in columns that will be

NOT NULLin the target - [ ] Check for string columns that exceed the target length limit

- [ ] Run a row count on every source table before migration

- [ ] Create a full backup of the SQL Server database

Infrastructure:

- [ ] PostgreSQL target database and schema created

- [ ] Migration user created with correct permissions

- [ ] Network connectivity tested (port 1433 source, port 5432 target)

- [ ] Monitoring alerts set up on target PostgreSQL instance

Post-migration validation:

- [ ] Row counts match between source and target

- [ ] Spot-check data for 10-20 rows per table

- [ ] All identity sequences reset to current maximum values

- [ ] Application connection strings updated and tested in staging before production cutover

Best Practices for SQL Server to PostgreSQL Migration

- Back up before you start: Create a full SQL Server backup immediately before the migration window. Even for CDC-based migrations, a backup provides a clean rollback point if the target schema needs to be rebuilt.

- Migrate schema first, data second: Generate the PostgreSQL DDL manually or with a tool like

pgloaderin dry-run mode. Review the output before loading any data. Schema errors discovered mid-migration are far more disruptive than ones caught before the first row is copied. - Test with a non-production copy first: Run the full migration against a restored copy of production before executing against live data. This surfaces type mapping errors, NULL constraint violations, and sequence issues before they affect users.

- Plan for stored procedure rewrites separately: T-SQL to PL/pgSQL is not a simple find-and-replace. Budget it as a separate workstream, not a post-migration cleanup task.

- Do not migrate and cut over simultaneously: Use Estuary or another CDC tool to sync changes during the migration window, so the cutover is a connection string change rather than a freeze-and-copy operation.

Conclusion

Migrating from SQL Server to PostgreSQL requires careful preparation across three areas: type mapping, schema translation, and choosing the right replication strategy. The data type mapping table and gotchas section above cover the issues that cause most migration failures.

For one-time batch migrations with an existing SSIS environment, the manual SSIS method works well. For any scenario requiring ongoing sync, zero-downtime cutover, or minimal maintenance overhead, Estuary's CDC-based approach is the faster and more reliable option.

Start your free Estuary migration or explore related migration guides:

FAQs

Can I migrate SQL Server stored procedures to PostgreSQL automatically?

Does PostgreSQL support SQL Server's IDENTITY column behavior?

What happens to SQL Server views during migration?

Is downtime required for SQL Server to PostgreSQL migration?

About the author

Jeffrey is a data engineering professional with over 15 years of experience, helping early-stage data companies scale by combining technical expertise with growth-focused strategies. His writing shares practical insights on data systems and efficient scaling.