Migrating from SQL Server, a conventional relational database system, to Elasticsearch, the contemporary distributed search and analytics platform, brings several benefits. This migration can enhance your organization’s capabilities for full-text search, real-time data analysis, and logging and monitoring.

In this tutorial, we’ll explore the details and methods of SQL Server to Elasticsearch migration. We will also look at the features offered, the benefits associated with the methods of integration, and which method would best suit your needs. So, let’s dive in!

SQL Server Overview

SQL Server, developed by Microsoft, is a relational database management system designed to store and retrieve data as requested by other software applications. Among the impressive features of SQL Server is its ability to seamlessly integrate with other Microsoft products and services, including Azure, Visual Studio, and Power BI. This integration helps achieve better collaboration and advanced analytics and data visualization with SQL Server.

Some key features of SQL Server include:

- SQL Server can query across your entire data estate without moving or duplicating data, allowing you to gain insights directly from the existing data storage.

- SQL Server offers the capability to combine data from almost any source through its extensive connector library and advanced transformations available in SSAS (SQL Server Analytics Services) tabular models.

- SQL Server provides functions such as encrypting sensitive data, performing complex calculations on encrypted data, and enabling tailored data access based on role-specific row filtering. These functions are essential for enhancing data security, optimizing processing, and ensuring controlled access within SQL Server.

- SQL Server monitors anomalous activity and automatically notifies users about security flaws and configuration errors.

What Is Elasticsearch?

Elasticsearch is a distributed search and analytics engine built on top of Apache Lucene. It serves as a central repository at the core of the Elastic Stack, guaranteeing blazing-fast searches, precisely calibrated relevance, and seamless scalability for powerful analytics.

You can easily integrate Elasticsearch with a wide range of data sources, visualization tools, and frameworks to create detailed data ingestion, analysis, and visualization workflows. This integration is often achieved with the ELK stack, which includes Elasticsearch, Logstash, and Kibana.

Some key features of Elasticsearch include:

- Elasticsearch offers a comprehensive Query DSL (Domain-Specific Language) based on JSON for defining searches. This enables complex search capabilities across distributed environments in real-time.

- The Elasticsearch architecture is highly available and horizontally scalable. If an issue arises, Elasticsearch determines whether one of the nodes is malfunctioning and then redistributes the data to ensure continuous availability without data loss.

- Elasticsearch is a document-oriented search engine that stores complex real-world entities as structured JSON documents and, by default, indexes all fields. This enhances search performance, making it highly effective for full-text search applications.

- Elasticsearch enhances performance with intelligent caching. It stores frequently executed queries as filters. Elasticsearch will retrieve results faster from the cache for any subsequent request involving these filters for faster query performance.

How to Load Data From SQL Server to Elasticsearch

Let’s look into the different methods of migrating data from SQL Server to Elasticsearch.

- Method 1: Using Estuary Flow to connect SQL Server to Elasticsearch

- Method 2: Using SSIS to migrate data from SQL Server into Elasticsearch

Method 1: Using Estuary Flow for SQL Server to Elasticsearch Integration

The first method involves using Estuary Flow, a no-code, modern migration tool, which has multiple built-in connectors to facilitate real-time data migration between different platforms. This section details the steps on how to use Estuary Flow to sync data from SQL Server to Elasticsearch.

Prerequisites

Step 1: Configure SQL Server as the Source

- Register for a new Estuary Flow account or log in to your existing one.

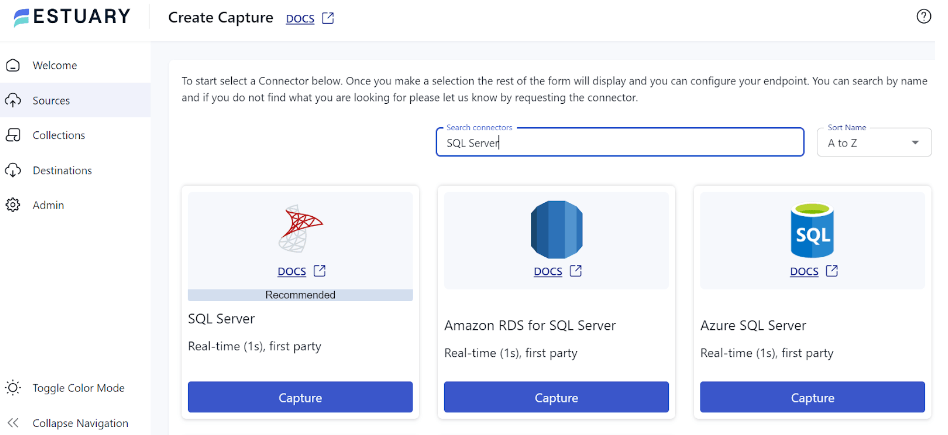

- Select the Sources option from the left navigation pane of the dashboard.

- Click the + NEW CAPTURE button on the Sources page.

- Type SQL Server in the Search connectors box and click the connector’s Capture button when it appears in the search results.

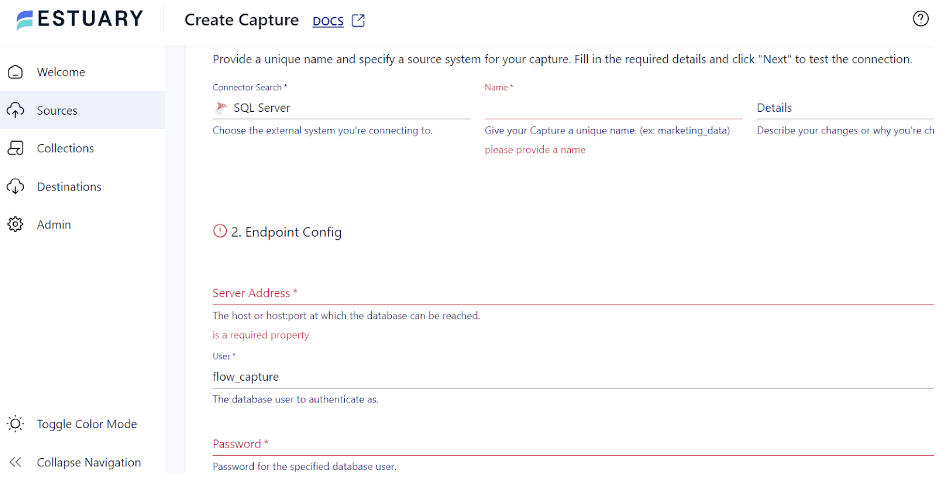

- On the SQL Server connector configuration page, enter the required details, such as Name, Server Address, User, Password, and Database.

- Click NEXT > SAVE AND PUBLISH to finish configuring the source. This CDC connector will continuously capture updates in your SQL Server database into one or more Flow collections.

Step 2: Configure Elasticsearch as the Destination

- After configuring the source, the next step is to setup the destination end of the integration pipeline. Click MATERIALIZE COLLECTIONS in the pop-up window that appears after a successful capture.

You can also select the Destinations option from the left-hand pane of the dashboard.

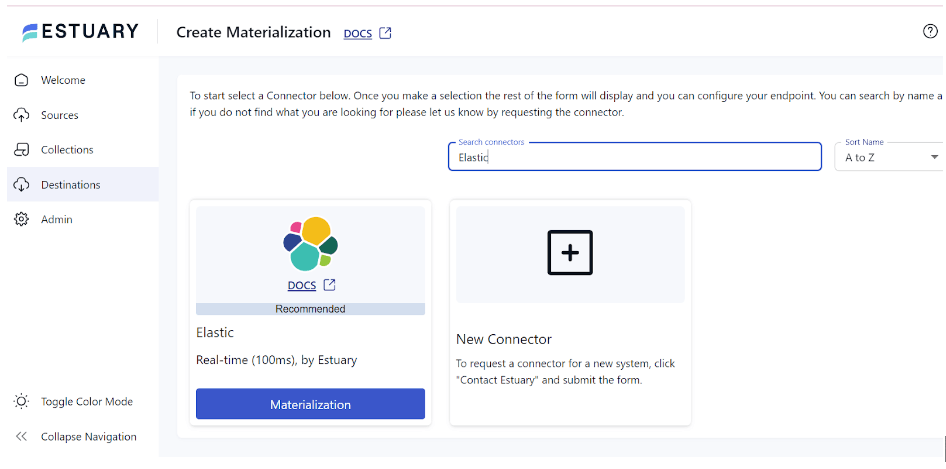

- On the Destinations page, click the + NEW MATERIALIZATION button.

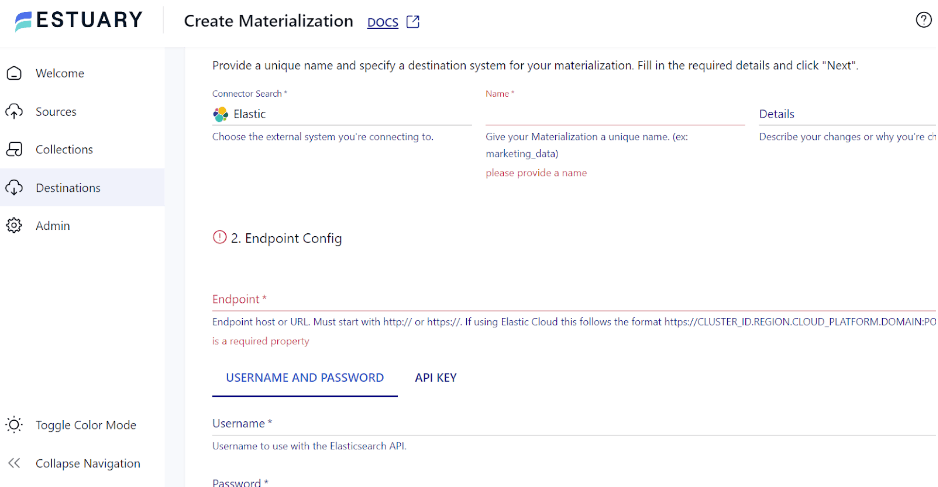

- Next, type Elastic in the Search connectors box, and click the Materialization button of the connector in the search results.

- On the Create Materialization page, enter the necessary information, including Name, Endpoint, Username, and Password.

- To manually link a capture to your materialization, use the SOURCE FROM CAPTURE option in the Source Collections section.

- Finally, click NEXT > SAVE AND PUBLISH to complete the configuration process. The connector will materialize Flow collections of your SQL Server data into indices in an Elasticsearch cluster.

To gain a better understanding of Estuary Flow, refer to the following documentation:

Benefits of Estuary Flow

- Wide Range of Connectors: Estuary Flow offers 200+ pre-built connectors for effortless configuration. You can leverage these connectors to merge data from several sources to a destination without writing a single line of code.

- Scalability: It is designed to handle large data flows and supports up to 7 GB/s.

- Real-Time Data Processing: Estuary Flow supports continuous, real-time data transfer from SQL Server to Elasticsearch. This helps ensure that the most recent data is being used by downstream apps, enabling real-time decision-making.

- Change Data Capture: It uses complex log-based Change Data Capture (CDC) algorithms to gather fine-grained data changes. This activity ensures data integrity and reduces delays.

Ready to elevate your data capabilities? Start your SQL Server to Elasticsearch migration with Estuary Flow for real-time, seamless integration. Get Started for Free or Contact Us to learn more!

Method 2: Using SSIS to Migrate Data From SQL Server to Elasticsearch

Microsoft offers SQL Server Integration Services (SSIS), a potent toolkit for data integration and transformation. It allows programmers and data experts to plan, create, implement, and oversee intricate workflow and data integration solutions.

Before you initiate the steps for migration of data from SQL Server to Elasticsearch using SSIS, ensure the following prerequisites are in place.

- Install and configure SSIS in your SQL Server environment.

- Ensure access to SQL Server Management Studio (SSMS) to create and manage SSIS packages.

- Set up and configure Elasticsearch to receive the migrated data.

Step 1: Import Data from SQL Server

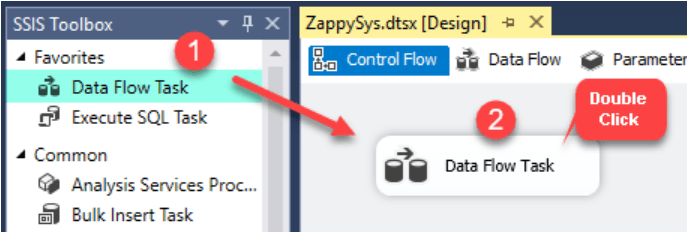

- Create a new SSIS package and drag a Data Flow Task from the SSIS Toolbox into the Control Flow.

- After opening the Data Flow Task, add OLE DB Source.

- Configure the OLE DB Source to pull information from a table. For example, the Products table in the Northwind database.

- Select the columns you need. For this guide, consider ProductName and ProductID.

Step 2: Convert Data into JSON Documents

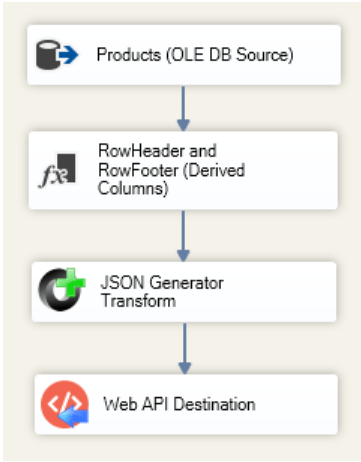

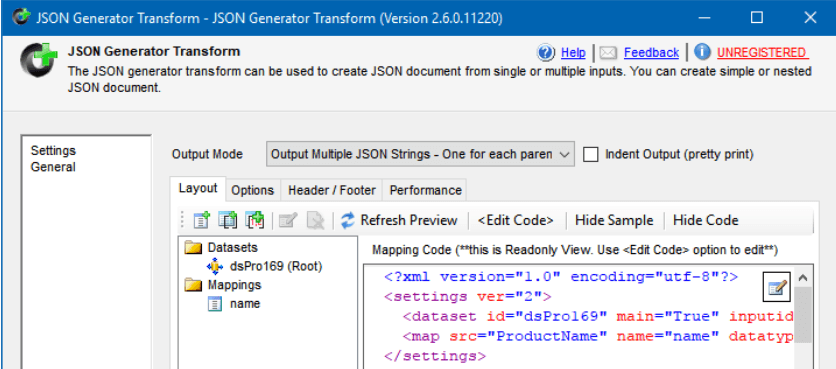

Use JSON Generator Transform to prepare the data before adding it to Elasticsearch. It will assist in creating JSON documents from the SQL table rows.

Step 3: Create Index Data for the Elasticsearch Bulk API

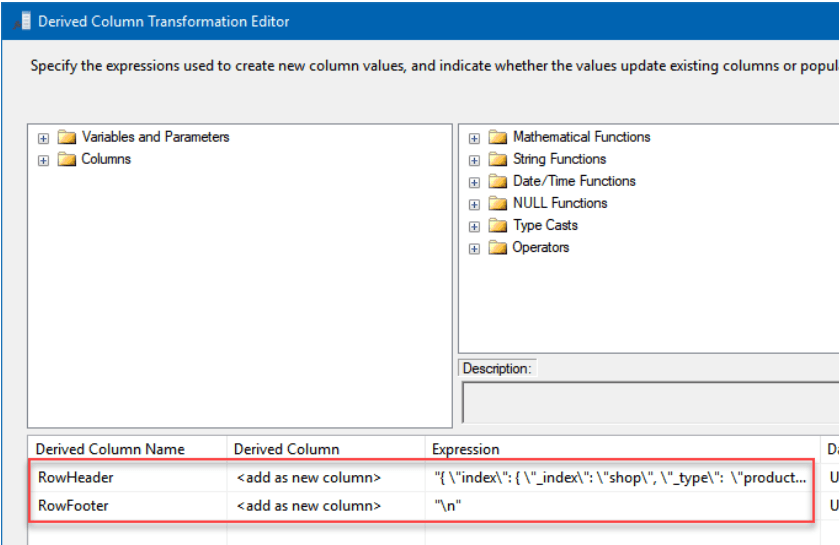

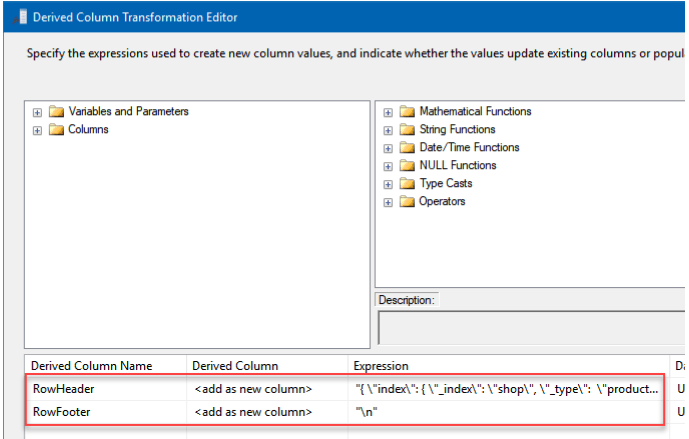

- After closing the window, drag the Derived Column component from the SSIS Toolbox.

- Create two additional columns and name them RowFooter and RowHeader.

- Provide the columns with values in the Expression column:

plaintextRowHeader:1 | "{ \"index\": { \"_index\": \"shop\", \"_type\": \"products\", \"_id\" : \"" + (DT_WSTR,100)ProductID + "\" } }\n" |

plaintextRowFooter: "\n"- Next, drag the JSON Generator Transform from the SSIS toolbox and open it.

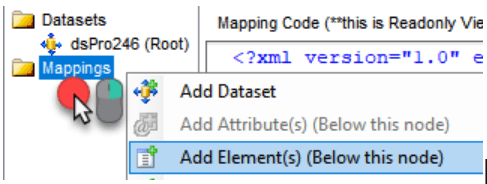

- Right-click on Mappings, then choose Add Element(s) (Below this node).

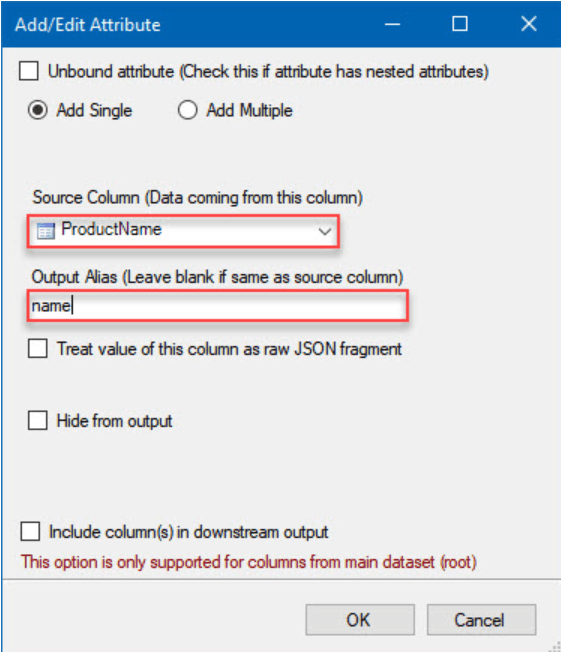

- Select ProductName in the Source Column and name as the Output Alias in the dialog box.

- Ensure your view is similar to this.

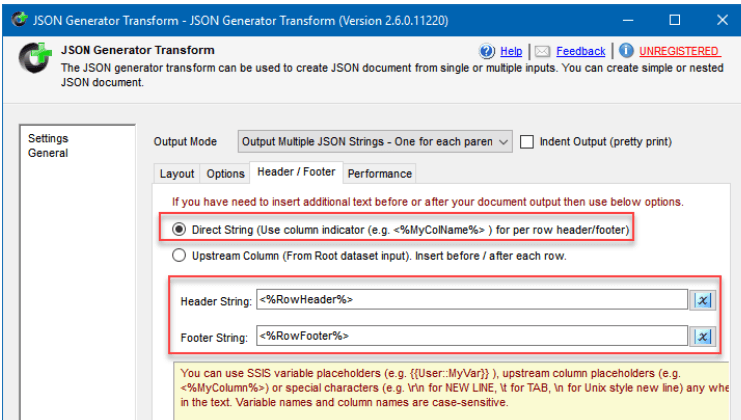

- Next, select the Header / Footer tab.

- Choose the Direct String option.

- Input <%RowHeader%> in Header String

- Input <%RowFooter%> in Footer String.

- Then, close the window.

Step 4: Use JSON String to Update the Bulk API Call’s Index Data

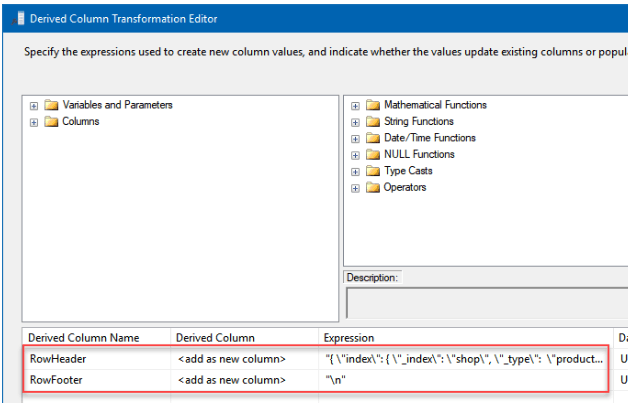

- From the steps mentioned for Create Index Data for the Elasticsearch Bulk API, complete all the steps except for step 2. Instead, use the expressions below for RowHeader and RowFooter.

- Configure the Derived Column to add a prefix. In the Expression column, provide the header and footer to the JSON.

RowHeader:

plaintext"{ \"update\": { \"_index\": \"shop\", \"_id\" : \"" + (DT_WSTR,100)ProductID + "\" } }\n{ \"doc\" : "RowFooter: "}\n"

Step 5: JSON String for Index Data Deletion for the Bulk API Call

- Follow the first two steps in Step 3: Create Index Data for the Elasticsearch Bulk API.

- For the second step in Step 3, use the expression below for RowHeader.

- Configure derived columns to add a prefix and the header and footer to a JSON.

- Provide them values in the Expression column.

RowHeader:

plaintext"delete\": { \"_index\": \"shop\", \"_id\" : \"" + (DT_WSTR,100)ProductID + "\" } }\n"Step 6: Integrate SQL Server and Elasticsearch

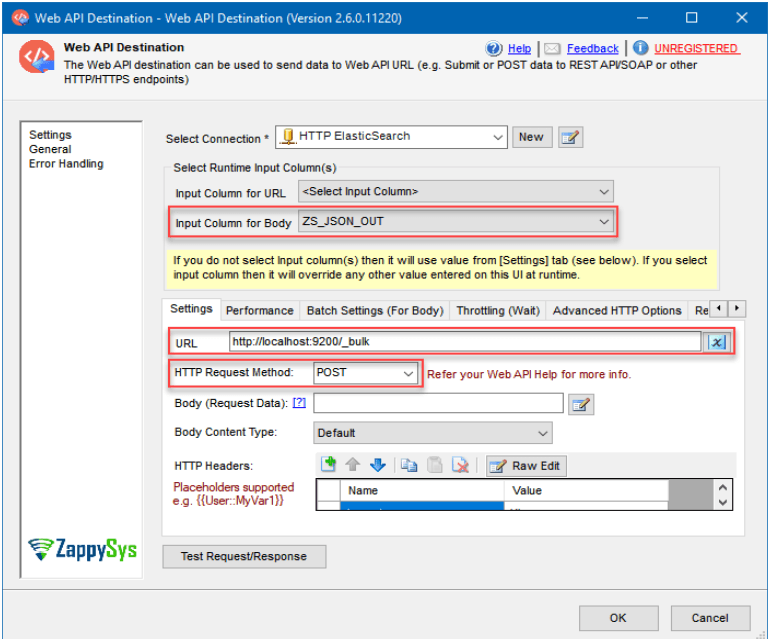

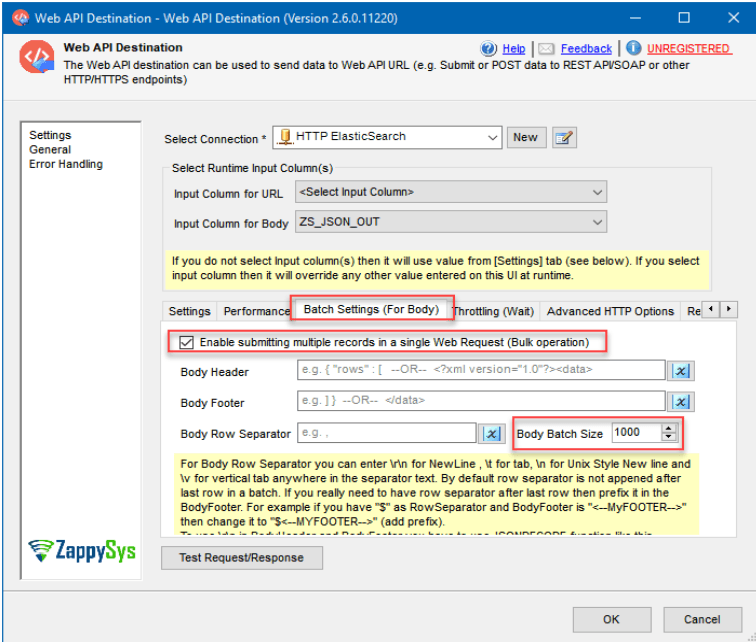

- Drag the Web API Destination SSIS into the Data Flow for editing.

- Press <New ZS-HTTP Connection> in Select Connection.

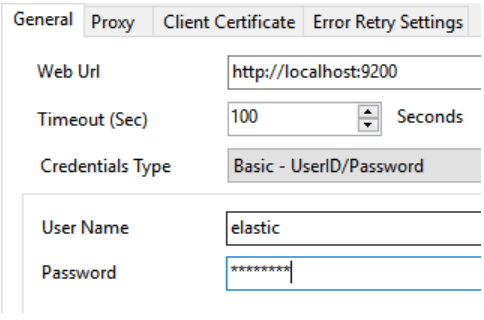

- When the HTTP Connection Manager window opens, configure the connection to your Elasticsearch instance:

- Configure a Web Url that points to your Elasticsearch instance.

- Select Basic - UserID/Password under Credentials Type.

- Then, set your password and username.

- Close the configuration window and set up a Web API Destination.

- Here are the steps for importing those rows into Elasticsearch:

- Set the Input Column for Body to ZS_JSON_OUT for Create and Update Index Data and to RowHeader [Derived Column] for Delete Index Data.

- Set the URL to http://localhost:9200/_bulk.

- Set the HTTP Request Method as POST.

- In the Batch settings (For Body) tab,

- Enable batch submission.

- Set the Body Batch Size, let’s say, to 1000. Ensure this is an even number to avoid any issues.

By following these steps, you can successfully load data from SQL Server to Elasticsearch.

Limitations of Migrating Data from SQL Server to Elasticsearch with SSIS

- Data Transformation: SSIS may not support all data transformations necessary to correctly map and prepare SQL Server data for ingestion into Elasticsearch. Complex transformations could require additional tools or custom coding.

- Data Types: Compared to SQL Server, Elasticsearch supports different data formats. Aligning the data types between the two systems can be difficult, and some data types might not have exact equivalents in Elasticsearch, leading to data loss.

- Performance: The amount of data being transferred and the network latency between the two systems significantly impact the performance of data migration from SQL Server to Elasticsearch. Large datasets could impact performance and must be optimized.

Conclusion

For organizations looking to enhance their data storage and search capabilities, switching from SQL Server to Elasticsearch is the right choice. Elasticsearch offers superior scalability, flexibility, and real-time search capabilities to handle large-scale data ingestion, storage, and analysis.

To integrate SQL Server and Elasticsearch, you have two options: SSIS or Estuary Flow. However, the limitations associated with using SSIS include a lack of real-time data integration, effort-intensive, truncation-related errors, and authentication-related errors.

Estuary Flow can help overcome drawbacks associated with manual methods of data migration. With handy features like an intuitive interface, ready-to-use connectors, CDC capabilities, and transformation support, creating and running your ETL pipeline will only take a few clicks.

Looking to migrate data from multiple sources to your data destination without any hassle? Estuary Flow is all you’ll need. Log in or register to get started!

FAQs

How do I design effective data mapping in Elasticsearch?

Select data types in Elasticsearch that closely match the datatypes in your SQL Server tables. Utilize Elasticsearch’s nested objects and arrays to represent hierarchical relationships within SQL Server data effectively.

How does the performance of Elasticsearch compare to SQL Server for typical use cases?

Elasticsearch is designed to execute searches and retrievals quickly, particularly for use cases involving full-text search and analytics. Performance comparisons can change based on query methods, data amount, and use cases.

About the author

Rob has worked extensively in marketing and product marketing on database, data integration, API management, and application integration technologies at WS02, Firebolt, Imply, GridGain, Axway, Informatica, and TIBCO.