Imagine that you’re the founder of a successful eCommerce platform. You've rocked the charts on your home turf and now you're all hyped up to take on the world. But reality hits you square in the face. How can you ensure that a customer in Asia gets the same quick access to product information as a customer in North America? Let's not even get started on discrepancies in product availability and pricing.

By replicating Snowflake databases, you can take your eCommerce business on a world tour with confidence. Your platform will maintain its data integrity, dish out amazing user experiences, and smoothly navigate the tricky waters of data compliance.

However, while replication might sound simple, copying data between different regions and accounts has challenges lurking beneath the surface. Missteps during setup can compromise data consistency and integrity.

This guide explores these complexities and provides the perfect blueprint. We'll walk you through the steps to master Snowflake replication and get the most out of it.

What Is Snowflake?

Established in 2012, Snowflake is a Software-as-a-Service solution that provides a unified platform for different data needs, including data warehousing, data lakes, data engineering, and data science. It offers features like:

- Data cloning

- Data sharing

- Compatibility with third-party tools

- Dynamic scaling of computing power

- Separation of storage and computing

Snowflake’s design revolves around 3 primary elements that form the backbone of its cloud data platform:

- Cloud services: Using ANSI SQL, Snowflake enhances data optimization and infrastructure management. It takes charge of data security and encryption and holds certifications like PCI DSS and HIPAA. This segment deals with:

- Authentication

- Access control

- Query optimization

- Metadata management

- Infrastructure management

- Authentication

- Query processing: Snowflake operates using virtual cloud data warehouses to process data requests. Every virtual warehouse functions as a separate cluster, so they don't interfere with each other's performance.

- Database storage: This is where the structured and semi-structured data is stored. Snowflake autonomously oversees the entire data storage procedure and takes care of aspects like data organization, compression, and statistics.

A Quick Overview Of Database Replication In Snowflake

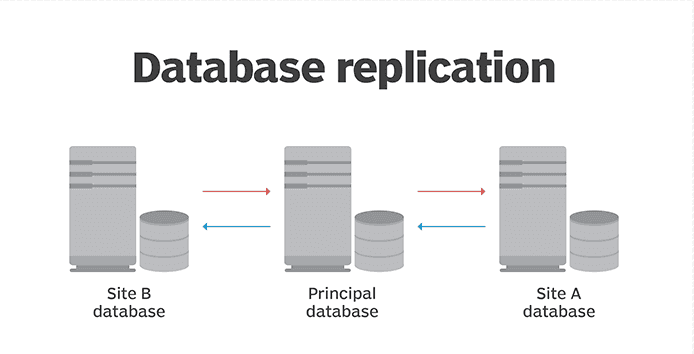

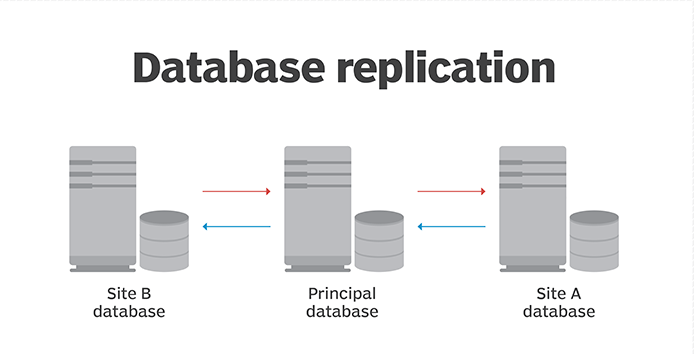

Snowflake’s database replication provides smooth integration of database objects and data across multiple accounts within a single organization – connecting various regions and different cloud platforms.

In the replication mechanism, the source account initiates the process and the primary account acts as the pivotal hub for data storage. The replication schedule is controlled to maintain data consistency in the transient database.

When a database is designated for replication, it’s recognized as the ‘primary’ database where all DML/DDL operations transpire. This primary database takes the lead in the replication system.

Any database can take the primary role, but its main function is its replication capability to secondary databases. These secondary databases, located in different regions or cloud platforms, function as read-only versions that constantly receive updates from the primary.

Differences Between Account & Database Replication

While both account and database replication are important to Snowflake’s data management, they target different object types and carry unique attributes in data synchronization. Here’s a breakdown of their unique features and processes:

Account Object Replication

While only databases are subject to database replication, account replication covers a variety of object types within an account. A detailed list of account objects can be found in this Replicated Objects section.

Access Control

Privileges assigned to database objects do not transfer to a secondary database. This is true for both current and forthcoming object privilege grants (meaning future allocations). However, through account replication, these privilege grants can be replicated.

Parameters

Account parameters aren’t replicated via database replication but through account replication. Specific object parameters set at the schema or object level, like DATA_RETENTION_TIME_IN_DAYS, DEFAULT_DDL_COLLATION, and others, are replicated if explicitly set using CREATE or ALTER commands.

While parameters set on primary database objects overwrite those on secondary database objects, database-level parameters in secondary databases remain unchanged after replication.

Note: PIPE objects aren’t replicated, but their parameters like PIPE_EXECUTION_PAUSED are if set at the schema level.

How To Implement Database Replication In Snowflake

If you’re looking to replicate your databases across different Snowflake accounts, you’ll need to use Snowflake configurations and SQL commands. It works, and it’s great to have this replication mechanism. However, there is also a simpler way to replicate databases and integrate with various data sources - without having to worry about maintenance, consistency, technical expertise, etc.

No-code SaaS alternatives, like Estuary, provide fully managed solutions for data integration and scaling your data infrastructure. But, before we dive into this alternative approach, let’s look at how you can replicate databases using Snowflake’s account replication feature.

Database replication in Snowflake lets you synchronize database objects and data between Snowflake accounts. This can be especially useful for scenarios like:

- Disaster recovery

- Supported platforms for replication

- Data consolidation from multiple accounts

- Distributing data across different geographical locations

Snowflake's replication capability is available across major cloud platforms including Amazon Web Services (AWS), Google Cloud Platform (GCP), and Microsoft Azure.

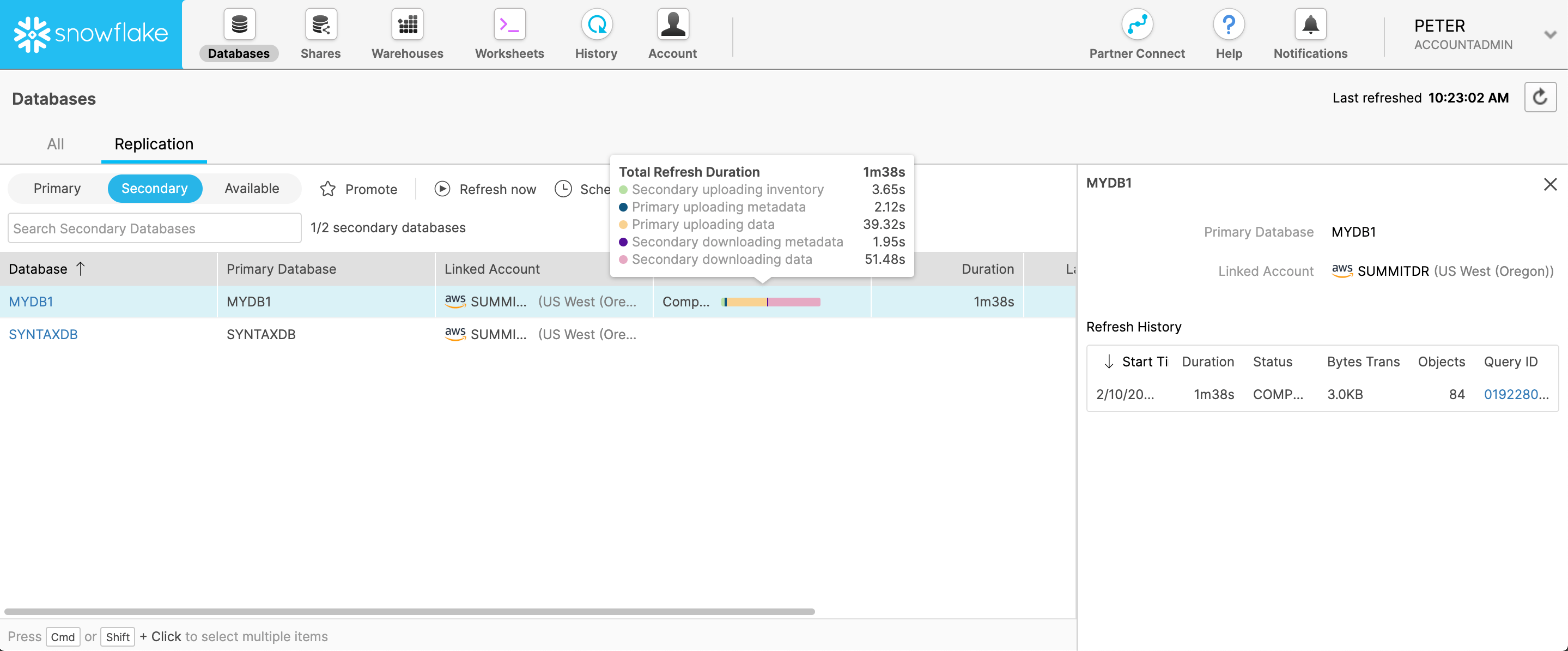

Snowflake offers web interfaces in both Snowsight and Classic Console to manage database replication and failover/failback operations. Familiarity with these interfaces will help when reviewing the detailed steps below. So let’s take a quick look at these interfaces:

- Classic Console: The original Snowflake web interface provides options to enable replication, manage refresh, and promote/demote databases through the Databases > Replication section.

- Snowsight: The newer web interface where you can enable replication on a local database to make it a primary database. You can also manage secondary databases, monitor refresh status, and promote a secondary to become the new primary.

Here’s the step-by-step guide for setting up and managing database replication using SQL, CLI, and Snowflake’s web interfaces.

Steps For Replicating Database In Snowflake

Let’s now explore how to configure and achieve database replication across Snowflake accounts and regions:

Prerequisites

- The source and target accounts should belong to the same Snowflake organization

- Replication must be enabled at the organization and account levels by the ‘ORGADMIN’

- The user managing replication must have the ‘ACCOUNTADMIN’ role in each account

Step 1: Promoting A Database To A Primary Database

Use the following SQL command to designate a database as the primary and allow replicas to be created:

plaintextALTER DATABASE mydb ENABLE REPLICATION

TO ACCOUNTS myorg.acct1, myorg.acct2, myorg.acct3;This promotes the database to become the source primary database. You can also enable this through Snowsight.

Step 2: Configuring Replication And Failover Via Replicas

Next, you can set up failover through a replica of the primary database so that a secondary database can be promoted to take over as the new primary. Use the following statement to enable failover:

plaintextALTER DATABASE mydb ENABLE FAILOVER

TO ACCOUNTS myorg.acct1, myorg.acct2, myorg.acct3;The failover can also be configured in Snowsight or Classic Console.

Step 3: Creating Secondary Databases

Run the following SQL command in each target account to create read-only secondary databases:

plaintextCREATE DATABASE mydb AS REPLICA OF myorg.source_acct.mydb;Make sure to give the secondary database the same name as the primary. You can manage secondary databases in Snowsight after creation.

Step 4: Refreshing Secondary Databases

To propagate changes from the primary to secondaries, issue a refresh on each secondary:

plaintextALTER DATABASE mydb REFRESH;Configure scheduled refreshes based on your RPO requirements. Use Snowsight or Classic Console to monitor the refresh status.

Step 5: Promoting A Secondary To Become Primary

If the primary database becomes unavailable, promote a secondary to take over as the new primary:

plaintextALTER DATABASE mydb PROMOTE REPLICA;This can also be initiated through Snowsight or Classic Console. Make sure to reconfigure the replication to the new primary.

Step 6: Monitoring Replication Status

Use Information Schema views like DATABASE_REFRESH_PROGRESS to monitor the status and history of replication operations across your accounts.

Optimizing Your Database Replication Workflow

While these steps provide a comprehensive guide to replicating Snowflake databases, additional pointers can further improve and streamline your overall database replication workflow.

- Legacy account locator: While the older snowflake_region.account_locator format is still functional for replication, its future use is discouraged as it may be phased out.

- Setting the statement timeout: Large-scale initial replications might exceed the default 2-day timeout so consider adjusting the ‘STATEMENT_TIMEOUT_IN_SECONDS’ parameter:

plaintextALTER SESSION SET STATEMENT_TIMEOUT_IN_SECONDS = 604800;- Monitoring refresh progress: Stay informed about the refresh status via the ‘DATABASE_REFRESH_PROGRESS’ function. You can also tap into the Snowflake Information Schema for a broader history of replication activities.

- Comparing Primary and Secondary Databases: Use the ‘HASH_AGG’ function to maintain data consistency across your primary and secondary databases. Compare hash values from both databases to detect and address discrepancies.

- Dropping Databases: If you ever need to drop your databases:

- Secondary databases can be dropped at will using the ‘DROP DATABASE’ command.

- Primary databases with linked secondary ones need a prior promotion of a secondary database to primary or require the deletion of all linked secondary databases before deletion.

- Secondary databases can be dropped at will using the ‘DROP DATABASE’ command.

Database Replication Challenges And Limitations

Despite the advantages of Snowflake's replication, there are some scenarios and commands that can throw a wrench in the works.

Database Replication To Accounts On Lower Editions

When promoting a local database to primary in Snowflake, an error message appears if:

- The primary database is on a Business Critical or higher account but some replication-approved accounts have lower editions. The Business Critical Edition is for extremely sensitive data.

- The primary database in a Business Critical or higher account has a signed agreement for storing PHI data per HIPAA and HITRUST CSF, yet some replication-approved accounts don't, regardless of their edition.

This guarantees sensitive data isn’t mistakenly replicated to lower editions. However, administrators can use the IGNORE EDITION CHECK clause during the ALTER DATABASE … ENABLE REPLICATION TO ACCOUNTS command to bypass this. With this set, replication can take place to any Snowflake edition.

Replication Restrictions

You can’t replicate databases that come from shares. It’s a set rule to maintain data consistency and structure. Another important point to remember is about refreshing primary databases. If yours contains dynamic tables, event tables, or external tables, then the refresh won’t work. These table types just don’t play well with the refresh operation.

Command Limitations

When using the ‘CREATE DATABASE … AS REPLICA’ command in Snowflake, there are a few things to be aware of. This command is incompatible with the ‘WITH TAG’ clause. The major reason for this limitation is that secondary (replica) databases are inherently read-only.

However, if you've previously used the WITH TAG clause with your primary database and now want to create a replica, follow these steps:

- Remove the ‘WITH TAG’ clause from your primary database.

- Before creating the replica, execute the ‘GET_DDL’ function in Snowflake to check whether your database uses the ‘WITH TAG’ clause.

- If a tag is present, you'll see the ‘ALTER DATABASE … SET TAG’ instruction in the resulting output.

A No-Code Approach To Database Replication Through Estuary

Estuary is a reliable, no-code platform for efficient database replication. It creates a transparent connection between data sources and analysis platforms to ensure a steady flow of your data. Flow’s change data capture capabilities allow you to minimize data loads, which enhances overall system performance.

Regardless of the size of your database, Flow dynamically scales to cater to your data needs. It offers unparalleled flexibility and accommodates databases from the most compact to expansive ones exceeding 10TB.

Here are some more advantages of adopting Flow for your database replication:

- Efficiency: Flow promises quick data movement while maintaining its trustworthiness and ensures that your data is accessible on demand.

- Precision: Employing exactly-once semantics, Flow guarantees that every data transaction gets processed once and in the correct sequence.

- Schema inference: Estuary transforms unstructured data into structured formats, an essential capability for managing various data types.

- Data modification: Apart from data movement, it also offers on-the-spot data adjustments using streaming SQL and TypeScript to increase your data’s versatility.

- Event-focused integration: Flow is structured around an event-driven framework. This method permits real-time data alterations and allows prompt modifications when needed.

- Data visibility and protection: The configuration of user roles and privileges limits data access to authorized personnel. It also employs advanced encryption to improve data safety.

Conclusion

By learning the ins and outs of Snowflake replication, you ensure that your organization's data remains safe, accessible, and consistent – a rock-solid foundation for growth. From scalability that adapts with you to being ready for any unexpected data hiccups – that's what efficient Snowflake replication brings to the table.

Estuary stands out as a tool that can rapidly replicate databases, no matter their size or intricacy. Its connector-based approach simplifies the process of gathering data from its source and sending it to its intended destination. Added features like configurable roles and encryption elevate security and access measures.

Get started on your journey towards efficient data integration and replication with Estuary. Sign up for a free Flow account or get in touch with our support team.

About the author

With over 15 years in data engineering, a seasoned expert in driving growth for early-stage data companies, focusing on strategies that attract customers and users. Extensive writing provides insights to help companies scale efficiently and effectively in an evolving data landscape.