Migrating from MySQL to PostgreSQL is usually straightforward until you hit real-world issues like data type differences, collation/encoding mismatches, large tables, and cutover downtime. This guide gives you a complete, step-by-step MySQL to PostgreSQL migration runbook using pgloader (a free tool), including pre-migration checks, validation queries, and troubleshooting.

If you need minimal downtime or want to keep MySQL and PostgreSQL in sync during the cutover, this guide also covers a continuous change data capture (CDC) approach using Estuary, plus a few alternatives for smaller databases.

Key takeaways

- Fastest one-time migration (free): Use pgloader when you can accept a short maintenance window.

- Minimal downtime migration: Use CDC + cutover when you need to keep data flowing during the transition.

- Prevent common failures: Follow the pre-migration checklist (types, encodings, zero dates, identifier limits).

- Validate before cutover: Use the included row-count and spot-check queries to confirm correctness.

- Plan rollback: Have a cutover checklist and rollback plan before switching production traffic.

What is MySQL?

MySQL is a widely used relational database management system (RDBMS) based on SQL. Owned by Oracle, it's a critical component in many technology stacks, enabling teams to build and maintain data-driven services and applications.

Advantages of Using MySQL

- Open-Source and Free: MySQL is open-source, offering enterprise-level support and immediate deployment without cost.

- Cross-Platform Support: It supports popular operating systems like Windows, Linux, and Solaris.

- Scalability: MySQL can handle more than 50 million rows and scale to manage large datasets.

- Security: It features a robust data security layer, making it secure for handling sensitive information.

Disadvantages of MySQL

- Performance at Scale: MySQL’s efficiency diminishes as data scales, particularly in write-heavy or complex query scenarios.

- Limited Tooling: Compared to other databases, MySQL has fewer advanced debugging and development tools.

What is PostgreSQL (Postgres)?

PostgreSQL, also known as Postgres, is an open-source, enterprise-class object-relational database management system (ORDBMS). It supports both SQL (relational) and JSON (non-relational) querying, offering greater extensibility and adaptability than MySQL.

Advantages of Using PostgreSQL

- Performance: PostgreSQL handles complex queries and large-scale data with greater efficiency.

- Extensibility: It supports user-defined types and various procedural languages.

- ACID Compliance: PostgreSQL ensures high reliability and fault tolerance with write-ahead logging.

- Geospatial Capabilities: It supports geographic objects, ideal for location-based services and applications.

Disadvantages of PostgreSQL

- Learning Curve: PostgreSQL can be more challenging to learn and configure compared to MySQL.

- Performance at Smaller Scales: It may be slower for simple, small-scale operations.

Choose the best MySQL to PostgreSQL migration method

Not every “MySQL to PostgreSQL migration” is the same. The right approach depends on how much downtime you can tolerate, how large your database is, and whether you need to keep MySQL and PostgreSQL in sync during the cutover.

Option 1: pgloader (fastest one-time migration, free)

Choose pgloader if:

- You can schedule a maintenance window (minutes to hours, depending on size)

- You want the simplest “run it and migrate” path

- You’re doing a one-time move (not ongoing replication)

Avoid pgloader if you need near-zero downtime or must keep both systems continuously in sync after migration.

Option 2: CDC + cutover (minimal downtime)

Choose CDC + cutover if:

- You need minimal downtime and want continuous changes replicated during migration

- Your database is large and re-running a full migration would be painful

- You want a safer cutover with time for validation while data keeps flowing

This approach typically looks like: initial backfill → continuous changes replicated → validate → switch applications to PostgreSQL. (We’ll show a step-by-step CDC setup later in Method 2.)

Option 3: Dump and restore (small databases only)

A dump/restore approach can work well if:

- The database is small

- You can tolerate downtime

- You don’t need complex type mapping or incremental syncing

Quick decision checklist

Pick pgloader if you want speed + simplicity and can accept downtime.

Pick CDC + cutover if you need minimal downtime and a safer, staged transition.

Pick dump/restore if the database is small and downtime isn’t a concern.

What you need before migrating

Before you start, confirm a few basics. These prerequisites prevent the most common “it worked halfway and then failed” migration scenarios.

Supported versions and assumptions

- You have access to a MySQL database you can read from (and ideally a maintenance window if doing a one-time migration).

- You have a PostgreSQL instance ready (managed Postgres or self-hosted).

- You can open network connectivity from wherever you run the migration (your laptop/VM/CI runner) to both MySQL and PostgreSQL.

Permissions checklist (MySQL + PostgreSQL)

MySQL (source)

- A user that can read schema + data (and read from all databases/schemas you plan to migrate).

- Ability to access metadata (information_schema) so tools can discover tables and types.

PostgreSQL (destination)

- A role that can:

- create database objects (tables, indexes, sequences)

- write data

- create schemas (if you’re migrating multiple schemas)

- A target database created (or permission to create it)

Network and SSL connectivity

- Confirm you can connect to both databases from the machine where you’ll run the migration.

- If either database requires SSL/TLS:

- verify certificates/SSL modes in advance

- test a simple connection before running any migration job

Time, disk, and performance planning

- Downtime window: If you’re doing a one-time migration, decide how long you can pause writes (minutes vs hours).

- Storage: Ensure your Postgres instance has enough disk for full data + indexes (and extra headroom during load).

- Performance: For large tables, plan for:

- parallel loading (workers/concurrency)

- index/constraint timing (often better created after bulk load)

- running the migration from a machine close to the databases (to reduce network bottlenecks)

Method 1: Migrate MySQL to PostgreSQL using pgloader (step-by-step)

pgloader is a free, battle-tested command-line tool for one-time MySQL to PostgreSQL migrations. It connects directly to both databases, creates tables, loads data, and can create indexes and reset sequences. Use pgloader when you can schedule a maintenance window and you want the fastest path to a complete initial migration.

Step 1: Install pgloader

Debian/Ubuntu

plaintext language-bashsudo apt-get update

sudo apt-get install -y pgloader

macOS (Homebrew)

plaintext language-bashbrew install pgloader

Windows (recommended options)

pgloader is easiest to run on Windows using one of these:

- WSL: install Ubuntu in WSL, then install pgloader with

apt-get - Docker: run pgloader from a container (useful if you already use Docker for tooling)

If you are migrating from a Windows machine, WSL is usually the most reliable option.

Step 2: Create the PostgreSQL database and user

Create a dedicated migration user with permissions to create tables and write data.

plaintext language-sqlCREATE USER migrate_user WITH PASSWORD 'strong_password';

CREATE DATABASE target_db OWNER migrate_user;

GRANT ALL PRIVILEGES ON DATABASE target_db TO migrate_user;

If you plan to load into a specific schema (not public), create and grant that too.

Step 3: Prepare MySQL for a consistent migration (recommended)

If this is production data, decide how you’ll handle writes during the migration:

- Simplest: schedule a maintenance window and pause writes during the load

- If you cannot pause writes: use a CDC-based approach (covered later) or accept that a one-time load may miss changes after the migration starts

Also, confirm:

- you can connect from the machine running pgloader to both databases

- you have enough disk and compute on PostgreSQL to absorb the load

- you’ve reviewed the pre-migration checklist (zero dates, encoding, long identifiers)

Step 4: Create a pgloader .load file (recommended)

A .load file makes migrations repeatable and easier to tune.

Create a file like mysql_to_postgres.load:

plaintext language-lispLOAD DATABASE

FROM mysql://MYSQL_USER:MYSQL_PASSWORD@MYSQL_HOST:3306/MYSQL_DB

INTO postgresql://PG_USER:PG_PASSWORD@PG_HOST:5432/PG_DB

WITH include drop,

create tables,

create indexes,

reset sequences,

foreign keys

SET work_mem to '64MB',

maintenance_work_mem to '512MB'

CAST

type tinyint when (= precision 1) to boolean using tinyint-to-boolean,

type datetime to timestamptz

BEFORE LOAD DO

$$ CREATE SCHEMA IF NOT EXISTS public; $$;

Notes:

include dropis convenient for re-runs, but do not use it if you might accidentally drop the wrong target database.- The

CASTsection is where you handle common MySQL patterns likeTINYINT(1)and datetime behavior. - If you hit constraint issues on the first run, you can temporarily remove

foreign keysand add constraints later.

Step 5: Run pgloader (with logging)

Run the migration using the .load file:

plaintext language-bashpgloader mysql_to_postgres.load

For easier debugging, capture output to a log:

plaintext language-bashpgloader mysql_to_postgres.load 2>&1 | tee pgloader.log

If you prefer to run without a .load file, you can use direct connection strings, but a .load file is easier to maintain and tune.

Step 6: Validate the migration (copy/paste checks)

Do not cut over until you validate.

1) Compare table counts

In MySQL:

plaintext language-sqlSELECT COUNT(*) AS tables

FROM information_schema.tables

WHERE table_schema = 'MYSQL_DB';

In PostgreSQL:

plaintext language-sqlSELECT COUNT(*) AS tables

FROM information_schema.tables

WHERE table_schema = 'public';

2) Compare row counts for important tables

In MySQL (example for a table):

plaintext language-sqlSELECT COUNT(*) FROM your_table;

In PostgreSQL:

plaintext language-sqlSELECT COUNT(*) FROM your_table;

Repeat for your largest and most business-critical tables.

3) Spot-check key queries

Run 5 to 10 representative application queries against PostgreSQL and confirm results match expectations.

4) Verify sequences (AUTO_INCREMENT equivalents)

If your tables use sequences or identity columns, confirm inserts do not collide and that IDs increment correctly after the load.

Step 7: Cutover checklist (and rollback plan)

Before switching production traffic:

- Update application connection strings to PostgreSQL

- Run a smoke test (read and write paths)

- Monitor error rates and slow queries

- Keep MySQL available during the initial cutover window as your rollback option

- If you paused writes, ensure they remain paused until the application is fully pointed at PostgreSQL

Rollback plan (simple):

- If critical issues appear, point the application back to MySQL and fix PostgreSQL offline before retrying.

For a comprehensive list of options and further configuration details, refer to the official pgloader documentation or command instructs.

Troubleshooting pgloader (common errors and fixes)

| Symptom / Error | Likely cause | Fix |

|---|---|---|

FATAL error: SSL connection is required / connection fails | SSL/TLS required or wrong SSL mode | Enable SSL in your connection string / .load config. Confirm certificates and allowed TLS versions. Test connectivity with mysql and psql from the same machine running pgloader. |

Access denied for user (MySQL) | Wrong credentials or insufficient privileges | Verify username/password and grant permissions (at minimum read access + metadata access). Use a dedicated migration user. |

permission denied / must be owner of (Postgres) | Target role can’t create tables/indexes or lacks schema permissions | Grant privileges or use a role that can create objects in the target database/schema. |

| Migration fails on date/time columns (e.g., invalid input syntax) | MySQL “zero dates” like 0000-00-00 or invalid date values | Fix at source or CAST those values to NULL/sentinel. Identify affected tables/columns first, then re-run. |

| Strange time shifts after migration | DATETIME vs TIMESTAMP timezone semantics differ | Confirm which columns represent absolute time. Standardize timezone handling and CAST to timestamptz (or keep as timestamp intentionally). Validate with spot checks. |

Errors or missing values around ENUM/SET | Type conversion mismatch | Convert to TEXT initially for simplest migration, or map to a custom type/lookup table. Test on one table first. |

| Duplicate object name / constraint/index creation fails | PostgreSQL identifier limit (63 bytes) causing truncation collisions | Rename long indexes/constraints or disable index creation during load and create them manually with shorter names after. |

| Foreign key errors during load | Child rows loaded before parents or FK constraints enforced too early | Load without foreign keys/constraints first, then add constraints after data load. Ensure parent tables load before child tables if enforcing FKs during load. |

| “Out of memory” / migration stalls on big tables | Single huge transactions, too many workers, large rows | Reduce concurrency/workers, tune batch sizes, load biggest tables separately, create indexes after load. Run pgloader close to the databases to reduce network overhead. |

| Migration is extremely slow | Index creation during load, network bottleneck, insufficient Postgres resources | Disable index creation during load and create after. Increase Postgres CPU/IO, run migration from same region/VPC, tune workers conservatively. |

| Partial migration / need to re-run safely | Re-running drops or conflicts with existing objects | Use a fresh target DB for retries, or use include drop only when you’re sure you’re pointing at the correct target. Keep logs and validate each run. |

Quick tip for faster debugging

Always capture logs when running pgloader:

- run with output redirected to a log file

- keep the log from the failing run and re-run after one change at a time

Method 2: Minimal-downtime migration with CDC + cutover (Estuary)

If you can’t afford a long maintenance window, a one-time migration tool like pgloader may not be enough on its own. A safer production approach is to backfill historical data, keep continuous changes flowing from MySQL to PostgreSQL during the transition, then cut over your application only after you’ve validated that PostgreSQL is correct and up to date.

Estuary is the Right-Time Data Platform, which means you can choose when data moves: sub-second, near real-time, or batch. For migrations, that flexibility matters because you can start with a backfill and then switch to continuous replication while you validate and plan the cutover.

Step-by-Step Guide: Migrating MySQL to PostgreSQL Using Estuary:

Step 1: Sign Up for Free on Estuary

- Start by signing up for a free account on the Estuary platform. For large-scale production needs, you can contact Estuary for an organizational account.

Step 2: Prepare Your MySQL Database

- Log in to the Estuary web app and ensure that your MySQL database meets the prerequisites.

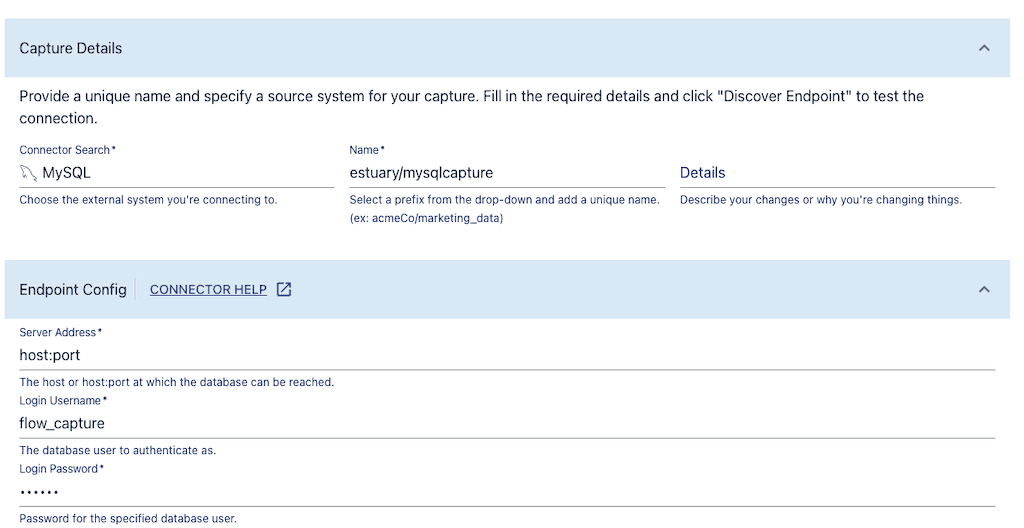

Step 3: Capture MySQL Data in Real-time

- Navigate to the Captures tab and select New Capture.

- Choose the MySQL tile and fill out the required details, including your MySQL server address, database username, and password.

- Select the tables you wish to capture and publish your settings.

Step 4: Materialize to Postgres

- Choose the PostgreSQL tile in the Materialize section.

- Provide the necessary PostgreSQL database credentials, including the database address, username, and password.

- Map the captured MySQL data to new tables in PostgreSQL and publish the materialization.

Estuary will now handle the real-time data replication, ensuring that any new data in MySQL is automatically captured and replicated to PostgreSQL.

For more help with this method, see the Estuary documentation on:

When to choose this over pgloader

Choose CDC + cutover when:

- You need minimal downtime (or near-zero downtime)

- Your database is large, and a full re-run would be expensive

- You want time to validate PostgreSQL while data continues to update

- You need ongoing MySQL to PostgreSQL replication after migration (temporary or permanent)

Method 3: Alternative approaches

pgloader and CDC-based replication cover most real-world migrations, but there are a couple of other approaches worth considering depending on database size, downtime tolerance, and operational constraints.

pg_chameleon (logical replication-style migration)

pg_chameleon is commonly used to replicate MySQL into PostgreSQL using a “load + replay changes” model. Teams typically consider it when they want a replication-style workflow without building their own tooling.

Use it if:

- you prefer an open-source replication approach

- you’re comfortable operating and monitoring another service

- you want more control than a one-shot migration

Skip it if:

- you want the simplest one-time migration (pgloader is usually faster)

- you need a more managed, production cutover workflow (CDC platforms can reduce operational overhead)

Dump and restore (small databases only)

A dump/restore method (like mysqldump → transform → psql) can work well when:

- the database is small

- downtime is acceptable

- schema complexity is low

For anything medium-to-large, dump/restore often becomes slow, hard to validate, and painful to retry.

Conclusion

Database migration and replication are important facets of every business’s data strategy and architecture. As requirements and use cases evolve, it’s more important than ever to have the right type of database.

MySQL and PostgreSQL each have their place and optimal use cases. Whether your goal was to permanently move from one to the other or maintain both side-by-side, we hope this guide has simplified the process for you.

Next steps

If you need minimal-downtime migration or ongoing MySQL to PostgreSQL sync, you can use Estuary to backfill historical data and continuously replicate changes with CDC.

FAQs

Is there a MySQL to PostgreSQL converter?

How do I migrate MySQL to PostgreSQL on Windows?

Can pgloader do incremental replication?

About the author

Jeffrey is a data engineering professional with over 15 years of experience, helping early-stage data companies scale by combining technical expertise with growth-focused strategies. His writing shares practical insights on data systems and efficient scaling.