Data is the lifeblood of many modern applications, and an application’s storage mechanism can change throughout its lifecycle. The storage solution from when the application was first conceived and prototyped may not be suitable after a few years in production. This article will show you how to capture data from MongoDB to PostgreSQL. This can be part of a migration or replication process.

You will also be introduced to MongoDB and PostgreSQL. The key differences between them will be highlighted alongside how you can perform data integration using two broad approaches: the manual Extract Transform Load (ETL) process and no-code data integration platforms that automate the process.

Choose Your Method

⚡ Real-Time Method: Use Estuary

Set up a low-latency, no-code MongoDB ➝ PostgreSQL pipeline in minutes. Ideal for production environments that require continuous syncing and built-in schema handling.

🛠 Manual Method: Use CLI and SQL

Export data from MongoDB and load it into PostgreSQL using mongoexport and the COPY command. Best for small-scale, one-time migrations — but not ideal for growing systems or real-time needs.

What is MongoDB?

MongoDB is a document-oriented database that follows the NoSQL paradigm for storing, managing, and accessing data. Data is stored in JavaScript Object Notation (JSON)-like documents and does not need to follow a strict schema structure, as keys can be added dynamically.

MongoDB’s flexibility means it can store both structured and semi-structured data efficiently while handling high volumes of data with low latency. MongoDB is a cross-platform database, which means that it is available on various operating systems and cloud service providers.

For applications with changing data requirements, especially those where those requirements are not known ahead of time, it can be a good fit to reduce the friction associated with rapid iterations at the data model layer.

What is PostgreSQL?

PostgreSQL is an open-source Relational Database Management System (RDBMS) that is Structured Query Language (SQL) compliant and is known for its proven reliability, data integrity, and vibrant community. It also has a large feature set and supports extensibility as one of its core tenets.

PostgreSQL is a popular choice amongst developers whenever the requirement of the application to be designed has inherent relationships or interactions that have to be modeled to enable the efficient retrieval of records for analytical purposes.

PostgreSQL is Atomicity, Consistency, Isolation, and Durability (ACID) compliant. What that means is that transactions are either processed in full or not at all. PostgreSQL, therefore, avoids partial execution of operations, and data recovery in the case of failure can be more holistic and methodical.

Critical Differences Between MongoDB and PostgreSQL

In this section, you will look at the key differences between MongoDB and PostgreSQL. Some of the most important differences are listed below.

- MongoDB is a document-oriented database, while PostgreSQL is an object-relational database.

- MongoDB stores data in collections of JSON-like documents called Binary JSON (BSON), whereas data in PostgreSQL is stored in tables.

- MongoDB documents in a collection are the equivalent of rows in PostgreSQL.

- In MongoDB, you don’t have to follow a strict schema definition, as not all documents in a collection may have the same keys, whereas in PostgreSQL, the schema definition is strict, as columns in a table are specified at creation.

- MongoDB uses the MongoDB Query Language for interacting with the database, whereas PostgreSQL utilizes SQL.

- MongoDB uses embedded documents, $lookup, $graphLookup, and $unionWith operations to combine records and perform aggregation, whereas PostgreSQL uses JOINs and UNIONs.

MongoDB and PostgreSQL Data Integration

Data stored in a MongoDB database can be moved to a PostgreSQL database in two different scenarios:

- During a one-time database migration.

- As part of an ongoing replication process that treats MongoDB as the source database and PostgreSQL as the destination database.

If the data changes that happen in MongoDB are to be captured in real-time to a PostgreSQL database, a Change Data Capture (CDC) solution may be utilized.

In this section, you will look at two methods of capturing data from MongoDB to PostgreSQL for migration from MongoDB to PostgreSQL.

Method 1: Manual ETL Process

When moving data between dissimilar databases, the process typically involves extracting the data from the source database, transforming it into a form or format that is compatible with the destination database, then loading the transformed data into the destination database.

To capture data from MongoDB to PostgreSQL using the manual ETL approach, you will first export the data using the mongoexport utility, and make sure that the export is in a CSV file format. Next, you will create a table in the PostgreSQL database that mirrors the structure in your export. Finally, you will use the COPY command in PostgreSQL to load (import) the data into PostgreSQL. Below are the steps and relevant commands to achieve this.

Step 1: Extract the data from MongoDB using mongoexport

From a terminal, run the command below. This assumes you have an existing MongoDB collection.

plaintextmongoexport --host localhost --db employeedb --collection employees --type=csv

--out employees.csv --fields name,position,country,specializationThe command above will generate an output CSV file named employees.csv and assumes you have an employeedb database with an employees collection and the specified keys.

Step 2: Create the PostgreSQL table that mirrors the existing structure

The schema of the PostgreSQL table used to receive the exported data should be the same as the structure in the CSV file. Use the SQL statement below to create a table that mirrors the example collection you used above.

plaintextCREATE TABLE employees (

id SERIAL PRIMARY KEY,

name VARCHAR NOT NULL,

position VARCHAR NOT NULL,

country VARCHAR NOT NULL,

specialization VARCHAR NOT NULL

)Step 3: Load the exported data into PostgreSQL using the COPY command

You can now run the command below to load the data into PostgreSQL.

plaintextCOPY employees(name,position,country,specialization)

FROM 'C:employees.csv' DELIMITER ',' CSV HEADER;Pros

- It is easy to perform migration for small data sizes.

- You can use the existing tooling provided by both databases and do not need to depend on external tools.

Cons

- The manual nature of the process introduces room for error.

- For a large migration with multiple collections, the process can get cumbersome very quickly.

- It requires expertise to manage appropriately, especially when the complexity of requirements increases.

Method 2: No Code Data Integration Platforms

No code data integration platforms provide the means to migrate, replicate, or capture data from one database system to another without writing code. They typically automate the schema conversion process so data types are compatible across the databases that they support. They also provide a User Interface (UI) for easy interaction with the databases.

In this section, you will look at how to perform data integration between MongoDB and PostgreSQL using Estuary and Airbyte. To follow along, it is assumed that you have accounts with these service providers, as they all provide a cloud solution.

Estuary

Estuary is a data integration platform that features the ability to set up real-time data pipelines that are reliable, efficient, and scalable. It comes with an intuitive UI that enables end users to perform tasks seamlessly, and it also supports automatic schema conversion between various data sources.

👉 With Estuary, changes captured from MongoDB can be replicated into PostgreSQL with sub-100ms latency, making it a reliable choice for mission-critical, real-time applications.

It comes in two flavors, a self-hosted version for organizations that want to keep their data on-premises and a fully managed cloud offering that does not require any installation, configuration, or maintenance. You will use the cloud version in this article to capture data from MongoDB to PostgreSQL.

The steps to achieve this are given below.

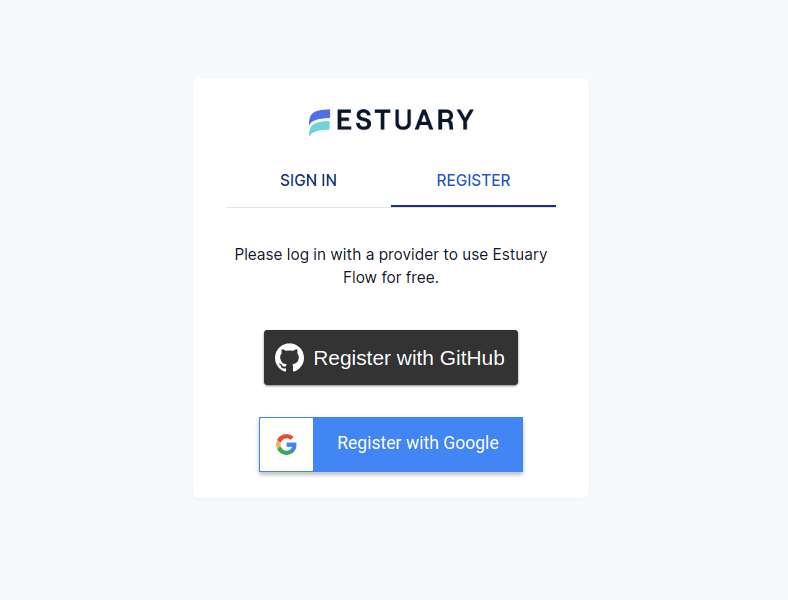

- Sign up for Estuary or log in if you already have an account.

- Make sure your existing MongoDB and PostgreSQL databases are ready to use with Estuary. See the prerequisites for MongoDB and PostgreSQL.

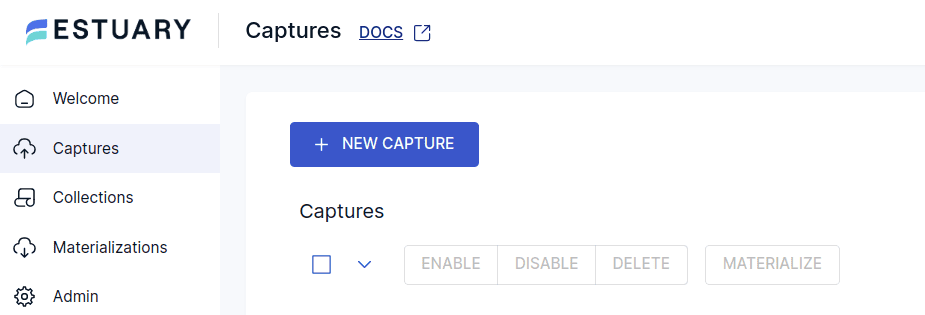

- Next, you will need to create a data pipeline that connects a source to a destination. Data pipelines are called “Data Flow” in Estuary speak. Sources are called “Captures” while destinations are called “Materializations.”

- Click on Captures on the left pane menu, then click on the New capture button.

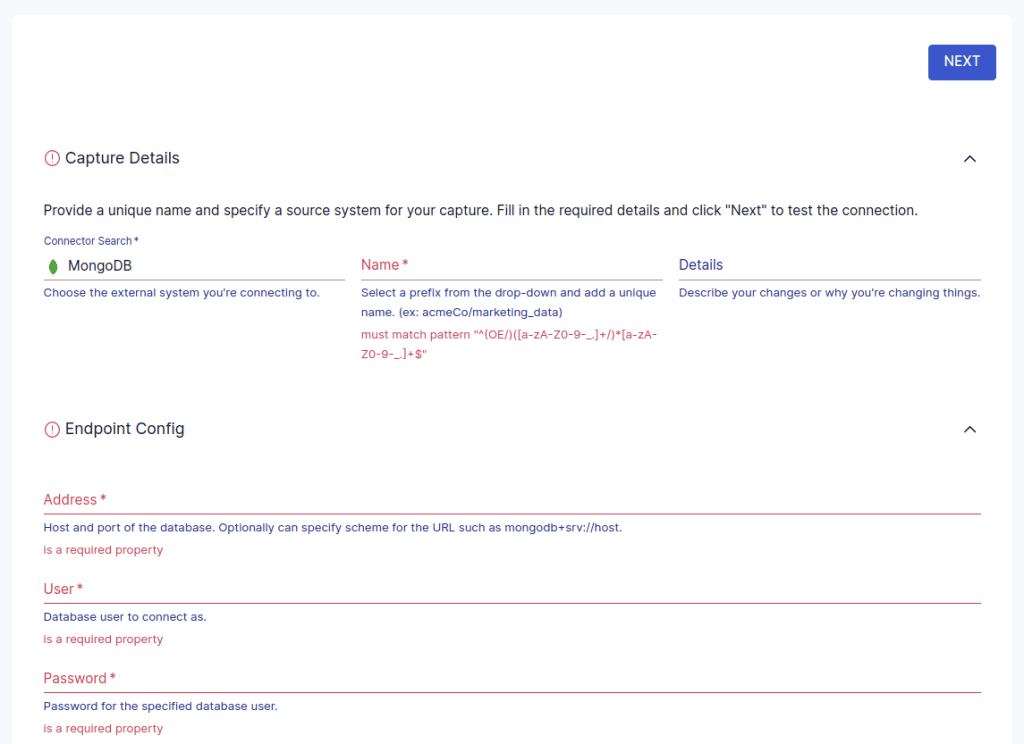

- Next, select MongoDB as the Connector for your source.

- You will have to provide a Name for the capture and provide the necessary details, then click on Next.

- You can select collections or modify properties as you see fit. The collections are the datasets stored in Estuary; data captured from a source connection is stored in the cloud.

- Click on Next again if you made changes to your collections, and then click Save and publish.

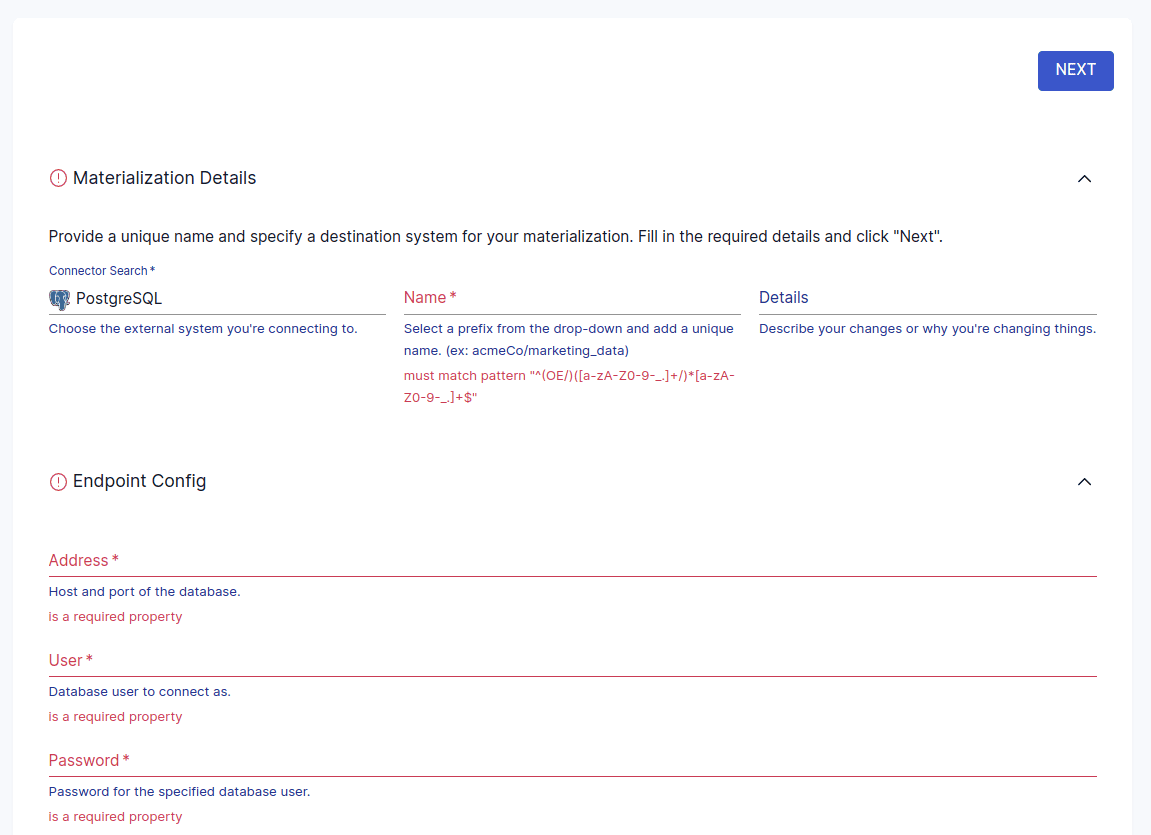

- The next step is to click on Materialize collections.

- You will now select the Connector tile for the destination, which in this case will be PostgreSQL.

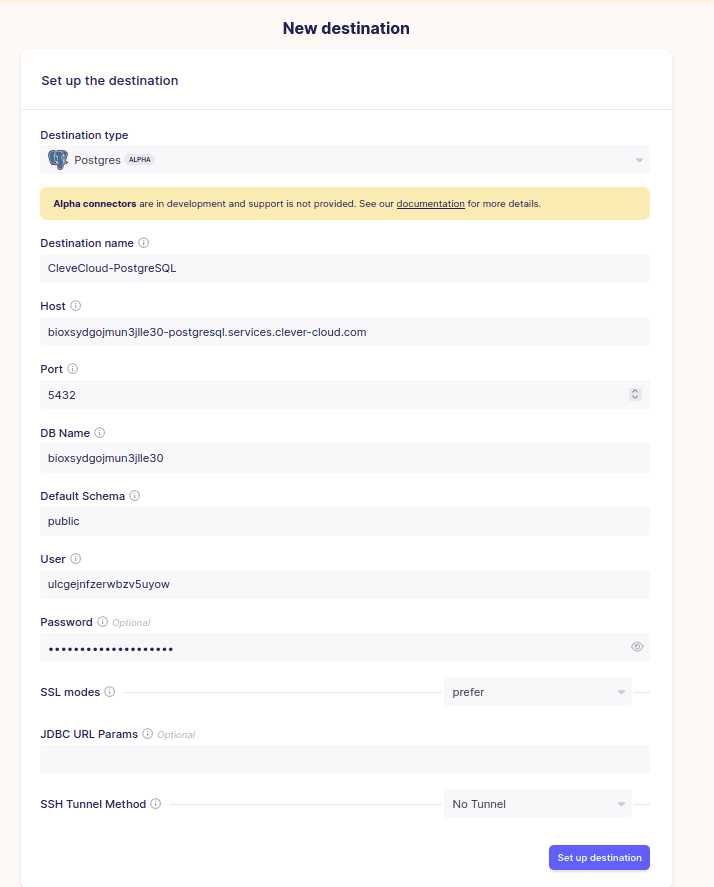

- Choose a unique Name for the materialization, then configure the connection details, then click Next. You can still choose to add or remove collections at this point.

- Finally, click on Save and publish.

Pros

- You do not need to write code to perform data integration, as it can be achieved through a point-and-click interface.

- The cloud version of Estuary lets you set up data pipelines in minutes, since there is nothing to install, configure, or manage.

- The scalability of your operations is guaranteed even as your data grows.

- It has a wide range of supported connectors for source and destination systems.

- Estuary offers automatic schema conversion.

Skip the manual steps and set up a real-time, no-code data pipeline with Estuary. Start your free trial or contact us to learn more about seamless data integration.

Airbyte

Airbyte is an open-source data movement platform that can be used to set up and manage data pipelines. It has integrations with various data sources, applications, and databases. Airbyte has a self-hosted and managed service. For this demonstration, you can use the managed service, and it will be focused on setting up the source and destination connectors and how to link them.

You can follow the steps below to capture data from MongoDB to PostgreSQL.

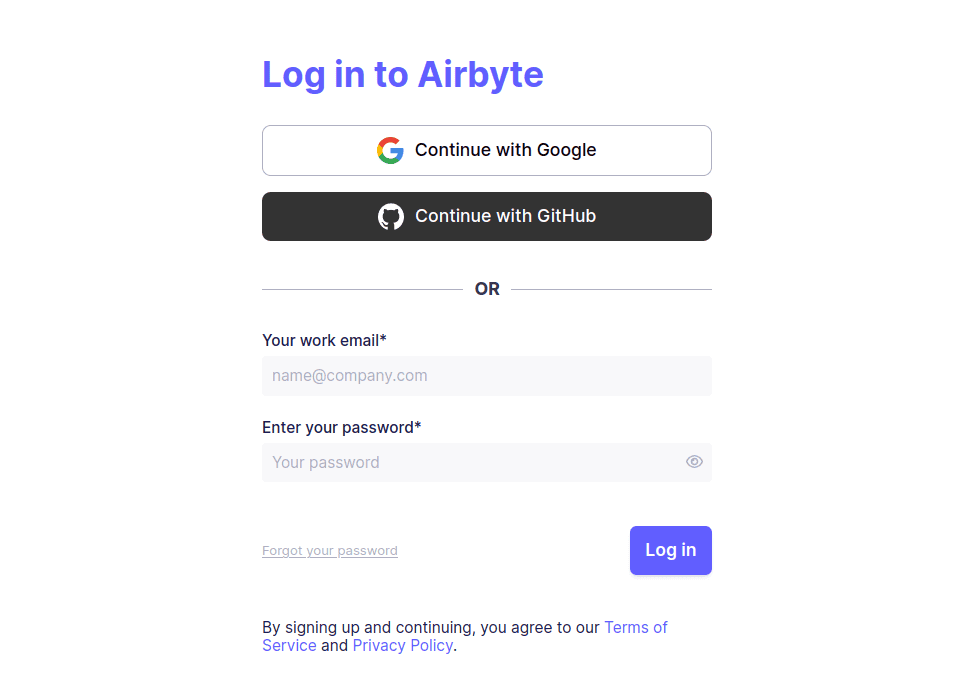

- First, log in to your Airbyte account.

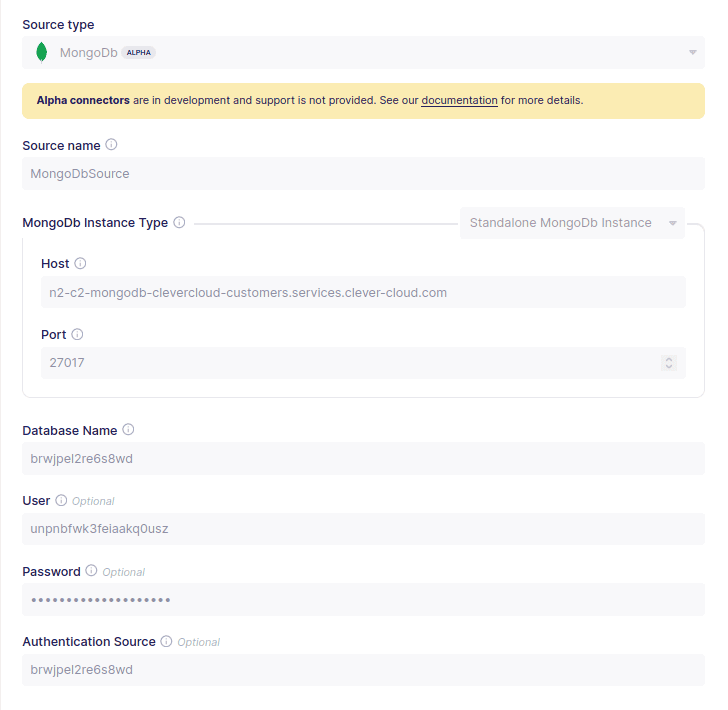

- Next, you will need to set up a source connector. From your Airbyte dashboard, click on New source, then select MongoDB from the list of sources. You will need to provide the necessary connection details to a MongoDB database instance, then click on Set up source.

- Next, you will need to configure a destination connector. To do so click on Destinations in your Airbyte dashboard, then select Create destination and choose PostgreSQL.

- Enter the relevant connection parameters, then click Set up destination to complete the process.

- The last step is to set up the Airbyte connection between the source and the destination. To do so click on Connections in your Airbyte dashboard and choose New connection.

- Select the source and destination you created in an earlier step. The collections in MongoDB will be automatically detected and you can choose the replication mode and the frequency of replication.

- Click on the Set up connection button to start the syncing process.

Note: Airbyte is suitable for batch syncs, but not real-time use cases.

⚡ Looking for a truly real-time, low-latency solution? Estuary supports change data capture (CDC) from MongoDB to PostgreSQL with built-in schema conversion and a no-code UI.

👉 Start your free Estuary pipeline now — no credit card required.

Conclusion

In this article, you learned how to capture data from MongoDB to PostgreSQL via two methods: a manual ETL process and no-code data platforms. Before that, you were introduced to MongoDB and PostgreSQL database systems, and some of the major differences between them were outlined.

At this stage, you should have a robust understanding of migrating data from MongoDB to PostgreSQL and how data integration platforms like Estuary can help you simplify the process.

Estuary is free to try today. Have questions about this or any other Data Flow? Join the Estuary team in the community discussion, or check out the docs.

Related posts

About the author

Jeffrey is a data engineering professional with over 15 years of experience, helping early-stage data companies scale by combining technical expertise with growth-focused strategies. His writing shares practical insights on data systems and efficient scaling.