Leveraging quality data has become extremely important for any enterprise seeking to stay ahead of its competition. While Google Search Console (GSC) provides valuable metrics to help businesses understand their website's performance and engagement, it’s not designed for in-depth analysis. For those seeking to utilize their data better with advanced analysis, there’s a need for alternative solutions. Here's where Amazon’s Redshift comes into play.

Redshift is designed for complex data analysis and is intuitive and easy to understand. However, data migration from Google Search Console to Redshift can pose significant challenges, particularly for individuals lacking a sound technical background. This guide will cover the two best methods of loading data from Google Search Console to Redshift.

What Is Google Search Console?

Google Search Console is a web-based service offered for free by Google. Website owners, webmasters, and SEO professionals can use this tool to monitor their site’s presence in Google Search results. You get a variety of features and reports to understand how Google views your website's performance, security, and other key metrics.

Let’s look at some of Google Search Console's key features:

- Monitoring Website Performance: As a website owner, you can use Google Search Console to monitor your website’s performance. This tool allows you to see how frequently your site appears on Google search results, giving a better understanding of the website’s visibility.

- Troubleshooting: Search Console can help identify crawling errors, sitemap issues, and other SEO-related technical problems. This helps ensure the website runs properly and provides a good user experience.

- Improve Search Presence: The tool can also help website owners improve their search presence. It provides insights into search queries that bring users to your site, which can help you create better content and improve your overall SEO strategy.

- Security Alerts: Google Search Console notifies web admins of security issues such as hacking and malware. It also helps monitor and resolve server errors and site load issues.

What Is Redshift?

Redshift is a fully managed, petabyte-scale data warehouse provided by Amazon Web Services (AWS). It allows users to analyze large volumes of data quickly and cost-effectively using standard SQL and Business Intelligence (BI) tools. It offers robust security features such as encryption and network isolation using Amazon Virtual Private Cloud (VPC) and AWS Identity and Access Management (IAM). Redshift also uses advanced column-based storage architecture, which reduces the overall data scan size during queries, leading to faster read times and optimized storage and compression.

Key features of Amazon Redshift include:

- Massively Parallel Processing (MPP): Redshift uses MPP architecture to distribute and query data across several nodes. Each node processes the data subset separately and in parallel, significantly boosting query performance and scalability. This architecture ensures that query speed remains consistent even as the volume of data increases, making it ideal for large-scale data analysis.

- Advanced Compression Techniques: Redshift utilizes advanced compression algorithms to reduce storage requirements and improve the performance of your queries. It automatically samples data and selects the most appropriate compression scheme, which helps in reducing the amount of I/O needed for data processing.

- Concurrency Scaling: Redshift can handle virtually unlimited concurrent users and queries by automatically adding additional query processing power when needed. This automatic scaling reduces latency and performance issues during sudden spikes in traffic.

- Advanced Query Accelerator (AQUA): Redshift uses a high-speed cache layer that accelerates data queries. AQUA uses an innovative hardware-accelerated cache to deliver up to 10 times faster query performance than other enterprise warehouses. This makes Redshift the top choice for data-intensive analytics.

If you’re considering other destinations for GSC data, you can also explore our step-by-step guides for Google Search Console to BigQuery and Google Search Console to Snowflake.

How to Connect Google Search Console to Redshift: 2 Methods

Now, let’s explore the steps you’ll need to follow to migrate data from GSC to Redshift.

There are two ways to move Google Search Console (GSC) data into Redshift:

- Automated Method (Estuary): Use a no-code pipeline tool with built-in connectors to continuously sync GSC data to Redshift in real time.

- Manual Method (CSV + COPY): Export data from GSC as CSV, upload it to Amazon S3, and use Redshift’s COPY command to load it into your tables.

The automated method is best for real-time, scalable analytics, while the manual method suits small, one-off transfers.

Automated Method: Connect Google Search Console to Redshift Using Estuary (No-Code)

You can easily load data from Google Search Console to Redshift using user-friendly no-code ETL (extract, transform, load) tools that do not require you to write code. One of the best tools is Estuary, which streamlines data migration from GSC to Redshift.

Prerequisites

- Google Search Console

- Amazon Redshift

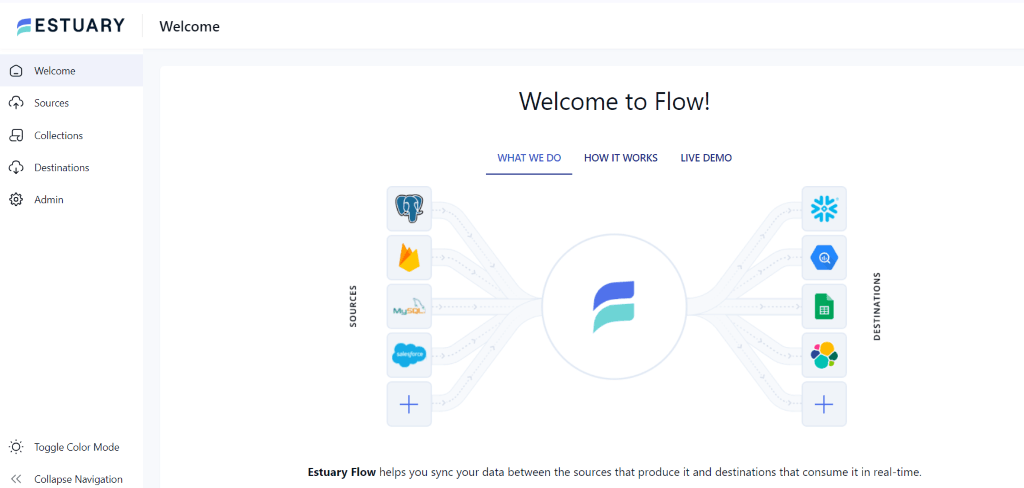

- Create an account or log in to your existing Estuary account.

Take a look at the following easy steps to migrate data from Google Search Console to Redshift:

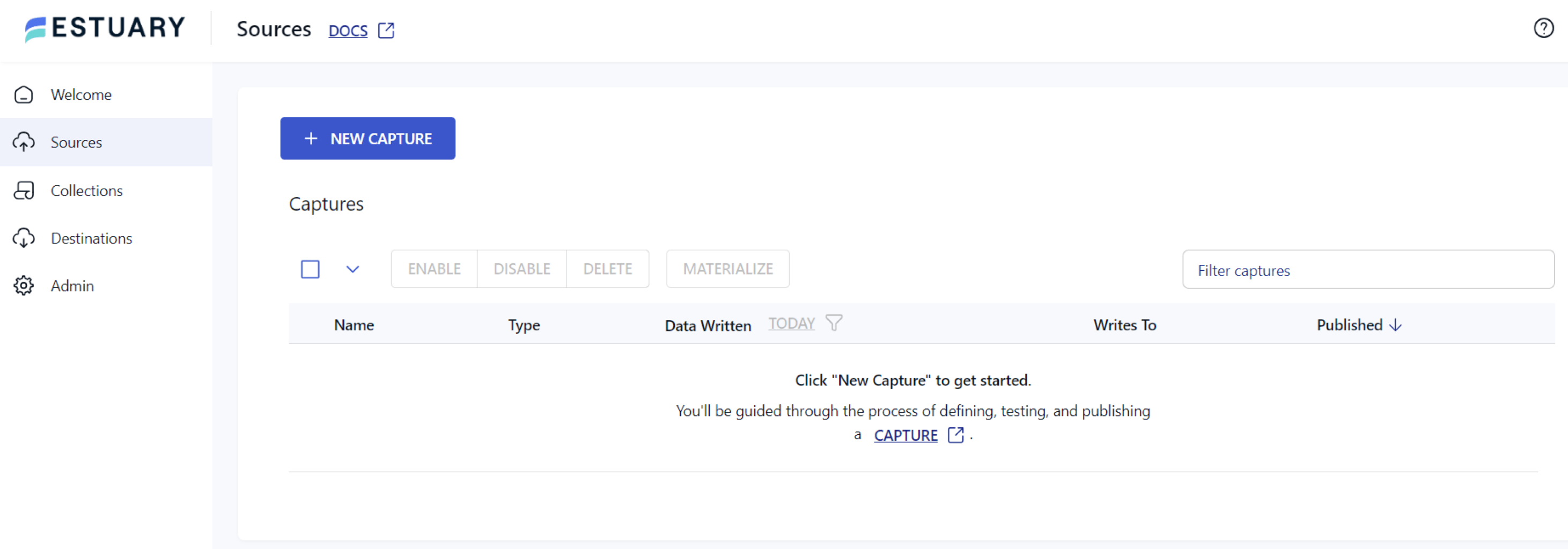

Step 1: Configure Google Search Console as Source

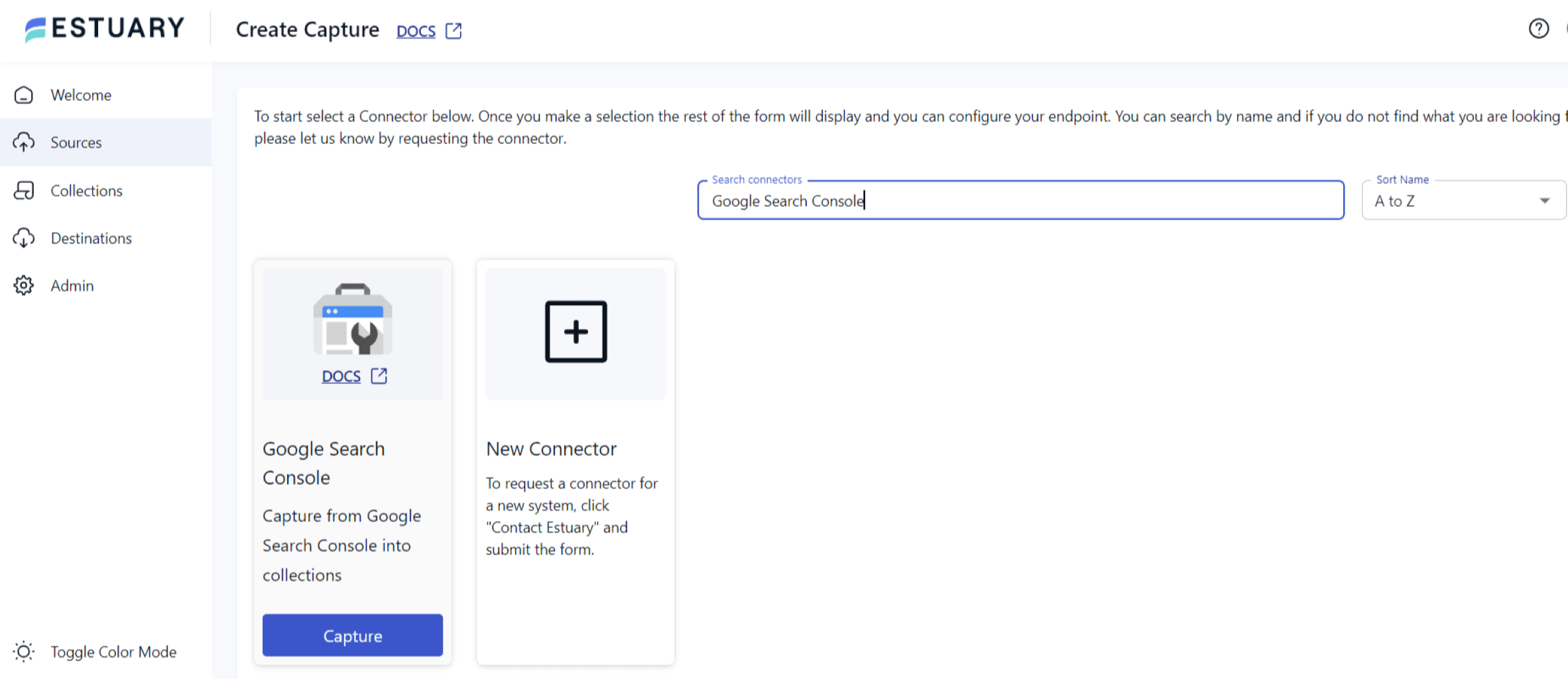

- In the Estuary dashboard, click on the Sources tab on the left navigation pane.

- Click on the + NEW CAPTURE option.

- Next, search for Google Search Console in the search field and click the Capture button to begin configuring it as the data source.

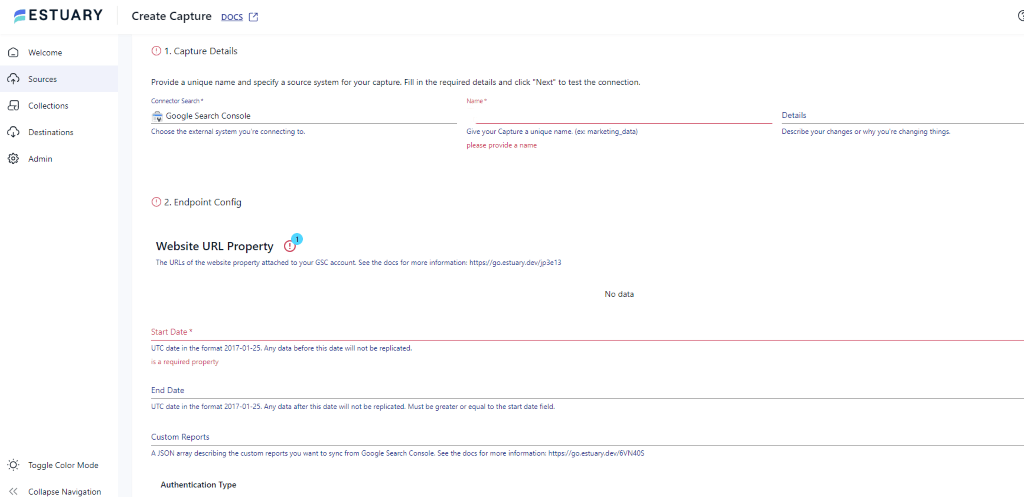

- On the Create Capture page, correctly enter the specified details like Name, Start Date, and End Date.

- After filling in the required fields, click on NEXT > SAVE AND PUBLISH. This will capture data from Google Search Console and move it into Flow collections.

Step 2: Configure Redshift as the Destination

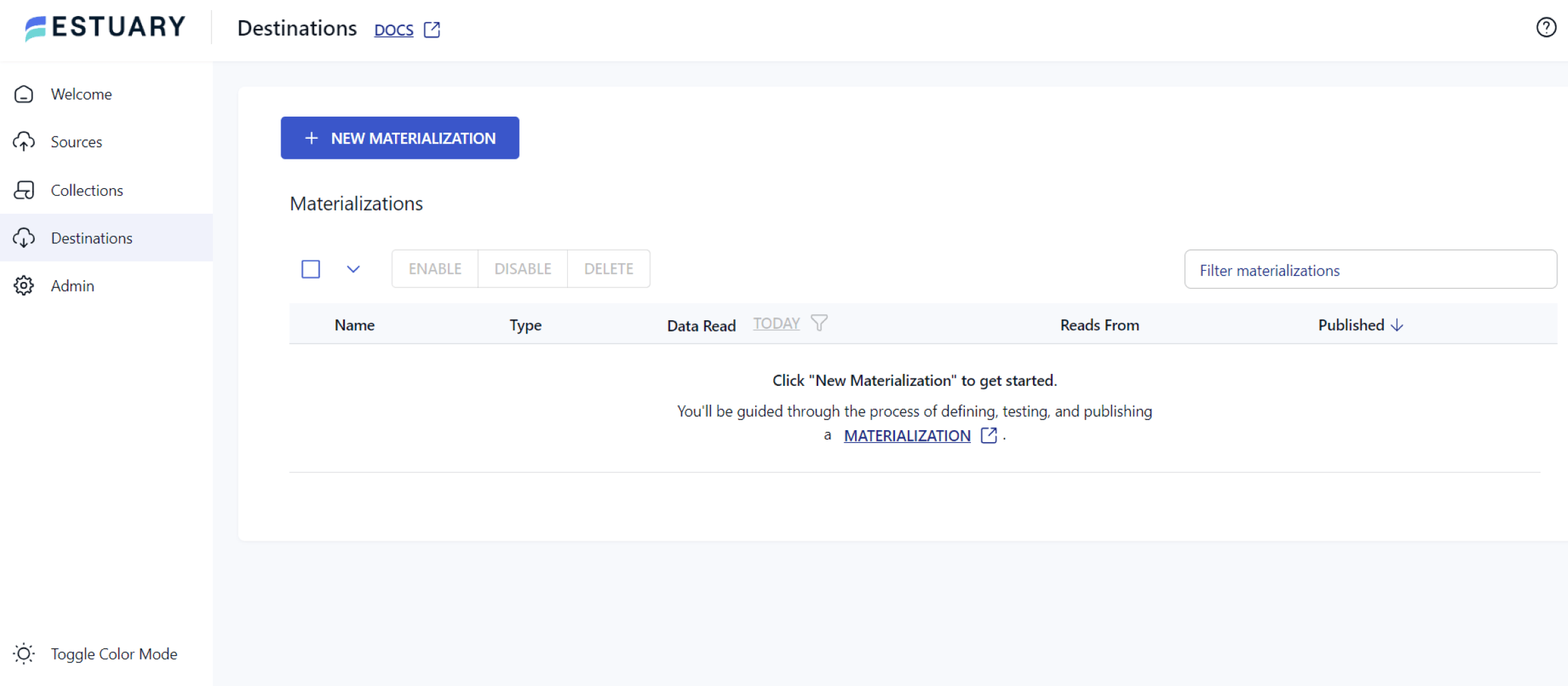

- Once the source is set, click on the Destinations option on the dashboard.

- Click on the + NEW MATERIALIZATION button on the Destinations page.

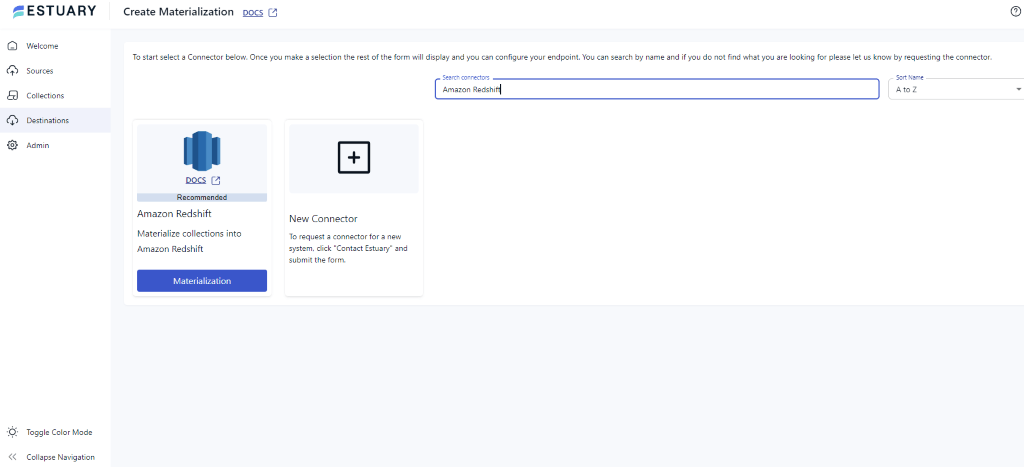

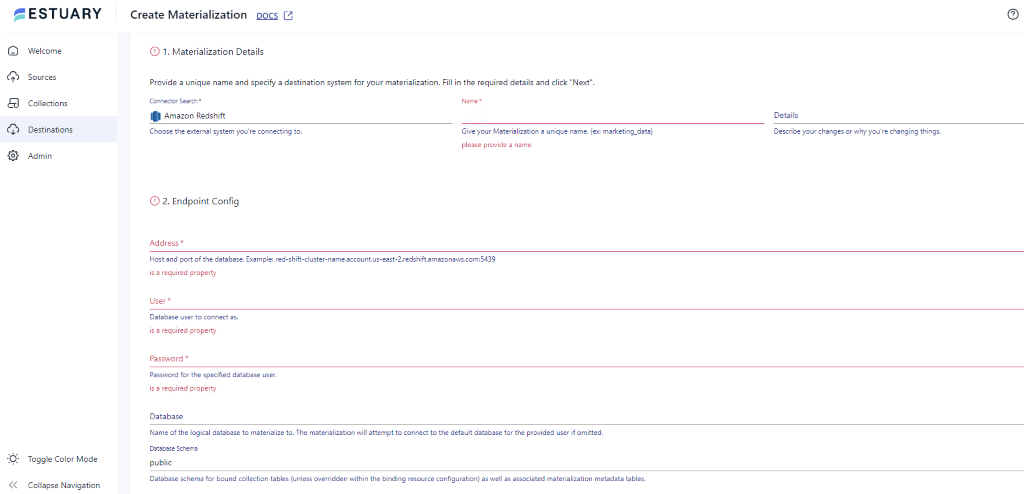

- Type Amazon Redshift in the Search connectors box and click on the Materialization button of the connector when it appears in the search results.

- On the Create Materialization page, enter the details like Address, User, Password, S3 Staging Bucket, and Region.

- If your dataset from Google Search Console isn’t filled automatically, you can add it manually using the Link Capture button in the Source Collections section.

With the source and destination configured, Estuary will begin loading data from Google Search Console to Redshift.

Benefits of Using Estuary

Here are some of the advantages of using Flow:

- User-Friendly Interface: Estuary offers an intuitive and easy-to-use interface, allowing users to build and manage data pipelines without extensive technical expertise.

- Automated Workflows: Real-time ETL tools like Estuary automate the data migration process, reducing manual work for data ingestion. Such automation reduces the risk of errors and creates robust and complex workflows.

- Protection Against Failures: Estuary ensures resilience against failures by storing captured data in cloud-backed data collections. This process serves as a backup measure, offering exactly one-semantic and distributed storage.

- Real-time data processing with CDC: Estuary leverages Change Data Capture (CDC) for real-time data processing. This feature helps maintain data integrity, reduces latency, and keeps the datasets up-to-date.

Manual Method: Manually Load Google Search Console Data into Redshift (CSV + COPY)

Loading data from Google Search Console to Redshift manually involves multiple steps that require the utilization of various AWS services, including AWS Redshift and AWS S3. Here are the steps to manually transfer data from Google Search Console to Redshift.

Prerequisites:

- Google Search Console account

- Redshift Cluster

- AWS Credentials (IAM)

- Amazon S3 bucket

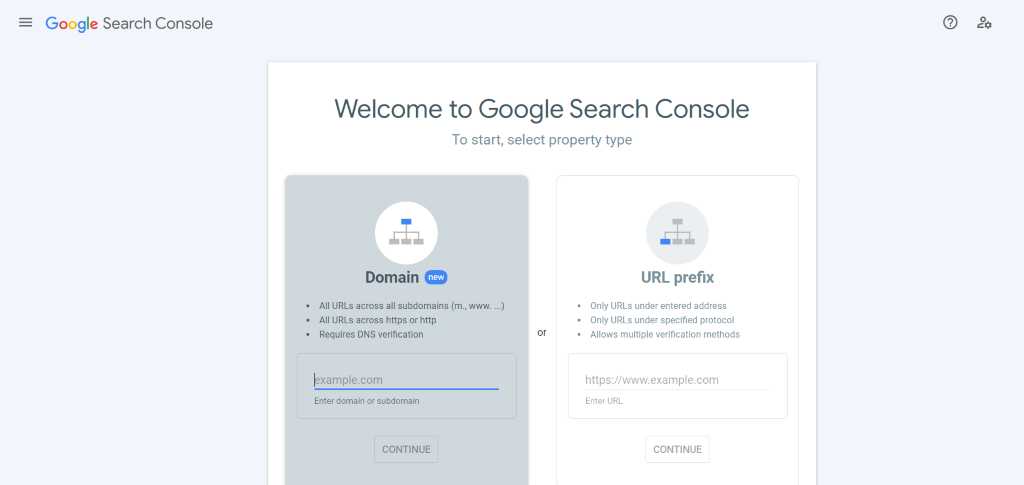

Step 1: Exporting Data from Google Search Console as CSV files

- Log into your Google Search Console account.

- From the dashboard, select the parameter or feature you wish to export.

- On the left-hand menu, navigate to the Performance View. Select any metric—Search results, Discover, or Google News—and choose the desired date range for the data you wish to export.

- Click on the Export button. You will find it at the top right corner.

- Finally, choose the CSV format for extracting the data.

Step 2: Preparing Amazon S3 for Data Transfer

- Log into your Amazon S3 account. If you don’t have an S3 bucket ready, create a new one where you can upload your CSV files.

- Upload the same CSV file that you exported from the GSC to this bucket. Ensure the file is correctly named and located in an easy-to-access location.

Step 3: Loading GSC Data in Amazon Redshift

- Open the Amazon Redshift Console and navigate to the Redshift cluster where you want to load the GSC data.

- Create a new table or use an existing table that corresponds to the CSV file’s data. This step is crucial for ensuring the data loads correctly.

Step 4: Executing the Data Transfer

- Use the COPY command in Redshift to start transferring the data. This command should also include the file path in the S3 bucket, the destination table in Redshift, and the necessary credentials. Use the below command for Data Transfer.

| COPY <schema-name>.<table-name> (<ordered-list-of-columns>) FROM '<manifest-file-s3-url>' CREDENTIALS'aws_access_key_id=<key>;aws_secret_access_key=<secret-key>' GZIP MANIFEST; |

- Monitor the process to confirm that the data transfer is completed successfully.

These are the steps for connecting Google Search Console to Redshift. However, this method has several limitations:

- Complex Procedures: The process of manual data handling necessitates the implementation of several procedures on different platforms, such as data cleaning, transformation, and continuous upload. This approach can prove to be time-intensive in real-time scenarios. Furthermore, it also poses a significant risk of data loss and manipulation. These factors render manual data handling challenging and less efficient in businesses and academic settings.

- Performance Issues: The utilization of manual procedures for exporting and uploading data can adversely affect the data processing speed. Slow and ineffective data processing systems delay the analysis of critical data and lead to poor decision-making. It can have a significant impact on the revenue and profitability of your business.

- Lack of Expertise: The manual processing and handling of data requires you to have technical experts by your side who can swiftly customize code according to your needs. These experts can also resolve bugs or bottlenecks during the process. However, if you do not have the right staff by your side, the entire process can prove to be expensive and time-consuming for you.

The Takeaway

Data migration from Google Search Console to Redshift is crucial for businesses leveraging data to increase their online reach. The first method involves using CSV files to load data into Redshift using the built-in COPY command. The second consists of using Estuary, a fully-managed, real-time data integration tool that establishes the entire workflow in just a few clicks.

Traditional manual methods of Google Search Console to Redshift integration offer more control but demand technical expertise and time investment. Furthermore, traditional methods are unreliable for real-time scenarios due to multiple manual steps, which makes them prone to human error and delays. It is best to understand the specific needs of your business before making a choice between these two methods.

Estuary provides an extensive and growing list of connectors, robust functionalities, and a user-friendly interface. Sign up today to simplify and automate data loading from Google Search Console to Redshift.

FAQs

How do I export data from Google Search Console?

Can Google Search Console connect directly to Redshift?

What is the easiest way to load GSC data into Redshift?

About the author

Jeffrey is a data engineering professional with over 15 years of experience, helping early-stage data companies scale by combining technical expertise with growth-focused strategies. His writing shares practical insights on data systems and efficient scaling.