Frequent downtime, performance bottlenecks, and poor ROI: a well-designed database schema can help you avoid all of these, in addition to laying the foundation for efficient database development. Overlooking schema design, on the other hand, can leave you dealing with exactly these problems.

Today, managing unstructured data is a major challenge for 95% of businesses. Whether you’re running a startup looking to revolutionize an industry or a multinational corporation processing terabytes of information daily, the right schema keeps your data architecture responsive and helps turn raw information into actionable insights.

But how do you get the most out of database schemas? From their benefits and types to design patterns and applications across different industries, you’ll find all the answers in this easy-to-follow guide.

What Is a Database Schema?

A database schema is a comprehensive blueprint that formally defines the complete logical structure and organization of data within a Database Management System (DBMS). It defines how data is formatted, stored, processed, secured, and accessed across schema objects such as tables, views, indexes, triggers, and logical constraints.

In other words, the schema serves as the skeleton of the database and the architectural authority governing everything in it. It provides:

- Effective querying capabilities for data engineers

- Overall governance of database policies and standards

- Accurate access control administration by database managers

- Proper technical design of the database's tables and other objects by developers

6 Benefits of Using Database Schemas

Database schemas support many critical operations in Relational Database Management Systems (RDBMS). Let’s take a look at six key benefits.

Data Integrity

Well-constructed database schemas play an important role in maintaining data validity and consistency. They use column types, NOT NULL, and CHECK constraints to validate new data entries. Also, integrity constraints like primary keys, foreign keys, and unique constraints help maintain data accuracy.

A centralized schema also guards against missing or duplicate information through default values and constraints. This helps keep data quality high and makes the data reliably usable by every application that accesses it.
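The constraints described above can be sketched with Python's built-in `sqlite3` module and an in-memory database. The `customers` and `orders` tables, their columns, and the `try_insert` helper are hypothetical, and constraint behavior varies between database systems (SQLite, for example, enforces foreign keys only after `PRAGMA foreign_keys = ON`). Each rejected insert below is blocked by the schema itself rather than by application code.

```python
import sqlite3

# In-memory database; SQLite enforces foreign keys only when this pragma is on.
conn = sqlite3.connect(":memory:")
conn.execute("PRAGMA foreign_keys = ON")

conn.executescript("""
CREATE TABLE customers (
    id     INTEGER PRIMARY KEY,               -- primary key: one row per customer
    email  TEXT NOT NULL UNIQUE,              -- required and never duplicated
    status TEXT NOT NULL DEFAULT 'active'     -- default fills in missing values
           CHECK (status IN ('active', 'suspended'))
);
CREATE TABLE orders (
    id          INTEGER PRIMARY KEY,
    customer_id INTEGER NOT NULL REFERENCES customers(id),  -- foreign key
    amount      REAL NOT NULL CHECK (amount > 0)
);
""")

def try_insert(sql, params):
    """Run one INSERT in its own transaction and report whether the schema accepted it."""
    try:
        with conn:  # commits on success, rolls back on error
            conn.execute(sql, params)
        return "accepted"
    except sqlite3.IntegrityError as exc:
        return f"rejected: {exc}"

results = [
    try_insert("INSERT INTO customers (email) VALUES (?)", ("ana@example.com",)),
    try_insert("INSERT INTO customers (email) VALUES (?)", ("ana@example.com",)),     # duplicate email
    try_insert("INSERT INTO customers (email) VALUES (?)", (None,)),                  # missing email
    try_insert("INSERT INTO orders (customer_id, amount) VALUES (?, ?)", (99, 10.0)),  # unknown customer
    try_insert("INSERT INTO orders (customer_id, amount) VALUES (?, ?)", (1, -5.0)),   # negative amount
]
for r in results:
    print(r)

# The DEFAULT supplied the status for the one accepted customer row.
print(conn.execute("SELECT status FROM customers WHERE id = 1").fetchone()[0])
```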

Security

Database schemas also underpin data security. They implement roles, views, and permissions to control who can access what, restrict data exposure, and support auditing of critical activities. In some database systems, column-level encryption can also be configured as part of the schema for extra protection.
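As a rough illustration of how a view limits data exposure, the sketch below (again Python's `sqlite3`, with a hypothetical `employees` table) defines a view that omits sensitive columns. SQLite has no users or roles, so the permission step appears only as a comment using PostgreSQL-style `GRANT` syntax with a hypothetical `reporting` role.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.executescript("""
CREATE TABLE employees (
    id     INTEGER PRIMARY KEY,
    name   TEXT NOT NULL,
    dept   TEXT NOT NULL,
    salary REAL NOT NULL,   -- sensitive
    ssn    TEXT NOT NULL    -- sensitive
);
INSERT INTO employees (name, dept, salary, ssn) VALUES
    ('Ana', 'Sales', 72000, '111-11-1111'),
    ('Ben', 'Ops',   65000, '222-22-2222');

-- The view exposes only the non-sensitive columns.
CREATE VIEW employee_directory AS
    SELECT id, name, dept FROM employees;
""")

# In a server DBMS, a reporting role would be granted access to the view only, e.g.
#   GRANT SELECT ON employee_directory TO reporting;   -- hypothetical role
# SQLite has no roles, so this sketch just shows what the view exposes.
cur = conn.execute("SELECT * FROM employee_directory ORDER BY id")
columns = [d[0] for d in cur.description]
rows = cur.fetchall()
print(columns)
print(rows)
```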

Documentation

An up-to-date database schema acts as a guide to your database instance. It supports long-term maintenance and simplifies onboarding for new developers. With the schema in hand, it’s easier to troubleshoot issues, plan new development, and understand the impact of any change.
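Because the schema is stored in the database itself, part of this documentation can be generated directly from it. The minimal sketch below, assuming SQLite and two hypothetical blog tables, reads column and foreign-key metadata through `sqlite_master`, `PRAGMA table_info`, and `PRAGMA foreign_key_list`; other systems expose similar metadata through views such as `information_schema`.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.executescript("""
CREATE TABLE authors (id INTEGER PRIMARY KEY, name TEXT NOT NULL);
CREATE TABLE posts (
    id        INTEGER PRIMARY KEY,
    author_id INTEGER NOT NULL REFERENCES authors(id),
    title     TEXT NOT NULL,
    body      TEXT
);
""")

def describe(conn):
    """Build a data dictionary {table: {columns, references}} from the live schema."""
    tables = [r[0] for r in conn.execute(
        "SELECT name FROM sqlite_master WHERE type = 'table' ORDER BY name")]
    doc = {}
    for table in tables:
        # table_info rows: (cid, name, type, notnull, dflt_value, pk)
        cols = conn.execute(f"PRAGMA table_info({table})").fetchall()
        # foreign_key_list rows: (id, seq, table, from, to, on_update, on_delete, match)
        fks = conn.execute(f"PRAGMA foreign_key_list({table})").fetchall()
        doc[table] = {
            "columns": [(c[1], c[2], bool(c[3]), bool(c[5])) for c in cols],
            "references": [(fk[3], fk[2], fk[4]) for fk in fks],
        }
    return doc

for table, info in describe(conn).items():
    print(table)
    for name, ctype, not_null, is_pk in info["columns"]:
        print(f"  {name:<10} {ctype:<8} not_null={not_null} pk={is_pk}")
    for col, ref_table, ref_col in info["references"]:
        print(f"  {col} -> {ref_table}.{ref_col}")
```

Listing which tables reference a given table is a quick way to see what a proposed change might affect.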

Analytics

A well-designed database schema makes data analytics easier and faster. It organizes data storage and defines relationships between data elements, which streamlines queries and reporting. Analytic engines can then join data sources and perform aggregations more efficiently.

Agility

A flexible schema lets you extend features and functionality smoothly, so you don’t have to perform massive overhauls every time you build new applications on top of the database. For example, a blog engine can add social sharing or multimedia features by adding new tables instead of changing existing data structures, which paves the way for step-by-step improvements.
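The blog example can be sketched as follows, assuming SQLite and hypothetical `posts` and `post_shares` tables. Social sharing is added as a new table that references existing posts, so the original table and the query that reads it stay untouched.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.execute("PRAGMA foreign_keys = ON")

# Version 1: the original blog schema.
conn.executescript("""
CREATE TABLE posts (id INTEGER PRIMARY KEY, title TEXT NOT NULL);
INSERT INTO posts (title) VALUES ('Hello, world'), ('Schema design tips');
""")

def list_titles(conn):
    """Existing application query; it only knows about the posts table."""
    return [r[0] for r in conn.execute("SELECT title FROM posts ORDER BY id")]

before = list_titles(conn)

# Version 2: social sharing arrives as a new table that references posts.
# The posts table is not altered, so list_titles keeps working unchanged.
conn.executescript("""
CREATE TABLE post_shares (
    id        INTEGER PRIMARY KEY,
    post_id   INTEGER NOT NULL REFERENCES posts(id),
    network   TEXT NOT NULL,
    shared_at TEXT NOT NULL DEFAULT CURRENT_TIMESTAMP
);
INSERT INTO post_shares (post_id, network) VALUES (1, 'mastodon'), (1, 'linkedin'), (2, 'mastodon');
""")

after = list_titles(conn)
share_counts = conn.execute("""
    SELECT p.title, COUNT(s.id)
    FROM posts p LEFT JOIN post_shares s ON s.post_id = p.id
    GROUP BY p.id, p.title
    ORDER BY p.id
""").fetchall()
print(before == after, share_counts)
```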

Governance

Database schemas act as centralized hubs for rules and standards with guidelines for backup, monitoring, and compliance. This is particularly helpful for large organizations as it provides uniform data handling across multiple database instances. Schemas also help in assigning team roles for improved collaboration across departments.

Types of Database Schemas

Each schema type plays a unique role in the database life cycle. Let’s discuss these roles in detail.

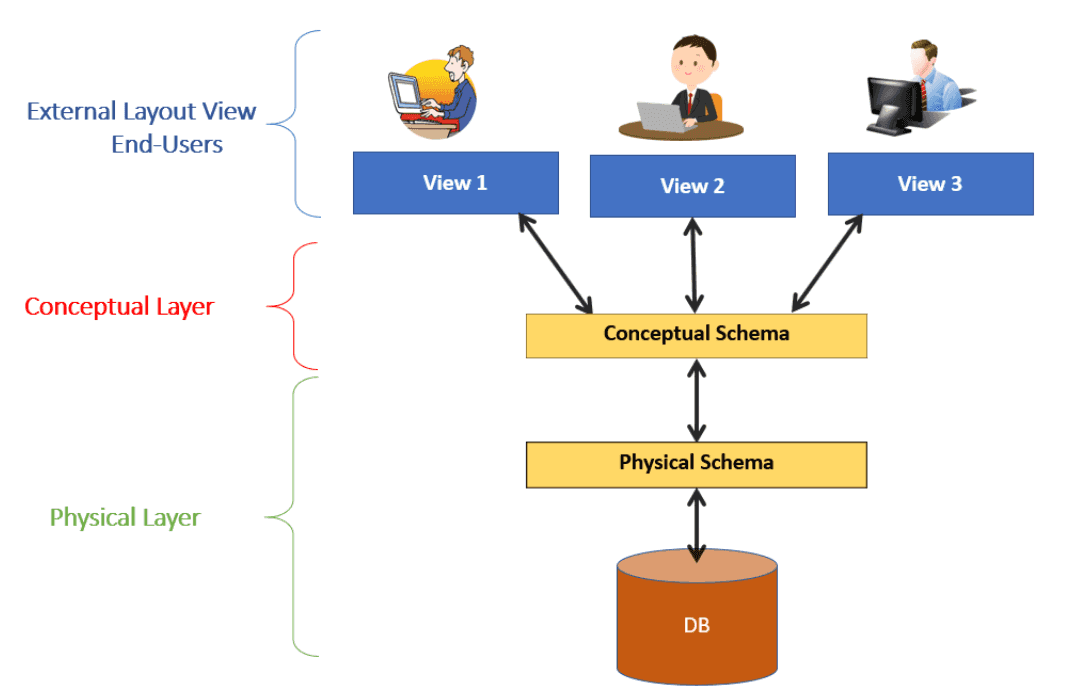

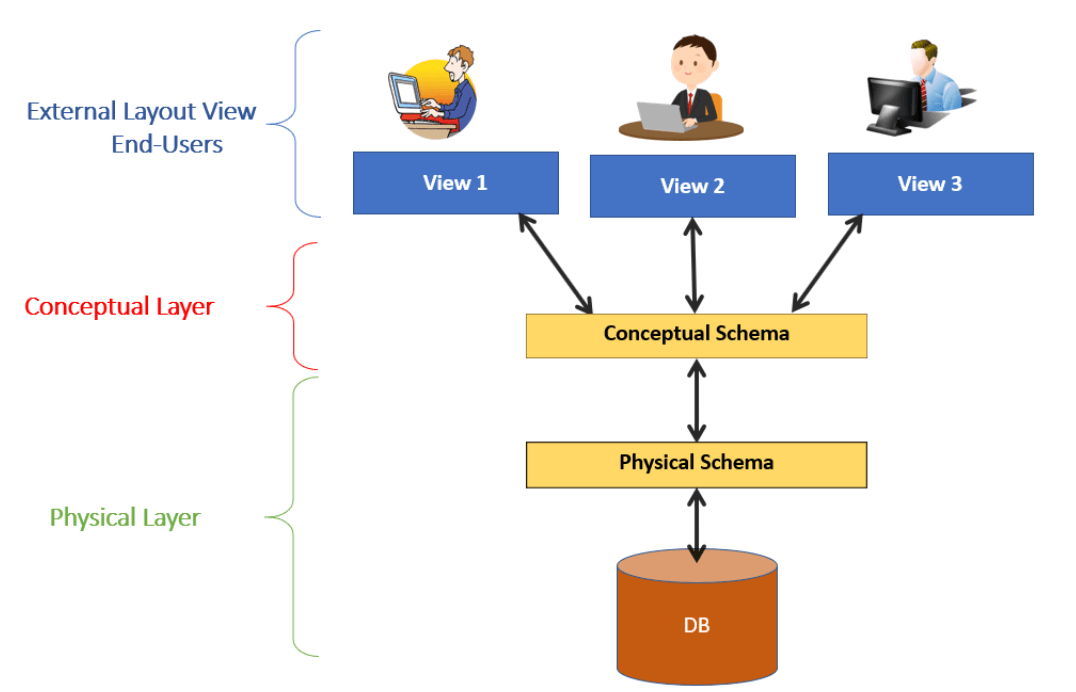

Conceptual Schema

The conceptual database schema is the highest level of abstraction that focuses on describing the main entities, attributes, and relationships included in the database design.

- It is developed early in the database planning process to capture business requirements and model the overall data landscape broadly.

- Conceptual schemas commonly use Entity-Relationship Diagrams (ERDs) to visually represent important entities and their attributes, as well as the relationships between those entities.

- ERDs let non-technical business stakeholders and database designers communicate effectively during requirements gathering.

- As the database design lifecycle progresses, the conceptual schemas are refined to solidify requirements before logical and physical design begins.

- The conceptual schema does not include technical details like data types, constraints, storage parameters, etc. It focuses on the important data entities and relationships from a business point of view.

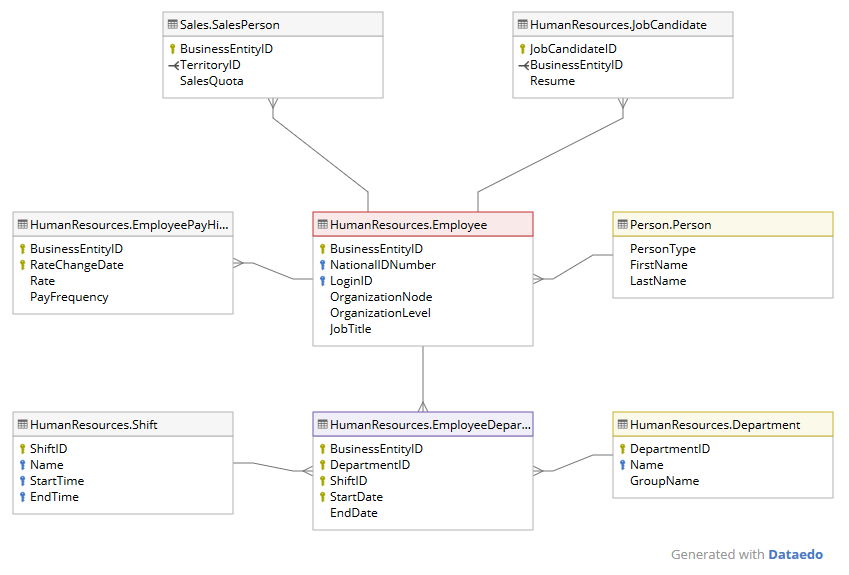

Logical Schema

The logical database schema adds more technical detail, but it still keeps physical storage and implementation details abstract.

- It defines the detailed logical structure of the database, including data types, keys, field lengths, validation rules, views, indexes, and explicit table relationships, and it sets the parameters for joins and normalization.

- This schema type uses elements like entity-relationship diagrams, normal forms, and set theory to shape the structure and organization of the database. It also shows relationships and dependencies among various components.

- Logical schemas serve as the formal input for physically implementing the database system using DDL statements, table scripts, or declarative ORM frameworks.

Physical Schema

The physical database schema describes how the database will be materialized at the lowest level above storage media.

- The schema maps database elements like tables, indexes, partitions, files, segments, extents, blocks, nodes, and data types to physical storage components. This bridges the logical and physical aspects of database management.

- The physical schema specifies detailed physical implementation parameters like file names, tablespaces, compression methods, hashing techniques, integrity checks, physical ordering of records, object placement, and more.

- It includes hardware-specific optimizations for response time, throughput, and resource utilization to meet performance goals.

- It is tuned and optimized over time after the system is operational and real production data volumes and access patterns emerge.
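A small illustration of the logical/physical split, assuming SQLite and a hypothetical `orders` table: adding an index is a physical-schema decision that changes how the engine locates rows (as reported by `EXPLAIN QUERY PLAN`) without changing the table definition, the query text, or its results. The exact wording of the plan output differs between SQLite versions, so the sketch only checks whether the index name appears.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE orders (id INTEGER PRIMARY KEY, customer_id INTEGER, amount REAL)")
conn.executemany(
    "INSERT INTO orders (customer_id, amount) VALUES (?, ?)",
    [(i % 1000, float(i)) for i in range(10_000)],
)

QUERY = "SELECT id, amount FROM orders WHERE customer_id = ?"

def plan(conn):
    """Return SQLite's query-plan description for QUERY as a single string."""
    rows = conn.execute("EXPLAIN QUERY PLAN " + QUERY, (42,)).fetchall()
    return " | ".join(str(row[-1]) for row in rows)  # last column holds the detail text

before_plan = plan(conn)
before_rows = conn.execute(QUERY, (42,)).fetchall()

# Physical-schema change: a secondary index. The table and the query stay the same.
conn.execute("CREATE INDEX idx_orders_customer ON orders (customer_id)")

after_plan = plan(conn)
after_rows = conn.execute(QUERY, (42,)).fetchall()

print("before:", before_plan)   # a full table scan
print("after: ", after_plan)    # a search using idx_orders_customer
print("same results:", sorted(before_rows) == sorted(after_rows))
```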

The Role of Estuary in Database Management

Estuary is our real-time ETL tool designed to redefine your data management approach. Equipped with streaming SQL and TypeScript capabilities, it seamlessly transfers and transforms data among various databases, cloud-based services, and software applications.

Far from being just a data mover, Estuary focuses on the user experience and provides advanced controls to maintain data integrity and consistency. It serves as your all-in-one solution for integrating traditional databases with today's hybrid cloud architectures.

10 Key Features of Estuary

- Universal data formatting: Our real-time ETL features easily manage a wide range of data formats and structures.

- Stay updated in real-time: Incremental data updates give you the most current data, thanks to real-time CDC features.

- Easy data retrieval: Fetch integrated data effortlessly from different sources through our globally unified schema functionality.

- The bridge to modern data solutions: Smoothly transition from traditional databases to modern hybrid cloud setups without any complications.

- All-in-one connectivity: Select from an extensive library of over 200 pre-configured connectors for hassle-free data extraction from various sources.

- Eliminate redundancies: Automated schema governance and data deduplication features streamline your operations and reduce unnecessary repetition.

- Adaptable to your needs: Our system architecture supports distributed Change Data Capture (CDC) at rates up to 7GB/s to adapt to your evolving data requirements.

- A comprehensive view of your customers: Combine real-time analytics with historical data to better understand customer interactions and improve your customization strategies.

- Uncompromising data security: Provides multiple layers of security protocols, including encryption and multifactor authentication, to secure your data integration processes.

- Added layers of security: Additional authentication and authorization measures provide an extra level of protection against unauthorized access, all without sacrificing data quality.

Types of Database Schema Design Patterns

Database schema design patterns offer a variety of structures, each well-suited for different types of data and usage scenarios. Choosing the correct design pattern can make data storage and retrieval more efficient. Let’s look at 5 common schema design patterns, each with its unique characteristics and applications.

Flat Schemas

A simple flat schema is a single table containing all data fields represented as columns. This table stores all data records without any relationships between elements in the schema.

A flat schema works well for small, simple datasets rather than large, interconnected ones. Because no table joins are needed, queries stay simple and fast. However, this comes at the cost of data redundancy: since all information is stored in a single table, the same values are repeated across many records.

Although flat schemas are easy to implement, their scalability is limited and they can become inefficient for more complex use cases. Nonetheless, they are effective for simple transactional records or as initial prototypes that can be changed to more sophisticated database models later.
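A minimal flat-schema sketch, assuming SQLite and a hypothetical `orders_flat` table, shows both sides of the trade-off: reads need no joins, but customer details are copied onto every order row, so a single change has to be applied to every copy.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
# One table, no relationships: customer details are repeated on every order row.
conn.executescript("""
CREATE TABLE orders_flat (
    order_id       INTEGER PRIMARY KEY,
    customer_name  TEXT,
    customer_email TEXT,
    product        TEXT,
    quantity       INTEGER
);
INSERT INTO orders_flat (customer_name, customer_email, product, quantity) VALUES
    ('Ana', 'ana@example.com', 'Keyboard', 1),
    ('Ana', 'ana@example.com', 'Mouse',    2),
    ('Ana', 'ana@example.com', 'Monitor',  1),
    ('Ben', 'ben@example.com', 'Mouse',    1);
""")

# Reads need no joins...
ana_orders = conn.execute(
    "SELECT product, quantity FROM orders_flat WHERE customer_email = ? ORDER BY order_id",
    ("ana@example.com",),
).fetchall()

# ...but changing one customer's email must rewrite every copy, or the data drifts.
cur = conn.execute(
    "UPDATE orders_flat SET customer_email = ? WHERE customer_email = ?",
    ("ana@newmail.example", "ana@example.com"),
)
updated = cur.rowcount
print(ana_orders, "rows rewritten:", updated)
```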

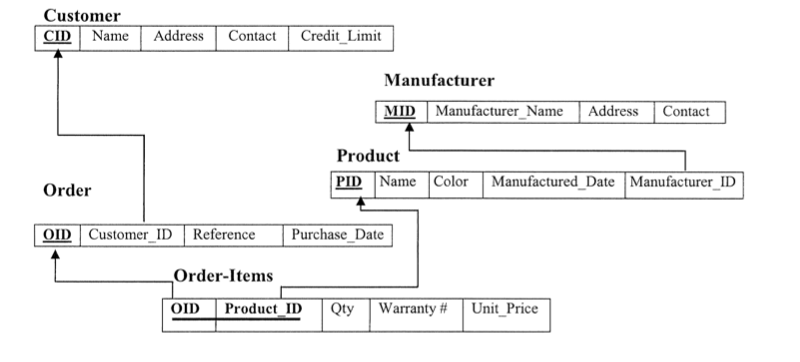

Relational Schemas

The relational model stands as the most versatile and widely used database schema design. It organizes data into multiple tables that are both modular and interrelated. This design approach normalizes data and reduces data redundancy as each table represents just one entity.

Relationships between tables are logically established at the schema level through primary and foreign keys. Although the data is normalized, the relational model still lets you recombine data from different tables via joins during queries.

This combination of modular tables and explicit relationships makes the structure easy to expand, so you can evolve the schema without major, disruptive changes. Existing applications can continue to operate without modification even as new features are added in separate tables. This flexibility makes the relational model ideal for structuring complex, interconnected datasets.
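The same kind of order data can be modeled relationally, as in this sketch (SQLite, hypothetical `customers`, `products`, and `orders` tables). Each entity lives in its own table, foreign keys link them, and joins recombine them at query time; updating a customer's email now touches a single row.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.execute("PRAGMA foreign_keys = ON")
# Each table describes one entity; relationships are declared with keys.
conn.executescript("""
CREATE TABLE customers (
    id    INTEGER PRIMARY KEY,
    name  TEXT NOT NULL,
    email TEXT NOT NULL UNIQUE
);
CREATE TABLE products (
    id   INTEGER PRIMARY KEY,
    name TEXT NOT NULL
);
CREATE TABLE orders (
    id          INTEGER PRIMARY KEY,
    customer_id INTEGER NOT NULL REFERENCES customers(id),
    product_id  INTEGER NOT NULL REFERENCES products(id),
    quantity    INTEGER NOT NULL CHECK (quantity > 0)
);
INSERT INTO customers (name, email) VALUES ('Ana', 'ana@example.com'), ('Ben', 'ben@example.com');
INSERT INTO products (name) VALUES ('Keyboard'), ('Mouse');
INSERT INTO orders (customer_id, product_id, quantity) VALUES (1, 1, 1), (1, 2, 2), (2, 2, 1);
""")

# The email is stored once, so changing it is a single-row update.
conn.execute("UPDATE customers SET email = 'ana@newmail.example' WHERE id = 1")

# Joins recombine the normalized tables at query time.
report = conn.execute("""
    SELECT c.name, c.email, p.name, o.quantity
    FROM orders o
    JOIN customers c ON c.id = o.customer_id
    JOIN products  p ON p.id = o.product_id
    ORDER BY o.id
""").fetchall()
for row in report:
    print(row)
```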

Star Schemas

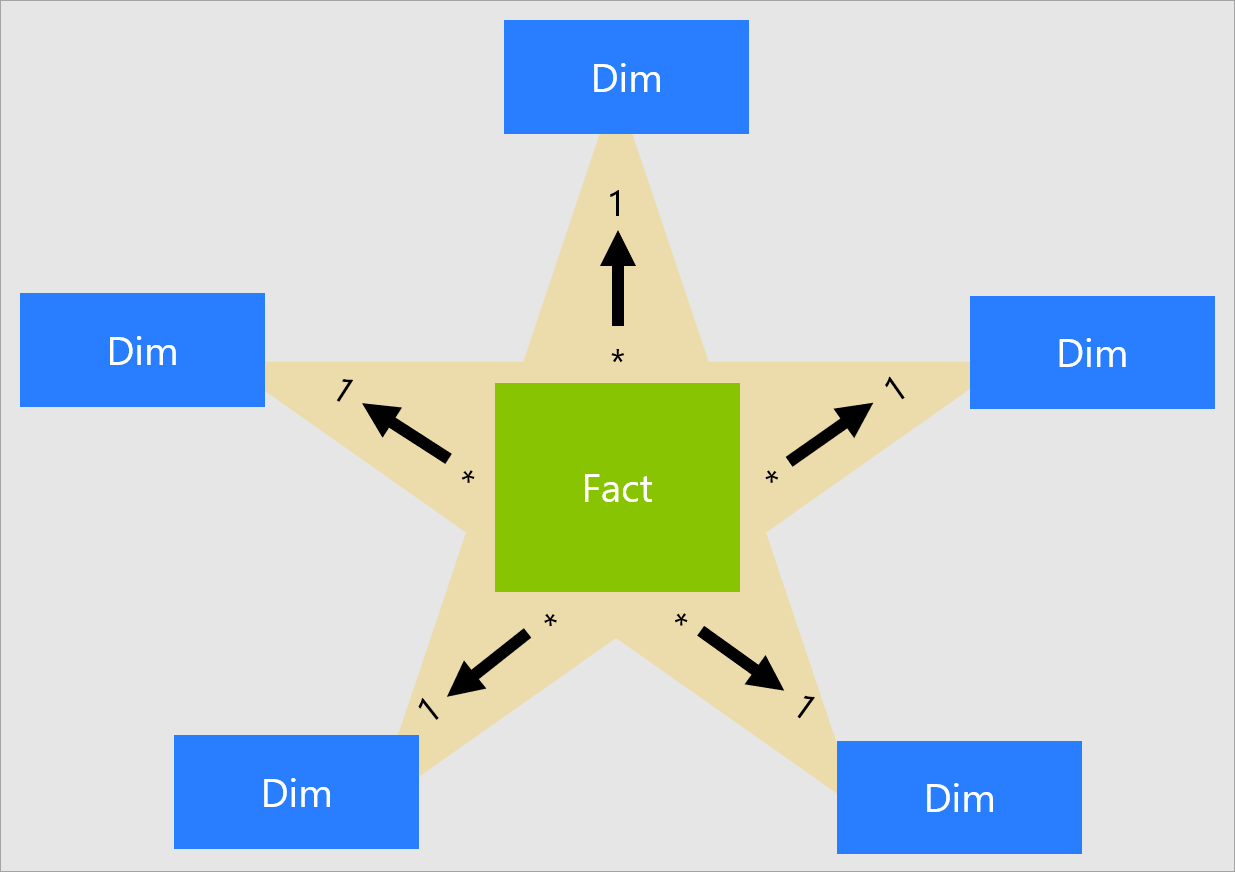

The star schema is a design pattern used for analytical data warehousing and business intelligence tasks. It structures data into a central fact table surrounded by multiple dimension tables, forming a star-like configuration.

Fact tables capture quantifiable events or business metrics like sales orders, shipments, or supply chain activities. On the other hand, dimension tables contain descriptive, contextual data like customer information, product details, and geographic locations.

This separation of facts and dimensions lets star schemas support fast queries even across large datasets. Because every dimension connects directly to the central fact table, a query needs only one join per dimension, which makes this model particularly efficient for summarizing, aggregating, and analyzing large amounts of historical data.

However, the star schema has limitations. It is not the best choice for handling real-time transactional data or complex interrelationships among data points. Its design works best for one-to-many relationships, where each row in a dimension table relates to many rows in the fact table.
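A compact star schema might look like the sketch below, assuming SQLite, hypothetical `fact_sales` and `dim_*` tables, and illustrative sample rows. The analytical query joins the fact table to only the dimensions it needs and aggregates the measures.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.executescript("""
-- Dimension tables: descriptive context.
CREATE TABLE dim_date     (date_key INTEGER PRIMARY KEY, year INTEGER, month INTEGER);
CREATE TABLE dim_product  (product_key INTEGER PRIMARY KEY, name TEXT, category TEXT);
CREATE TABLE dim_customer (customer_key INTEGER PRIMARY KEY, name TEXT, region TEXT);

-- Fact table: one row per sale, holding measures plus a key into each dimension.
CREATE TABLE fact_sales (
    date_key     INTEGER REFERENCES dim_date(date_key),
    product_key  INTEGER REFERENCES dim_product(product_key),
    customer_key INTEGER REFERENCES dim_customer(customer_key),
    quantity     INTEGER,
    revenue      REAL
);

INSERT INTO dim_date     VALUES (20240101, 2024, 1), (20240201, 2024, 2);
INSERT INTO dim_product  VALUES (1, 'Keyboard', 'Accessories'), (2, 'Monitor', 'Displays');
INSERT INTO dim_customer VALUES (1, 'Ana', 'EU'), (2, 'Ben', 'US');
INSERT INTO fact_sales VALUES
    (20240101, 1, 1, 2,  80.0),
    (20240101, 2, 2, 1, 250.0),
    (20240201, 1, 2, 1,  40.0),
    (20240201, 2, 1, 2, 500.0);
""")

# Typical star query: one join per needed dimension, then aggregate the facts.
revenue_by_month_category = conn.execute("""
    SELECT d.month, p.category, SUM(f.revenue)
    FROM fact_sales f
    JOIN dim_date    d ON d.date_key    = f.date_key
    JOIN dim_product p ON p.product_key = f.product_key
    GROUP BY d.month, p.category
    ORDER BY d.month, p.category
""").fetchall()
print(revenue_by_month_category)
```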

Snowflake Schemas

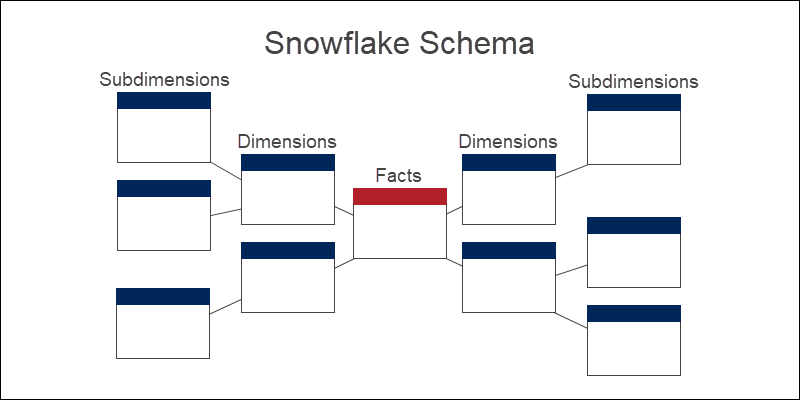

The snowflake schema is a variation of the star schema in which dimension tables are further broken down into sub-dimensions, creating a branching structure that resembles a snowflake. This extends the normalization process to the dimensions themselves.

For example, a Location dimension may be broken down into Country, State, and City sub-dimensions in a snowflake model. The extra normalization increases analytic flexibility but also involves additional table joins across these hierarchical dimensions.

Snowflake schemas isolate attributes to minimize duplication for better disk space utilization. The branching dimensions provide easy drill-down across multiple data aggregation levels. However, snowflake queries tend to be more complex because of added normalization.
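Extending the Location example, the sketch below (SQLite, hypothetical tables, illustrative data) normalizes the location dimension into city, state, and country tables. Rolling revenue up to a higher level means following the hierarchy through additional joins, which is where the extra query complexity of a snowflake schema comes from.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.executescript("""
-- The location dimension is normalized into a hierarchy: city -> state -> country.
CREATE TABLE dim_country (country_key INTEGER PRIMARY KEY, name TEXT);
CREATE TABLE dim_state   (state_key INTEGER PRIMARY KEY, name TEXT,
                          country_key INTEGER REFERENCES dim_country(country_key));
CREATE TABLE dim_city    (city_key INTEGER PRIMARY KEY, name TEXT,
                          state_key INTEGER REFERENCES dim_state(state_key));
CREATE TABLE fact_sales  (city_key INTEGER REFERENCES dim_city(city_key), revenue REAL);

INSERT INTO dim_country VALUES (1, 'USA'), (2, 'Canada');
INSERT INTO dim_state   VALUES (10, 'California', 1), (11, 'Texas', 1), (20, 'Ontario', 2);
INSERT INTO dim_city    VALUES (100, 'San Francisco', 10), (101, 'Los Angeles', 10),
                               (102, 'Austin', 11), (200, 'Toronto', 20);
INSERT INTO fact_sales  VALUES (100, 120.0), (101, 80.0), (102, 50.0), (200, 70.0), (100, 30.0);
""")

def revenue_by(level):
    """Roll revenue up to 'city', 'state', or 'country' by walking the hierarchy."""
    column = {"city": "ci.name", "state": "st.name", "country": "co.name"}[level]
    return conn.execute(f"""
        SELECT {column}, SUM(f.revenue)
        FROM fact_sales f
        JOIN dim_city    ci ON ci.city_key    = f.city_key
        JOIN dim_state   st ON st.state_key   = ci.state_key
        JOIN dim_country co ON co.country_key = st.country_key
        GROUP BY {column}
        ORDER BY {column}
    """).fetchall()

print(revenue_by("country"))
print(revenue_by("state"))
```

In a star schema, the same location attributes would sit in one denormalized table, trading extra storage for fewer joins.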

Graph Database Schemas

A graph-oriented database schema stores data in nodes that directly relate to other nodes through typed relationship edges. This model efficiently represents highly interconnected data found in social networks, knowledge graphs, or IoT device networks.

Because these relationships are stored directly as part of the data model, graph databases can quickly traverse complex networks of densely connected nodes across multiple hops. Since a traversal follows stored edges rather than computing joins at query time, performance for relationship-centric queries tends to hold up well as data volumes grow.

That said, graph schemas have their limitations. They are generally less efficient for highly transactional workloads and for analytics over data that doesn’t naturally fit a graph structure. For scenarios where the links between data points are the main concern, such as social networks, fraud detection, and logistics, graph database schemas are ideal.
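To show what traversal along typed edges means, here is a plain-Python sketch with hypothetical nodes and relationships. It is not the API of any graph database; systems such as Neo4j store this kind of adjacency natively and expose traversals through query languages like Cypher. The `reachable` function performs a breadth-first search restricted to one relationship type and a maximum number of hops.

```python
from collections import deque

# Labeled nodes and typed, directed edges: (source, relationship, target).
nodes = {
    "alice": "Person", "bob": "Person", "carol": "Person", "dave": "Person",
    "acme": "Company",
}
edges = [
    ("alice", "FOLLOWS", "bob"),
    ("bob", "FOLLOWS", "carol"),
    ("carol", "FOLLOWS", "dave"),
    ("alice", "WORKS_AT", "acme"),
    ("dave", "WORKS_AT", "acme"),
]
assert all(s in nodes and d in nodes for s, _, d in edges)  # every edge joins known nodes

# Adjacency list keyed by node, so a traversal follows stored edges instead of joins.
adjacency = {}
for src, rel, dst in edges:
    adjacency.setdefault(src, []).append((rel, dst))

def reachable(start, rel_type, max_hops):
    """Breadth-first search over edges of one type; returns {node: hop_count}."""
    seen = {start: 0}
    queue = deque([start])
    while queue:
        node = queue.popleft()
        if seen[node] == max_hops:
            continue  # do not expand beyond the hop limit
        for rel, neighbor in adjacency.get(node, []):
            if rel == rel_type and neighbor not in seen:
                seen[neighbor] = seen[node] + 1
                queue.append(neighbor)
    del seen[start]
    return seen

print(reachable("alice", "FOLLOWS", 2))
print(reachable("alice", "FOLLOWS", 3))
```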

Examples of Database Schema Applications Across Different Sectors

Database schemas provide the basic architecture for tackling unique data management needs in different fields. Let’s see how different sectors choose the appropriate database schemas for their requirements.

eCommerce Website

Here’s how different database schemas are applied in an eCommerce platform:

- Search engine: To power the platform's search capabilities, a dedicated NoSQL database that specializes in text search can be set up, providing features like auto-suggestions and fuzzy matching.

- Business analytics: Star schemas are employed in a data warehouse with historical sales data, website analytics, and customer behavior. These schemas let you quickly and efficiently run complex queries for analytics reports.

- Order management: A relational database schema handles the core transactional aspects that include tracking customers, orders, inventory, and shipping. The modular, normalized tables make it easy to update information while maintaining data integrity.

- Product catalog and reviews: A schemaless NoSQL database is ideal for the dynamic nature of a product catalog, including varying attributes and user-generated content like reviews and ratings. This lets you easily add new product categories without restructuring existing records.

Banking Applications

In the banking sector, multiple types of database schemas are used to efficiently manage different financial activities. Let’s take a look at them.

- Customer communication: Document databases that store semi-structured JSON are ideal for the various forms of customer interaction, such as emails or chat logs. These databases easily adapt to different kinds of data.

- Transaction handling: Graph databases are used for real-time transaction tracking. They connect customers directly to their accounts and transactions, which makes it quick to trace activity across these relationships and supports secure processing of banking activities.

- Historical analysis: Data warehouses that store past transaction records use snowflake schemas for categorization by factors like customer demographics or account types. This helps in trend analysis and making informed decisions.

Healthcare Databases

Different database schema types have unique roles in healthcare systems for managing complex medical data:

- Medical standards: Reference schemas integrate data from different sources using standardized medical codes and terminologies. This makes the data consistent across the board.

- Clinical notes: Hybrid databases mix structured healthcare records with unstructured notes from healthcare providers. This provides a more complete view of a patient's healthcare journey.

- Patient records: Relational database schemas handle crucial healthcare entities like patient information, medications, and treatment history. With its modular, normalized tables, this schema makes it easy to update data while maintaining its integrity.

Customer Relationship Management (CRM) Systems

Database schemas in CRM systems address multiple business needs:

- Business metrics: Star schemas within a data warehouse aggregate critical business events like sales and customer interactions. This makes the analytical process more straightforward.

- Communication logs: Hybrid database schemas capture both structured CRM information and unstructured data like emails and calls. This makes the database richer and more versatile for analysis.

- Detail-driven analysis: Snowflake schemas refine broader categories like product types into more specific attributes. This supports more detailed analytics and helps businesses better understand their customer base.

Supply Chain Management

In modern supply chains, database schemas are used for:

- Time-based analytics: Temporal databases track how data changes over time, and that history of past performance can be analyzed to forecast shipping and resource management needs.

- Supply chain elements: Graph databases model connections between suppliers, manufacturing plants, and logistics centers. This helps in better planning and resource allocation.

- Location optimization: Geo-spatial databases plot the entire logistics network on a map. This supports on-the-spot transport and distribution decisions, helping optimize routes and reduce costs.

Conclusion

As data scales, so do its complexity and the maintenance challenges that come with it. This is when a well-designed database schema becomes crucial, not only for handling sector-specific data challenges but also for better data governance, flexibility, performance, and scalability.

If you are looking for a cutting-edge tool to help with your data management, consider Estuary. It not only offers real-time database replication but also caters to a range of other data needs. This makes Estuary a must-have for your data management toolkit.

Sign up for free and start your journey towards efficient, real-time database management today. Contact our team for more details.

About the author

Jeffrey is a data engineering professional with over 15 years of experience, helping early-stage data companies scale by combining technical expertise with growth-focused strategies. His writing shares practical insights on data systems and efficient scaling.