Apache Kafka is the most widely used distributed data streaming platform today. However, managed data streaming services like Confluent and Estuary Flow bring more to the table than Kafka’s pure infrastructure. So, which approach is the right choice for your business?

Today’s post compares Confluent Kafka vs. Apache Kafka and provides insights into how Estuary Flow also fits into the mix of event-streaming options. We’ll explore which solution is best for your real-time big data infrastructure.

All distributed streaming platforms work (more or less) similarly. They continuously process real-time data in the form of events, using a distributed architecture that can efficiently and durably move large volumes of data to one — or many — destinations.

Still, there are some important distinctions. And in today’s increasingly data-driven world, having the right distributed streaming platform can make all the difference.

We’ll review these three options, which represent three categories of event streaming software available to businesses:

- A popular streaming broker, Kafka.

- A managed version of Kafka, Confluent.

- A managed version of an alternative streaming broker, Estuary Flow.

We’ll highlight their characteristics and differences with a detailed feature comparison. Once you’ve read this post, you’ll be ready to choose the best solution for your real-time big data infrastructure.

Distributed Streaming Platforms – A Brief Overview

Data streaming platforms are the technological infrastructure behind real-time data. If you’ve ever interacted with continuously updating information through an app or website, for instance:

- Tracking your food delivery driver or ride-share

- Viewing how many items are in stock at an online store

- Being asked to provide extra verification when signing in on a new device

…you’ve benefitted from data streaming.

Data streaming applications process data in terms of change events in the order that they occur. Other applications can then consume these events and update in milliseconds.

This makes streaming platforms very different from databases, which are more concerned with the state of your data than how it’s changing. Databases give you the full picture of your data, making them excellent for querying but not for alerting you of data changes.
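To make the contrast between events and state concrete, here is a minimal Python sketch. It is a toy model, not any platform’s API: `ChangeEvent` and `apply_events` are hypothetical names invented for this example. A stream carries the ordered changes, while a database-style view holds only the state you get by applying them.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ChangeEvent:
    item: str   # which product changed
    delta: int  # change in stock: positive for a restock, negative for a sale


def apply_events(events, state=None):
    """Fold an ordered sequence of change events into current stock levels."""
    state = dict(state or {})
    for event in events:
        state[event.item] = state.get(event.item, 0) + event.delta
    return state


# The stream: every change, in the order it happened.
events = [
    ChangeEvent("widget", +10),
    ChangeEvent("gadget", +5),
    ChangeEvent("widget", -3),
]

# The database-style view: only the resulting state.
stock = apply_events(events)
print(stock)  # {'widget': 7, 'gadget': 5}
```

A consumer that reacts to each `ChangeEvent` as it arrives can alert on a sale immediately. A query against `stock` only tells you where things stand now.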

Data streaming platforms like the ones we’re discussing today have the additional advantage of being distributed. This means that they are deployed over clusters of servers, rather than just one. This makes it possible for them to scale up and down and handle the amount of data streamed at any given moment without slowing down.

When deployed correctly, data streaming platforms provide a much more efficient way to build data pipelines than traditional batch methods.

Now, let’s take a closer look at the three platforms.

What Is Apache Kafka?

Apache Kafka is a distributed stream processing platform and event bus. Developed by LinkedIn and later donated to the Apache Software Foundation, the program is completely open-source.

Kafka provides a high-throughput, unified, and low-latency service to handle real-time data feeds and has consistently been one of the most popular data streaming platforms available today.

It has a huge market share with around 80% of companies from the Fortune 100 list currently deploying Kafka in some form — either independently or through Confluent (more on that below).

There’s a good reason for this: Kafka can be used to process, store, and analyze data at scale, making it powerful in almost every industry – from computer software, financial services, and healthcare, to government and transportation.

But there’s another reason for Kafka’s popularity with massive companies: these companies are able to support the large, well-funded engineering teams that are necessary to deploy and maintain Kafka…or pay another company to manage it for them.

Kafka is an open-ended framework. It provides the raw ingredients to build just about any data streaming product and can integrate with external systems. But actually implementing Kafka successfully is a different story.

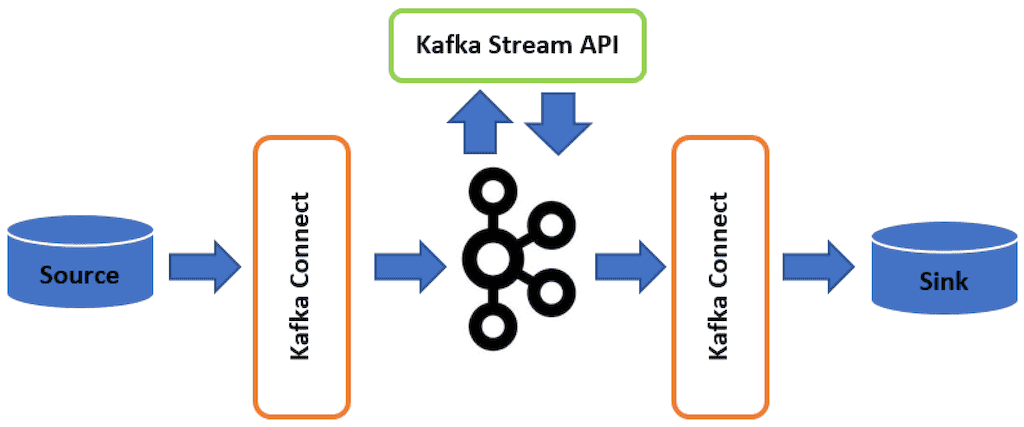

Simply put: using Kafka to its full potential is difficult. Even with the help of the Kafka Connect API to connect to external systems and Kafka Streams libraries for stream processing applications, the challenge remains daunting for small to mid-sized companies.

Pros Of Apache Kafka

Kafka has many advantages owing to its wide adoption across industries and its maturity. Its partitioned log model distributes data across multiple servers, which makes the platform highly scalable. It is also fast, with low latency, because it decouples the producers that write data streams from the consumers that read them.
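The partitioned log idea can be sketched in a few lines of Python. This is an illustration only: `partition_for` and `route` are hypothetical helpers, and `zlib.crc32` stands in for the hash function a real Kafka client would use. The point is that events with the same key always land in the same partition, so their relative order is preserved while different keys spread across servers.

```python
import zlib


def partition_for(key: str, num_partitions: int) -> int:
    """Map a key to a partition; the same key always maps to the same one."""
    return zlib.crc32(key.encode("utf-8")) % num_partitions


def route(events, num_partitions):
    """Append each (key, value) event to the partition chosen by its key."""
    partitions = [[] for _ in range(num_partitions)]
    for key, value in events:
        partitions[partition_for(key, num_partitions)].append((key, value))
    return partitions


events = [
    ("driver-1", "pickup"),
    ("driver-2", "pickup"),
    ("driver-1", "en route"),
    ("driver-1", "delivered"),
]
partitions = route(events, num_partitions=3)

# All of driver-1's events sit in one partition, in their original order.
p = partition_for("driver-1", 3)
print([value for key, value in partitions[p] if key == "driver-1"])
```

Because each partition is an independent log, adding partitions (and servers to host them) is how this model scales out.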

It is also very fault-tolerant, since Kafka distributes and replicates partitions across many servers, and data is written to disk. This means that the failure of a single server doesn’t have to cause data loss or downtime.
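Here is a rough Python sketch of why replication helps. It is a simplified model under stated assumptions, not Kafka’s actual assignment or leader-election logic: `assign_replicas` and `leaders_after_failure` are hypothetical functions, and replicas are placed round-robin.

```python
def assign_replicas(num_partitions, brokers, replication_factor):
    """Place each partition's replicas on distinct brokers, round-robin."""
    if replication_factor > len(brokers):
        raise ValueError("replication factor cannot exceed broker count")
    return {
        p: [brokers[(p + i) % len(brokers)] for i in range(replication_factor)]
        for p in range(num_partitions)
    }


def leaders_after_failure(assignment, failed):
    """Pick the first surviving replica of each partition as its leader."""
    leaders = {}
    for partition, replicas in assignment.items():
        alive = [broker for broker in replicas if broker not in failed]
        leaders[partition] = alive[0] if alive else None  # None: unavailable
    return leaders


assignment = assign_replicas(3, ["b0", "b1", "b2"], replication_factor=2)
print(assignment)
# {0: ['b0', 'b1'], 1: ['b1', 'b2'], 2: ['b2', 'b0']}

print(leaders_after_failure(assignment, failed={"b0"}))
# {0: 'b1', 1: 'b1', 2: 'b2'}
```

With a replication factor of 2, every partition survives the loss of one broker. If both `b0` and `b1` fail, partition 0 has no surviving replica, which is why the replication factor is a deliberate trade-off between cost and resilience.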

Cons Of Apache Kafka

Kafka is far from the complete package and has many limitations, chief among them that it’s challenging to set up and manage, as discussed above. This can put it out of reach for newer companies that lack the engineering resources to run it.

Even if your company is able to hire one or more specialists to manage your Kafka deployment, it’s still not a perfect system:

- Kafka doesn’t integrate well with systems that use batch processing.

- On-the-fly data transformation is difficult without using an outside framework.

- Compressing and decompressing messages adds overhead that can reduce throughput.

- Topic selection is limited. Producers must write to an exact topic name, and although consumers can subscribe by regular-expression pattern, there is no general wildcard topic selection. This can make topic selection difficult in certain use cases.

What Is Confluent Kafka?

Enter Confluent. The Confluent platform was developed by the same engineers that brought Apache Kafka to the mainstream. Essentially, Confluent provides managed services built on Kafka that are easier for teams to deploy, maintain, and monitor.

In fact, most of the Fortune 100 companies mentioned above that use Kafka choose to use Confluent’s managed version.

Pros Of Confluent Kafka

By building user-friendly apps on top of Kafka’s powerful core infrastructure, Confluent makes it easy to manage data streaming.

The paid tier, Confluent Cloud, is cloud-hosted on your behalf. It includes a graphical user interface for creating, editing, and monitoring streams, auto-balancing capabilities, and data connectors based on the Kafka Connect API.

The platform also provides Confluent Open Source, which can be deployed for free. While it doesn’t include all the features of Confluent Cloud, the open-source version includes a built-in command line interface (CLI), clients in multiple programming languages, connectors for JDBC, Elasticsearch, and HDFS, and a REST proxy to connect to web applications.

Cons Of Confluent Kafka

While Confluent utilizes Kafka’s core functionalities to work, it is limited in terms of customization and personalization. The platform also doesn’t have the best reputation when it comes to fault tolerance. To prevent accidental data loss, the service does not support reducing the number of pods.

And because Confluent is a managed version of Apache Kafka, most of Kafka’s cons apply here, too.

What Is Estuary?

Like Confluent, Estuary Flow is a managed tool built on a streaming event broker. Rather than being built on Kafka, Flow is built on Gazette. It’s a real-time Data Operations platform for future-proof pipelines, including both historical and real-time data.

You can choose to work with Flow in an intuitive graphical user interface or its CLI tool. Flow also includes native support for typechecked, on-the-fly data transformation.

Flow guides data through its entire lifecycle, from collection to operationalization, in real time. It does this with a wide variety of data connectors, helping you create guided data pipelines from source to destination systems.

Put another way, Estuary Flow combines a Kafka-alternative streaming broker with a managed platform that will look familiar to those who use batch-based ETL tools like Fivetran.

Pros Of Estuary

- Estuary Flow can handle event streaming (real-time data) as well as batch data.

- Flow includes a wide variety of data connectors, so data pipeline creation between the most popular data systems is easy. This includes a Kafka connector.

- Streaming data is stored and validated as JSON and can be partitioned for optimal performance. Standardized JSON provides the structure needed for real-time data transformations within the platform.

- Because of how Gazette uses cloud storage, historical data is handled more efficiently than Kafka and systems based on Kafka.
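The point above about validated JSON can be illustrated with a small Python sketch. This is a hand-rolled check standing in for whatever schema validation the platform actually performs. `SCHEMA` and `validate` are hypothetical names, and the field list is made up for the example.

```python
import json

# Hypothetical schema: required field name -> expected Python type.
SCHEMA = {"id": int, "item": str, "quantity": int}


def validate(raw: str) -> dict:
    """Parse a JSON document and check required fields and their types."""
    doc = json.loads(raw)
    for field, expected in SCHEMA.items():
        if field not in doc:
            raise ValueError(f"missing field: {field}")
        if not isinstance(doc[field], expected):
            raise ValueError(f"{field} must be of type {expected.__name__}")
    return doc


good = validate('{"id": 1, "item": "widget", "quantity": 3}')
print(good["item"])  # widget

try:
    validate('{"id": "1", "item": "widget", "quantity": 3}')
except ValueError as err:
    print(f"rejected: {err}")  # rejected: id must be of type int
```

Rejecting malformed documents at the point of ingestion means downstream transformations can rely on the structure they expect.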

Cons Of Estuary

- Gazette doesn’t have a history of community support to rival Kafka’s. The open-source community remains small and it’s hard to find support outside Estuary itself.

- Though self-hosting Estuary Flow is available, it’s challenging compared to the cloud-managed service.

Is Kafka An ETL Tool?

Traditional batch ETL has all but faded from the mainstream because it isn’t suited to the demands of a data-driven future. Instead, businesses have begun adopting newer data pipeline architectures. These include real-time streaming ETL, which allows data movement and transformation at a larger scale.

Kafka is the primary tool most companies use when setting up real-time ETL pipelines.

To summarize: Kafka isn’t an ETL tool in itself, but it can be used to build ETL pipelines.
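To show what "streaming ETL" means in practice, here is a minimal Python sketch using generators. It models the pattern only and uses no Kafka API: `extract`, `transform`, and `load` are hypothetical functions, and the in-memory lists stand in for a real source and destination. Each event flows through all three stages as soon as it arrives, rather than waiting for a batch.

```python
def extract(source):
    """Yield raw events one at a time, as they arrive."""
    yield from source


def transform(events):
    """Drop invalid events and reshape the rest, one event at a time."""
    for event in events:
        if event["amount"] <= 0:
            continue
        yield {
            "user": event["user"].lower(),
            "amount_cents": round(event["amount"] * 100),
        }


def load(events, sink):
    """Write each transformed event to the destination."""
    for event in events:
        sink.append(event)


raw_events = [
    {"user": "Ana", "amount": 12.5},
    {"user": "Bo", "amount": -1},
    {"user": "Cy", "amount": 3},
]
warehouse = []
load(transform(extract(raw_events)), warehouse)
print(warehouse)
# [{'user': 'ana', 'amount_cents': 1250}, {'user': 'cy', 'amount_cents': 300}]
```

In a real deployment, the source would be a stream such as a Kafka topic and the sink a database or warehouse. The per-event structure stays the same.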

Confluent is a great example of how Kafka can be used to create a platform that resembles ETL in some ways but is still a broader framework overall.

This is also where Estuary comes into the frame. Built from the ground up as a real-time ELT platform, it can help with transformations and low-latency materializations, and it provides instant CDC (Change Data Capture). With its primary focus on end-to-end pipelines built with data connectors, the Flow user interface is more similar to modern ELT or ETL tools.

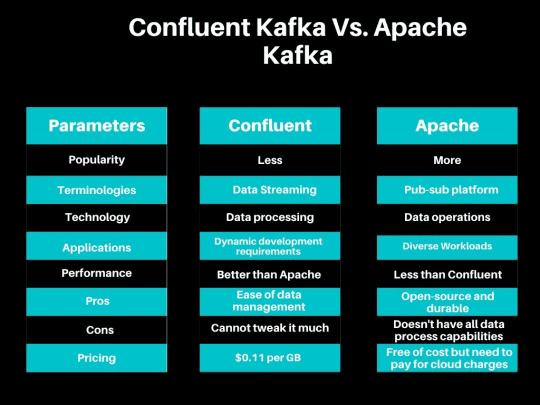

Confluent Kafka vs Apache Kafka vs Estuary – 4 Key Differences

Selecting a platform fit for your business can be a difficult affair. With three solid options to choose from, most businesses may automatically favor Kafka since it is already widely in use. But does its widespread use have genuine backing when it comes to features, underlying technology, and pricing?

Let’s find out.

1. Product Scope

Apache Kafka is an event streaming platform. It provides a publish/subscribe model to read and stream data events, stores those events, and gives a framework for event processing. That’s all it is: a low-level framework that can be deployed in any number of ways.
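As a rough illustration of a publish/subscribe model in which events are stored rather than consumed away, consider this toy Python sketch. `Topic` and `Subscriber` are hypothetical classes written for this example, not Kafka’s client API. Each subscriber tracks its own offset into the stored log, so subscribers read independently and can join late without missing anything.

```python
class Topic:
    """A toy append-only log; events are stored, not deleted when read."""

    def __init__(self):
        self._log = []

    def publish(self, event):
        """Append an event and return its offset in the log."""
        self._log.append(event)
        return len(self._log) - 1

    def read(self, offset):
        """Return every event at or after the given offset."""
        return self._log[offset:]


class Subscriber:
    """Tracks its own position, so subscribers never interfere."""

    def __init__(self, topic):
        self.topic = topic
        self.offset = 0

    def poll(self):
        """Fetch events published since the last poll."""
        events = self.topic.read(self.offset)
        self.offset += len(events)
        return events


orders = Topic()
billing = Subscriber(orders)

orders.publish("order-1")
print(billing.poll())    # ['order-1']

orders.publish("order-2")
analytics = Subscriber(orders)  # joins late, still sees the full history
print(analytics.poll())  # ['order-1', 'order-2']
print(billing.poll())    # ['order-2']
```

Everything beyond this core (connectors, monitoring, transformation) is what you either build yourself or get from a managed platform.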

Confluent Kafka is a managed service built on top of that same streaming platform. This includes distributed cloud hosting, automatic scaling, a graphical user interface, monitoring capabilities, APIs for data processing, and more.

Estuary Flow is similar to Confluent in that it’s a managed service built on top of a streaming platform. But the streaming platform used — Gazette — is different. Like Confluent, Flow is designed to give developers and other stakeholders a meaningful way to work with a powerful but challenging streaming runtime.

2. Data Modelling

On a fundamental level, all the products we’re talking about in this article rely on a stateful event-streaming framework for data processing and storage.

When we look closely, there are differences between the technical details of these frameworks.

Kafka (and by extension, Confluent) models data in fundamental topics, which can be split into partitions.

Gazette (and by extension, Estuary Flow), on the other hand, fundamentally thinks of data in collections, which are split into journals. However, collections aren’t a direct analog to Kafka topics; they’re more similar to Kafka streams — they’re more fundamentally built on data in motion.

There are many more distinctions between the way these two technologies model streaming data. See the Flow documentation to learn more.

3. Data Connectors

If you hope to use any of these platforms to build real-time data pipelines, you’ll need integrations with other systems, otherwise known as connectors.

Kafka primarily offers the Kafka Connect API — an API with which developers can create their own connectors. Kafka itself doesn’t inherently manage or provide connectors, but they are created and shared by the open-source community, third-party service providers, and, of course, Confluent.

In the Confluent Kafka world, there are more pre-built connector options. Several dozen source and sink (destination) connectors are available for self-hosted Confluent and Confluent Cloud. These connectors prioritize systems you might need to integrate with Kafka for app development.

Like Confluent, Estuary Flow includes a variety of connectors for source and destination systems. The main priority with Flow is facilitating end-to-end movement between a variety of systems. These include SaaS, but Estuary emphasizes developing connectors to high-scale technologies like cloud storage, databases, and pub/sub systems (including Kafka).

4. Pricing

Since Apache Kafka is an open-source platform, it is free to use. The caveat is that you still have to provide, and pay for, the cloud or on-premises infrastructure that runs it and stores your data.

Self-managed Confluent is also free to use. But to reap the full benefits of Confluent, you’ll need the paid option, Confluent Cloud. It’s priced by the hour on a case-by-case basis, but standard deployments start at $1.50/hour.

Estuary Flow has three plans to choose from. These are listed below:

- Free: Includes 10 GB of data per month with up to 2 connector instances, no credit card required.

- Cloud: Starts at $0.50/GB and approximately $100 per connector instance, with a 30-day free trial and options for up to 12 connector instances.

- Enterprise: Custom pricing tailored for mission-critical deployments with additional features like 24/7 support, SOC2 compliance, and private deployment options.

For full details, please visit their pricing page.

Confluent and Estuary Flow are fundamentally different in the way they’re priced: Confluent is charged by the hour, while Flow is charged mainly by the amount of data processed, plus a per-connector fee on the Cloud plan.
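A quick back-of-the-envelope comparison can make the two pricing models easier to reason about. The Python sketch below uses only the list prices quoted above and makes several assumptions: a 730-hour month, a per-connector fee that is billed monthly, and no other charges such as storage, networking, or support. Treat it as an illustration of the arithmetic, not a quote.

```python
HOURS_PER_MONTH = 730  # assumption: average number of hours in a month


def confluent_monthly(hourly_rate: float = 1.50) -> float:
    """Monthly cost of an always-on deployment billed by the hour."""
    return hourly_rate * HOURS_PER_MONTH


def flow_monthly(gb: float, connectors: int,
                 per_gb: float = 0.50, per_connector: float = 100.0) -> float:
    """Monthly cost billed by data volume plus a per-connector fee."""
    return gb * per_gb + connectors * per_connector


def break_even_gb(connectors: int, hourly_rate: float = 1.50,
                  per_gb: float = 0.50, per_connector: float = 100.0) -> float:
    """Monthly data volume at which the two models cost the same."""
    return (confluent_monthly(hourly_rate) - connectors * per_connector) / per_gb


print(confluent_monthly())   # 1095.0
print(flow_monthly(500, 2))  # 450.0
print(break_even_gb(2))      # 1790.0
```

Under these assumptions, a pipeline with two connectors moving 500 GB per month costs less on volume-based pricing, and the two models meet at roughly 1,790 GB per month. Your own numbers will differ with cluster size, data volume, and connector count.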

| Feature | Apache Kafka | Confluent | Estuary Flow |
|---|---|---|---|
| Deployment Model | Self-managed on-premise or cloud | Managed cloud or self-managed | Managed cloud or self-managed |
| Ease of Use | Challenging, requires specialized skills | User-friendly interface, monitoring | Intuitive interface, CLI tool |
| Data Connectors | Kafka Connect API, community-contributed | Pre-built connectors, extensive | Wide variety of connectors, including Kafka |
| Pricing Model | Open-source, additional costs for storage | Hourly pricing for managed services | Data processed-based pricing |
| Fault Tolerance | Distributed, fault-tolerant architecture | Fault tolerance considerations, data loss | Dependable fault-tolerance |

Conclusion

Kafka, whether in its Apache or Confluent form, is a popular and widespread data streaming platform for good reason. You can’t go wrong with this option as the cornerstone of your real-time data infrastructure.

But in the last few years, other data streaming providers have entered the arena, including Estuary. This means you have more options than ever before when it comes to data streaming. There’s no one-size-fits-all answer, but the variations in priorities and features between the different platforms set you up to find the tool that’s best for your data.

To test out Estuary Flow, start your free trial, or contact our team for a free consultation.

About the author

With over 15 years in data engineering, the author is a seasoned expert in driving growth for early-stage data companies, focusing on strategies that attract customers and users. The author’s extensive writing provides insights to help companies scale efficiently and effectively in an evolving data landscape.